From the workbench distribution zip, take the kie-wb-*.war that corresponds to your application server:

jboss-as7: tailored for JBoss AS 7 (which is being renamed to WildFly in version 8)

tomcat7: the generic war, works on Tomcat and Jetty

Note

The differences between these war files are superficial only, to allow out-of-the-box deployment. For example, some JARs might be excluded if the application server already supplies them.

To use the workbench on a different application server (WebSphere, WebLogic, ...), use the tomcat7 war and tailor it to your application server's version.

By default, the workbench stores its data in the directory $WORKING_DIRECTORY/.niogit, for example wildfly-8.0.0.Final/bin/.niogit, but this can be overridden with the system property -Dorg.uberfire.nio.git.dir.
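As a rough illustration of how such an override behaves, the following Java sketch resolves the data directory from the system property and falls back to the working directory. The class and method names are hypothetical and this is not the workbench's own code. It assumes, as described in the property list, that the property names the location that contains the .niogit directory.

```java
import java.nio.file.Path;
import java.nio.file.Paths;

// Hypothetical helper, not part of the workbench.
class GitDirResolver {
    static final String GIT_DIR_PROPERTY = "org.uberfire.nio.git.dir";

    // Assumption: the property value is the parent location that holds .niogit.
    static Path resolve(String configuredLocation, String workingDirectory) {
        String parent = (configuredLocation == null || configuredLocation.isEmpty())
                ? workingDirectory
                : configuredLocation;
        return Paths.get(parent).resolve(".niogit");
    }

    public static void main(String[] args) {
        // Reads the override (if any) and falls back to the JVM working directory.
        Path dataDir = resolve(System.getProperty(GIT_DIR_PROPERTY),
                               System.getProperty("user.dir"));
        System.out.println("Workbench data directory: " + dataDir);
    }
}
```

Running it with -Dorg.uberfire.nio.git.dir set to some location prints a path under that location; without the property it prints a path under the current working directory.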

Note

In production, make sure to back up the workbench data directory.

Here's a list of all system properties:

org.uberfire.nio.git.dir: Location of the directory .niogit. Default: working directory

org.uberfire.nio.git.daemon.enabled: Enables/disables git daemon. Default: true

org.uberfire.nio.git.daemon.host: If git daemon enabled, uses this property as local host identifier. Default: localhost

org.uberfire.nio.git.daemon.port: If git daemon enabled, uses this property as port number. Default: 9418

org.uberfire.nio.git.ssh.enabled: Enables/disables ssh daemon. Default: true

org.uberfire.nio.git.ssh.host: If ssh daemon enabled, uses this property as local host identifier. Default: localhost

org.uberfire.nio.git.ssh.port: If ssh daemon enabled, uses this property as port number. Default: 8001

org.uberfire.nio.git.ssh.cert.dir: Location of the directory .security where local certificates will be stored. Default: working directory

org.uberfire.metadata.index.dir: Place where Lucene .index folder will be stored. Default: working directory

org.uberfire.cluster.id: Name of the helix cluster, for example: kie-cluster

org.uberfire.cluster.zk: Connection string to zookeeper. This is of the form host1:port1,host2:port2,host3:port3, for example: localhost:2188

org.uberfire.cluster.local.id: Unique id of the helix cluster node, note that ':' is replaced with '_', for example: node1_12345

org.uberfire.cluster.vfs.lock: Name of the resource defined on helix cluster, for example: kie-vfs

org.uberfire.cluster.autostart: Delays VFS clustering until the application is fully initialized to avoid conflicts when all cluster members create local clones. Default: false

org.uberfire.sys.repo.monitor.disabled: Disable configuration monitor (do not disable unless you know what you're doing). Default: false

org.uberfire.secure.key: Secret password used by password encryption. Default: org.uberfire.admin

org.uberfire.secure.alg: Crypto algorithm used by password encryption. Default: PBEWithMD5AndDES

org.uberfire.domain: security-domain name used by uberfire. Default: ApplicationRealm

org.guvnor.m2repo.dir: Place where Maven repository folder will be stored. Default: working-directory/repositories/kie

org.kie.example.repositories: Folder from where demo repositories will be cloned. The demo repositories need to have been obtained and placed in this folder. Demo repositories can be obtained from the kie-wb-6.2.0-SNAPSHOT-example-repositories.zip artifact. This System Property takes precedence over org.kie.demo and org.kie.example. Default: Not used.

org.kie.demo: Enables external clone of a demo application from GitHub. This System Property takes precedence over org.kie.example. Default: true

org.kie.example: Enables example structure composed by Repository, Organization Unit and Project. Default: false
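The two cluster value formats in the list above (the zookeeper connection string and the node id with ':' replaced by '_') can be sketched in Java as follows. This is only an illustration of the formats; the class and method names are hypothetical and do not reflect how the workbench or Helix parse these values internally.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical helper illustrating the value formats, not workbench code.
class ClusterSettings {

    // Splits "host1:port1,host2:port2" into {host, port} pairs and checks each port is numeric.
    static List<String[]> parseZkConnection(String connection) {
        List<String[]> servers = new ArrayList<>();
        for (String entry : connection.split(",")) {
            String[] hostAndPort = entry.trim().split(":");
            if (hostAndPort.length != 2) {
                throw new IllegalArgumentException("Expected host:port but got: " + entry);
            }
            Integer.parseInt(hostAndPort[1]); // throws NumberFormatException if not a number
            servers.add(hostAndPort);
        }
        return servers;
    }

    // Builds a node id in the documented style, replacing ':' with '_'.
    static String toLocalId(String hostAndPort) {
        return hostAndPort.replace(':', '_');
    }

    public static void main(String[] args) {
        for (String[] server : parseZkConnection("localhost:2188")) {
            System.out.println("zookeeper host=" + server[0] + " port=" + server[1]);
        }
        System.out.println(toLocalId("node1:12345"));
    }
}
```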

To change one of these system properties in a WildFly or JBoss EAP cluster:

Edit the file $JBOSS_HOME/domain/configuration/host.xml.

Locate the XML elements server that belong to the main-server-group and add a system property, for example:

    <system-properties>
      <property name="org.uberfire.nio.git.dir" value="..." boot-time="false"/>
      ...
    </system-properties>

These steps help you get started with a minimum of effort.

They should not be a substitute for reading the documentation in full.

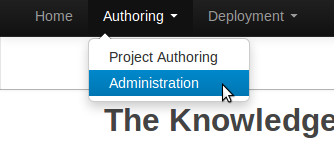

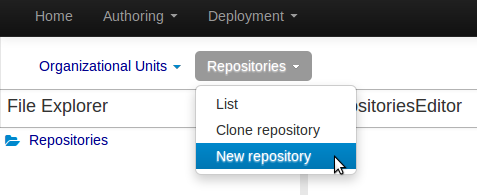

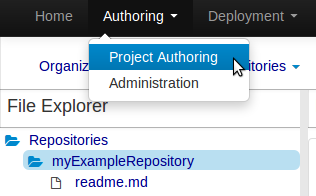

Create a new repository to hold your project by selecting the Administration Perspective.

Select the "New repository" option from the menu.

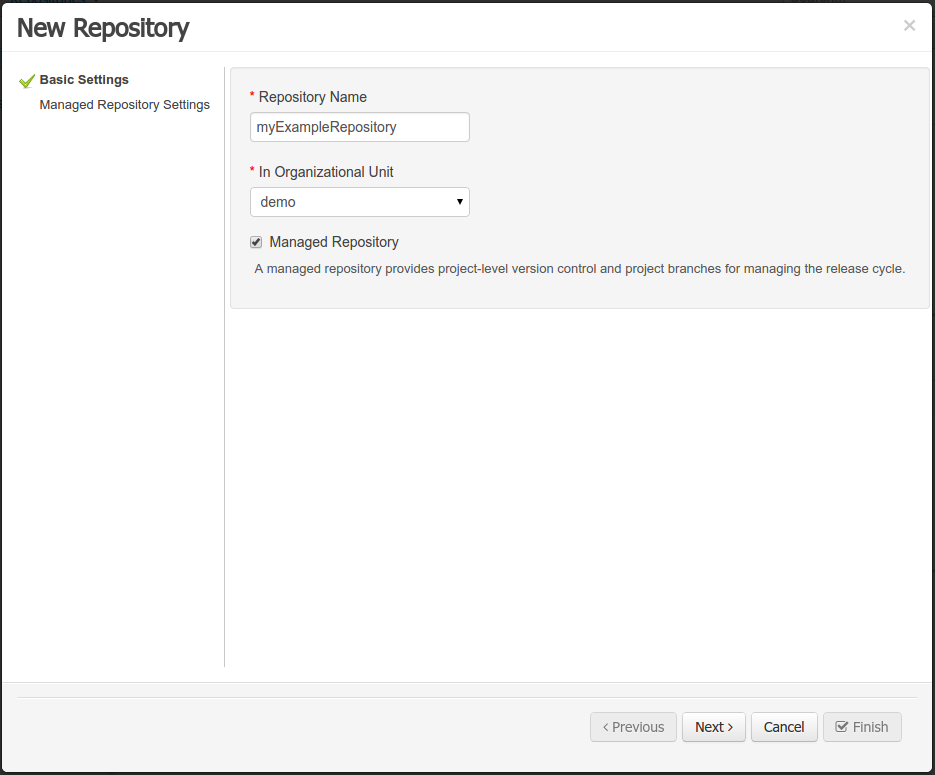

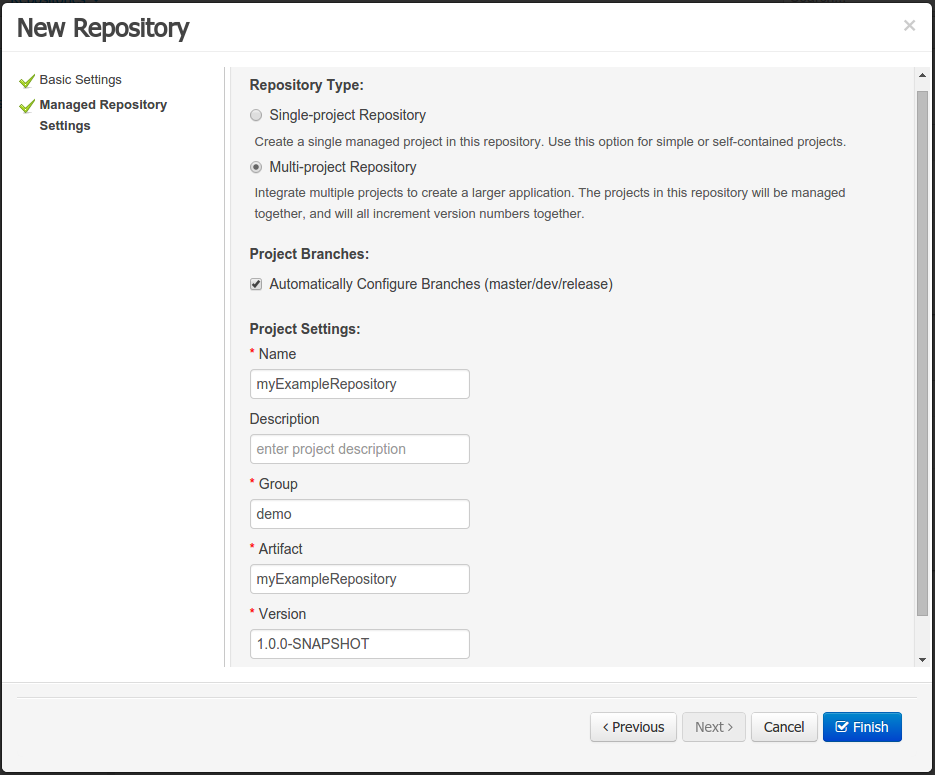

Enter the required information.

Select the Authoring Perspective to create a new project.

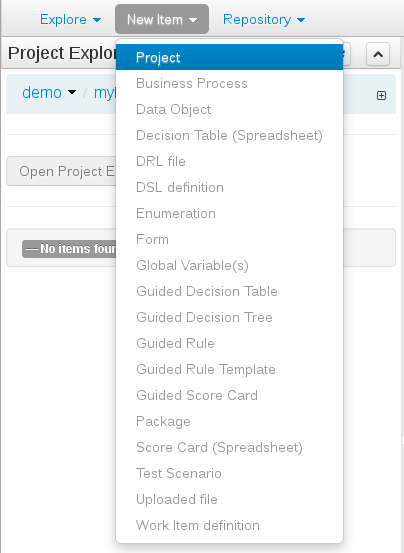

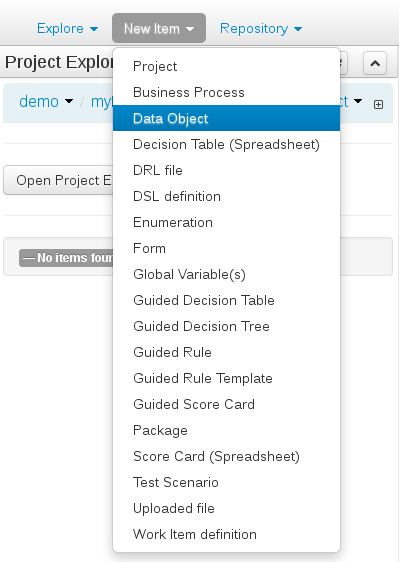

Select "Project" from the "New Item" menu.

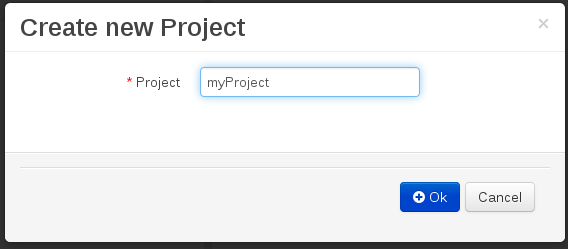

Enter a project name first.

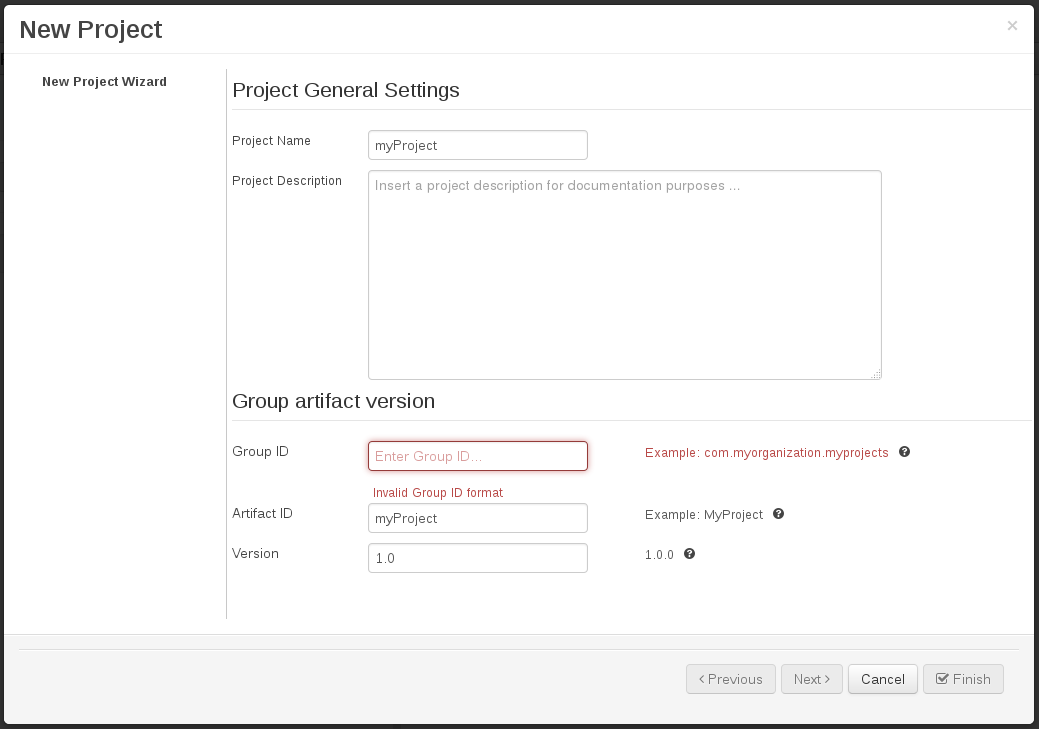

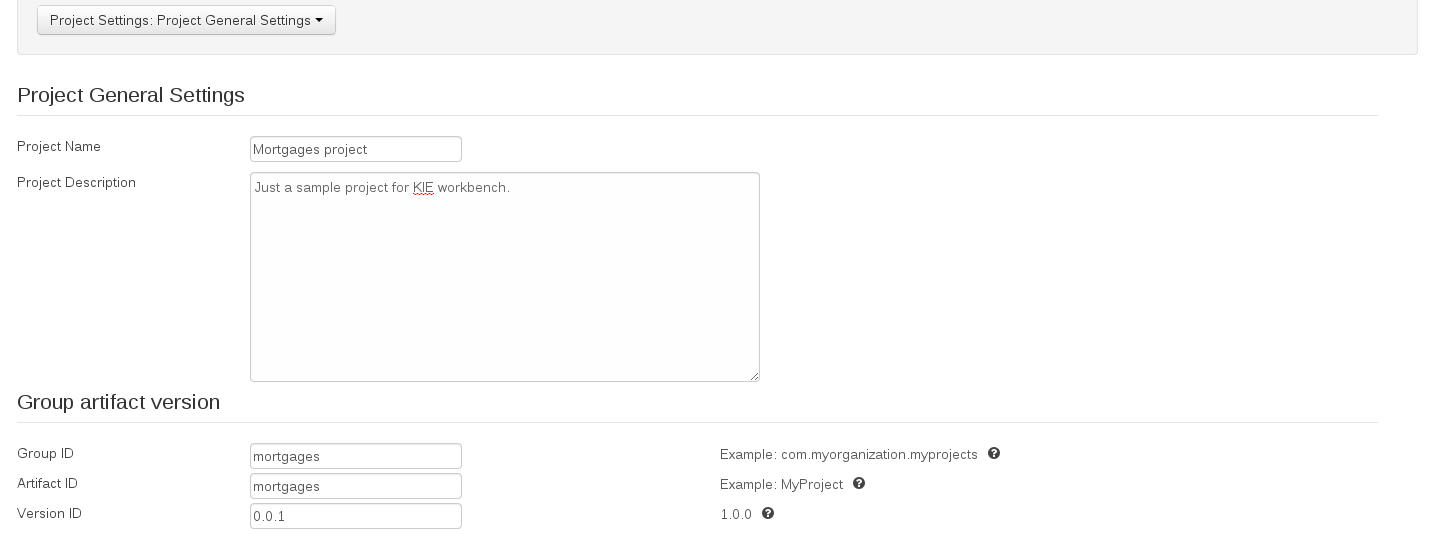

Enter the project details next.

Group ID follows Maven conventions.

Artifact ID is pre-populated from the project name.

Version is set as 1.0 by default.

After a project has been created you need to define Types to be used by your rules.

Select "Data Object" from the "New Item" menu.

Note

You can also use types contained in existing JARs.

Please consult the full documentation for details.

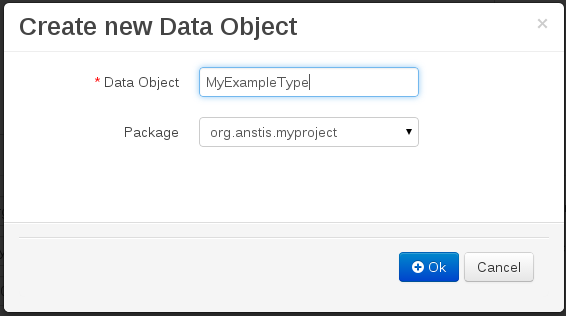

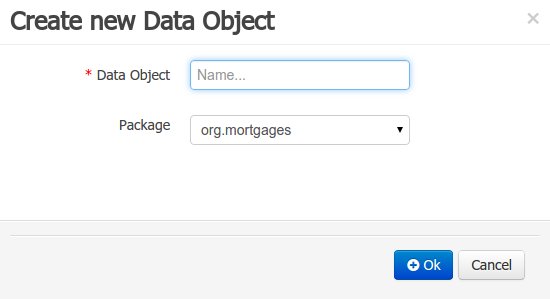

Set the name and select a package for the new type.

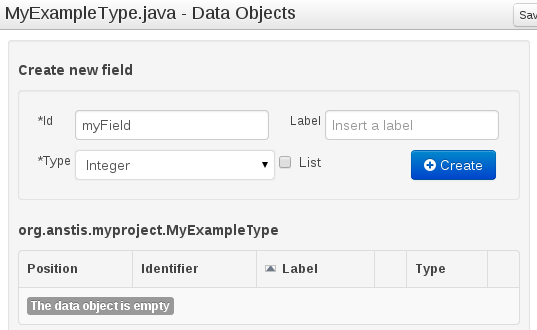

Set field name and type and click on "Create" to create a field for the type.

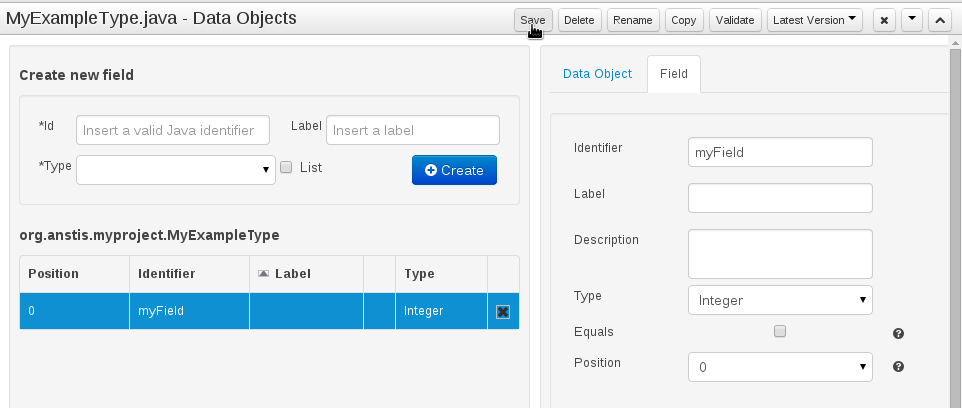

Click "Save" to update the model.

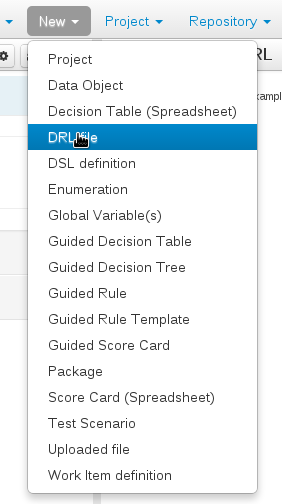

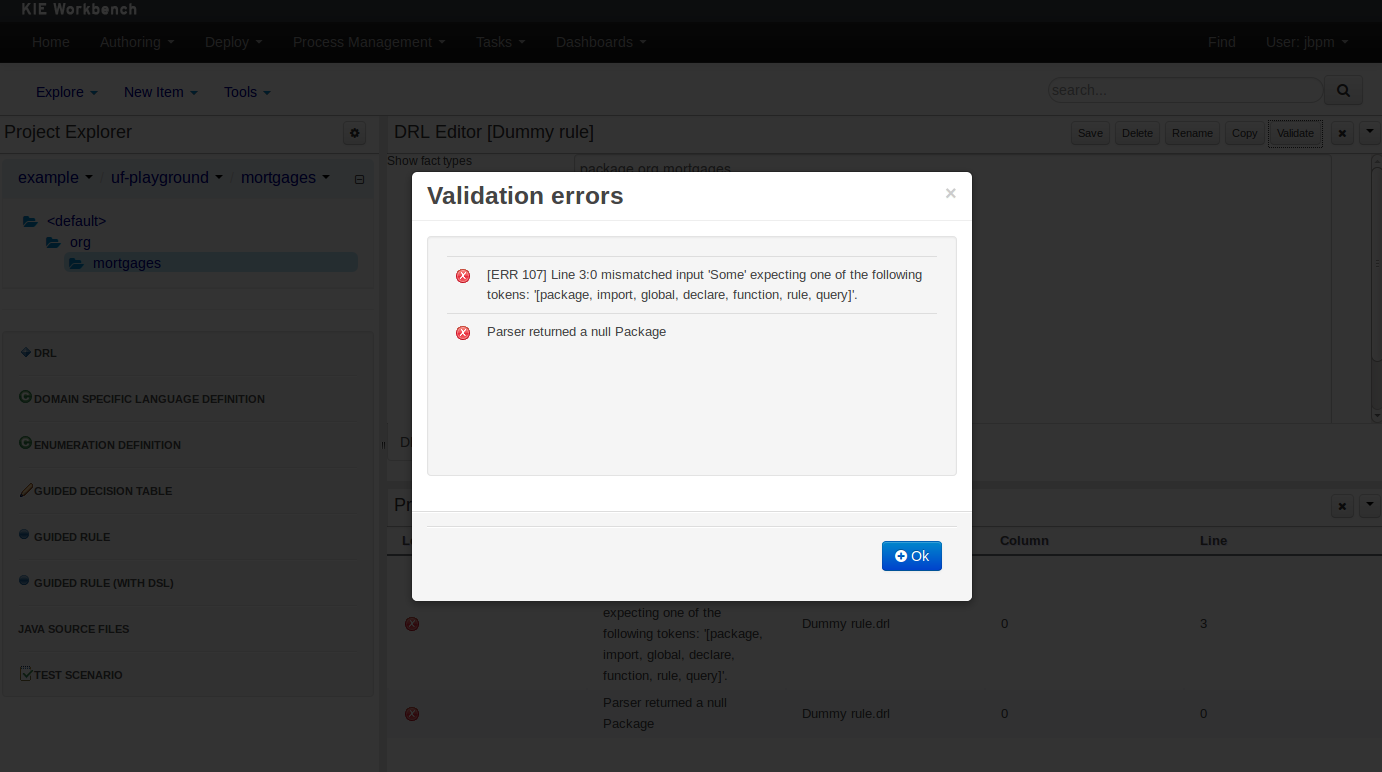

Select "DRL file" (for example) from the "New Item" menu.

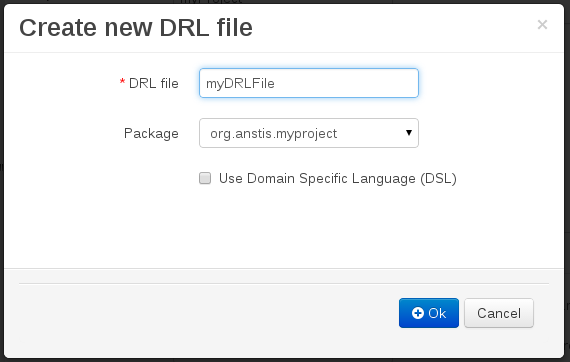

Enter a file name for the new rule.

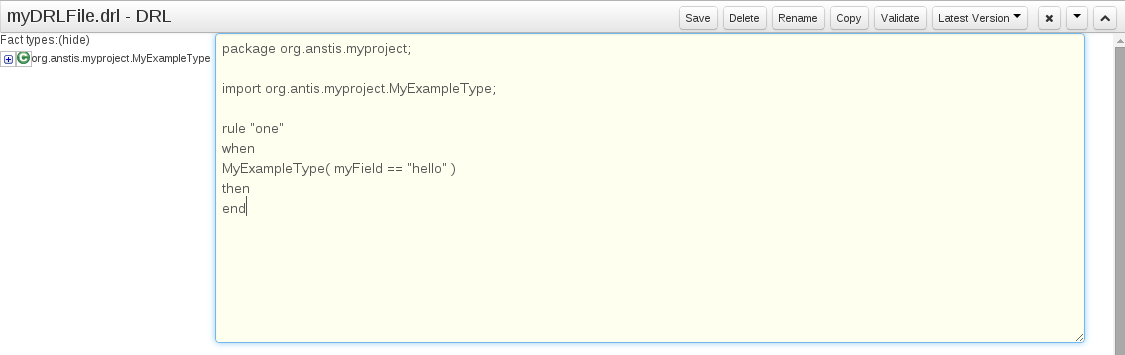

Enter a definition for the rule.

The definition process differs from asset type to asset type.

The full documentation has details about the different editors.

Once the rule has been defined it will need to be saved.

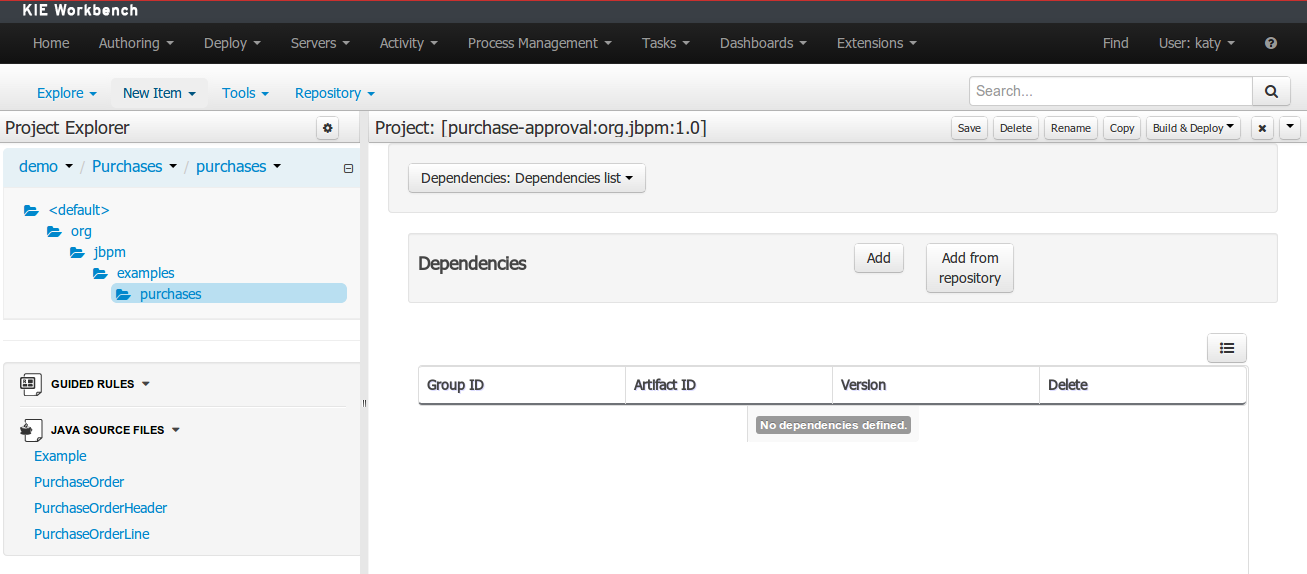

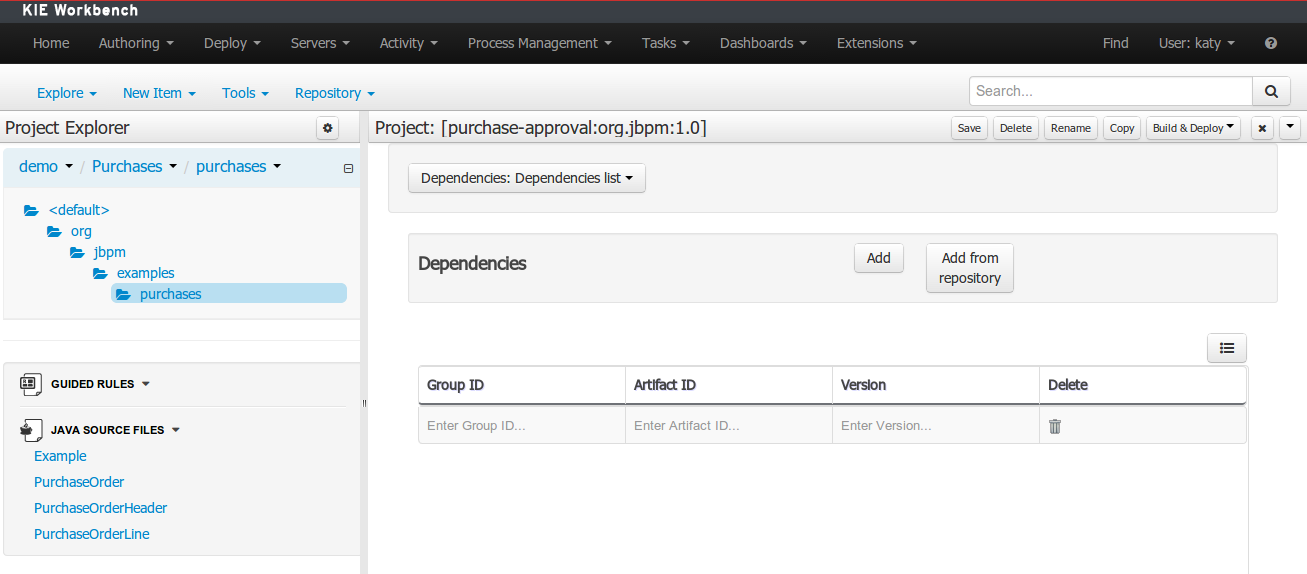

Once rules have been defined within a project, the project can be built and deployed to the Workbench's Maven Artifact Repository.

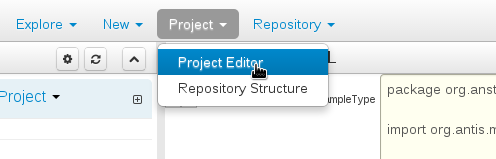

To build a project select the "Project Editor" from the "Project" menu.

Click "Build and Deploy" to build the project and deploy it to the Workbench's Maven Artifact Repository.

When you select Build & Deploy, the workbench will deploy to any repositories defined in the Dependency Management section of the pom in your workbench project. You can edit the pom.xml file associated with your workbench project under the Repository View of the project explorer. Details on dependency management in Maven can be found here: http://maven.apache.org/guides/introduction/introduction-to-dependency-mechanism.html

If there are errors during the build process they will be reported in the "Problems Panel".

Now that the project has been built and deployed, it can be referenced from your own projects like any other Maven artifact.

The full documentation contains details about integrating projects with your own applications.

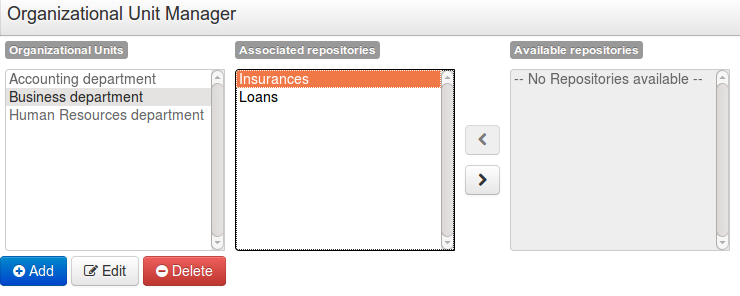

A workbench is structured with Organization Units, VFS repositories and projects:

Organization units are useful to model departments and divisions.

An organization unit can hold multiple repositories.

Repositories are the place where assets are stored and each repository is organized by projects and belongs to a single organization unit.

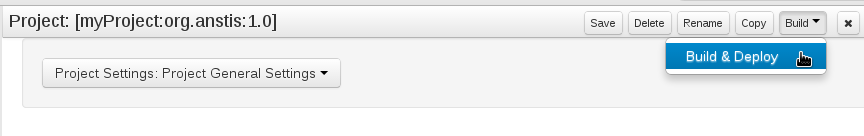

Repositories are in fact Virtual File System based storage that by default uses Git as its backend. This setup allows the workbench to work with multiple backends and, at the same time, take full advantage of backend-specific features; in the case of Git, these include versioning, branching and even external access.

A new repository can be created from scratch or cloned from an existing repository.

One of the biggest advantages of using Git as the backend is the ability to clone a repository externally and use your preferred tools to edit and build your assets.

Warning

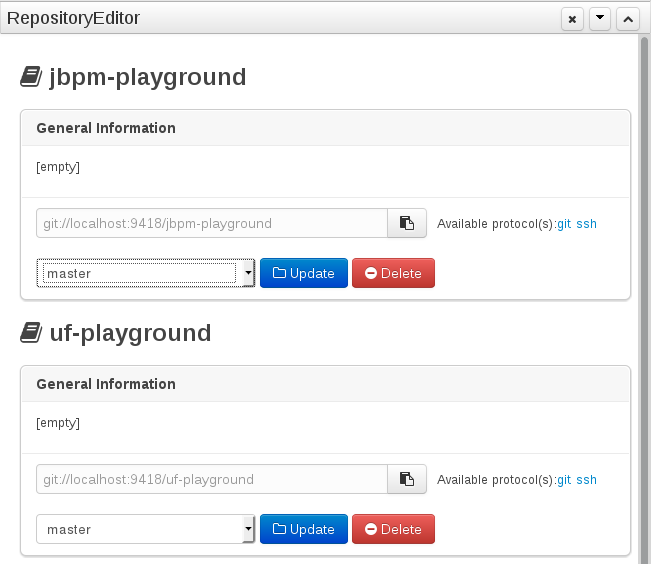

Never clone your repositories directly from the .niogit directory. Always use the available protocol(s) displayed in the repositories editor.

The workbench authenticates its users against the application server's authentication and authorization (JAAS).

On JBoss EAP and WildFly, add a user with the script $JBOSS_HOME/bin/add-user.sh (or .bat):

    $ ./add-user.sh
    // Type: Application User
    // Realm: empty (defaults to ApplicationRealm)
    // Role: admin

There is no need to restart the application server.

The Workbench uses the following roles:

admin

analyst

developer

manager

user

admin: Administrates the BPMS system.

Manages users

Manages VFS Repositories

Has full access to make any changes necessary

developer: Developer can do almost everything admin can do, except clone repositories.

Manages rules, models, process flows, forms and dashboards

Manages the asset repository

Can create, build and deploy projects

Can use the JBDS connection to view processes

analyst: Analyst is a weaker version of developer and does not have access to the asset repository or the ability to deploy projects.

user: Daily user of the system who takes actions on business tasks that are required for the processes to continue forward. Works primarily with the task lists.

Does process management

Handles tasks and dashboards

It is possible to restrict access to repositories using roles and organizational groups. To access a repository, a user must belong either to a role that has access to the repository, or to a role that belongs to an organizational group that has access to the repository. These restrictions can be managed with the command line config tool.
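The access rule described above can be sketched in Java as a simple set lookup. This is a minimal illustration under stated assumptions: the class, method and parameter names are hypothetical, and the real workbench keeps this information in the system repository rather than in in-memory maps.

```java
import java.util.Map;
import java.util.Set;

// Hypothetical illustration of the access rule, not the workbench implementation.
class RepositoryAccess {

    // repoRoles: roles granted directly on the repository.
    // groupsWithAccess: organizational groups that have access to the repository.
    // rolesByGroup: roles that belong to each organizational group.
    static boolean canAccess(Set<String> userRoles,
                             Set<String> repoRoles,
                             Set<String> groupsWithAccess,
                             Map<String, Set<String>> rolesByGroup) {
        for (String role : userRoles) {
            if (repoRoles.contains(role)) {
                return true; // direct role on the repository
            }
            for (String group : groupsWithAccess) {
                Set<String> groupRoles = rolesByGroup.get(group);
                if (groupRoles != null && groupRoles.contains(role)) {
                    return true; // role belongs to a group with access
                }
            }
        }
        return false;
    }

    public static void main(String[] args) {
        Map<String, Set<String>> rolesByGroup = Map.of("finance", Set.of("analyst"));
        boolean allowed = canAccess(Set.of("analyst"), Set.of("admin"),
                                    Set.of("finance"), rolesByGroup);
        System.out.println("analyst can access: " + allowed);
    }
}
```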

The command line config tool provides capabilities to manage the system repository from the command line. The system repository contains the data about general workbench settings: how editors behave, organizational groups, security and other settings that are not editable by the user. The system repository exists in the .niogit folder, next to all the repositories that have been created or cloned into the workbench.

Online (default and recommended) - Connects to the Git repository on startup, using Git server provided by the KIE Workbench. All changes are made locally and published to upstream when:

"push-changes" command is explicitly executed

"exit" is used to close the tool

Offline - Creates and manipulates system repository directly on the server (no discard option)

Table 9.1. Available Commands

| Command | Description |
| --- | --- |
| exit | Publishes local changes, cleans up temporary directories and quits the command line tool |
| discard | Discards local changes without publishing them, cleans up temporary directories and quits the command line tool |
| help | Prints a list of available commands |
| list-repo | List available repositories |
| list-org-units | List available organizational units |
| list-deployment | List available deployments |
| create-org-unit | Creates new organizational unit |
| remove-org-unit | Removes existing organizational unit |
| add-deployment | Adds new deployment unit |
| remove-deployment | Removes existing deployment |
| create-repo | Creates new git repository |
| remove-repo | Removes existing repository (only from config) |
| add-repo-org-unit | Adds repository to the organizational unit |
| remove-repo-org-unit | Removes repository from the organizational unit |
| add-role-repo | Adds role(s) to repository |
| remove-role-repo | Removes role(s) from repository |
| add-role-org-unit | Adds role(s) to organizational unit |
| remove-role-org-unit | Removes role(s) from organizational unit |
| add-role-project | Adds role(s) to project |
| remove-role-project | Removes role(s) from project |
| push-changes | Pushes changes to upstream repository (only in online mode) |

The tool is included in kie-config-cli-${version}-dist.zip. Execute the kie-config-cli.sh script; by default it starts in online mode and asks for a Git URL to connect to (the default value is ssh://localhost/system). To connect to a remote server, replace the host and port with appropriate values, e.g. ssh://kie-wb-host/system.

    ./kie-config-cli.sh

To operate in offline mode, append the offline parameter to the kie-config-cli.sh command. This changes the behaviour: the tool asks for the folder where the .niogit (system repository) is located. If .niogit does not yet exist, the folder value can be left empty and a brand new setup is created.

    ./kie-config-cli.sh offline

Create a user with the role admin and log in with those credentials.

After successfully logging in, the account username is displayed at the top right. Click on it to review the roles of the current account.

After logging in, the home screen shows. The actual content of the home screen depends on the workbench variant (Drools, jBPM, ...).

The Workbench is comprised of different logical entities:

Part

A Part is a screen or editor with which the user can interact to perform operations.

Example Parts are "Project Explorer", "Project Editor", "Guided Rule Editor" etc. Parts can be repositioned.

Panel

A Panel is a container for one or more Parts.

Panels can be resized.

Perspective

A perspective is a logical grouping of related Panels and Parts.

The user can switch between perspectives by clicking on one of the top-level menu items, such as "Home", "Authoring", "Deploy" etc.

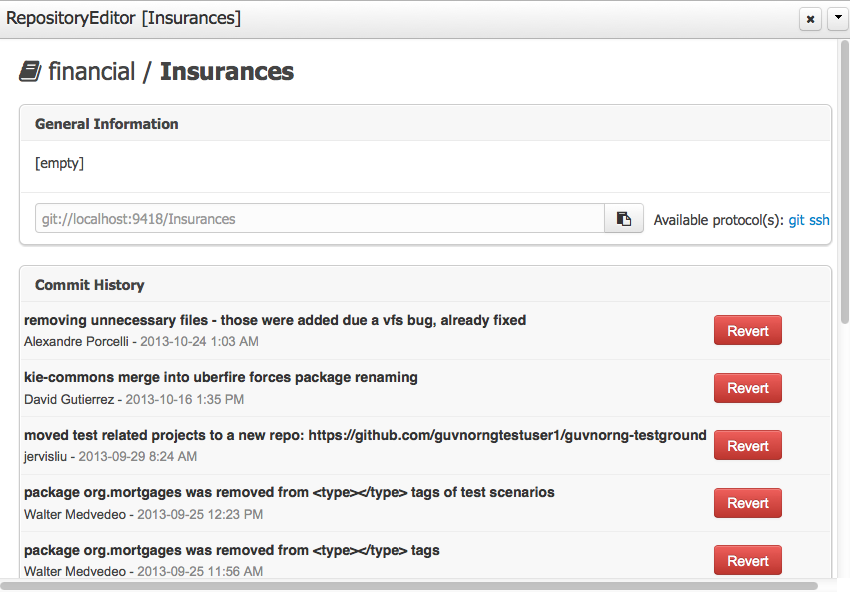

Initially, the Workbench consists of three main sections; however, its layout and content can be changed.

The initial Workbench shows the following components:

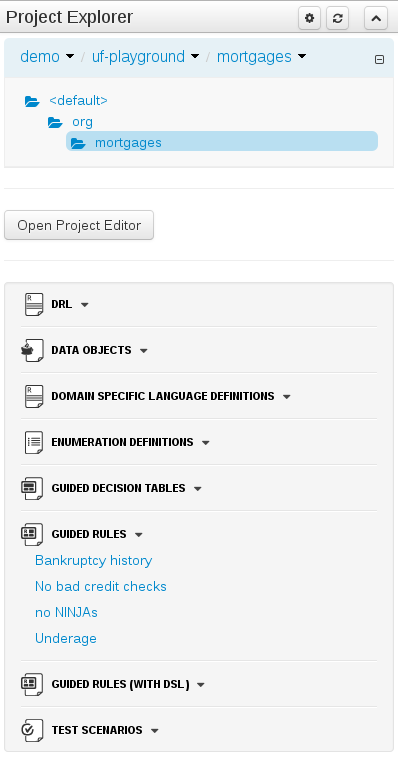

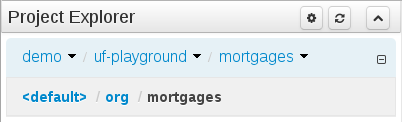

Project Explorer

This lets the user browse their configuration of Organizational Units ("example" in the screenshot above), Repositories ("uf-playground" in the screenshot above) and Projects ("mortgages" in the screenshot above).

Problems

This provides the user with real-time feedback about errors in the active Project.

Empty space

This empty space will contain an editor for assets selected from the Project Explorer.

Other screens, such as the Project Editor, will also occupy this space by default.

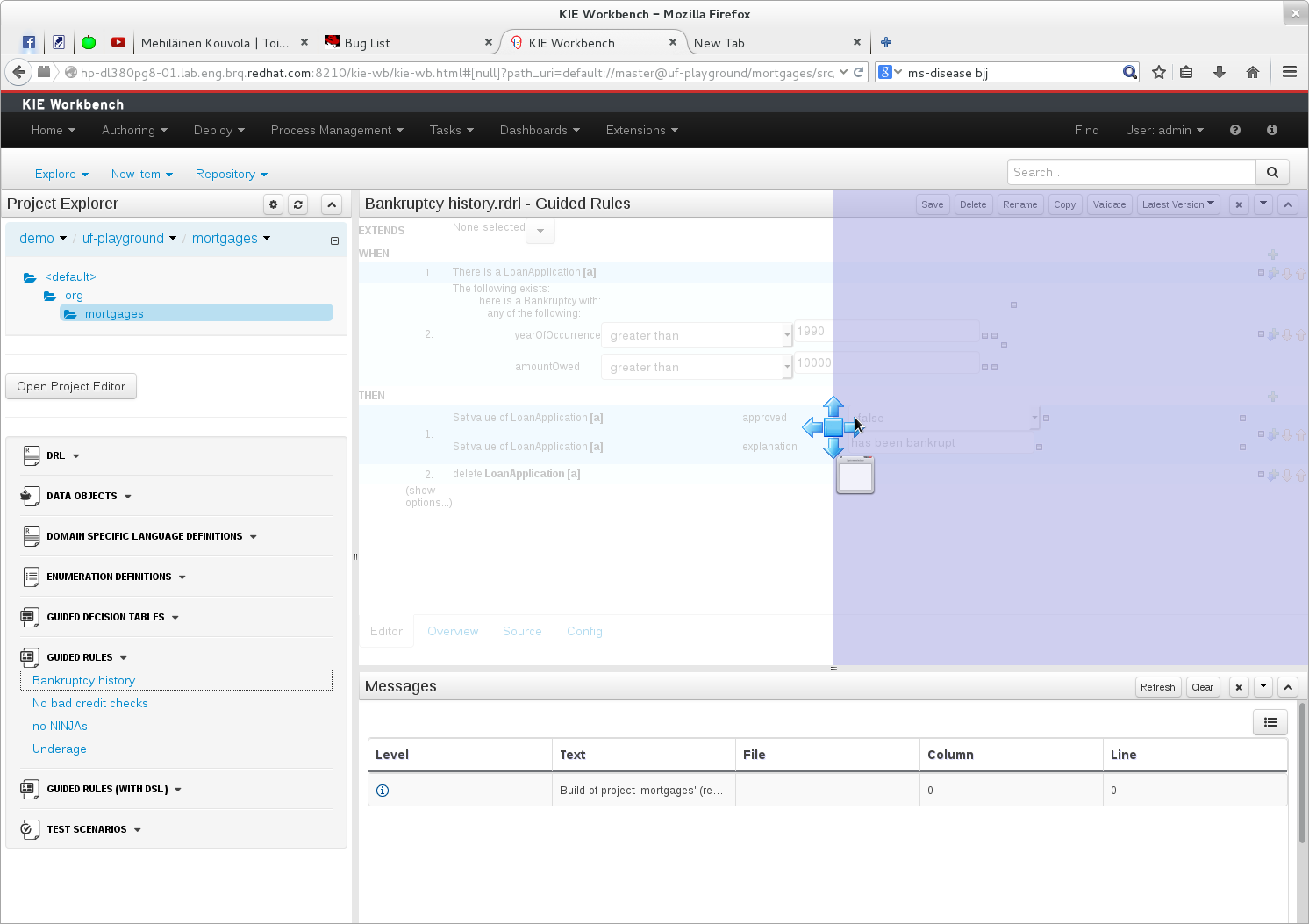

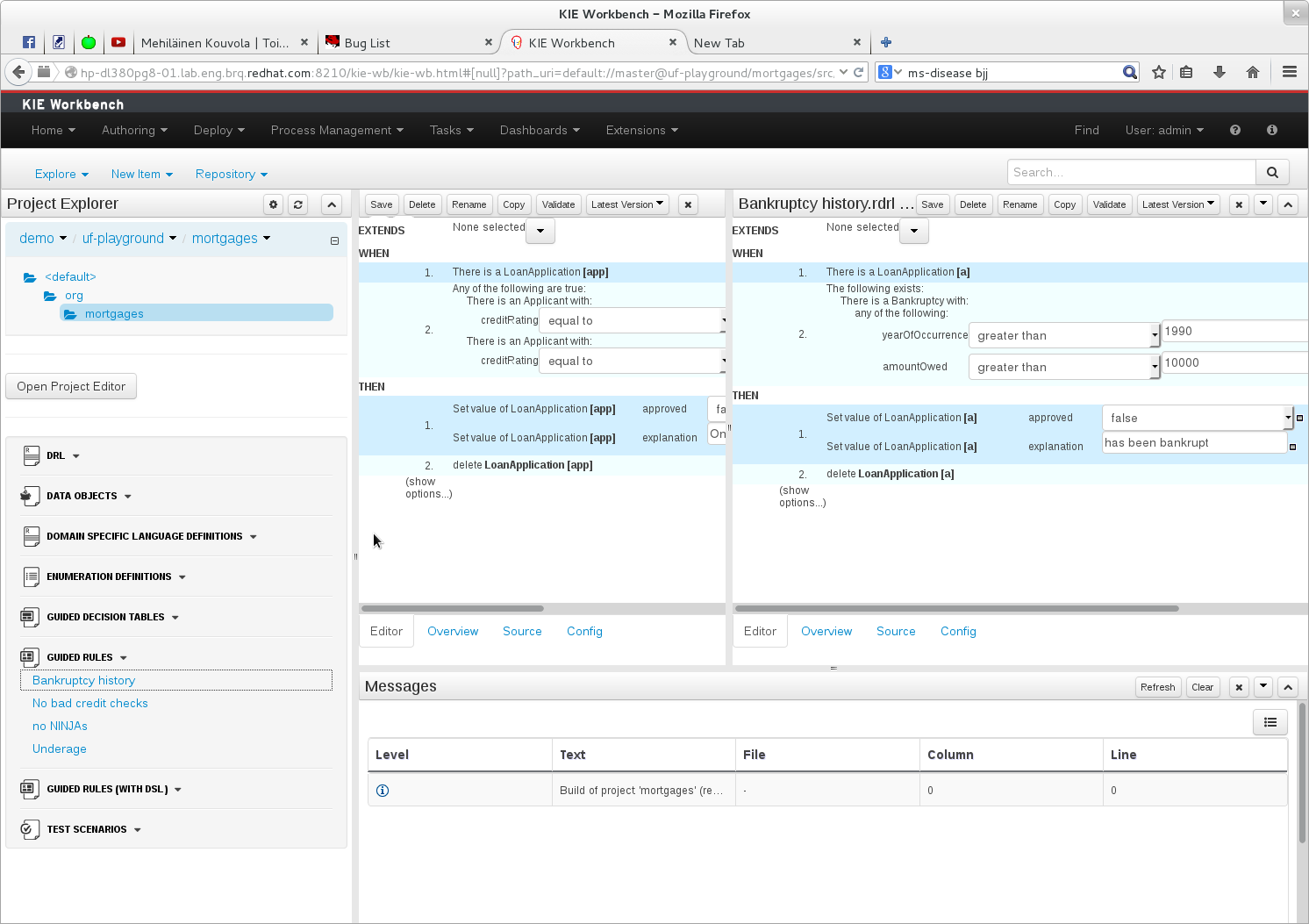

The default layout may not be suitable for a user. Panels can therefore be either resized or repositioned.

This could be useful, for example, when running tests, as the test definition and rule can be positioned side by side.

The following screenshot shows a Panel being resized.

Move the mouse pointer over the panel splitter (a grey horizontal or vertical line in between panels).

The cursor will change to indicate that it is positioned correctly over the splitter. Press and hold the left mouse button and drag the splitter to the required position; then release the left mouse button.

The following screenshot shows a Panel being repositioned.

Move the mouse pointer over the Panel title ("Guided Editor [No bad credit checks]" in this example).

The cursor will change to indicate that it is positioned correctly over the Panel title. Press and hold the left mouse button. Drag the mouse to the required location. The target position is indicated with a pale blue rectangle. Different positions can be chosen by hovering the mouse pointer over the different blue arrows.

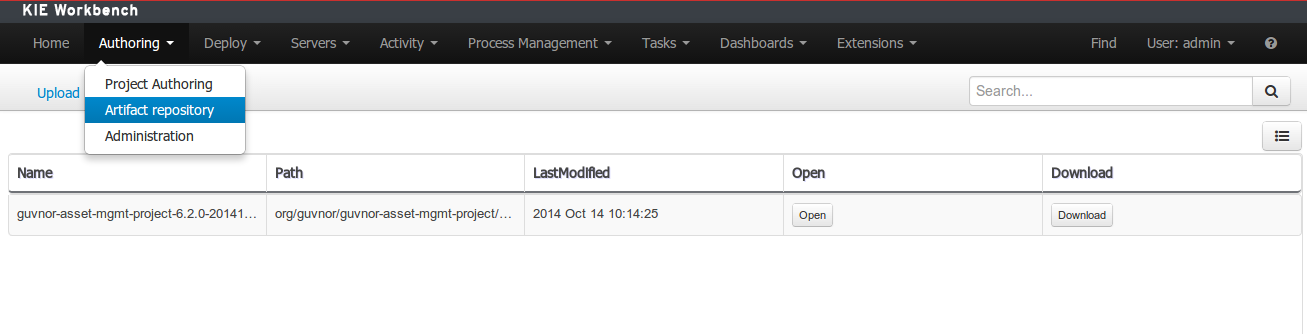

Projects often need external artifacts in their classpath in order to build, for example domain model JARs. The artifact repository holds those artifacts.

The Artifact Repository is a full-blown Maven repository. It follows the semantics of a Maven remote repository (all snapshots are timestamped), but it is often stored on the local hard drive.

By default the artifact repository is stored under $WORKING_DIRECTORY/repositories/kie, but it can be overridden with the system property -Dorg.guvnor.m2repo.dir. There is only one Maven repository per installation.

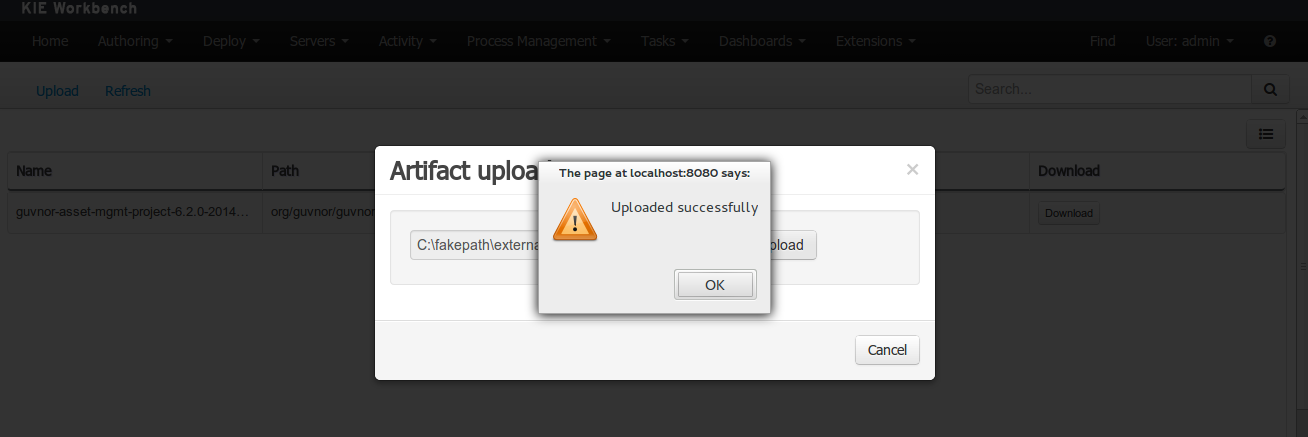

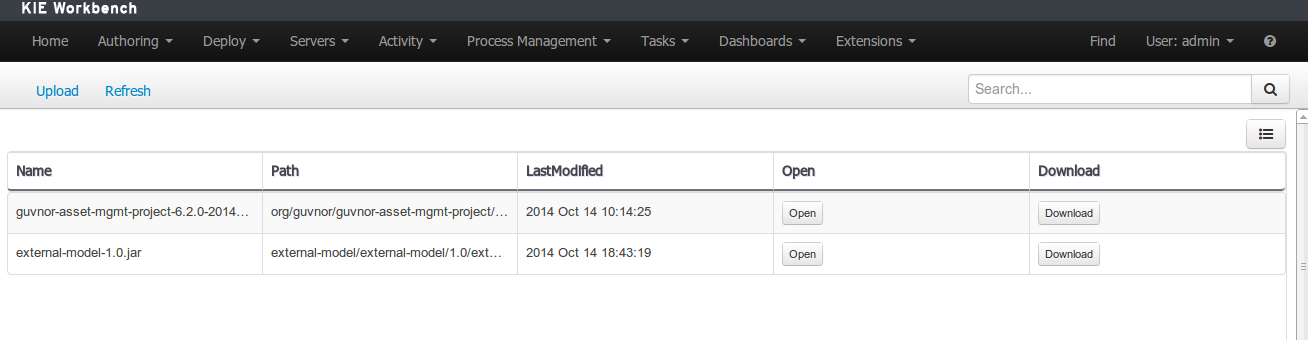

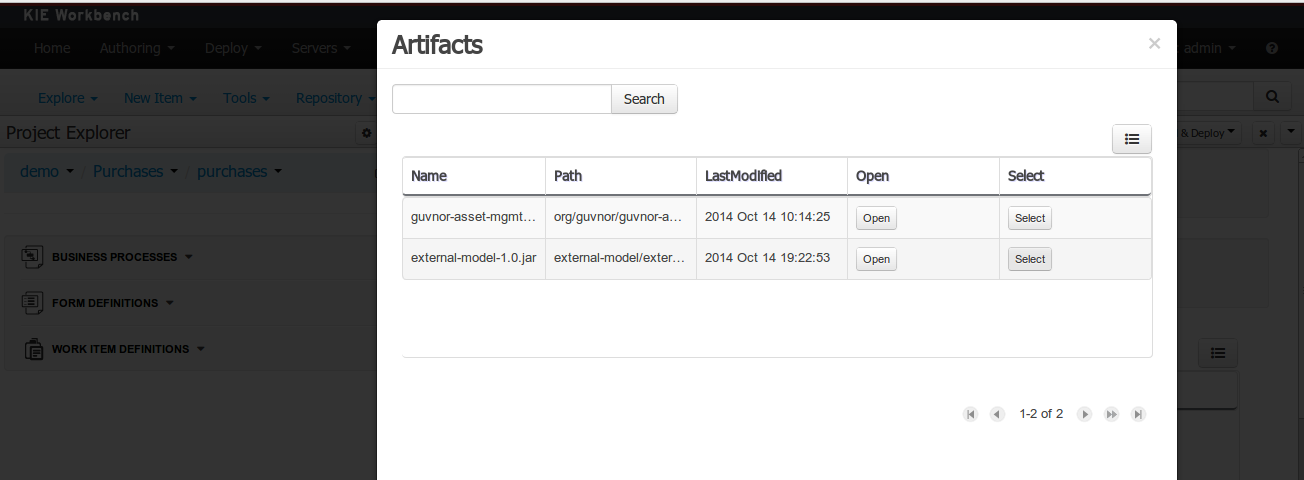

The Artifact Repository screen shows a list of the artifacts in the Maven repository:

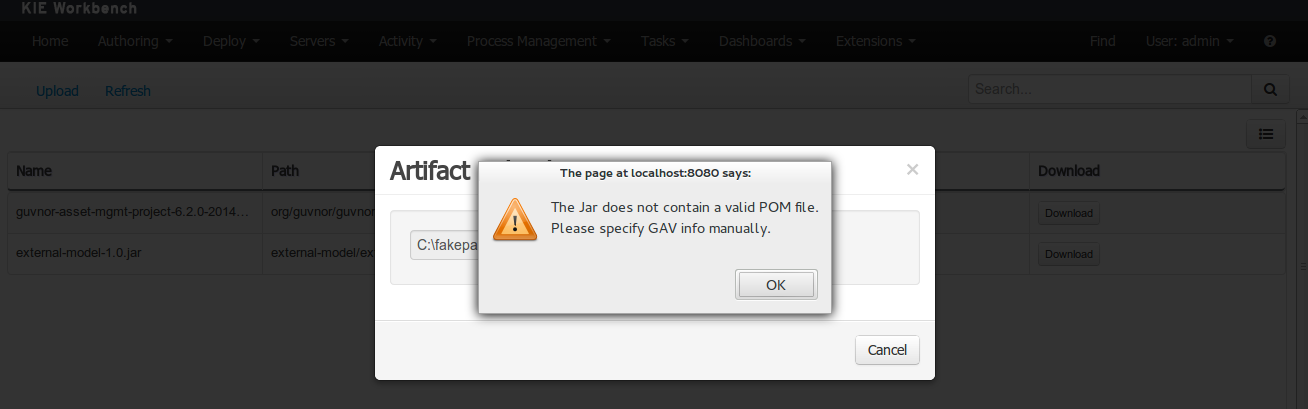

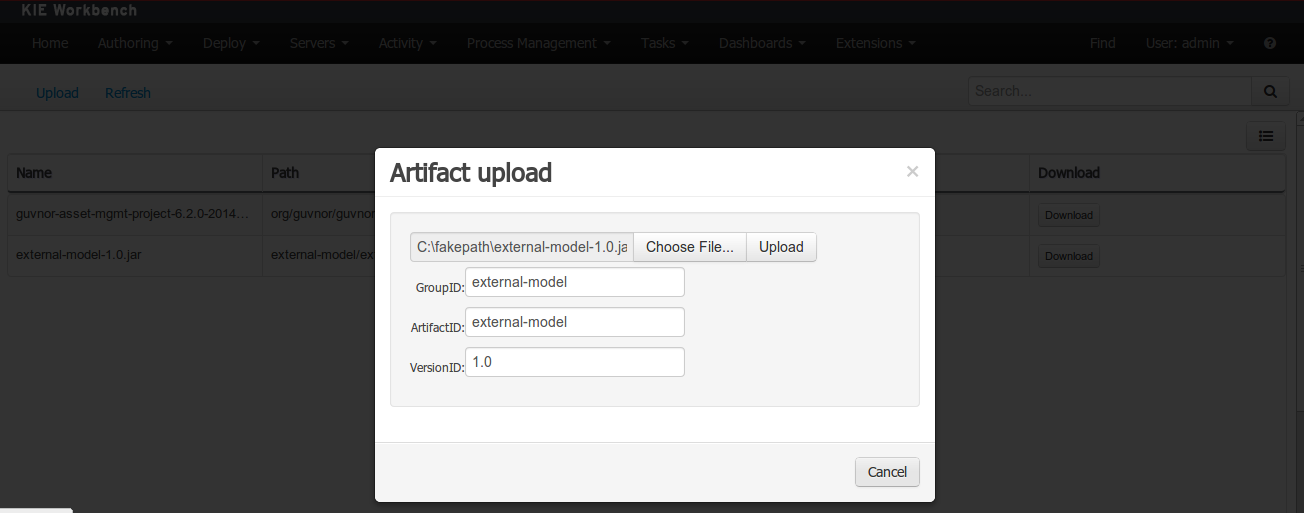

To add a new artifact to that Maven repository, either:

Use the upload button and select a JAR. If the JAR contains a POM file under META-INF/maven (which every JAR built by Maven has), no further information is needed. Otherwise, a groupId, artifactId and version need to be given too.

Using Maven, mvn deploy to that Maven repository. Refresh the list to make it show up.
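To check in advance whether a JAR carries its own POM, and therefore needs no extra coordinates on upload, something like the following Java sketch could be used. The class and method names are hypothetical. The sketch relies on the Maven convention of packaging the POM at META-INF/maven/groupId/artifactId/pom.xml inside the JAR, and it does not claim to mirror the workbench's own upload logic.

```java
import java.io.IOException;
import java.util.jar.JarFile;

// Hypothetical helper, not part of the workbench.
class PomLocator {

    // True if the entry name looks like a POM embedded by Maven.
    static boolean isEmbeddedPom(String entryName) {
        return entryName.startsWith("META-INF/maven/") && entryName.endsWith("/pom.xml");
    }

    // Scans all entries of the JAR at the given path for an embedded POM.
    static boolean hasEmbeddedPom(String jarPath) throws IOException {
        try (JarFile jar = new JarFile(jarPath)) {
            return jar.stream().anyMatch(entry -> isEmbeddedPom(entry.getName()));
        }
    }

    public static void main(String[] args) throws IOException {
        if (args.length == 1) {
            System.out.println(args[0] + " has embedded POM: " + hasEmbeddedPom(args[0]));
        } else {
            System.out.println("usage: java PomLocator.java <path-to-jar>");
        }
    }
}
```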

Note

This remote Maven repository is relatively simple. It does not support proxying, mirroring, ... like Nexus or Archiva.

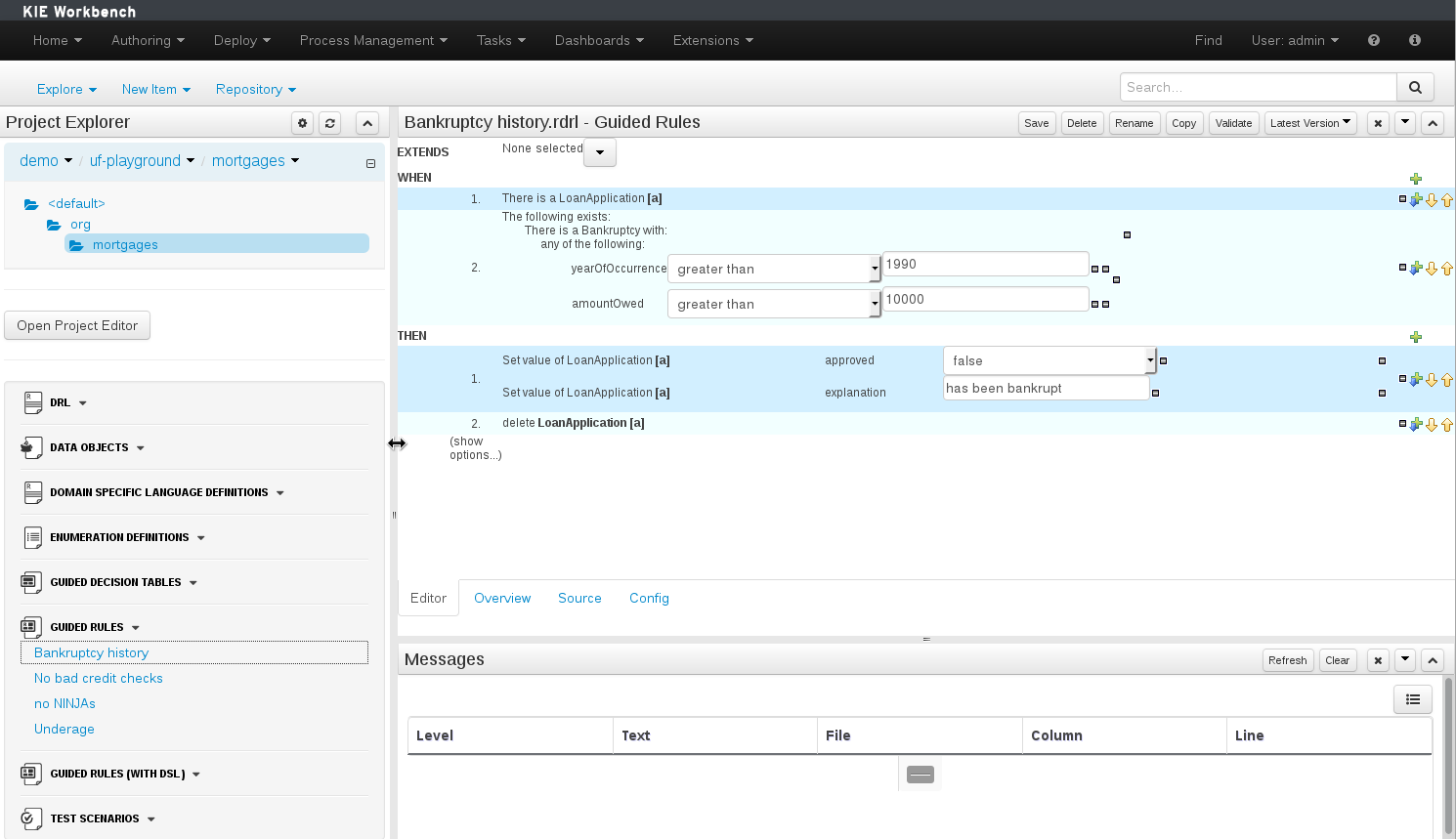

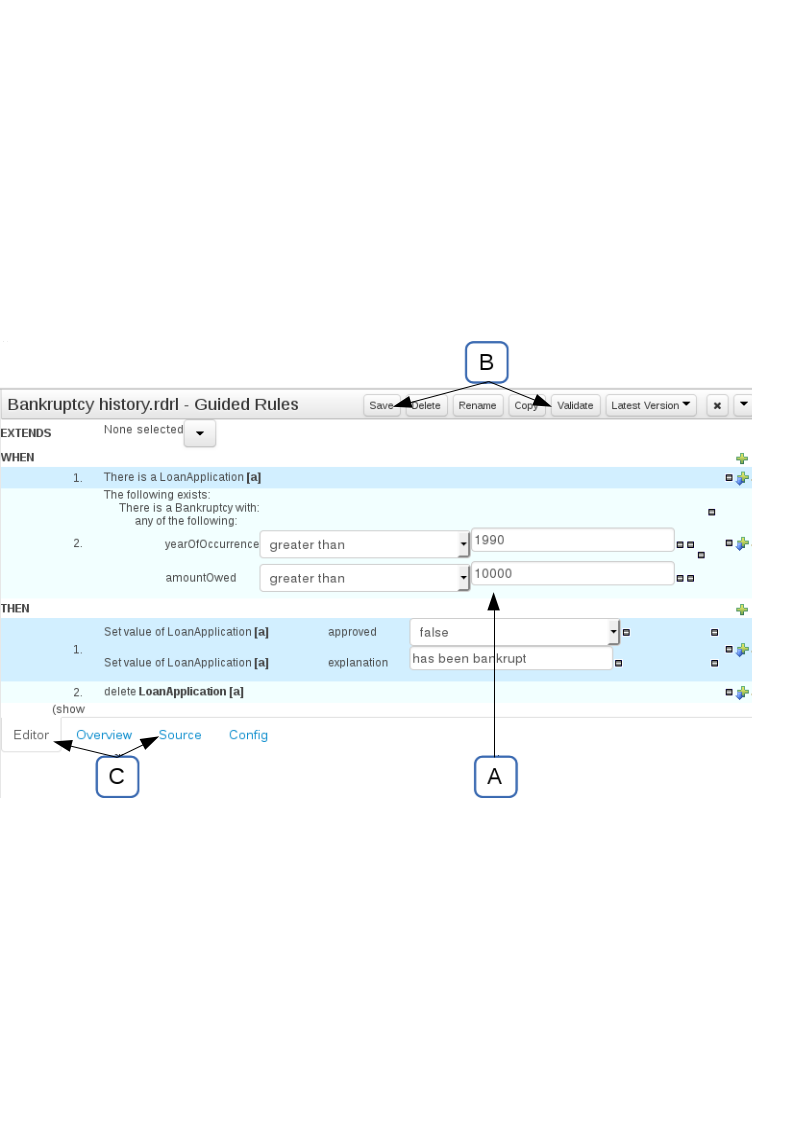

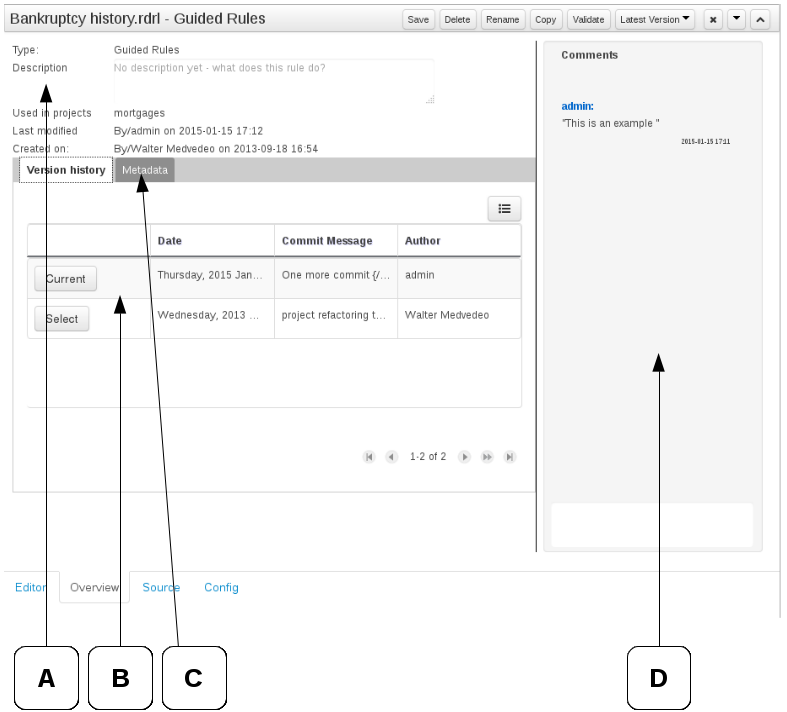

The Asset Editor is the principal component of the workbench user interface. It consists of two main views: Editor and Overview.

The views

A : The editing area - exactly what form the editor takes depends on the Asset type.

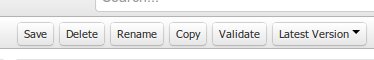

B : This menu bar contains various actions for the Asset, such as saving, renaming and copying.

C : Different views for asset content or asset information.

Editor shows the main editor for the asset

Overview contains the metadata and conversation views for this editor, explained in more detail below.

Source shows the asset in plain DRL. Note: This tab is only visible if the asset content can be generated into DRL.

Config contains the model imports used by the asset.

Overview

A : General information about the asset and the asset's description.

"Type:" The format name of the type of Asset.

"Description:" Description for the asset.

"Used in projects:" Names the projects where this rule is used.

"Last Modified:" Who made the last change and when.

"Created on:" Who created the asset and when.

B : Version history for the asset. Selecting a version loads the selected version into this editor.

C : Meta data (from the "Dublin Core" standard)

D : Comments regarding the development of the Asset can be recorded here.

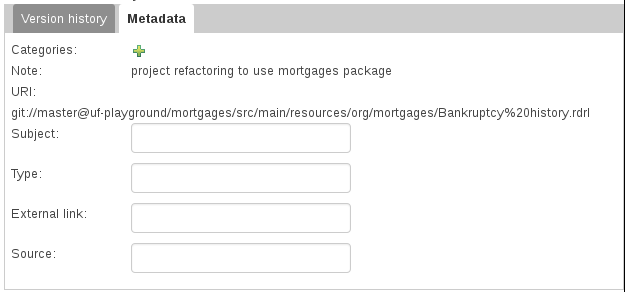

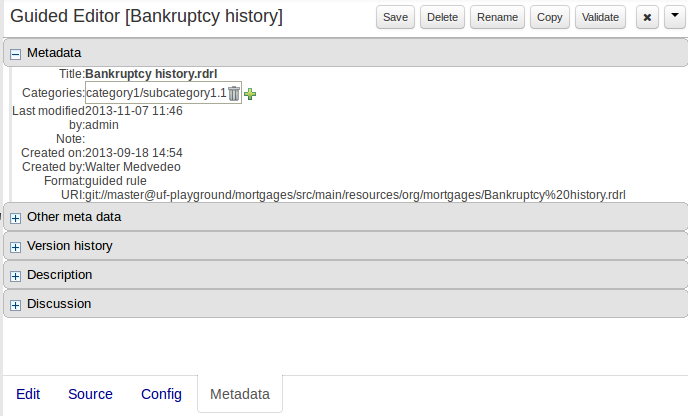

Metadata

A : Meta data:

"Categories:" A deprecated feature for grouping the assets.

"Note:" A comment made when the Asset was last updated (i.e. why a change was made)

"URI:" URI to the asset inside the Git repository.

"Subject/Type/External link/Source" : Other miscellaneous meta data for the Asset.

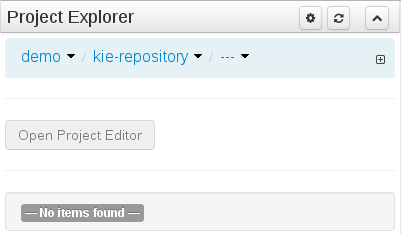

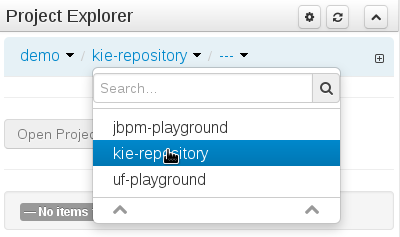

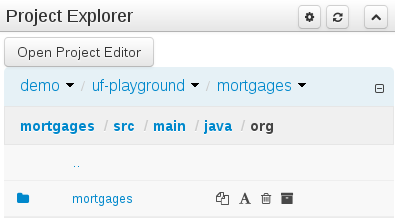

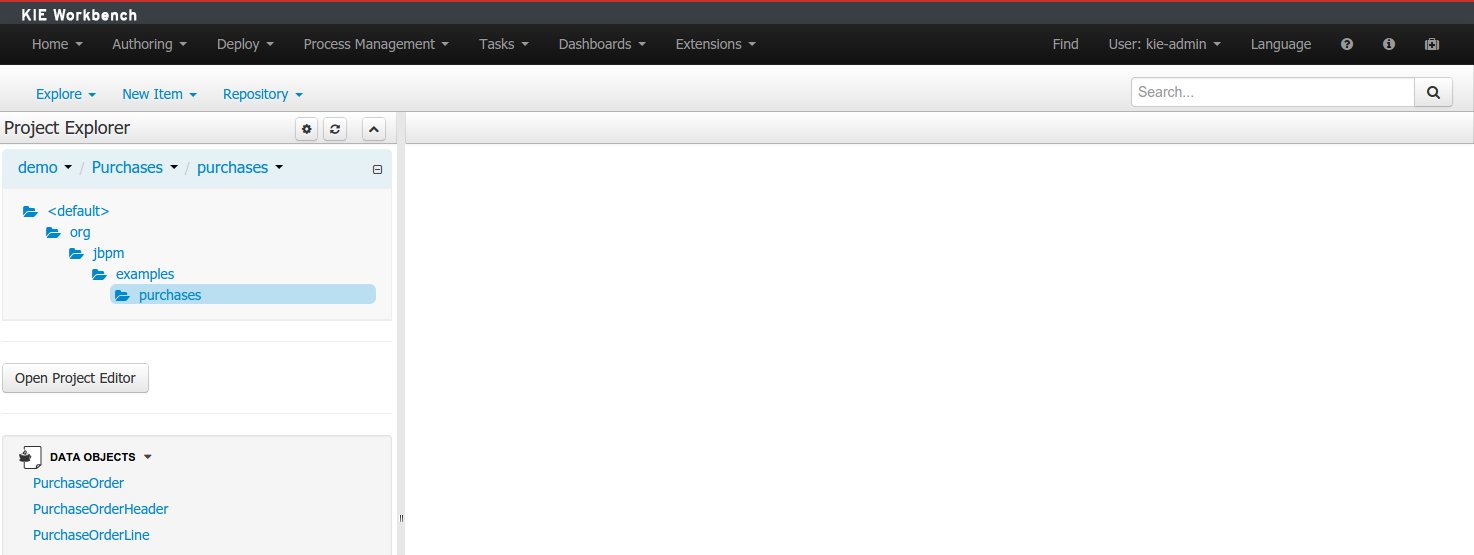

The Project Explorer provides the ability to browse different Organizational Units, Repositories, Projects and their files.

The initial view could be empty when first opened.

The user may have to select an Organizational Unit, Repository and Project from the drop-down boxes.

The default configuration hides Package details from view.

In order to reveal packages click on the icon as indicated in the following screen-shot.

After a suitable combination of Organizational Unit, Repository, Project and Package have been selected the Project Explorer will show the contents. The exact combination of selections depends wholly on the structures defined within the Workbench installation and projects. Each section contains groups of related files.

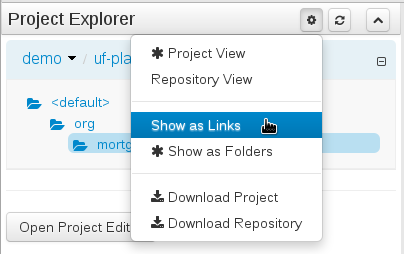

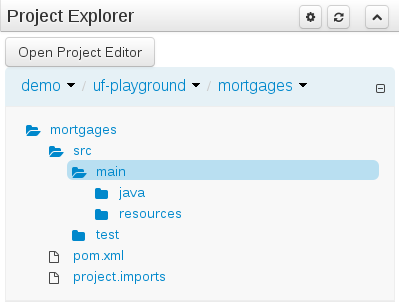

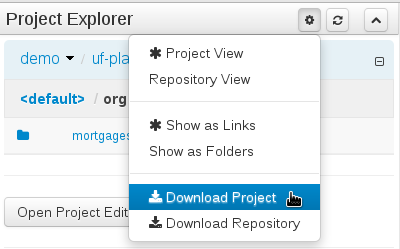

Project Explorer supports multiple views.

Project View

A simplified view of the underlying project structure. Certain system files are hidden from view.

Repository View

A complete view of the underlying project structure including all files; either user-defined or system generated.

Views can be selected by clicking on the icon within the Project Explorer, as shown below.

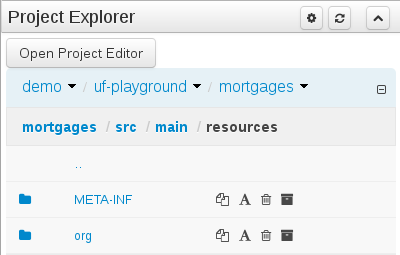

Both Project and Repository Views can be further refined by selecting either "Show as Folders" or "Show as Links".

Download: Download and Download Repository make it possible to download the project or repository as a zip file.

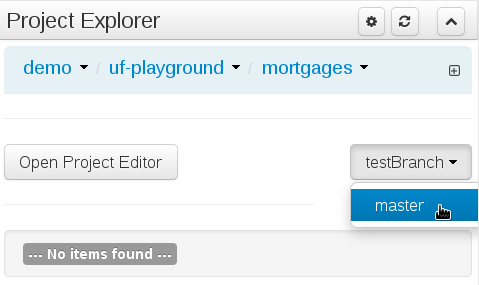

A branch selector will be visible if the repository has more than a single branch.

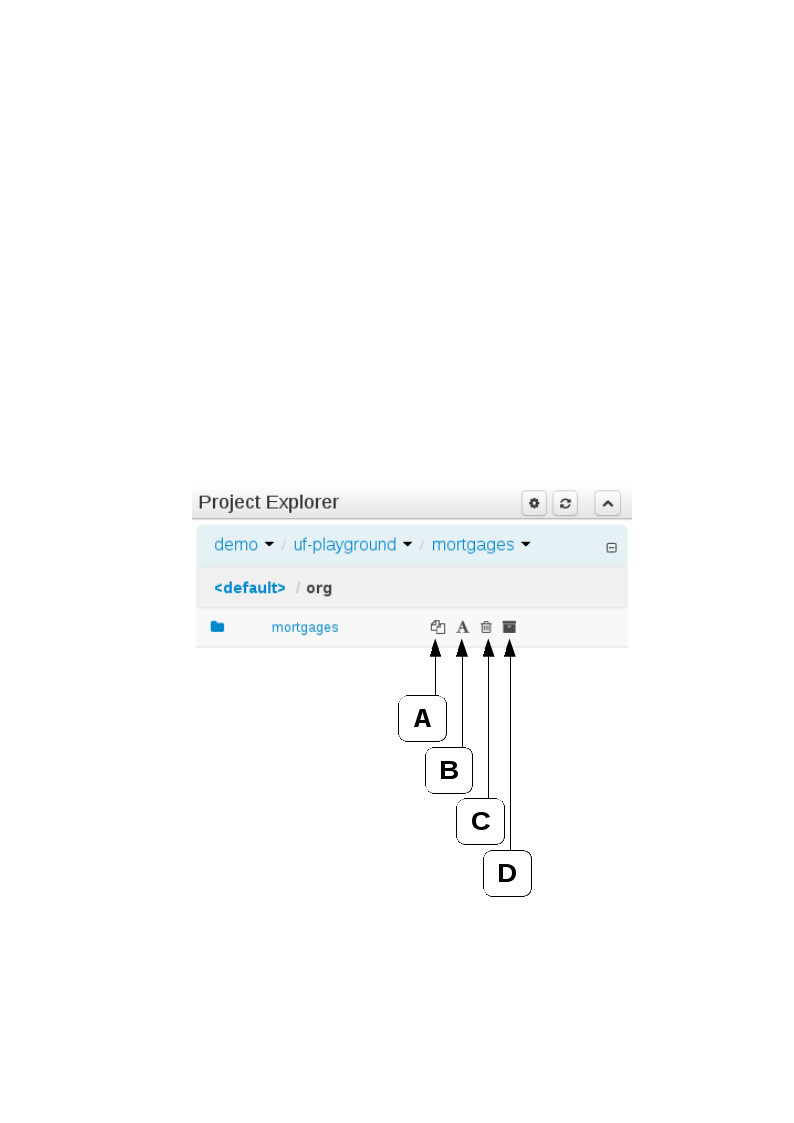

Copy, rename and delete actions are available in Links mode, for packages (in Project View) and for files and directories (in Repository View). The download action is available for directories; it downloads the selected directory as a zip file.

A : Copy

B : Rename

C : Delete

D : Download

Warning

The Workbench roadmap includes refactoring and impact analysis tools, but they are not currently available. Until both tools are provided, make sure that your changes (copy/rename/delete) to packages, files or directories do not have a major impact on your project.

If a change has an unexpected impact, the Workbench allows you to restore your repository using the Repository editor.

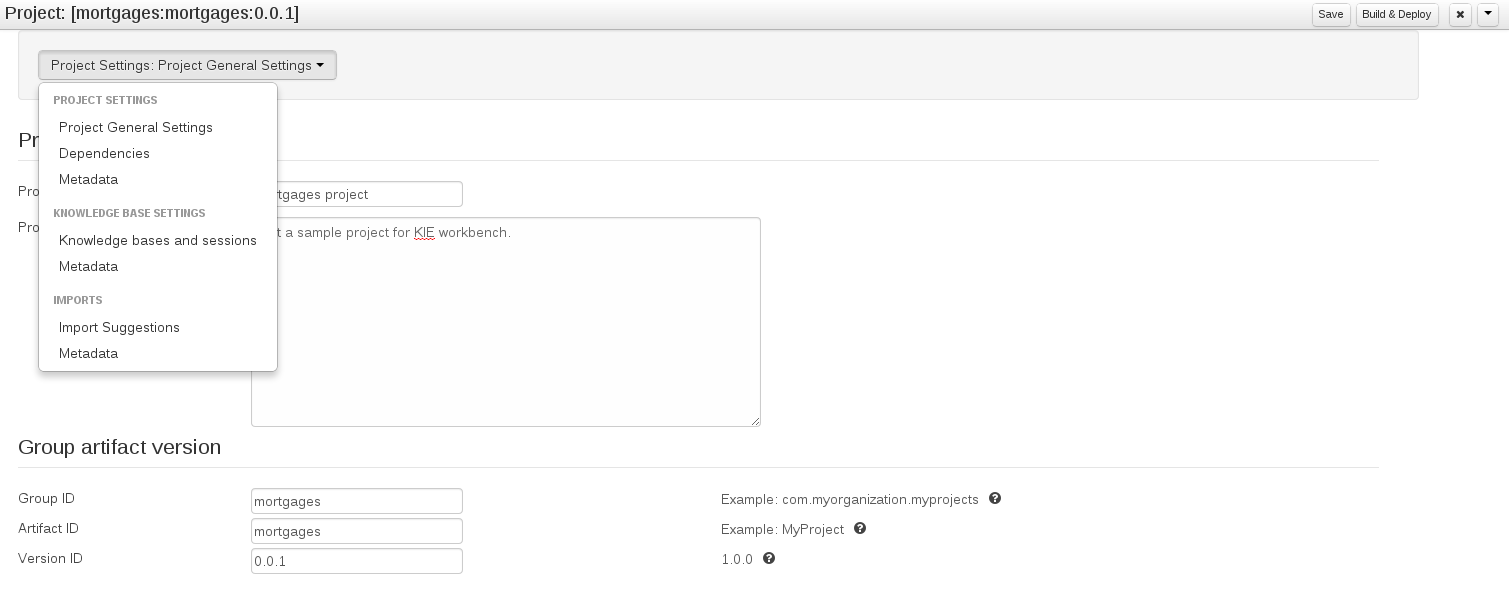

The Project Editor screen can be accessed from Project Explorer. Project Editor shows the settings for the currently active project.

Unlike most of the workbench editors, the project editor edits more than one file, showing everything that is needed for configuring the KIE project in one place.

Build & Deploy builds the current project and deploys the KJAR into the workbench internal Maven repository.

Project Settings edits the pom.xml file used by Maven.

General settings provide tools for project name and GAV-data (Group, Artifact, Version). GAV values are used as identifiers to differentiate projects and versions of the same project.

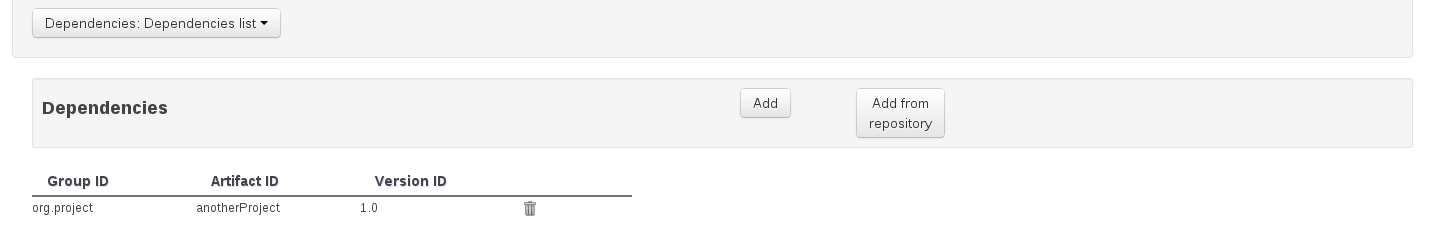

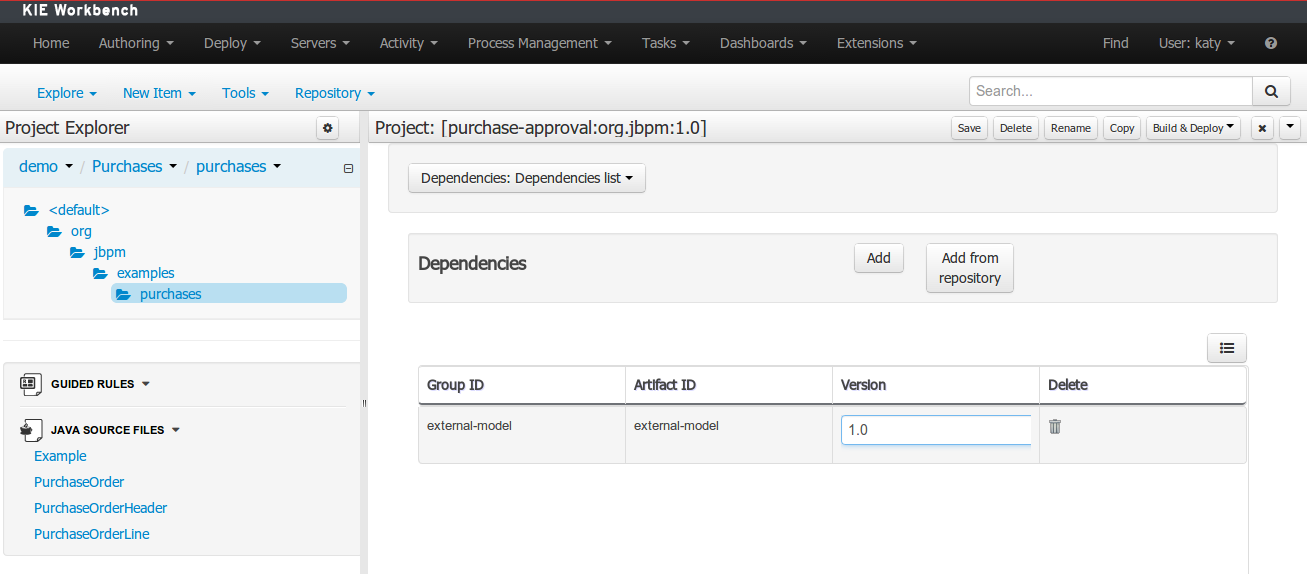

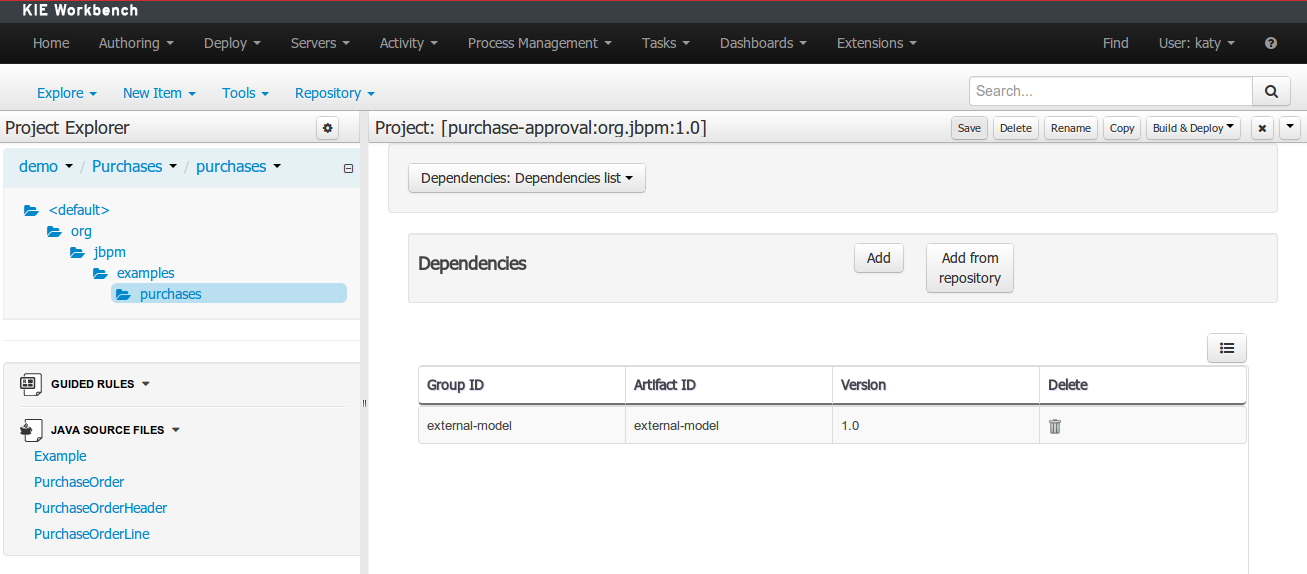

The project may have any number of either internal or external dependencies. A dependency is a project that has been built and deployed to a Maven repository. Internal dependencies are projects built and deployed in the same workbench as the project. External dependencies are retrieved from repositories outside of the current workbench. Each dependency uses the GAV values to specify the project name and version that is used by the project.
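As a small illustration of how GAV values identify a project and distinguish its versions, here is a Java sketch. The Gav class is hypothetical, and the colon-separated groupId:artifactId:version notation is a common Maven shorthand assumed for this example; the workbench itself stores these values in pom.xml.

```java
// Hypothetical value class for illustration only.
class Gav {
    final String groupId;
    final String artifactId;
    final String version;

    Gav(String groupId, String artifactId, String version) {
        this.groupId = groupId;
        this.artifactId = artifactId;
        this.version = version;
    }

    // Parses the shorthand "groupId:artifactId:version".
    static Gav parse(String coordinates) {
        String[] parts = coordinates.split(":");
        if (parts.length != 3) {
            throw new IllegalArgumentException("Expected groupId:artifactId:version but got: " + coordinates);
        }
        return new Gav(parts[0], parts[1], parts[2]);
    }

    // Two GAVs refer to the same project when group and artifact match, whatever the version.
    boolean sameProject(Gav other) {
        return groupId.equals(other.groupId) && artifactId.equals(other.artifactId);
    }

    public static void main(String[] args) {
        Gav current = Gav.parse("org.example:mortgages:1.0");
        Gav next = Gav.parse("org.example:mortgages:1.1");
        System.out.println("same project: " + current.sameProject(next));
        System.out.println("same version: " + current.version.equals(next.version));
    }
}
```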

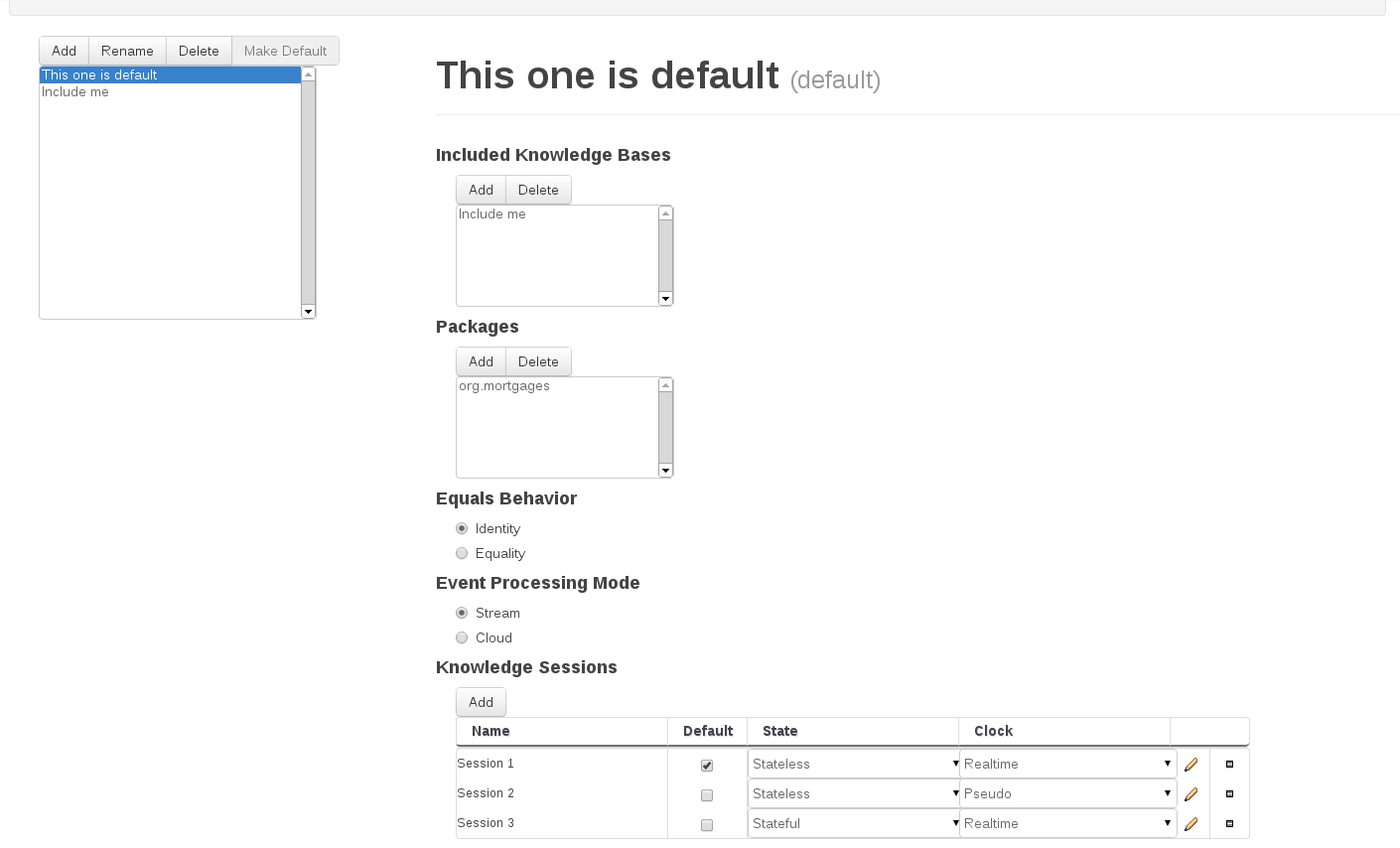

Knowledge Base Settings edits the kmodule.xml file used by Drools.

Note

For more information about the Knowledge Base properties, check the Drools Expert documentation for kmodule.xml.

Knowledge bases and sessions lists the knowledge bases and the knowledge sessions specified for the project.

Lists all the knowledge bases by name. Only one knowledge base can be set as default.

A knowledge base can include other knowledge bases. The models, rules and any other content in the included knowledge base will be visible and usable by the currently selected knowledge base.

Rules and models are stored in packages. The packages property specifies what packages are included into this knowledge base.

Equals behavior is explained in the Drools Expert part of the documentation.

Event processing mode is explained in the Drools Fusion part of the documentation.

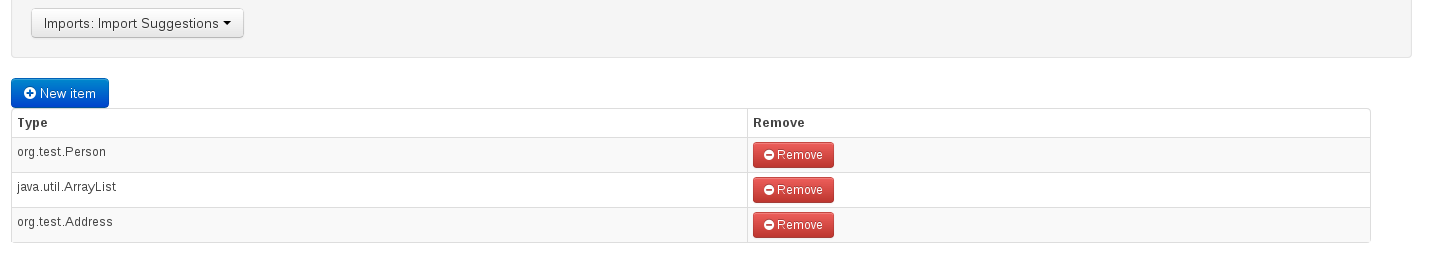

Settings edits the project.imports file used by the workbench editors.

Import Suggestions lists imports that are offered as suggestions in the workbench's guided editors. This makes the workbench easier to work with, as there is no need to type each import in each file that uses it.

Note

Unlike in the previous version of Guvnor, the imports listed in the import suggestions are not automatically added into the knowledge base or into the packages of the workbench. Each import needs to be explicitly added into each file.

The Workbench provides a common and consistent service for users to understand whether files authored within the environment are valid.

The Problems Panel shows real-time validation results of assets within a Project.

When a Project is selected from the Project Explorer the Problems Panel will refresh with validation results of the chosen Project.

When files are created, saved or deleted, the Problems Panel content will update to show new validation errors, or to remove existing ones if a file was deleted.

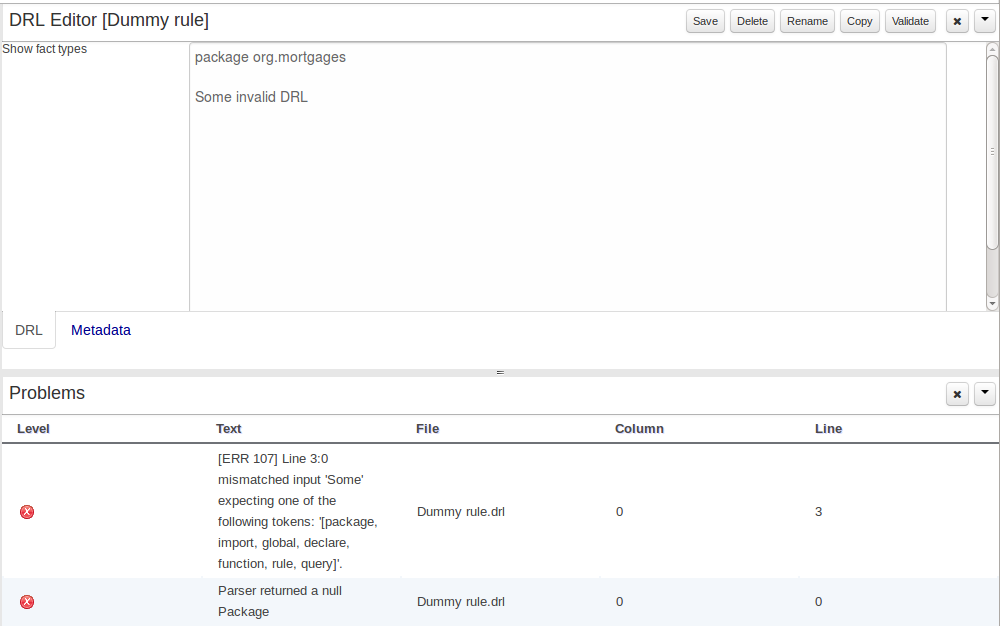

Figure 9.44. The Problems Panel

Here an invalid DRL file has been created and saved.

The Problems Panel shows the validation errors.

By default, a data model is always constrained to the context of a project. For the purpose of this tutorial, we will assume that a correctly configured project already exists and the authoring perspective is open.

To start the creation of a data model inside a project, take the following steps:

From the home panel, select the authoring perspective and use the project explorer to browse to the given project.

Open the Data Modeller tool by clicking on a Data Object file, or using the "New Item -> Data Object" menu option.

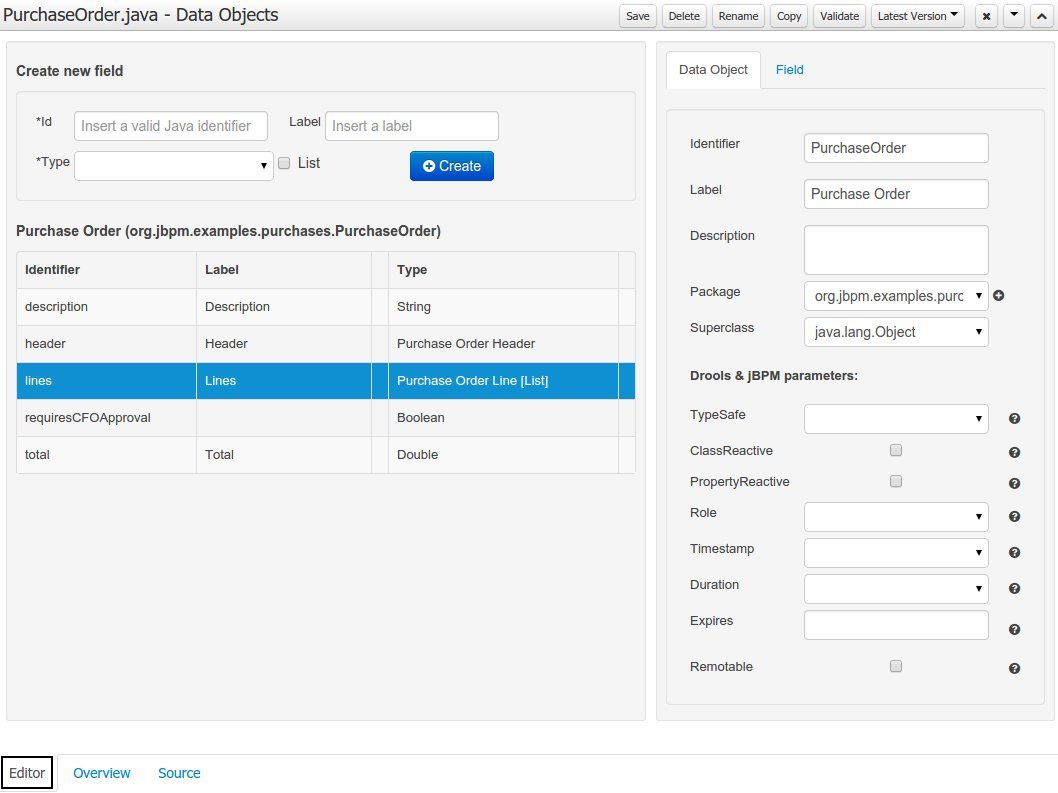

This will start up the Data Modeller tool, which has the following general aspect:

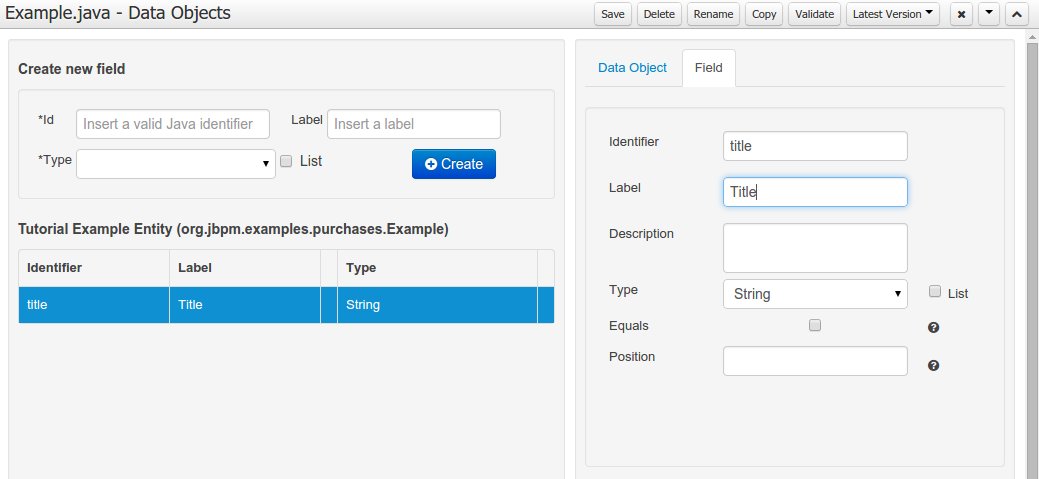

The "Editor" tab is divided into the following sections:

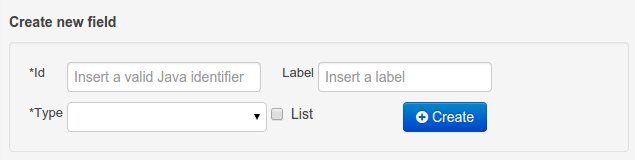

The new field section is dedicated to the creation of new fields.

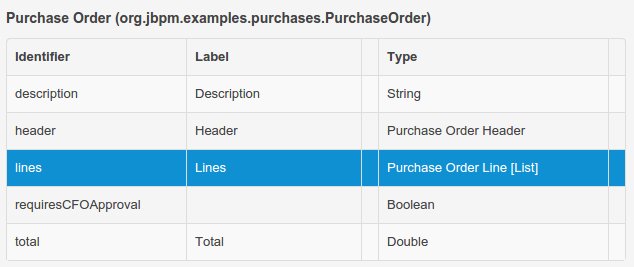

The Data Object's "field browser" section displays a list with the data object fields.

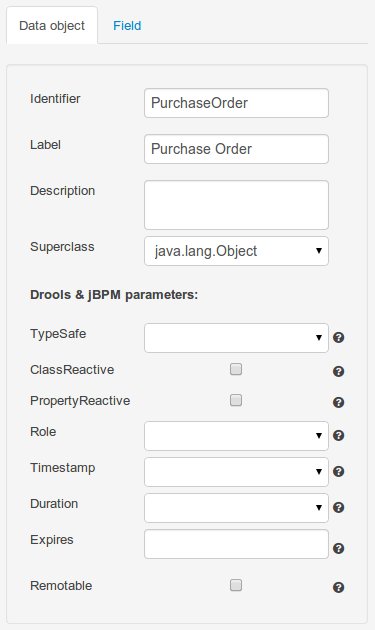

The "Data Object / field property editor" section. This is the rightmost section of the Data Modeller editor and displays a tabbed pane. The "Data object" tab allows the user to edit the class level properties of the data object, and the "Field" tab allows editing the properties of the currently selected field.

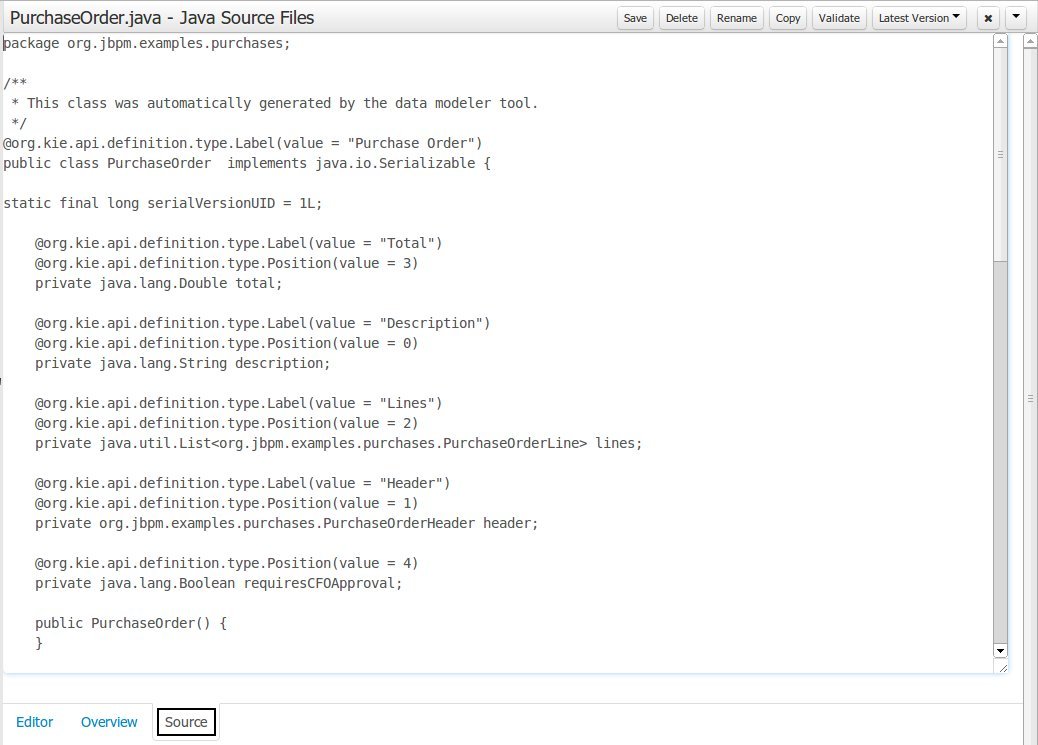

The "Source" tab shows an editor that allows the visualization and modification of the generated java code.

Round trips between the "Editor" and "Source" tabs are possible, and source code is preserved. This means that no matter where the Java code was generated (e.g. Eclipse, Data modeller), the data modeller will only update the code blocks necessary to keep the model up to date.

The "Overview" tab shows the same standard metadata and version information as the other workbench editors.

A data model consists of data objects which are a logical representation of some real-world data. Such data objects have a fixed set of modeller (or application-owned) properties, such as its internal identifier, a label, description, package etc. Besides those, a data object also has a variable set of user-defined fields, which are an abstraction of a real-world property of the type of data that this logical data object represents.

Creating a data object can be achieved using the workbench "New Item - Data Object" menu option.

Both resource name and location are mandatory parameters. When the "Ok" button is pressed, a new Java file will be created and a new editor instance will be opened for editing the file.

Once the data object has been created, it now has to be completed by adding user-defined properties to its definition. This can be achieved by providing the required information in the "Create new field" section (see fig. "New field creation"), and clicking on the "Create" button when finished. The following fields can (or must) be filled out:

The field's internal identifier (mandatory). The value of this field must be unique per data object, i.e. if the proposed identifier already exists within current data object, an error message will be displayed.

A label (optional): as with the data object definition, the user can define a user-friendly label for the data object field which is about to be created. This has no further implications on how fields from objects of this data object will be treated. If a label is defined, then this is how the field will be displayed throughout the data modeller tool.

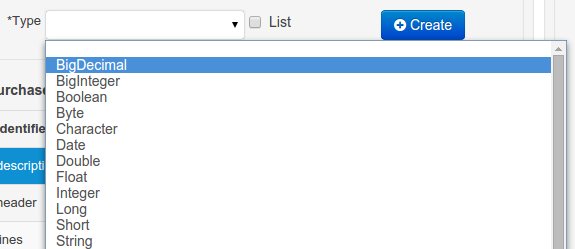

A field type (mandatory): each data object field needs to be assigned a type.

This type can be one of the following:

A 'primitive java object' type: these include most of the object equivalents of the standard Java primitive types, such as Boolean, Short, Float, etc, as well as String, Date, BigDecimal and BigInteger.

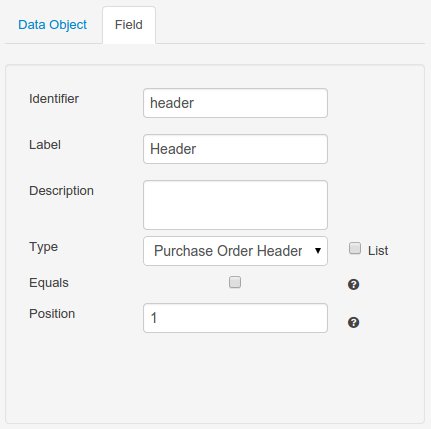

A 'data object' type: any user defined data object automatically becomes a candidate to be defined as a field type of another data object, thus enabling the creation of relationships between them. A data object field can be created either in 'single' or in 'multiple' form, the latter implying that the field will be defined as a collection of this type, which is indicated by selecting the "List" checkbox.

A 'primitive java' type: these include java primitive types byte, short, int, long, float, double, char and boolean.

When finished introducing the initial information for a new field, clicking the 'Create' button will add the newly created field to the end of the data object's fields table below:

The new field will also automatically be selected in the data object's field list, and its properties will be shown in the Field tab of the Property editor. The latter facilitates completion of some additional properties of the new field by the user (see below).

At any time, any field (without restrictions) can be deleted from a data object definition by clicking on the corresponding 'x' icon in the data object's fields table.

As stated before, both data objects as well as fields require some of their initial properties to be set upon creation. These are by no means the only properties data objects and fields have. Below we will give a detailed description of the additional data object and field properties.

Description: this field allows the user to introduce some kind of description for the current data object, for documentation purposes only. As with the label property, this is conceptual information that will not influence the use or treatment of this data object or its instances in any way.

TypeSafe: this property enables or disables type-safe behaviour for the current type. By default all type declarations are compiled with type safety enabled. (See Drools for more information on this matter).

ClassReactive: this property marks the type to be treated as "Class Reactive" by the Drools engine. (See Drools for more information on this matter).

PropertyReactive: this property marks the type to be treated as "Property Reactive" by the Drools engine. (See Drools for more information on this matter).

Role: this property configures how the Drools engine should handle instances of this type: either as regular facts or as events. By default all types are handled as regular facts, so for the time being the only value that can be set is "Event", which declares that this type should be handled as an event. (See Drools Fusion for more information on this matter).

Timestamp: this property configures the "timestamp" for an event by selecting one of its attributes. If set, the engine will use the timestamp from the given attribute instead of reading it from the Session Clock. If not, the engine will automatically assign a timestamp to the event. (See Drools Fusion for more information on this matter).

Duration: this property configures the "duration" for an event by selecting one of its attributes. If set, the engine will use the duration from the given attribute instead of using the default event duration = 0. (See Drools Fusion for more information on this matter).

Expires: this property configures the "time offset" for an event's expiration. If set, this value must be a temporal interval in the form [#d][#h][#m][#s][#[ms]], where [ ] means an optional parameter and # means a numeric value. For example, 1d2h means one day and two hours. (See Drools Fusion for more information on this matter).

Remotable: if checked, this property makes the data object available for use with jBPM remote services such as REST, JMS and WS. (See jBPM for more information on this matter).
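To make the Expires interval format more concrete, the following Java sketch converts a value such as 1d2h into milliseconds. It is one possible reading of the [#d][#h][#m][#s][#[ms]] pattern described above, written for illustration only; the class name is hypothetical and the Drools engine's own parser may accept or reject inputs differently.

```java
import java.util.regex.Matcher;
import java.util.regex.Pattern;

// Hypothetical parser illustrating the interval format, not Drools code.
class ExpiresParser {
    // Each unit is optional; the trailing number may carry an optional "ms" suffix.
    private static final Pattern INTERVAL = Pattern.compile(
            "(?:(\\d+)d)?(?:(\\d+)h)?(?:(\\d+)m)?(?:(\\d+)s)?(?:(\\d+)(?:ms)?)?");

    // Milliseconds per unit, in the same order as the capture groups above.
    private static final long[] UNIT_MILLIS = {86_400_000L, 3_600_000L, 60_000L, 1_000L, 1L};

    static long toMillis(String interval) {
        Matcher matcher = INTERVAL.matcher(interval);
        if (interval.isEmpty() || !matcher.matches()) {
            throw new IllegalArgumentException("Not a temporal interval: " + interval);
        }
        long total = 0;
        for (int i = 0; i < UNIT_MILLIS.length; i++) {
            String value = matcher.group(i + 1);
            if (value != null) {
                total += Long.parseLong(value) * UNIT_MILLIS[i];
            }
        }
        return total;
    }

    public static void main(String[] args) {
        System.out.println("1d2h   = " + toMillis("1d2h") + " ms");
        System.out.println("1m30s  = " + toMillis("1m30s") + " ms");
        System.out.println("500ms  = " + toMillis("500ms") + " ms");
    }
}
```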

Description: this field allows the user to introduce some kind of description for the current field, for documentation purposes only. As with the label property, this is conceptual information that will not influence the use or treatment of this data object or its instances in any way.

Equals: checking this property for a data object field implies that it will be taken into account, at the code generation level, for the creation of both the equals() and hashCode() methods in the generated Java class. We will explain this in more detail in the following section.

Position: this field requires a zero or positive integer. When set, this field will be interpreted by the Drools engine as a positional argument (see the section below and also the Drools documentation for more information on this subject).
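The temporal interval format accepted by the Expires property can be illustrated with a small parser. The following is a minimal sketch and not the parser that Drools itself uses: the class name TemporalIntervalSketch and the method toMillis are hypothetical, and the sketch assumes that the units must appear in the order d, h, m, s, ms and that a trailing number without a unit is read as milliseconds.

```java
import java.util.regex.Matcher;
import java.util.regex.Pattern;

// Hypothetical helper: converts an interval such as "1d2h" into milliseconds.
final class TemporalIntervalSketch {

    // One optional group per unit, in the order days, hours, minutes, seconds, milliseconds.
    // The "ms" suffix of the last group is itself optional.
    private static final Pattern INTERVAL = Pattern.compile(
            "(?:(\\d+)d)?(?:(\\d+)h)?(?:(\\d+)m)?(?:(\\d+)s)?(?:(\\d+)(?:ms)?)?");

    static long toMillis(String text) {
        Matcher m = INTERVAL.matcher(text);
        // Every part is optional, so an empty string has to be rejected explicitly.
        if (text.isEmpty() || !m.matches()) {
            throw new IllegalArgumentException("Not a temporal interval: " + text);
        }
        return part(m, 1) * 86_400_000L
                + part(m, 2) * 3_600_000L
                + part(m, 3) * 60_000L
                + part(m, 4) * 1_000L
                + part(m, 5);
    }

    // Returns the numeric value of a group, or 0 when the unit was omitted.
    private static long part(Matcher m, int group) {
        String digits = m.group(group);
        return digits == null ? 0L : Long.parseLong(digits);
    }

    public static void main(String[] args) {
        System.out.println(toMillis("1d2h")); // 93600000 (one day and two hours)
        System.out.println(toMillis("2d"));   // 172800000
    }
}
```

A value that does not follow the expected unit order, such as "2h1d", is rejected by this sketch with an IllegalArgumentException.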

The data model in itself is merely a visual tool that allows the user to define high-level data structures, for them to interact with the Drools Engine on the one hand, and the jBPM platform on the other. In order for this to become possible, these high-level visual structures have to be transformed into low-level artifacts that can effectively be consumed by these platforms. These artifacts are Java POJOs (Plain Old Java Objects), and they are generated every time the data model is saved, by pressing the "Save" button in the top Data Modeller Menu. Additionally, when the user switches between the "Editor" and "Source" tabs, the code is regenerated to keep the Source view consistent with the Editor view, and vice versa.

The resulting code is generated according to the following transformation rules:

The data object's identifier property will become the Java class's name. It therefore needs to be a valid Java identifier.

The data object's package property becomes the Java class's package declaration.

The data object's superclass property (if present) becomes the Java class's extension declaration.

The data object's label and description properties will translate into the Java annotations "@org.kie.api.definition.type.Label" and "@org.kie.api.definition.type.Description", respectively. These annotations are merely a way of preserving the associated information, and as yet are not processed any further.

The data object's role property (if present) will be translated into the "@org.kie.api.definition.type.Role" Java annotation, that IS interpreted by the application platform, in the sense that it marks this Java class as a Drools Event Fact-Type.

The data object's type safe property (if present) will be translated into the "@org.kie.api.definition.type.TypeSafe" Java annotation. (See Drools.)

The data object's class reactive property (if present) will be translated into the "@org.kie.api.definition.type.ClassReactive" Java annotation. (See Drools.)

The data object's property reactive property (if present) will be translated into the "@org.kie.api.definition.type.PropertyReactive" Java annotation. (See Drools.)

The data object's timestamp property (if present) will be translated into the "@org.kie.api.definition.type.Timestamp" Java annotation. (See Drools.)

The data object's duration property (if present) will be translated into the "@org.kie.api.definition.type.Duration" Java annotation. (See Drools.)

The data object's expires property (if present) will be translated into the "@org.kie.api.definition.type.Expires" Java annotation. (See Drools.)

The data object's remotable property (if present) will be translated into the "@org.kie.api.remote.Remotable" Java annotation. (See jBPM.)

A standard Java default (or no parameter) constructor is generated, as well as a full parameter constructor, i.e. a constructor that accepts as parameters a value for each of the data object's user-defined fields.

The data object's user-defined fields are translated into Java class fields, each one of them with its own getter and setter method, according to the following transformation rules:

The data object field's identifier will become the Java field identifier. It therefore needs to be a valid Java identifier.

The data object field's type is directly translated into the Java class's field type. In case the field was declared to be multiple (i.e. 'List'), then the generated field is of the "java.util.List" type.

The equals property: when it is set for a specific field, the corresponding class field is annotated with the "@org.kie.api.definition.type.Key" annotation, which is interpreted by the Drools Engine, and it 'participates' in the generated equals() method, which overrides the equals() method of the Object class. This means that if the field is of a 'primitive' type, the equals method simply compares its value with the value of the corresponding field in another instance of the class. If the field is a sub-entity or a collection type, the equals method calls the equals method of the corresponding data object's Java class, or of the java.util.List standard Java class, respectively.

If the equals property is checked for ANY of the data object's user-defined fields, then in addition to the default generated constructors another constructor is generated, accepting as parameters all of the fields that were marked with Equals. Furthermore, generating the equals() method implies that the Object class's hashCode() method is also overridden, in such a manner that it calls the hashCode() methods of the corresponding Java class types (be they 'primitive' or user-defined types) for all the fields that were marked with Equals in the Data Model.

The position property: this field property is automatically set for all user-defined fields, starting from 0, and incrementing by 1 for each subsequent new field. However, the user can freely change the positions of the fields. At code generation time this property is translated into the "@org.kie.api.definition.type.Position" annotation, which can be interpreted by the Drools Engine. Also, the established property order determines the order of the constructor parameters in the generated Java class.
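Because both the data object's identifier and each field identifier end up as Java names, it can help to check candidate names before entering them. The following is a minimal sketch of such a check, not part of the Data Modeller: the class name IdentifierCheckSketch and the method isUsableIdentifier are hypothetical, and the check relies on the standard javax.lang.model.SourceVersion utility from the JDK.

```java
import javax.lang.model.SourceVersion;

// Hypothetical helper: checks whether a name can be used as a Java class or field identifier.
final class IdentifierCheckSketch {

    static boolean isUsableIdentifier(String name) {
        // isIdentifier also accepts keywords such as "class", so they are excluded separately.
        return name != null
                && SourceVersion.isIdentifier(name)
                && !SourceVersion.isKeyword(name);
    }

    public static void main(String[] args) {
        System.out.println(isUsableIdentifier("PurchaseOrder"));  // true
        System.out.println(isUsableIdentifier("purchase-order")); // false, '-' is not allowed
        System.out.println(isUsableIdentifier("2ndLine"));        // false, starts with a digit
        System.out.println(isUsableIdentifier("class"));          // false, reserved keyword
    }
}
```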

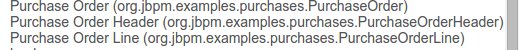

As an example, the generated Java class code for the Purchase Order data object, corresponding to its definition as shown in the figure purchase_example.jpg, is listed below. Note that two of the data object's fields, namely 'header' and 'lines', were marked with Equals, and have been assigned the positions 1 and 2, respectively.

    package org.jbpm.examples.purchases;

    /**
     * This class was automatically generated by the data modeler tool.
     */
    @org.kie.api.definition.type.Label("Purchase Order")
    @org.kie.api.definition.type.TypeSafe(true)
    @org.kie.api.definition.type.Role(org.kie.api.definition.type.Role.Type.EVENT)
    @org.kie.api.definition.type.Expires("2d")
    @org.kie.api.remote.Remotable
    public class PurchaseOrder implements java.io.Serializable
    {
        static final long serialVersionUID = 1L;

        @org.kie.api.definition.type.Label("Total")
        @org.kie.api.definition.type.Position(3)
        private java.lang.Double total;

        @org.kie.api.definition.type.Label("Description")
        @org.kie.api.definition.type.Position(0)
        private java.lang.String description;

        @org.kie.api.definition.type.Label("Lines")
        @org.kie.api.definition.type.Position(2)
        @org.kie.api.definition.type.Key
        private java.util.List<org.jbpm.examples.purchases.PurchaseOrderLine> lines;

        @org.kie.api.definition.type.Label("Header")
        @org.kie.api.definition.type.Position(1)
        @org.kie.api.definition.type.Key
        private org.jbpm.examples.purchases.PurchaseOrderHeader header;

        @org.kie.api.definition.type.Position(4)
        private java.lang.Boolean requiresCFOApproval;

        public PurchaseOrder()
        {
        }

        public java.lang.Double getTotal()
        {
            return this.total;
        }

        public void setTotal(java.lang.Double total)
        {
            this.total = total;
        }

        public java.lang.String getDescription()
        {
            return this.description;
        }

        public void setDescription(java.lang.String description)
        {
            this.description = description;
        }

        public java.util.List<org.jbpm.examples.purchases.PurchaseOrderLine> getLines()
        {
            return this.lines;
        }

        public void setLines(java.util.List<org.jbpm.examples.purchases.PurchaseOrderLine> lines)
        {
            this.lines = lines;
        }

        public org.jbpm.examples.purchases.PurchaseOrderHeader getHeader()
        {
            return this.header;
        }

        public void setHeader(org.jbpm.examples.purchases.PurchaseOrderHeader header)
        {
            this.header = header;
        }

        public java.lang.Boolean getRequiresCFOApproval()
        {
            return this.requiresCFOApproval;
        }

        public void setRequiresCFOApproval(java.lang.Boolean requiresCFOApproval)
        {
            this.requiresCFOApproval = requiresCFOApproval;
        }

        public PurchaseOrder(java.lang.Double total, java.lang.String description,
                java.util.List<org.jbpm.examples.purchases.PurchaseOrderLine> lines,
                org.jbpm.examples.purchases.PurchaseOrderHeader header,
                java.lang.Boolean requiresCFOApproval)
        {
            this.total = total;
            this.description = description;
            this.lines = lines;
            this.header = header;
            this.requiresCFOApproval = requiresCFOApproval;
        }

        public PurchaseOrder(java.lang.String description,
                org.jbpm.examples.purchases.PurchaseOrderHeader header,
                java.util.List<org.jbpm.examples.purchases.PurchaseOrderLine> lines,
                java.lang.Double total, java.lang.Boolean requiresCFOApproval)
        {
            this.description = description;
            this.header = header;
            this.lines = lines;
            this.total = total;
            this.requiresCFOApproval = requiresCFOApproval;
        }

        public PurchaseOrder(
                java.util.List<org.jbpm.examples.purchases.PurchaseOrderLine> lines,
                org.jbpm.examples.purchases.PurchaseOrderHeader header)
        {
            this.lines = lines;
            this.header = header;
        }

        @Override
        public boolean equals(Object o)
        {
            if (this == o)
                return true;
            if (o == null || getClass() != o.getClass())
                return false;
            org.jbpm.examples.purchases.PurchaseOrder that = (org.jbpm.examples.purchases.PurchaseOrder) o;
            if (lines != null ? !lines.equals(that.lines) : that.lines != null)
                return false;
            if (header != null ? !header.equals(that.header) : that.header != null)
                return false;
            return true;
        }

        @Override
        public int hashCode()
        {
            int result = 17;
            result = 31 * result + (lines != null ? lines.hashCode() : 0);
            result = 31 * result + (header != null ? header.hashCode() : 0);
            return result;
        }
    }
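A practical consequence of generating equals() and hashCode() only from the fields marked with Equals is that two instances with the same key values are considered equal even if their other fields differ. The following self-contained sketch illustrates this with a simplified stand-in class; KeyedOrderSketch and its String-based fields are hypothetical and only mirror the pattern of the generated code shown above.

```java
import java.util.Arrays;
import java.util.HashSet;
import java.util.List;
import java.util.Set;

// Simplified stand-in for a generated data object with two key fields.
final class KeyedOrderSketch {

    private final String header;      // would be marked with Equals
    private final List<String> lines; // would be marked with Equals
    private final Double total;       // not marked with Equals

    KeyedOrderSketch(String header, List<String> lines, Double total) {
        this.header = header;
        this.lines = lines;
        this.total = total;
    }

    // Only the key fields take part in the comparison.
    @Override
    public boolean equals(Object o) {
        if (this == o) return true;
        if (o == null || getClass() != o.getClass()) return false;
        KeyedOrderSketch that = (KeyedOrderSketch) o;
        if (lines != null ? !lines.equals(that.lines) : that.lines != null) return false;
        if (header != null ? !header.equals(that.header) : that.header != null) return false;
        return true;
    }

    // Only the key fields contribute to the hash code.
    @Override
    public int hashCode() {
        int result = 17;
        result = 31 * result + (lines != null ? lines.hashCode() : 0);
        result = 31 * result + (header != null ? header.hashCode() : 0);
        return result;
    }

    public static void main(String[] args) {
        KeyedOrderSketch a = new KeyedOrderSketch("PO-1", Arrays.asList("pens", "paper"), 10.0);
        KeyedOrderSketch b = new KeyedOrderSketch("PO-1", Arrays.asList("pens", "paper"), 99.0);

        System.out.println(a.equals(b)); // true, the differing total is ignored

        Set<KeyedOrderSketch> orders = new HashSet<>();
        orders.add(a);
        orders.add(b);
        System.out.println(orders.size()); // 1, both instances share the same key
    }
}
```

This is worth keeping in mind when choosing which fields to mark with Equals, since hash-based collections will treat such instances as duplicates.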

Using an external model means being able to use a set of already defined POJOs in the current project's context. To make those POJOs available, a dependency on the given JAR must be added. Once the dependency has been added, the external POJOs can be referenced from the current project's data model.

There are two ways to add a dependency on an external JAR file:

Dependency on a JAR file already installed in the current local M2 repository (typically associated with the user home).

Dependency on a JAR file installed in the current KIE Workbench/Drools Workbench "Guvnor M2 repository" (internal to the application).

To add a dependency on a JAR file in the local M2 repository, follow these steps.

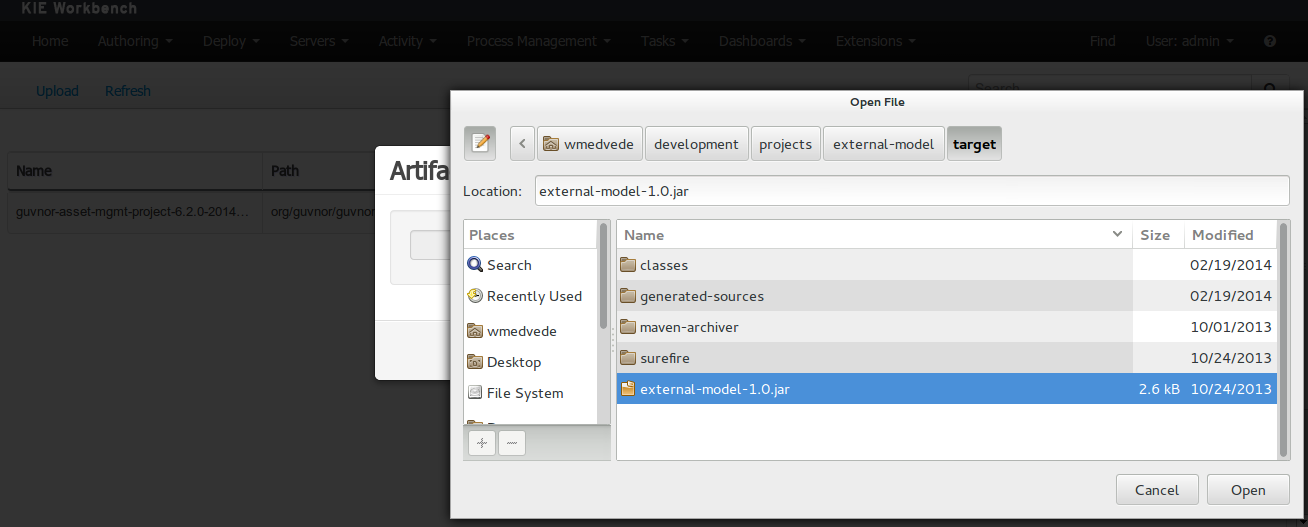

To add a dependency on a JAR file in the current "Guvnor M2 repository", follow these steps.

Once the file has been loaded it will be displayed in the repository files list.

If the uploaded file is not a valid Maven JAR (it does not contain a pom.xml file), the system will prompt the user to provide a GAV for the file to be installed.

Open the project editor (see below) and click on the "Add from repository" button to open the JAR selector, which lists all the JAR files installed in the current "Guvnor M2 repository". Once the desired file is selected, the project must be saved to make the new dependency available.

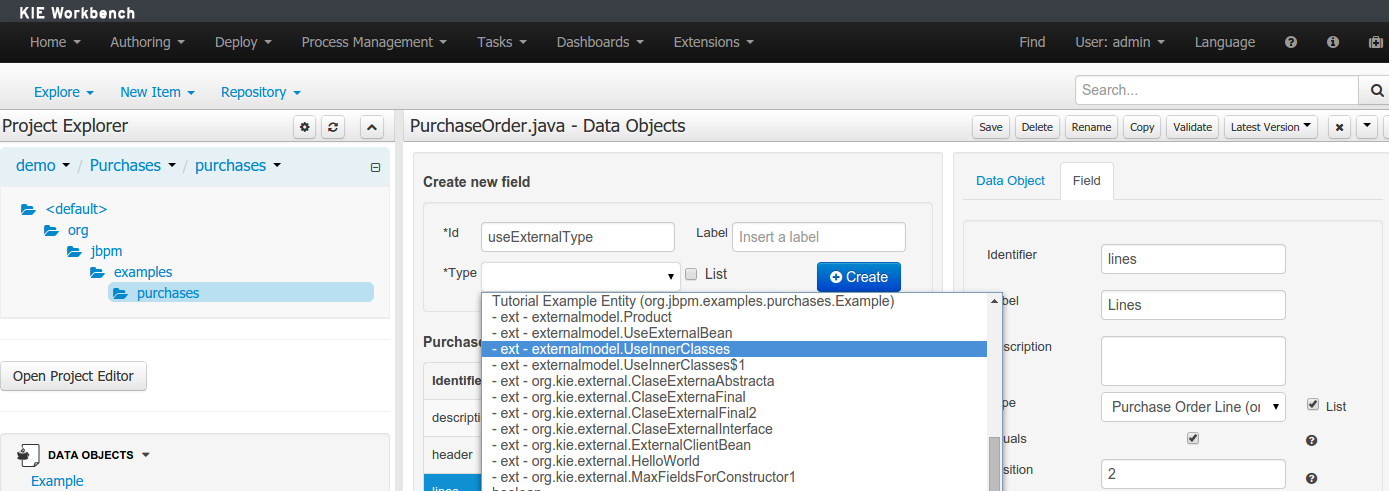

When a dependency on an external JAR has been set, the external POJOs can be used in the context of the current project's data model in the following ways:

External POJOs can be extended by current model data objects.

External POJOs can be used as field types for current model data objects.
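In terms of the resulting Java code, the two usages listed above correspond to plain inheritance and plain field declarations. The following sketch shows what this could look like. All class names are hypothetical, and the classes Address and Customer merely stand in for POJOs that would normally be provided by the external JAR; they are nested here only to keep the example self-contained.

```java
final class ExternalModelSketch {

    // Stand-in for a POJO provided by the external JAR.
    static class Address {
        private final String street;

        Address(String street) {
            this.street = street;
        }

        String getStreet() {
            return street;
        }
    }

    // Stand-in for another POJO provided by the external JAR.
    static class Customer {
        private String name;

        String getName() {
            return name;
        }

        void setName(String name) {
            this.name = name;
        }
    }

    // Data object of the current project: it extends an external POJO
    // and uses another external POJO as a field type.
    static class PreferredCustomer extends Customer {
        private Address shippingAddress;

        Address getShippingAddress() {
            return shippingAddress;
        }

        void setShippingAddress(Address shippingAddress) {
            this.shippingAddress = shippingAddress;
        }
    }

    public static void main(String[] args) {
        PreferredCustomer customer = new PreferredCustomer();
        customer.setName("Ada");
        customer.setShippingAddress(new Address("Main St 1"));
        System.out.println(customer.getName() + ", " + customer.getShippingAddress().getStreet());
    }
}
```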

The following screenshot shows how external objects are prefixed with the string " -ext- " in order to be quickly identified.

The current version implements round trip and code preservation between the Data Modeller and Java source code. No matter where the Java code was generated (e.g. Eclipse, Data Modeller), the Data Modeller will only create, delete or update the code elements necessary to keep the model up to date, i.e. fields, getters/setters, constructors, the equals method and the hashCode method. Also, any type or field annotation not managed by the Data Modeller is preserved when the Java sources are updated by the Data Modeller.

Aside from code preservation, as in the other workbench editors, concurrent modification scenarios are still possible. Common scenarios are when two different users are updating the model for the same project, e.g. using the Data Modeller or executing a 'git push' command that modifies project sources.

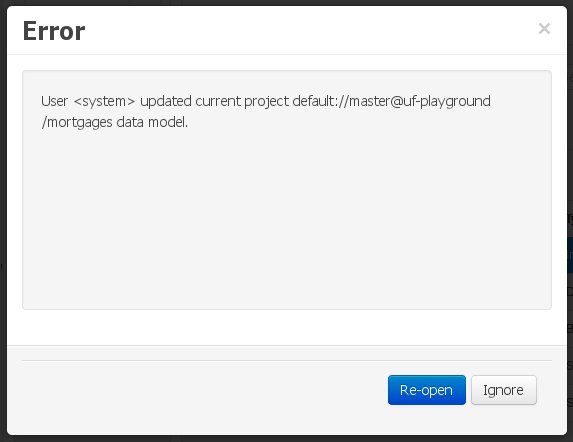

From an application context's perspective, we can basically identify two different main scenarios:

In this scenario the application user has basically just been navigating through the data model, without making any changes to it. Meanwhile, another user modifies the data model externally.

In this case, no immediate warning is issued to the application user. However, as soon as the user tries to make any kind of change, such as add or remove data objects or properties, or change any of the existing ones, the following pop-up will be shown:

The user can choose to either:

Re-open the data model, thus loading any external changes, and then perform the modification he was about to undertake, or

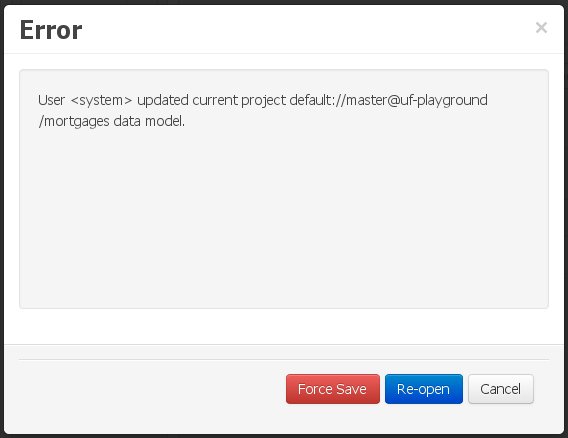

Ignore any external changes, and go ahead with the modification to the model. In this case, when trying to persist these changes, another pop-up warning will be shown:

The "Force Save" option will effectively overwrite any external changes, while "Re-open" will discard any local changes and reload the model.

Warning

"Force Save" overwrites any external changes!

The application user has made changes to the data model. Meanwhile, another user simultaneously modifies the data model from outside the application context.

In this alternative scenario, immediately after the external user commits his changes to the asset repository (or e.g. saves the model with the data modeller in a different session), a warning is issued to the application user:

As with the previous scenario, the user can choose to either:

Re-open the data model, thus losing any modifications that were made through the application, or

Ignore any external changes, and continue working on the model.

One of the following possibilities can now occur:

The user tries to persist the changes he made to the model by clicking the "Save" button in the data modeller top level menu. This leads to the following warning message:

The "Force Save" option will effectively overwrite any external changes, while "Re-open" will discard any local changes and reload the model.

Categories allow assets to be labelled (or tagged) with any number of categories that you define. Assets can belong to any number of categories. As the diagram below shows, this can in effect create a folder/explorer-like view of categories. The names can be anything you want, and are defined by the Workbench administrator (who can also add and remove categories).

Note

Categories do not have the same role in the current release of the Workbench as they had in prior versions (up to and including 5.5). Projects can no longer be built using a selector to include assets that are labelled with certain Categories. Categories are therefore considered a deprecated feature.

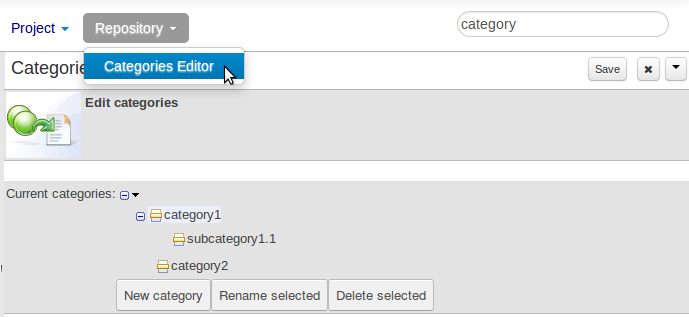

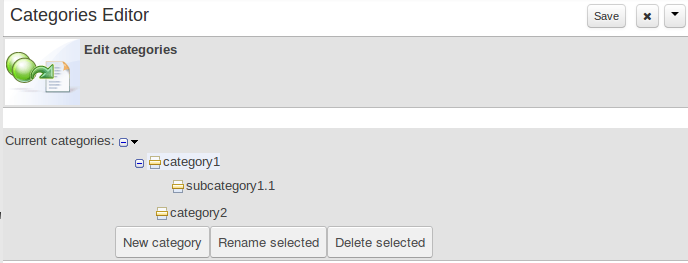

The Categories Editor is available from the Repository menu on the Authoring Perspective.

The view below shows the administration screen for setting up categories (there are no categories in the system by default). As the categories can be hierarchical, you choose the "parent" category that you want to create a sub-category for. From here categories can also be removed (but only if they are not in use by any current versions of assets).

Generally categories are created with meaningful names that match the area of the business the rule applies to (if the rule applies to multiple areas, multiple categories can be attached).

Assets can be assigned Categories using the MetaData tab on the asset's editor.

When you open an asset to view or edit, it will show a list of categories that it currently belongs to. If you make a change (remove or add a category) you will need to save the asset, which will create a new item in the version history. Changing the categories of a rule has no effect on its execution.

As we already know, Workbench provides a set of editors to author assets in different formats. A specialized editor is used depending on the asset's format.

One additional feature provided by Workbench is the ability to embed it in your own (Web) applications through its standalone mode. So, if you want to edit rules, processes, decision tables, etc. in your own applications without switching to Workbench, you can.

In order to embed Workbench in your application all you'll need is the Workbench application deployed and running in a web/application server and, from within your own web applications, an iframe with proper HTTP query parameters as described in the following table.

Table 9.2. HTTP query parameters for standalone mode

| Parameter Name | Explanation | Allow multiple values | Example |

|---|---|---|---|

| standalone | With just the presence of this parameter workbench will switch to standalone mode. | no | (none) |

| path | Path to the asset to be edited. Note that the asset must already exist. | no | git://master@uf-playground/todo.md |

| perspective | Reference to an existing perspective name. | no | org.guvnor.m2repo.client.perspectives.GuvnorM2RepoPerspective |

| header | Defines the name of the header that should be displayed (useful for context menu headers). | yes | ComplementNavArea |

Note

The path and perspective parameters are mutually exclusive and cannot be used together.
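The query parameters from the table can be assembled into the URL used as the iframe source. The following is a minimal sketch under several assumptions: the class StandaloneUrlSketch and its build method are hypothetical, the base URL used in the example is only a placeholder for wherever your Workbench is deployed, and the parameter values are URL-encoded on the assumption that the server decodes query parameters in the usual way.

```java
import java.io.UnsupportedEncodingException;
import java.net.URLEncoder;
import java.util.Arrays;
import java.util.List;

// Hypothetical helper: builds a Workbench URL for standalone mode.
final class StandaloneUrlSketch {

    static String build(String baseUrl, String path, String perspective, List<String> headers) {
        // The path and perspective parameters cannot be used together.
        if (path != null && perspective != null) {
            throw new IllegalArgumentException("path and perspective are mutually exclusive");
        }
        // The mere presence of "standalone" switches the workbench to standalone mode.
        StringBuilder url = new StringBuilder(baseUrl).append("?standalone");
        if (path != null) {
            url.append("&path=").append(encode(path));
        }
        if (perspective != null) {
            url.append("&perspective=").append(encode(perspective));
        }
        // "header" is the only parameter that allows multiple values.
        for (String header : headers) {
            url.append("&header=").append(encode(header));
        }
        return url.toString();
    }

    private static String encode(String value) {
        try {
            return URLEncoder.encode(value, "UTF-8");
        } catch (UnsupportedEncodingException e) {
            // UTF-8 is required to be supported by every Java platform.
            throw new IllegalStateException(e);
        }
    }

    public static void main(String[] args) {
        // Placeholder base URL; adjust it to your own deployment.
        String url = build("http://localhost:8080/kie-wb/kie-wb.html",
                "git://master@uf-playground/todo.md", null,
                Arrays.asList("ComplementNavArea"));
        System.out.println(url);
    }
}
```

The resulting string can then be used as the src attribute of the iframe in the embedding application.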

This section of the documentation describes the main features that contribute to the Asset Management functionality provided in the KIE Workbench and KIE Drools Workbench. All the features described here are entirely optional, but their use is recommended if you are planning to have multiple projects. All the Asset Management features try to impose good practices on the repository structure that will make the maintenance, versioning and distribution of the projects simple and based on standards. All the Asset Management features are implemented using jBPM Business Processes, which means that the logic can be reused for external applications as well as adapted for domain-specific requirements when needed.

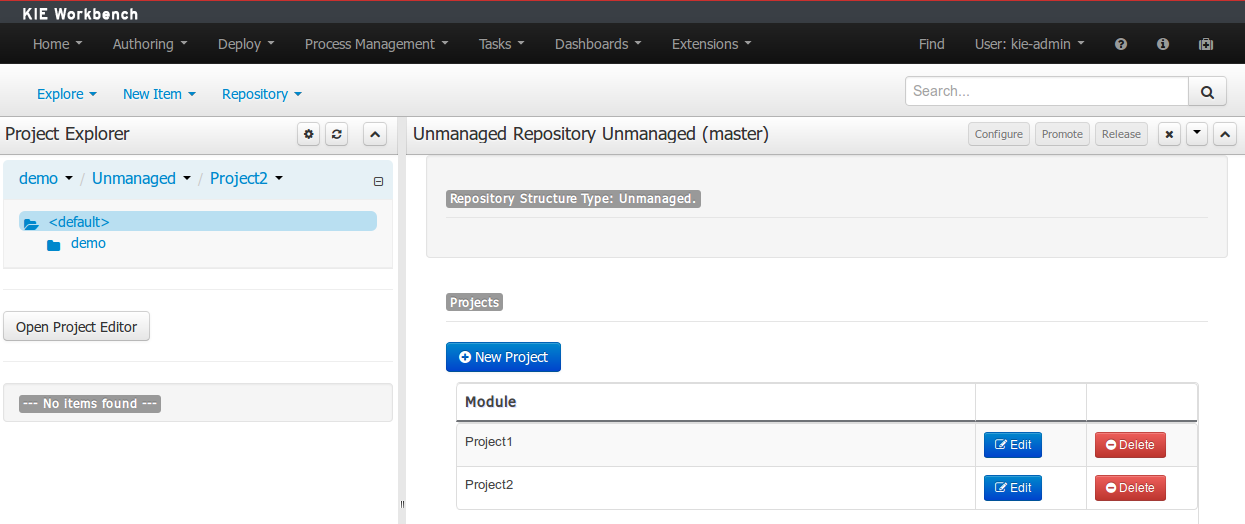

Since the introduction of the asset management features, repositories are classified as either Managed or Unmanaged.

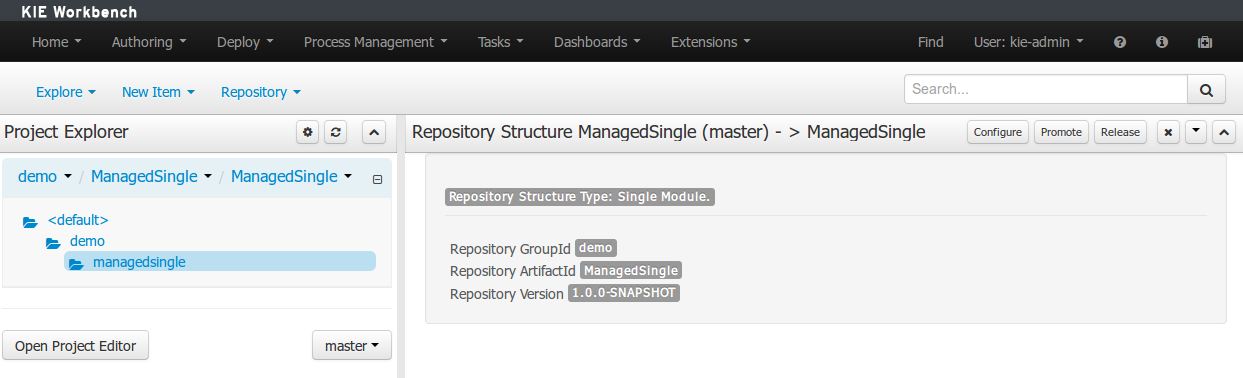

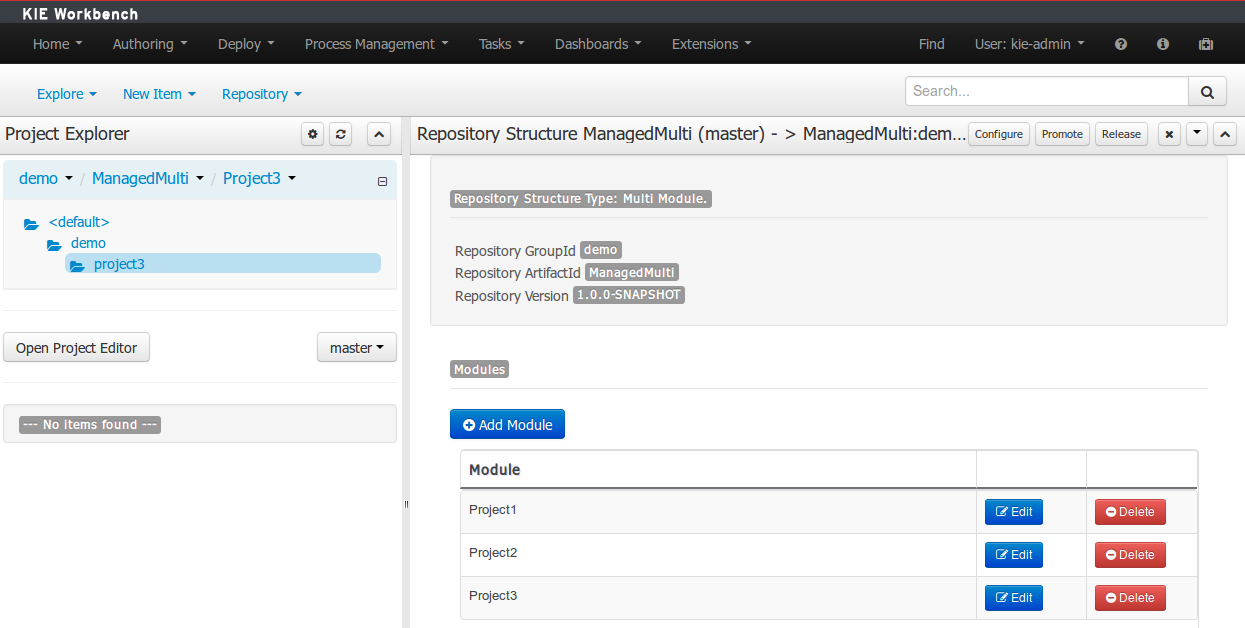

All new asset management features are available for this type of repository. Additionally, a managed repository can be "Single Project" or "Multi Project".

A "Single Project" managed repository contains just one Project, while a "Multi Project" managed repository can contain multiple Projects. All of them are related through the same parent, and they share the same group and version information.

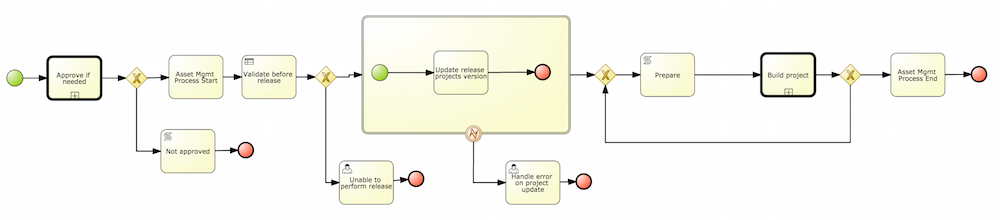

There are 4 main processes which represent the stages of the Asset Management feature: Configure Repository, Promote Changes, Build and Release.

The Configure Repository process is in charge of the post-initialization of the repository. This process is triggered automatically if the user chooses to create a Managed Repository in the New Repository wizard. If they decide to use the governance feature, the process kicks in as soon as the repository is created, and new development and release branches are created. Note that the first time this process is called, the master branch is picked and both branches (dev and release) are based on it.

By default the asset management feature is not enabled, so make sure to select Managed Repository in the New Repository wizard. When we work inside a managed repository, the development branch is selected for the users to work on. If multiple dev branches are created, the user will need to pick one.

When some work has been done in the development branch and the users reach a point where the changes need to be tested before going into production, they start a new Promote Changes process so that a more technical user can review and decide what needs to be promoted. The users belonging to the "kiemgmt" group will see a new Task in their Group Task List, which contains all the files that have been changed. The user needs to select, via the UI, the assets that will be promoted. The underlying process cherry-picks the commits selected by the user to the release branch. The user can specify that a review by a more technical user is needed.

This process can be repeated multiple times if needed before creating the artifacts for the release.

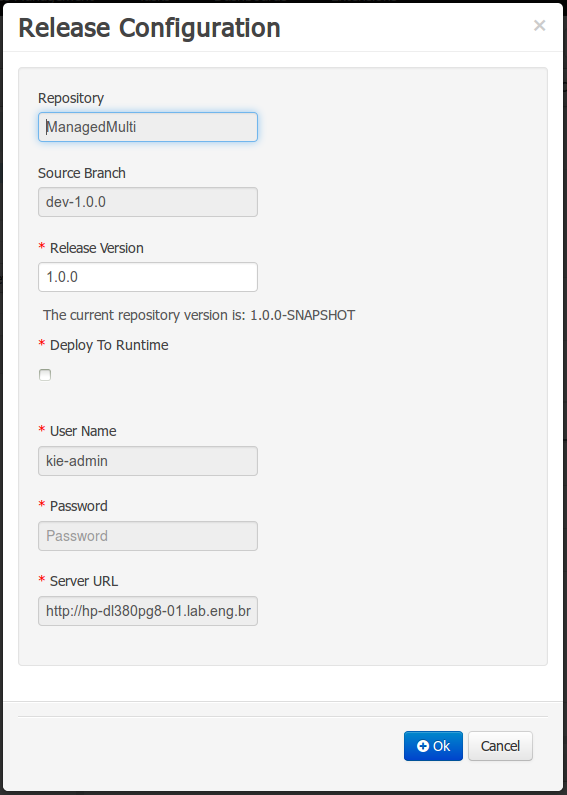

The Build process can be triggered to build our projects from different branches. This allows us to have a more flexible way to build and deploy our projects to different runtimes.

This section describes the common usage flow for the asset management features showing all the screens involved.

The first contact with the Asset Management features happens at repository creation.

If the user chooses to create a Managed Repository, a new page in the wizard is enabled:

When a managed repository is created, the asset management configuration process is automatically launched in order to create the repository branches, and the corresponding project structure is also created.

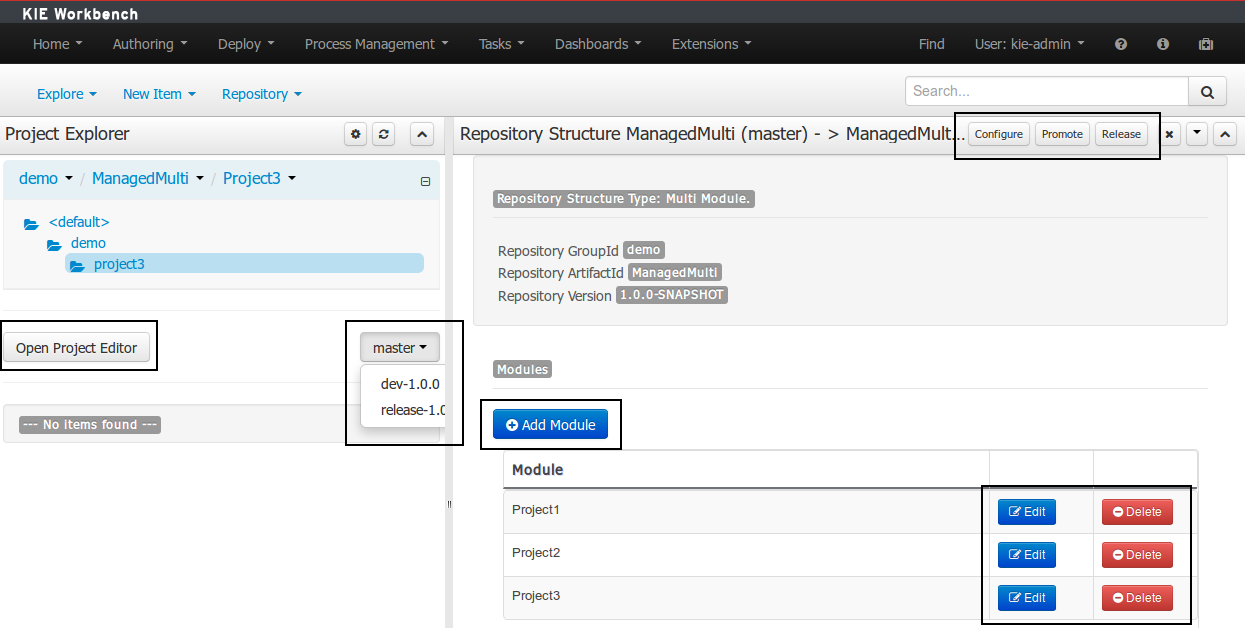

Once a repository has been created it can be managed through the Repository Structure Screen.

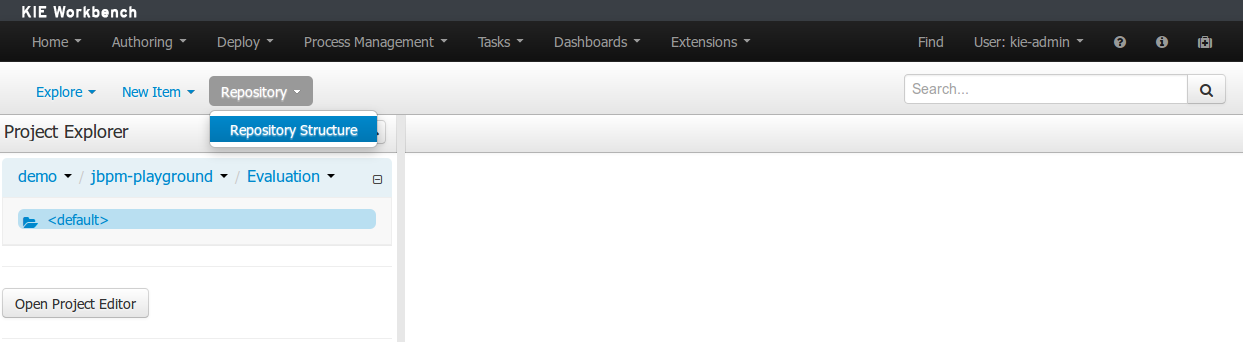

To open the Repository Structure Screen for a given repository, open the Project Authoring Perspective, browse to the given repository and select the "Repository -> Repository Structure" menu option.

The following picture shows an example of a single project managed repository structure.

The following picture shows an example of a multi project managed repository structure.

The following picture shows the screen areas related to managed repositories operations.

The branch selector lets the user switch between the different branches created by the Configure Repository process.

From the Repository Structure Screen it is also possible to create, edit or delete projects in the current repository.

The asset management processes can also be launched from the Project Structure Screen.

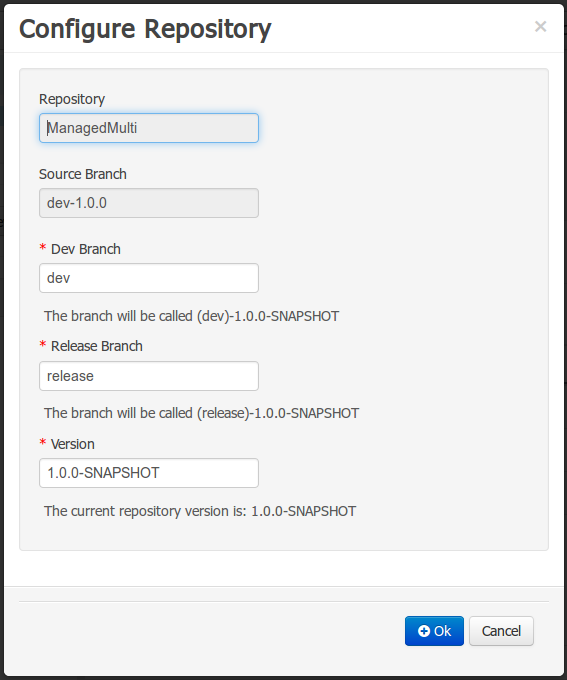

By filling in the parameters below, a new instance of the Configure Repository process can be started. (See Configure Repository Process.)

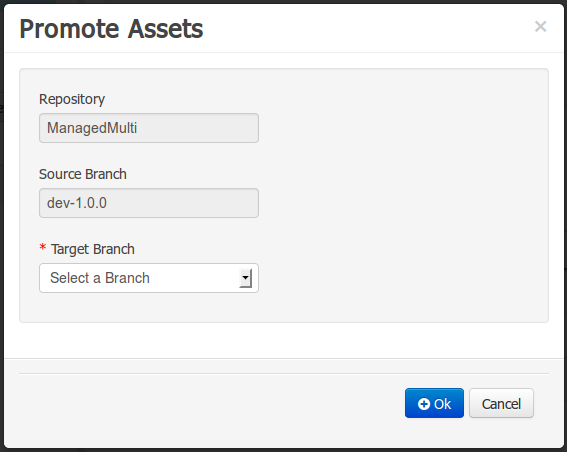

By filling in the parameters below, a new instance of the Promote Changes process can be started. (See Promote Changes Process.)