Getting Started

Introduction and getting started with jBPM

1. Overview

1.1. What is jBPM?

jBPM is a flexible Business Process Management (BPM) Suite. It is light-weight, fully open-source (distributed under Apache License 2.0) and written in Java. It allows you to model, execute, and monitor business processes and cases throughout their life cycle.

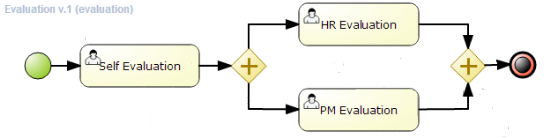

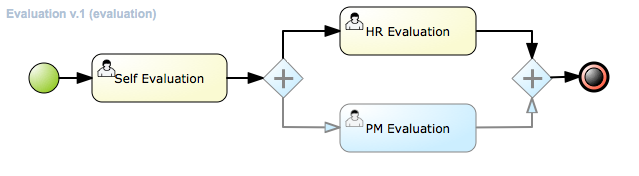

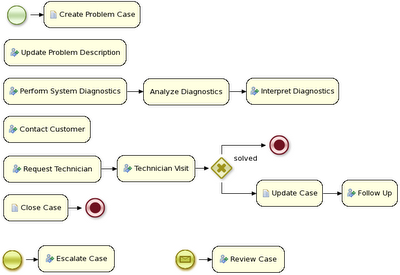

A business process allows you to model your business goals by describing the steps that need to be executed to achieve those goals, and the order of those goals is depicted using a flow chart. This process greatly improves the visibility and agility of your business logic. jBPM focuses on executable business processes, which are business processes that contain enough detail so they can actually be executed on a BPM jBPM engine. Executable business processes bridge the gap between business users and developers as they are higher-level and use domain-specific concepts that are understood by business users but can also be executed directly.

Business processes need to be supported throughout their entire life cycle: authoring, deployment, process management and task lists, and dashboards and reporting.

The core of jBPM is a light-weight, extensible workflow engine written in pure Java that allows you to execute business processes using the latest BPMN 2.0 specification. It can run in any Java environment, embedded in your application or as a service.

On top of the jBPM engine, a lot of features and tools are offered to support business processes throughout their entire life cycle:

-

Pluggable human task service based on WS-HumanTask for including tasks that need to be performed by human actors.

-

Pluggable persistence and transactions (based on JPA / JTA).

-

Case management capabilities added to the jBPM engine to support more adaptive and flexible use cases

-

Web-based process designer to support the graphical creation and simulation of your business processes (drag and drop).

-

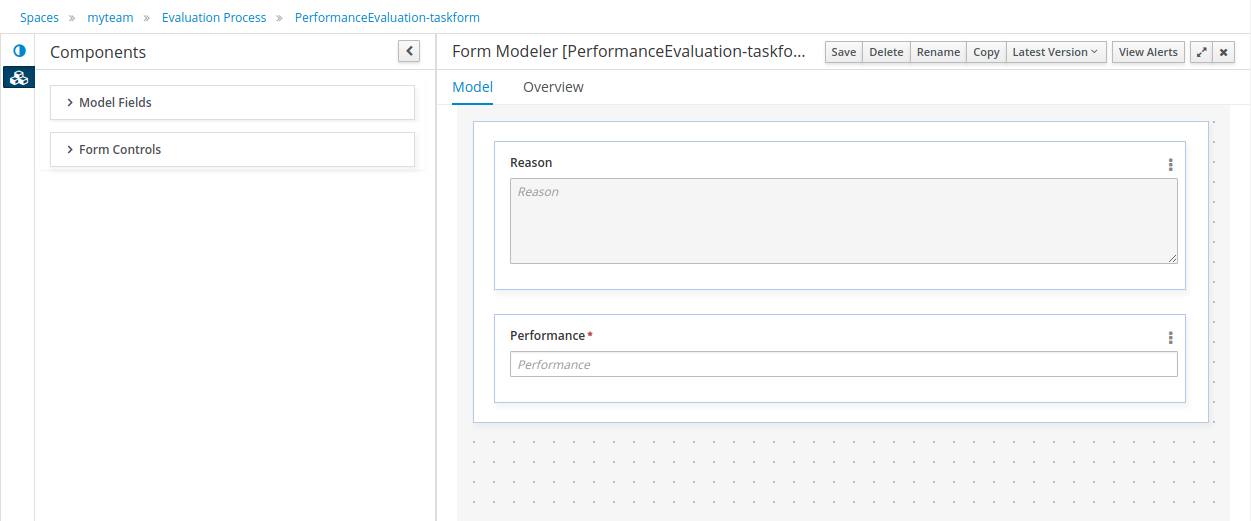

Web-based data modeler and form modeler to support the creation of data models and task forms

-

Web-based, customizable dashboards and reporting

-

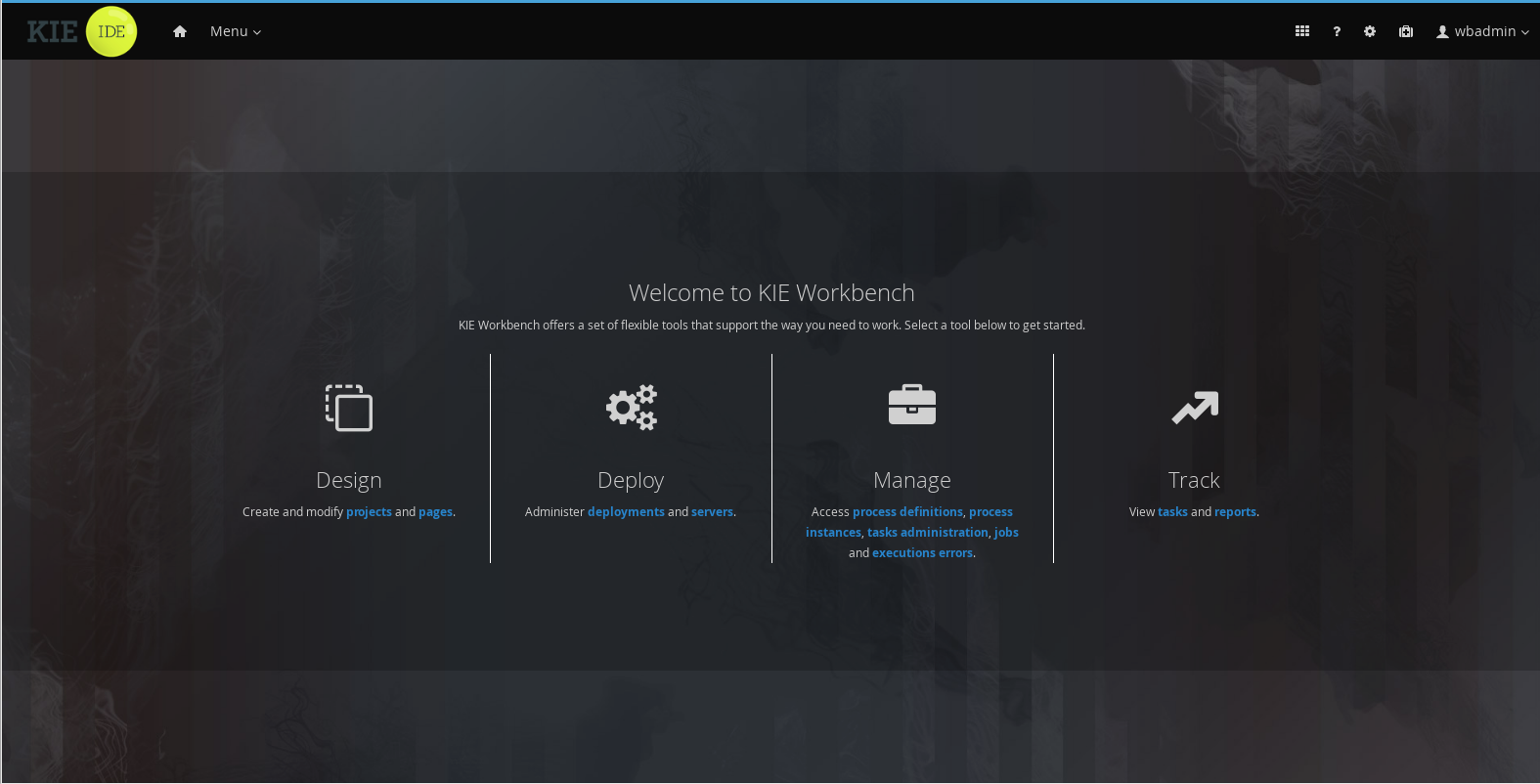

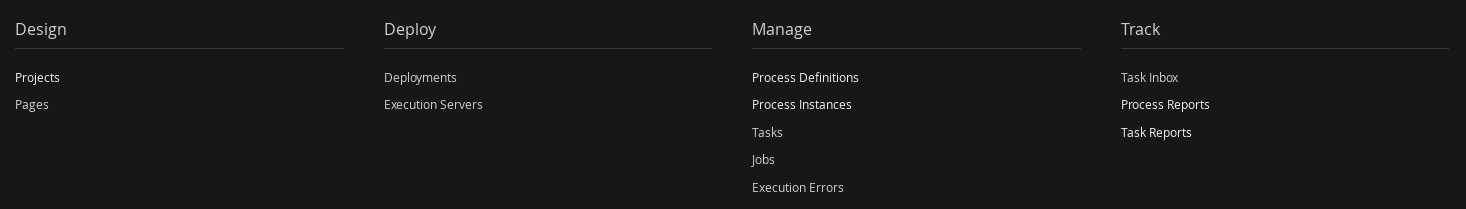

All combined in one web-based Business Central application, supporting the complete BPM life cycle:

-

Modeling and deployment - author your processes, rules, data models, forms and other assets

-

Execution - execute processes, tasks, rules and events on the core runtime engine

-

Runtime Management - work on assigned task, manage process instances, etc

-

Reporting - keep track of the execution using Business Activity Monitoring capabilities

-

-

Eclipse-based developer tools to support the modeling, testing and debugging of processes

-

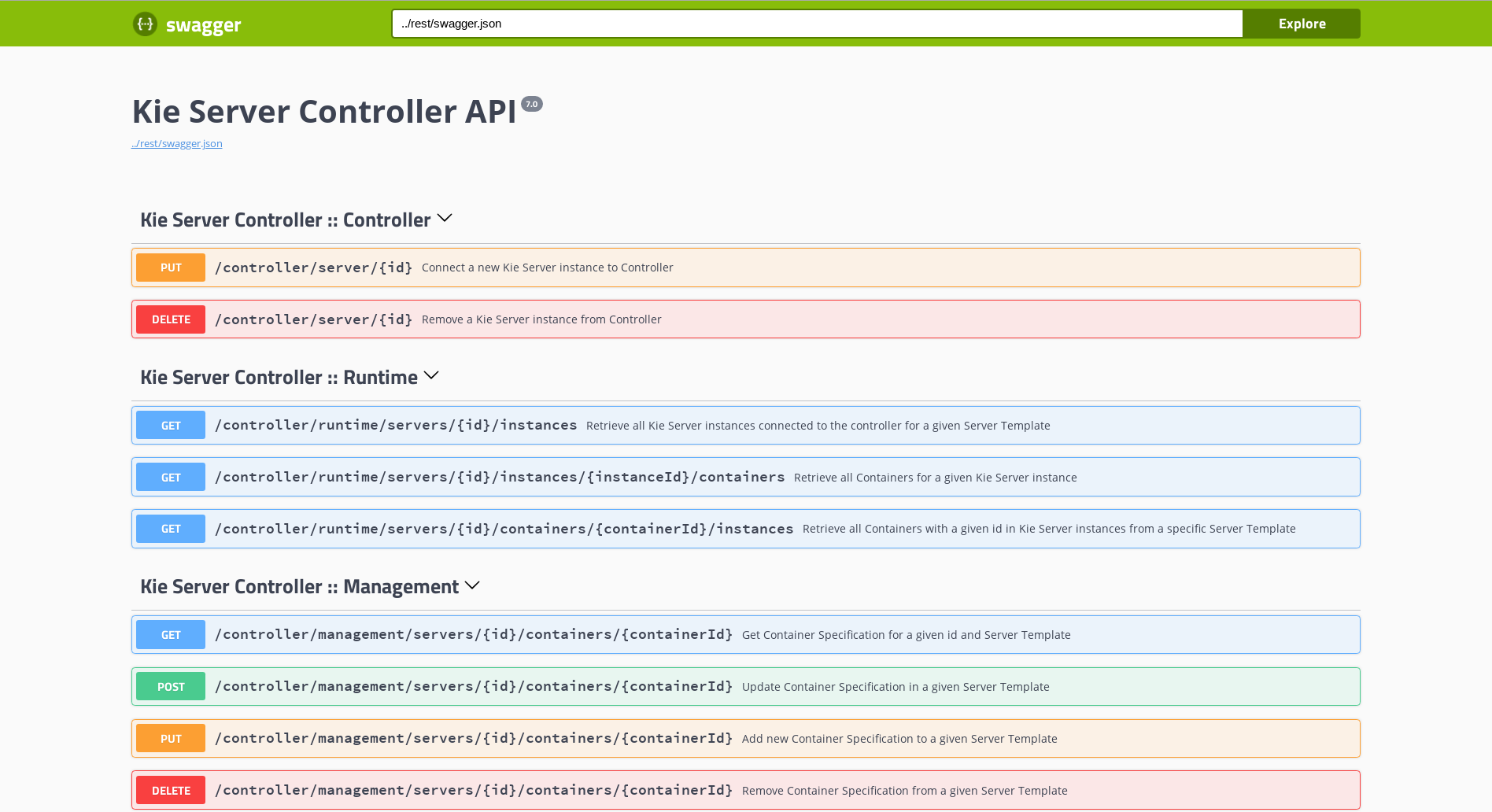

Remote API to jBPM engine as a service (REST, JMS, Remote Java API)

-

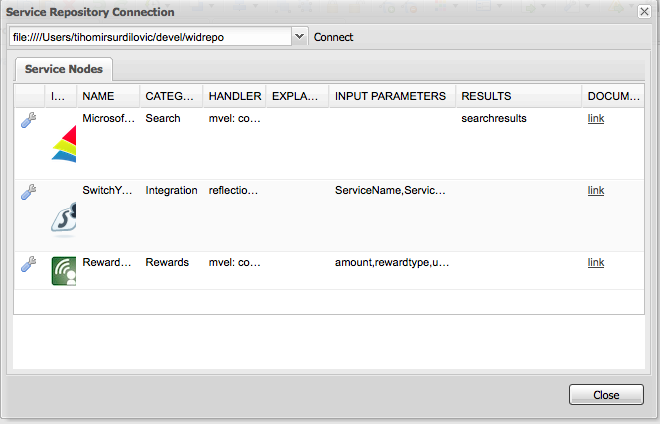

Integration with Maven, Spring, OSGi, etc.

BPM creates the bridge between business analysts, developers and end users by offering process management features and tools in a way that both business users and developers like. Domain-specific nodes can be plugged into the palette, making the processes more easily understood by business users.

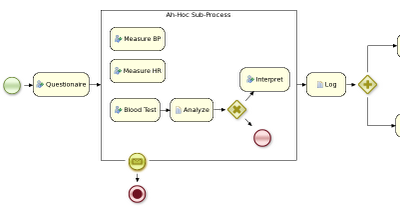

jBPM supports case management by offering more advanced features to support adaptive and dynamic processes that require flexibility to model complex, real-life situations that cannot easily be described using a rigid process. We bring control back to the end users by allowing them to control which parts of the process should be executed; this allows dynamic deviation from the process.

jBPM is not just an isolated jBPM engine. Complex business logic can be modeled as a combination of business processes with business rules and complex event processing. jBPM can be combined with the Drools project to support one unified environment that integrates these paradigms where you model your business logic as a combination of processes, rules and events.

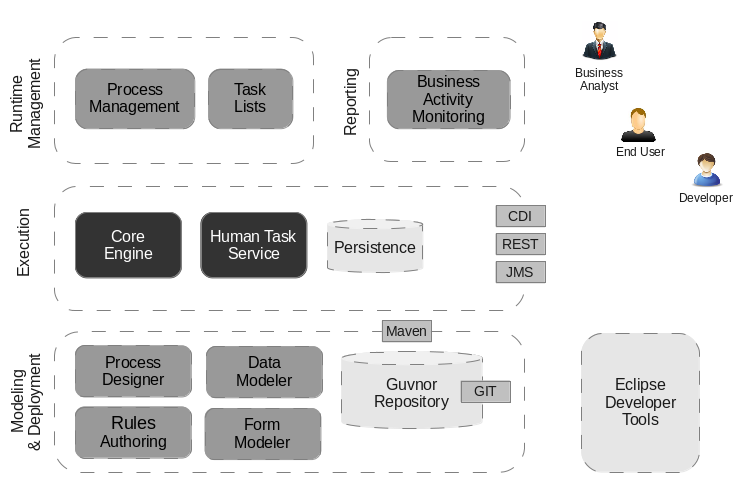

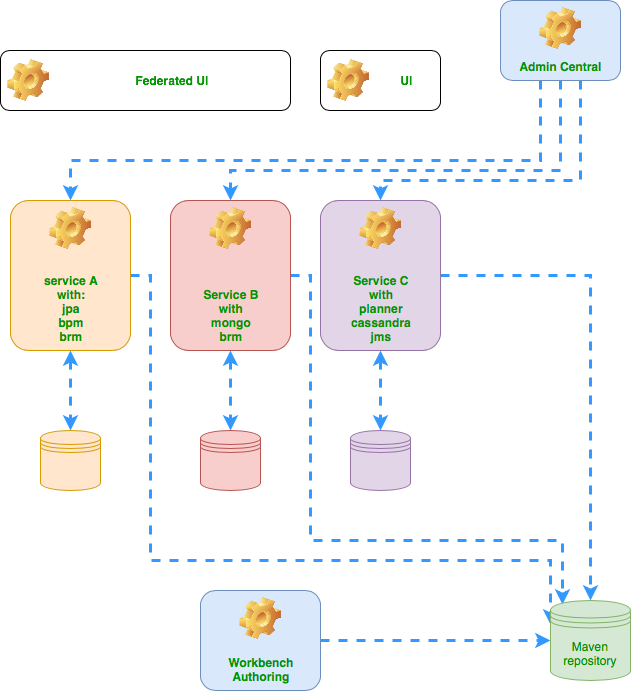

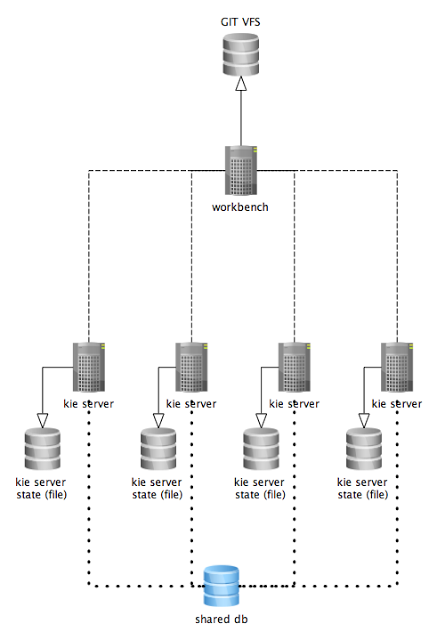

1.2. Overview of jBPM

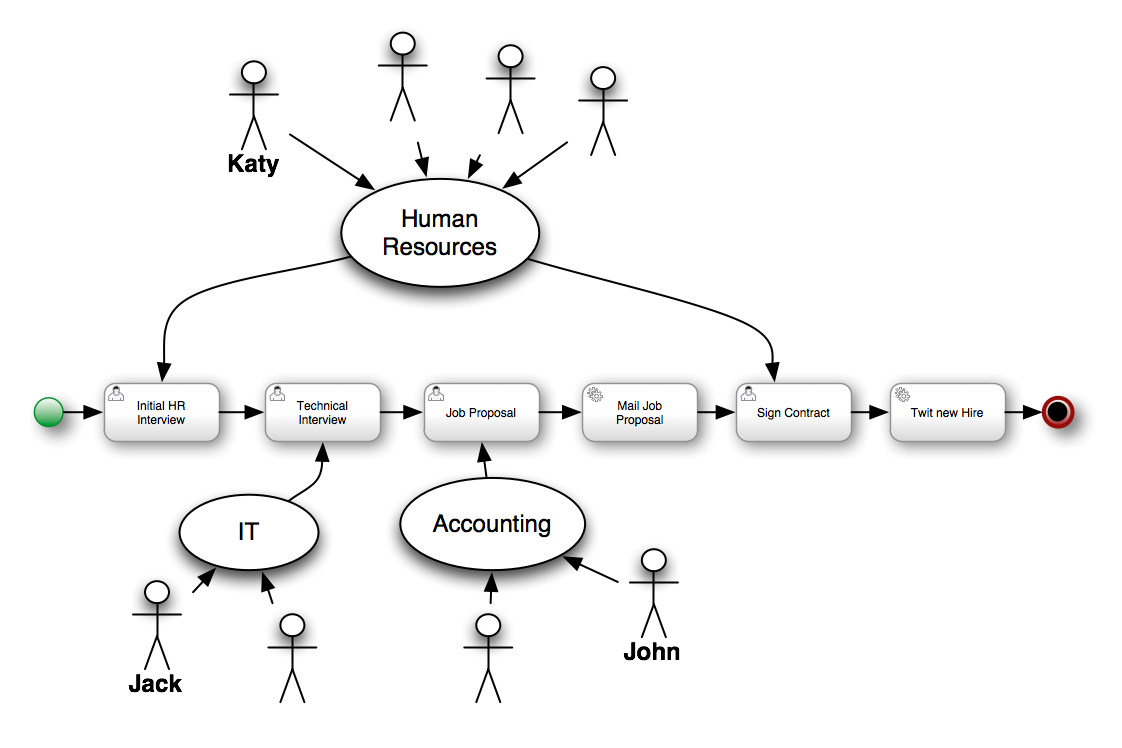

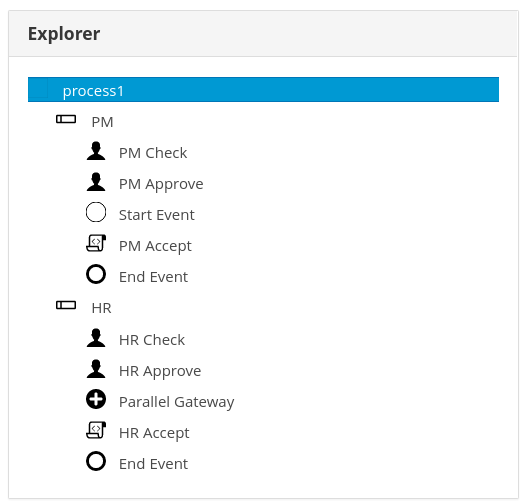

This figure gives an overview of the different components of the jBPM project.

-

The core engine is the heart of the project and allows you to execute business processes in a flexible manner. It is a pure Java component that you can choose to embed as part of your application or deploy it as a service and connect to it through the web-based UI or remote APIs.

-

An optional core service is the human task service that will take care of the human task life cycle if human actors participate in the process.

-

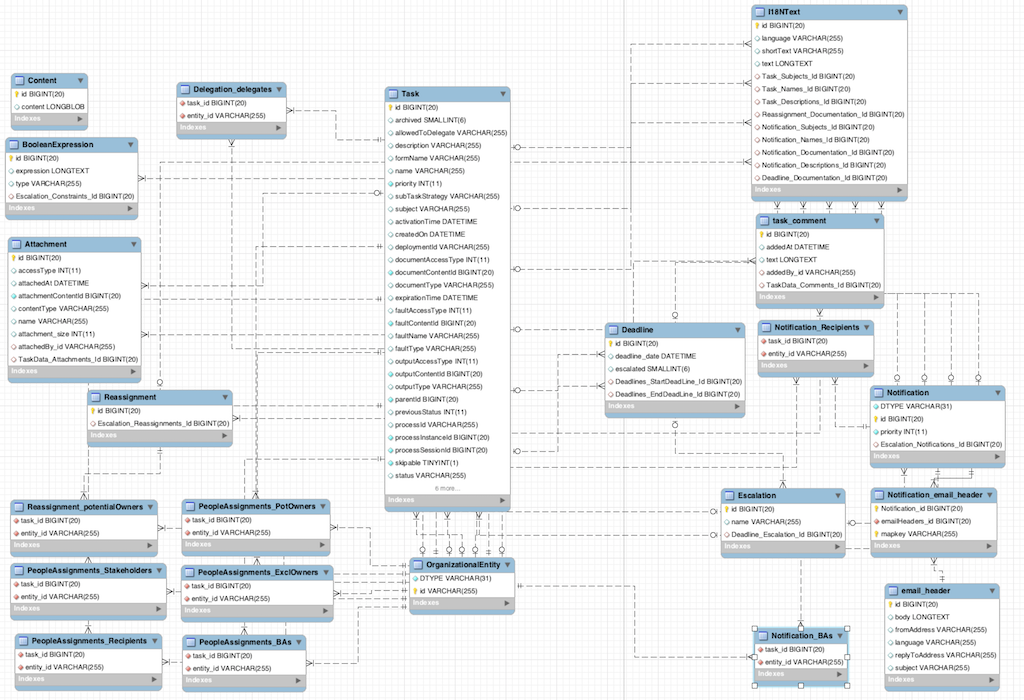

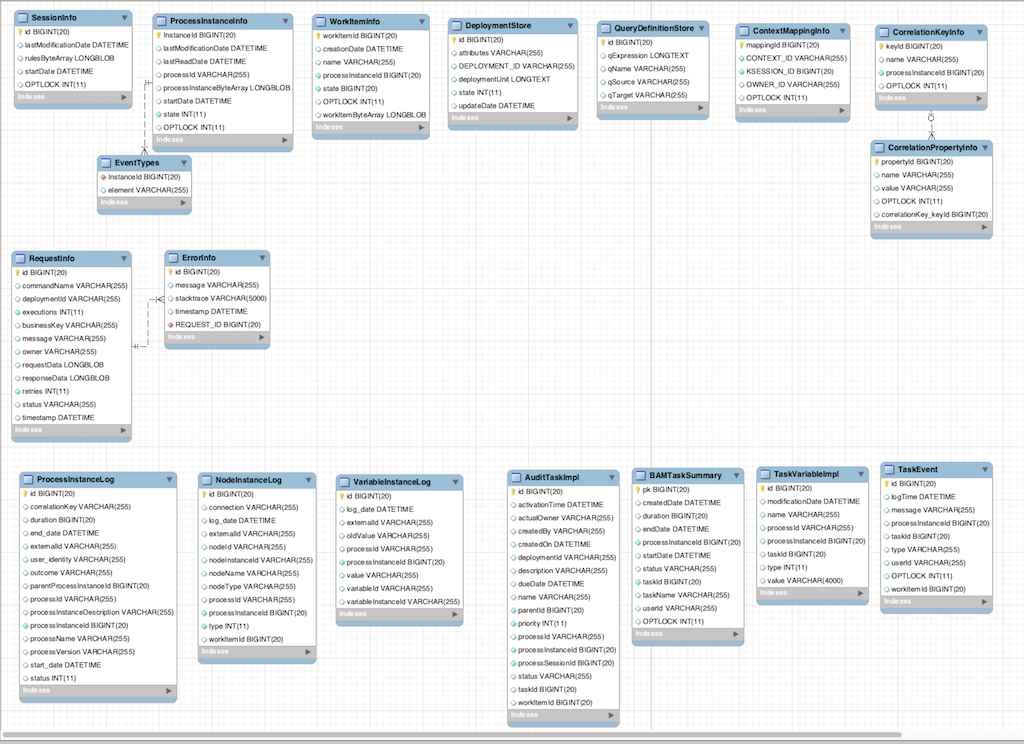

Another optional core service is runtime persistence; this will persist the state of all your process instances and log audit information about everything that is happening at runtime.

-

Applications can connect to the core engine through its Java API or as a set of CDI services, but also remotely through a REST and JMS API.

-

-

Web-based tools allow you to model, simulate and deploy your processes and other related artifacts (like data models, forms, rules, etc.):

-

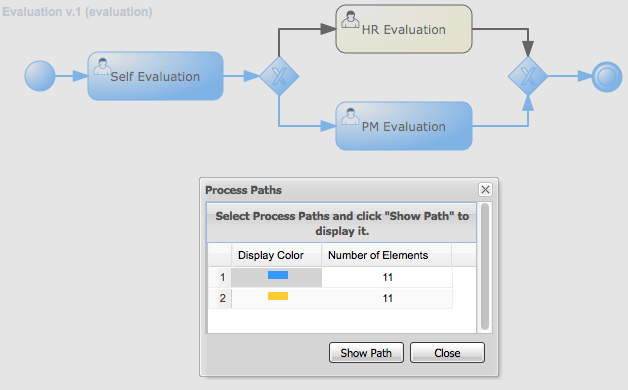

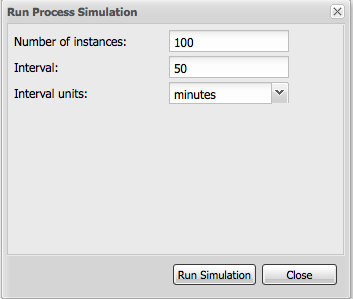

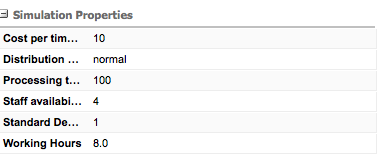

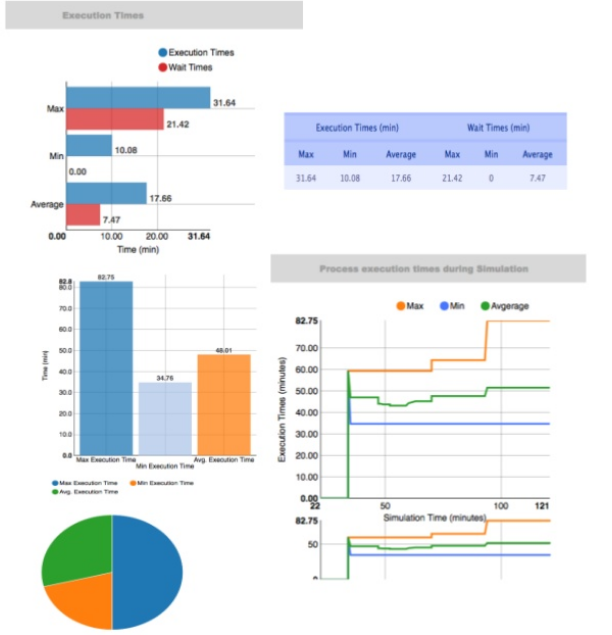

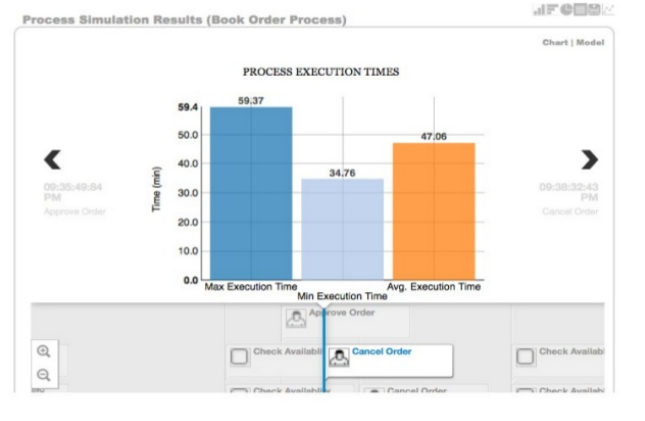

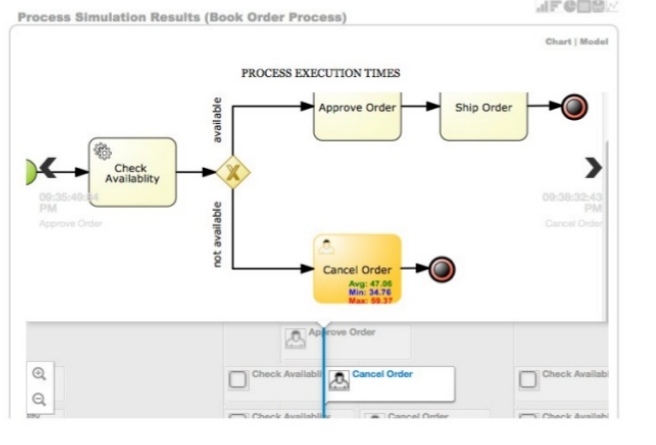

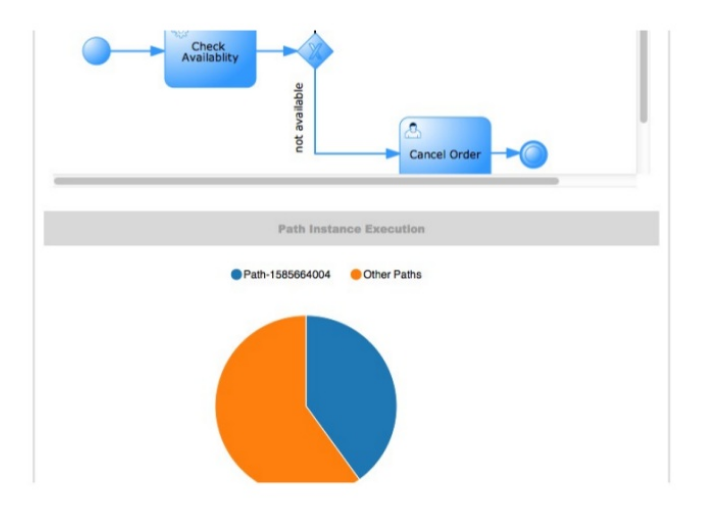

The process designer allows business users to design and simulate business processes in a web-based environment.

-

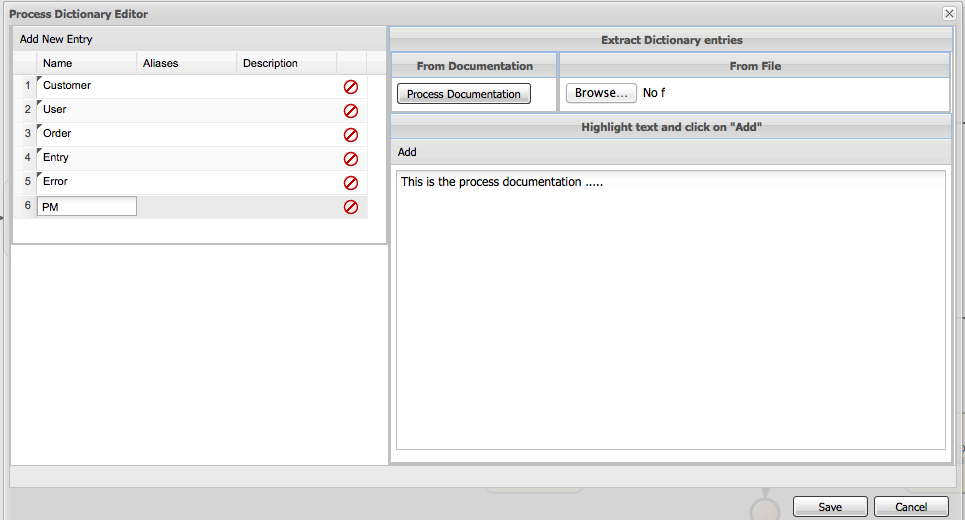

The data modeler allows non-technical users to view, modify and create data models for use in your processes.

-

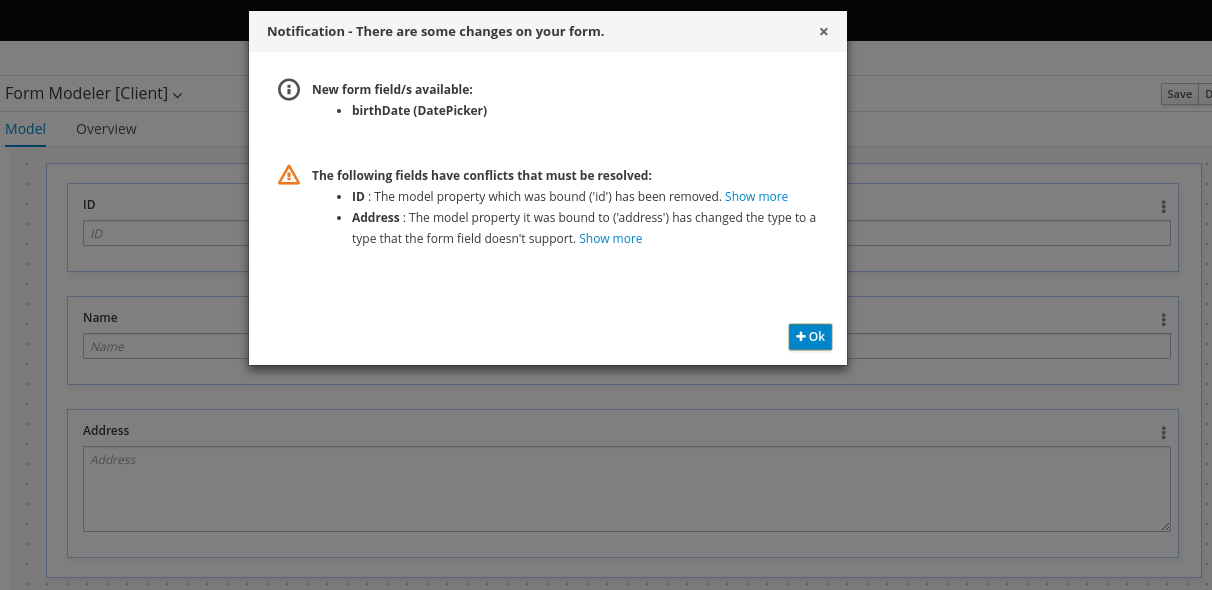

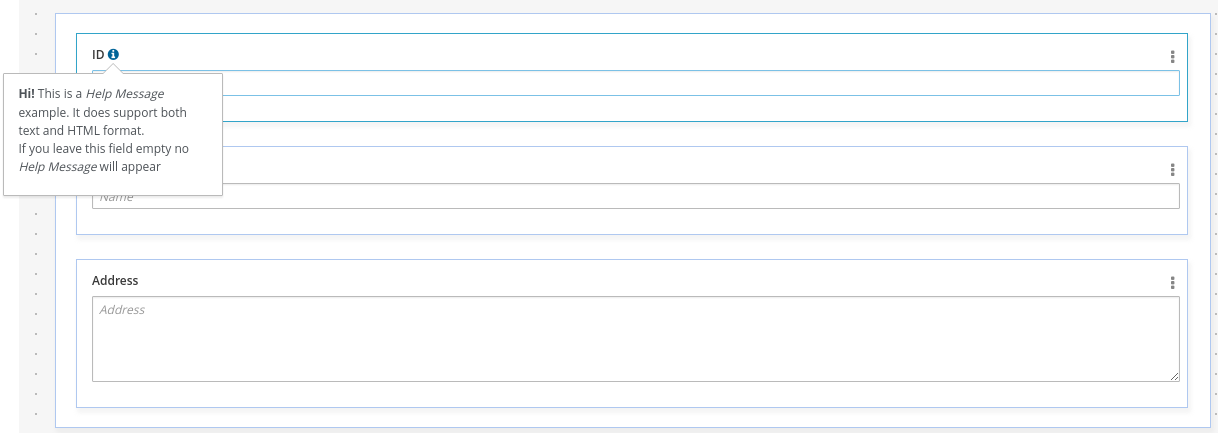

A web-based form modeler also allows you to create, generate or edit forms related to your processes (to start the process or to complete one of the user tasks).

-

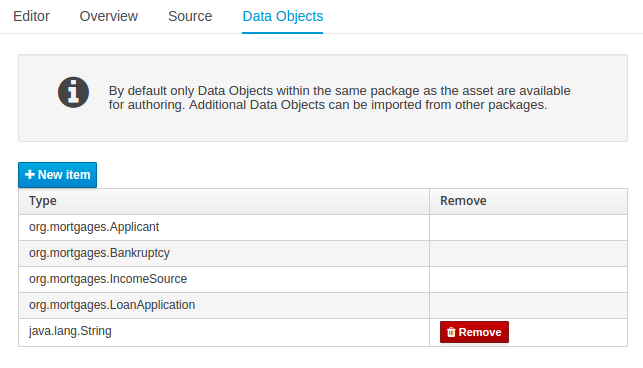

Rule authoring allows you to specify different types of business rules (decision tables, guided rules, etc.) for combination with your processes.

-

All assets are stored and managed by the Guvnor repository (exposed through Git) and can be managed (versioning), built and deployed.

-

-

The web-based management console allows business users to manage their runtime (manage business processes like start new processes, inspect running instances, etc.), to manage their task list and to perform Business Activity Monitoring (BAM) and see reports.

-

The Eclipse-based developer tools are an extension to the Eclipse IDE, targeted towards developers, and allows you to create business processes using drag and drop, test and debug your processes, etc.

Each of the component is described in more detail below.

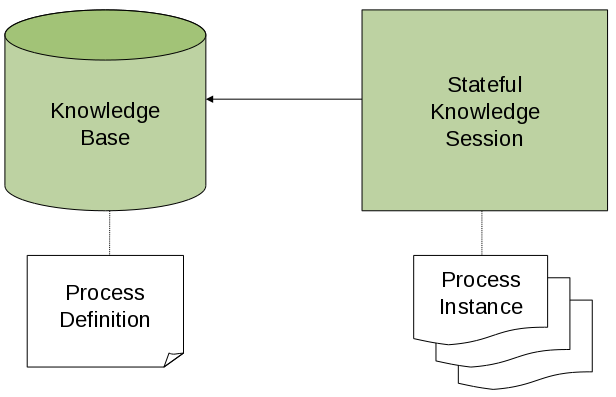

1.3. jBPM engine in jBPM

The jBPM engine implements the Business Process Management (BPM) paradigm in jBPM. BPM is a business methodology that enables modeling, measuring, and optimizing processes within an enterprise.

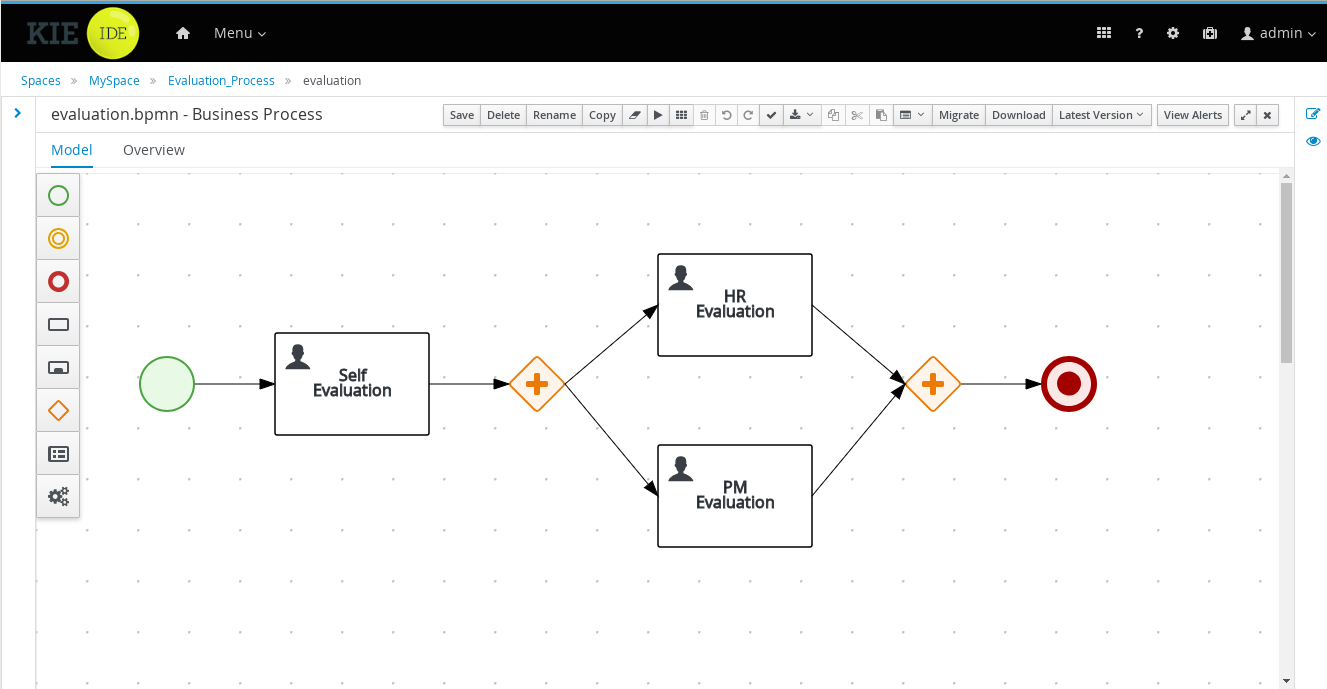

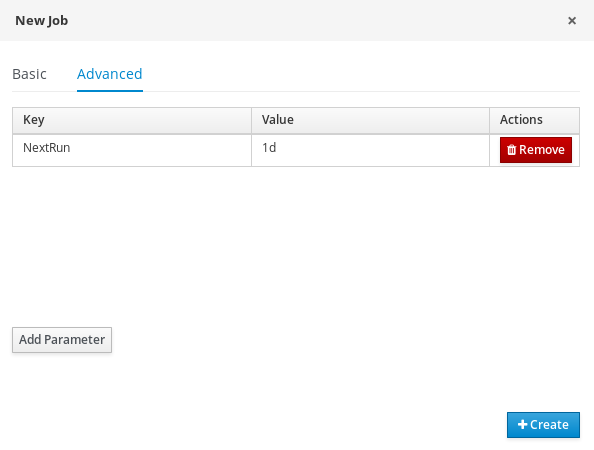

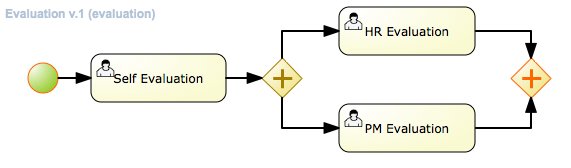

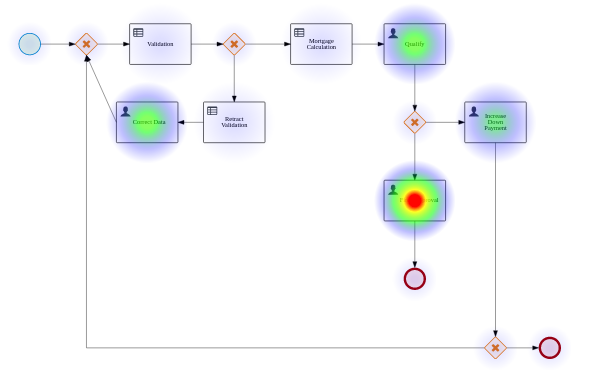

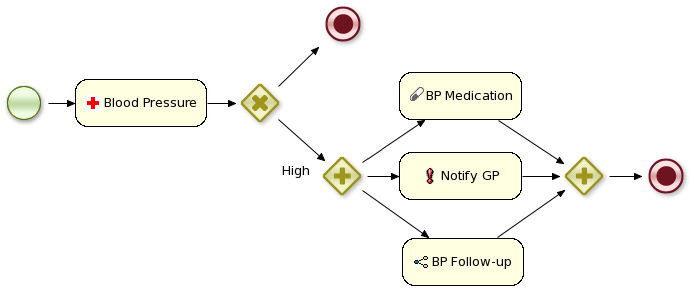

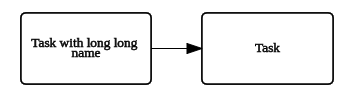

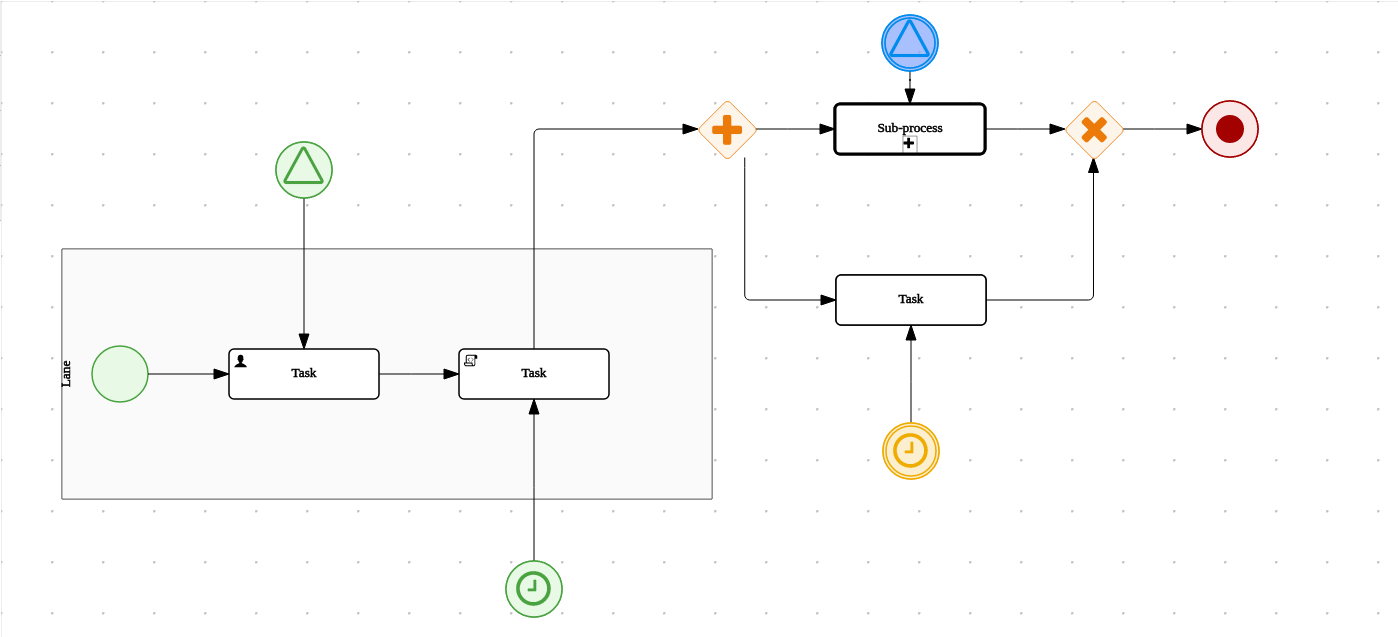

In BPM, a repeatable business process is represented as a workflow diagram. The Business Process Model and Notation (BPMN) specification defines the available elements of this diagram. The jBPM engine implements a large subset of the BPMN 2.0 specification.

With the jBPM engine, business analysts can develop the diagram itself. Developers can implement the business logic of every element of the flow in code, making an executable business process. Users can execute the business process and interact with it as necessary. Analysts can generate metrics that reflect the efficiency of the process.

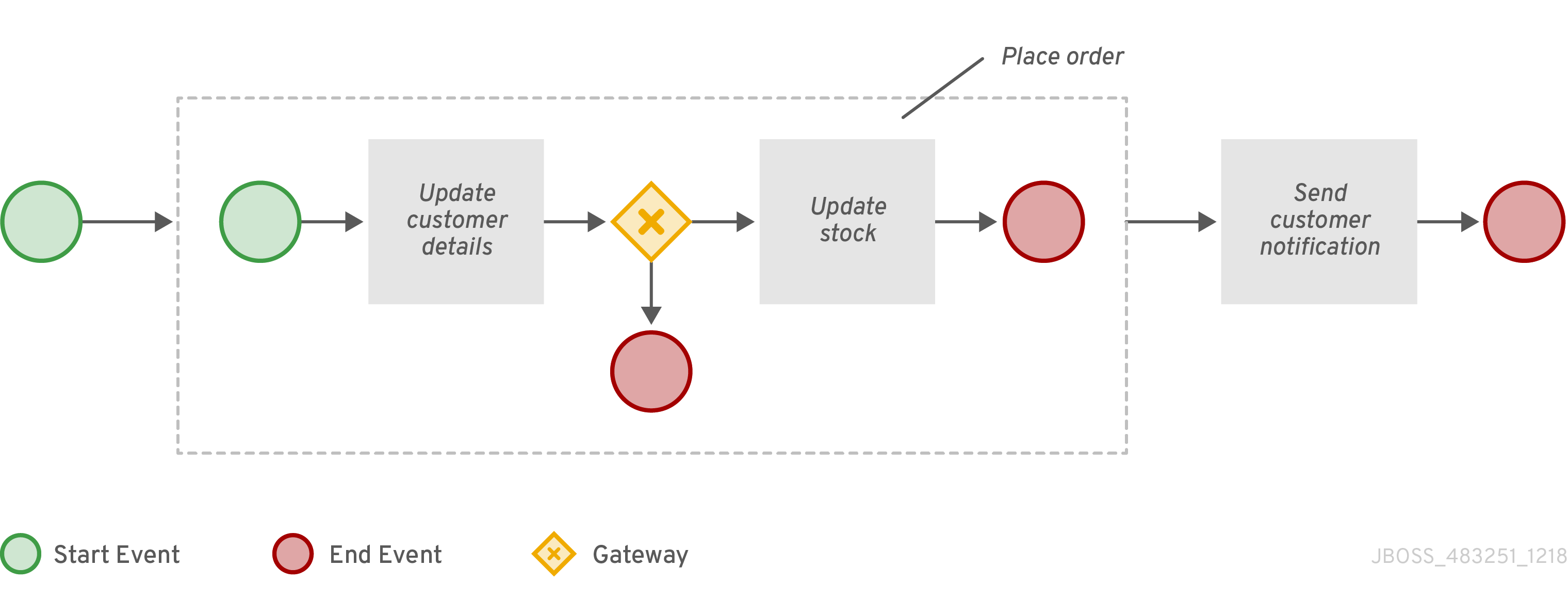

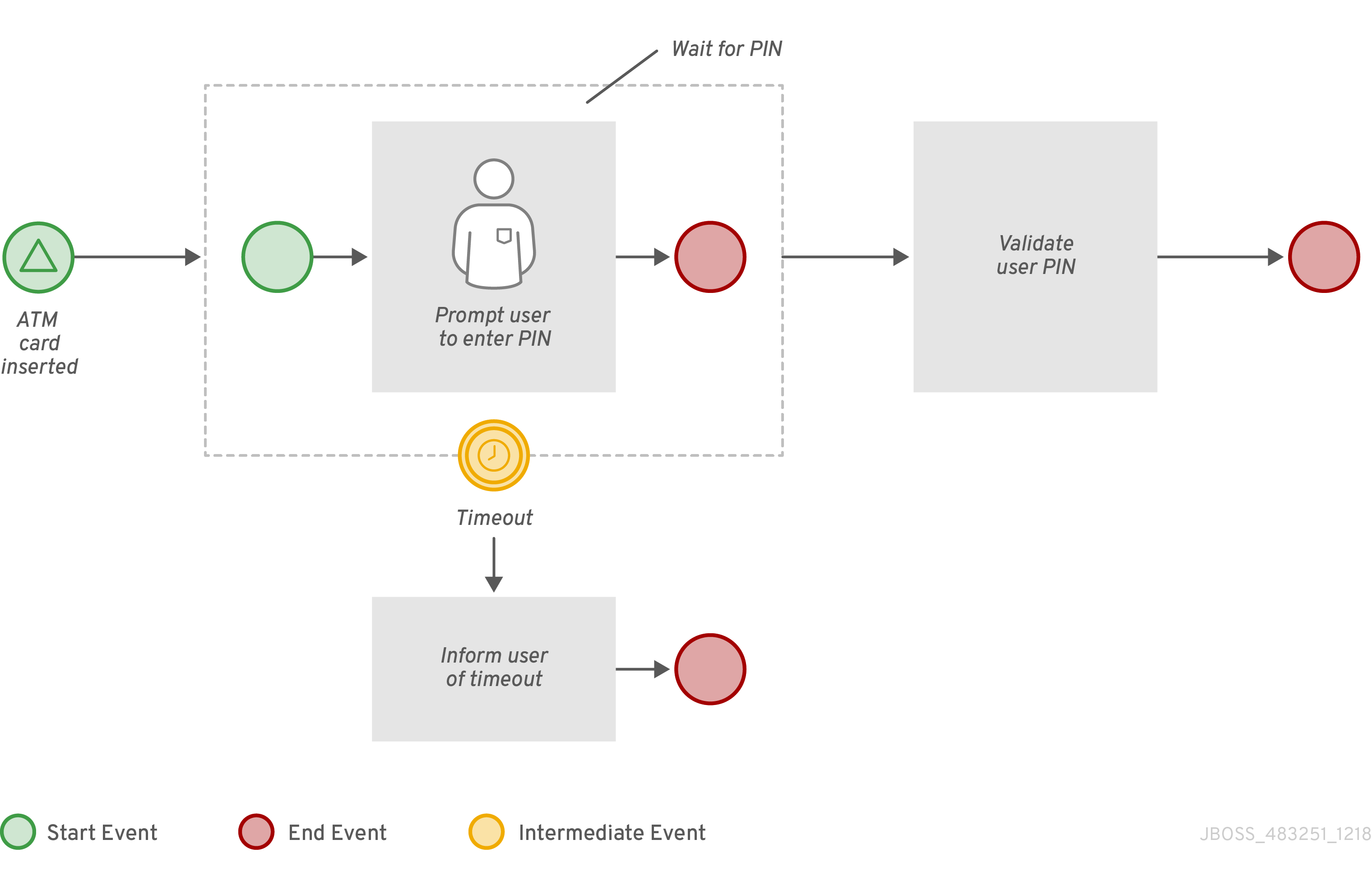

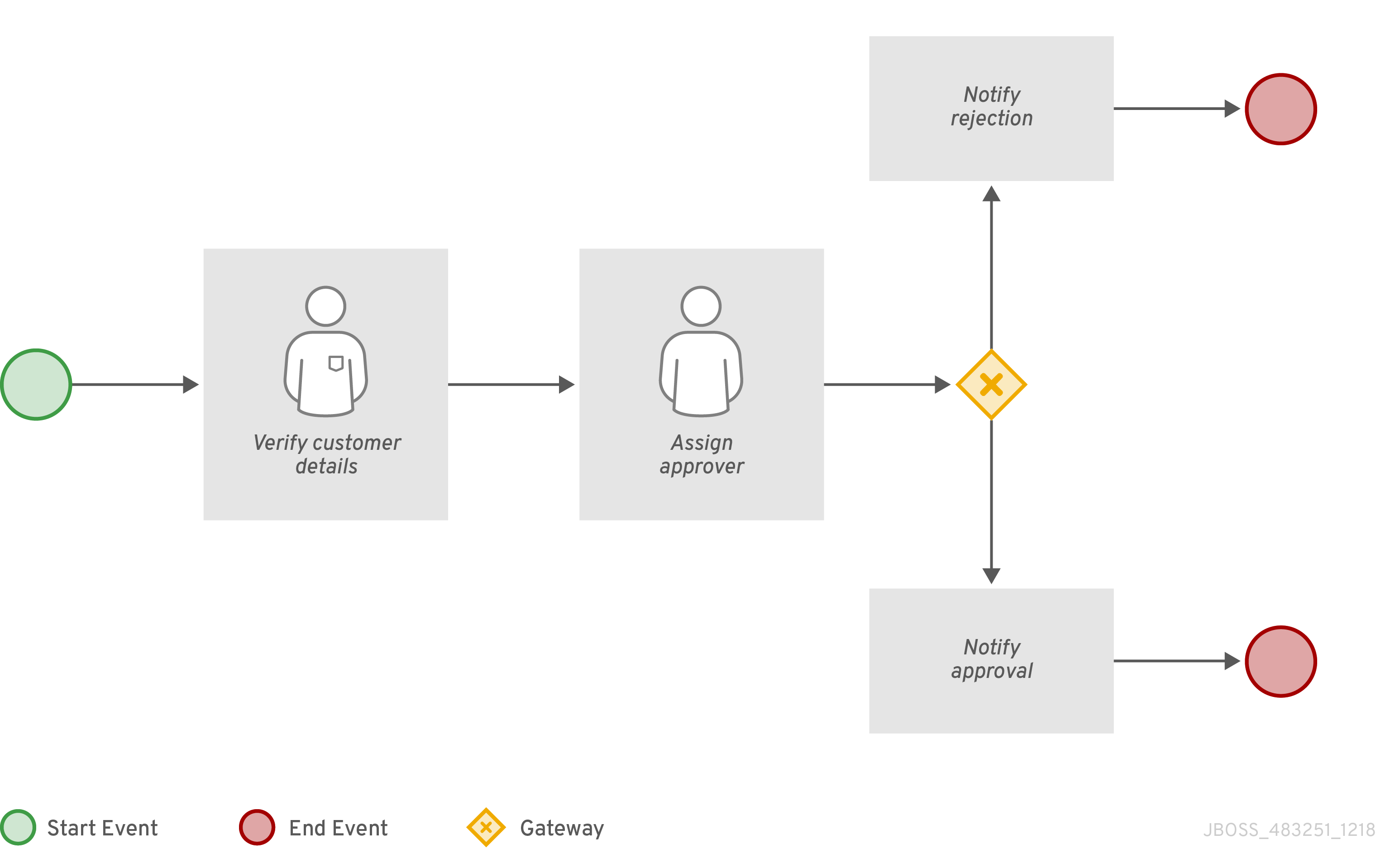

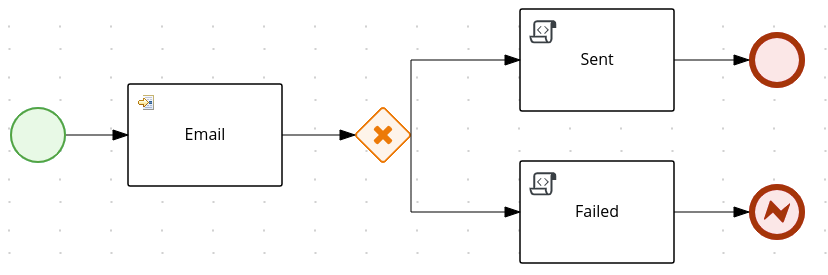

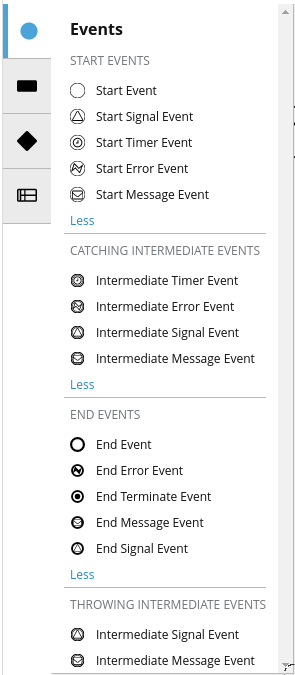

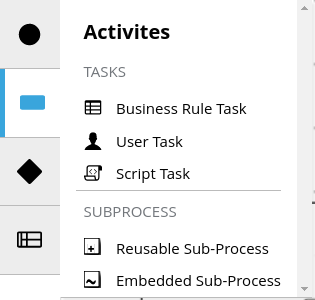

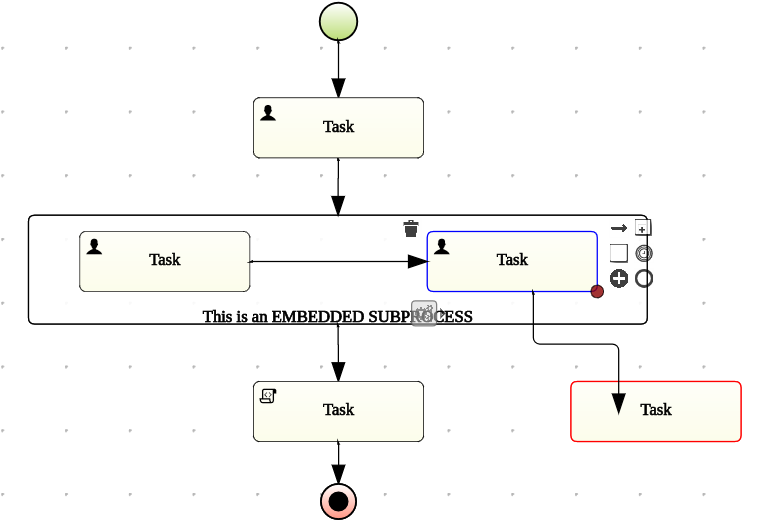

The workflow diagram consists of a number of nodes. The BPMN specification defines many kinds of nodes, including the following principal types:

-

Event: Nodes representing something happening in the process or outside of the process. Typical events are the start and the end of a process. An event can throw messages to other processes and catch such messages. Circles on the diagram represent events.

-

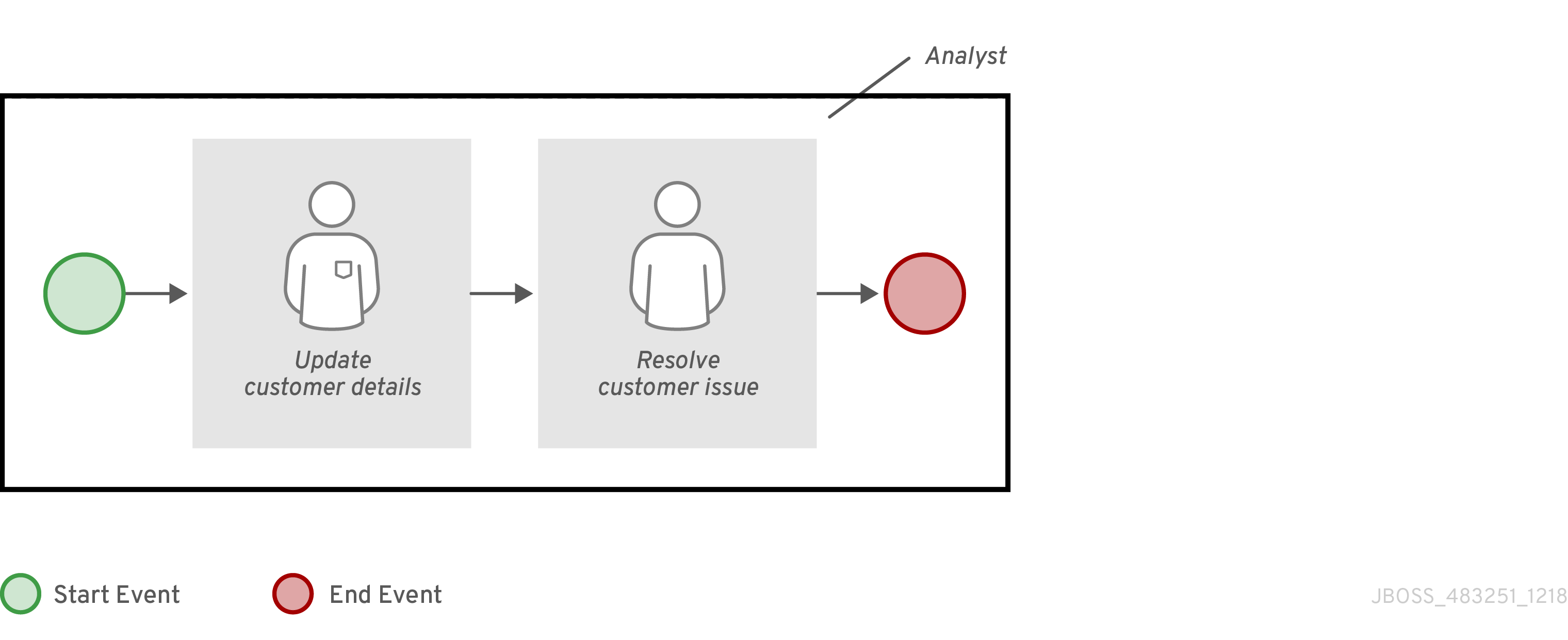

Activity: Nodes representing an action that must be taken (whether automatically or with user involvement). Typical events are a task, which represents an action taken within the process, and a call to a sub-process. Rounded rectangles on the diagram represent activities.

-

Gateway: A branching or merging node. A typical gateway evaluates an expression and, depending on the result, continues to one of several execution paths. Diamond shapes on the diagram represent gateways.

When a user starts the process, a process instance is created. The process instance contains a set of data, or context, stored in process variables. The state of a process instance includes all the context data and also the current active node (or, in some cases, several active nodes).

Some of these variables can be initialized when a user starts the process. An activity can read from process variables and write to process variables. A gateway can evaluate process variables to determine the execution path.

For example, a purchase process in a shop can be a business process. The content of the user’s cart can be the initial process context. At the end of execution, the process context can contain the payment confirmation and shipment tracking details.

Optionally, you can use the BPMN data modeler in Business Central to design the model for the data in process variables.

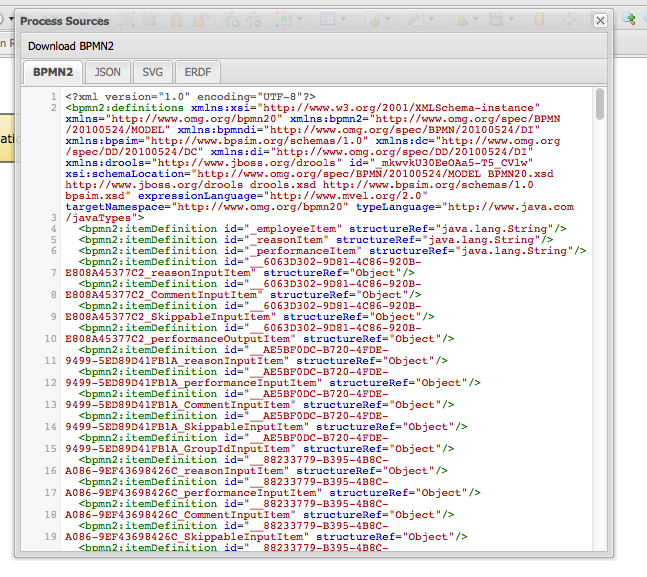

The workflow diagram is represented in code by an XML business process definition. The logic of events, gateways, and sub-process calls are defined within the business process definition.

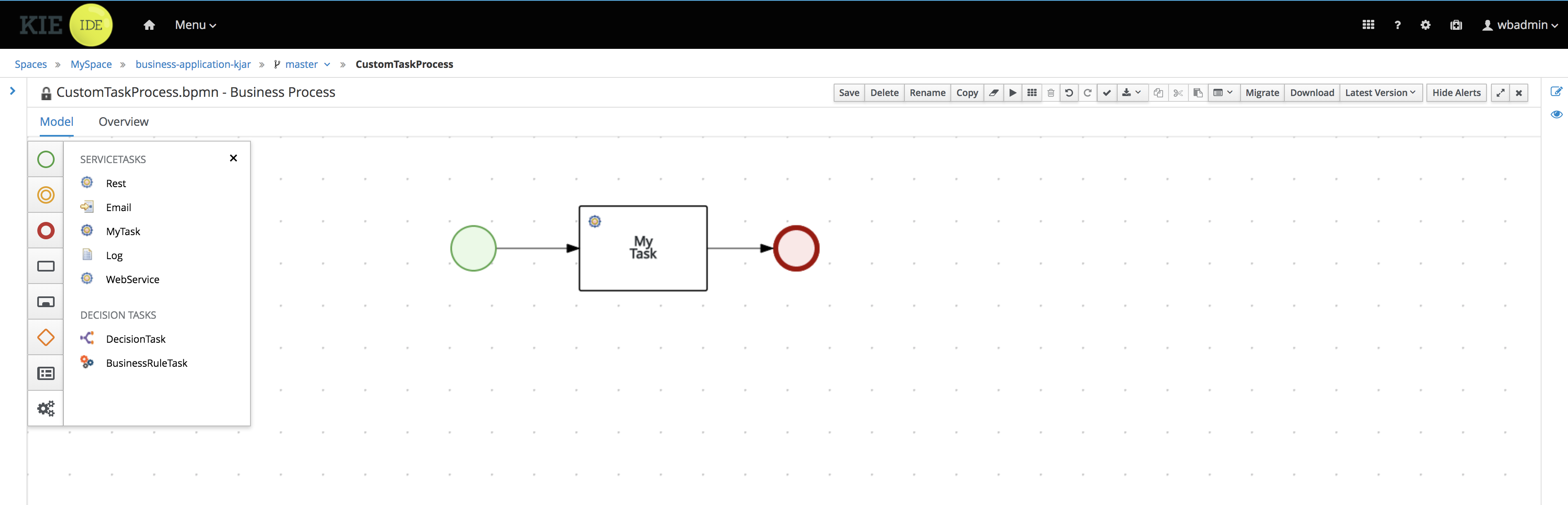

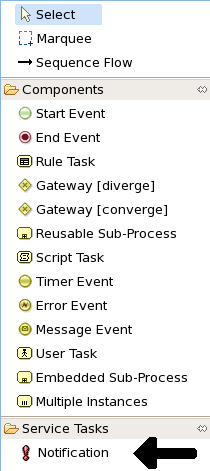

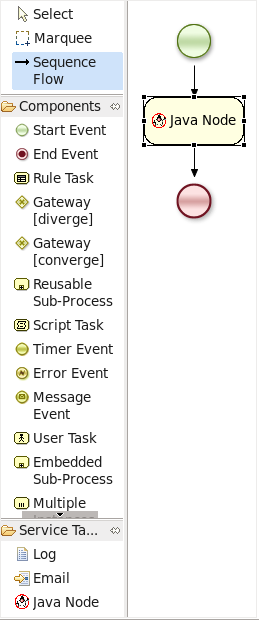

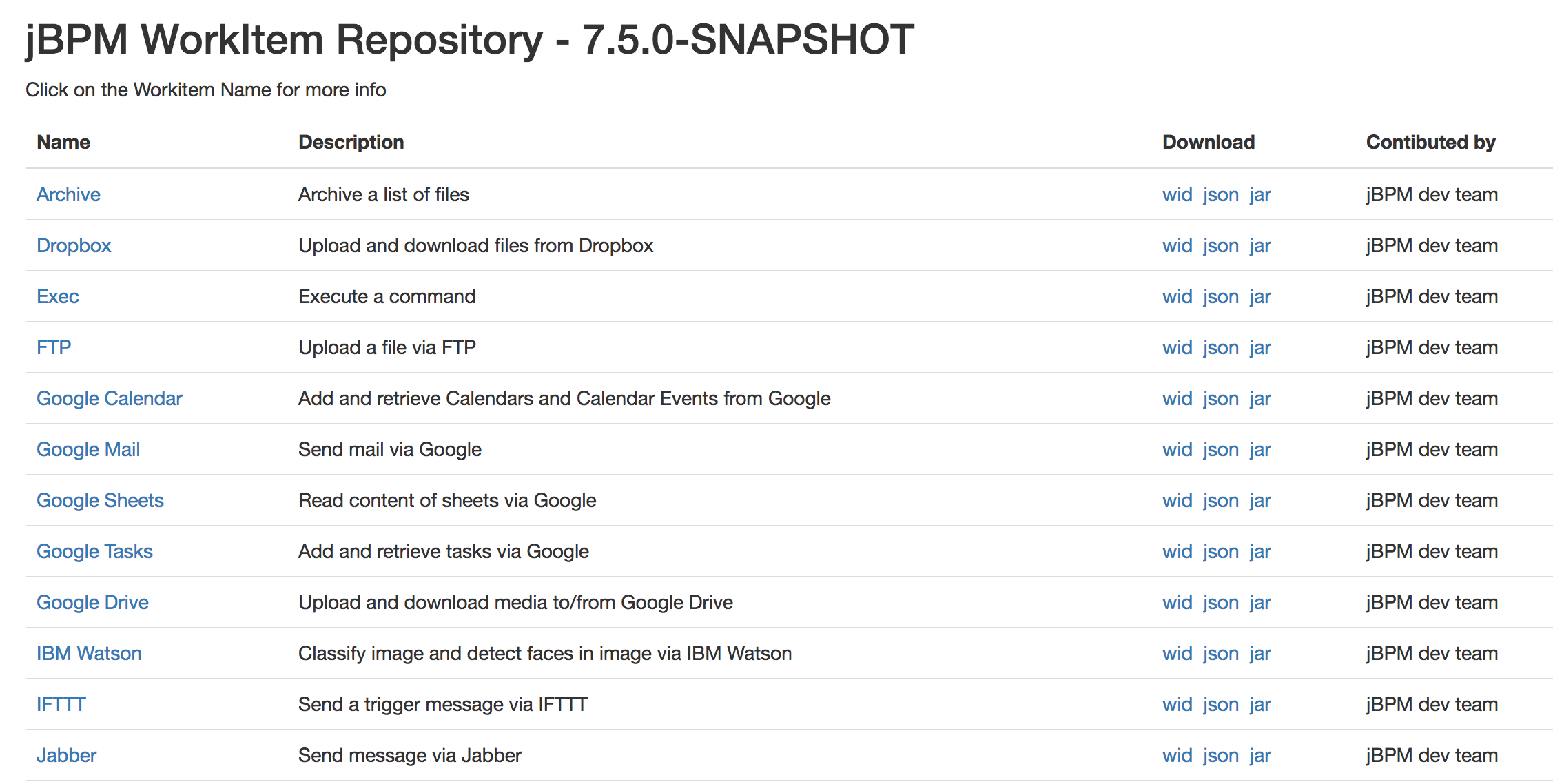

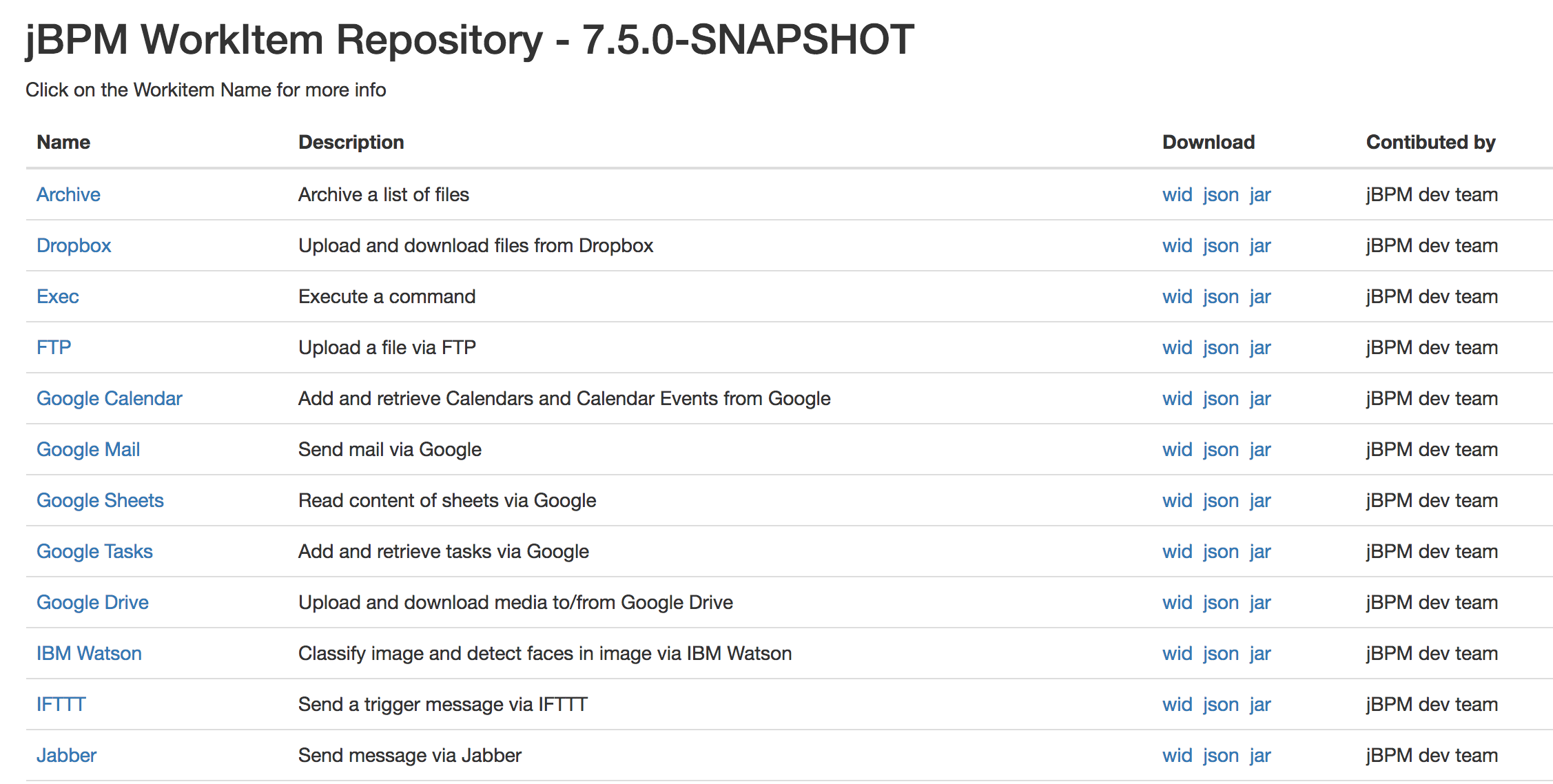

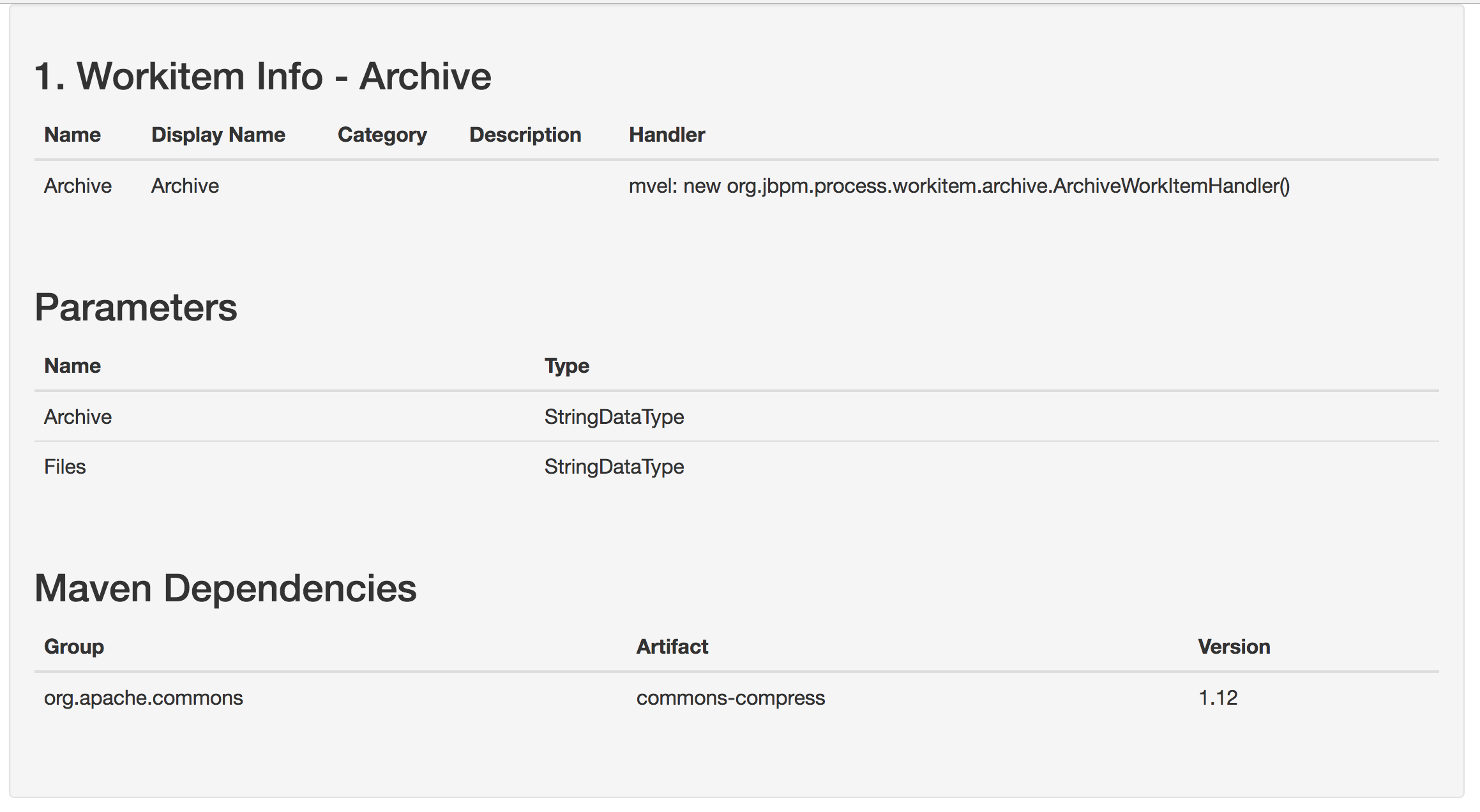

Some task types (for example, script tasks and the standard decision engine rule task) are implemented in the engine. For other task types, including all custom tasks, when the task must be executed the jBPM engine executes a call using the Work Item Handler API. Code external to the engine can implement this API, providing a flexible mechanism for implementing various tasks.

The jBPM engine includes a number of predefined types of tasks. These types include a script task that runs user Java code, a service task that calls a Java method or a Web Service, a decision task that calls a decision engine service, and other custom tasks (for example, REST and database calls).

Another predefined type of task is a user task, which includes interaction with a user. User tasks in the process can be assigned to users and groups.

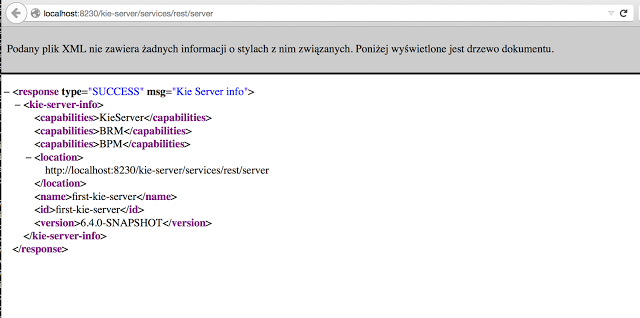

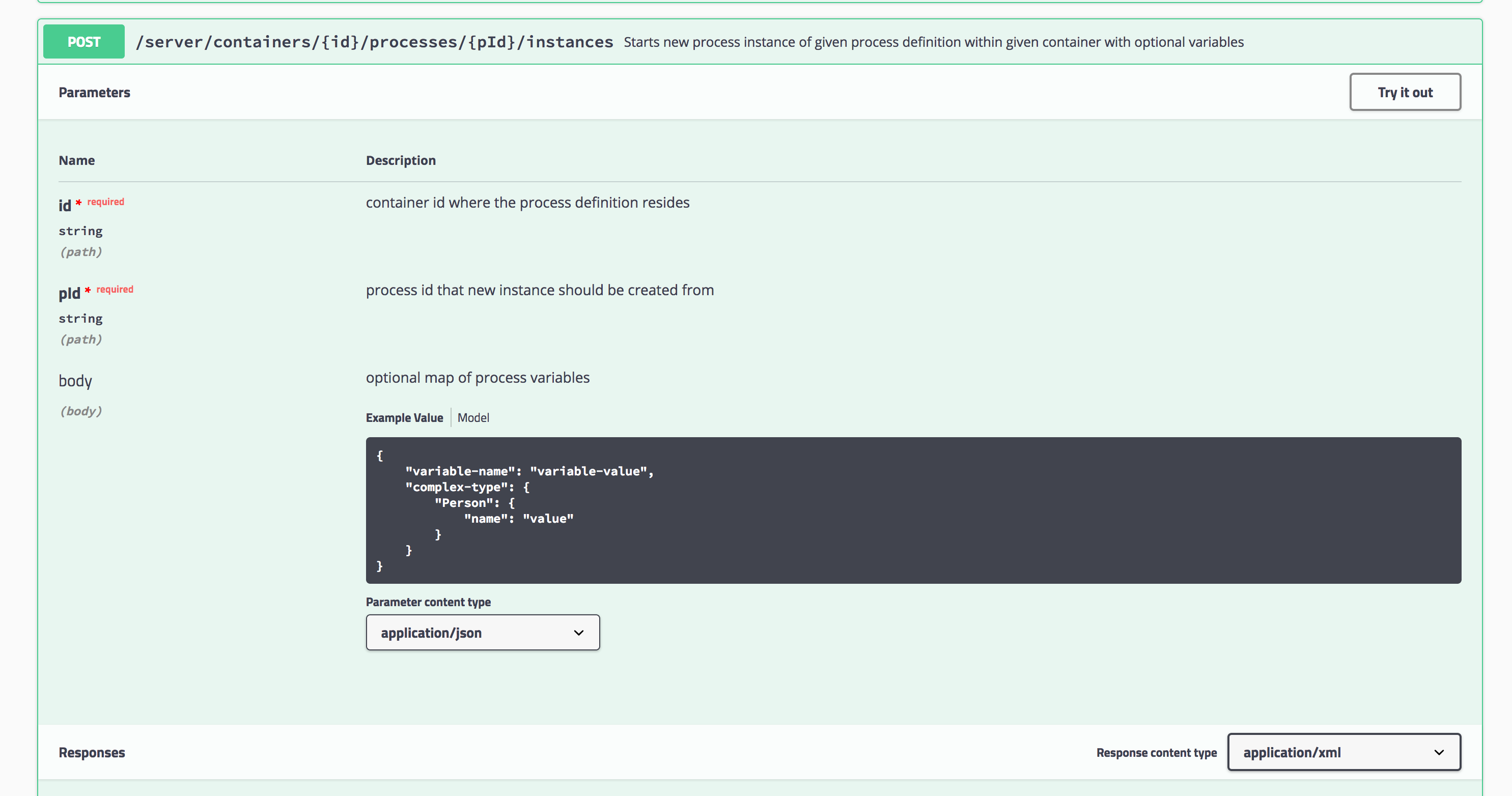

The jBPM engine uses the KIE API to interact with other software components. You can run business processes as services on a KIE Server and interact with them using a REST implementation of the KIE API. Alternatively, you can embed business processes in your application and interact with them using KIE API Java calls. In this case, you can run the jBPM engine in any Java environment.

Business Central includes a user interface for users executing human tasks and a form modeler for creating the web forms for human tasks. However, you can also implement a custom user interface that interacts with the jBPM engine using the KIE API.

The jBPM engine supports the following additional features:

-

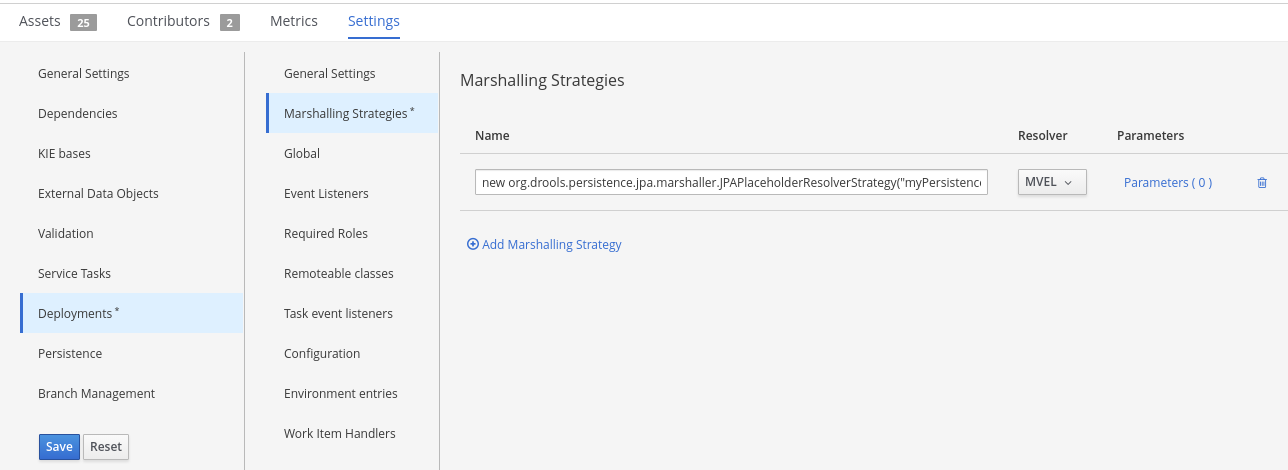

Support for persistence of the process information using the JPA standard. Persistence preserves the state and context (data in process variables) of every process instance, so that they are not lost in case any components are restarted or taken offline for some time. You can use an SQL database engine to store the persistence information.

-

Pluggable support for transactional execution of process elements using the JTA standard. If you use a JTA transaction manager, every element of the business process starts as a transaction. If the element does not complete, the context of the process instance is restored to the state in which it was before the element started.

-

Support for custom extension code, including new node types and other process languages.

-

Support for custom listener classes that are notified about various events.

-

Support for migrating running process instances to a new version of their process definition

The jBPM engine can also be integrated with other independent core services:

-

The human task service can manage user tasks when human actors need to participate in the process. It is fully pluggable and the default implementation is based on the WS-HumanTask specification. The human task service manages the lifecycle of the tasks, task lists, task forms, and some more advanced features like escalation, delegation, and rule-based assignments.

-

The history log can store all information about the execution of all the processes in the jBPM engine. While runtime persistence stores the current state of all active process instances, you need the history log to ensure access to historic information. The history log contains all current and historic states of all active and completed process instances. You can use the log to query for any information related to the execution of process instances for monitoring and analysis.

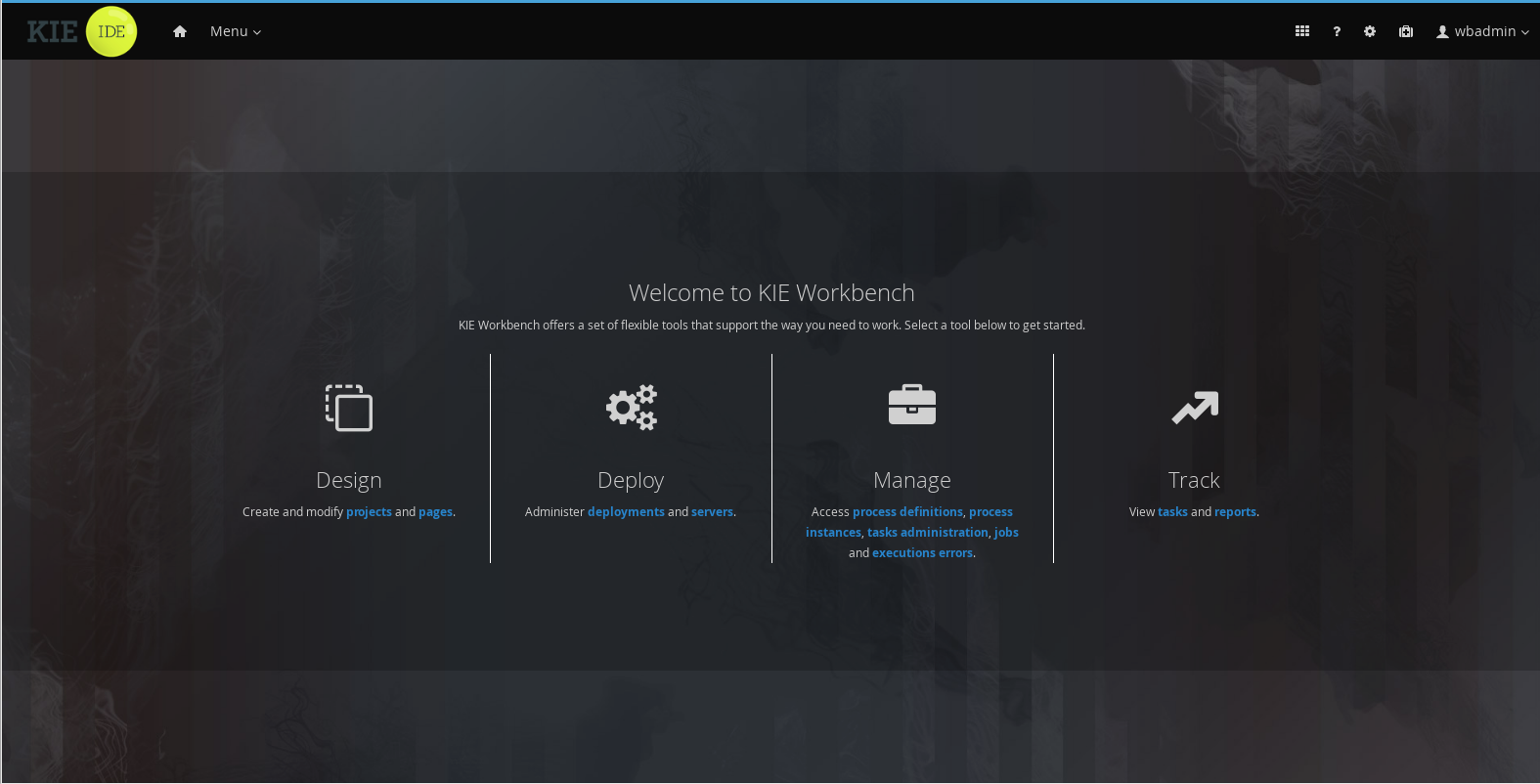

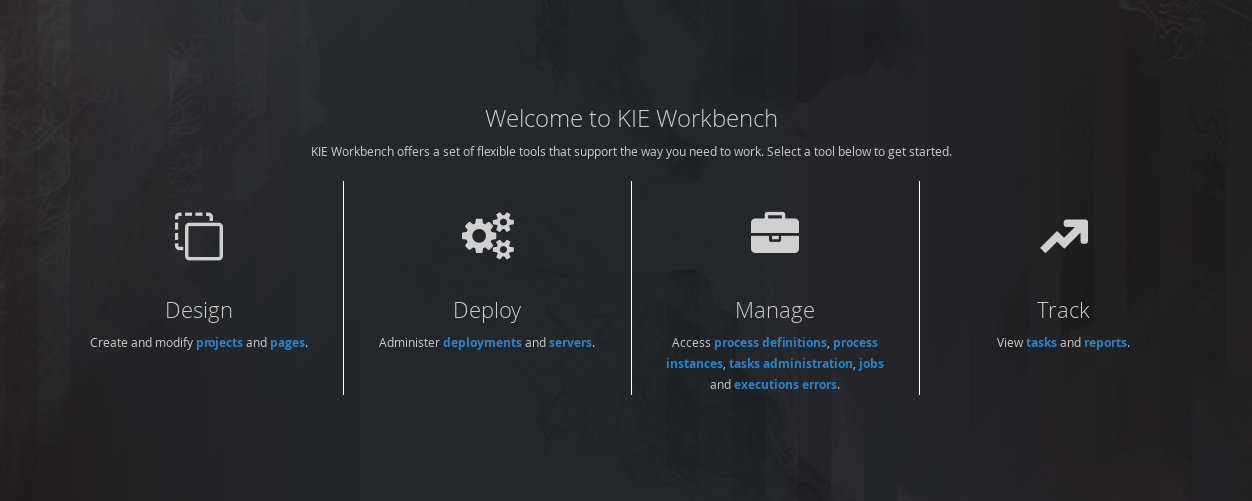

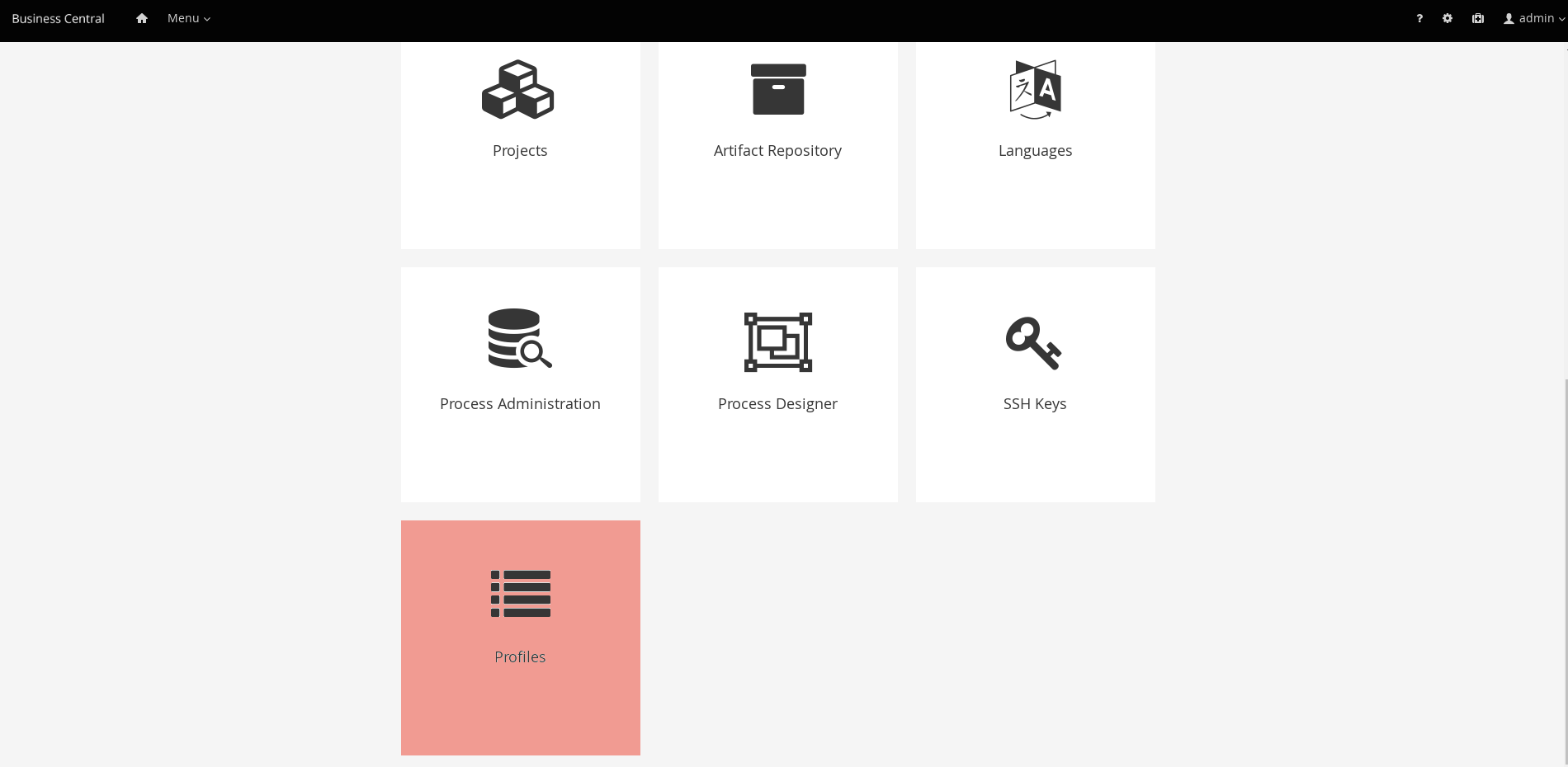

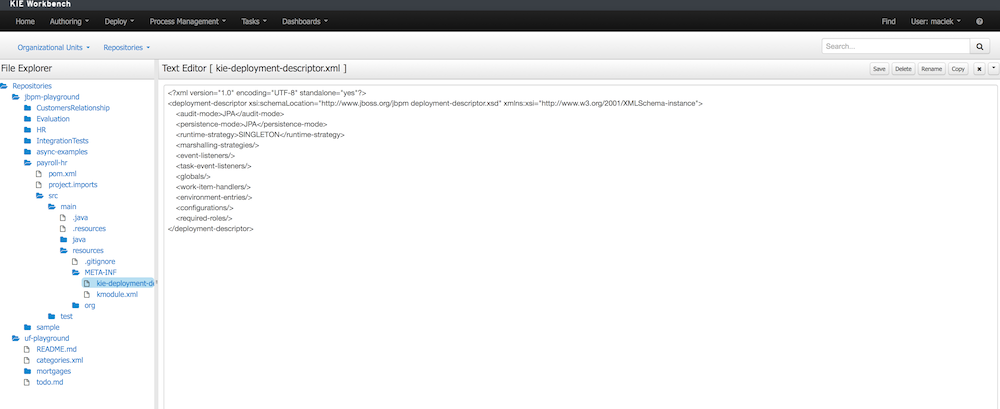

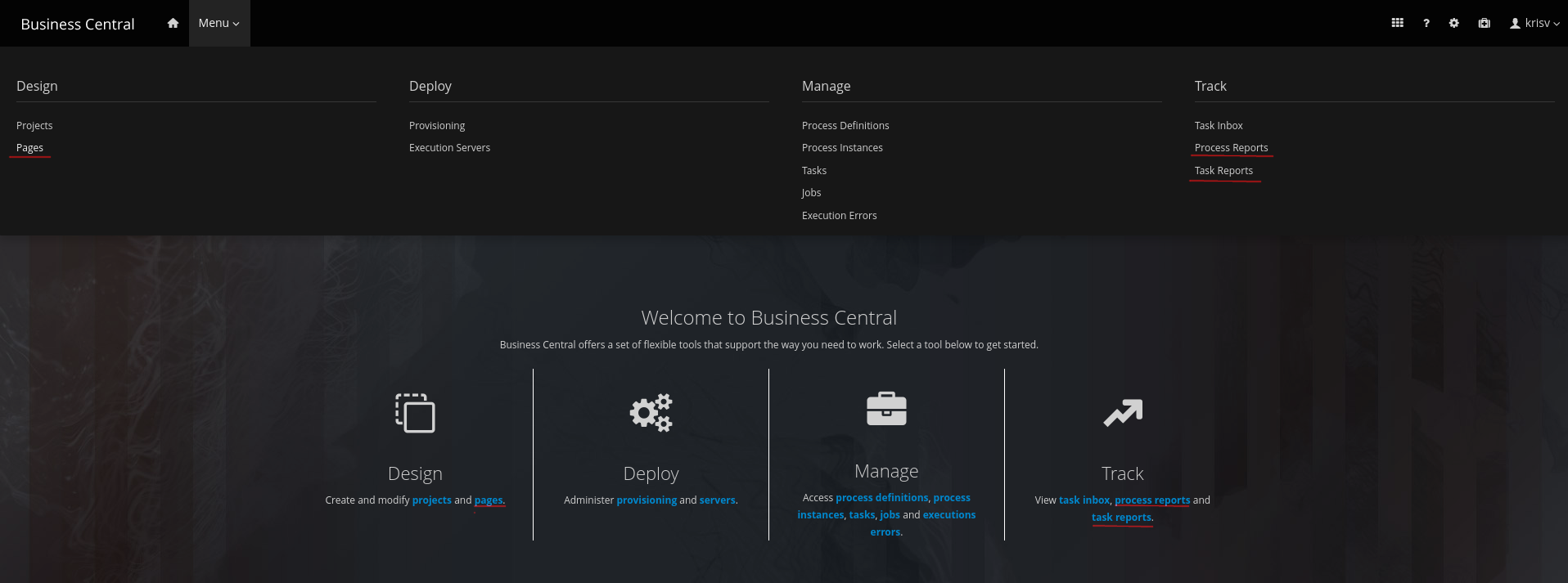

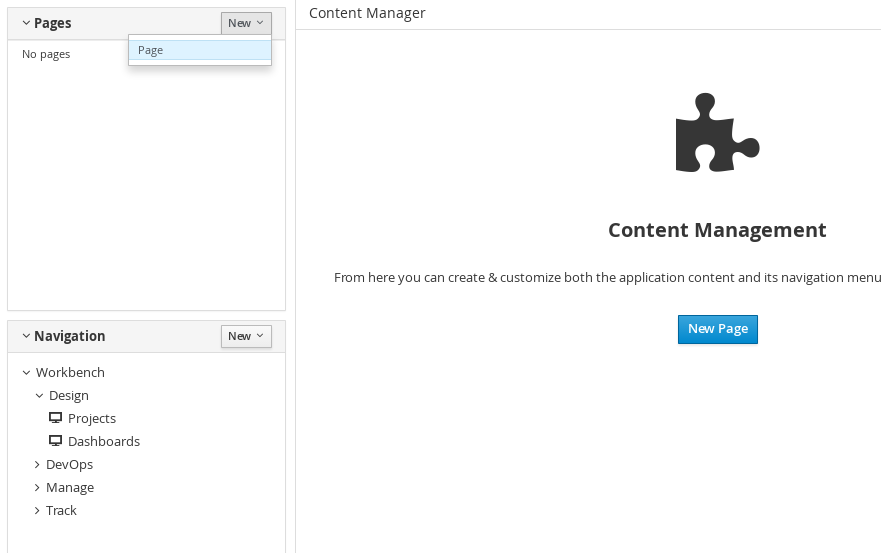

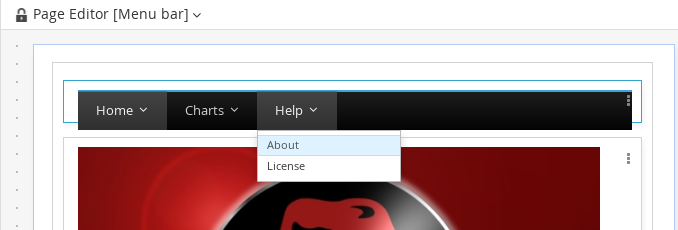

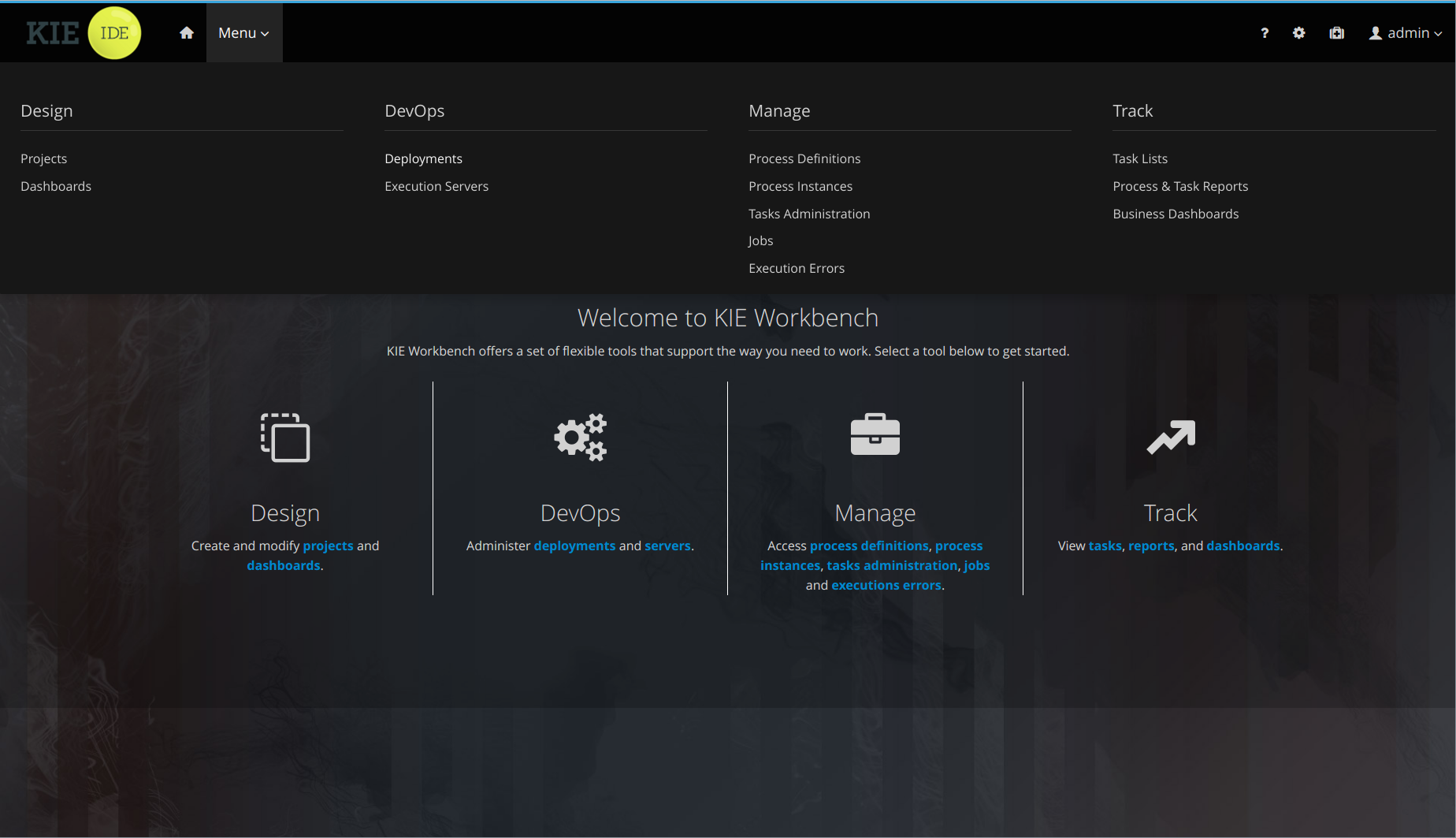

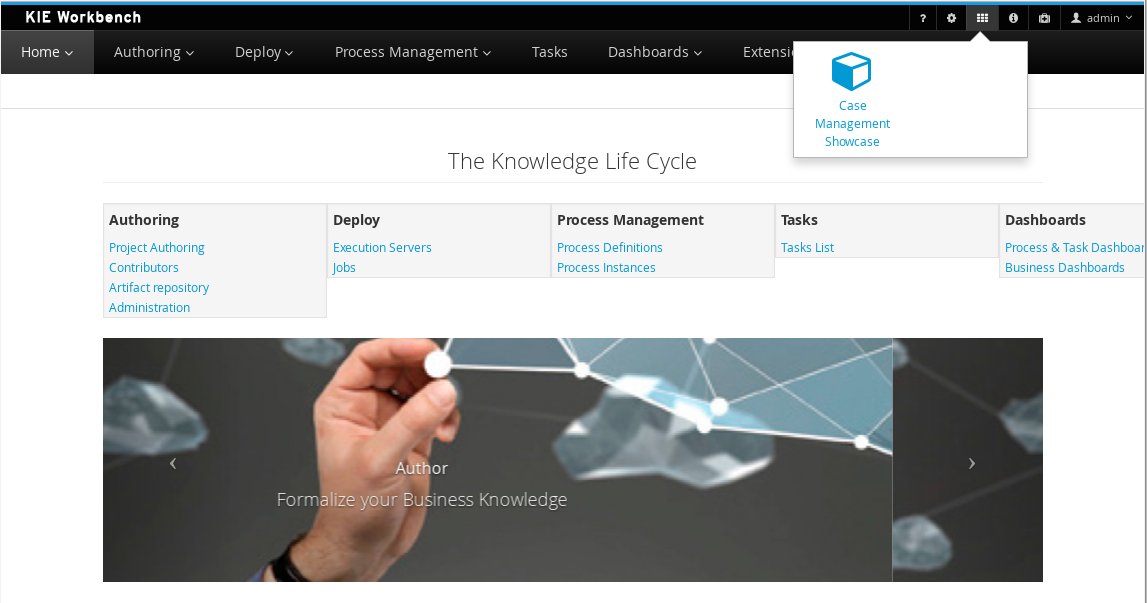

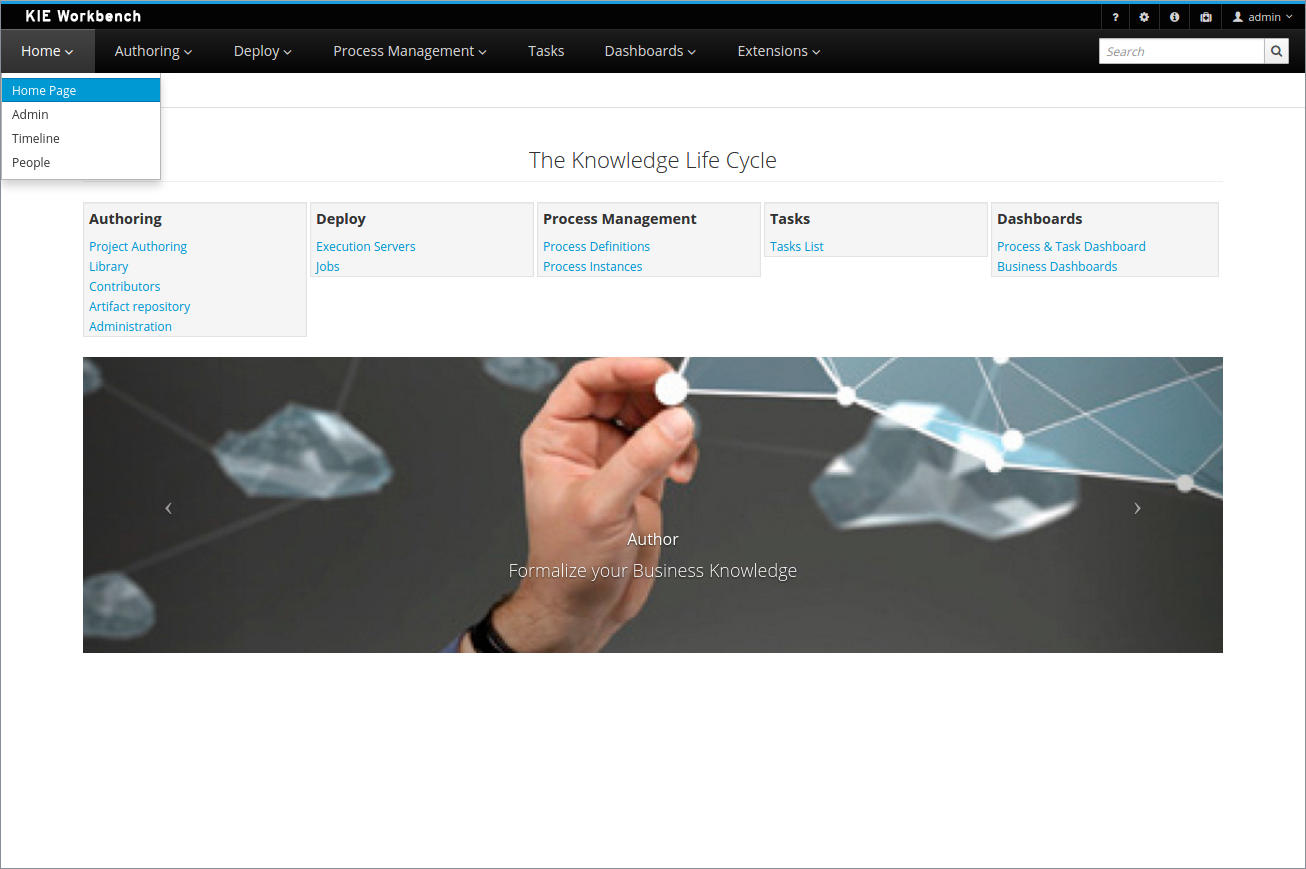

1.4. Business Central

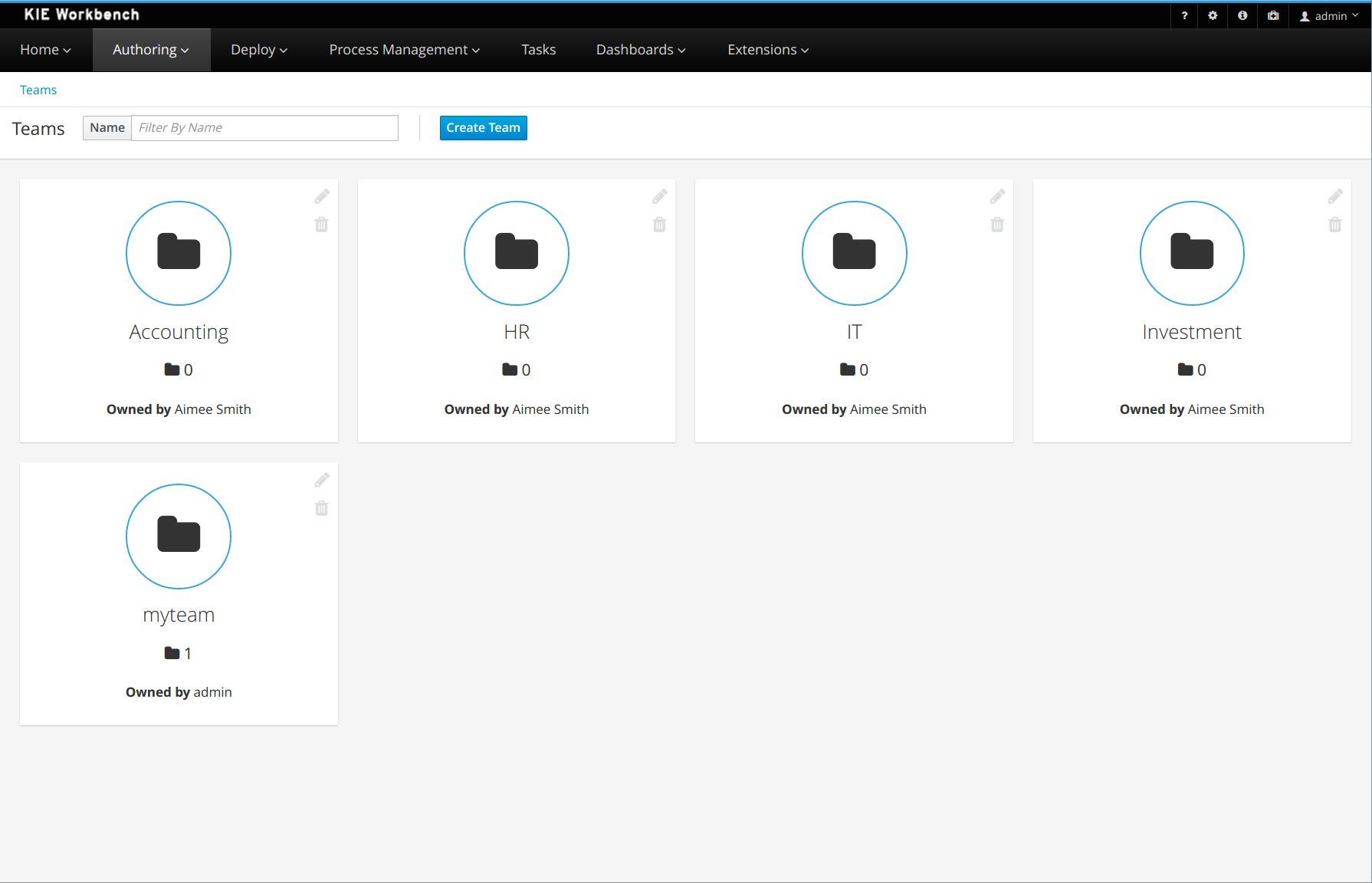

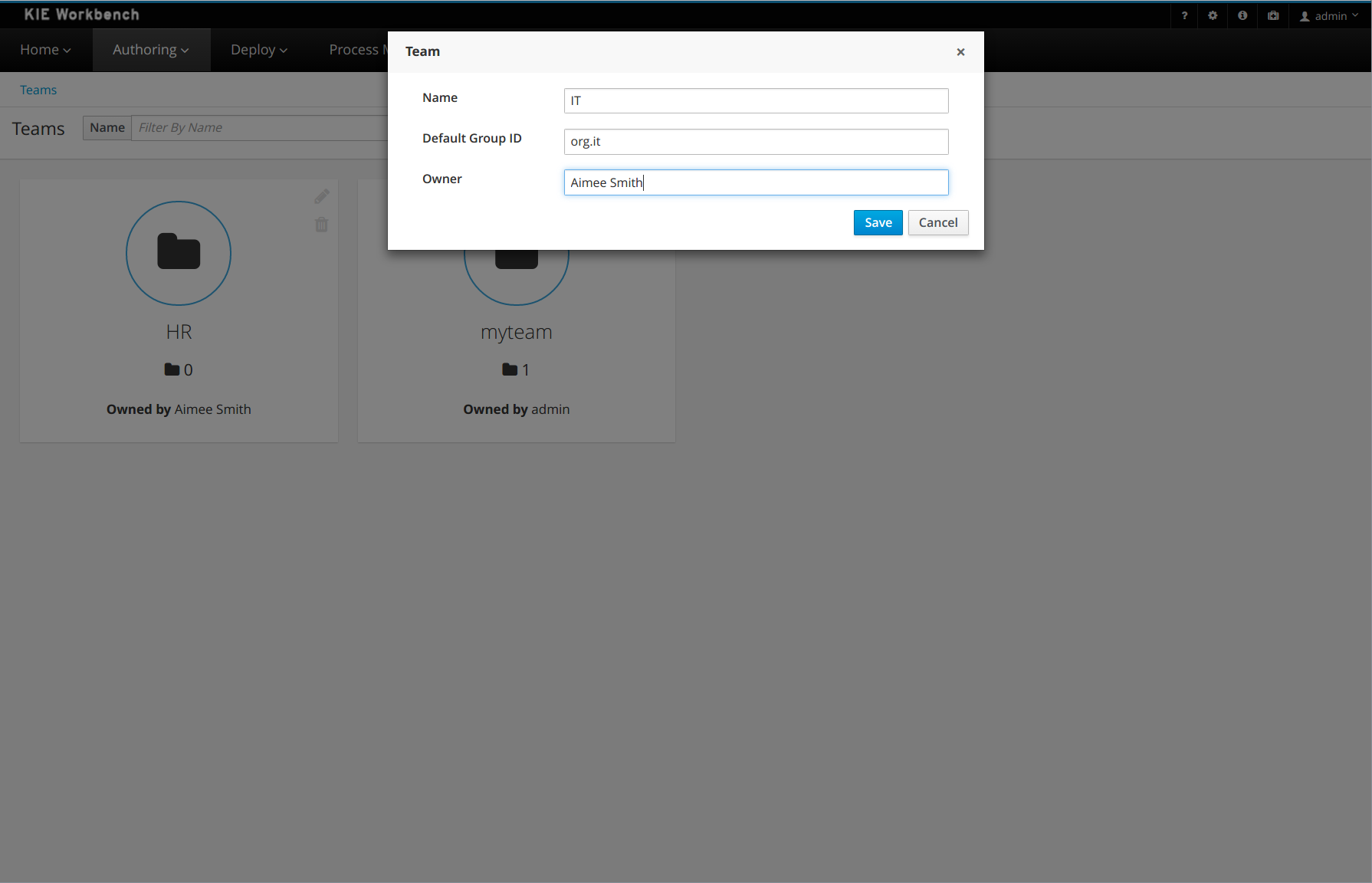

The Business Central web-based application covers the complete life cycle of BPM projects starting at authoring phase, going through implementation, execution and monitoring. It combines a series web-based tools into one configurable solution to manage all assets and runtime data needed for the business solution.

It supports the following:

-

A repository service to store your business processes and related artifacts, using a Git repository, which supports versioning, remote Git access (as a file system) and access via REST.

-

A web-based user interface to manage your business processes, targeted towards business users; it also supports the visualization (and editing) of your artifacts (the web-based editors like designer, data and form modeler are integrated here), but also categorisation, build and deployment, etc..

-

Collaboration features which enable multiple actors (for example business users and developers) to work together on the same project.

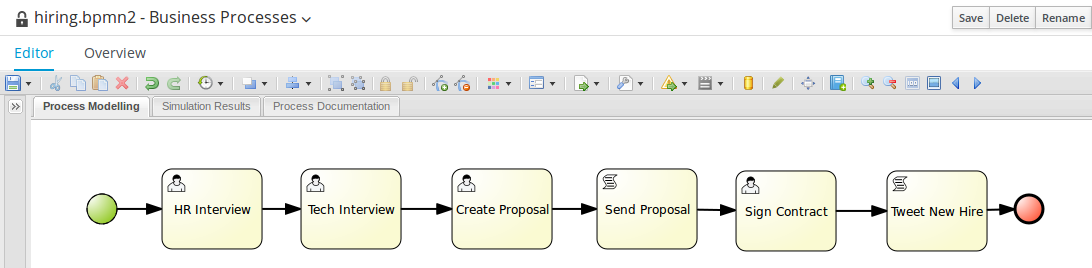

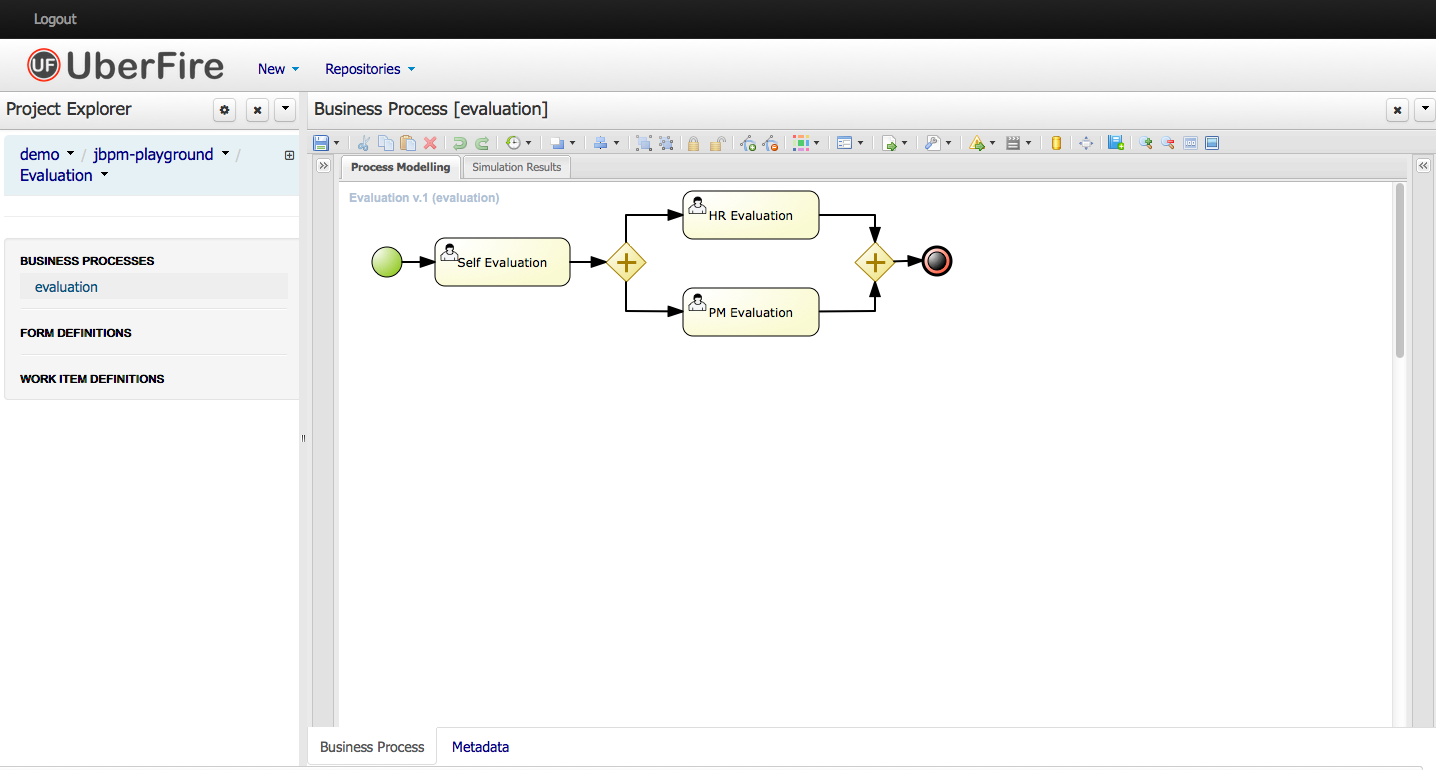

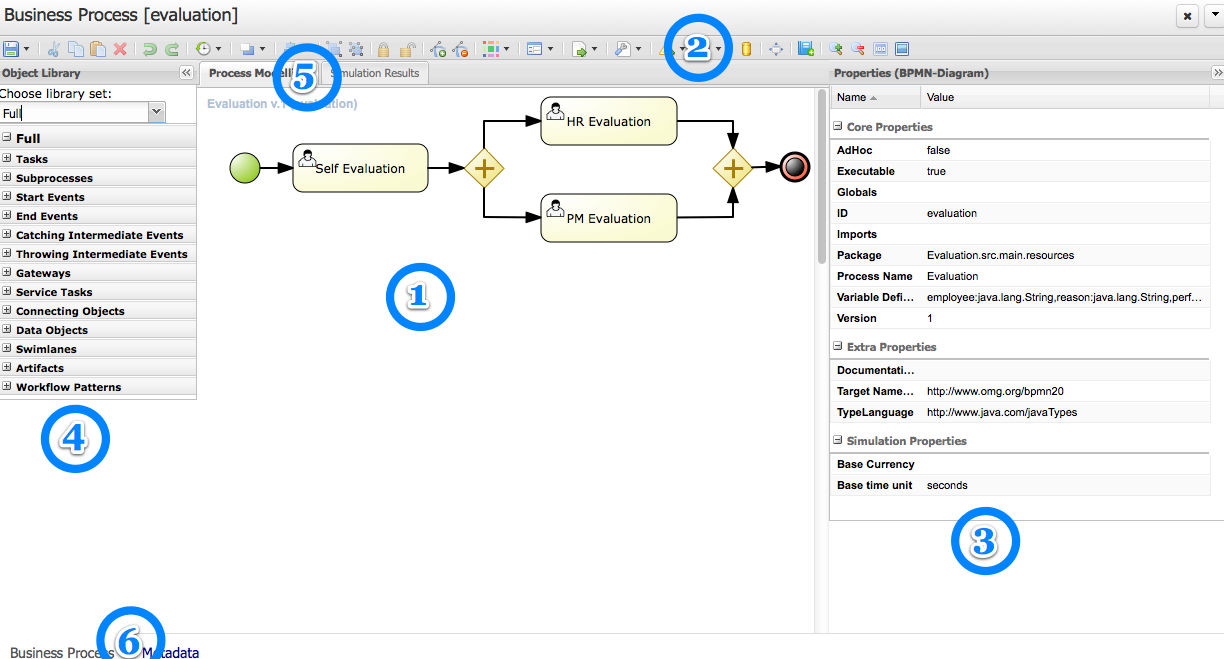

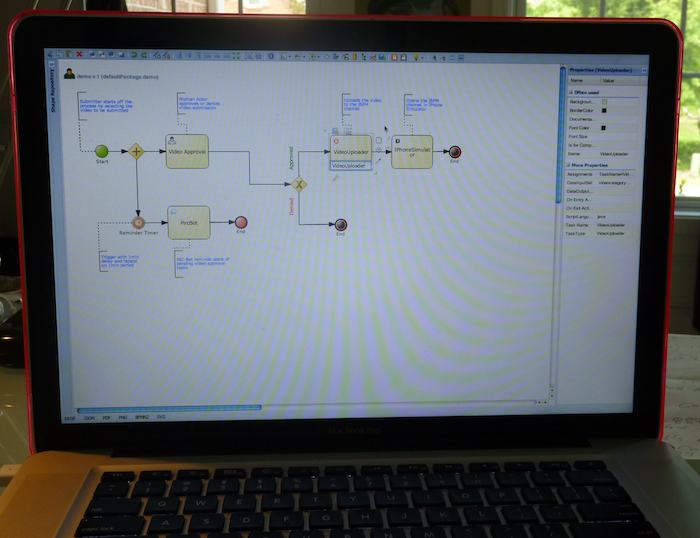

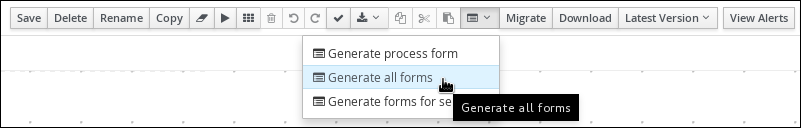

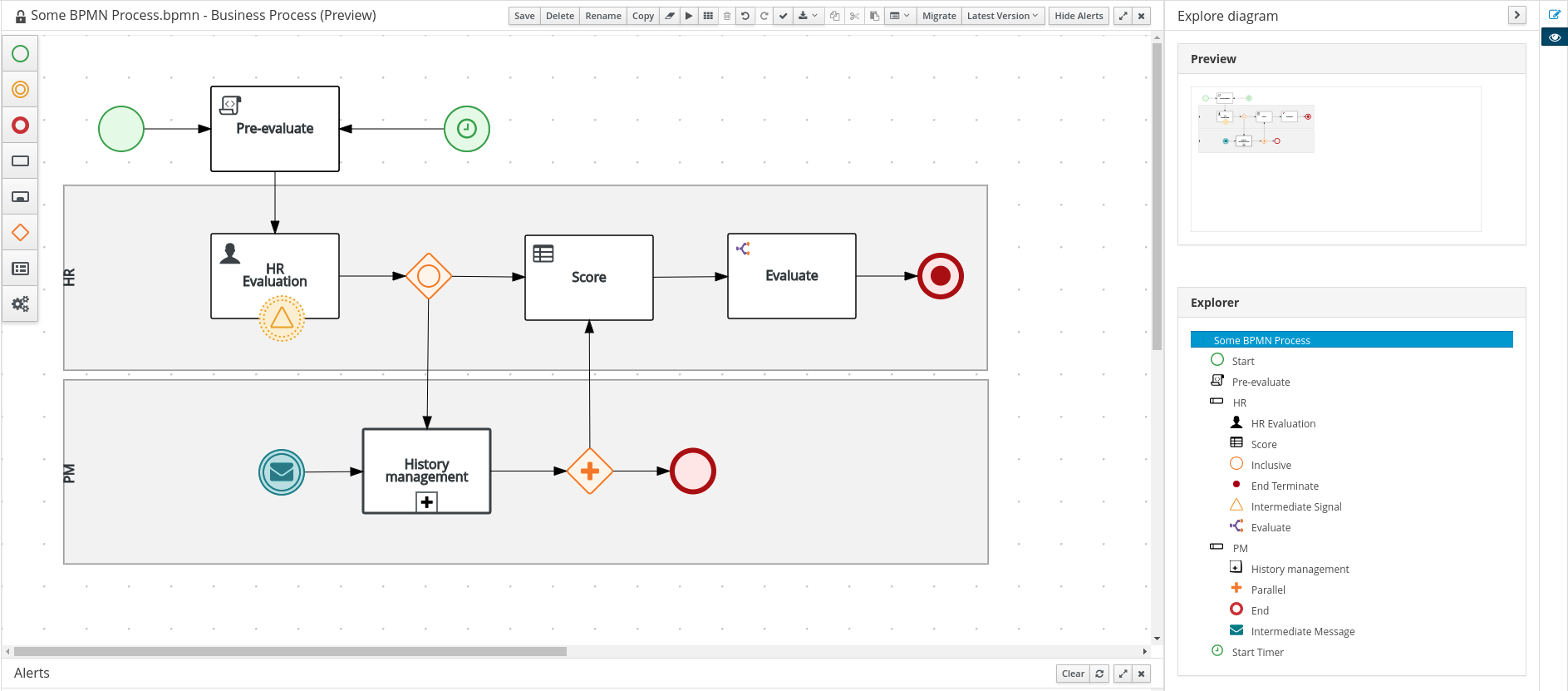

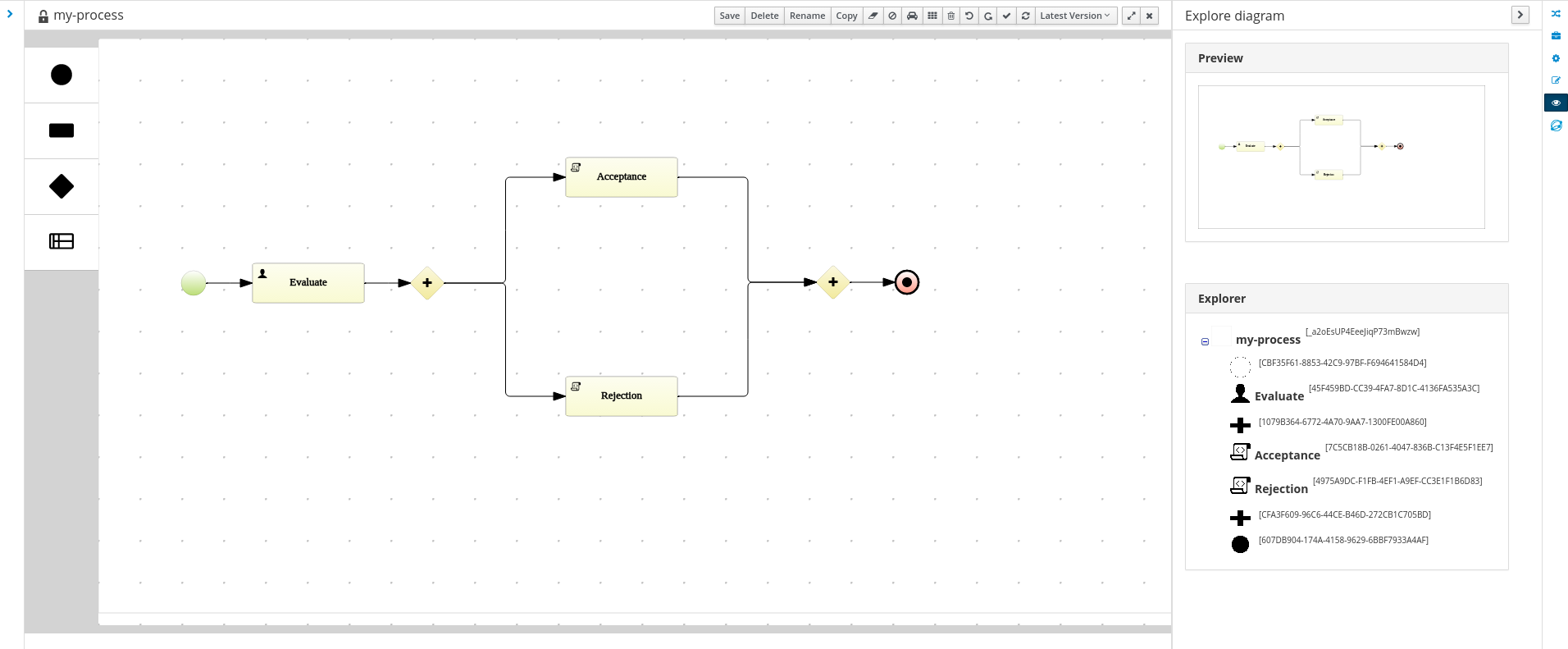

1.4.1. Process Designer

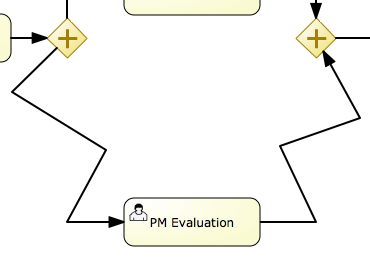

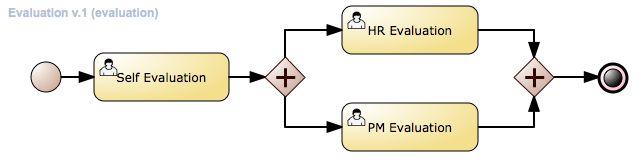

The web-based jBPM Designer allows you to model your business processes in a web-based environment. It is targeted towards business users and offers a graphical editor for viewing and editing your business processes (using drag and drop), similar to the Eclipse plugin. It supports round-tripping between the Eclipse editor and the web-based designer. It also supports simulation of processes.

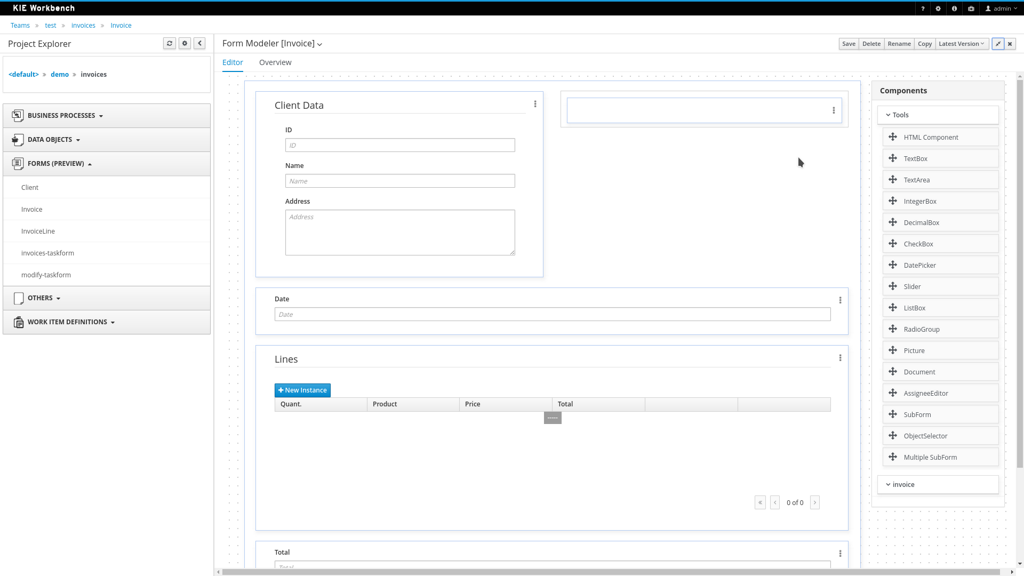

1.4.2. Data Modeler

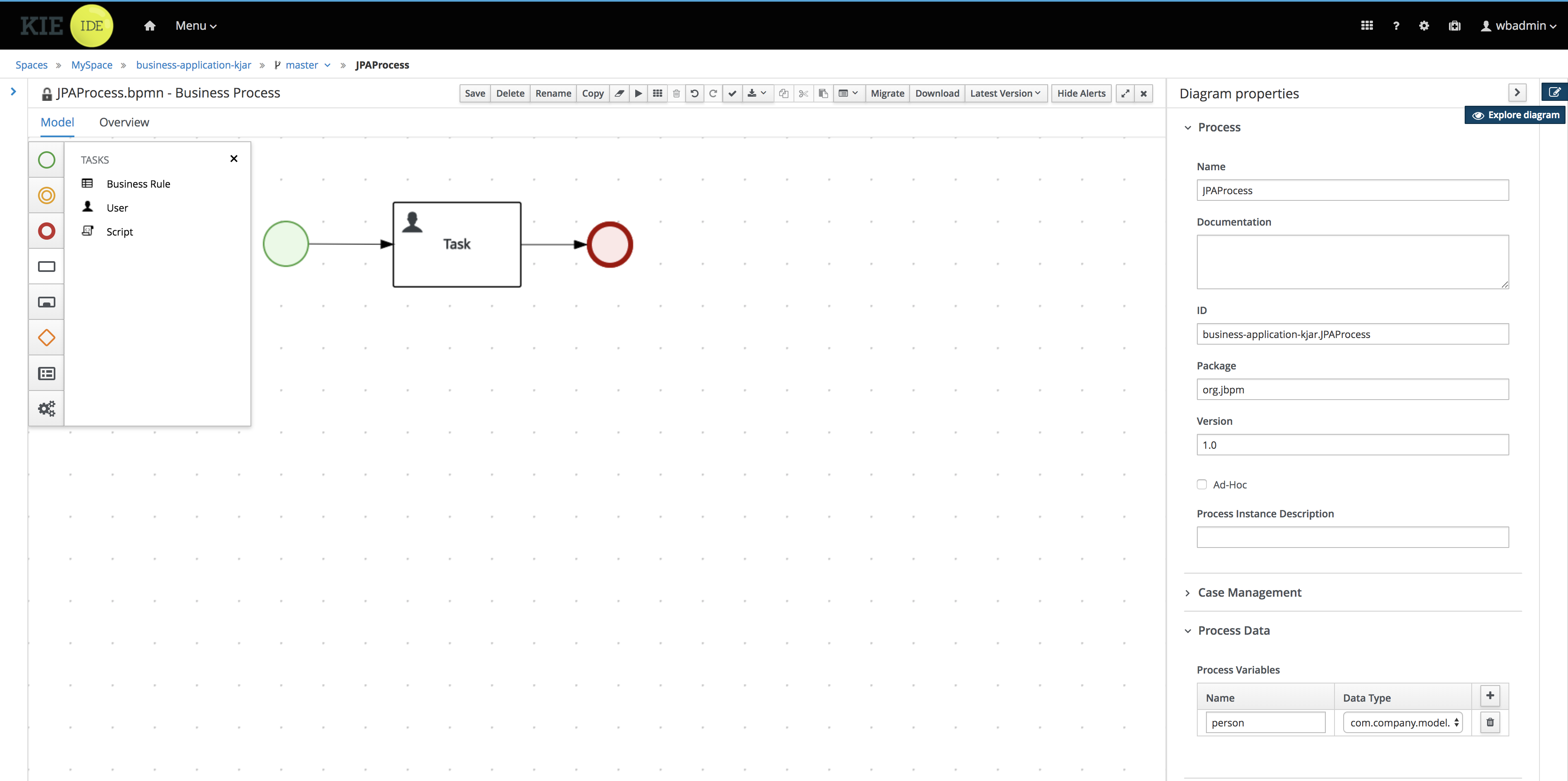

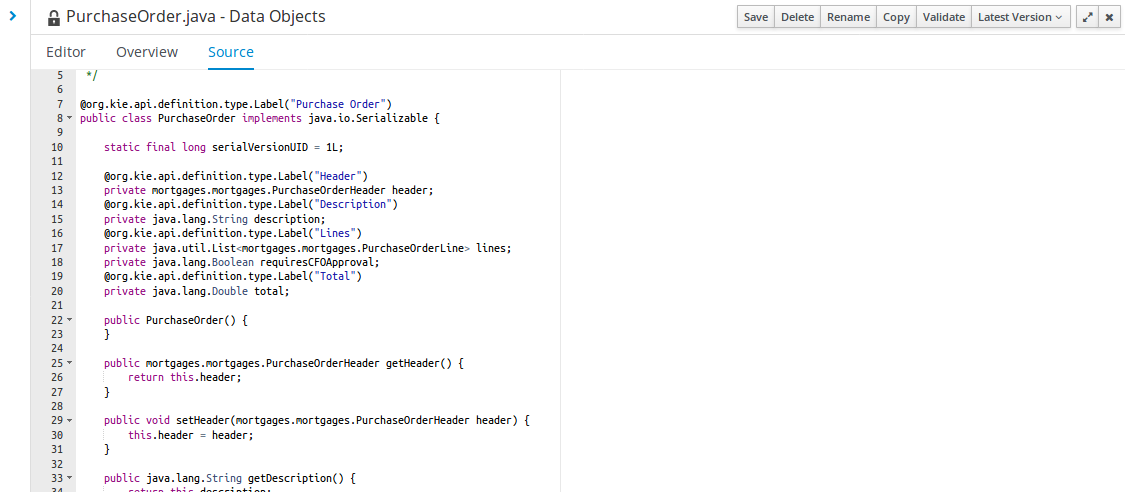

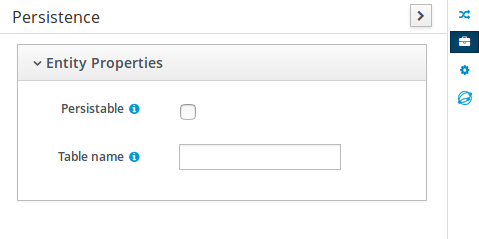

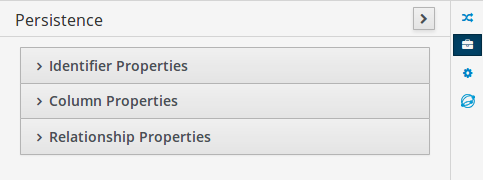

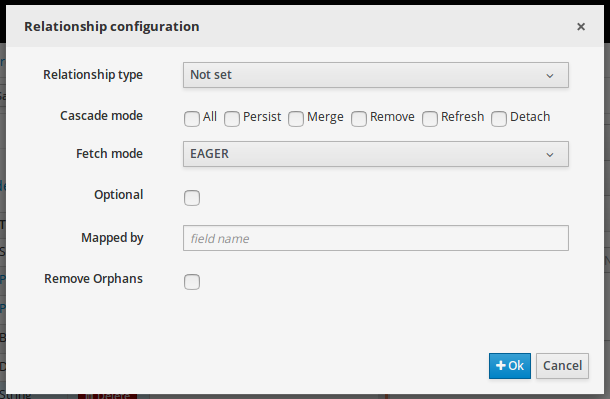

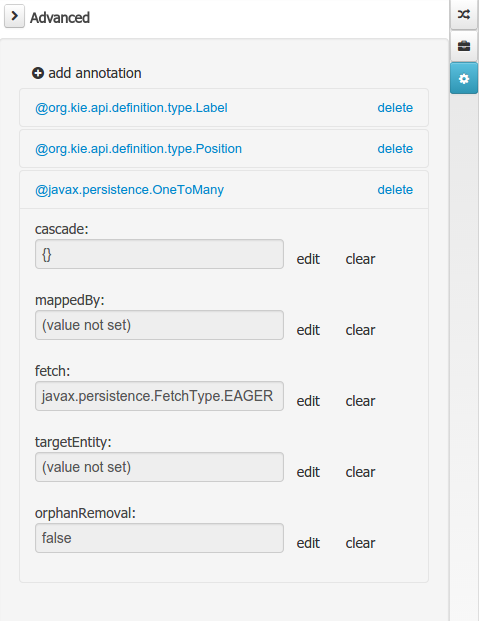

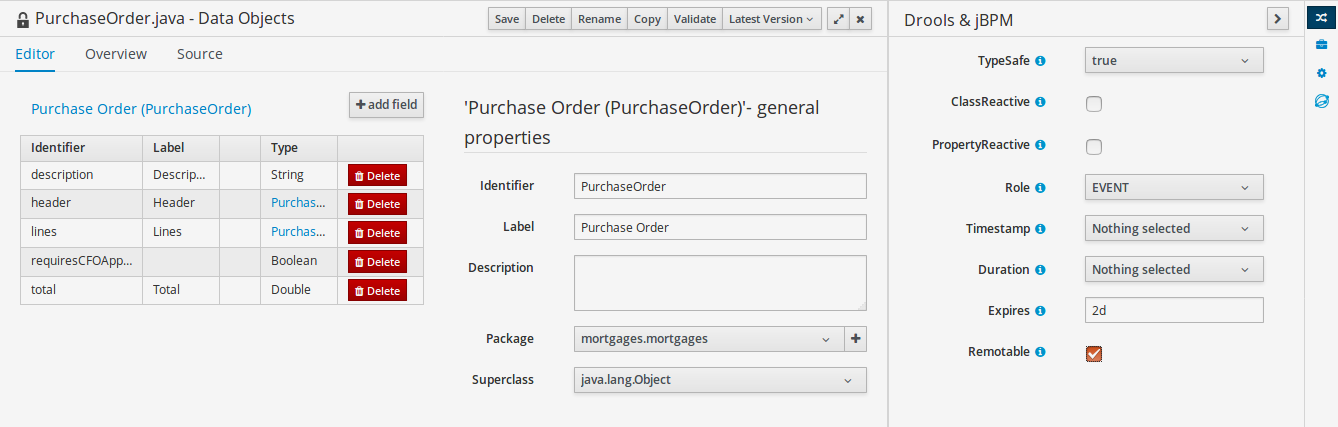

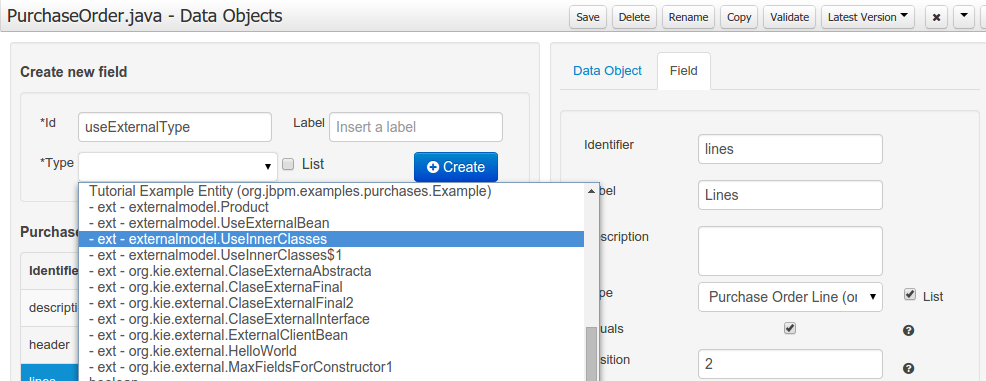

Processes almost always have some kind of data to work with. The data modeler allows non-technical users to view, edit or create these data models.

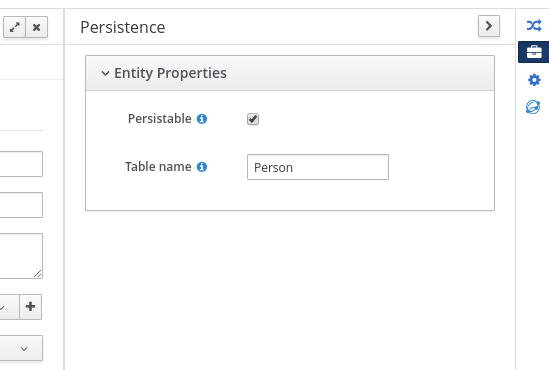

Typically, a business process analyst or data analyst will capture the requirements for a process or application and turn these into a formal set of interrelated data structures. The new Data Modeler tool provides an easy, straightforward and visual aid for building both logical and physical data models, without the need for advanced development skills or explicit coding. The data modeler is transparently integrated into Business Central. Its main goals are to make data models first class citizens in the process improvement cycle and allow for full process automation through the integrated use of data structures (and the forms that will be used to interact with them).

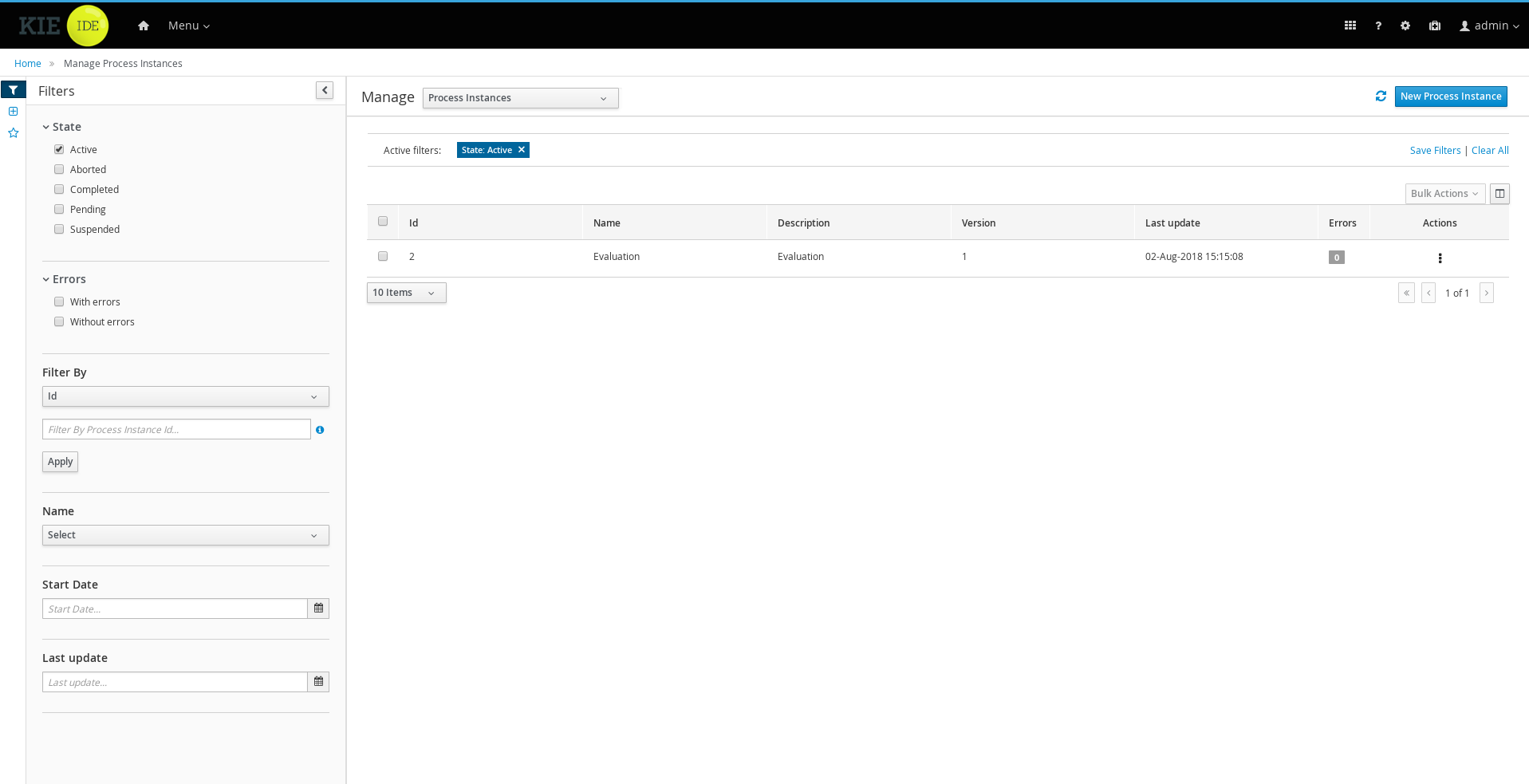

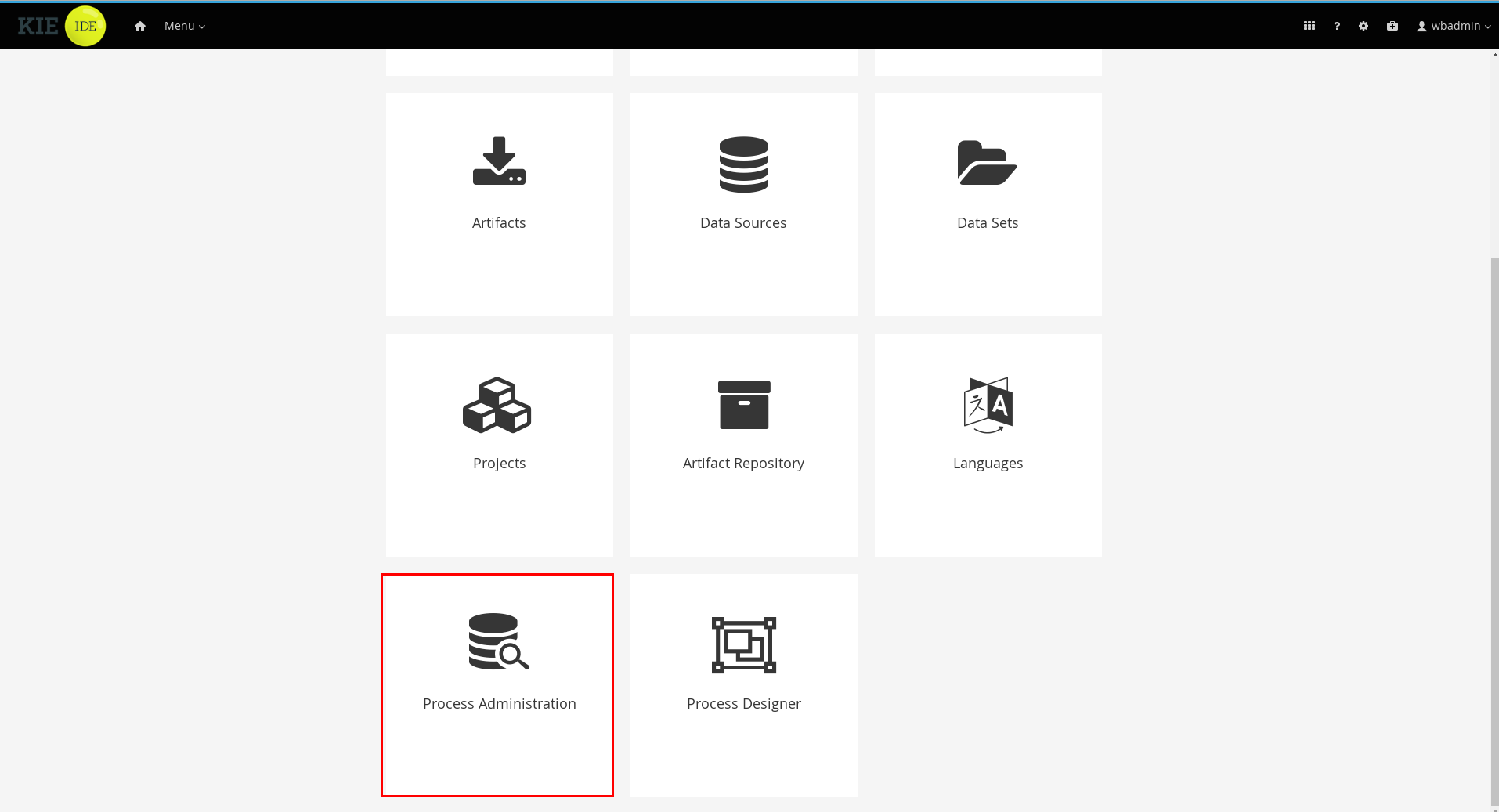

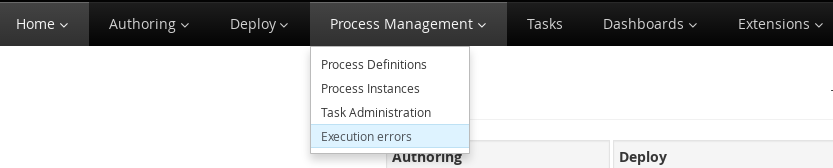

1.4.3. Process Management

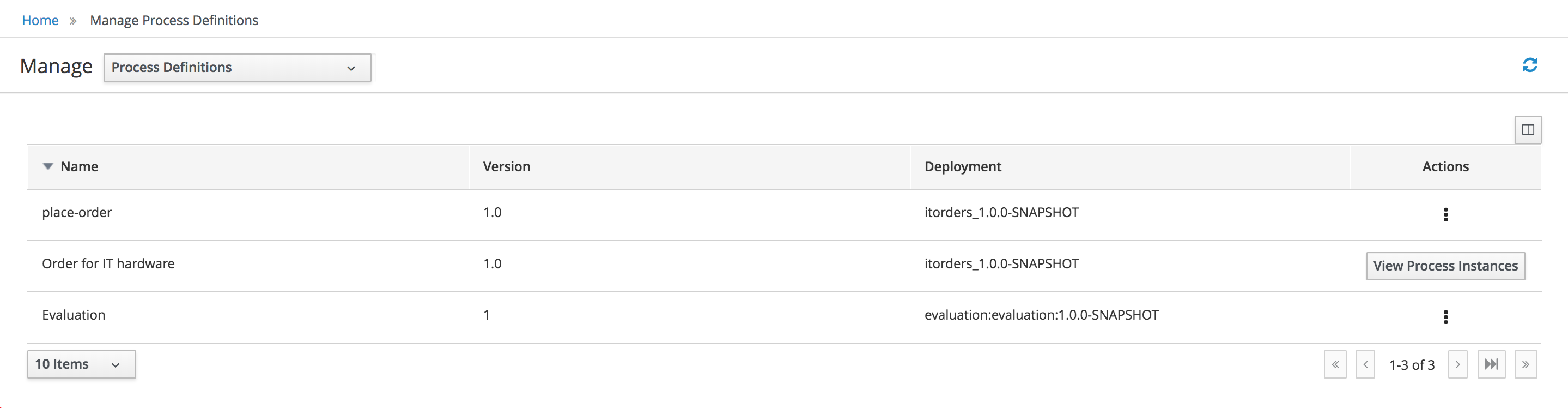

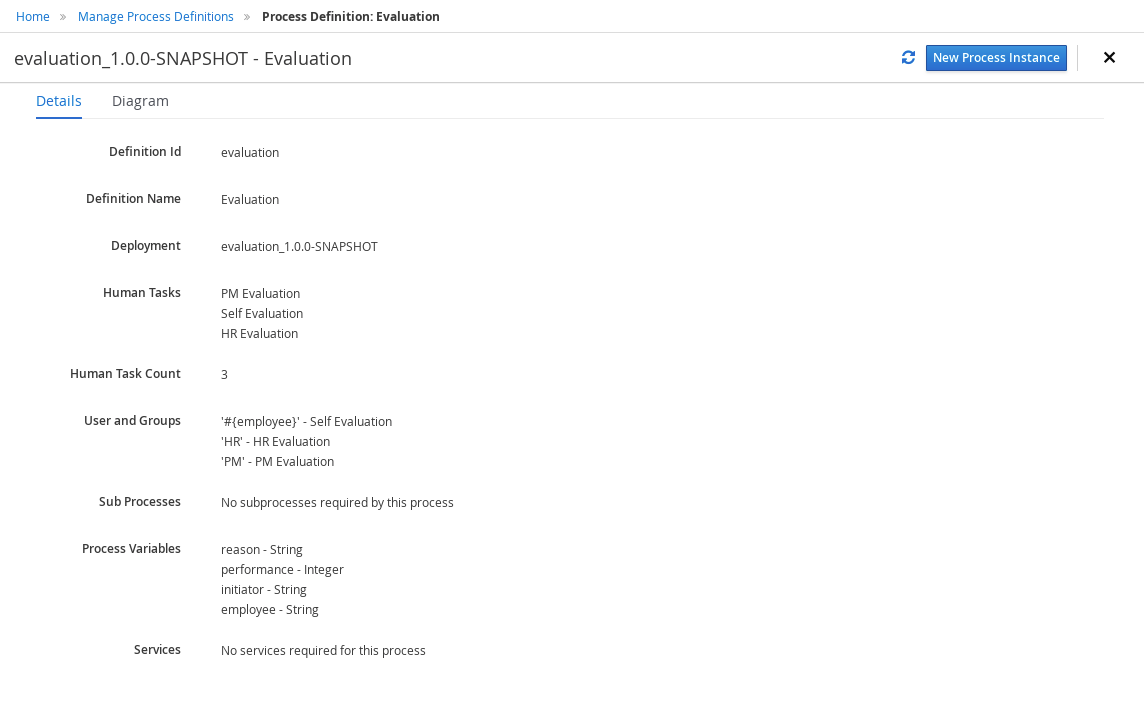

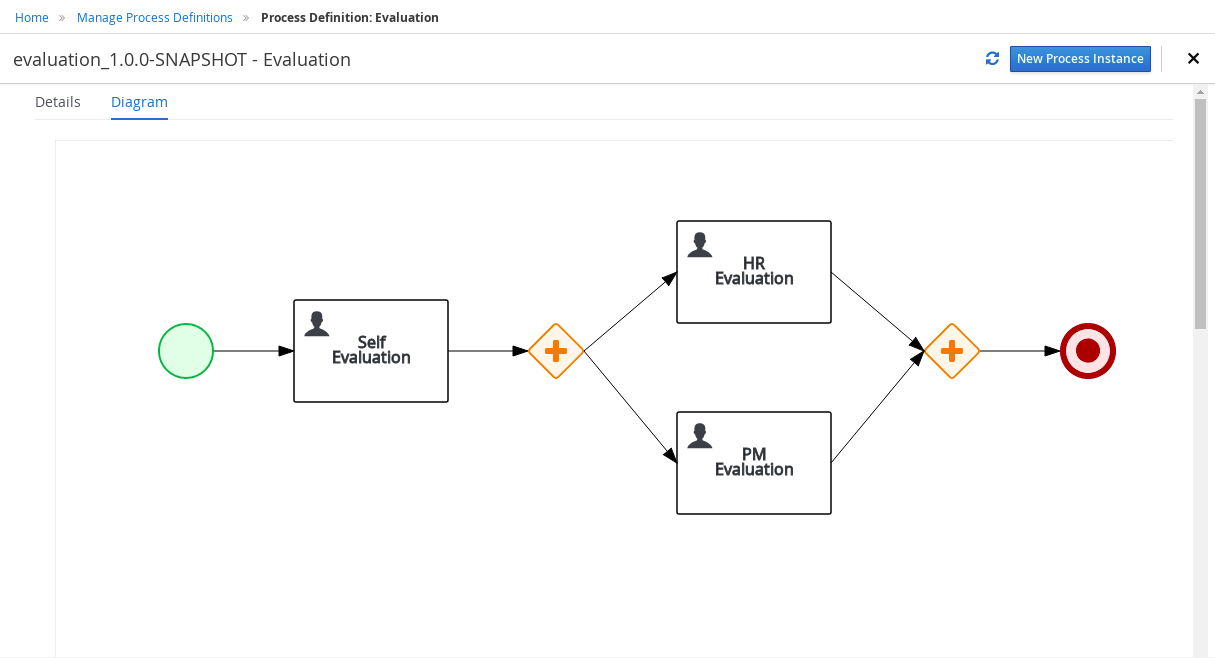

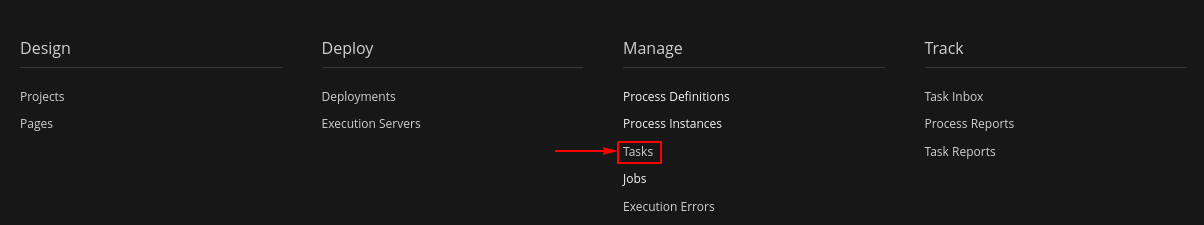

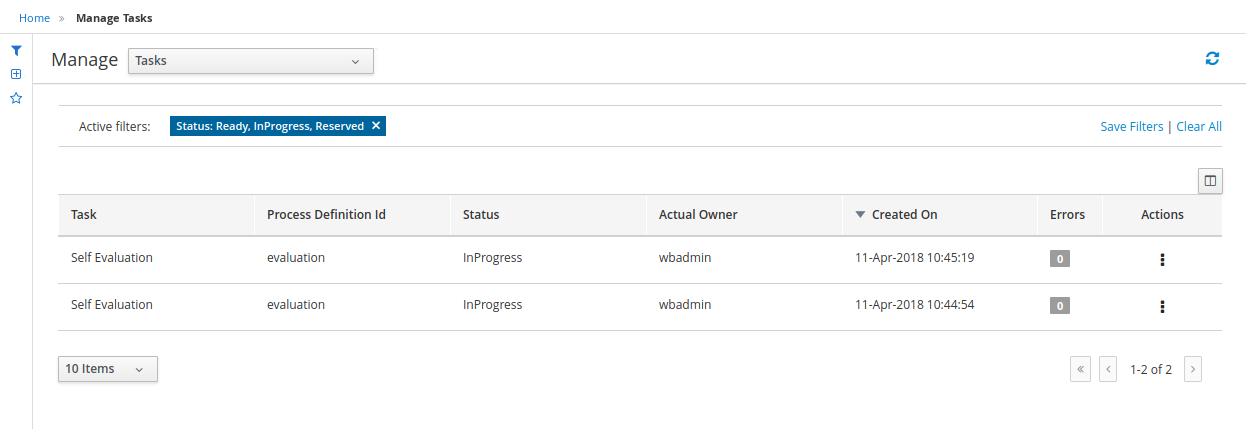

Business processes and all its related runtime information can be managed through Business Central. It is targeted towards process administrators users and its main features include:

-

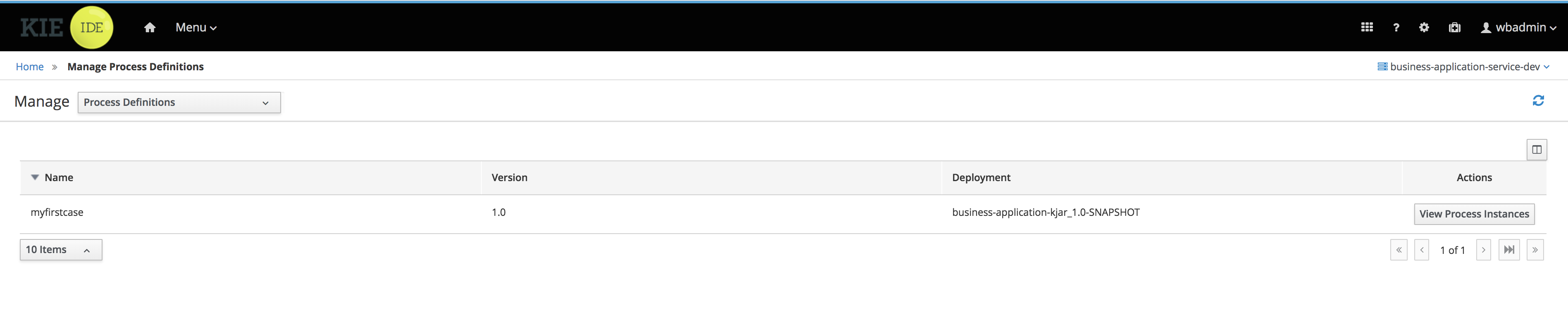

Process definitions management: view the entire list of process currently deployed into a Kie Server and its details.

-

Process instances management: the ability to start new process instances, get a filtered list of process instances, visually inspect the state of a specific process instances.

-

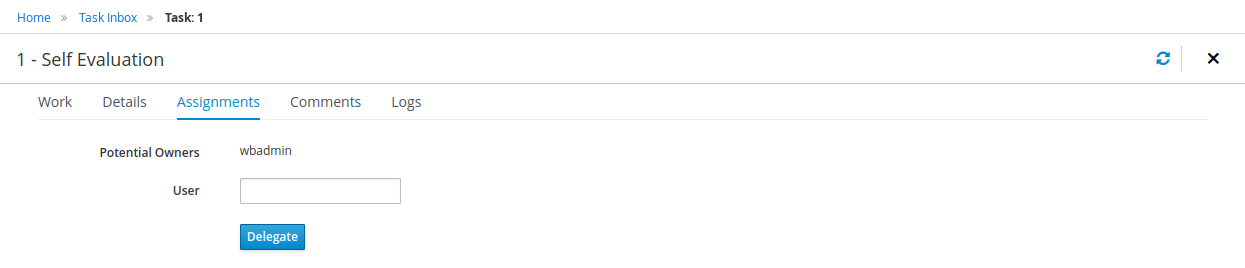

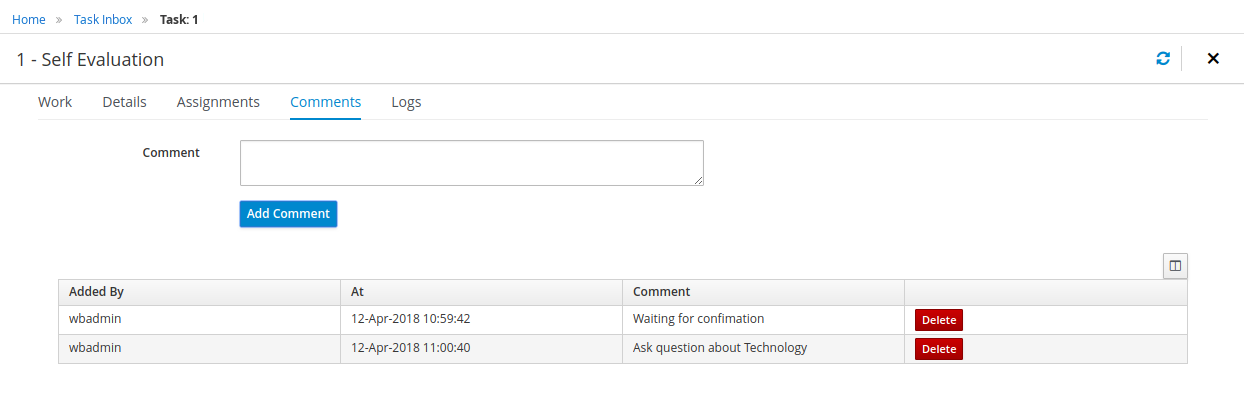

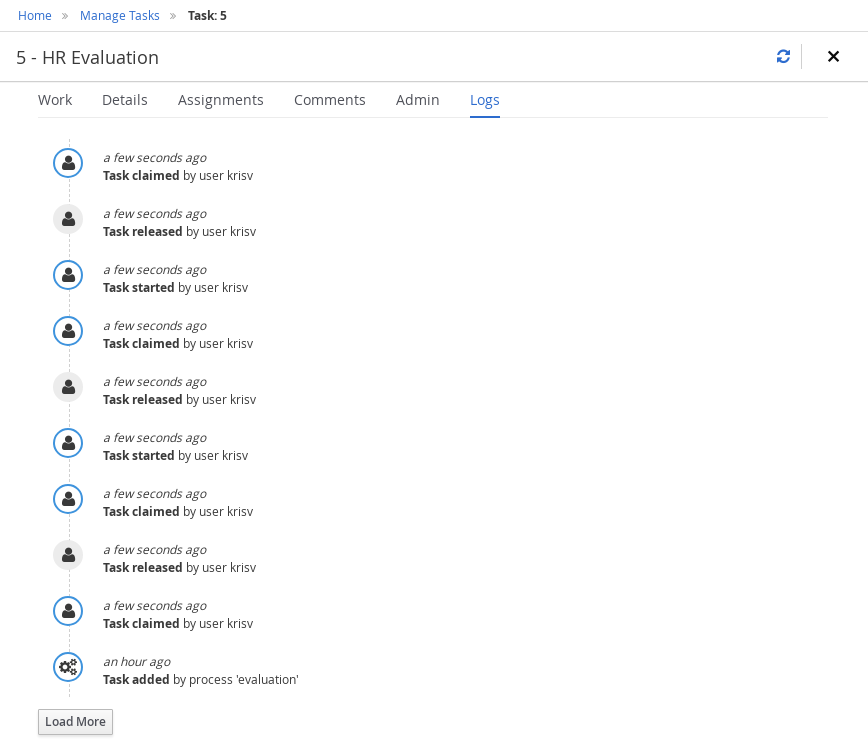

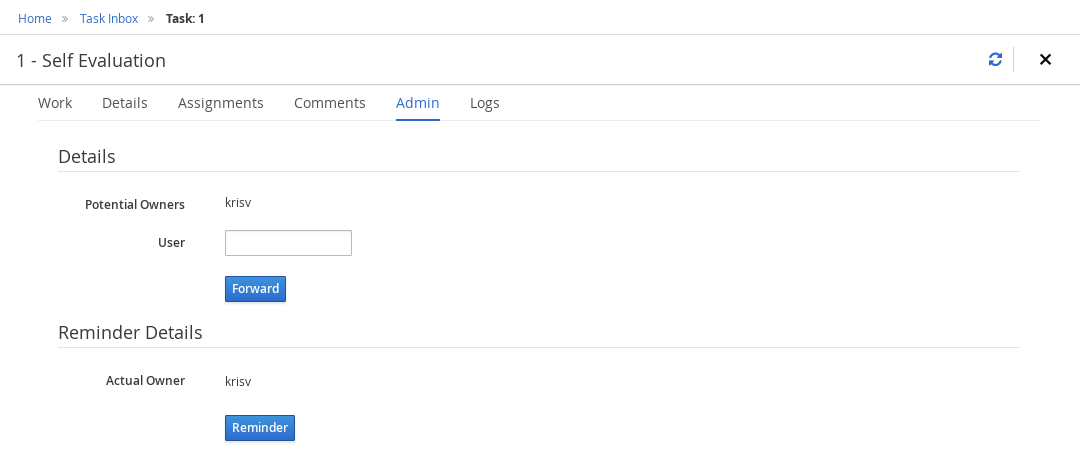

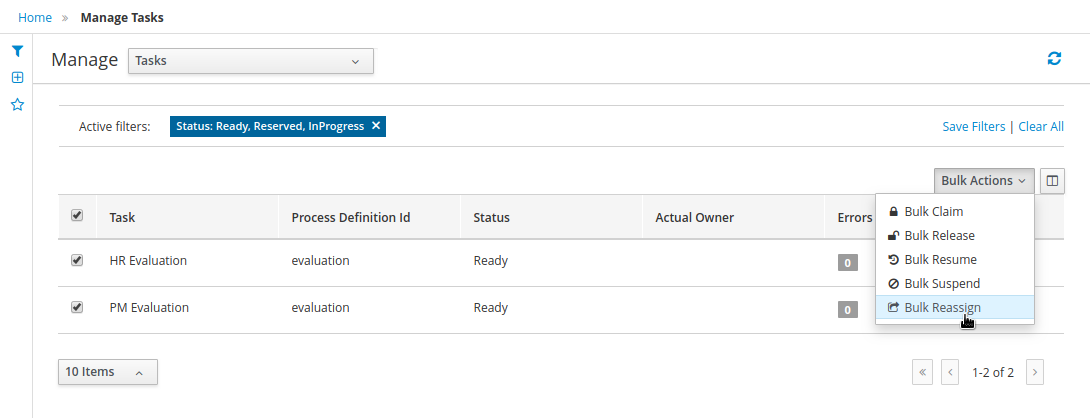

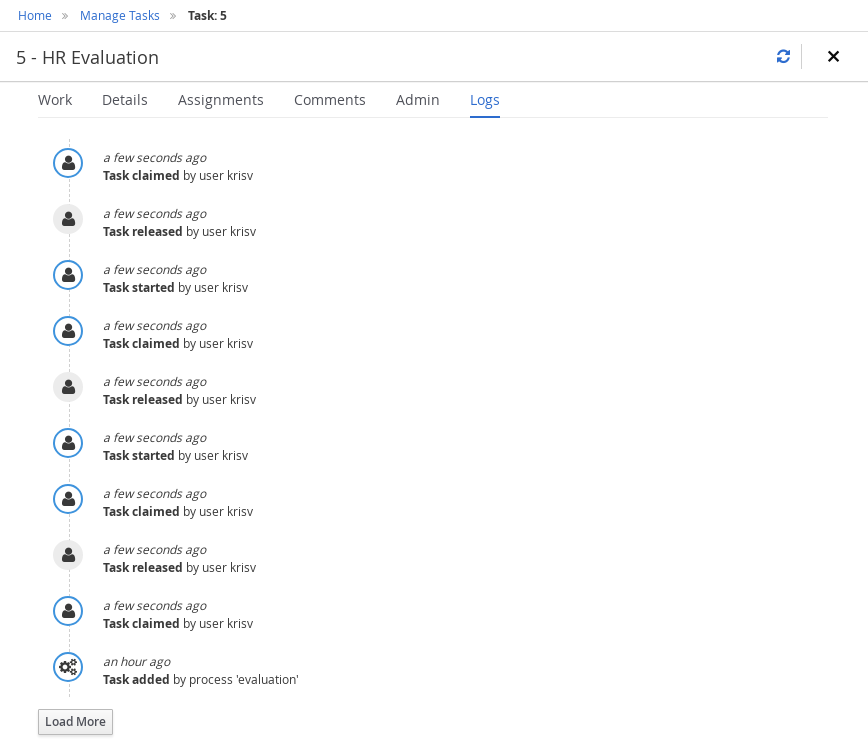

Human tasks management: being able to get a list of all tasks, view details such as current assignees, comments, activity logs as well as send reminders and forward tasks to different users and more.

-

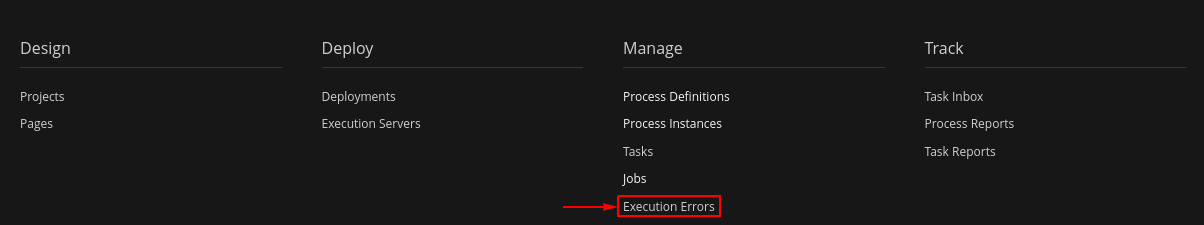

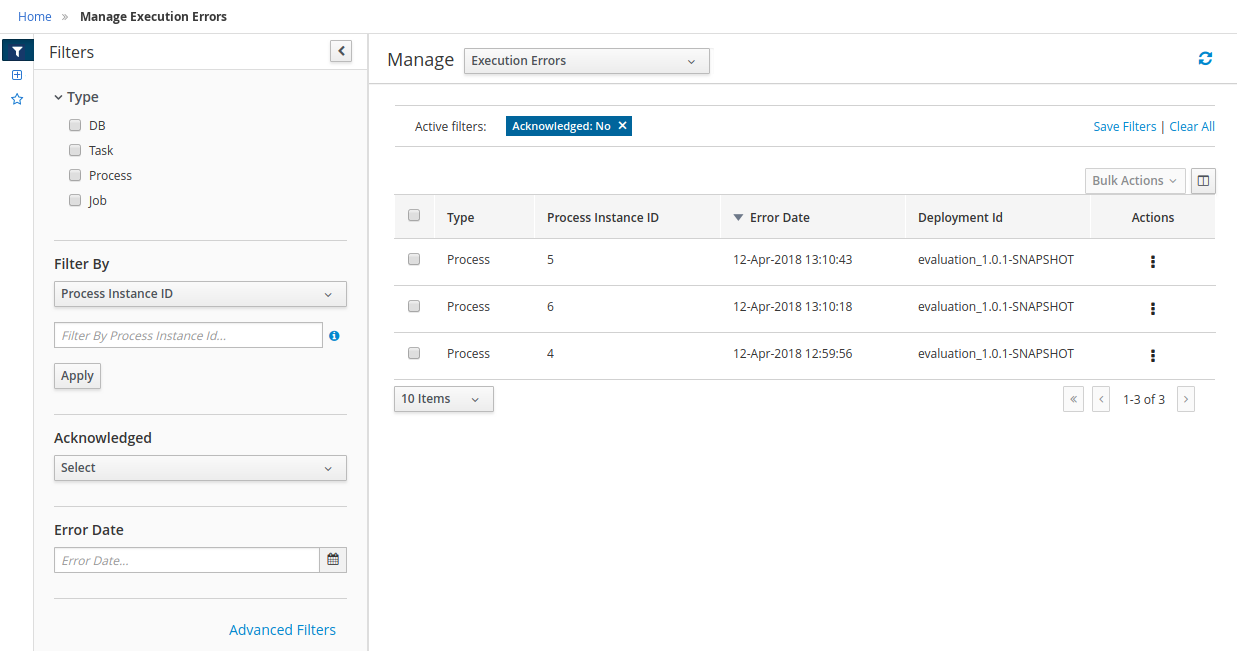

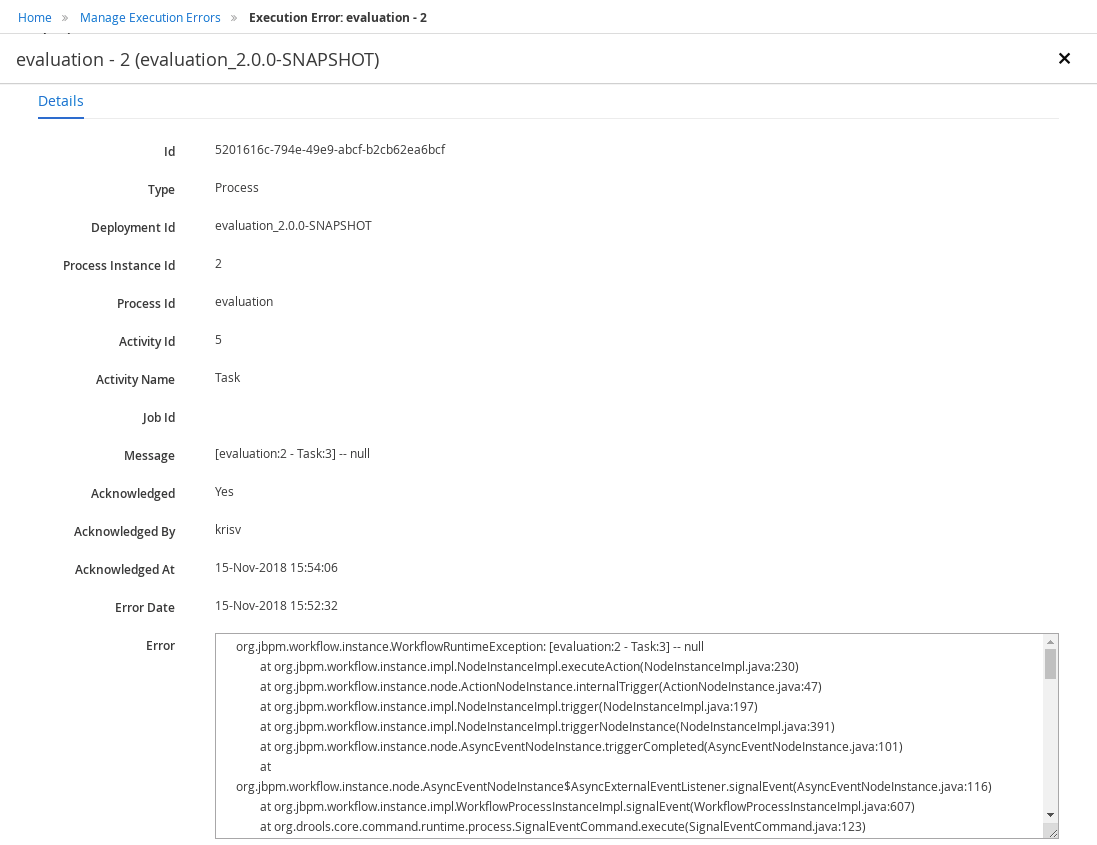

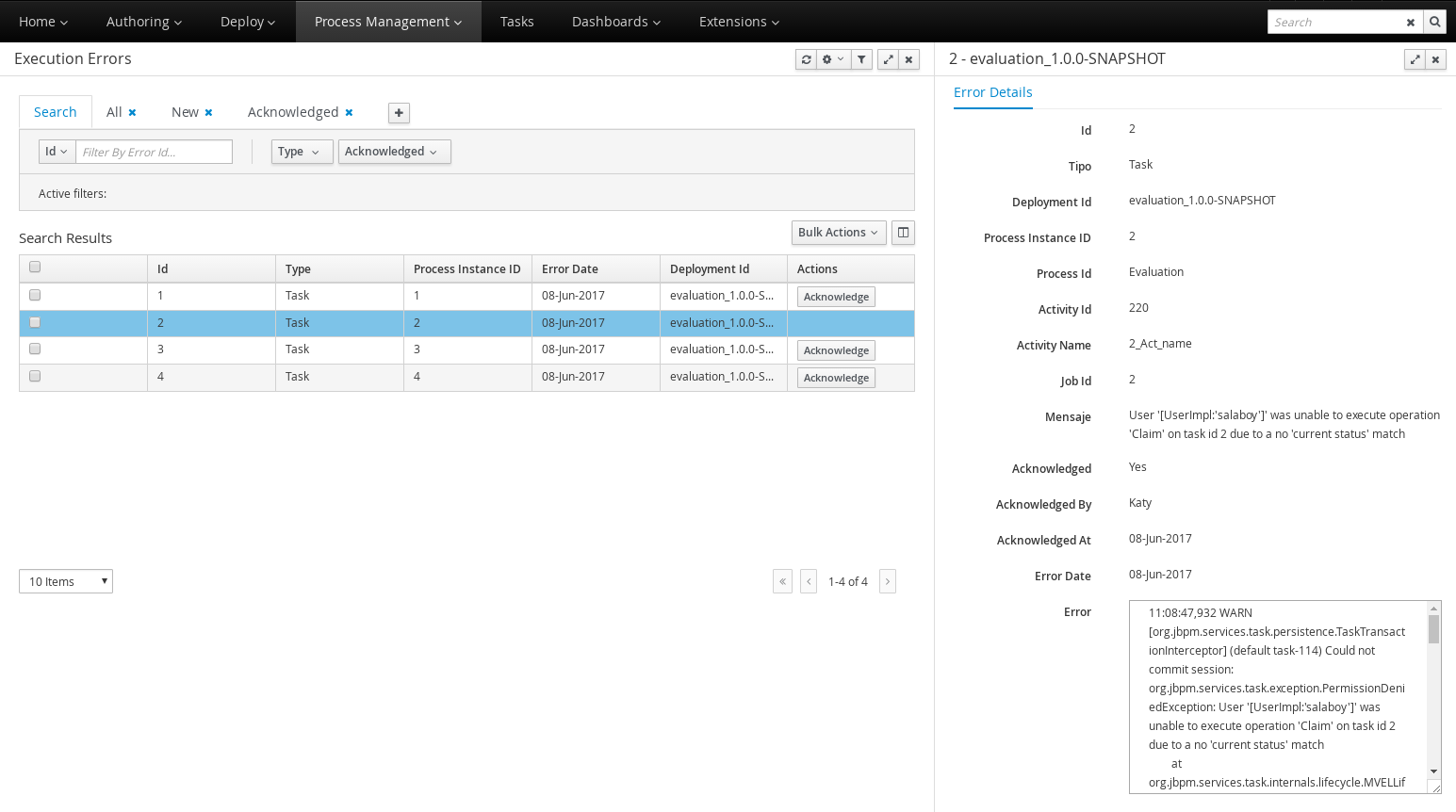

Execution Errors management: allows administrators to view any execution error reported in the Kie Server instance, inspect its details including stacktrace and perform the error acknowledgement.

-

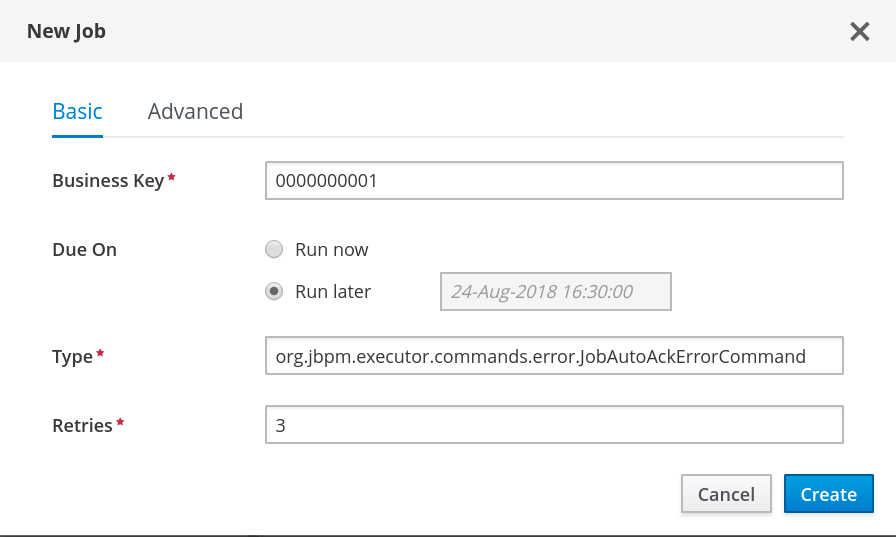

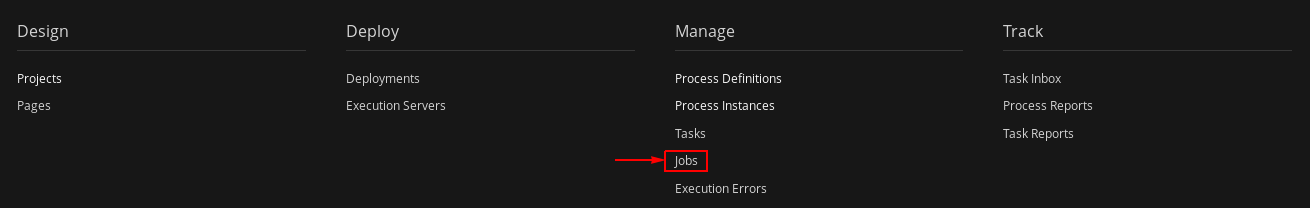

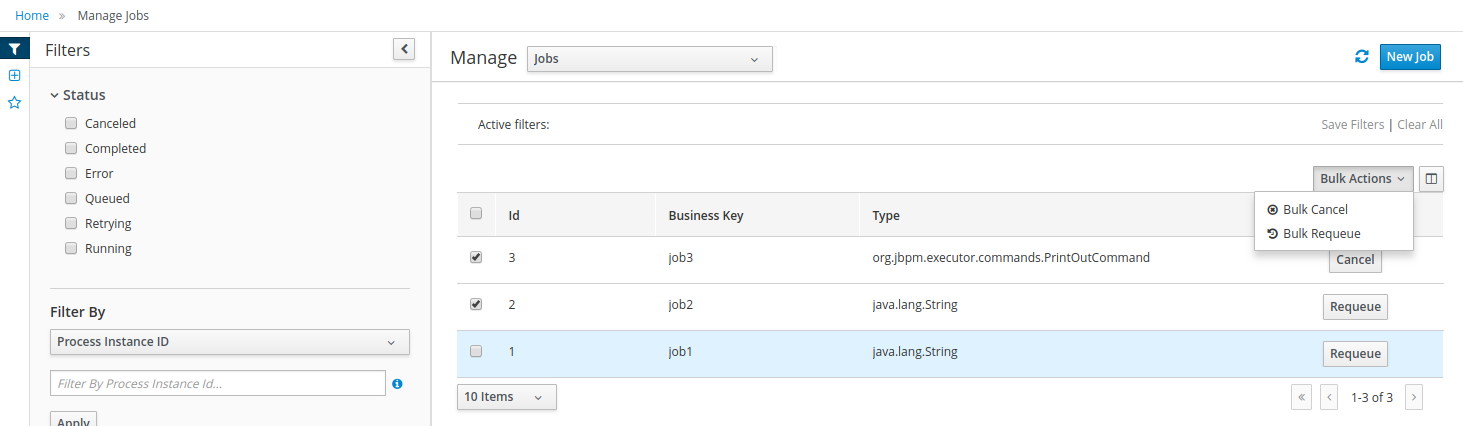

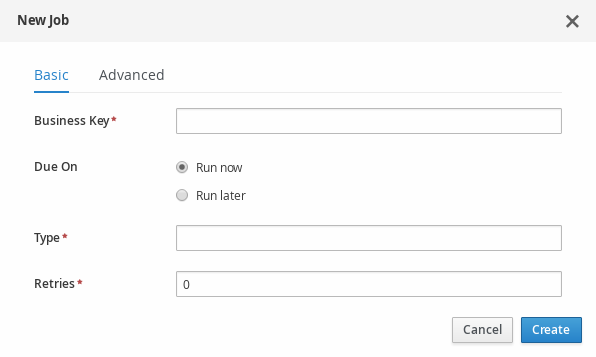

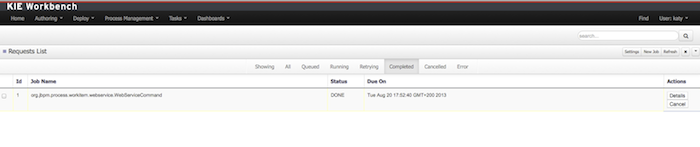

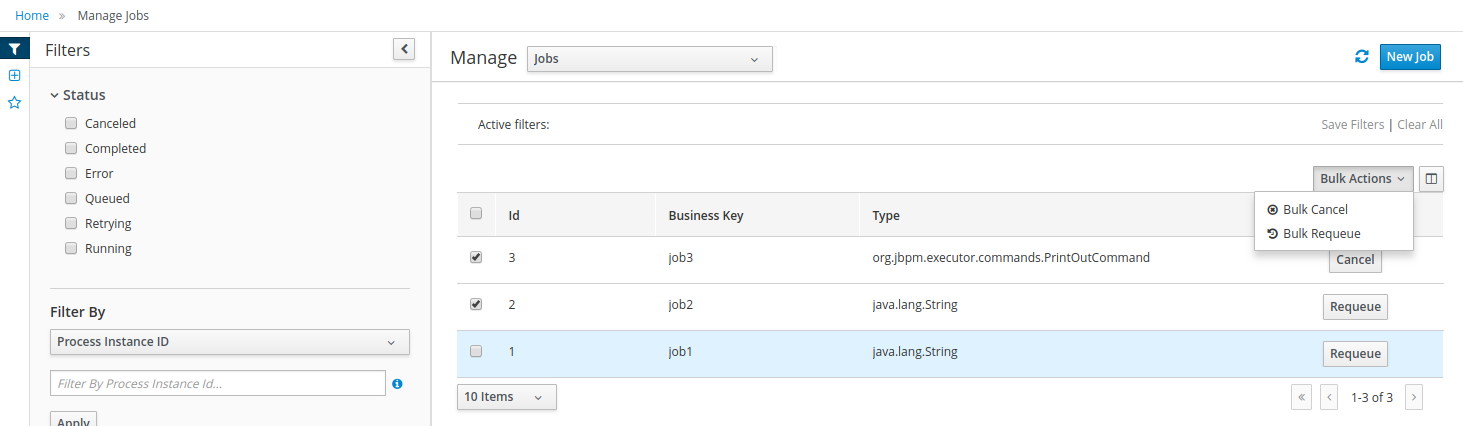

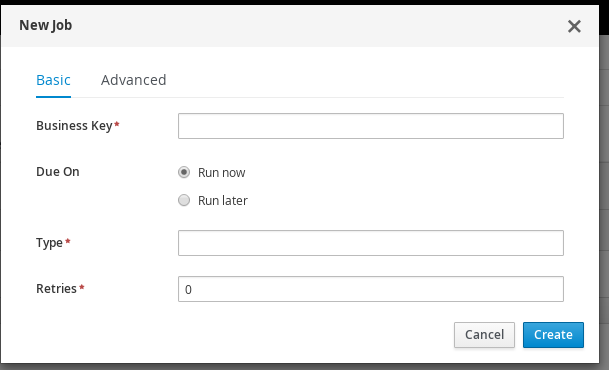

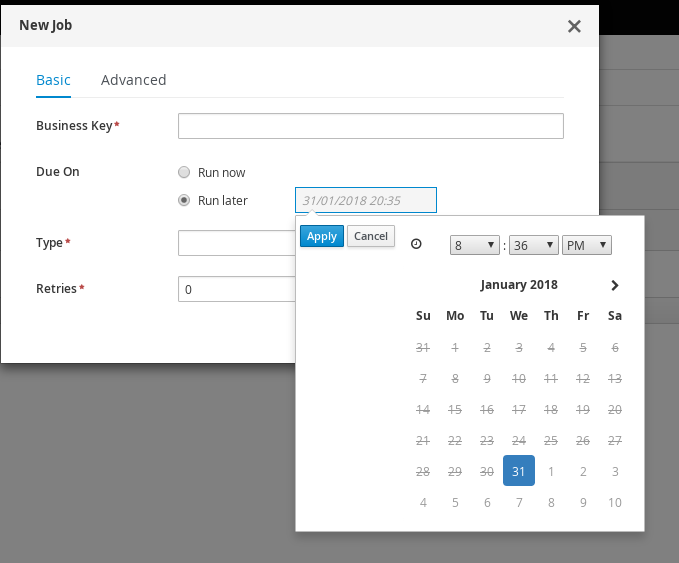

Jobs management: possibility to view currently scheduled and schedule new Jobs to run in the Kie Server instance.

For more details around the entire management section please read the process management chapter.

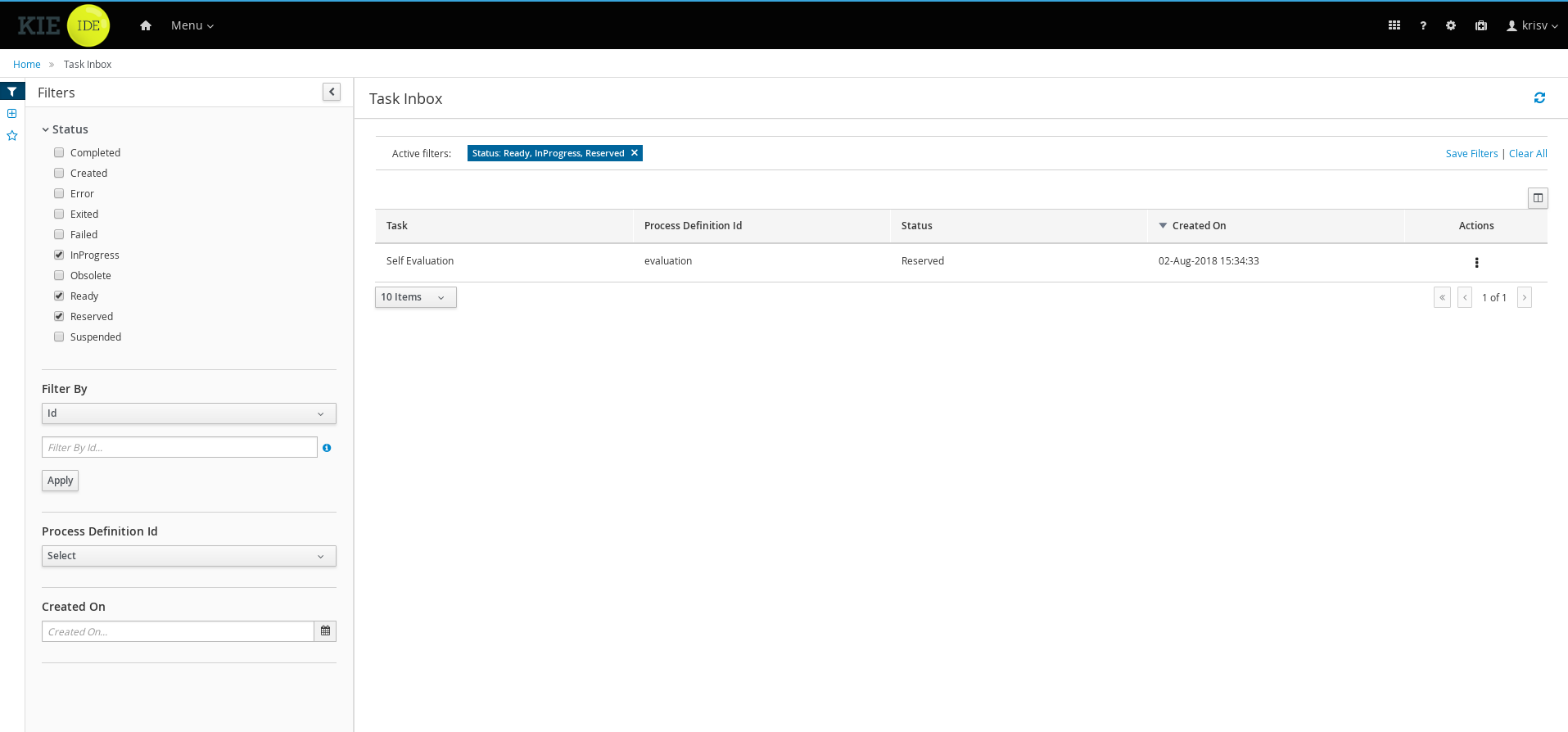

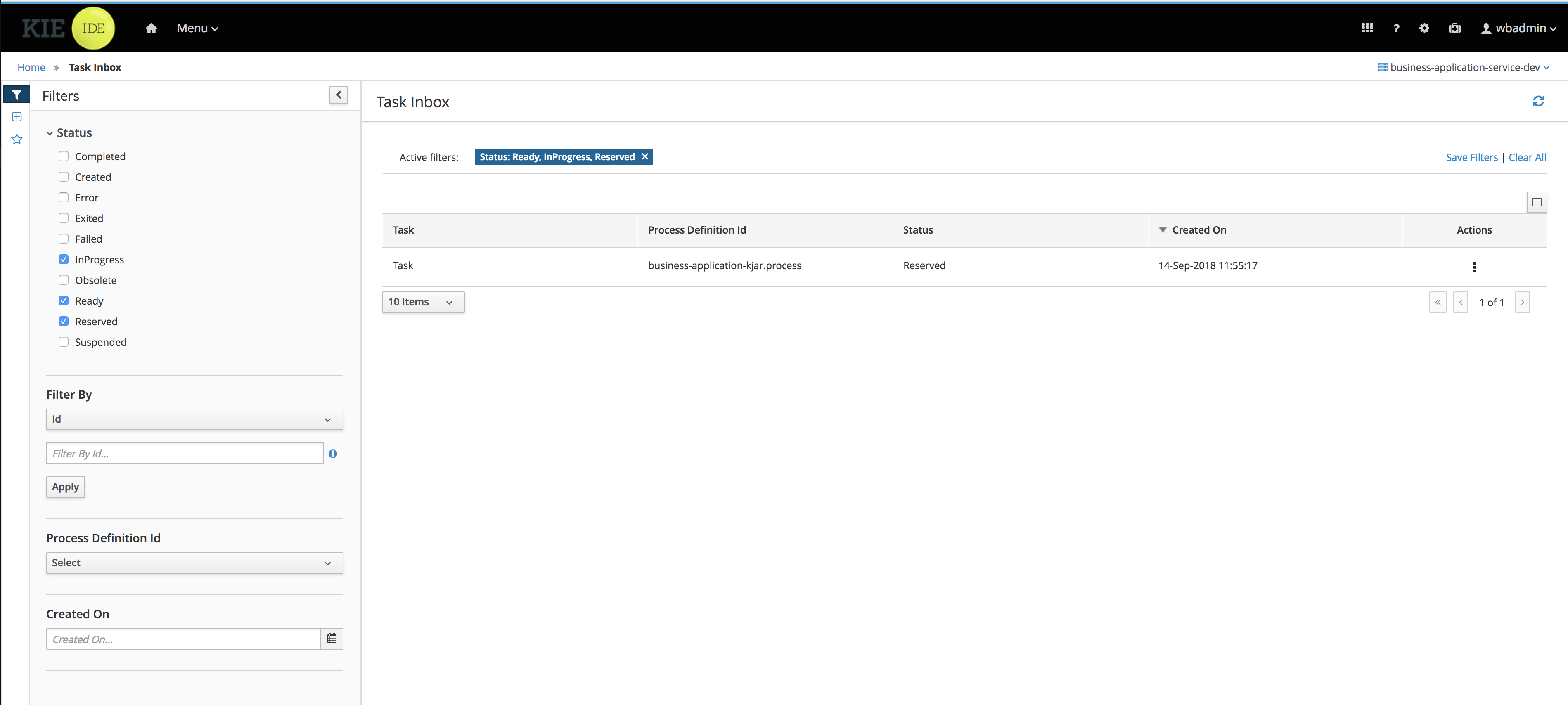

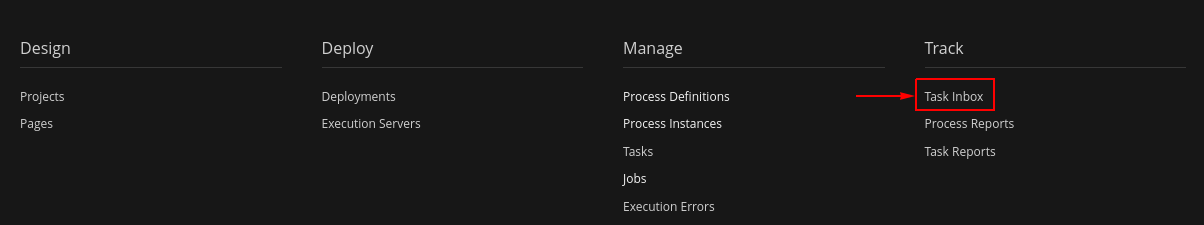

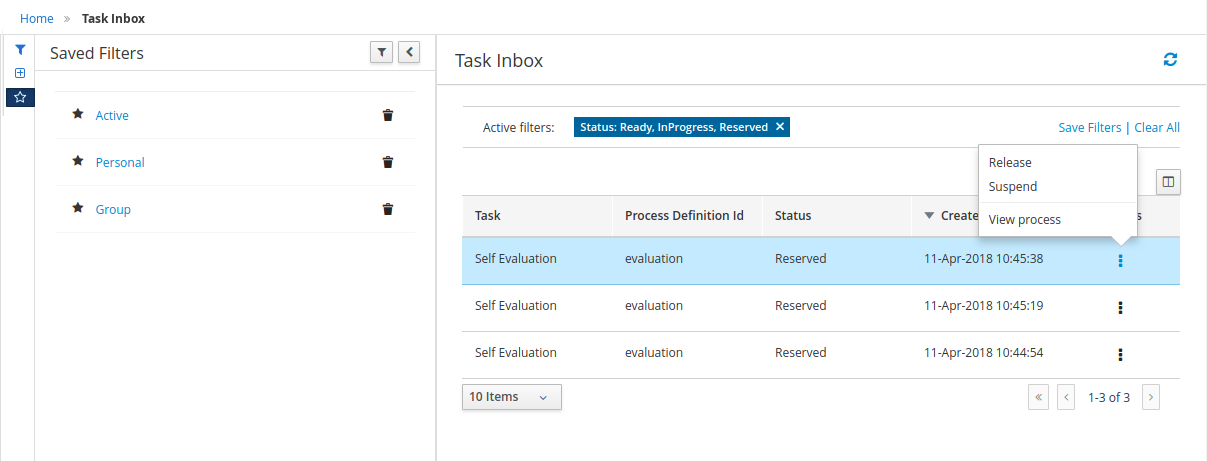

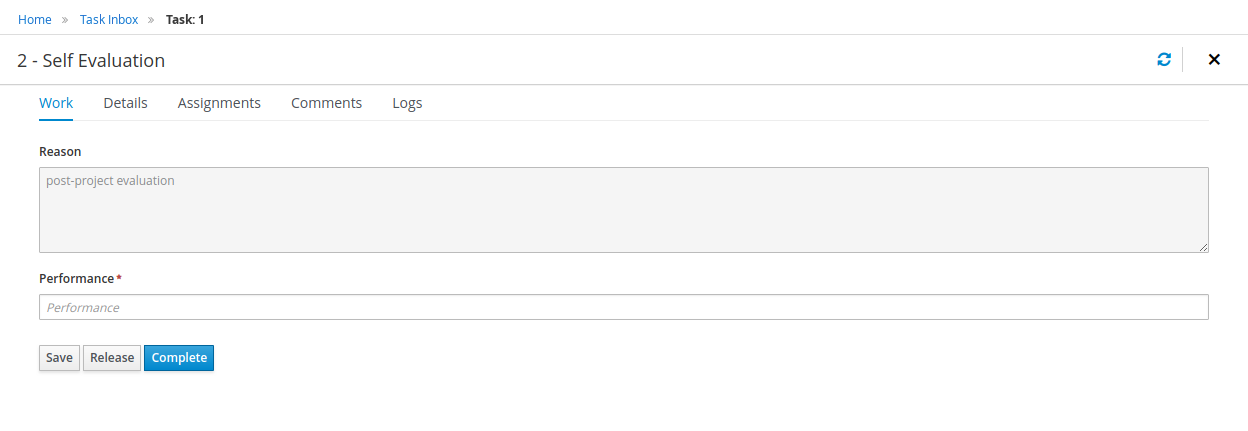

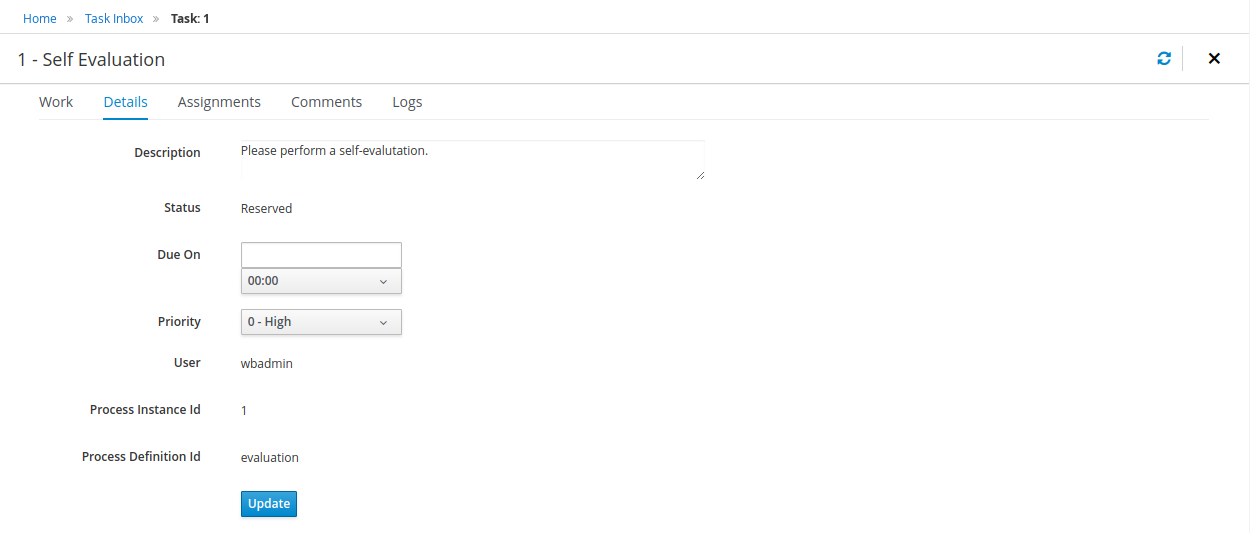

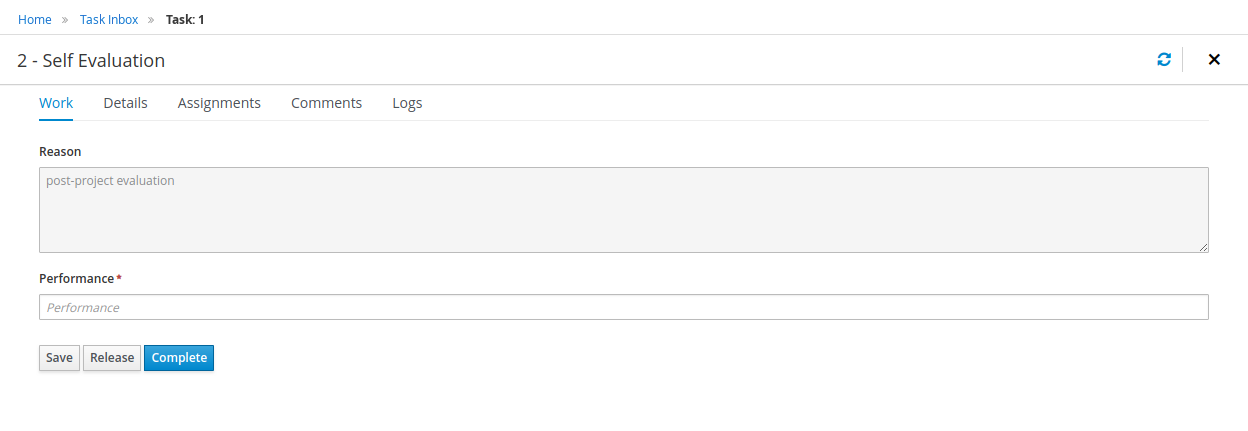

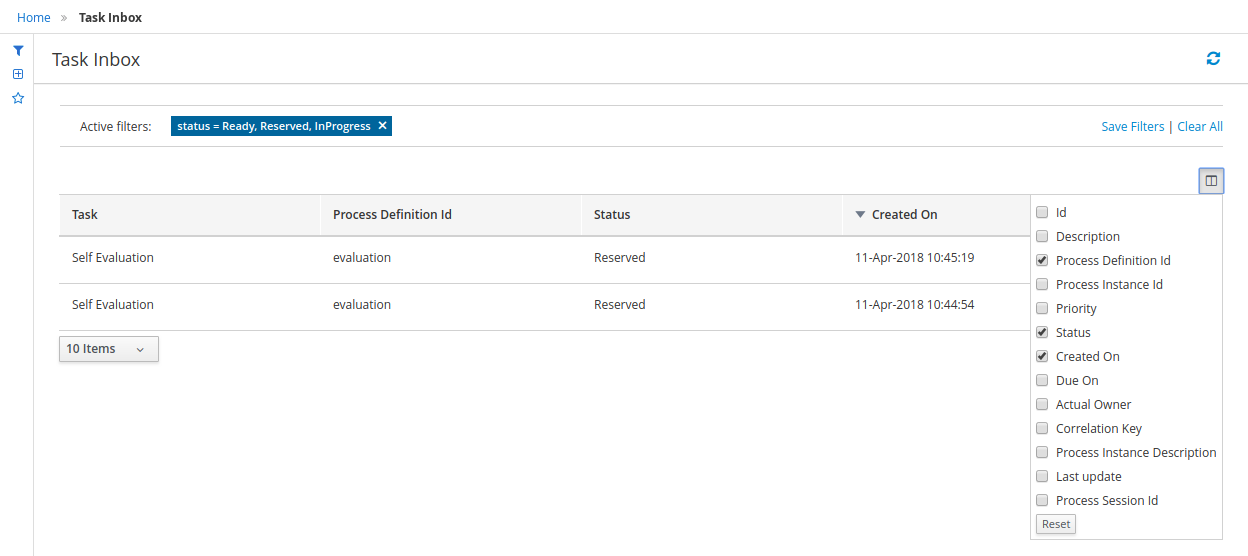

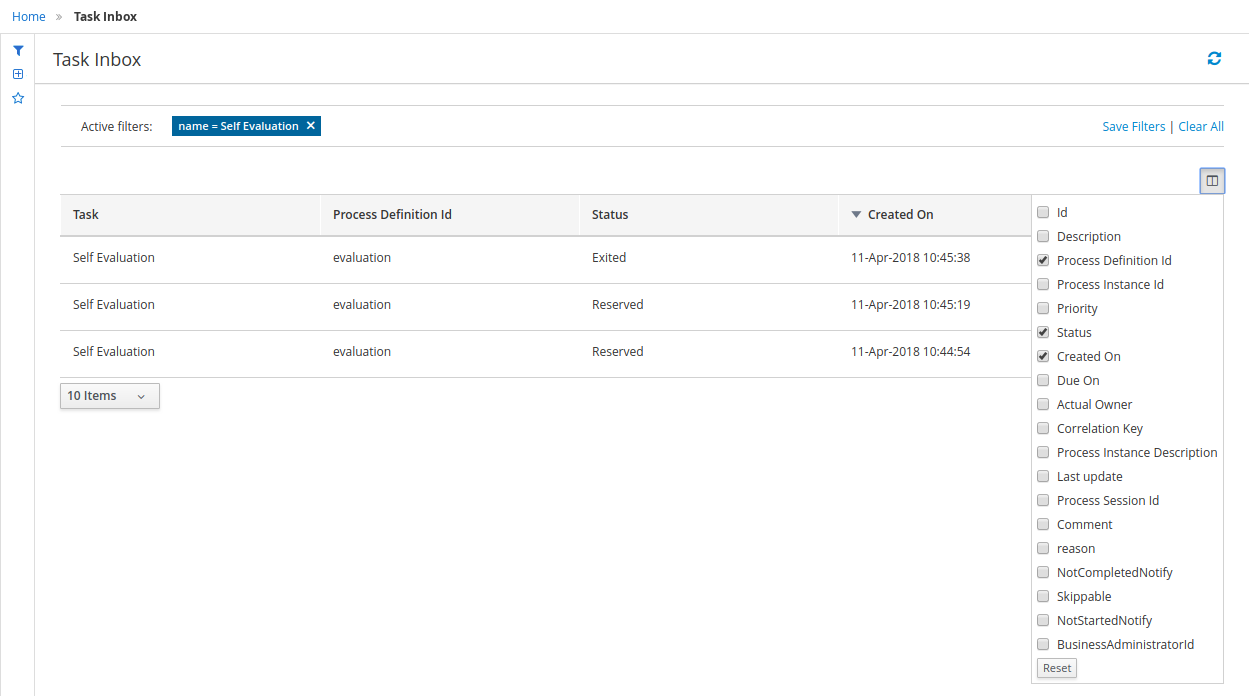

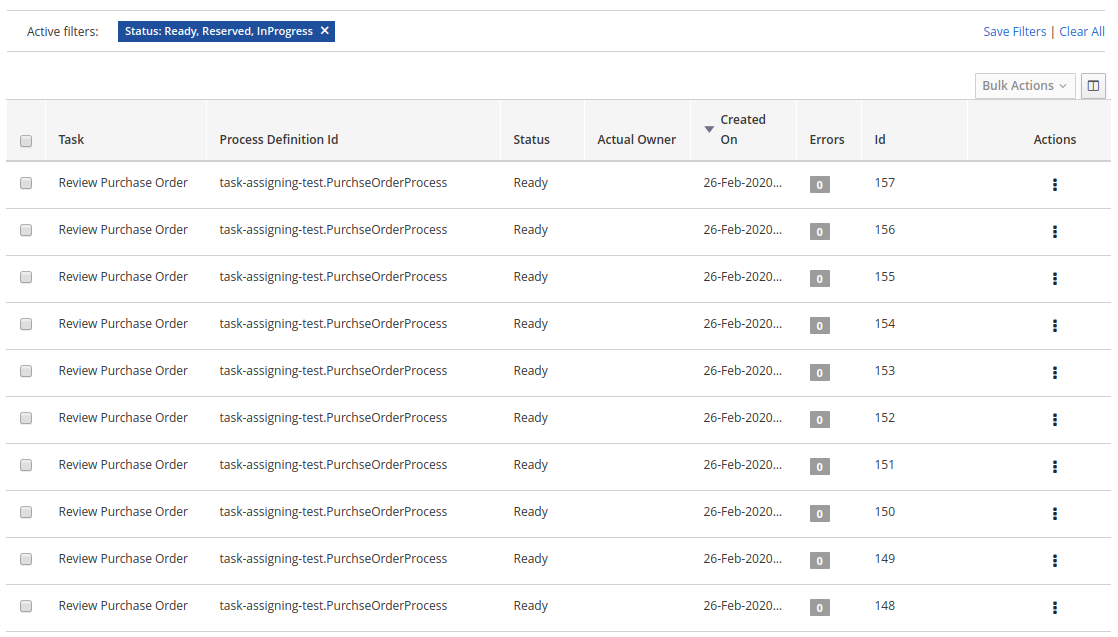

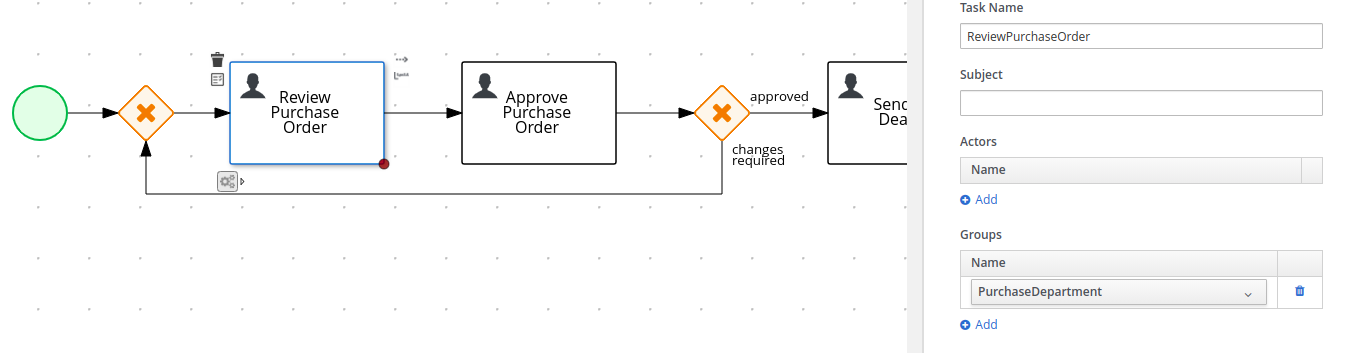

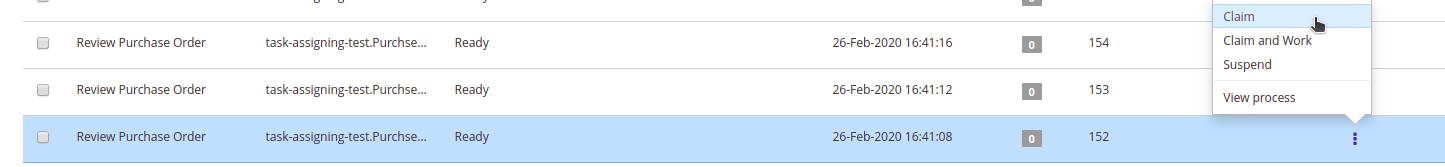

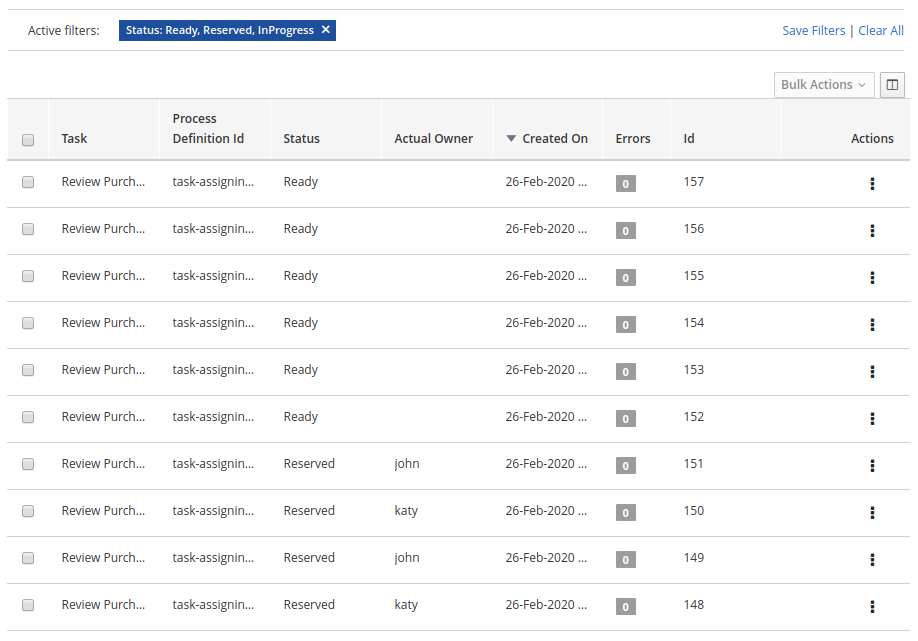

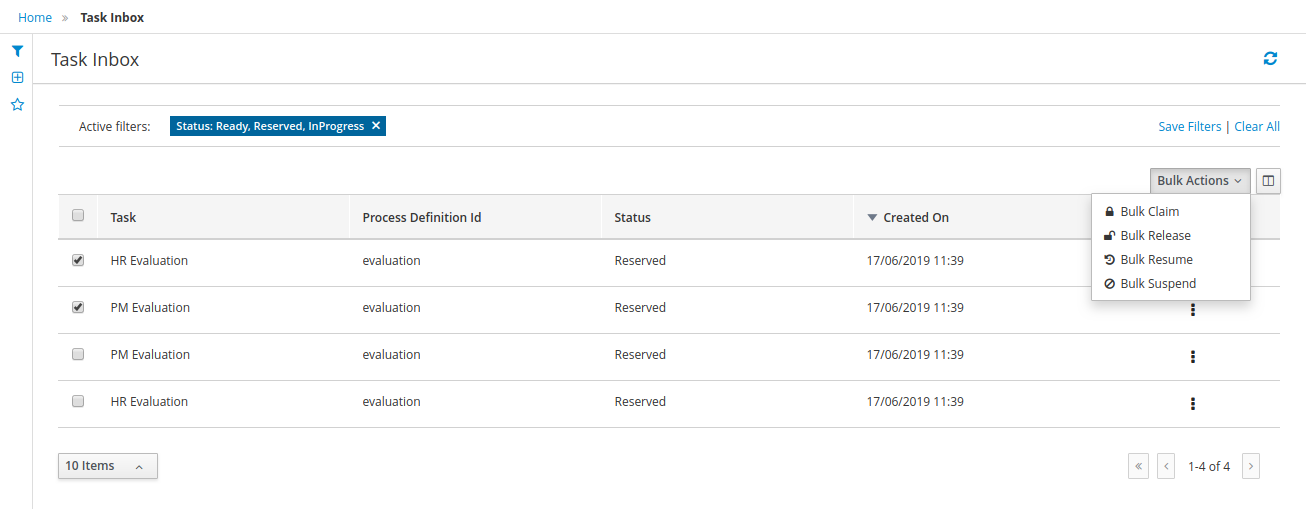

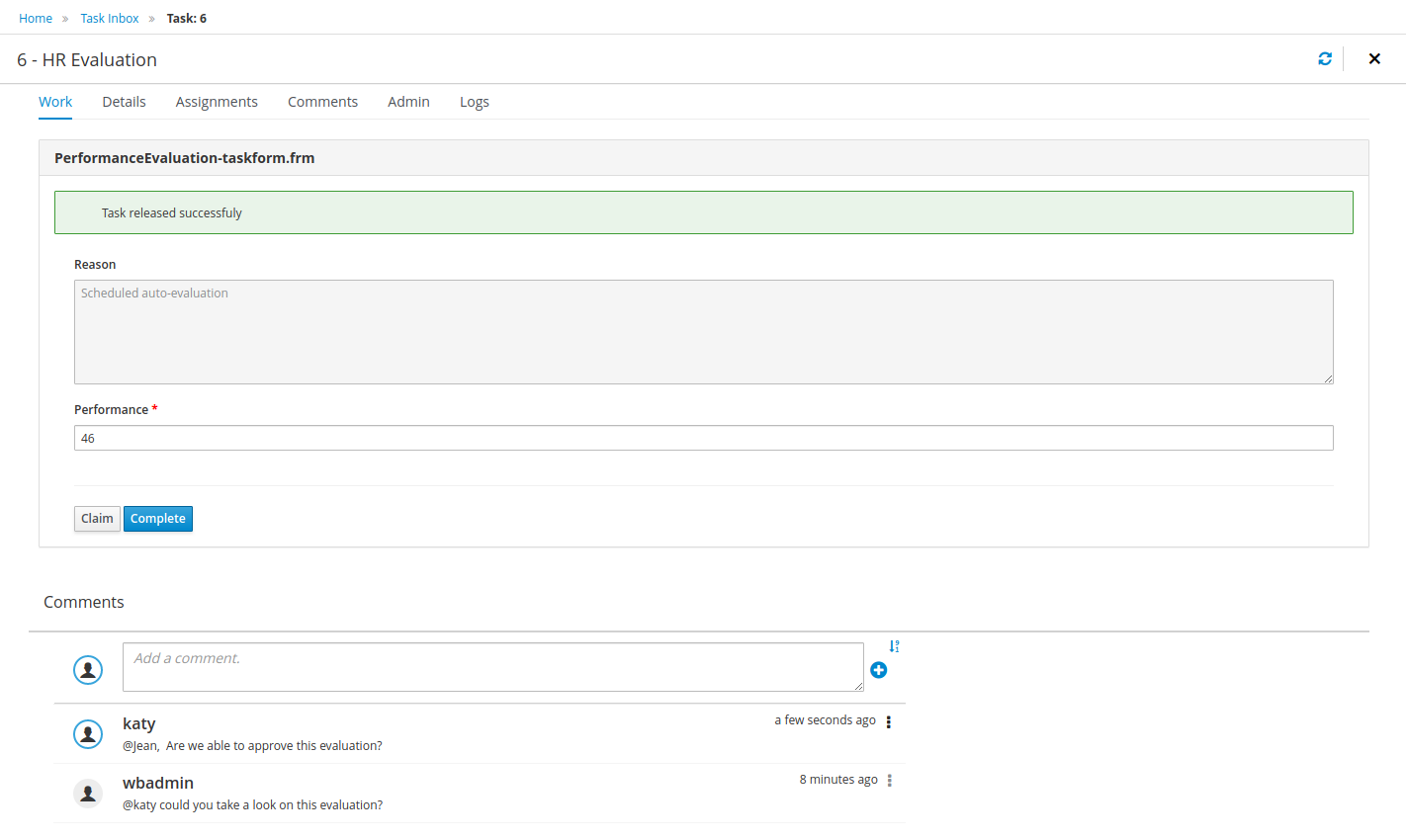

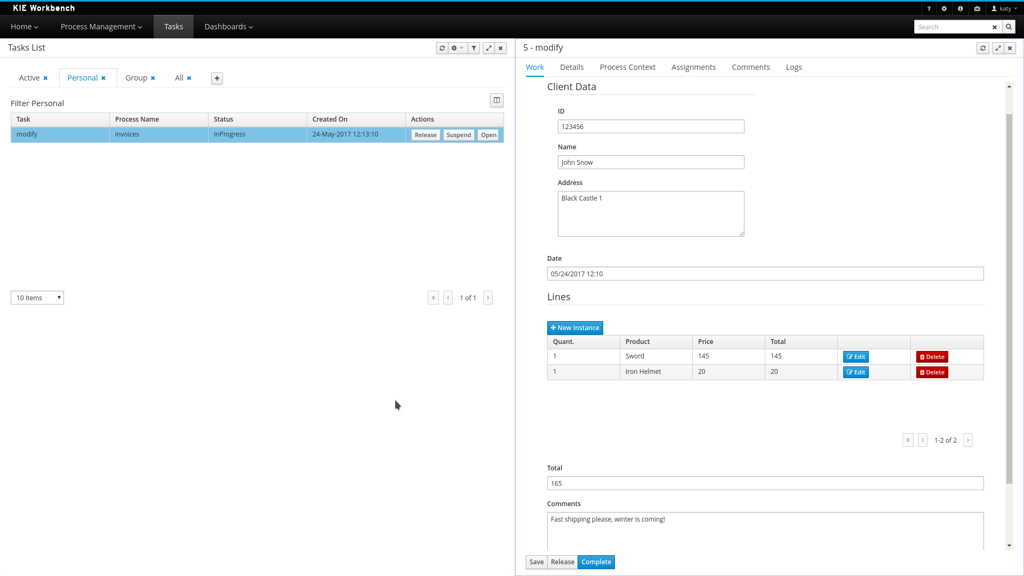

1.4.4. Task Inbox

As often part of any process execution, human involvement is needed to review, approve or provide extra information. Business Central provides a Task Inbox section where any user potentially involved with these task can manage its workload. In there, users are able to get a list of all tasks, complete tasks using customizable task forms, collaborate using comments and more.

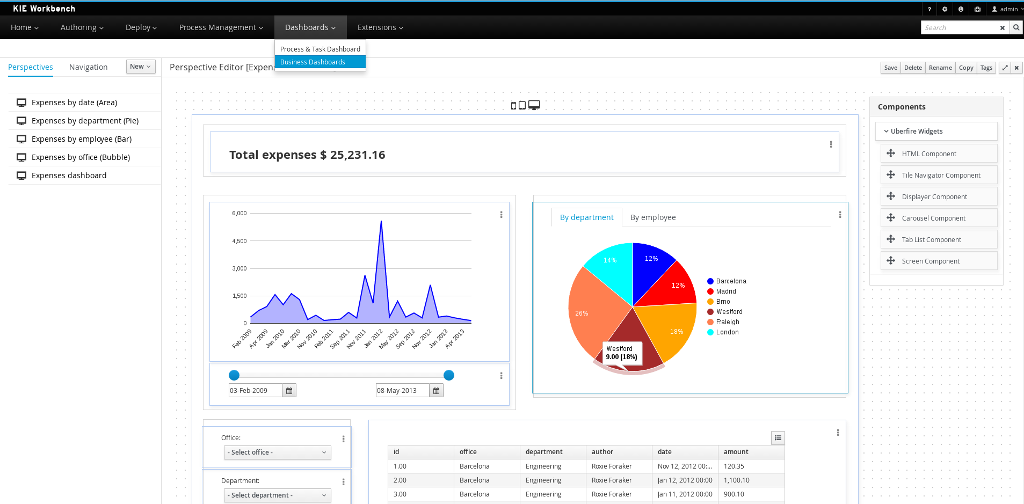

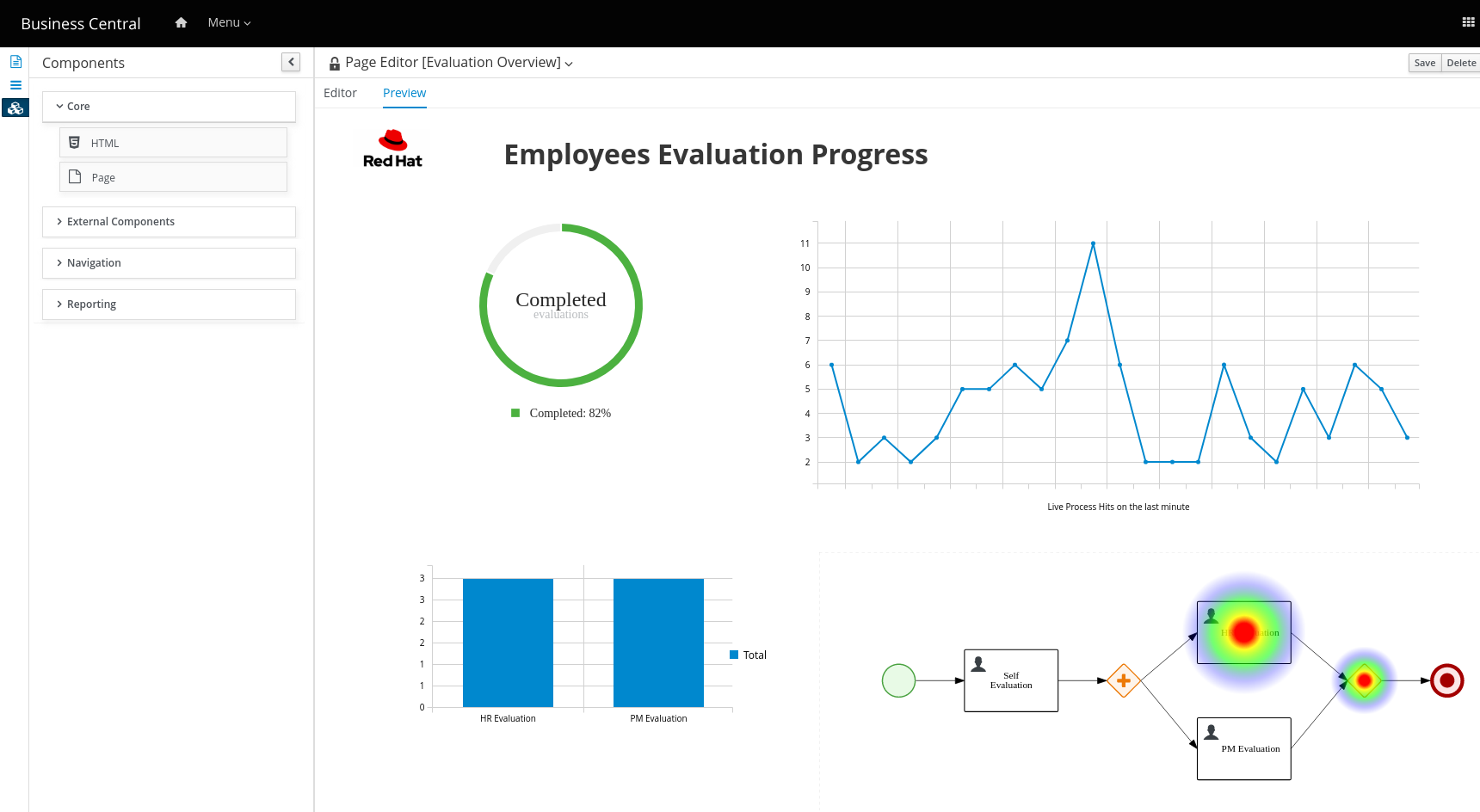

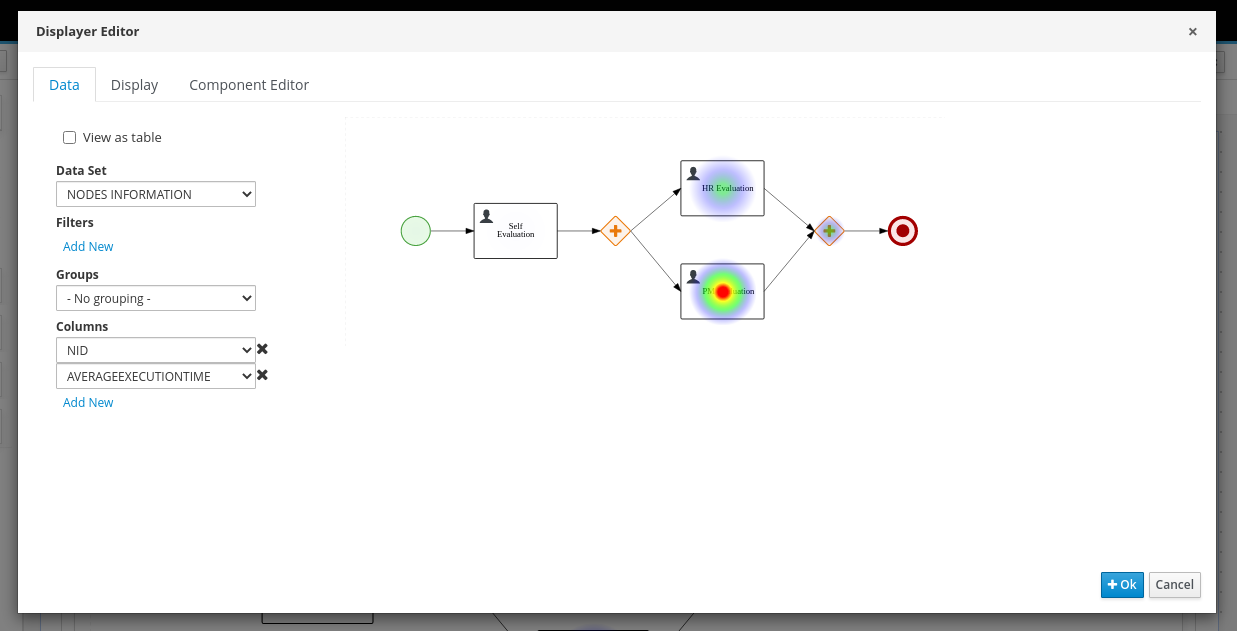

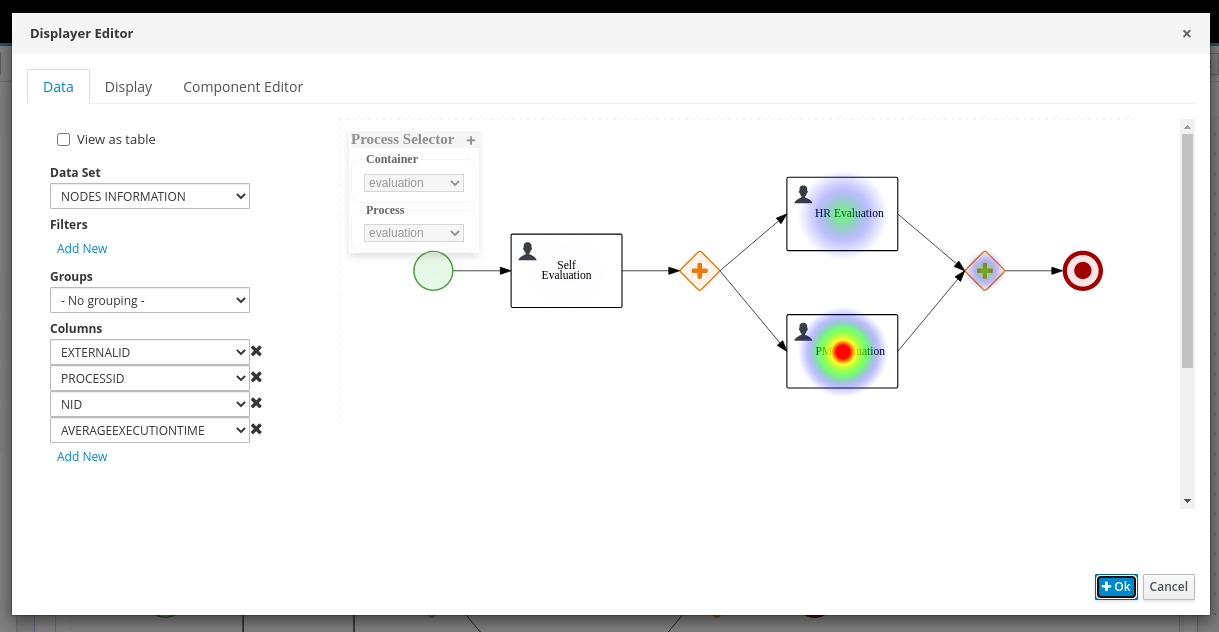

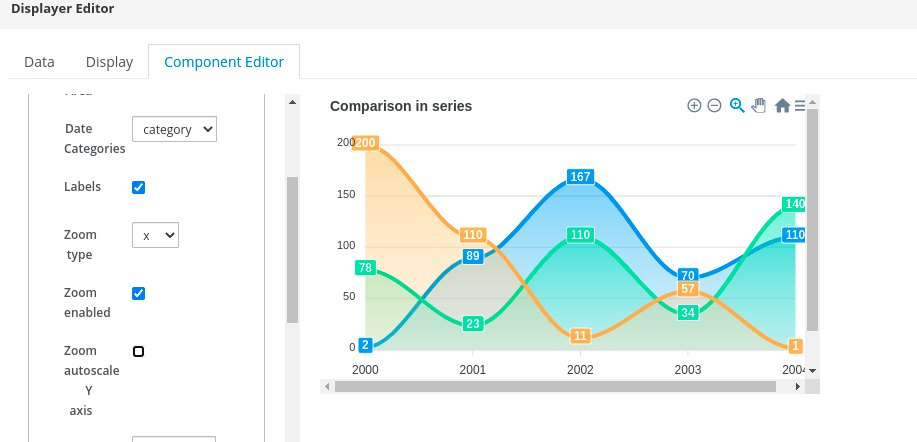

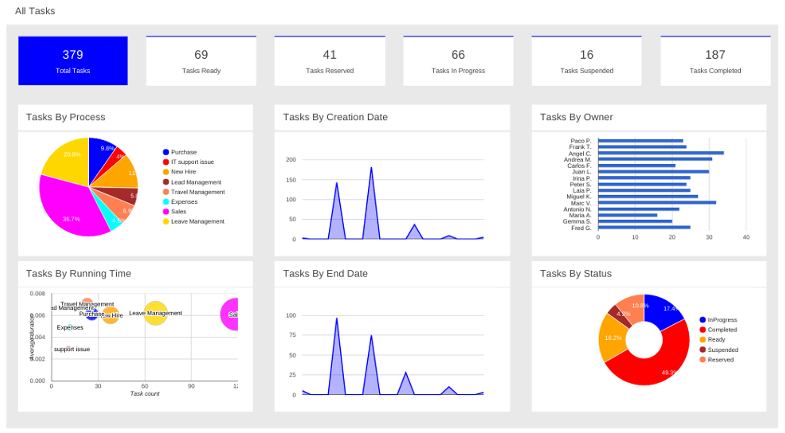

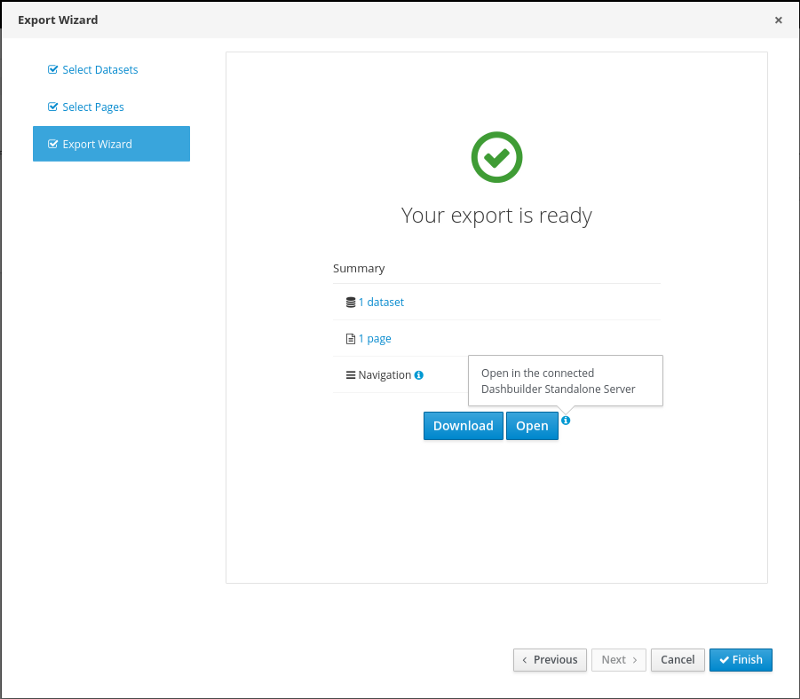

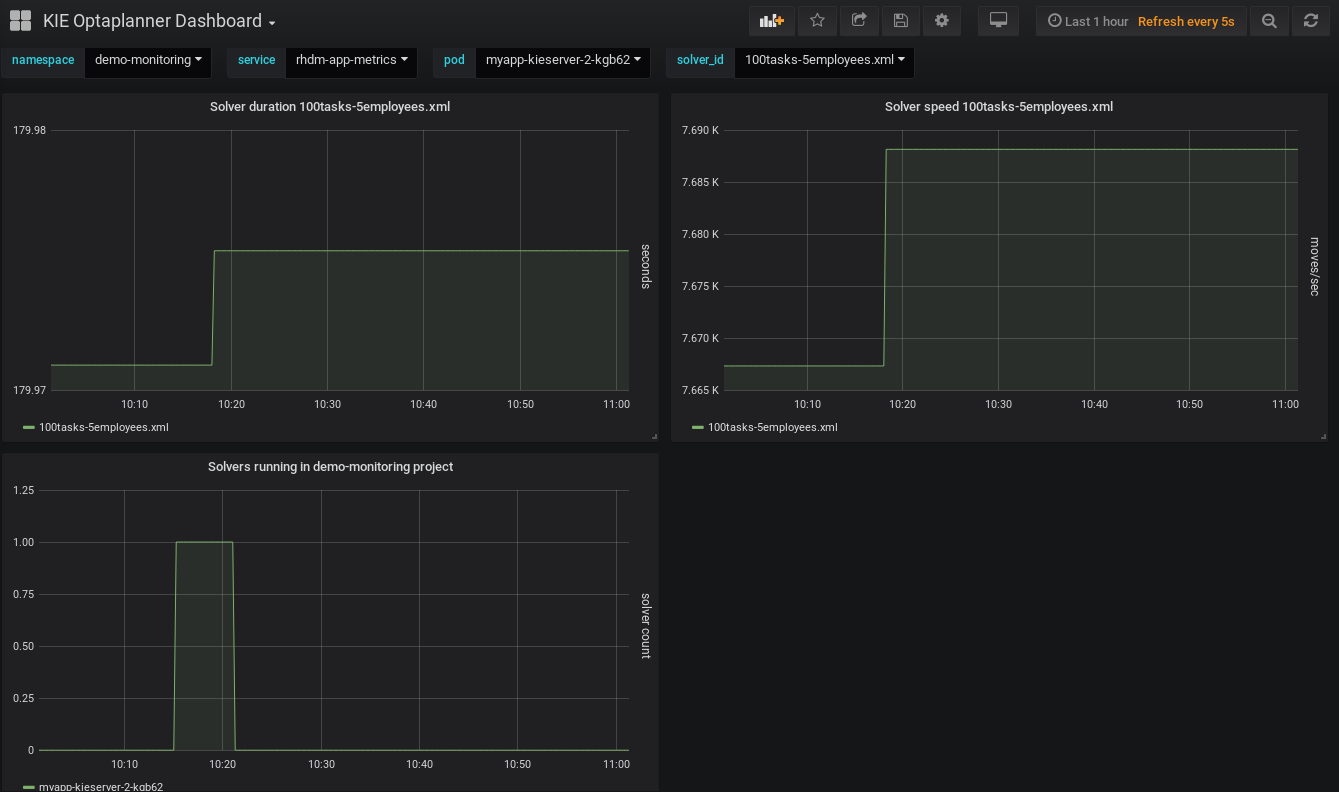

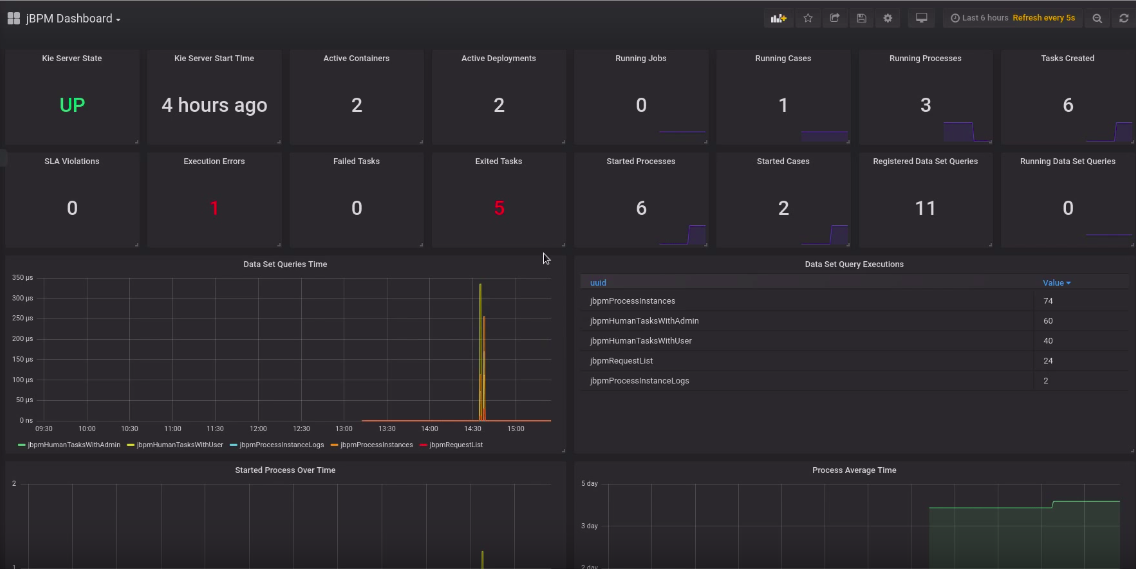

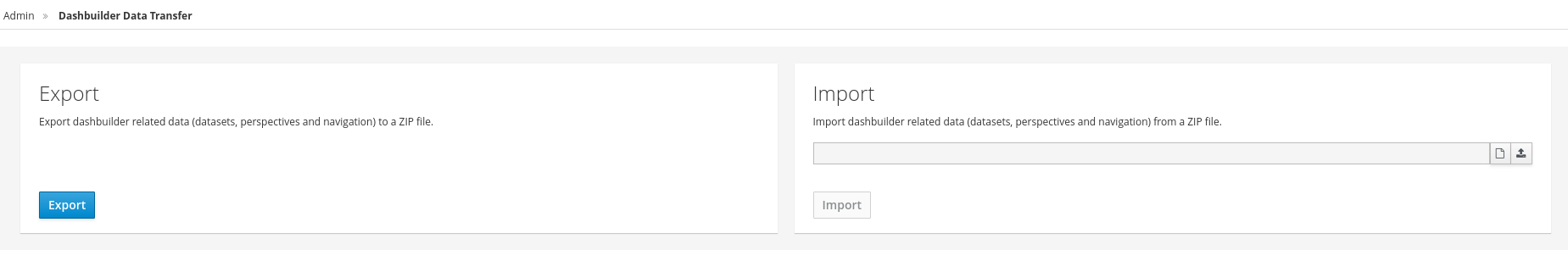

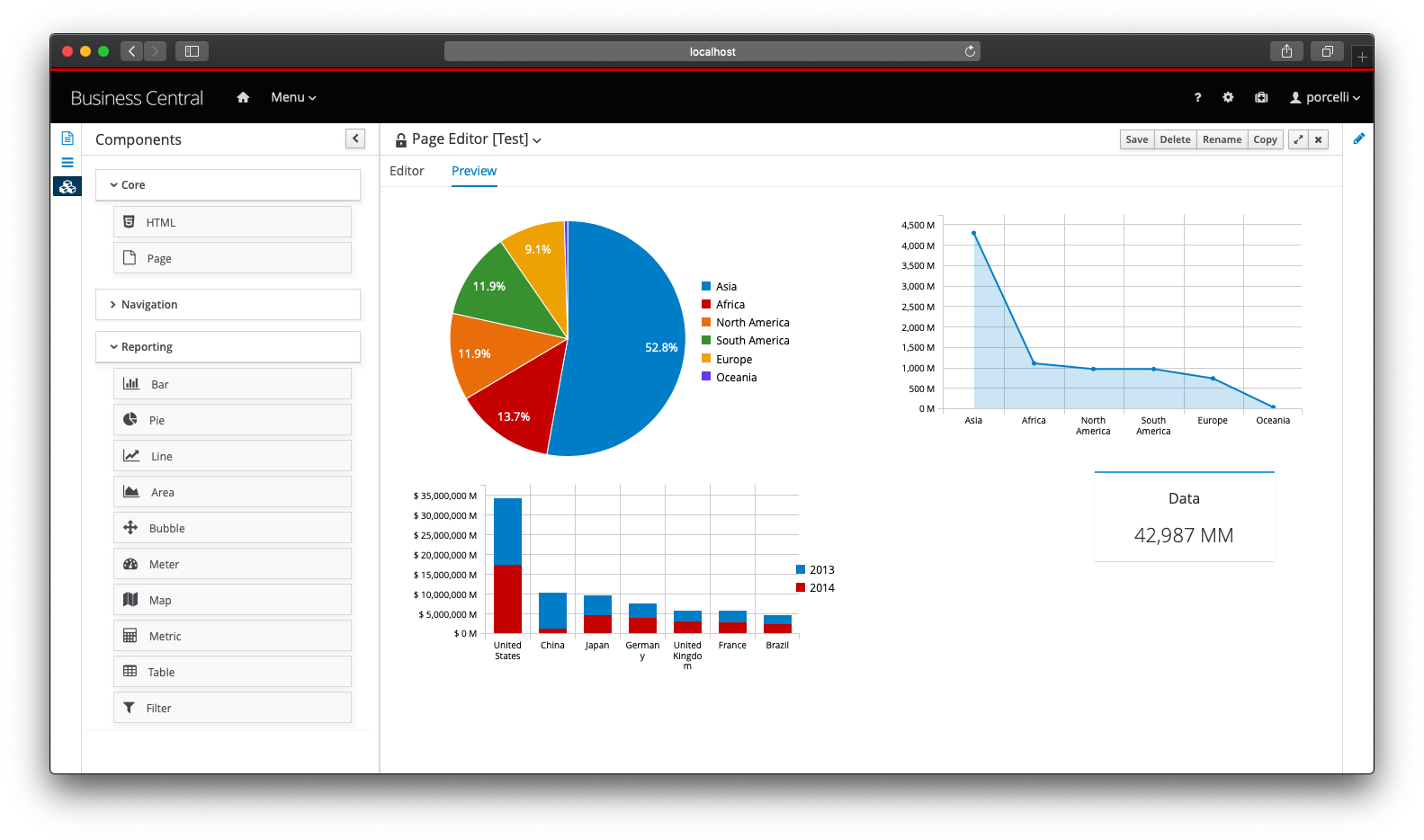

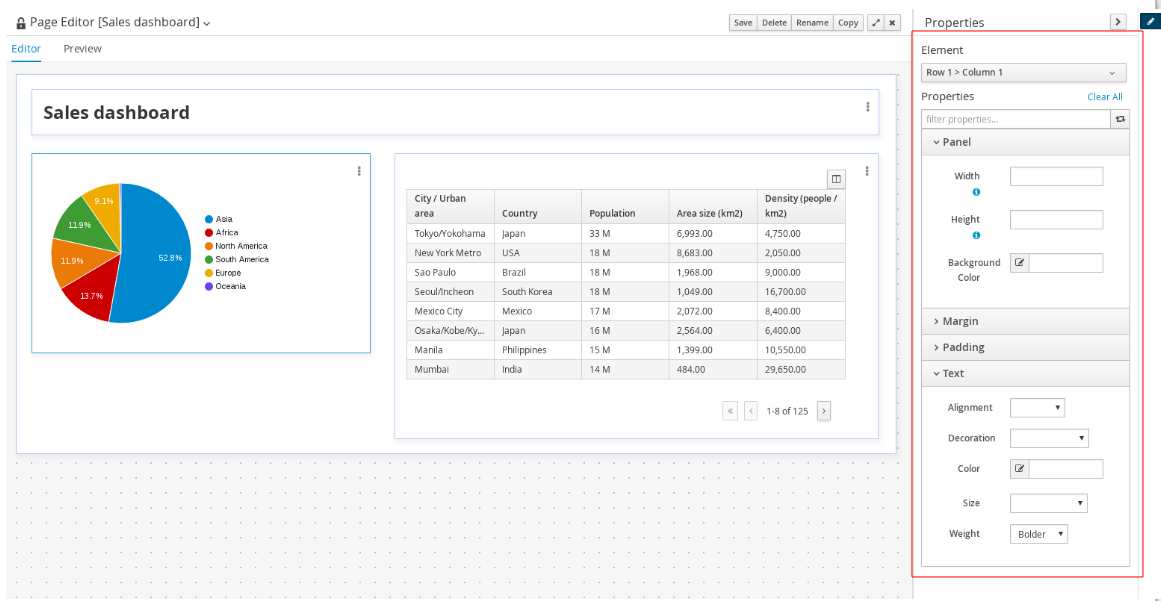

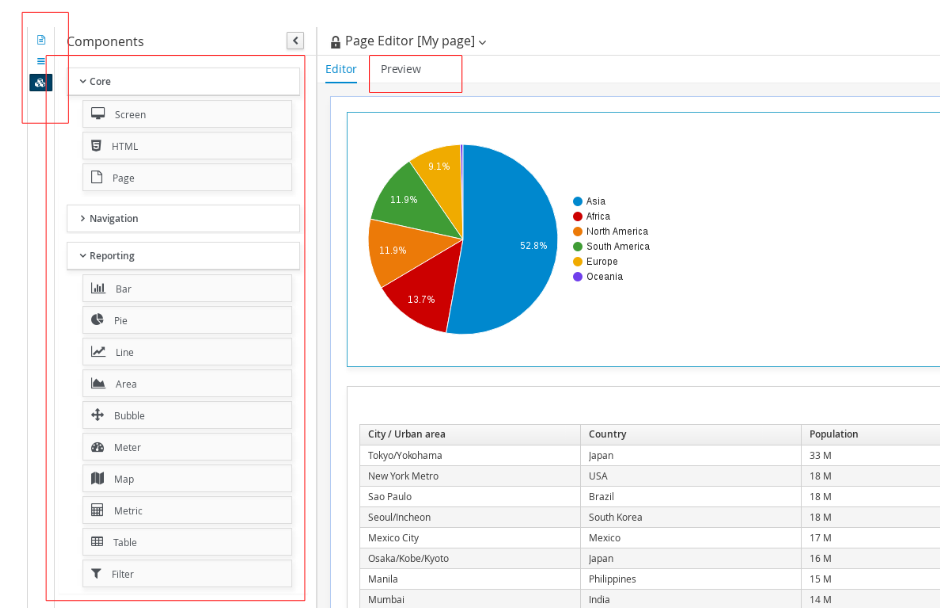

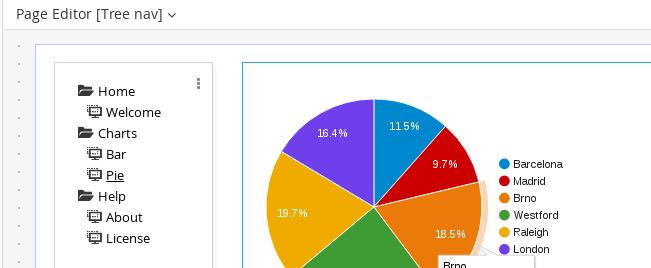

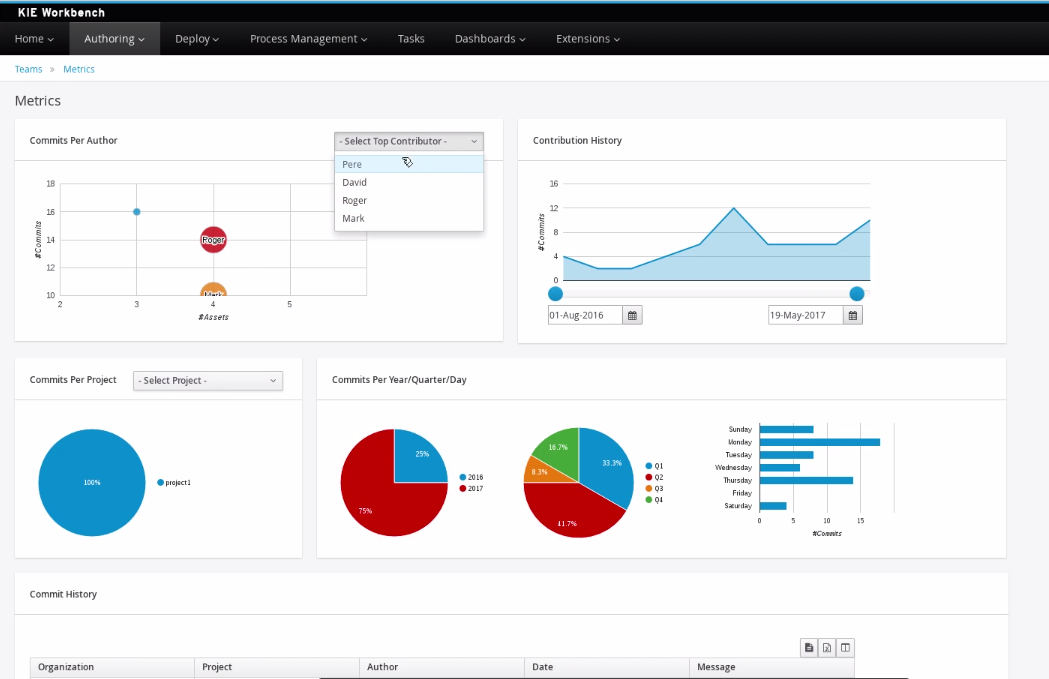

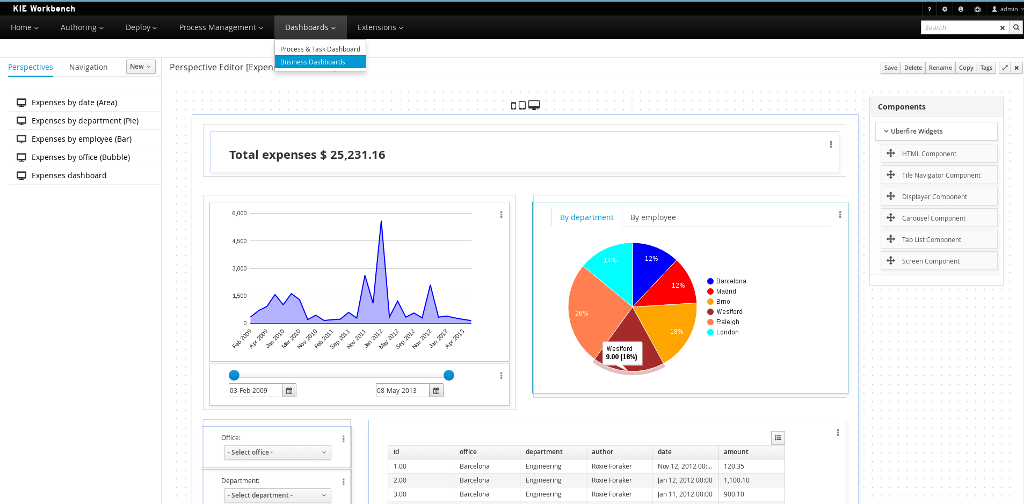

1.4.5. Business Activity Monitoring

As of version 6.0, jBPM comes with a full-featured BAM tooling which allows non-technical users to visually compose business dashboards. With this brand new module, to develop business activity monitoring and reporting solutions on top of jBPM has never been so easy!

Key features:

-

Visual configuration of dashboards (Drag’n’drop).

-

Graphical representation of KPIs (Key Performance Indicators).

-

Configuration of interactive report tables.

-

Data export to Excel and CSV format.

-

Filtering and search, both in-memory or SQL based.

-

Data extraction from external systems, through different protocols.

-

Granular access control for different user profiles.

-

Look’n’feel customization tools.

-

Pluggable chart library architecture.

Target users:

-

Managers / Business owners. Consumer of dashboards and reports.

-

IT / System architects. Connectivity and data extraction.

-

Analysts / Developers. Dashboard composition & configuration.

To get further information about the new and noteworthy BAM capabilities of jBPM please read the chapter Business Activity Monitoring.

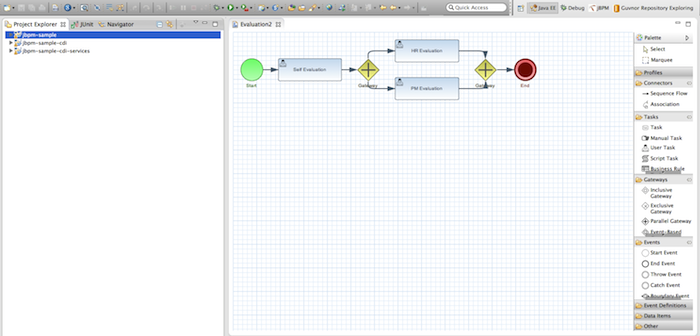

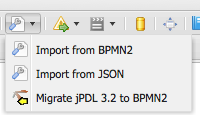

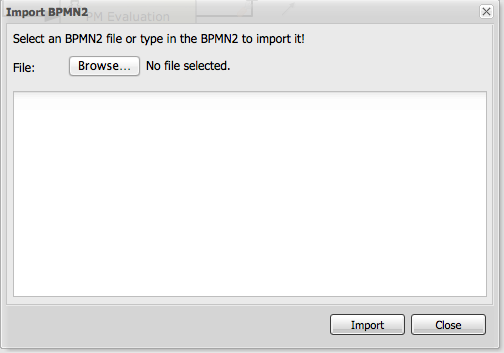

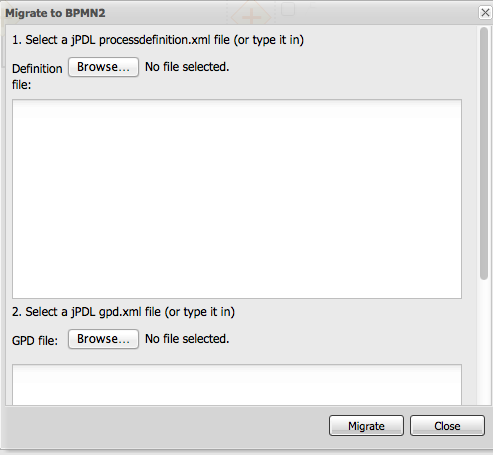

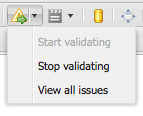

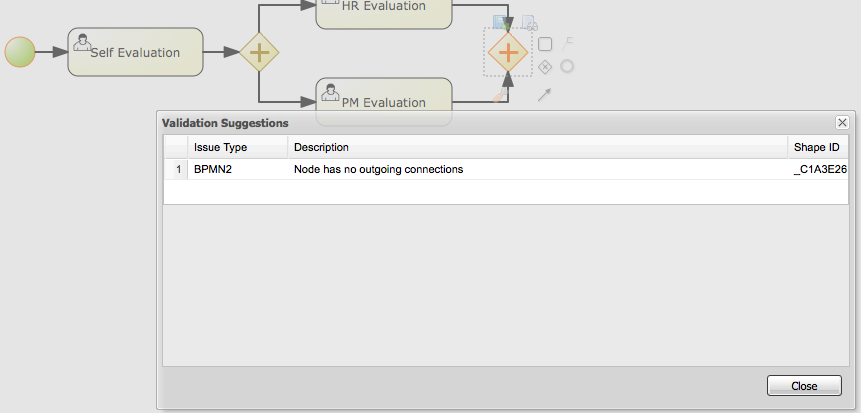

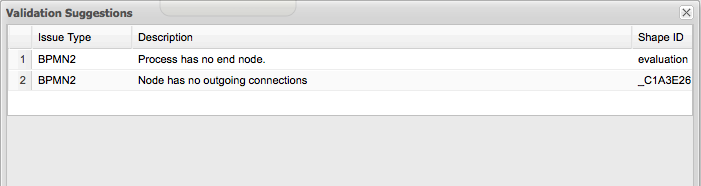

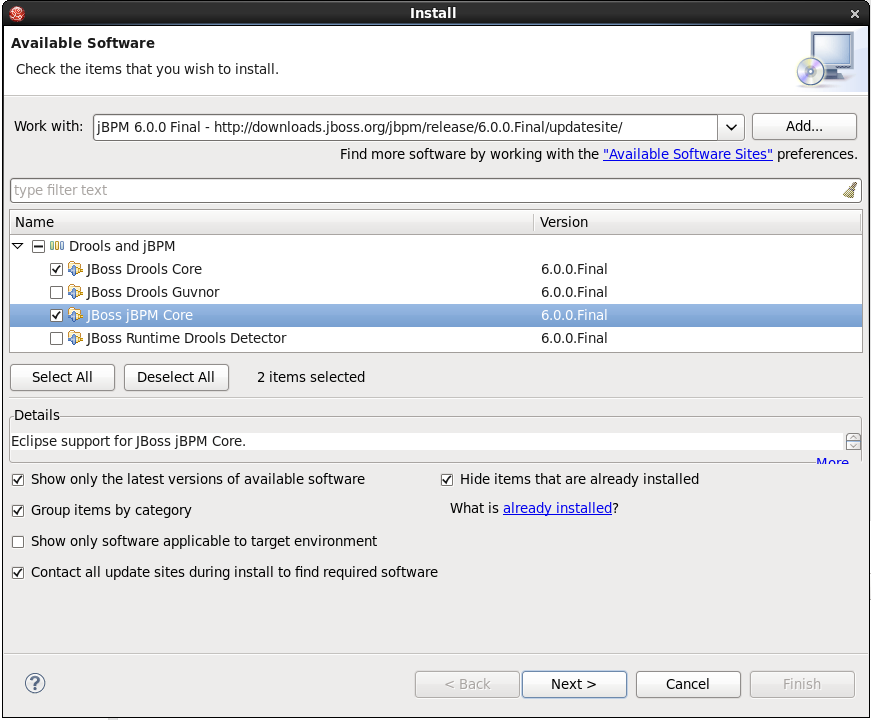

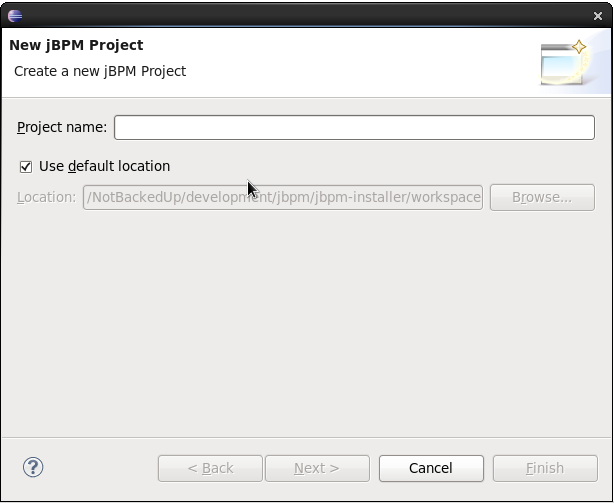

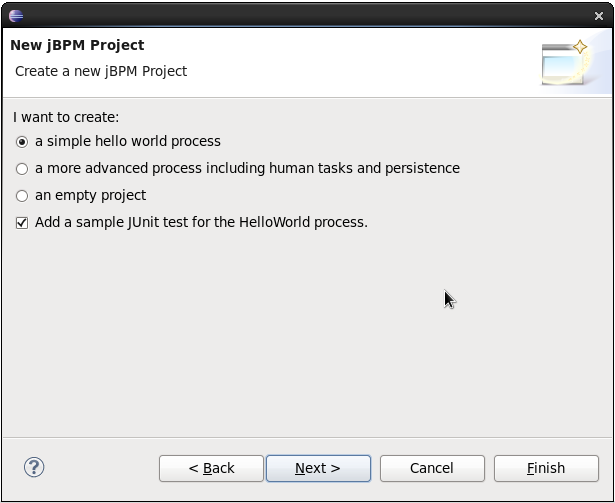

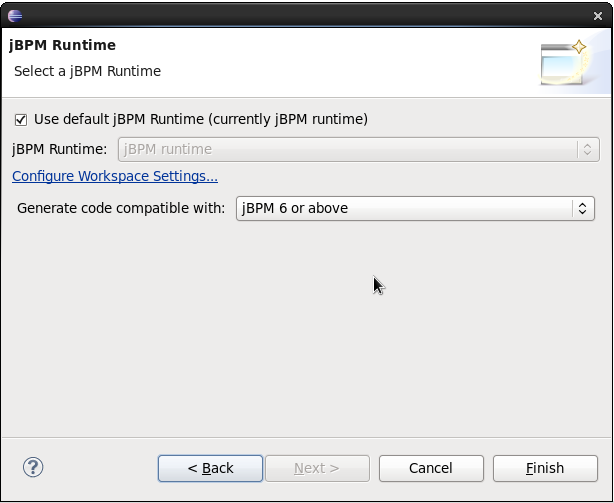

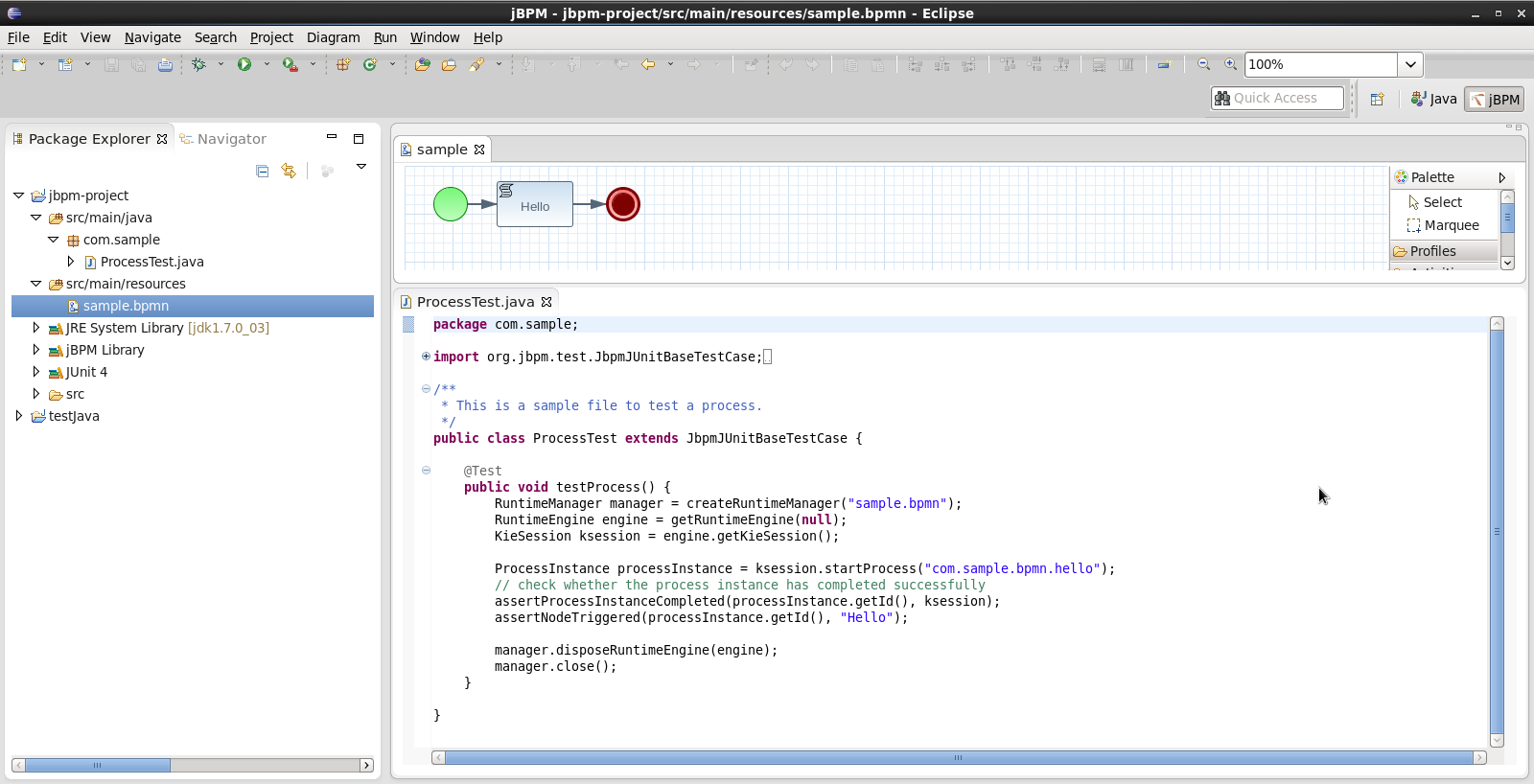

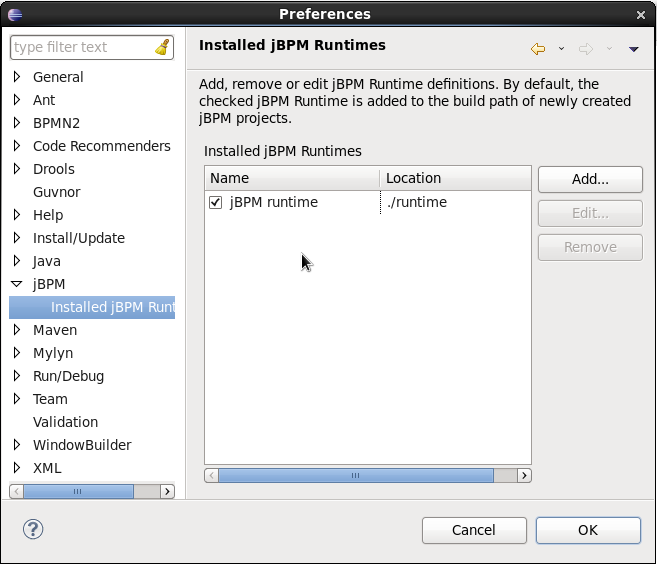

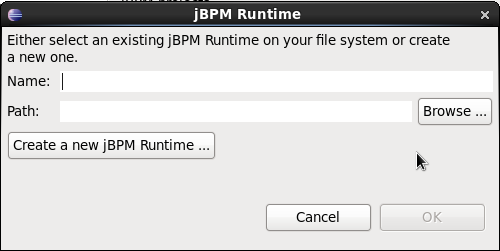

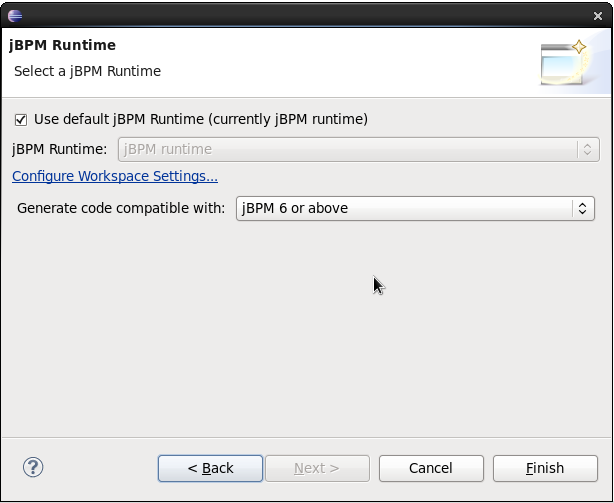

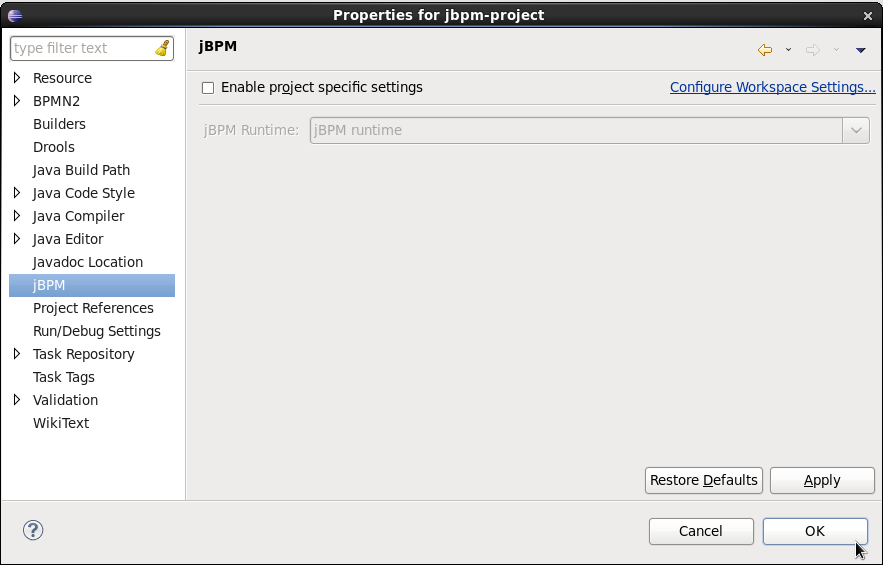

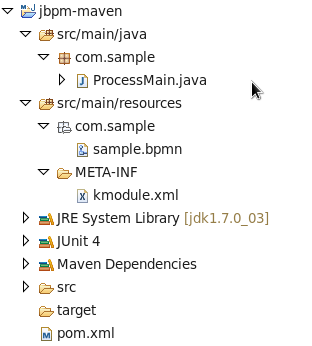

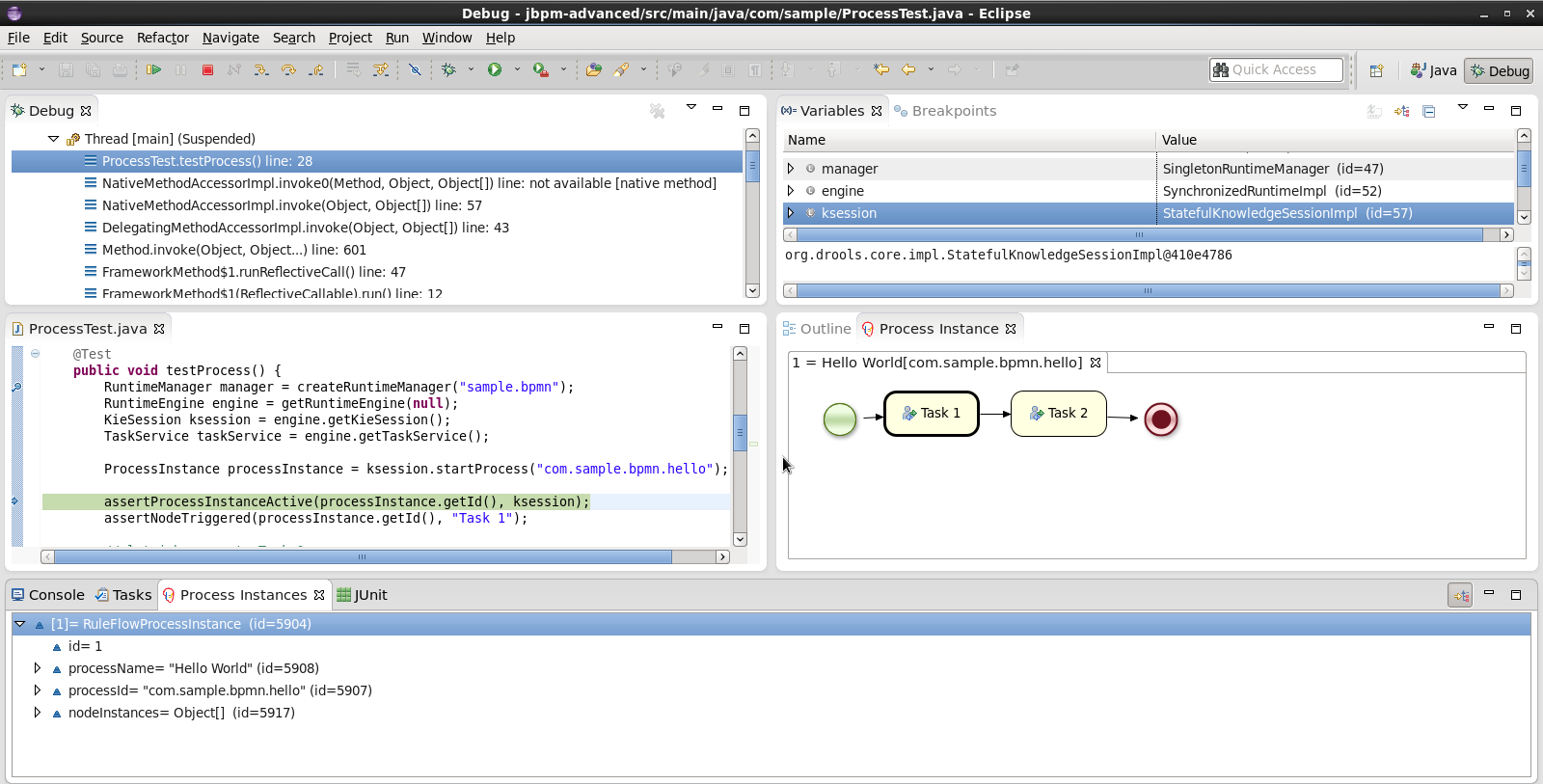

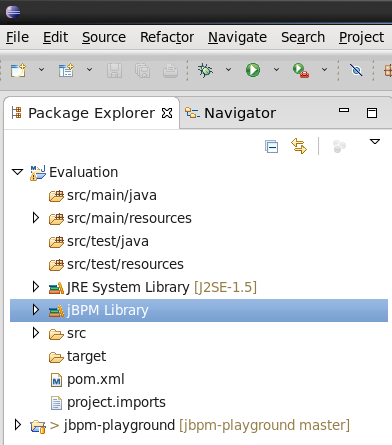

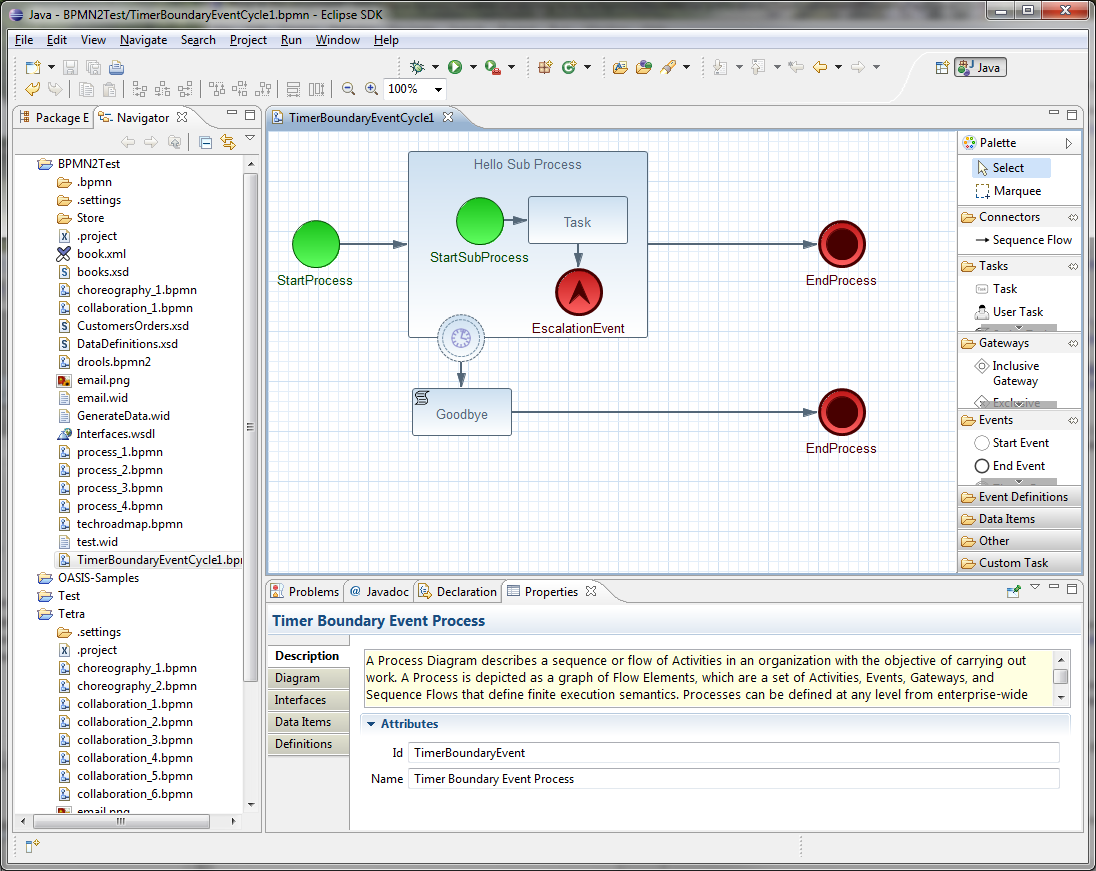

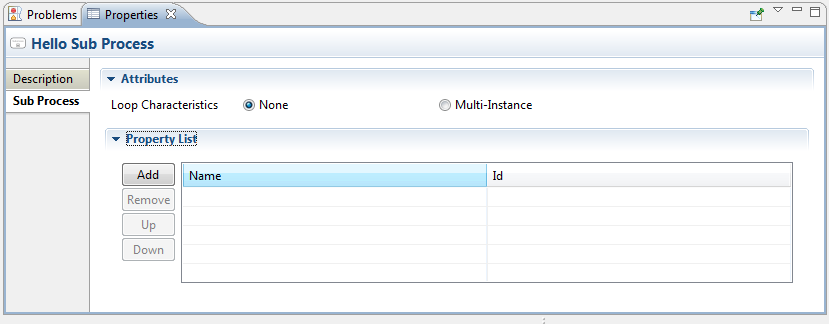

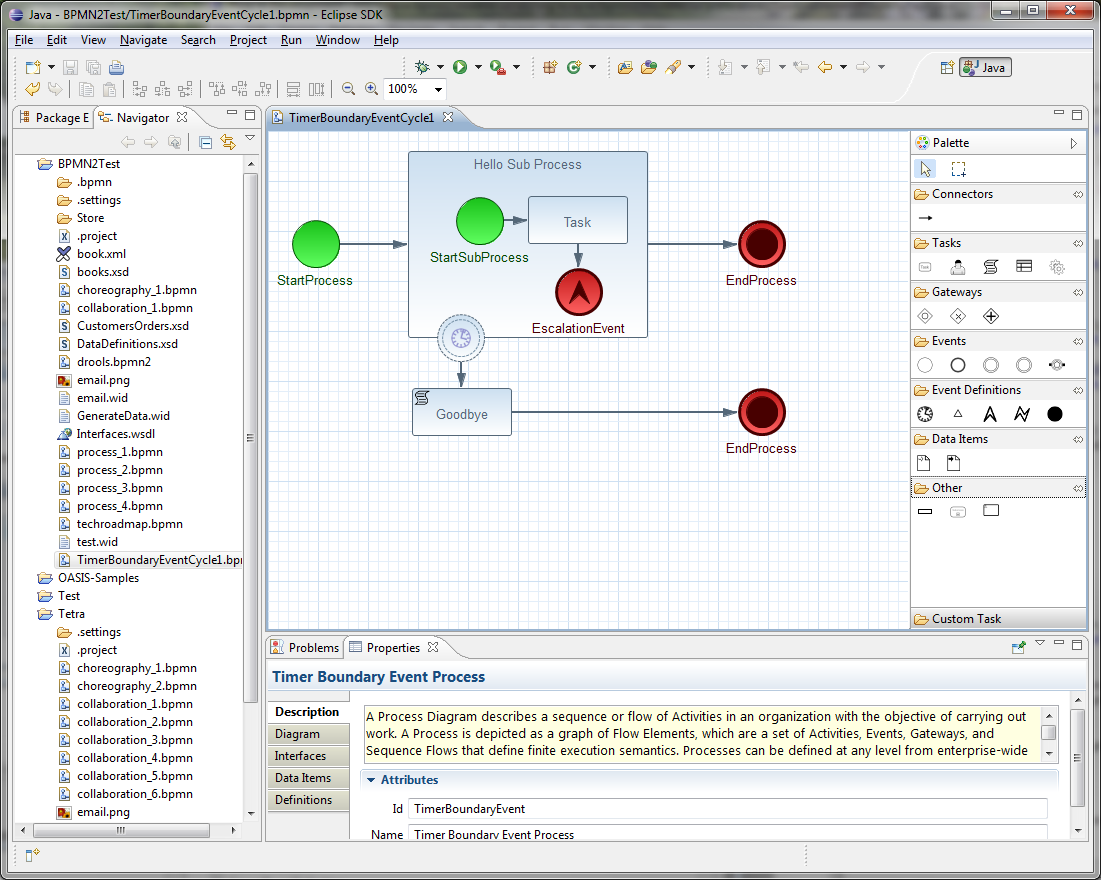

1.5. Eclipse Developer Tools

The Eclipse-based tools are a set of plugins to the Eclipse IDE and allow you to integrate your business processes in your development environment. It is targeted towards developers and has some wizards to get started, a graphical editor for creating your business processes (using drag and drop) and a lot of advanced testing and debugging capabilities.

It includes the following features:

-

Wizard for creating a new jBPM project

-

A graphical editor for BPMN 2.0 processes

-

The ability to plug in your own domain-specific nodes

-

Validation

-

Runtime support (so you can select which version of jBPM you would like to use)

-

Graphical debugging to see all running process instances of a selected session, to visualize the current state of one specific process instance, etc.

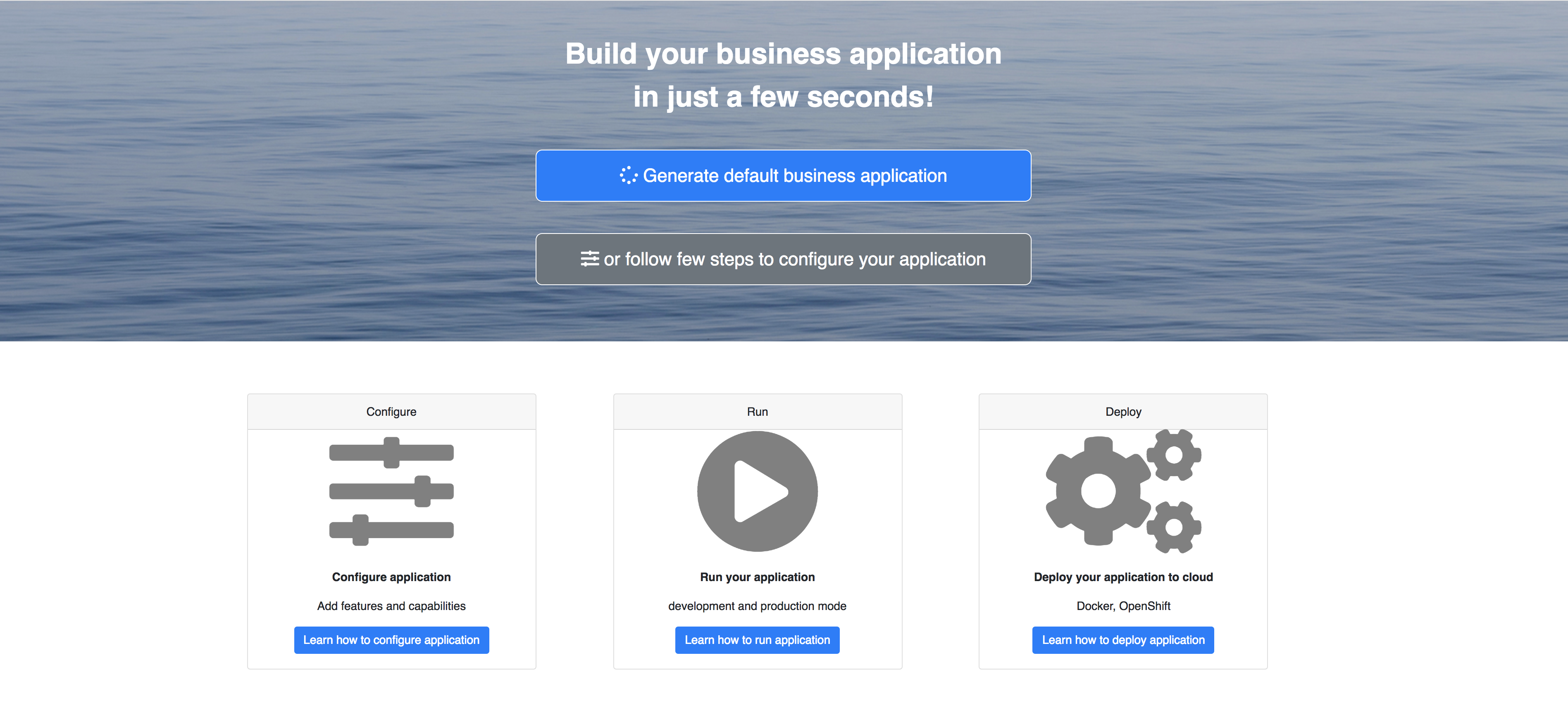

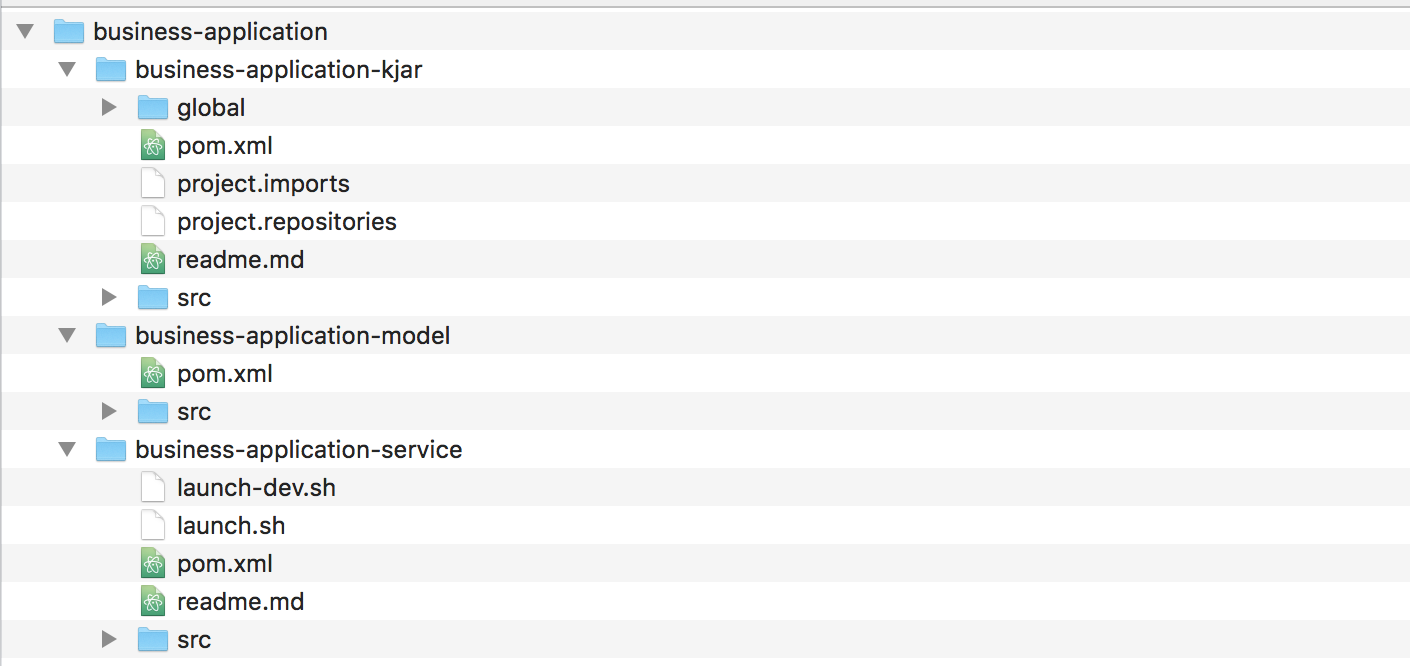

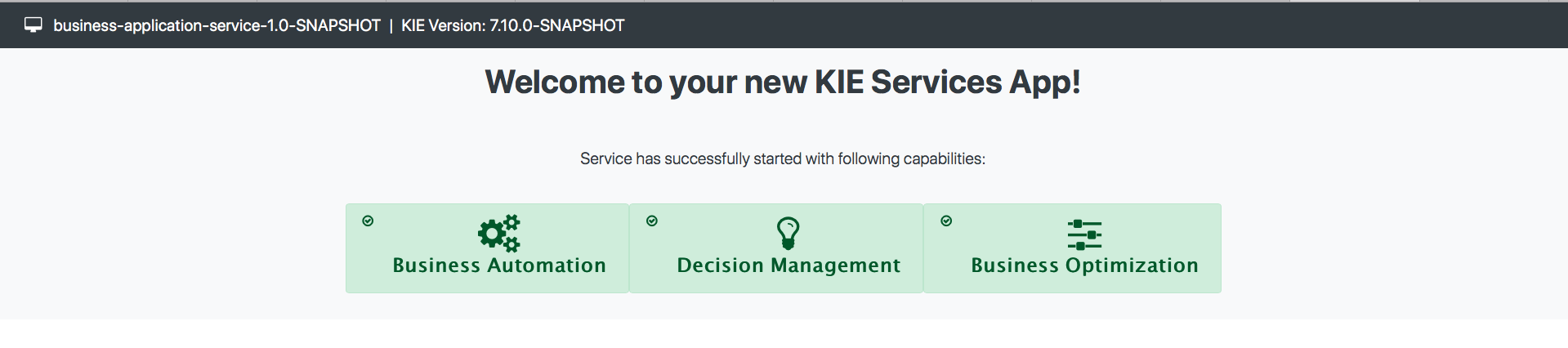

2. Getting Started

We recommend taking a look at our Getting Start page as a starting point for getting a full environment up and running with all the components you need in order to design, deploy, run and monitor a process. Alternatively, you can also take a quick tutorial that will guide you through most of the components using a simple example available in the Installer Chapter. This will teach you how to download and use the installer to create a demo setup, including most of the components. It uses a simple example to guide you through the most important features. Screencasts are available to help you out as well.

If you like to read more information first, the following chapters first focus on the core jBPM engine (API, BPMN 2.0, etc.). Further chapters will then describe the other components and other more complex topics like domain-specific processes, flexible processes, etc. After reading the core chapters, you should be able to jump to other chapters that you might find interesting.

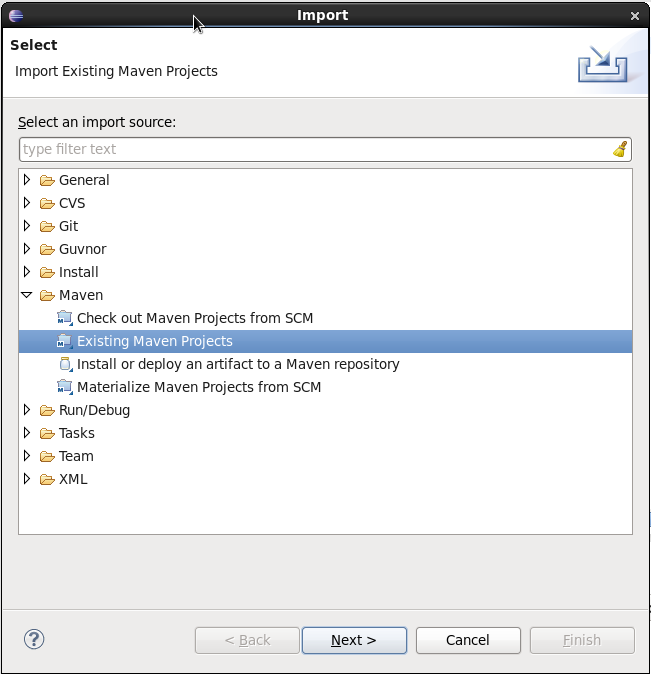

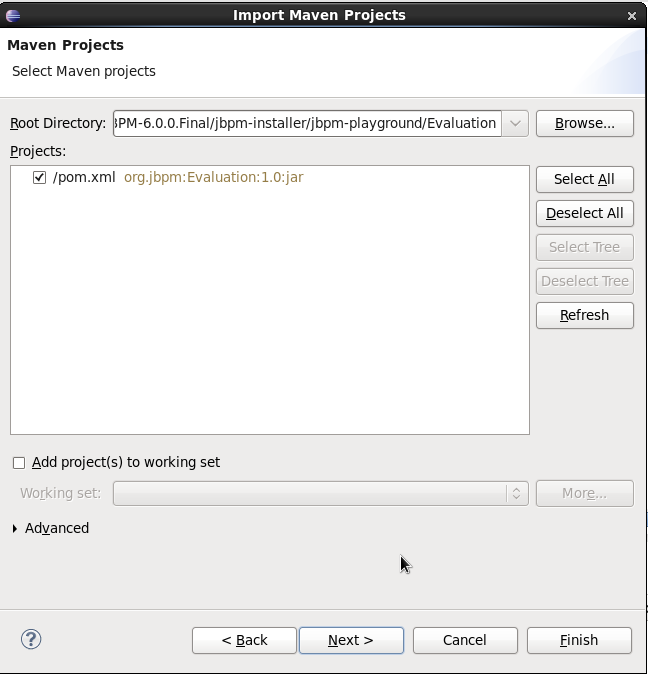

You can also start playing around with some examples that are offered in a separate download. Check out the Examples chapter to see how to start playing with these.

After reading through these chapters, you should be ready to start creating your own processes and integrate the jBPM engine with your application. These processes can be started from the installer or be started from scratch.

2.1. Downloads

Latest releases can be downloaded from jBPM.org. Just pick the artifact you want:

-

server: single zip distribution with jBPM server (including WildFly, Business Central, jBPM case management showcase and service repository)

-

bin: all the jBPM binaries (JARs) and their transitive dependencies

-

src: the sources of the core components

-

docs: the documentation

-

examples: some jBPM examples, can be imported into Eclipse

-

installer: the jBPM Installer, downloads and installs a demo setup of jBPM

-

installer-full: full jBPM Installer, downloads and installs a demo setup of jBPM, already contains a number of dependencies prepackaged (so they don’t need to be downloaded separately)

Older releases are archived at http://downloads.jboss.org/jbpm/release/.

Alternatively, you can also use one of the many Docker images available for use at the Download section.

2.2. Community

Here are a lot of useful links part of the jBPM community:

-

jBPM Setup and jBPM Usage user forums and mailing lists

-

A JIRA bug tracking system for bugs, feature requests and roadmap

Please feel free to join us in our IRC channel at chat.freenode.net#jbpm. This is where most of the real-time discussion about the project takes place and where you can find most of the developers most of their time as well. Don’t have an IRC client installed? Simply go to http://webchat.freenode.net/, input your desired nickname, and specify #jbpm. Then click login to join the fun.

2.3. Sources

2.3.1. License

The jBPM code itself is using the Apache License v2.0.

Some other components we integrate with have their own license:

-

The new Eclipse BPMN2 plugin is Eclipse Public License (EPL) v1.0.

-

The legacy web-based designer is based on Oryx/Wapama and is MIT License

-

The Drools project is Apache License v2.0.

2.3.2. Source code

jBPM now uses git for its source code version control system. The sources of the jBPM project can be found here (including all releases starting from jBPM 5.0-CR1):

The source of some of the other components can be found here:

2.3.3. Building from source

If you’re interested in building the source code, contributing, releasing, etc. make sure to read this README.

2.4. Getting Involved

We are often asked "How do I get involved". Luckily the answer is simple, just write some code and submit it :) There are no hoops you have to jump through or secret handshakes. We have a very minimal "overhead" that we do request to allow for scalable project development. Below we provide a general overview of the tools and "workflow" we request, along with some general advice.

If you contribute some good work, don’t forget to blog about it :)

2.4.1. Sign up to jboss.org

Signing to jboss.org will give you access to the JBoss wiki, forums and JIRA. Go to https://www.jboss.org/ and click "Register".

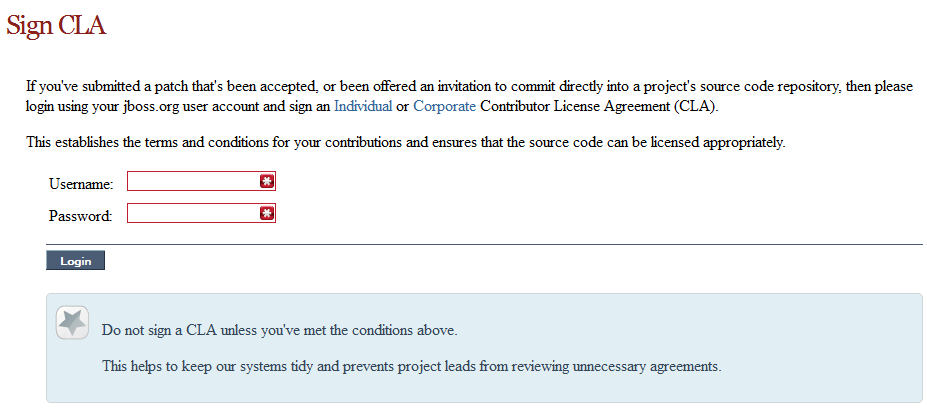

2.4.2. Sign the Contributor Agreement

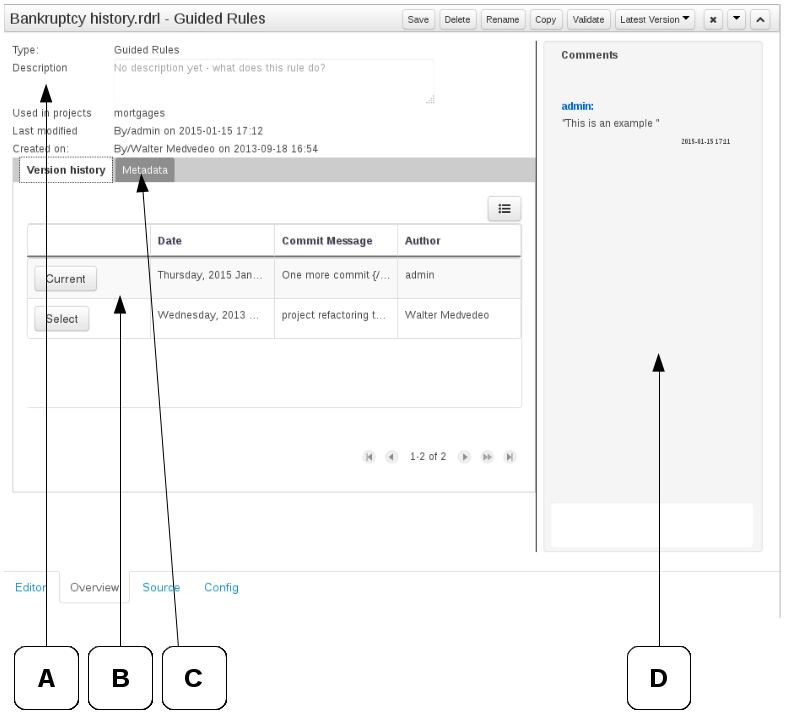

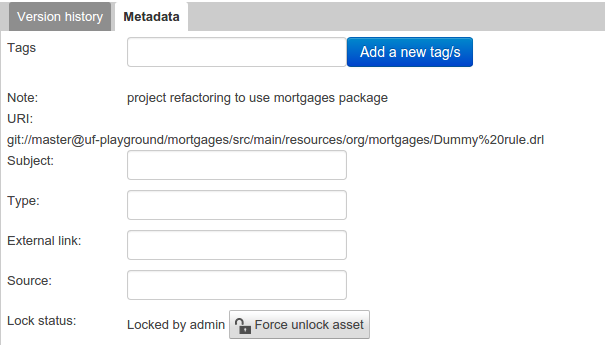

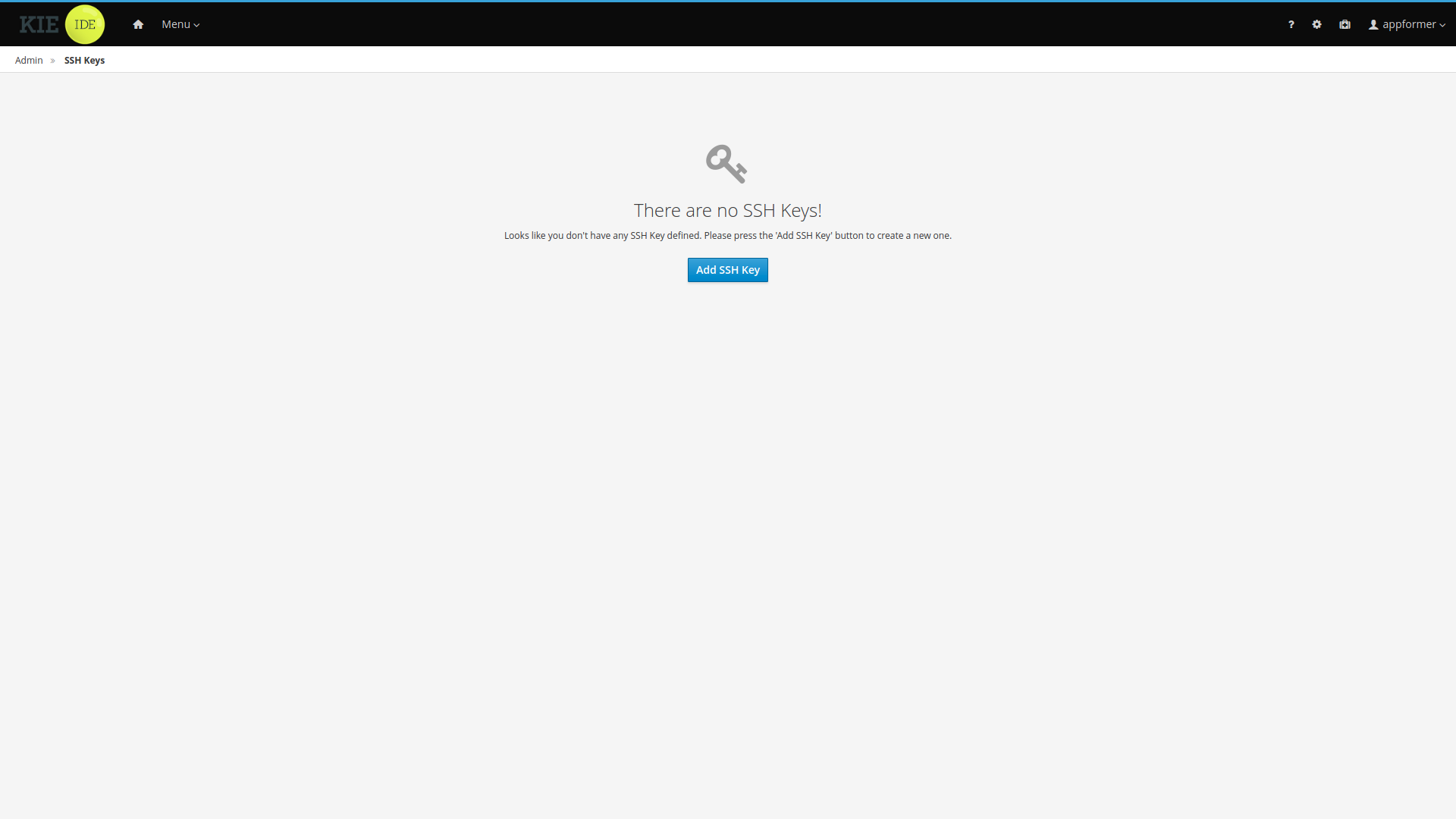

The only form you need to sign is the contributor agreement, which is fully automated via the web. As the image below says "This establishes the terms and conditions for your contributions and ensures that source code can be licensed appropriately"

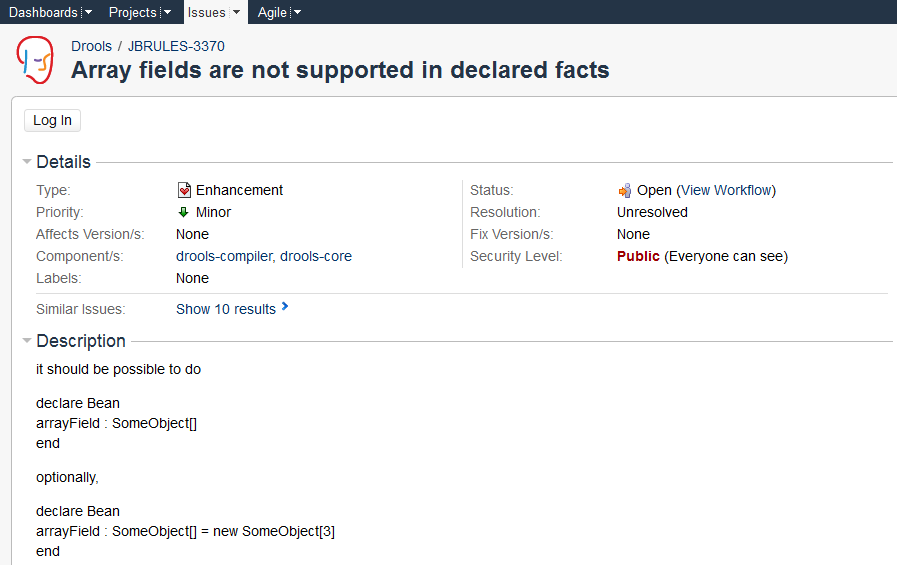

2.4.3. Submitting issues via JIRA

To be able to interact with the core development team you will need to use JIRA, the issue tracker. This ensures that all requests are logged and allocated to a release schedule and all discussions captured in one place. Bug reports, bug fixes, feature requests and feature submissions should all go here. General questions should be undertaken at the mailing lists.

Minor code submissions, like format or documentation fixes do not need an associated JIRA issue created.

2.4.4. Fork GitHub

With the contributor agreement signed and your requests submitted to JIRA you should now be ready to code :) Create a GitHub account and fork any of the Drools, jBPM or Guvnor repositories. The fork will create a copy in your own GitHub space which you can work on at your own pace. If you make a mistake, don’t worry blow it away and fork again. Note each GitHub repository provides you the clone (checkout) URL, GitHub will provide you URLs specific to your fork.

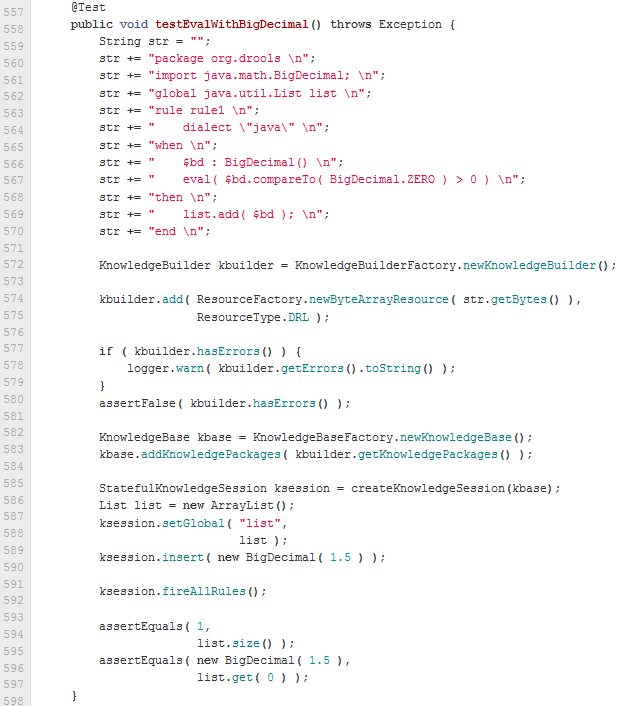

2.4.5. Writing Tests

When writing tests, try and keep them minimal and self contained. We prefer to keep the DRL fragments within the test, as it makes for quicker reviewing. If there are a large number of rules then using a String is not practical so then by all means place them in separate DRL files instead to be loaded from the classpath. If your tests need to use a model, please try to use those that already exist for other unit tests; such as Person, Cheese or Order. If no classes exist that have the fields you need, try and update fields of existing classes before adding a new class.

There are a vast number of tests to look over to get an idea, MiscTest is a good place to start.

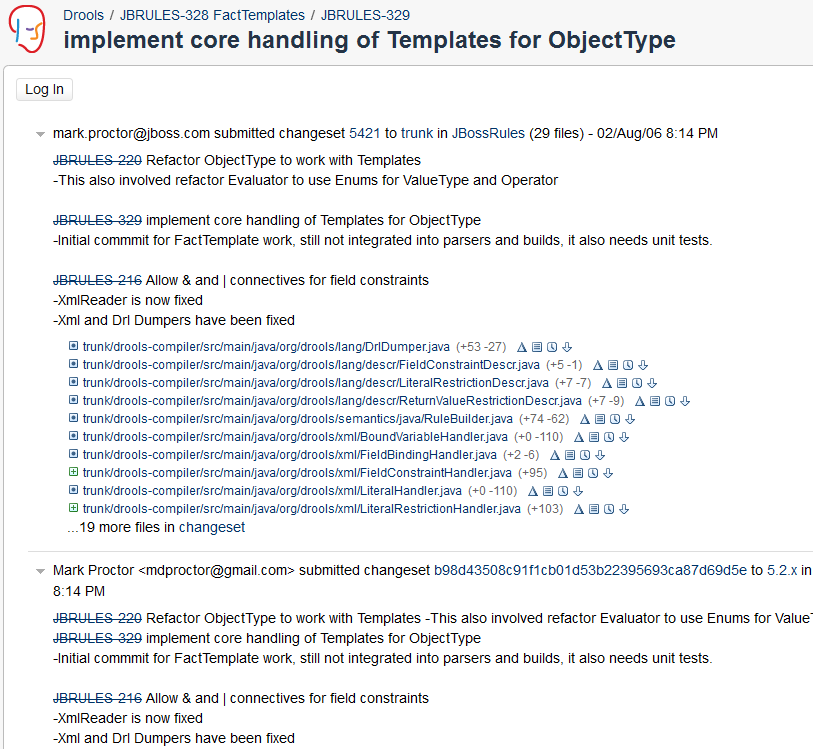

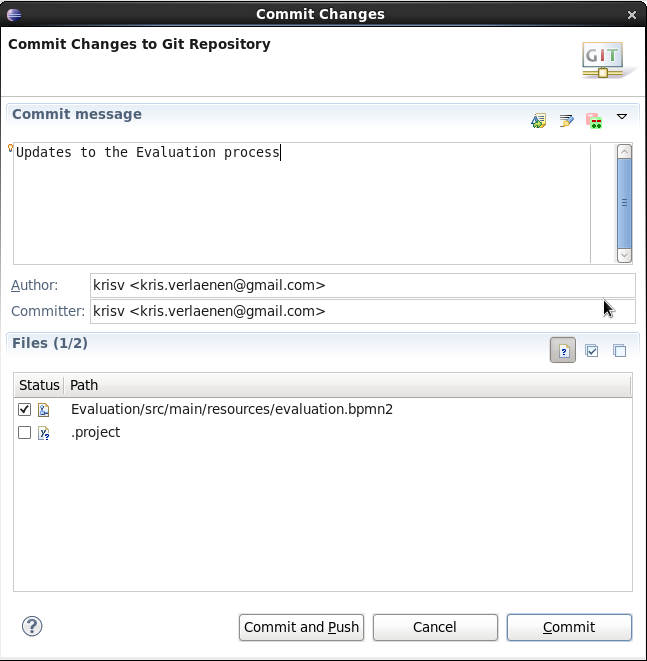

2.4.6. Commit with Correct Conventions

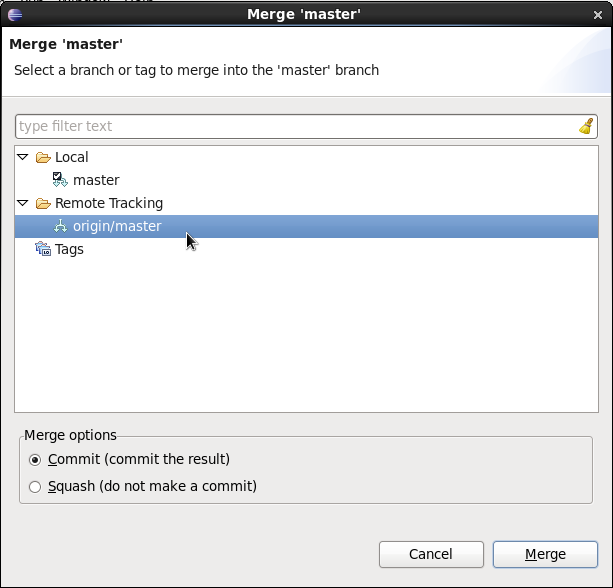

When you commit, make sure you use the correct conventions. The commit must start with the JIRA issue id, such as DROOLS-1946. This ensures the commits are cross referenced via JIRA, so we can see all commits for a given issue in the same place. After the id the title of the issue should come next. Then use a newline, indented with a dash, to provide additional information related to this commit. Use an additional new line and dash for each separate point you wish to make. You may add additional JIRA cross references to the same commit, if it’s appropriate. In general try to avoid combining unrelated issues in the same commit.

Don’t forget to rebase your local fork from the primary branch and then push your commits back to your fork.

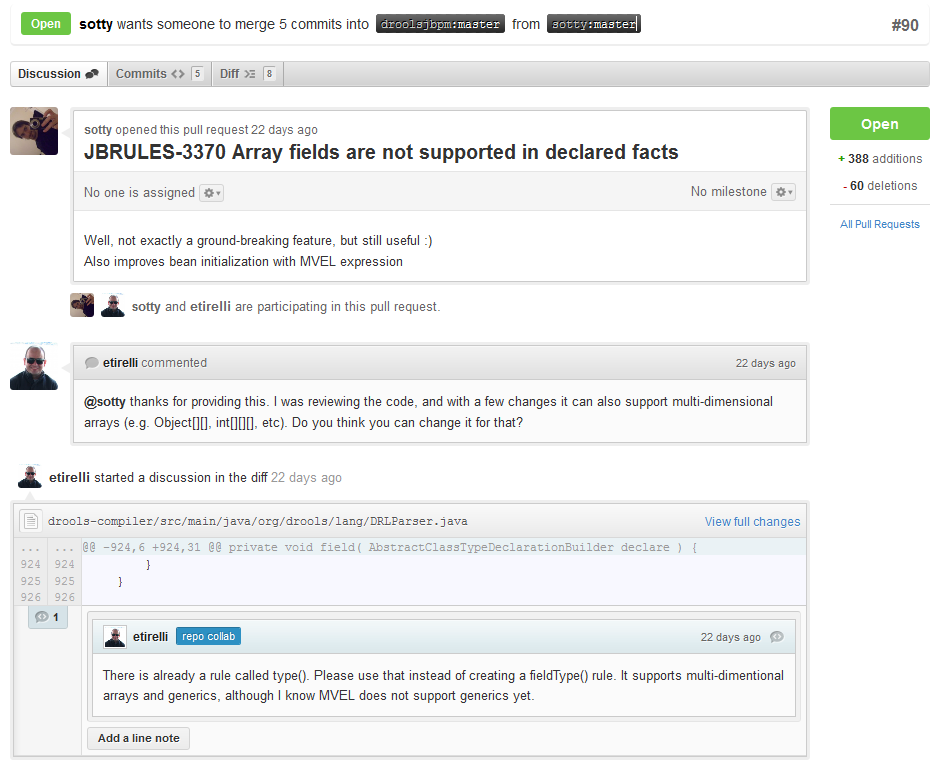

2.4.7. Submit Pull Requests

With your code rebased from primary branch and pushed to your personal GitHub area, you can now submit your work as a pull request. If you look at the top of the page in GitHub for your work area there will be a "Pull Request" button. Selecting this will then provide a gui to automate the submission of your pull request.

The pull request then goes into a queue for everyone to see and comment on. Below you can see a typical pull request. The pull requests allow for discussions and it shows all associated commits and the diffs for each commit. The discussions typically involve code reviews which provide helpful suggestions for improvements, and allows for us to leave inline comments on specific parts of the code. Don’t be disheartened if we don’t merge straight away, it can often take several revisions before we accept a pull request. Luckily GitHub makes it very trivial to go back to your code, do some more commits and then update your pull request to your latest and greatest.

It can take time for us to get round to responding to pull requests, so please be patient. Submitted tests that come with a fix will generally be applied quite quickly, where as just tests will often way until we get time to also submit that with a fix. Don’t forget to rebase and resubmit your request from time to time, otherwise over time it will have merge conflicts and core developers will general ignore those.

2.5. What to do if I encounter problems or have questions?

You can always contact the jBPM community for assistance.

IRC: #jbpm at chat.freenode.net

jBPM Setup Google Group - Installation, configuration, setup and administration discussions for Business Central, Eclipse, runtime environments and general enterprise architectures.

jBPM Usage Google Group - Authoring, executing and managing processes with jBPM. Any questions regarding the use of jBPM. General API help and best practices in building BPM systems.

Visit our website for more options on how to get help.

Legacy jBPM User Forum - serves as an archive; post new questions to one of the Google Groups above

3. Getting started with process services in jBPM

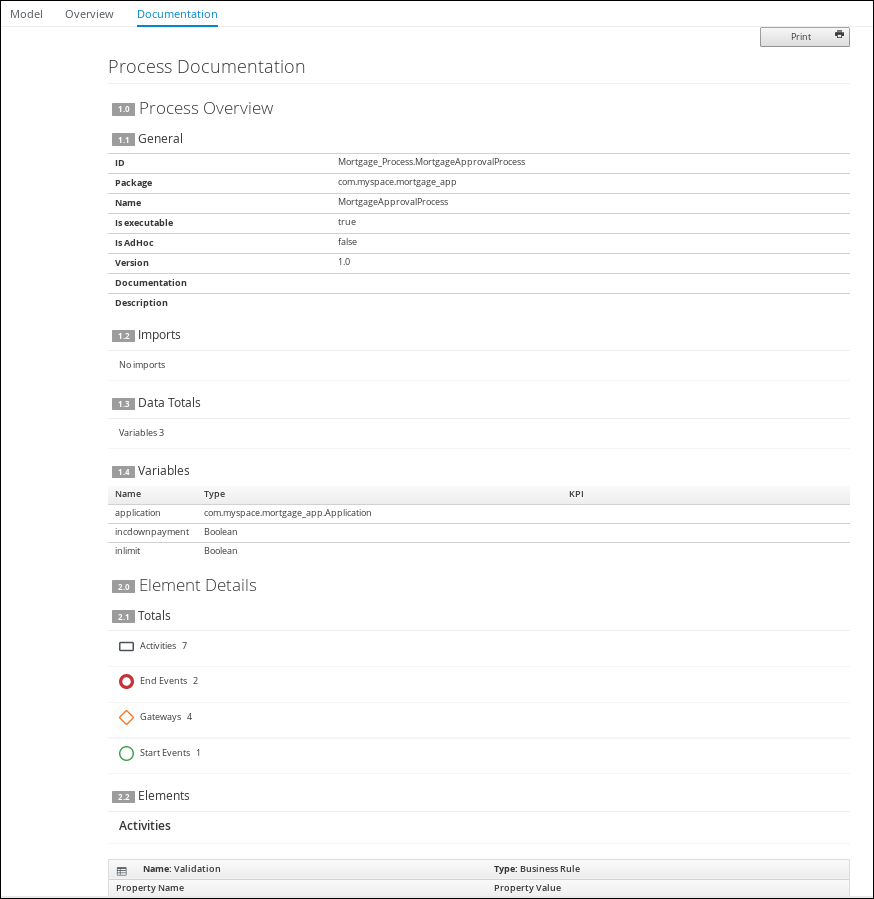

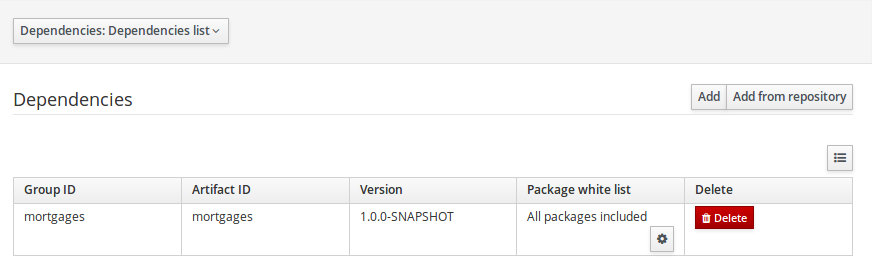

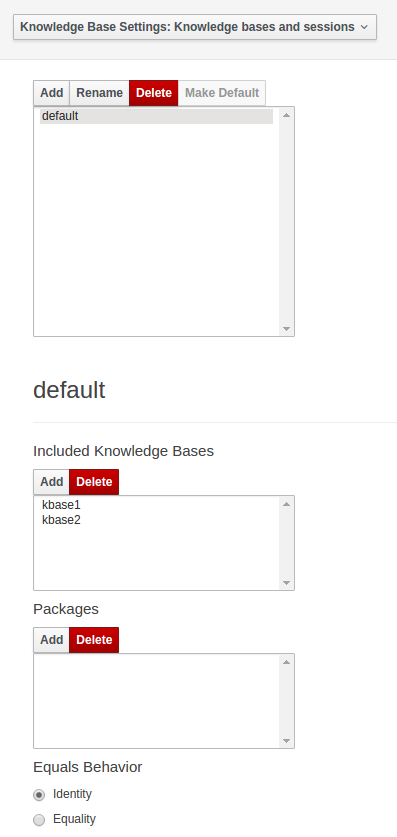

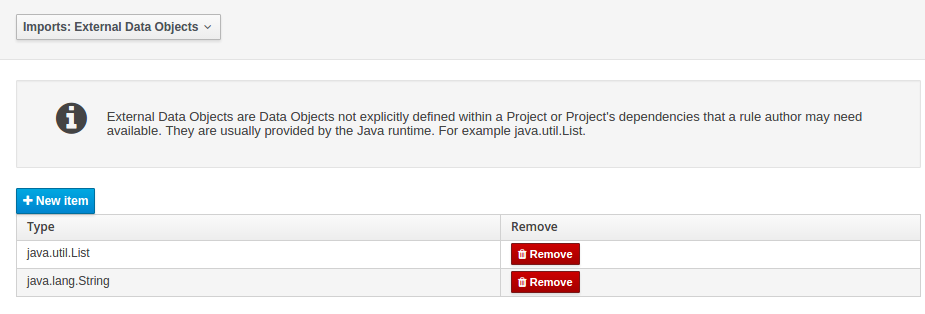

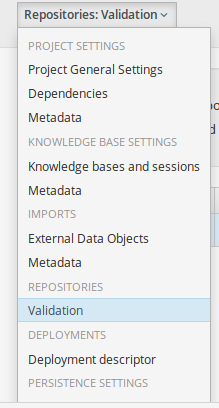

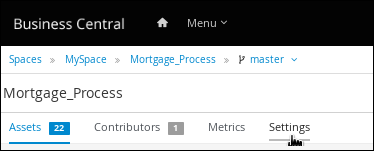

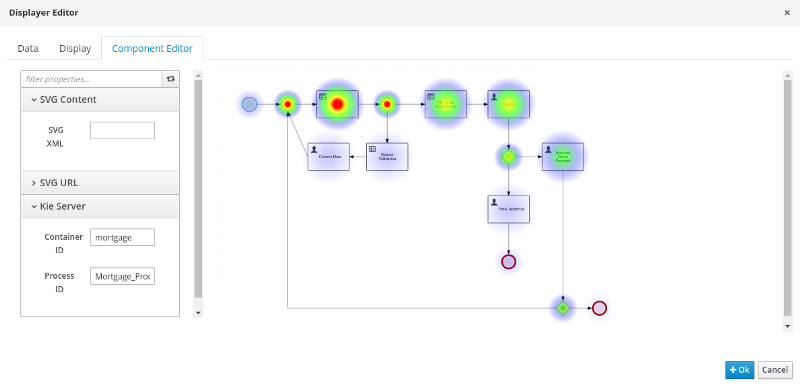

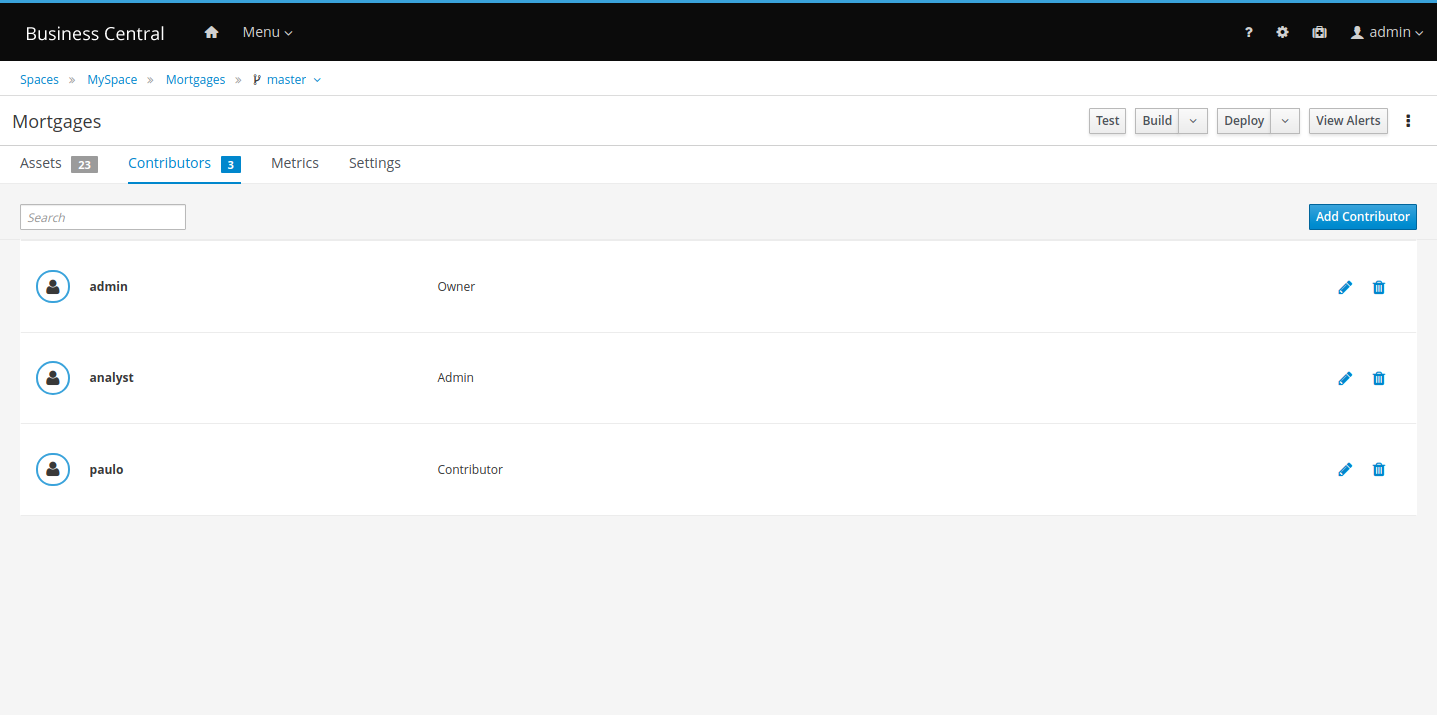

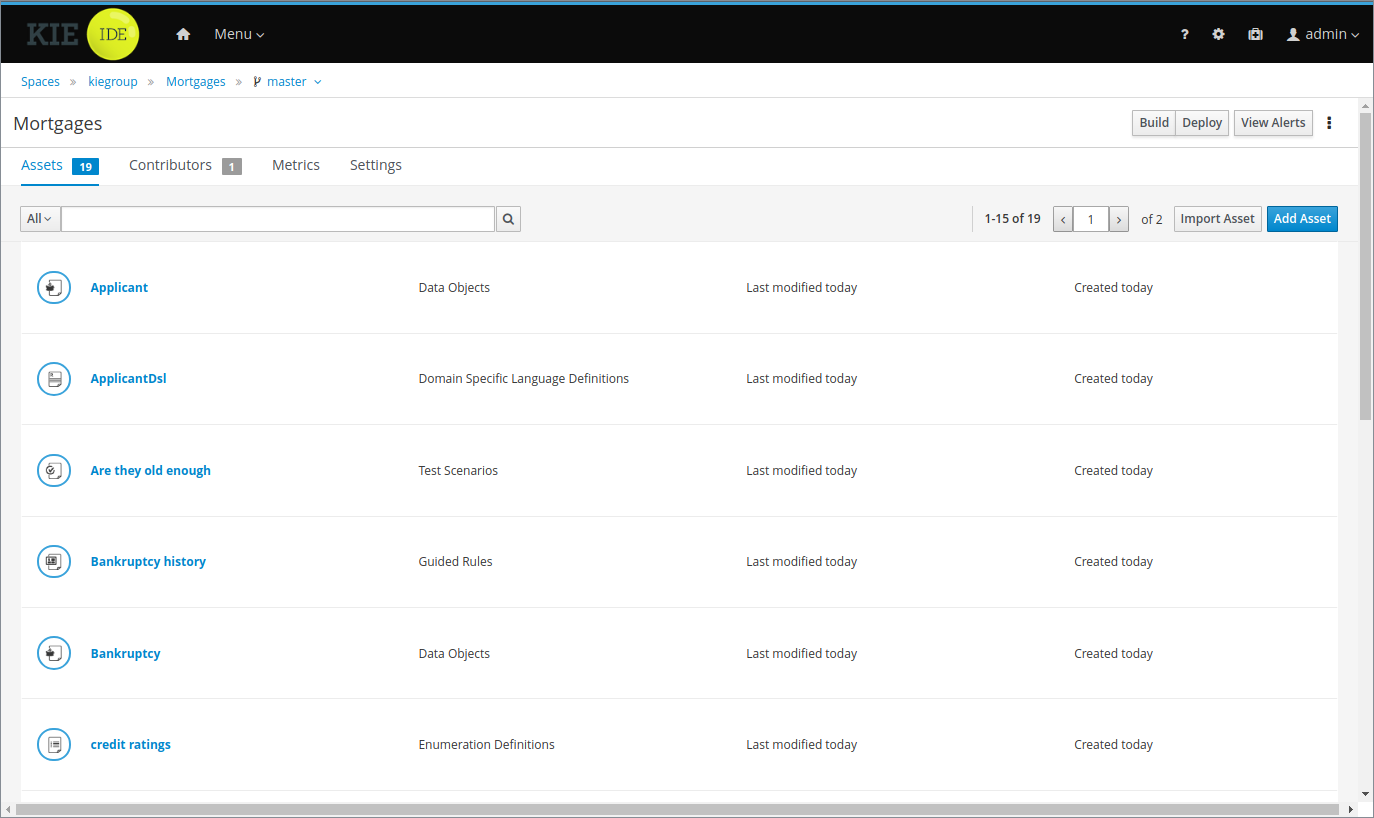

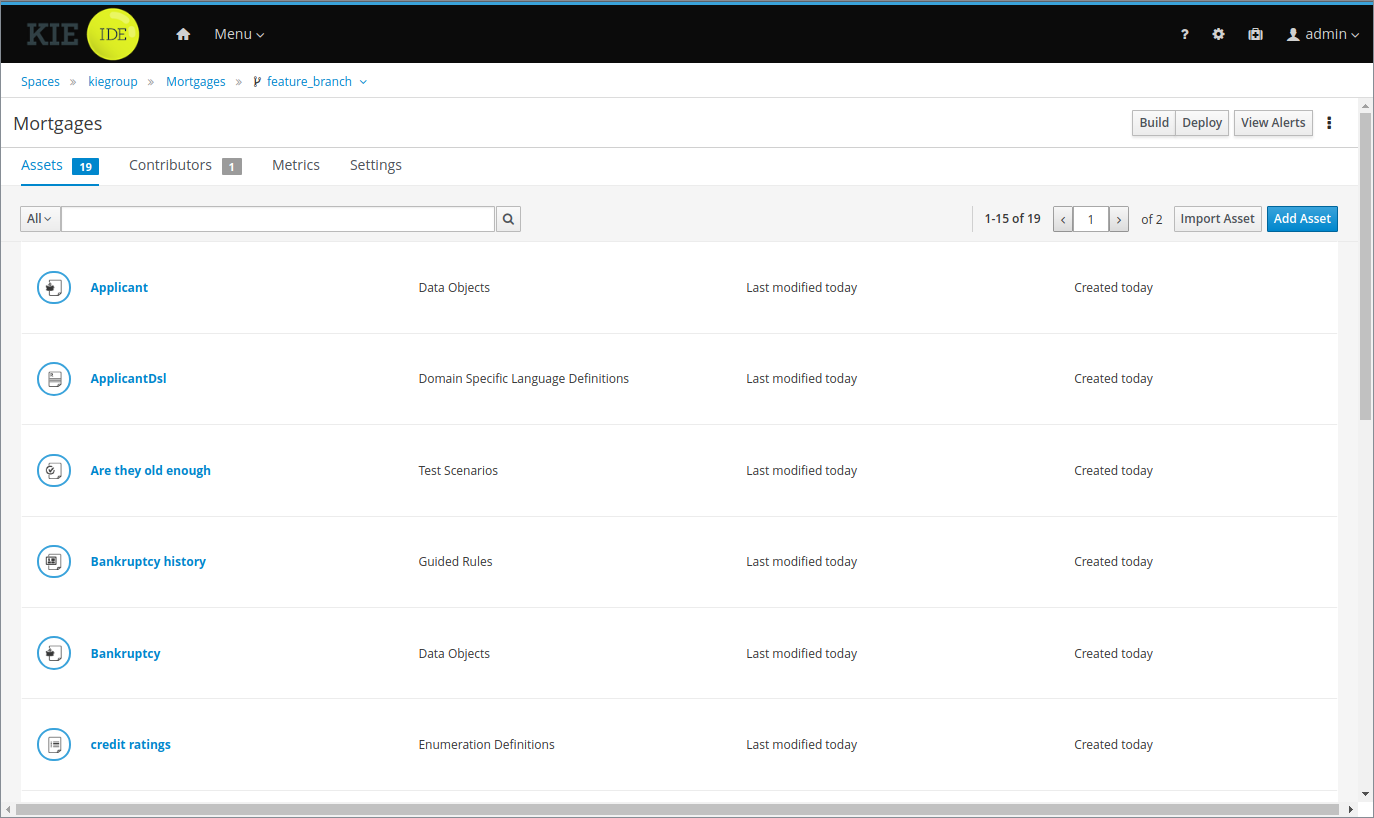

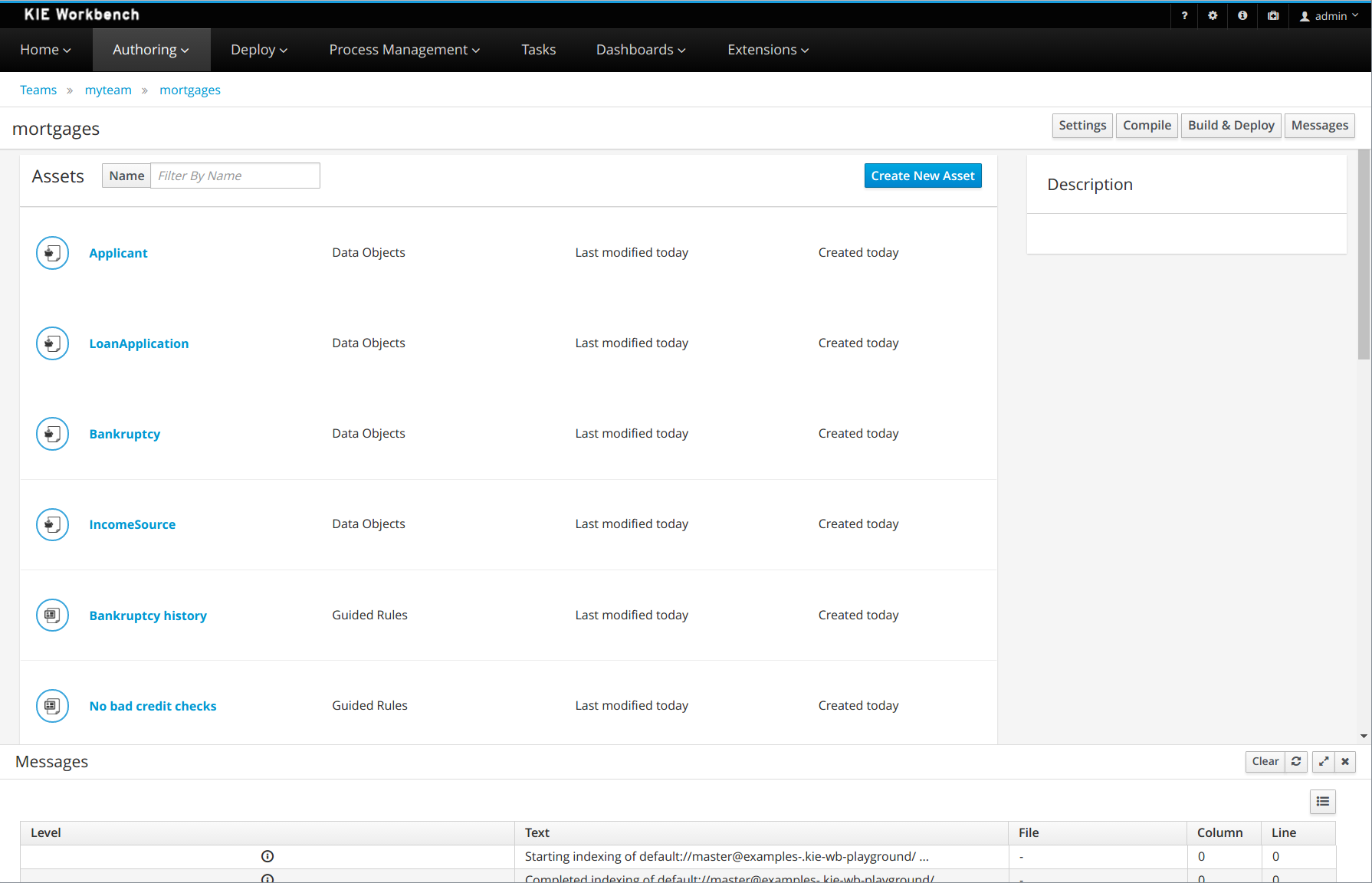

As a business rules and processes developer, you can use Business Central in jBPM to design business processes to meet specific business requirements. jBPM provides sample projects in Business Central that contain business assets for reference purposes. This document describes how to create a new mortgage process project, data objects, and business process to familiarize yourself with Business Central and the process designer.

You will then refer to the Mortgage_Process sample project included in Business Central to review the sample project’s business rules, decision tables, and forms. You will build and deploy the Mortgage_Process sample project and execute the project’s defined functionality.

-

Red Hat JBoss Enterprise Application Platform 7.4 is installed. For details, see the Red Hat JBoss Enterprise Application Platform 7.4 Installation Guide.

-

jBPM is installed and configured with KIE Server. For more information, see Installing and configuring jBPM on Red Hat JBoss EAP 7.4.

-

jBPM is running and you can log in to Business Central with the

developerrole. For more information, see Planning a jBPM installation.

3.1. Overview

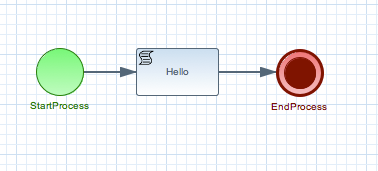

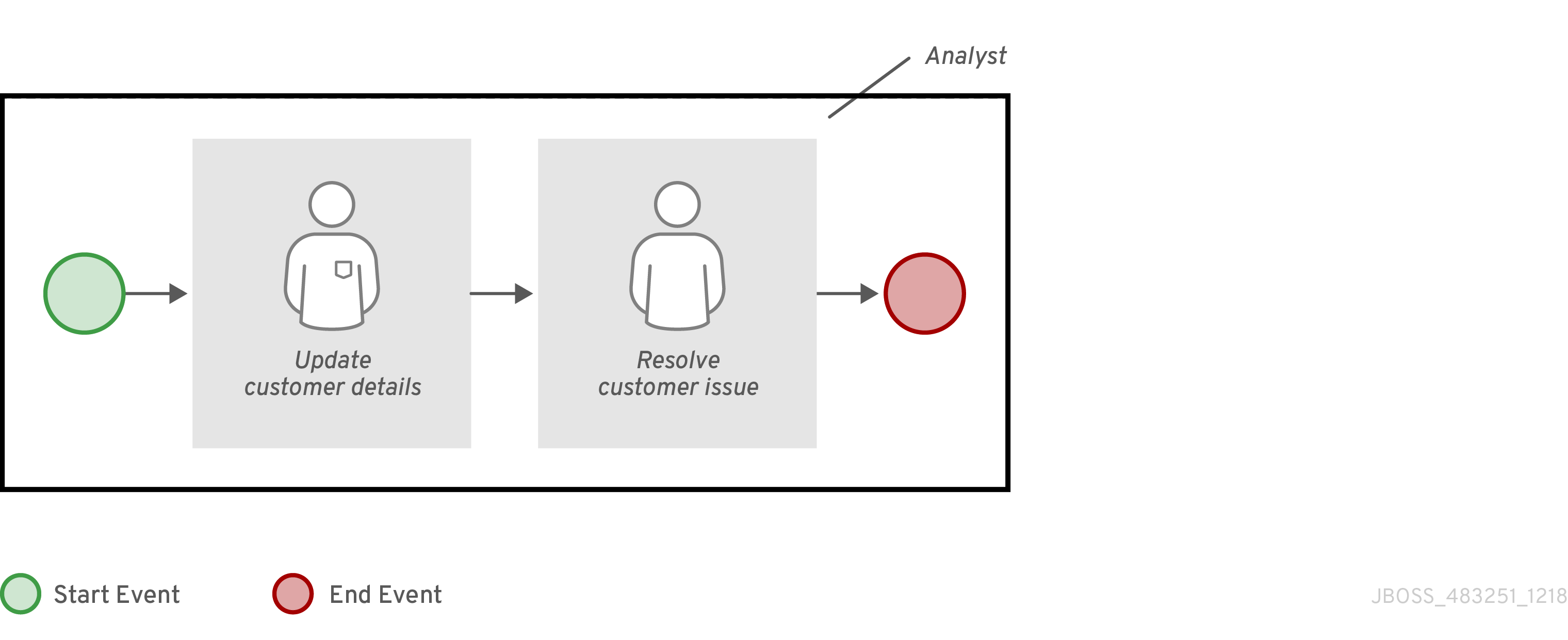

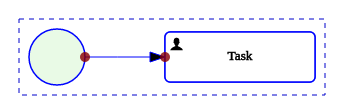

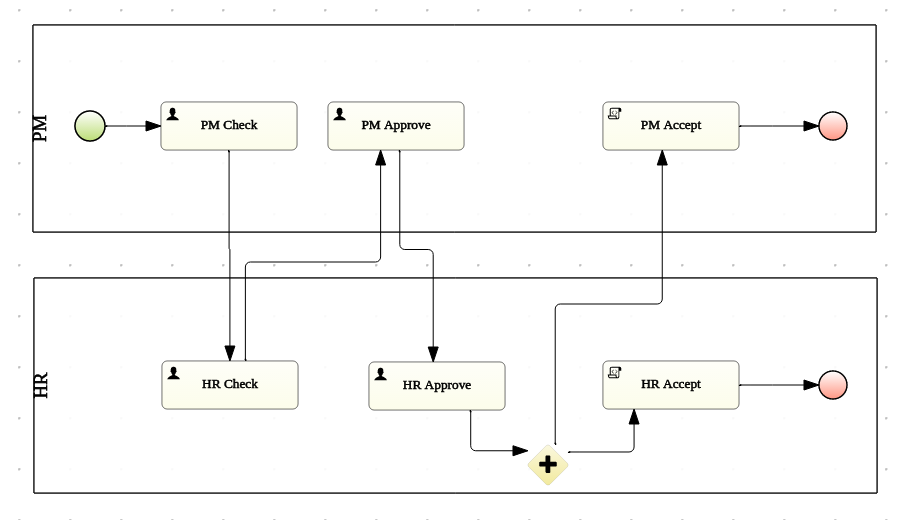

Business Central enables you to automate your business processes. A business process is a diagram that describes the order in which a series of steps must be executed and consists of predefined nodes and connections. Each node represents one step in the process while the connections specify how to transition from one node to another.

For example, a bank offers a housing mortgage loan service. Using Business Central, the housing mortgage department of the bank creates a complete business process for the mortgage loan.

When a customer wants to buy a new property using credit, the following steps occur:

-

The customer contacts a broker at the bank who assists in filing for a mortgage loan.

-

The broker collects information about the property and the customer, such as the salary of the customer, social security number, the property sale price, and the requested loan amount.

-

The broker then submits a request on behalf of the customer.

Whenever a customer submits a request, a new process instance is created. This ensures consistency in the quality of evaluating each request, provides complete visibility into the status of each request, and makes the process efficient and effective.

3.2. Creating the mortgage-process project

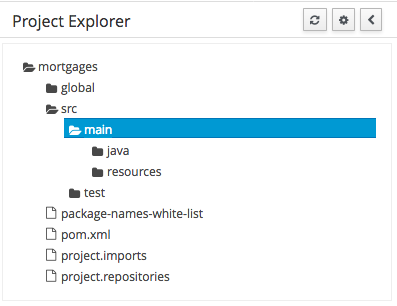

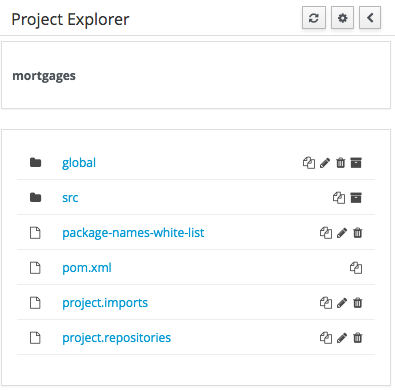

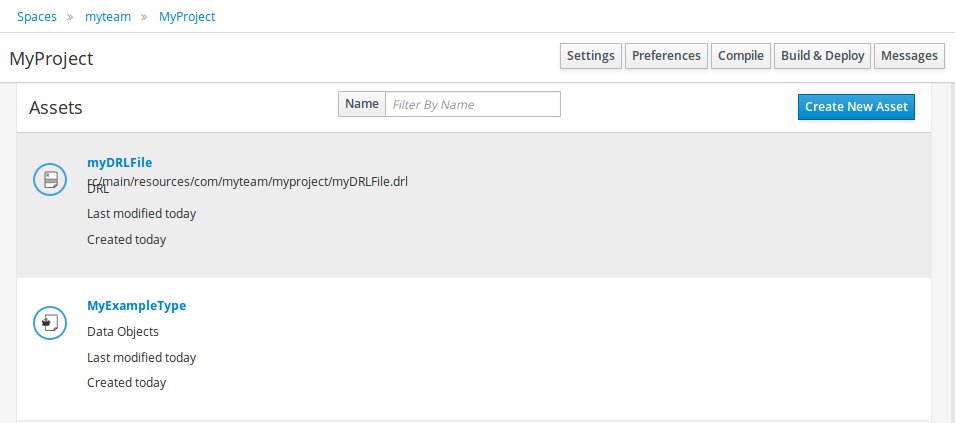

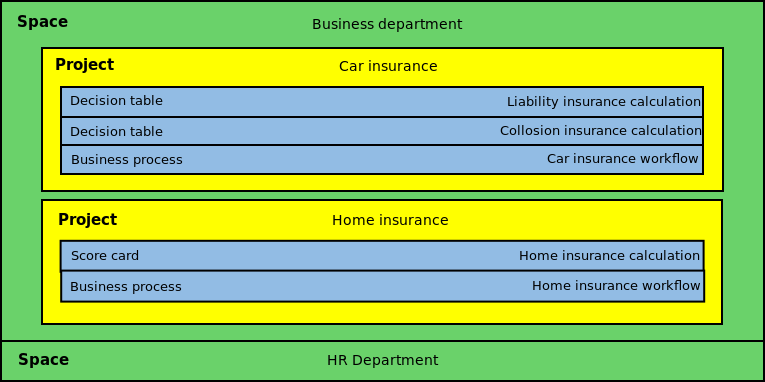

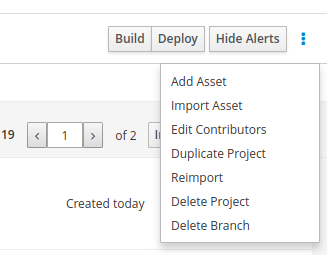

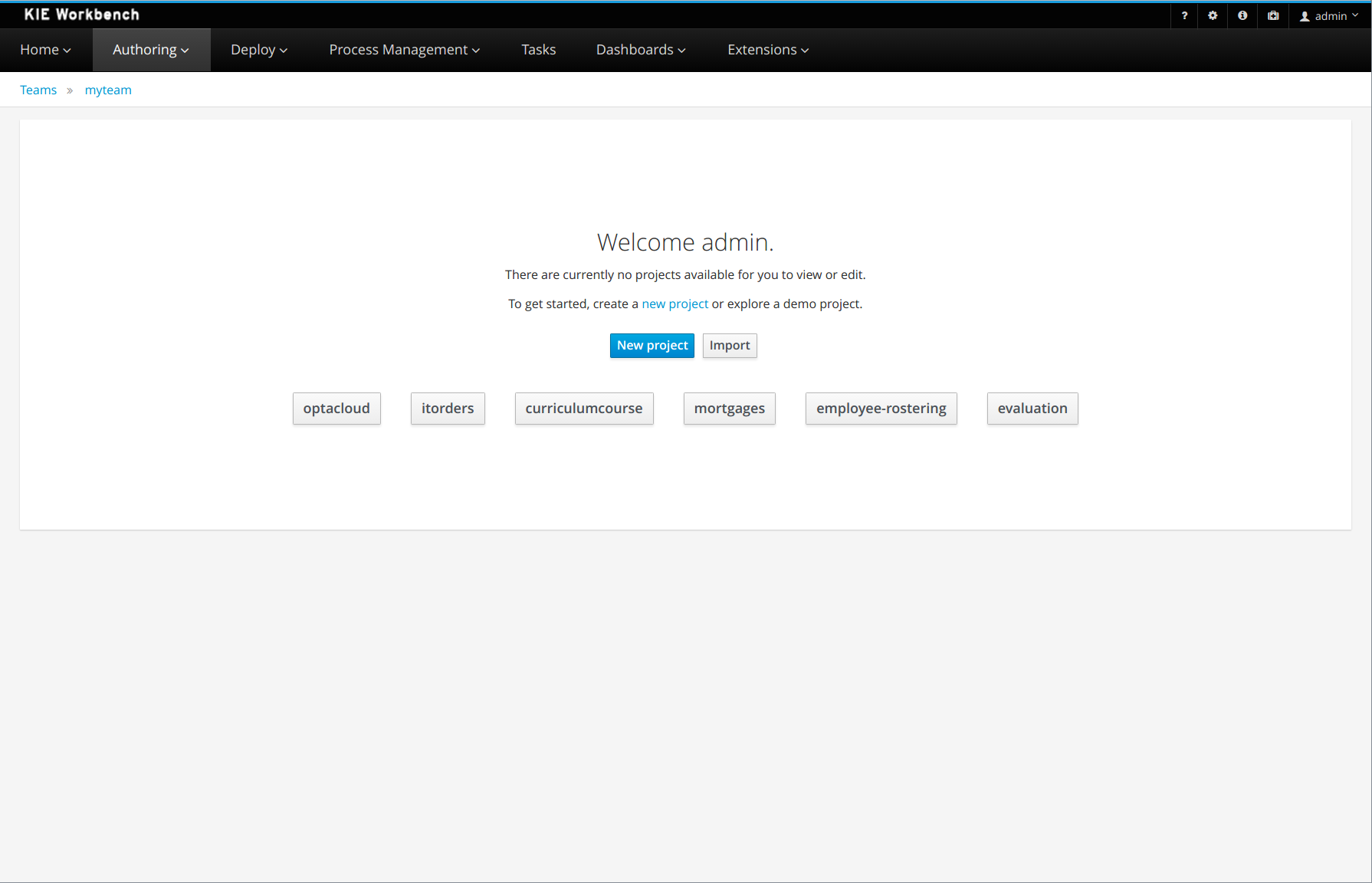

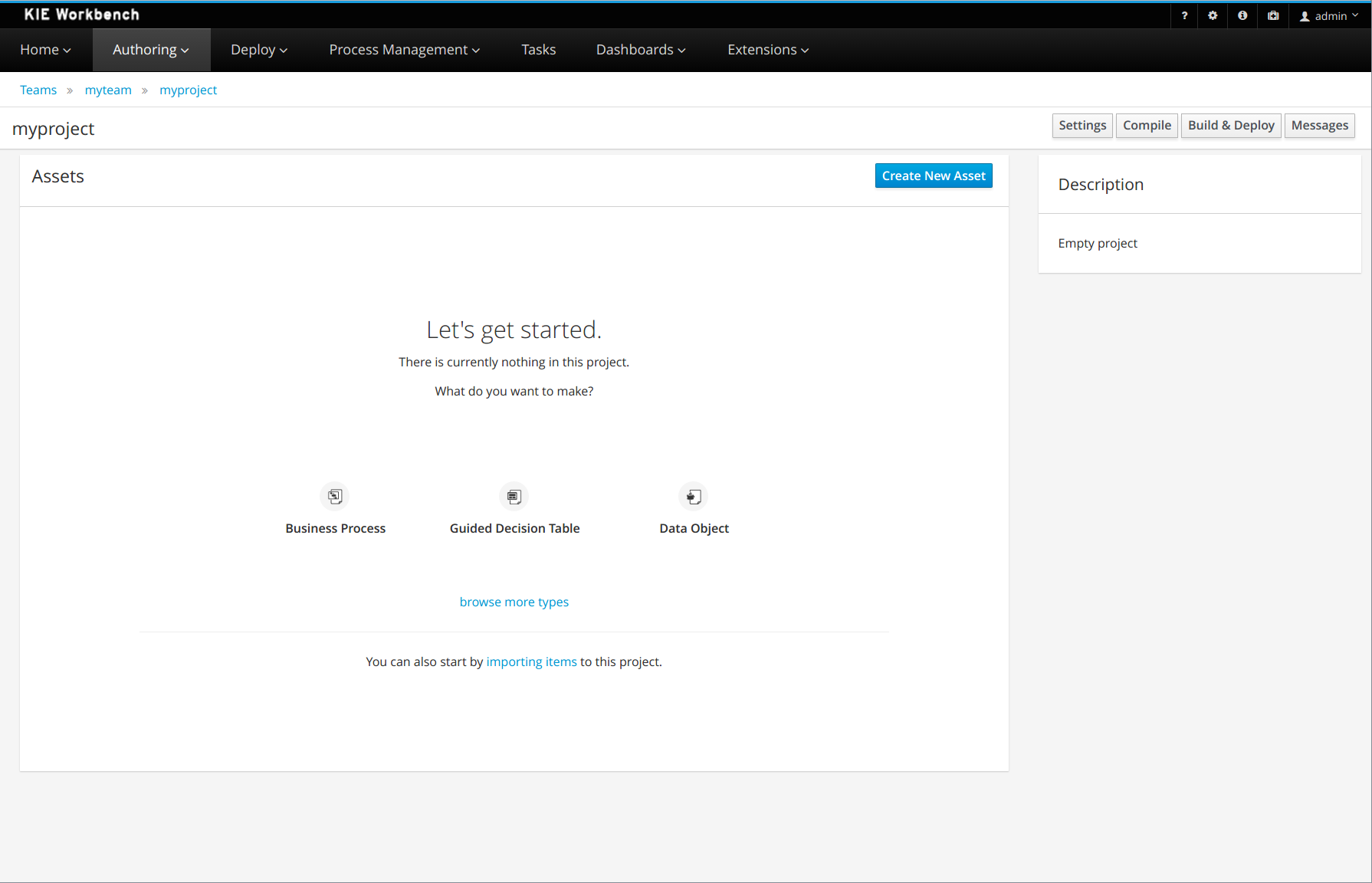

A project is a container for assets such as data objects, business processes, guided rules, decision tables, and forms. The project that you are creating is similar to the existing Mortgage_Process sample project in Business Central.

-

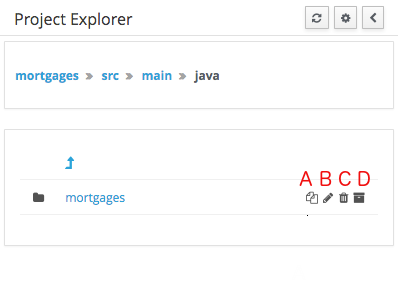

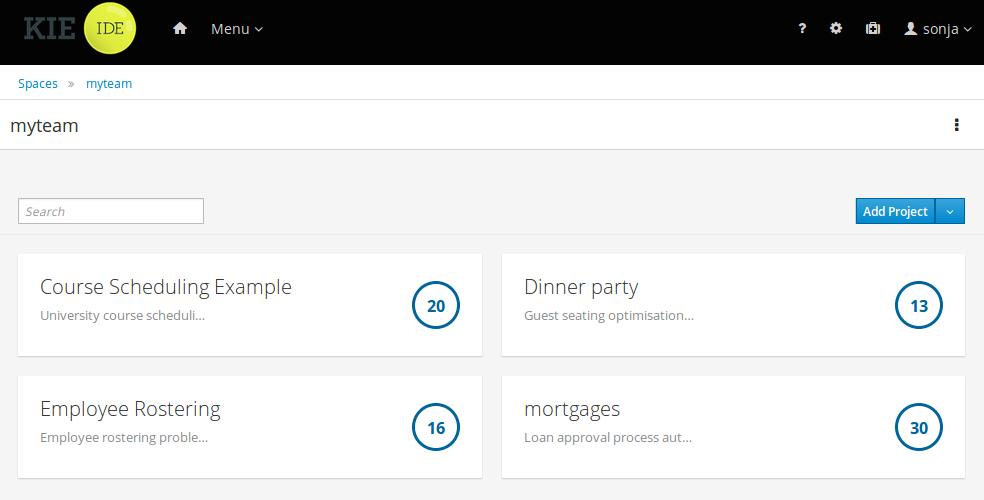

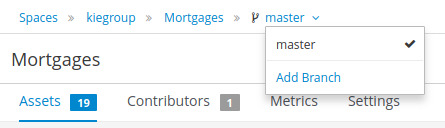

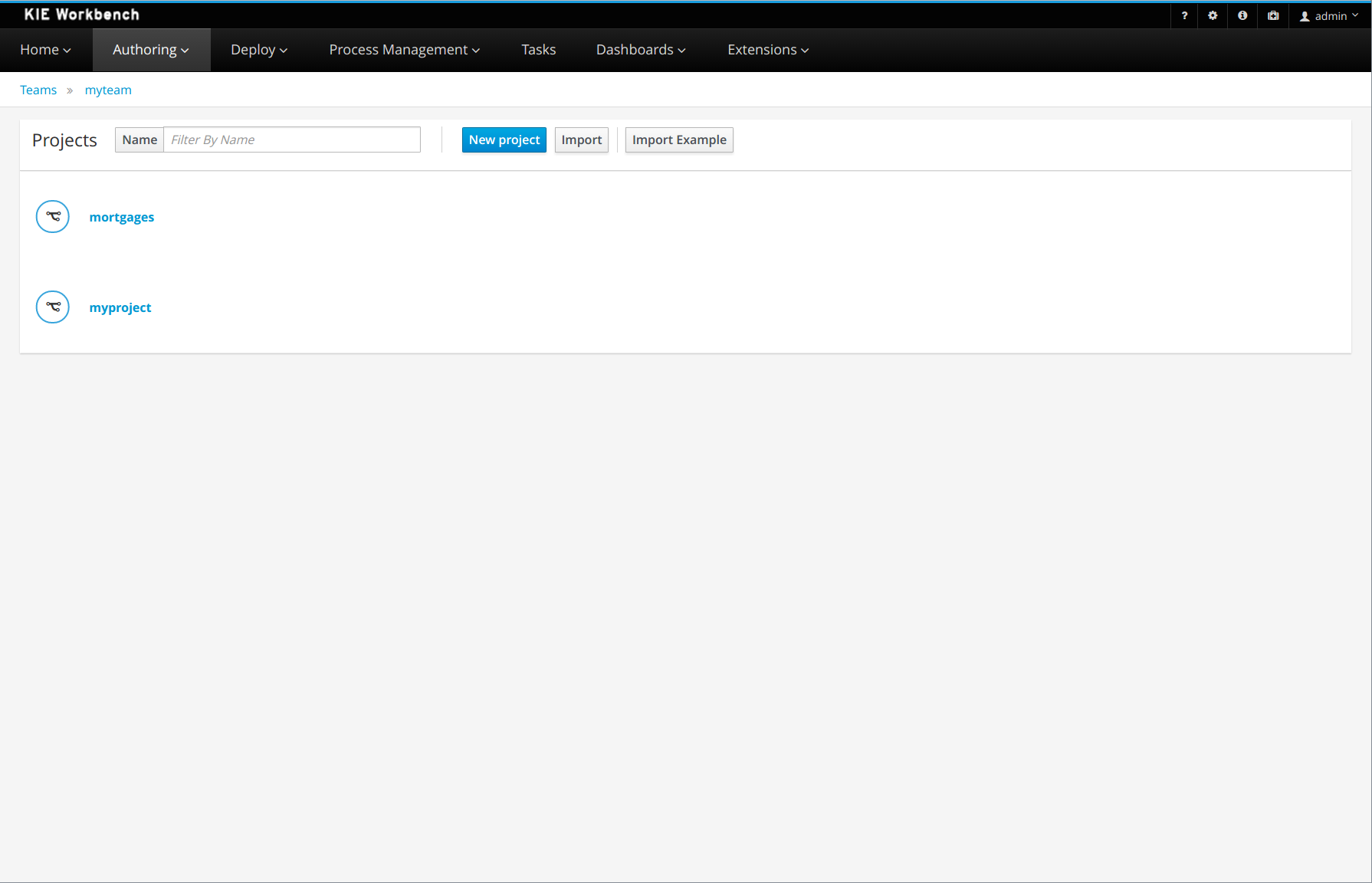

In Business Central, go to Menu → Design → Projects.

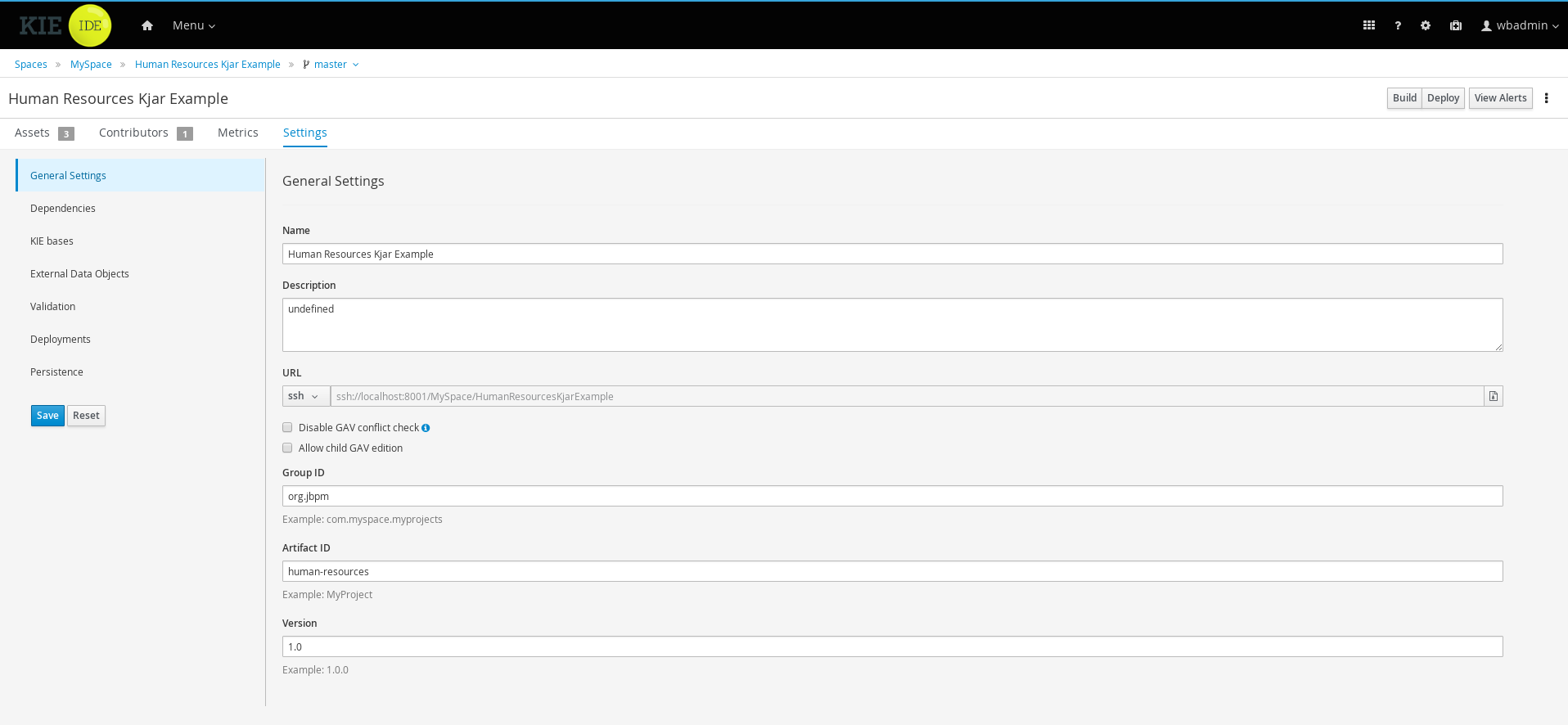

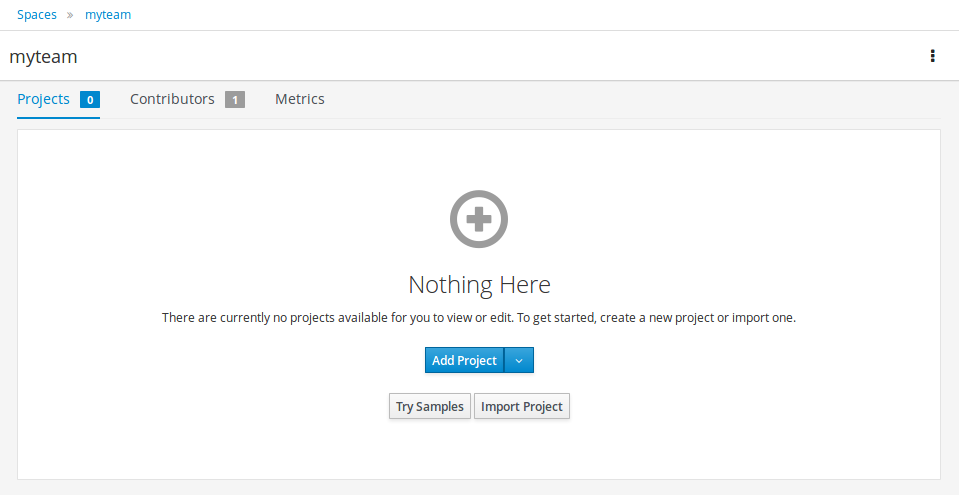

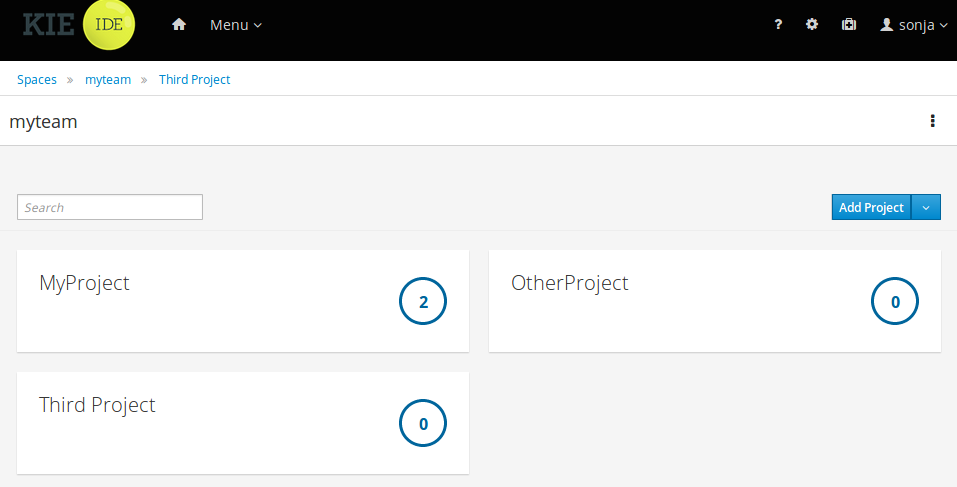

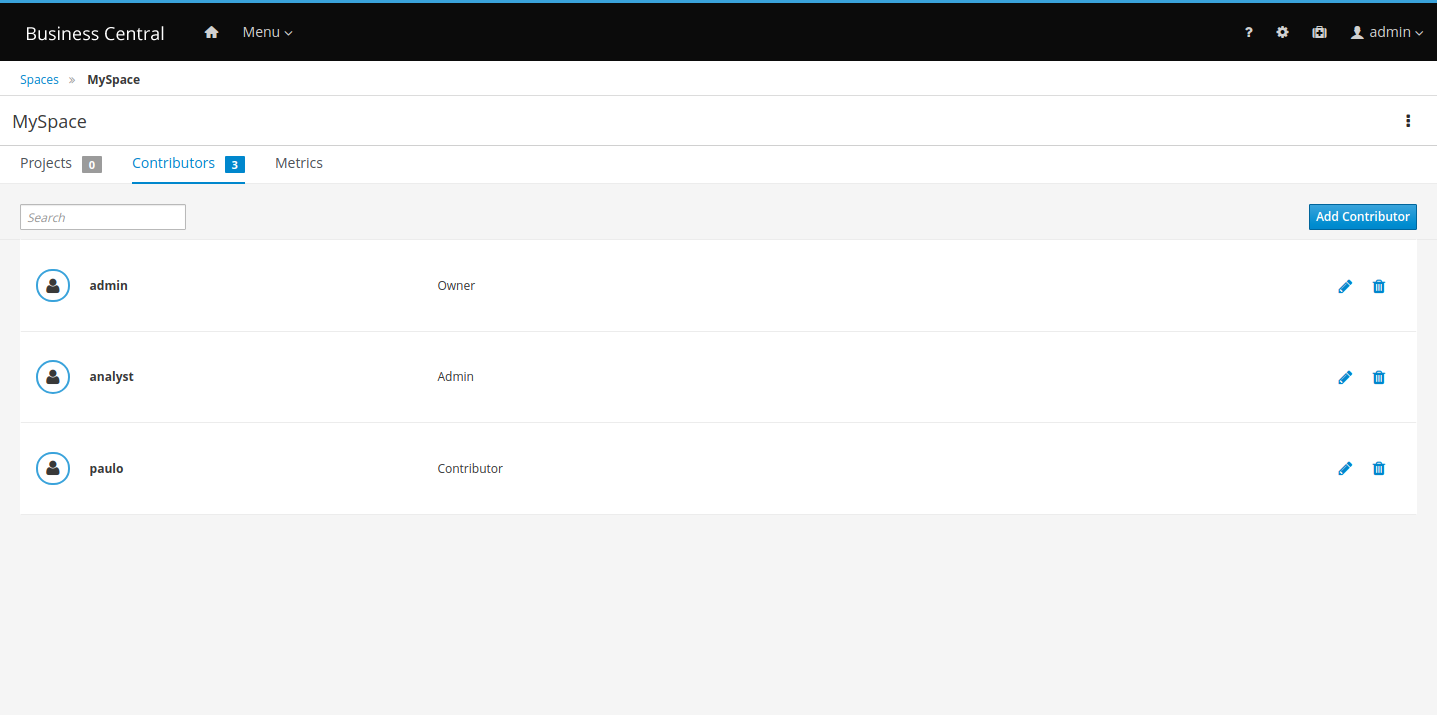

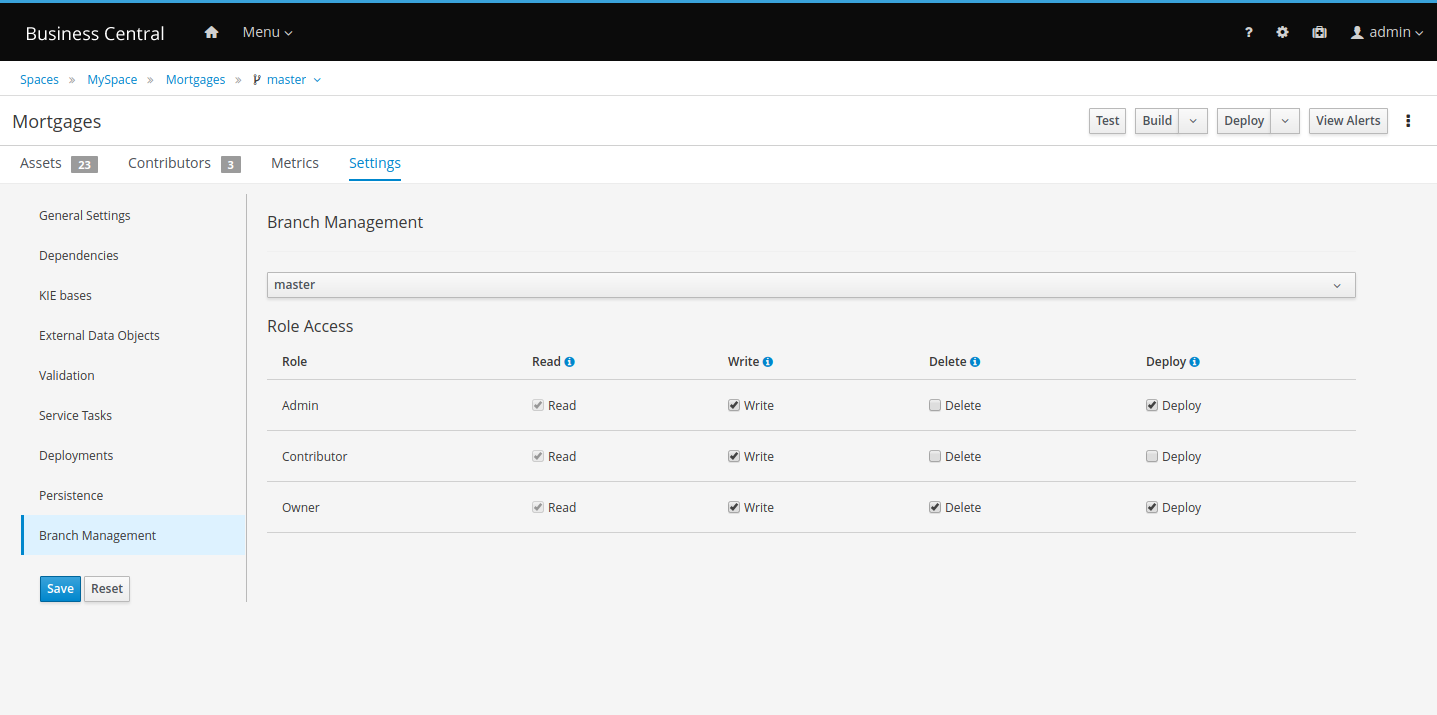

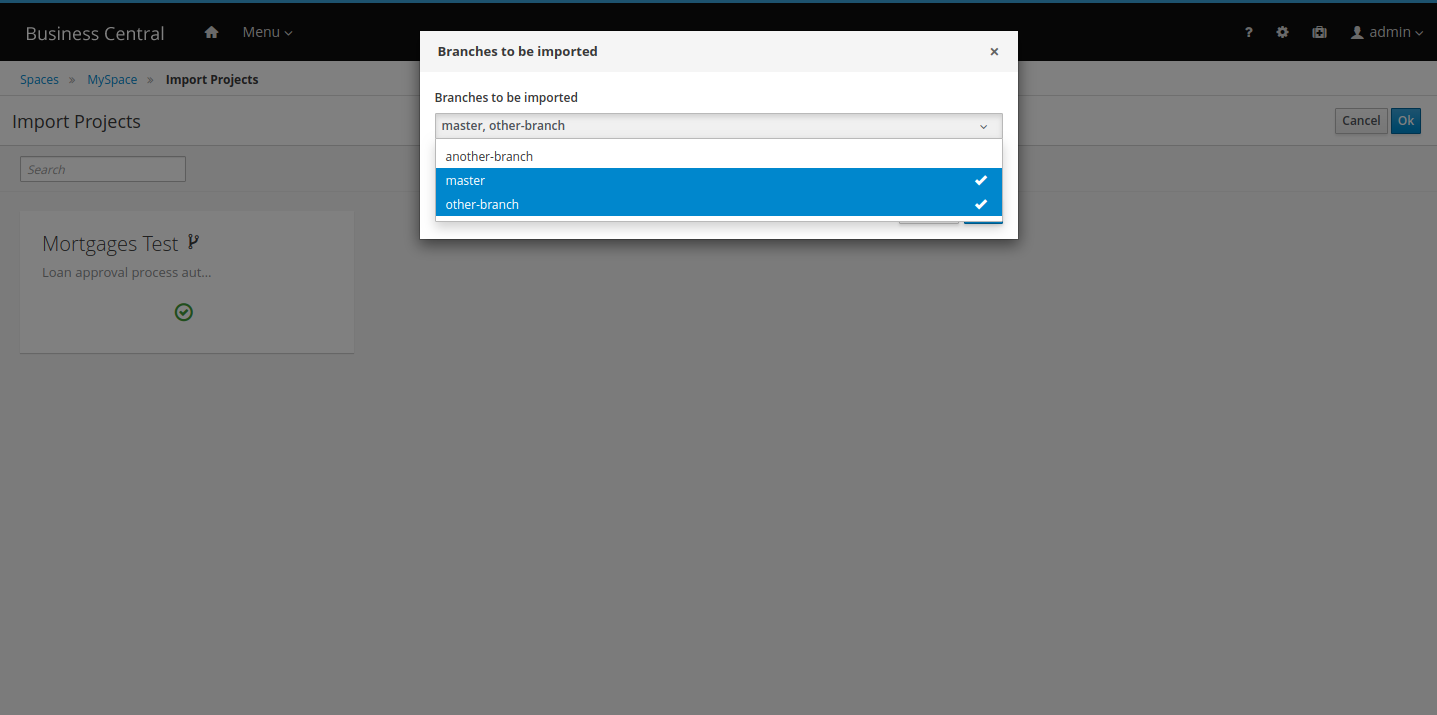

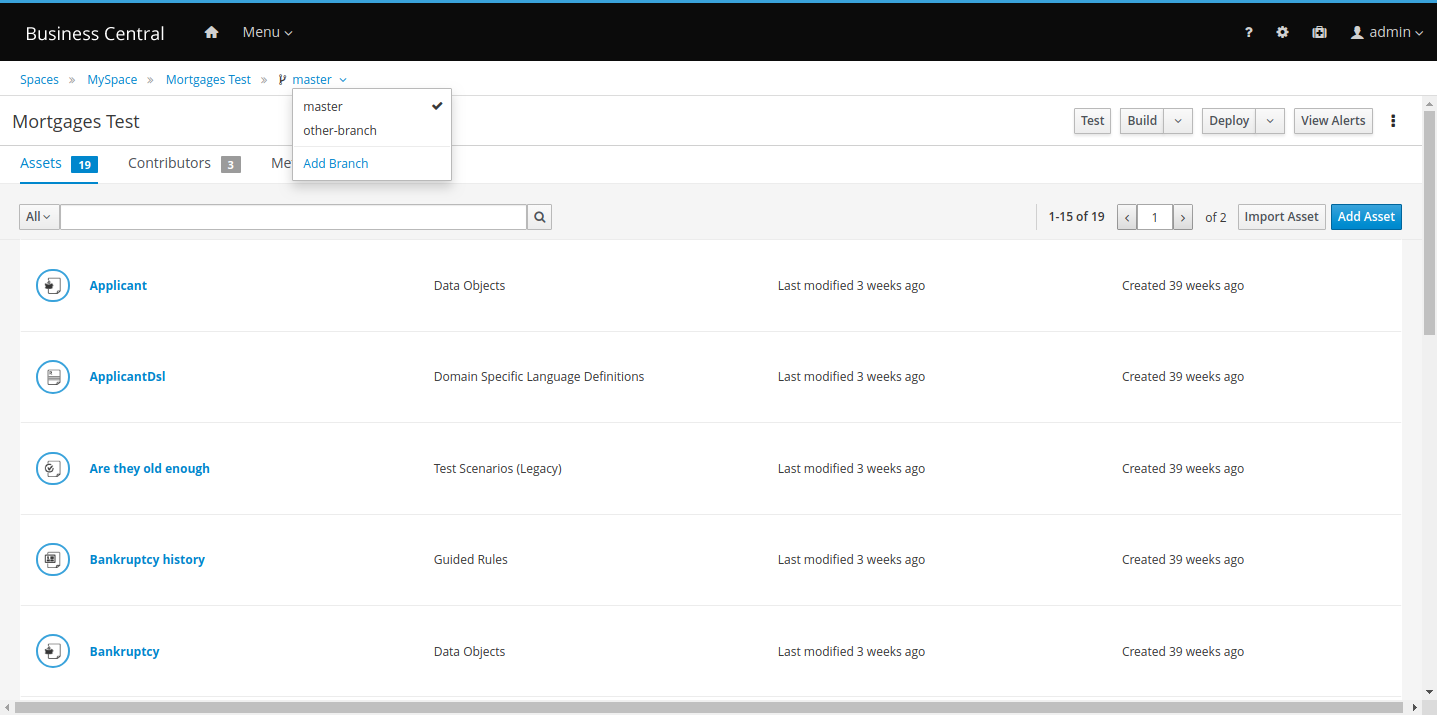

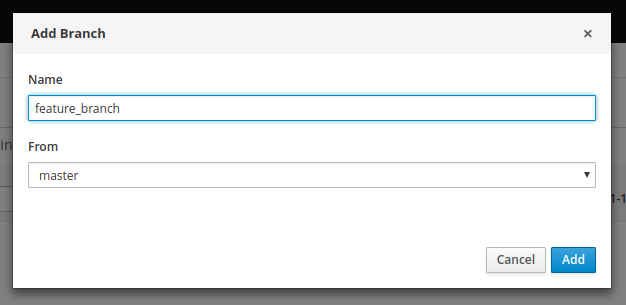

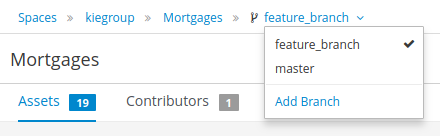

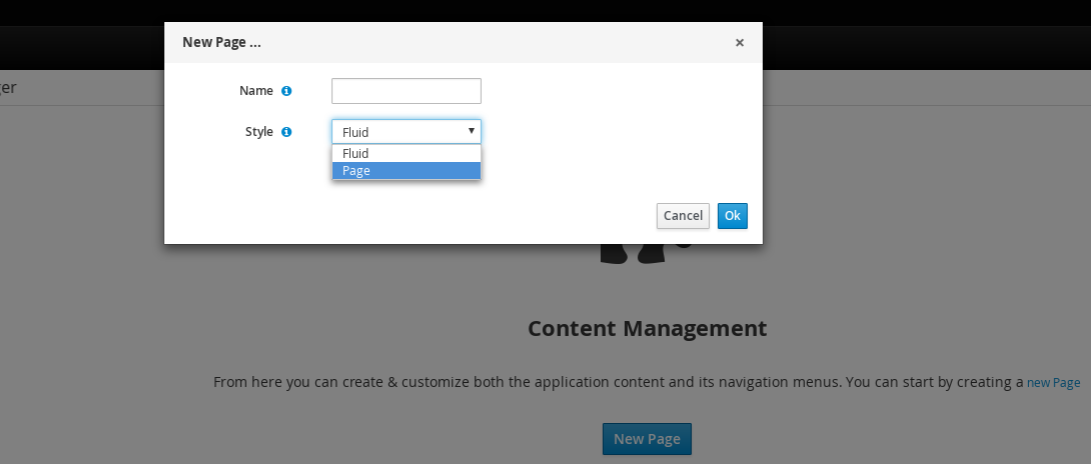

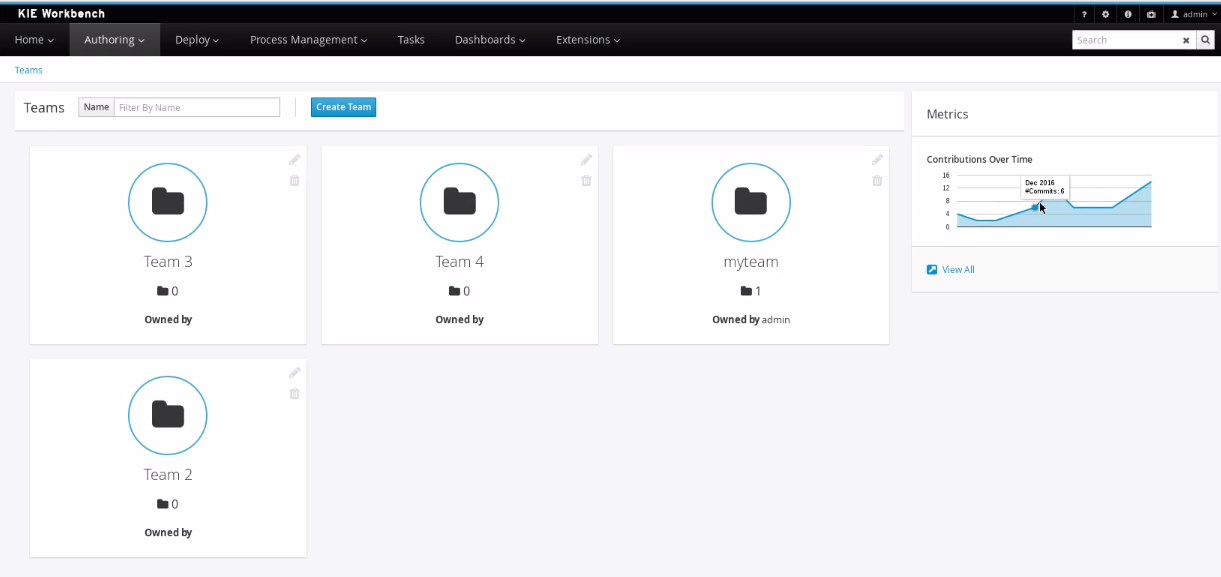

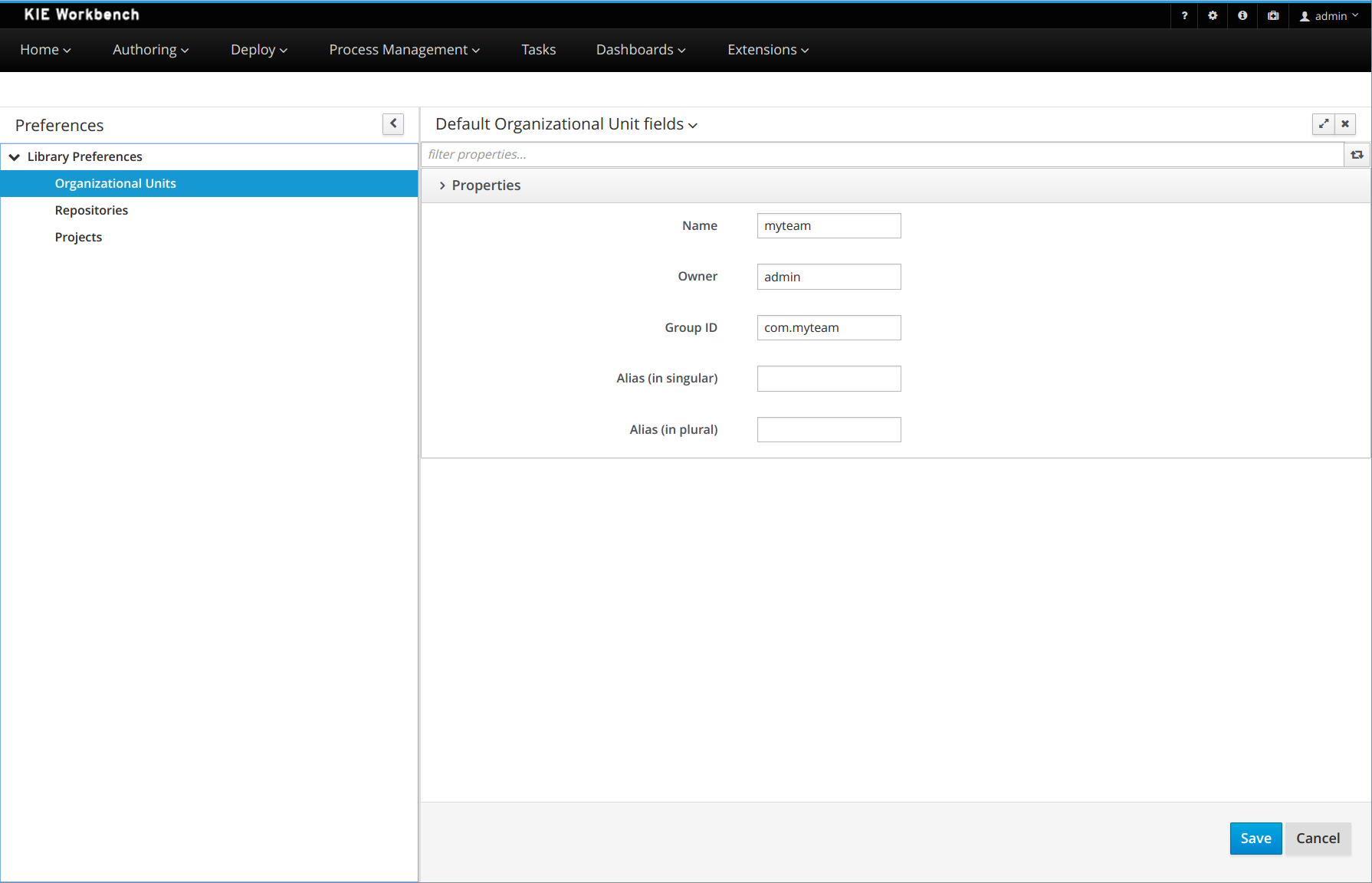

jBPM provides a default space called MySpace, as shown in the following image. You can use the default space to create and test example projects.

Figure 7. Default space

Figure 7. Default space -

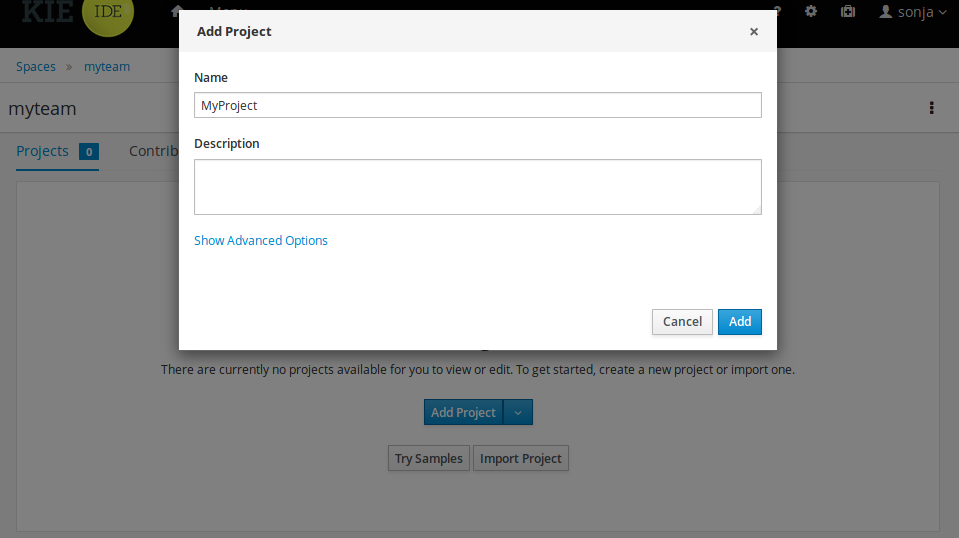

Click Add Project.

-

Enter

mortgage-processin the Name field. -

Click Configure Advanced Options and modify the GAV fields with the following values:

-

Group ID:

com.myspace -

Artifact ID:

mortgage-process -

Version:

1.0.0

-

-

Click Add.

The Assets view of the project opens.

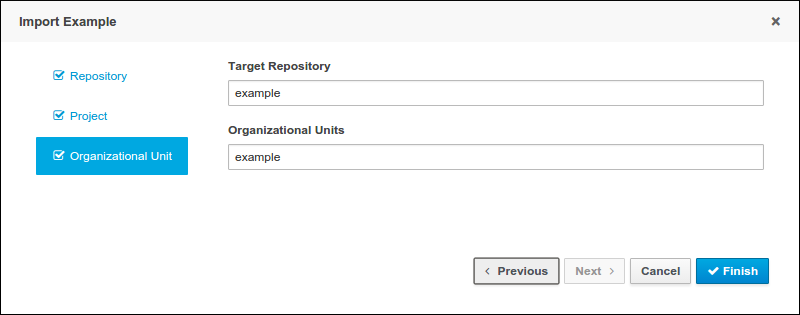

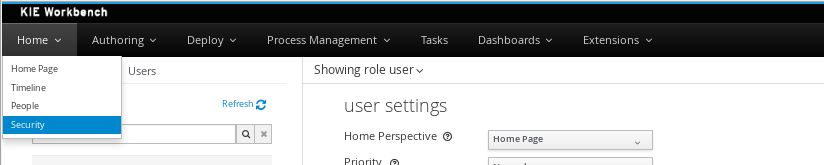

3.3. Creating users

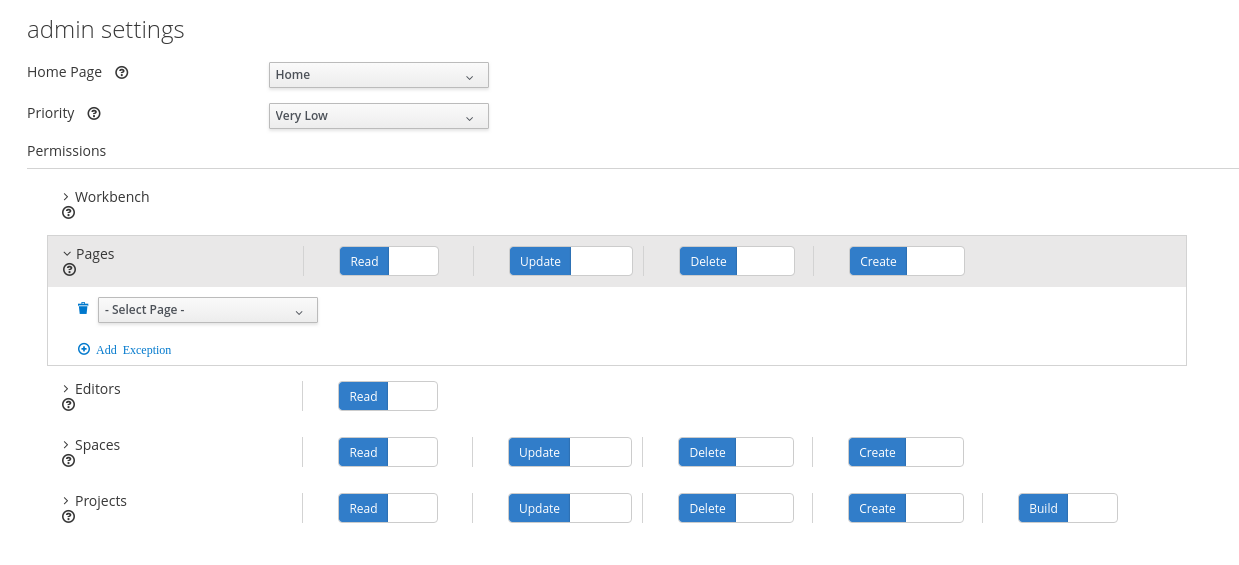

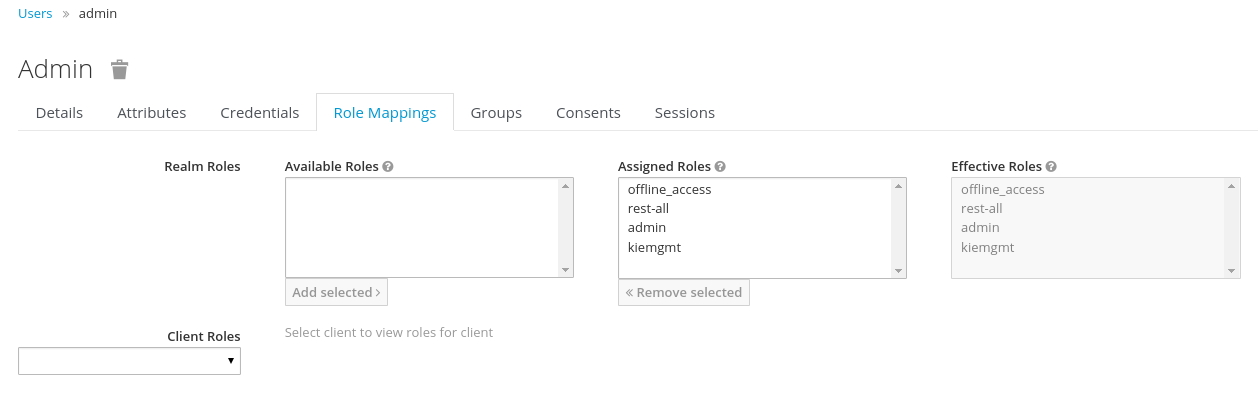

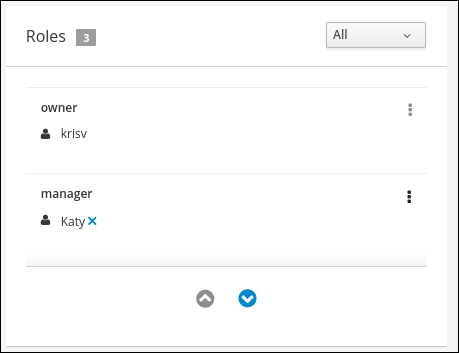

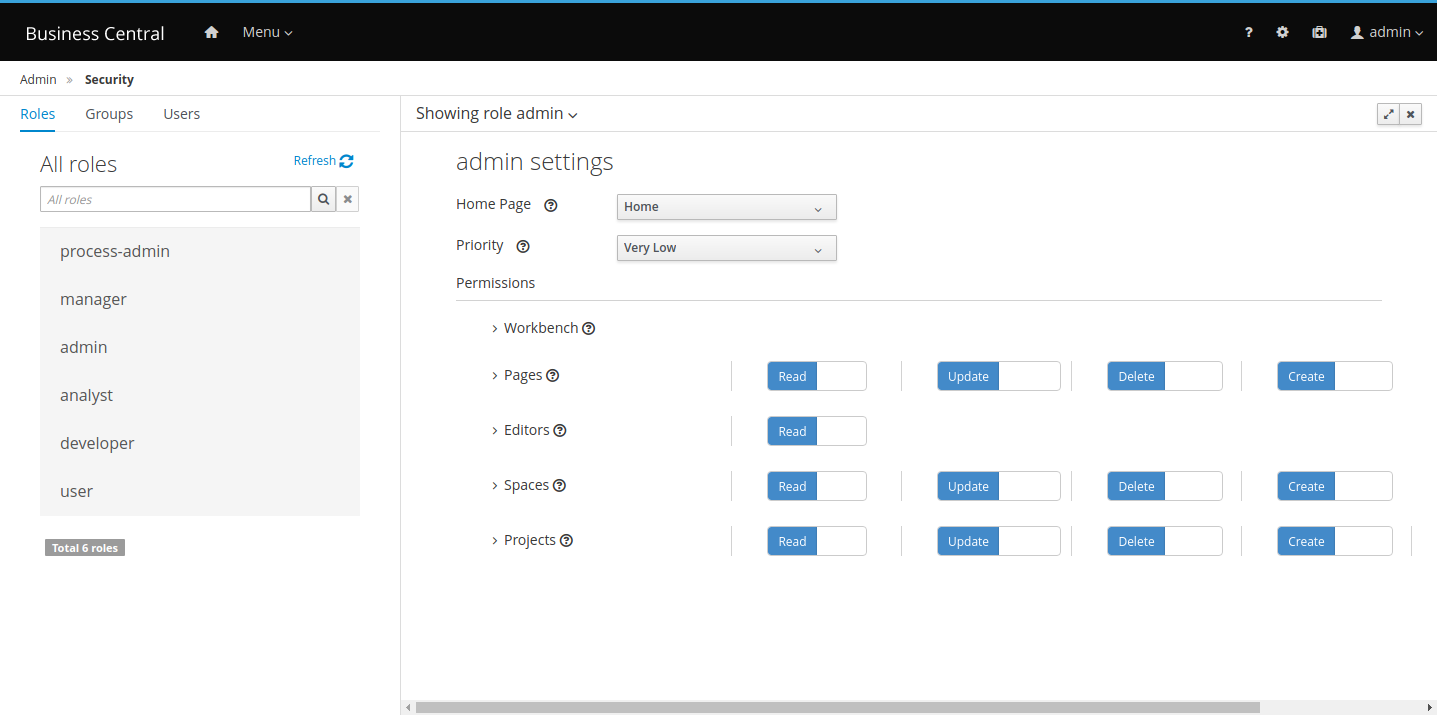

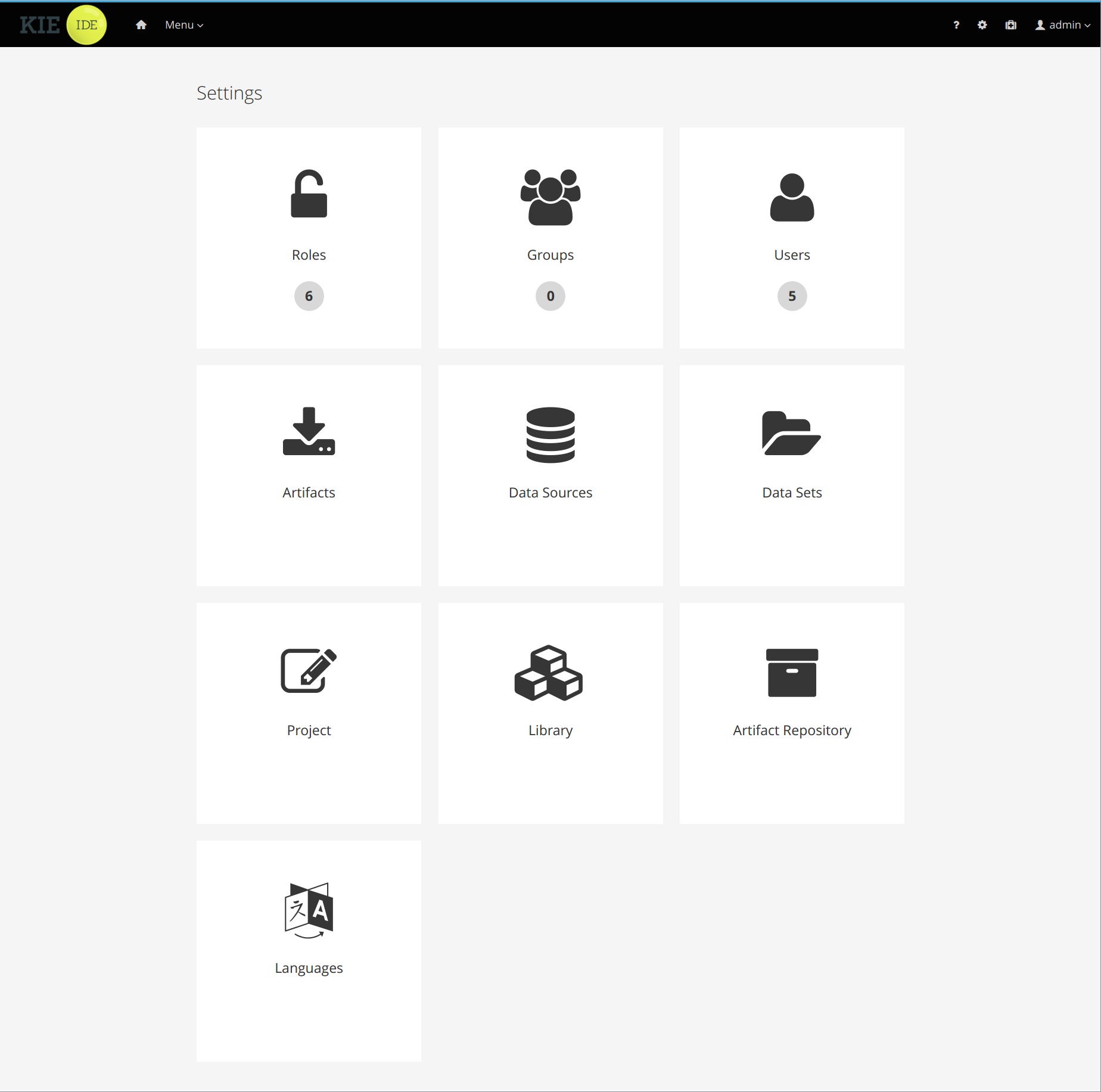

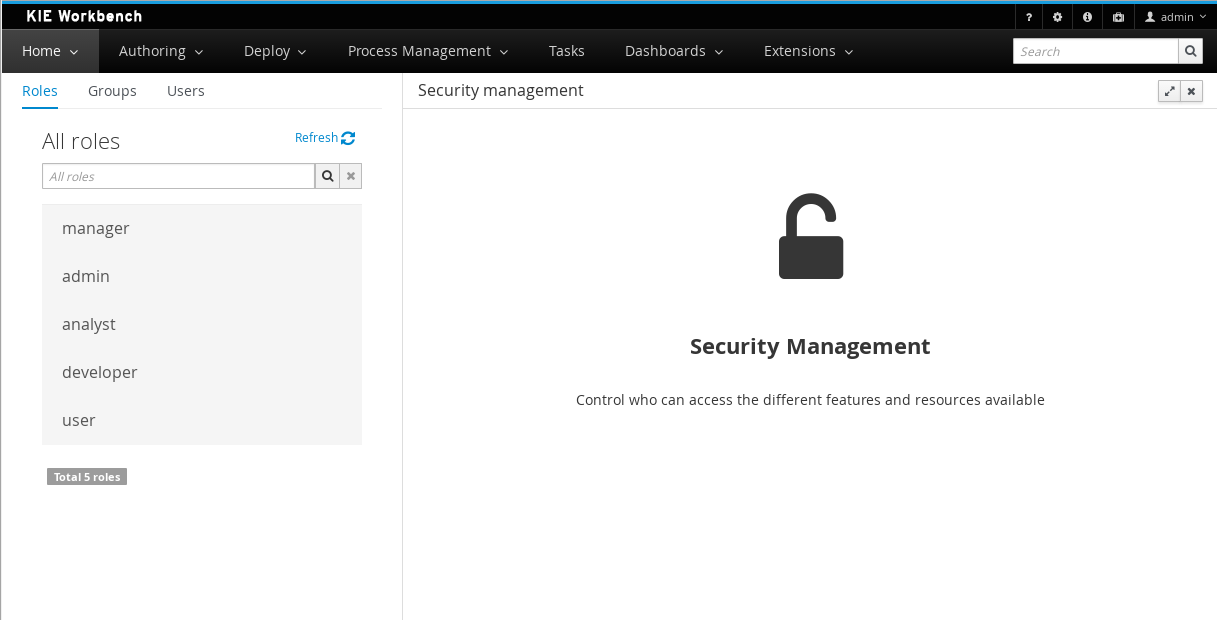

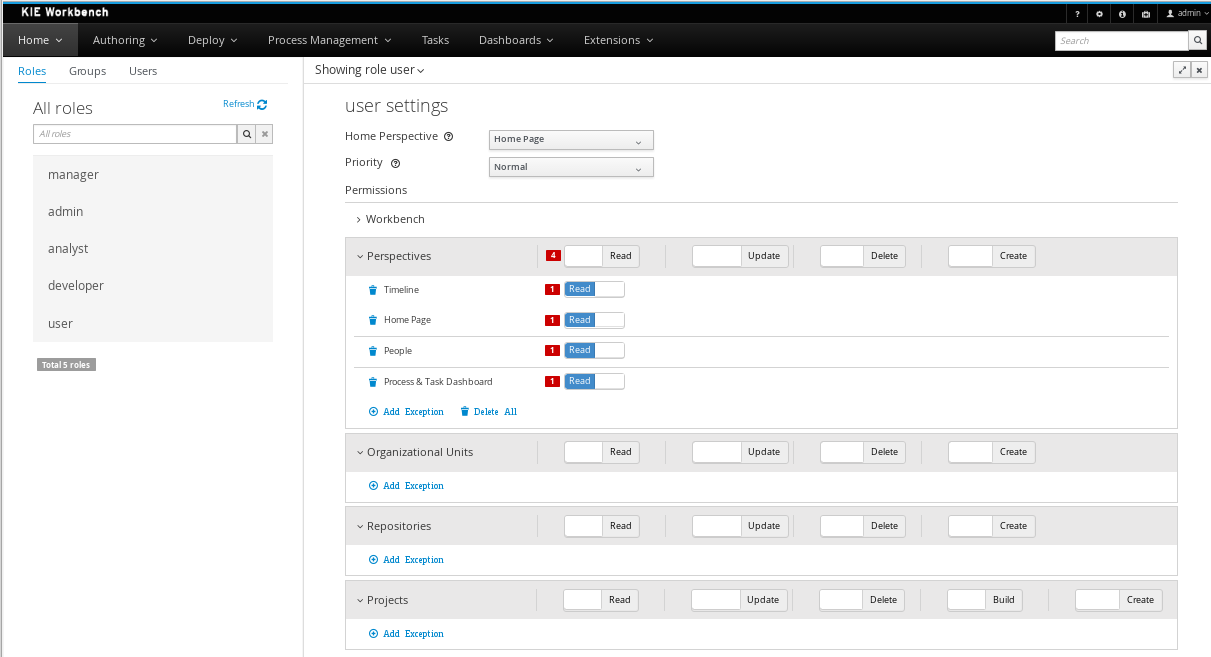

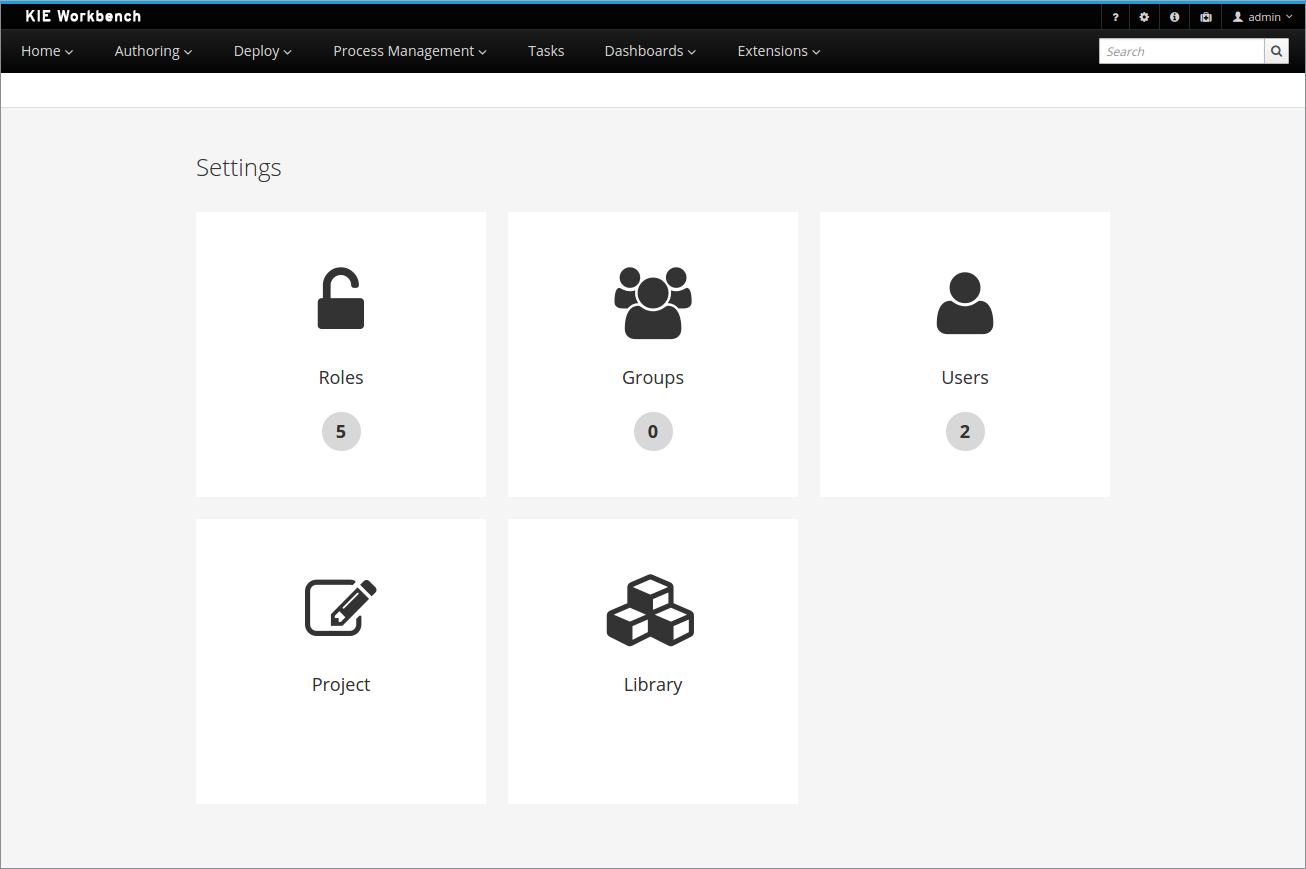

You can create as many Business Central users as you require. User privileges and settings are controlled by the roles assigned to a user and the groups that a user belongs to. For this example, you must create two new users: Katy who will act as the bank’s loan manager and approver, and Bill who will act as the broker requesting the loan. For more information on creating users, see the Creating users chapter of Installing and configuring jBPM on Red Hat JBoss EAP 7.4.

In Business Central, you can use groups and roles to control permissions for a collection of users. You can create as many groups and roles as you want but a group must have at least one user.

-

For this example, the user or users working on the tasks must be assigned to one or more of the following groups and roles:

-

approver group: For the Qualify task

-

broker group: For the Correct Data and Increase Down Payment tasks

-

manager role: For the Final Approval task

-

-

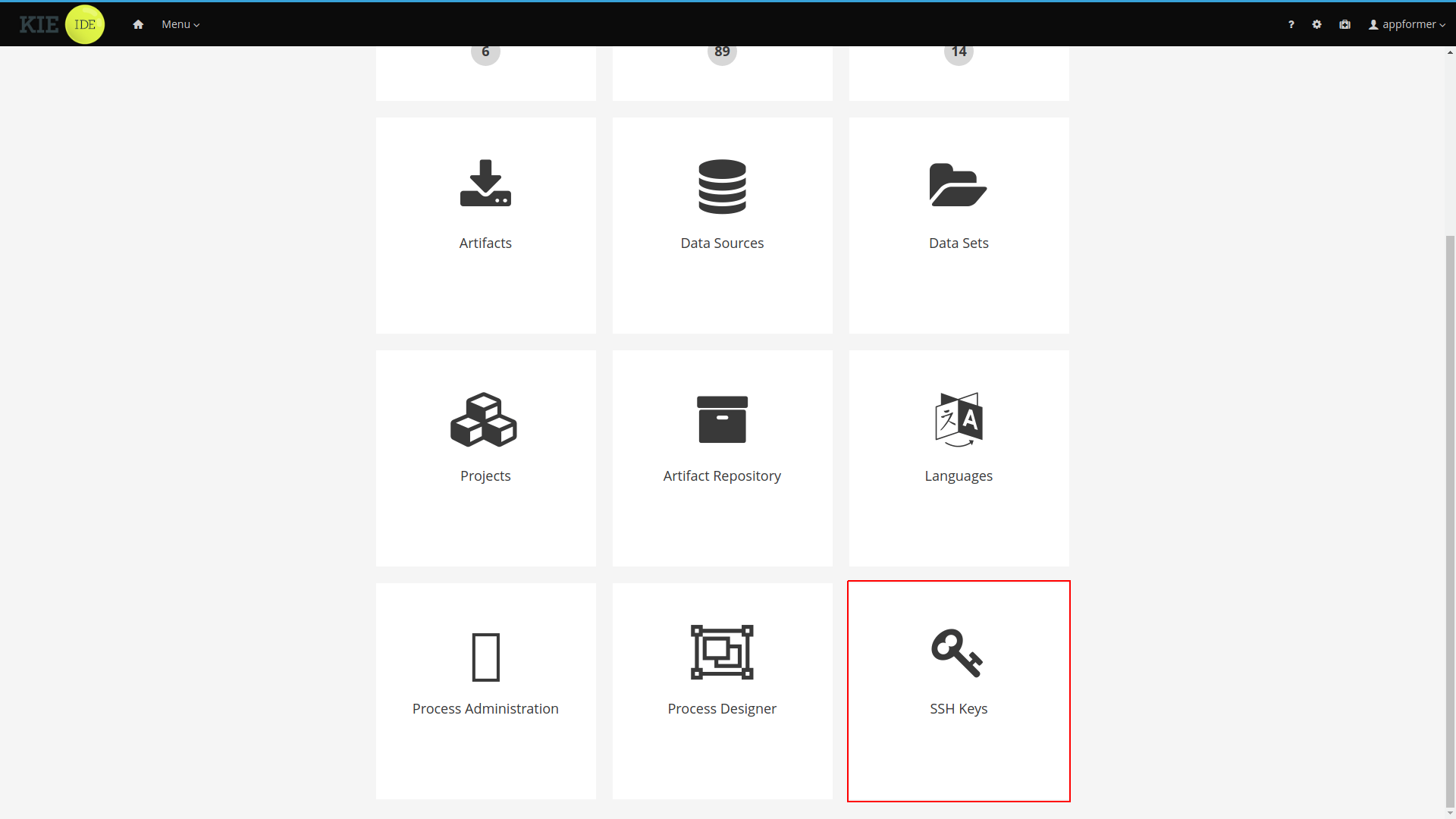

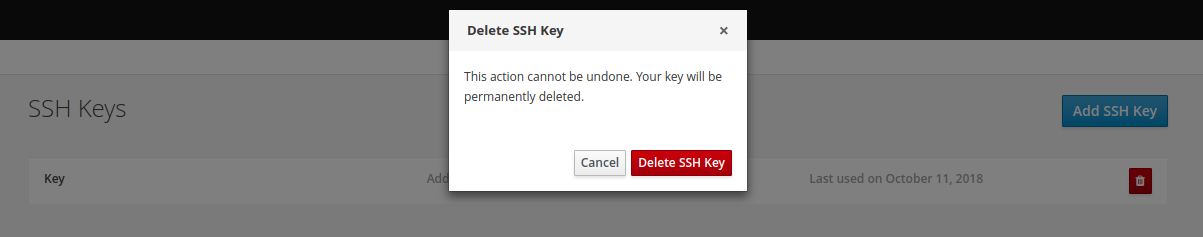

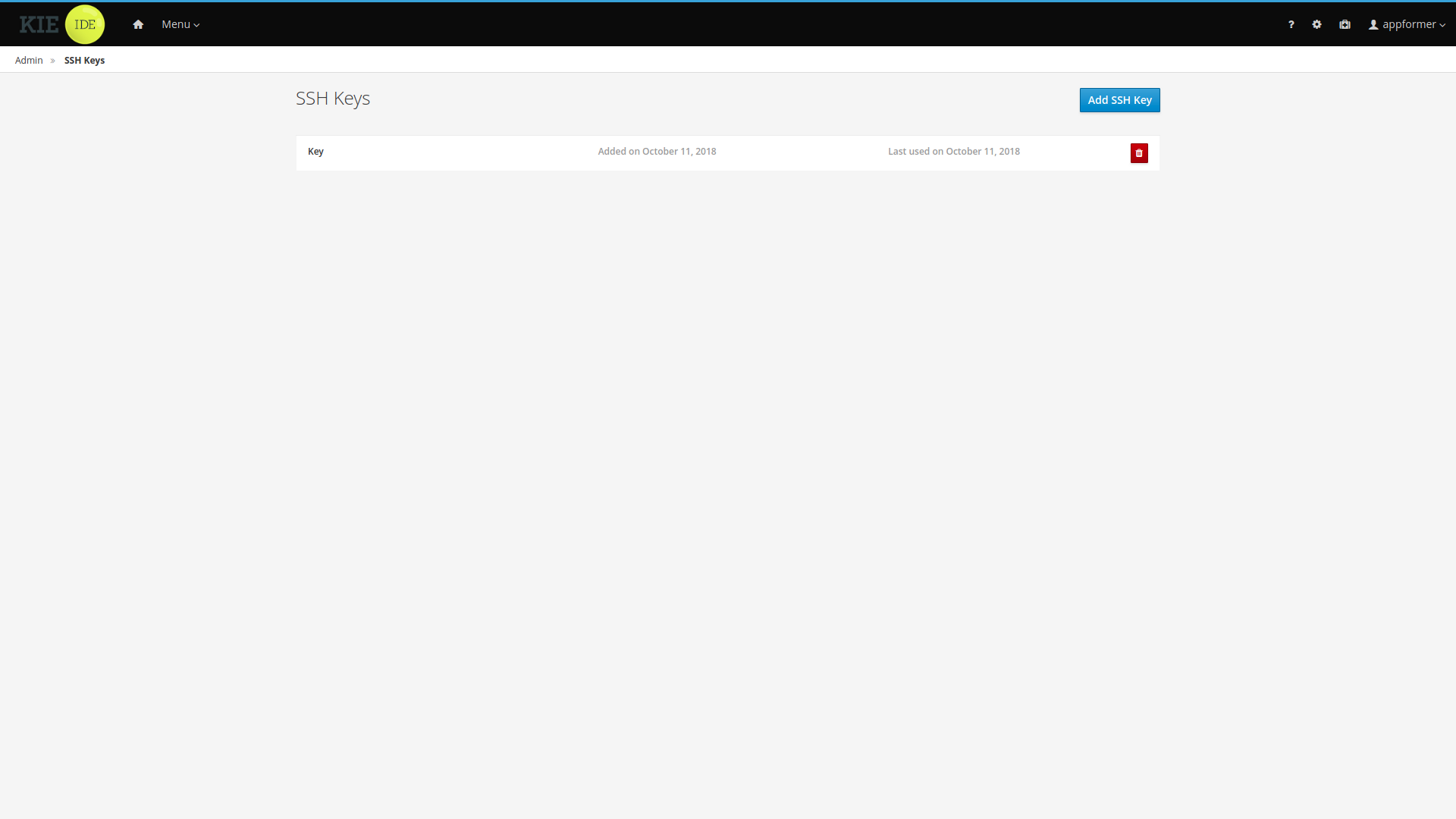

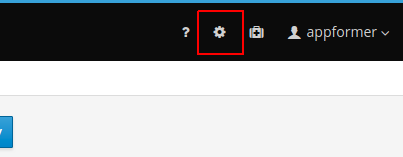

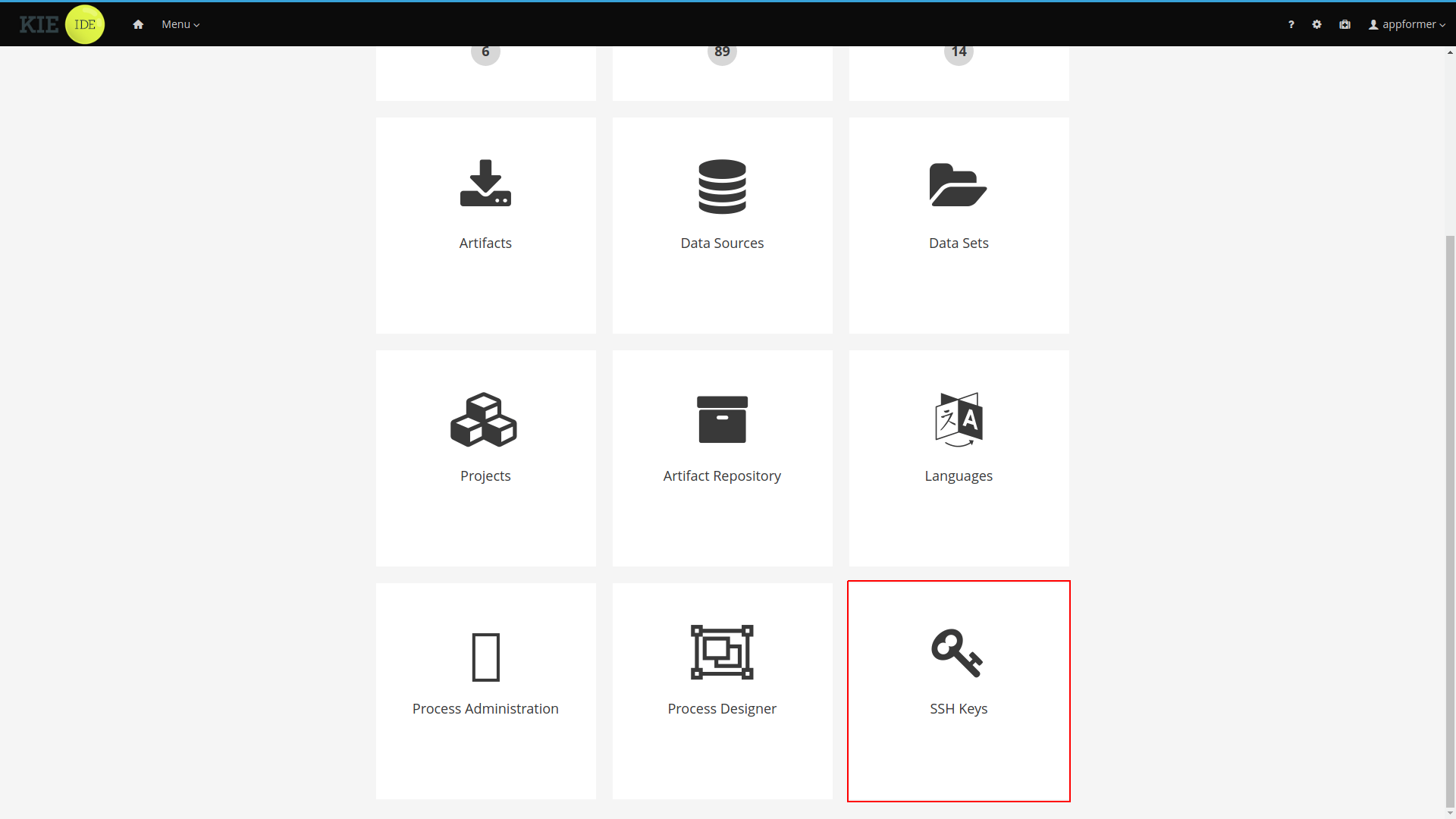

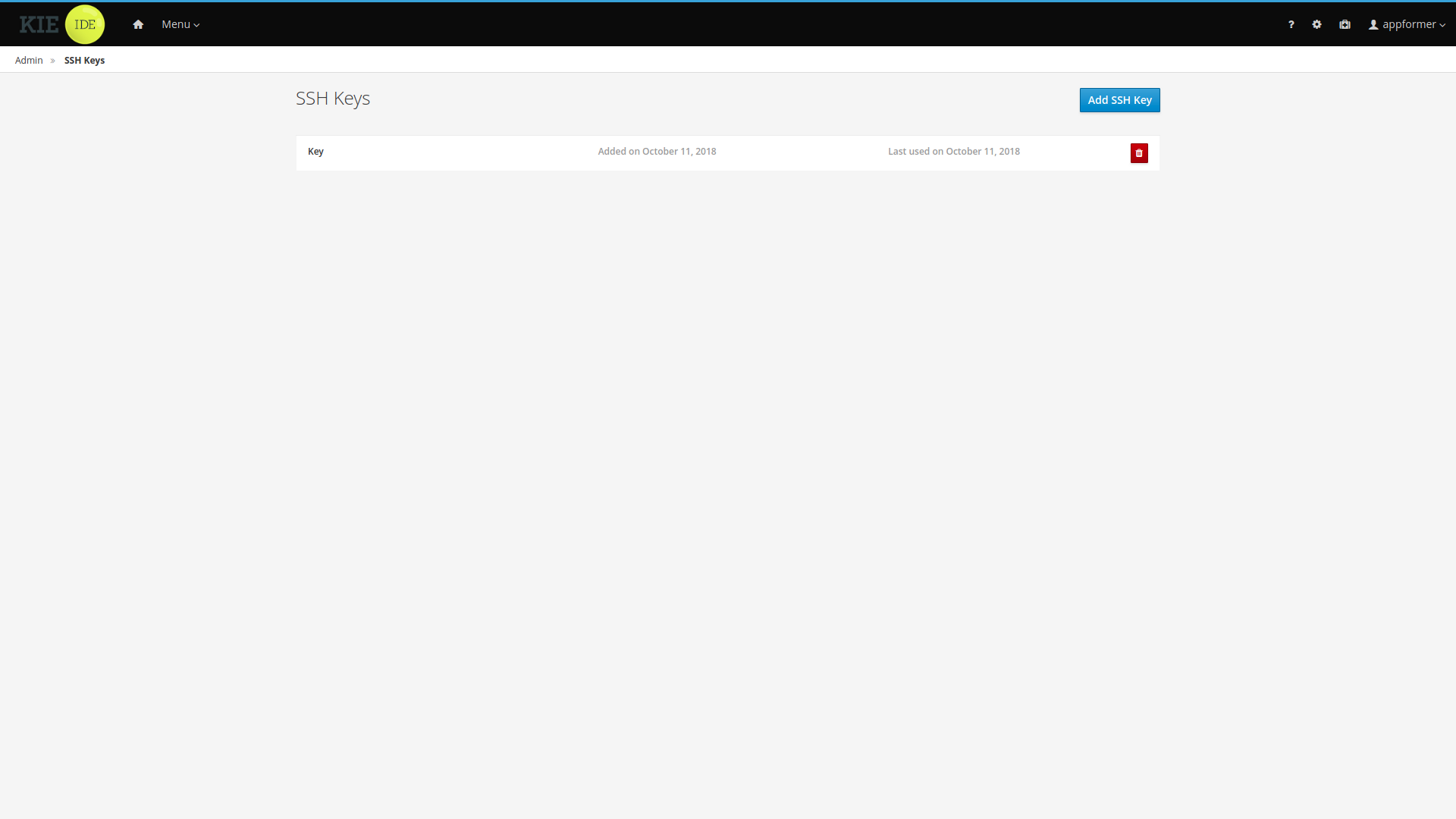

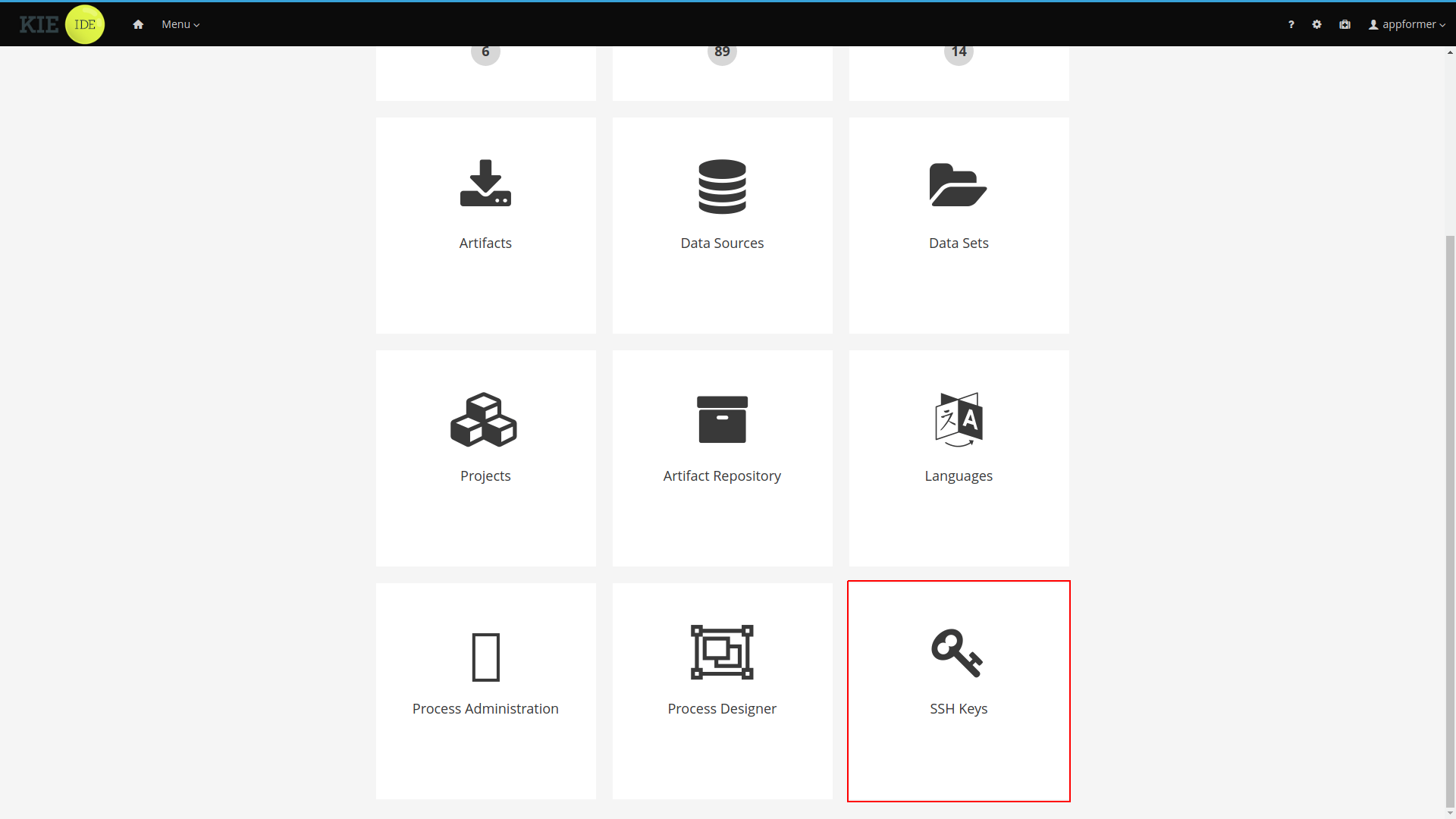

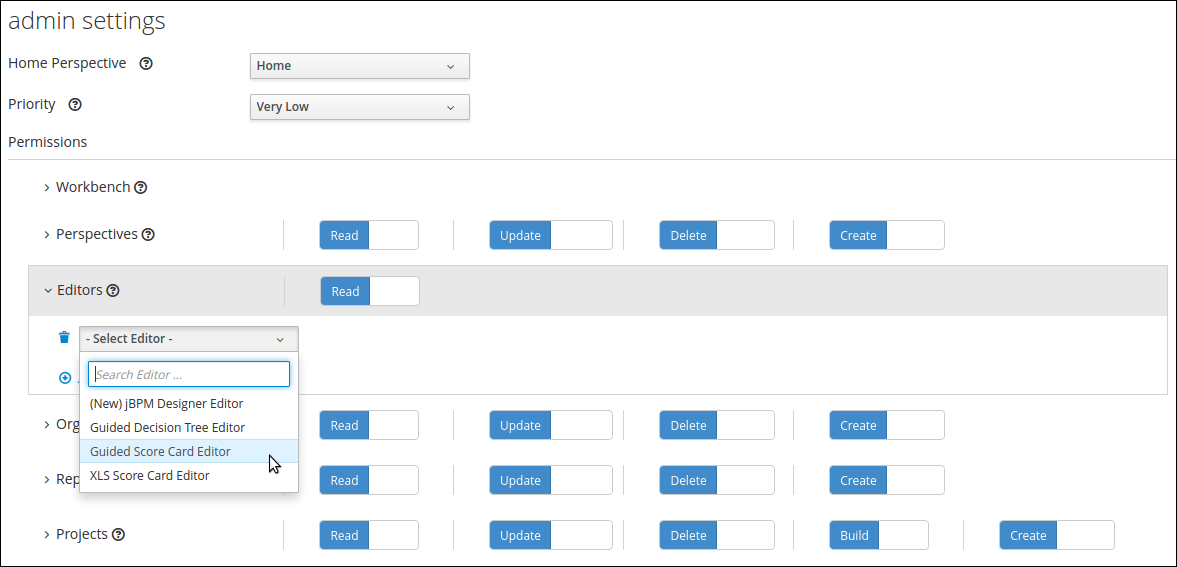

Click the gear icon

in the upper-right corner and click Users.

in the upper-right corner and click Users. -

Click

, enter

, enter Katy, click Next, and click Create. -

Click Yes to set a password, enter

Katyin both fields, and click Change. -

Enter

Bill, click Next, and click Create. -

Click Yes to set a password, enter

Billin both fields, and click Change. -

Click the Groups tab and click

, enter

, enter approver, and click Next. -

Select

Katyfrom the user list, and click Add selected users. -

Enter

broker, and click Next. -

Select

Billfrom the user list, and click Add selected users. -

Click the Users tab, select

Katy, and click Edit → Roles → Add roles. -

Select

manager, click Add to selected roles, and click Save. -

Click the Groups tab and click Edit → Groups → Add to groups.

-

Select

approverandkie-server, and click Add to selected groups. -

Click Save.

-

Click the Users tab, select

Billfrom the user list, and click Edit → Roles → Add roles. -

Select

user, and click Add to selected roles. -

Click the Groups tab, click

, select

, select kie-server, and click Add to selected groups. -

Click Save.

3.4. Creating the mortgage-process data objects

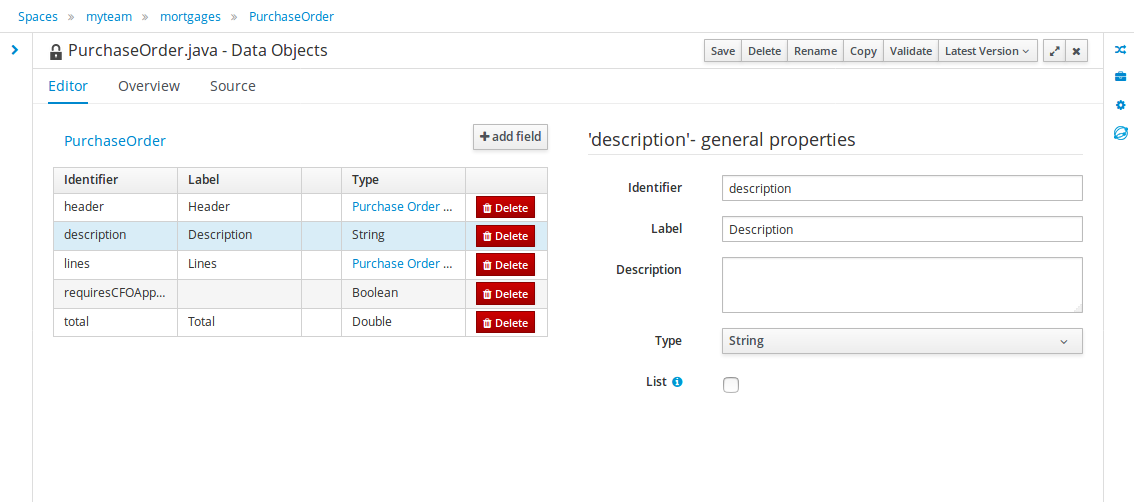

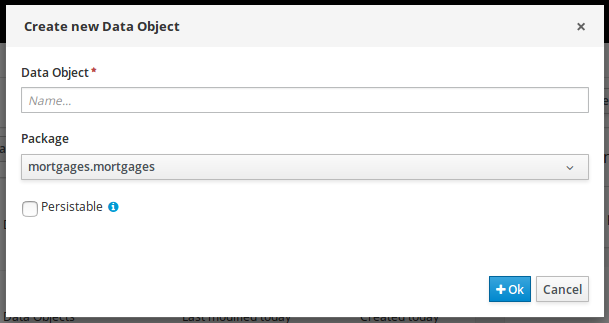

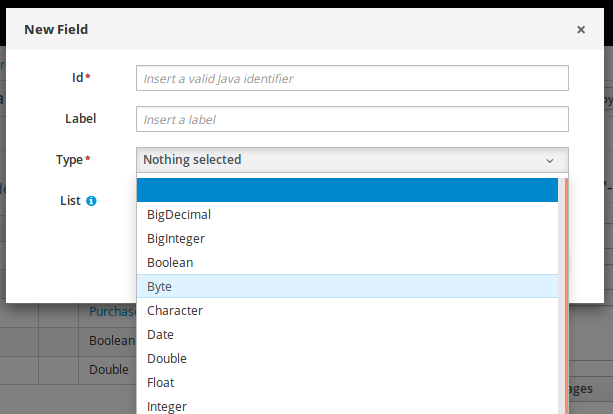

Data objects are the building blocks for the rule assets that you create. Data objects are custom data types implemented as Java classes in specified packages of your project. These custom data types determine what data your assets and your decision services are based on.

The mortgage process project uses the following data objects:

-

Applicant -

Property -

ValidationErrorDO -

Application

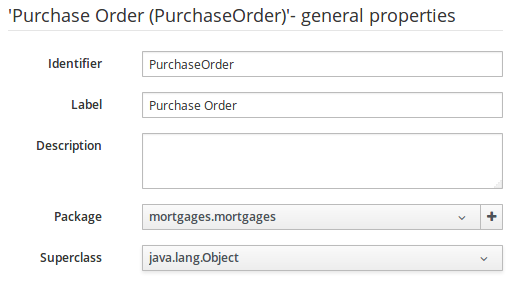

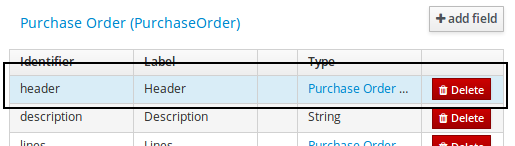

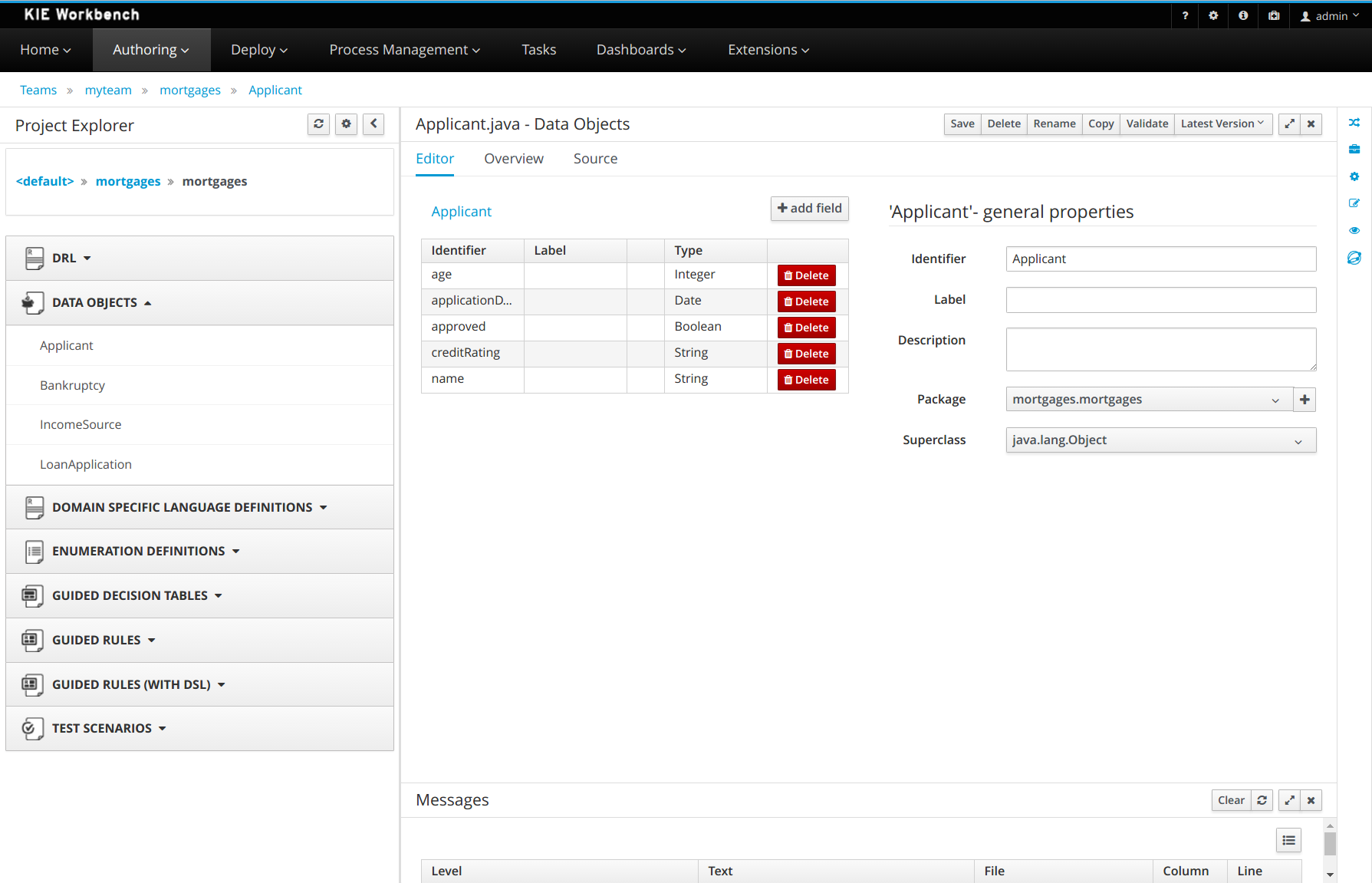

3.4.1. Creating the Applicant data object

Create the Applicant data object, which contains information about the applicant. This is the basic information required to apply for the loan in this tutorial.

-

In Business Central, click the MySpace default space.

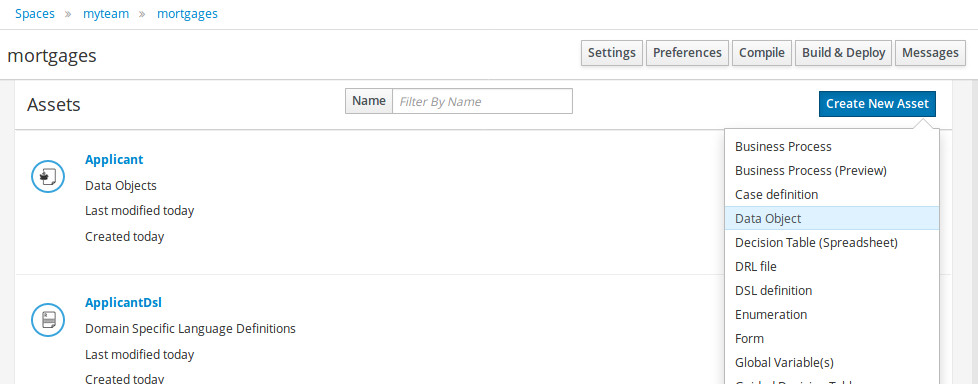

-

Click Menu → Design → Projects and click mortgage-process.

-

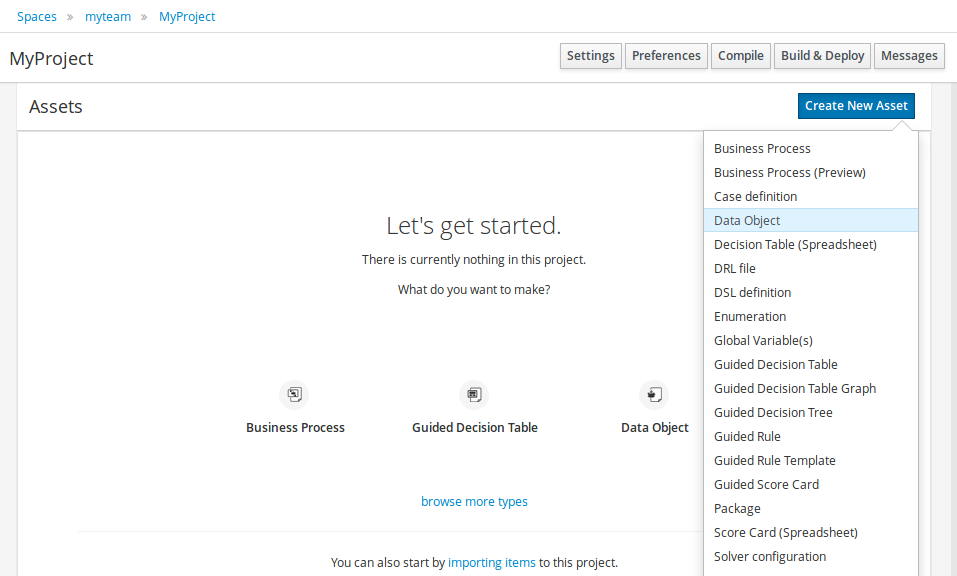

Click Add Asset and select Data Object.

-

Enter

Applicantin the Data Object field of the Create new Data Object window. -

Select

com.myspace.mortgage_appfrom the Package drop-down menu and click Ok. -

Enter

Applicantin the Label field of the 'Applicant'- general properties section. -

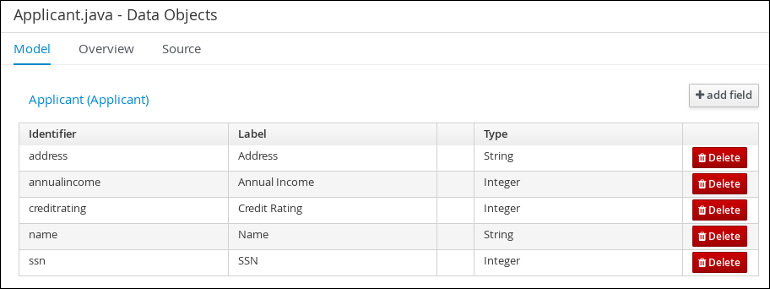

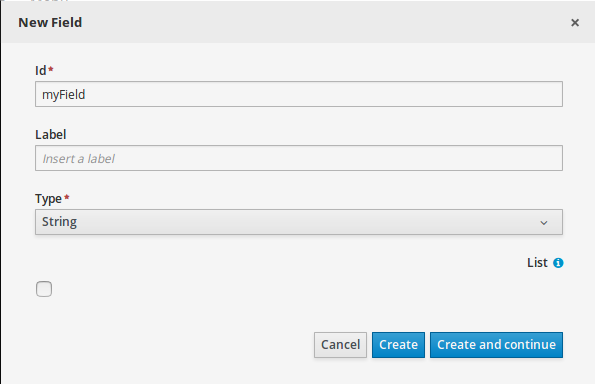

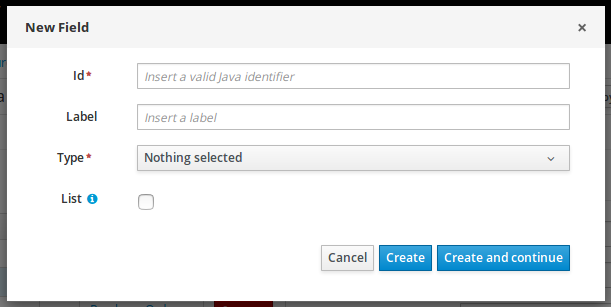

Click +add field and input the following Applicant data object values. Click Create and continue after each addition. For the last addition, click Create.

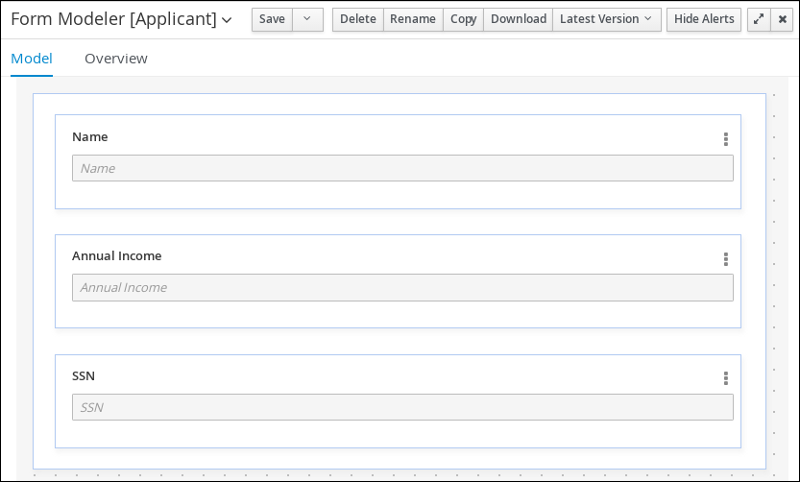

Figure 8. Applicant data object field values

Figure 8. Applicant data object field values -

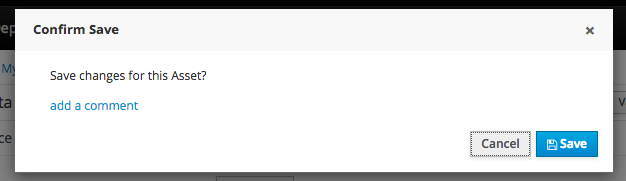

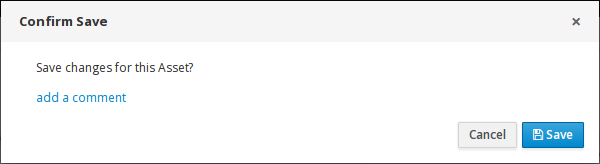

Click Save.

3.4.2. Creating the Property data object

Create the Property data object, which contains information about the property details, such as the property age and price.

-

In Business Central, click the MySpace default space.

-

Click Menu → Design → Projects and click mortgage-process.

-

Click Add Asset and select Data Object.

-

Enter

Propertyin the Data Object field of the Create new Data Object window. -

Select

com.myspace.mortgage_appfrom the Package drop-down menu and click Ok. -

Enter

Propertyin the Label field of the 'Property'- general properties section. -

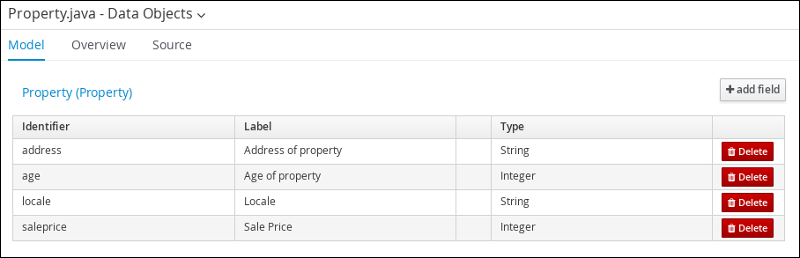

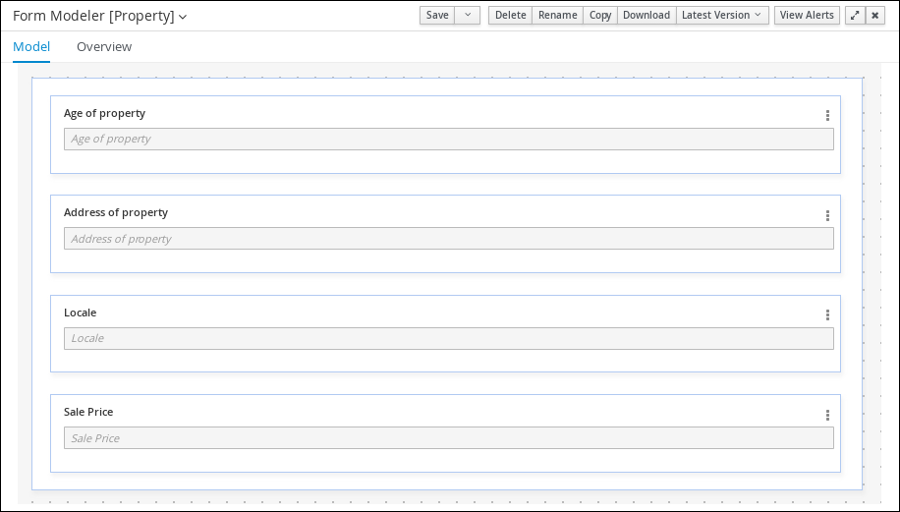

Click +add field and input the following Property data object values. Click Create and continue after each addition. For the last addition, click Create.

Figure 9. Property data object field values

Figure 9. Property data object field values -

Click Save.

3.4.3. Creating the ValidationErrorDO data object

Create the ValidationErrorDO data object, which specifies the cause of an application error.

-

In Business Central, click the MySpace default space.

-

Click Menu → Design → Projects and click mortgage-process.

-

Click Add Asset and select Data Object.

-

Enter

ValidationErrorDOin the Data Object field of the Create new Data Object window. -

Select

com.myspace.mortgage_appfrom the Package drop-down menu and click Ok. -

Enter

ValidationErrorDOin the Label field of the 'ValidationErrorDO'- general properties section. -

Click +add field and input the following ValidationErrorDO data object values. Click Create and continue after each addition. For the last addition, click Create.

Figure 10. ValidationErrorDO data object field values

Figure 10. ValidationErrorDO data object field values -

Click Save.

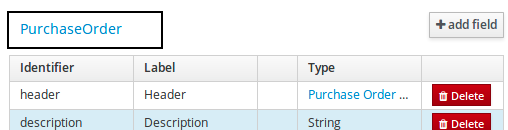

3.4.4. Creating the Application data object

Create the Application data object, which contains information about the mortgage details, such as the down payment and the mortgage amount.

-

In Business Central, click the MySpace default space.

-

Click Menu → Design → Projects and click mortgage-process.

-

Click Add Asset and select Data Object.

-

Enter

Applicationin the Data Object field of the Create new Data Object window. -

Select

com.myspace.mortgage_appfrom the Package drop-down menu and click Ok. -

Enter

Applicationin the Label field of the 'Application'- general properties section. -

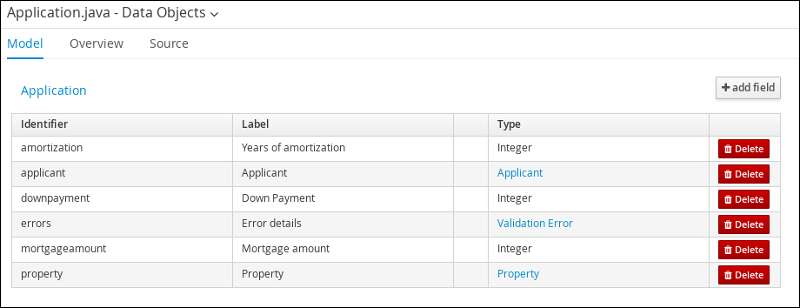

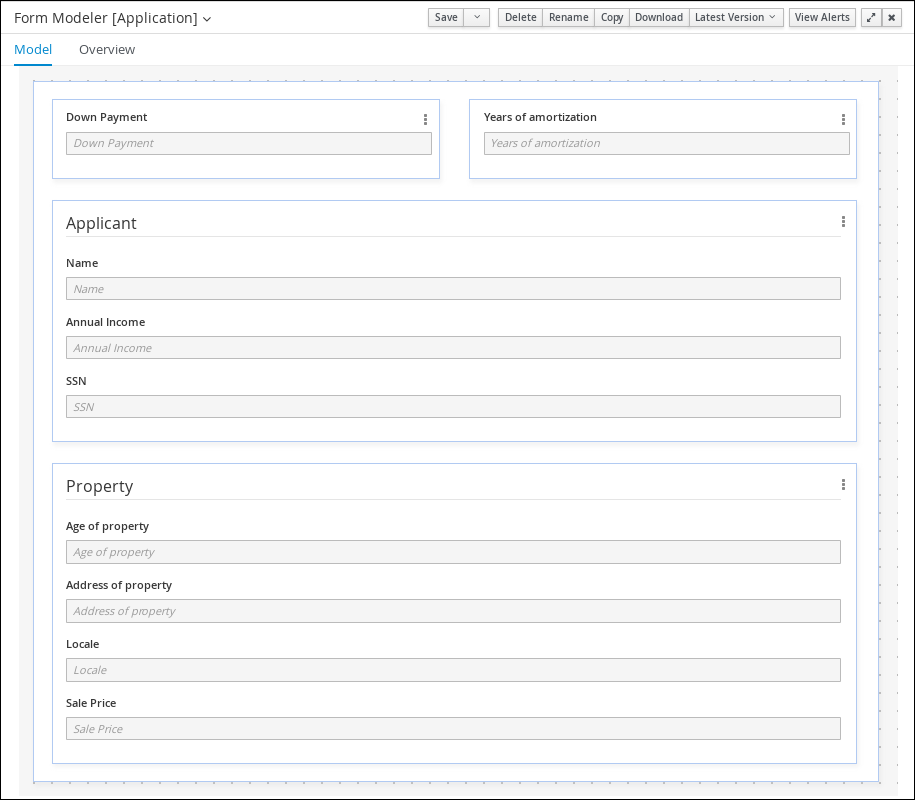

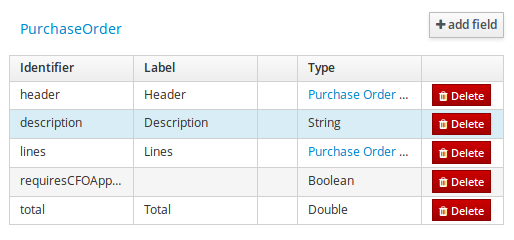

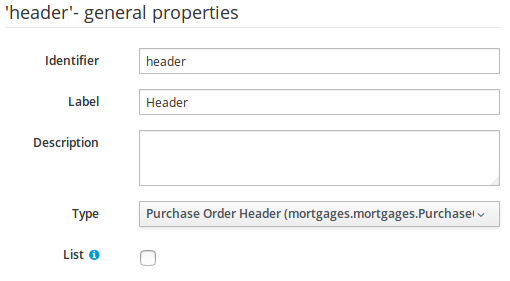

Click +add field and input the following Application data object values. Click Create and continue after each addition. For the last addition, click Create.

Figure 11. Application data object field values

Figure 11. Application data object field values -

Click Save.

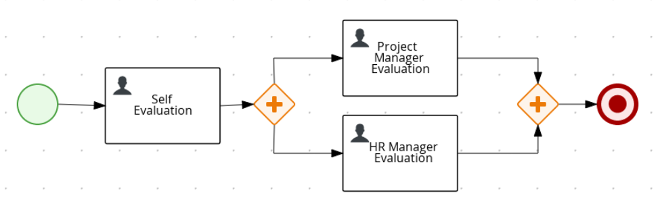

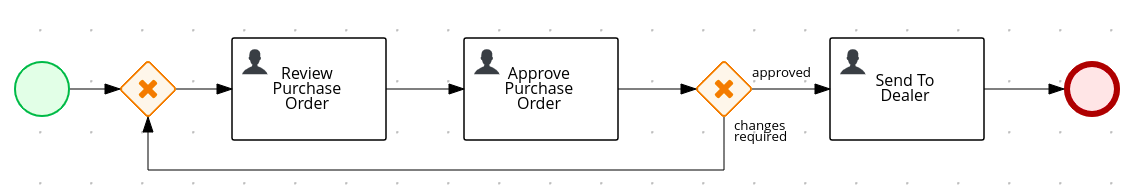

3.5. Business processes in Business Central

A business process is a diagram that describes the order in which a series of steps must be executed using a flow chart. A business process consists of a collection of nodes that are linked to each other using connections. Each of the nodes represents one step in the overall process while the connections specify how to transition from one node to the other.

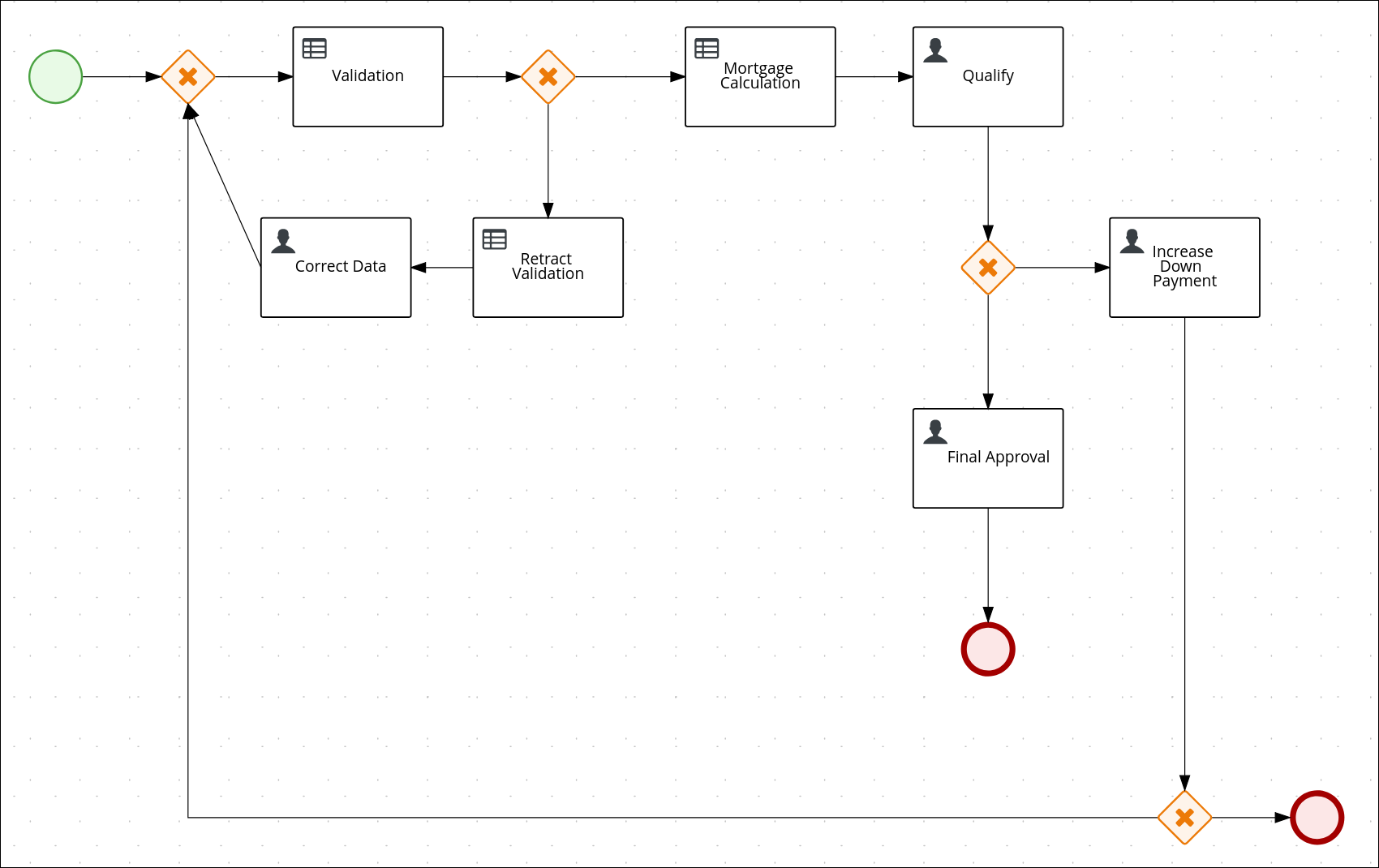

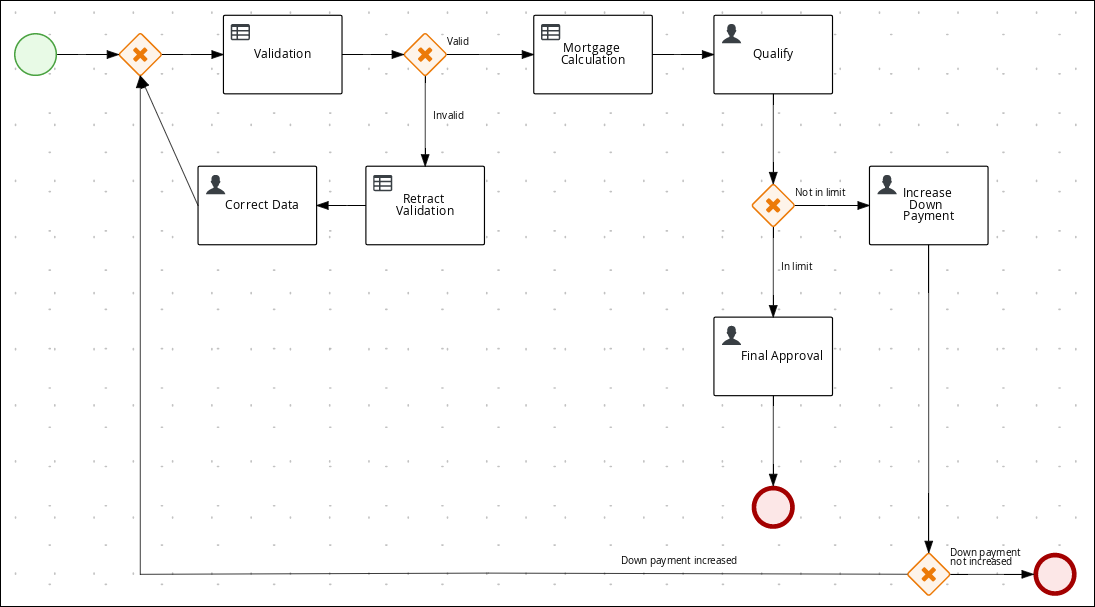

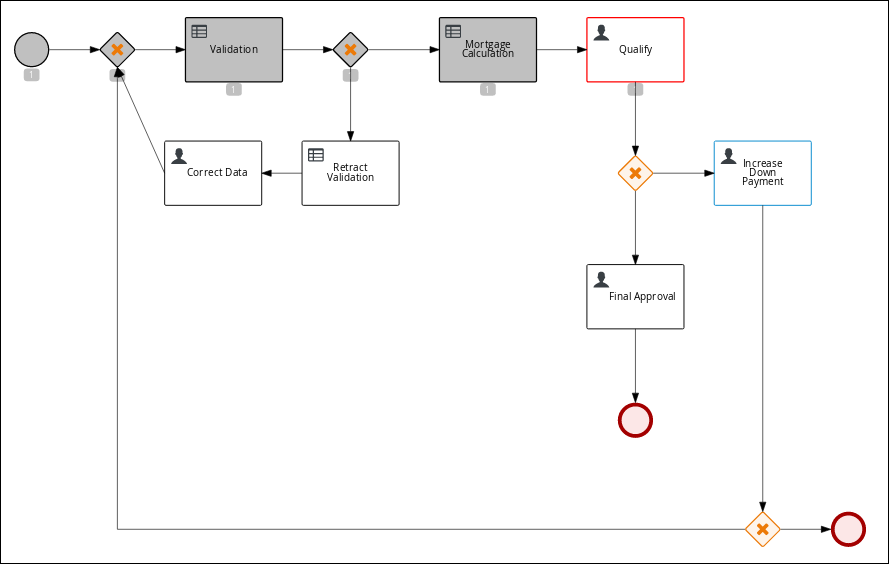

The sample Mortgage_Process contains the following predefined MortgageApprovalProcess business process.

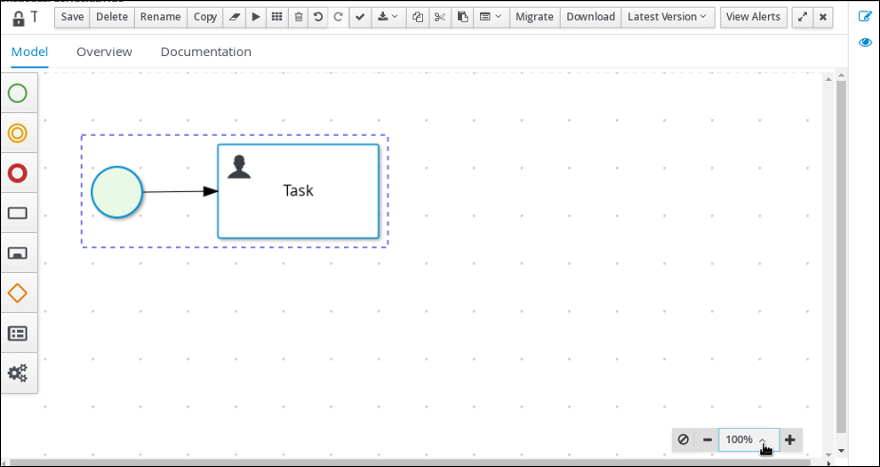

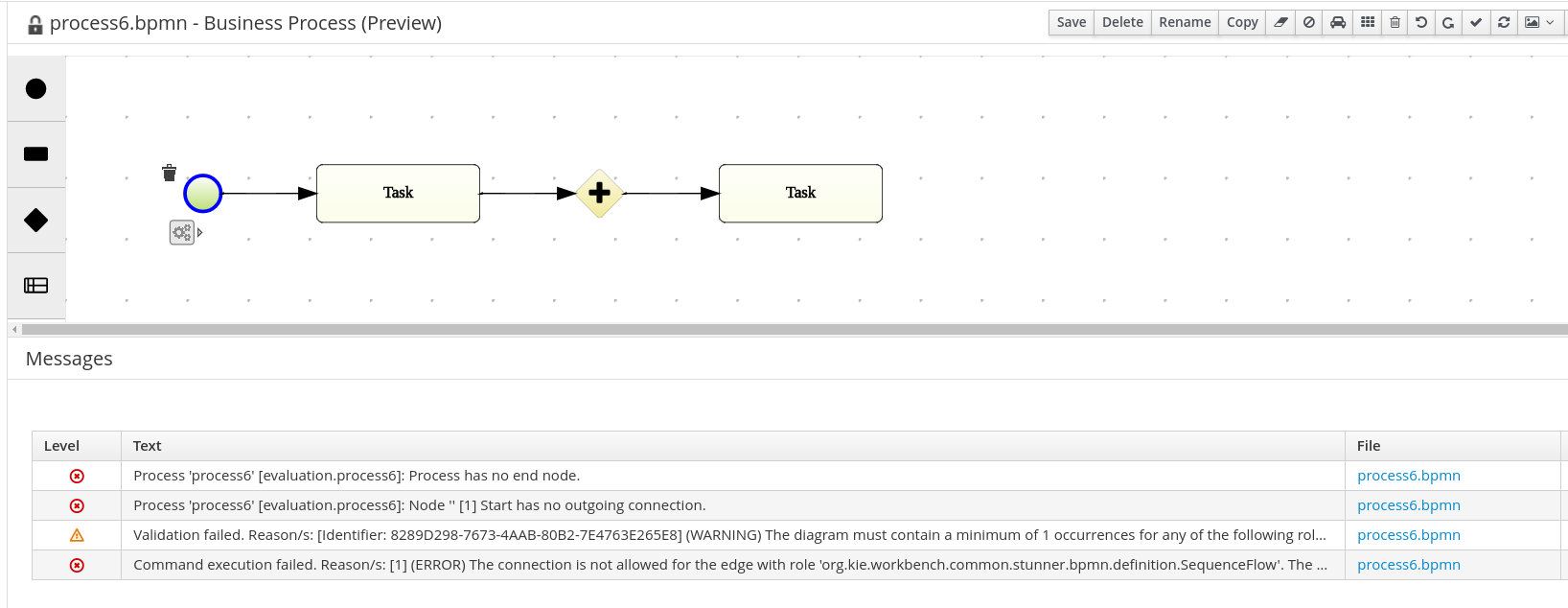

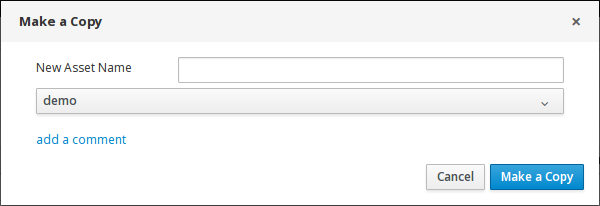

3.5.1. Creating the business process

The following procedures guide you through the creation of tasks, connections, and gateways that make up the MortgageApprovalProcess business process. The mortgage validation business process determines whether a mortgage application contains all required data. If the specified data requirements are met, the application proceeds on to the mortgage calculation business process.

-

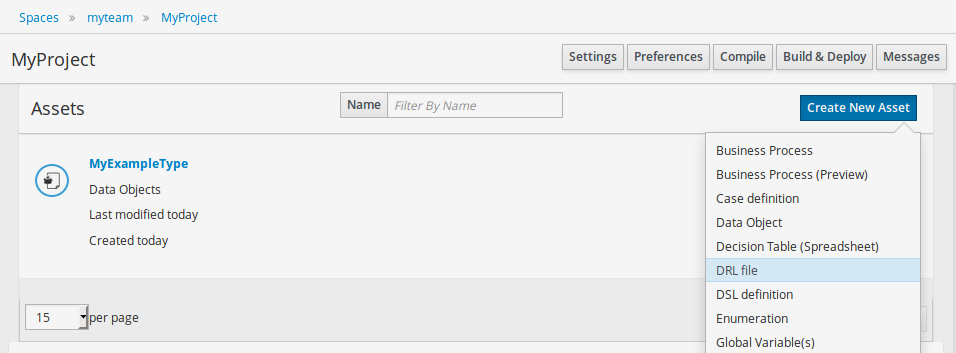

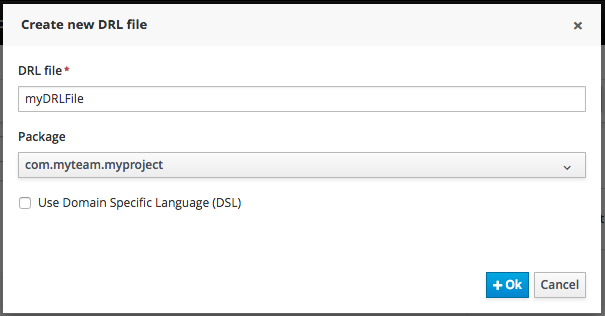

In Business Central, go to Menu → Design → Projects → Mortgage-Process.

-

Click Add Asset → Business Process.

-

Enter the following values:

-

Business Process:

MortgageApprovalProcess -

Package: Select

com.myspace.mortgage_appThe Package specifies the location inside the existing project where the asset will be created. In this example, it is created in

com/myspace/mortgage_app.

-

-

Click Ok. The diagram editor opens.

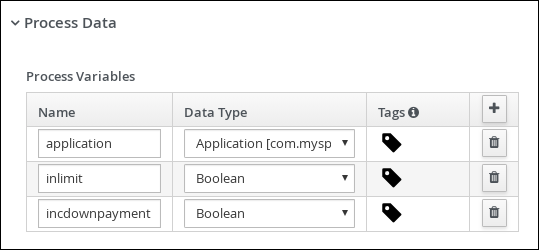

-

In the upper-right corner, click the Properties

icon.

icon. -

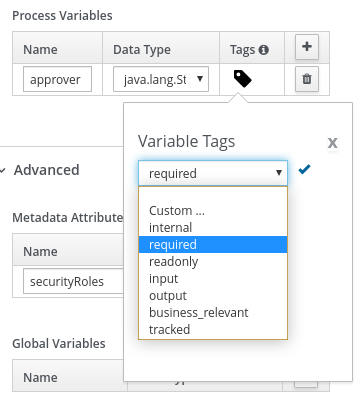

Scroll down and expand Process Data and click

in the Process Variables section.

in the Process Variables section. -

Enter the following values:

-

Name:

application -

Data Type:

Application [com.myspace.mortgage_app]

-

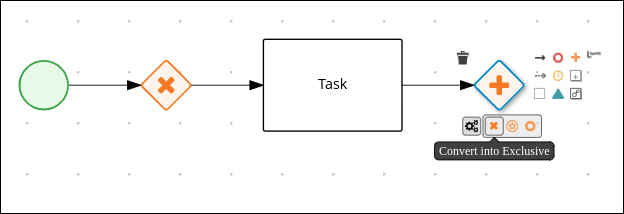

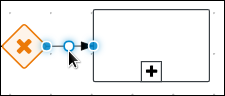

3.5.1.1. Creating outgoing connections and exclusive gateways

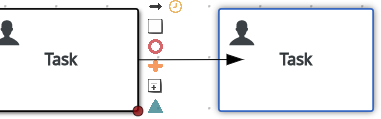

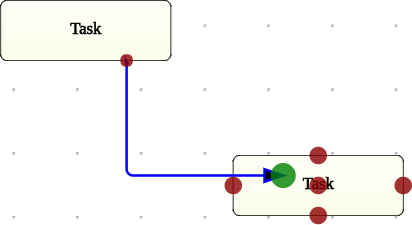

This section shows you how to create outgoing connections, exclusive gateways, and business rule tasks. Use exclusive gateways to make decisions and react to events based on the available data.

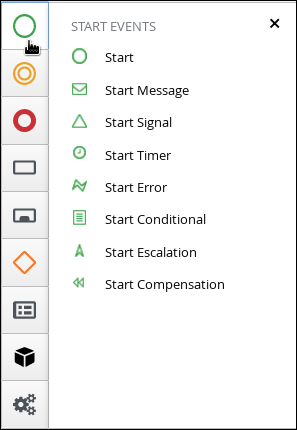

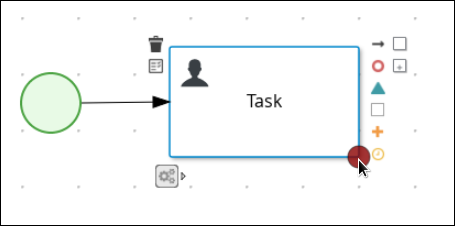

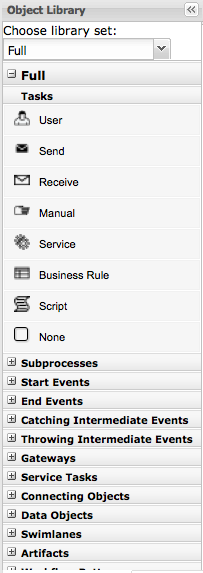

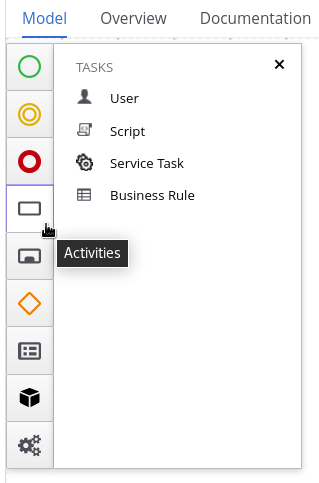

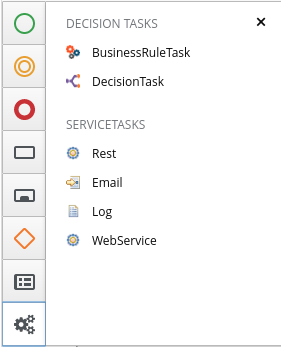

jBPM contains a predefined selection of node types to simplify business process creation. The predefined node panel is located on the left side of the diagram editor.

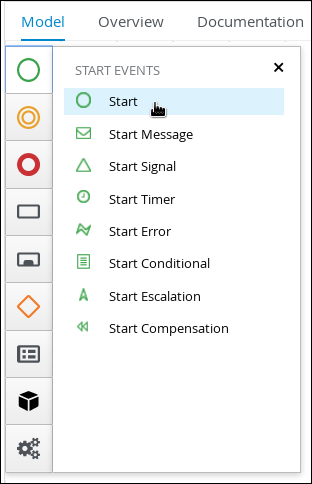

-

Drag a start event node onto the canvas.

-

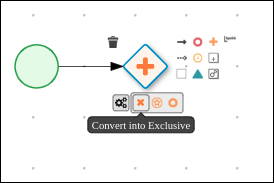

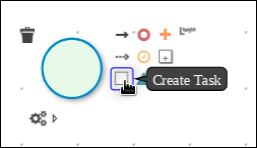

Create an outgoing connection from the start event to an exclusive gateway:

-

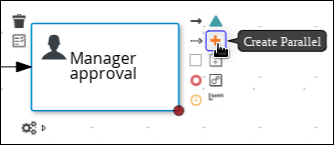

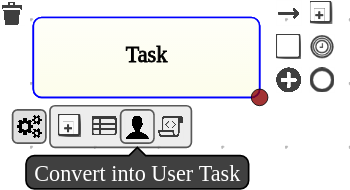

On the canvas, click the start event node and click the Create Parallel icon.

-

Hover over the

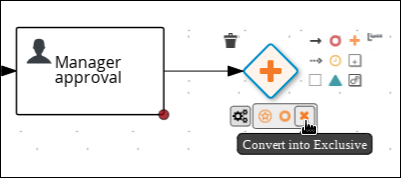

icon of the parallel and click the Convert into Exclusive icon.

icon of the parallel and click the Convert into Exclusive icon.

-

-

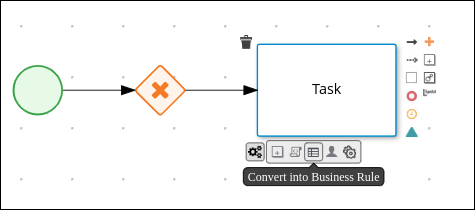

Create an outgoing connection from the exclusive gateway to a business rule task:

-

On the canvas, click the exclusive gateway and click the Create Task icon.

-

Hover over the

icon of the task and click the Convert into Business Rule icon.

icon of the task and click the Convert into Business Rule icon.

-

-

Configure the business rule task:

-

Click the business rules task.

-

If the Properties panel is not open, click the Properties

icon in the upper-right corner.

icon in the upper-right corner. -

In the Properties panel, enter

Validationin the Name field. -

Expand Implementation/Execution, select New from the Rule Flow Group menu, and input validation.

-

In the On Exit Action field, enter the following Java expression:

System.out.println(application.getProperty()); -

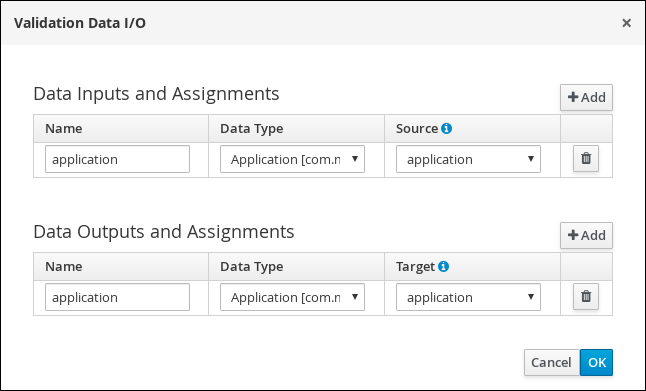

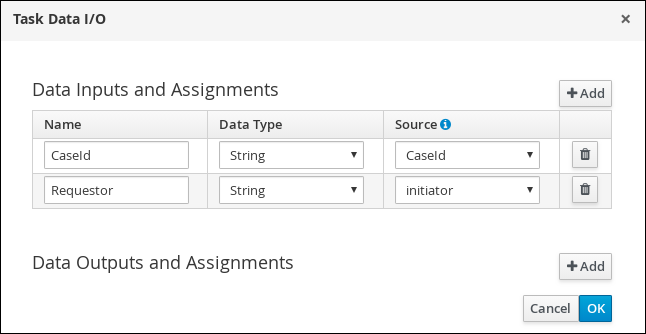

Expand Data Assignments and click

next to Assignments.

next to Assignments. -

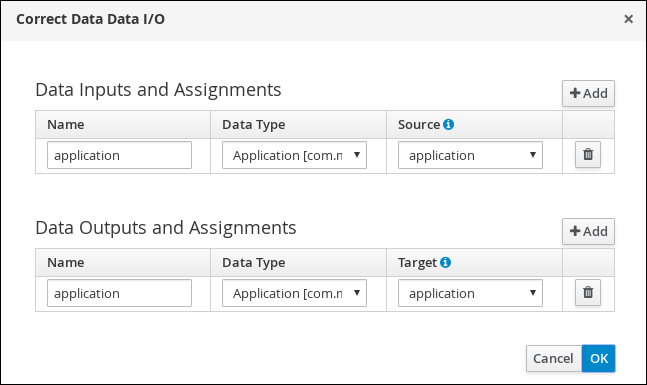

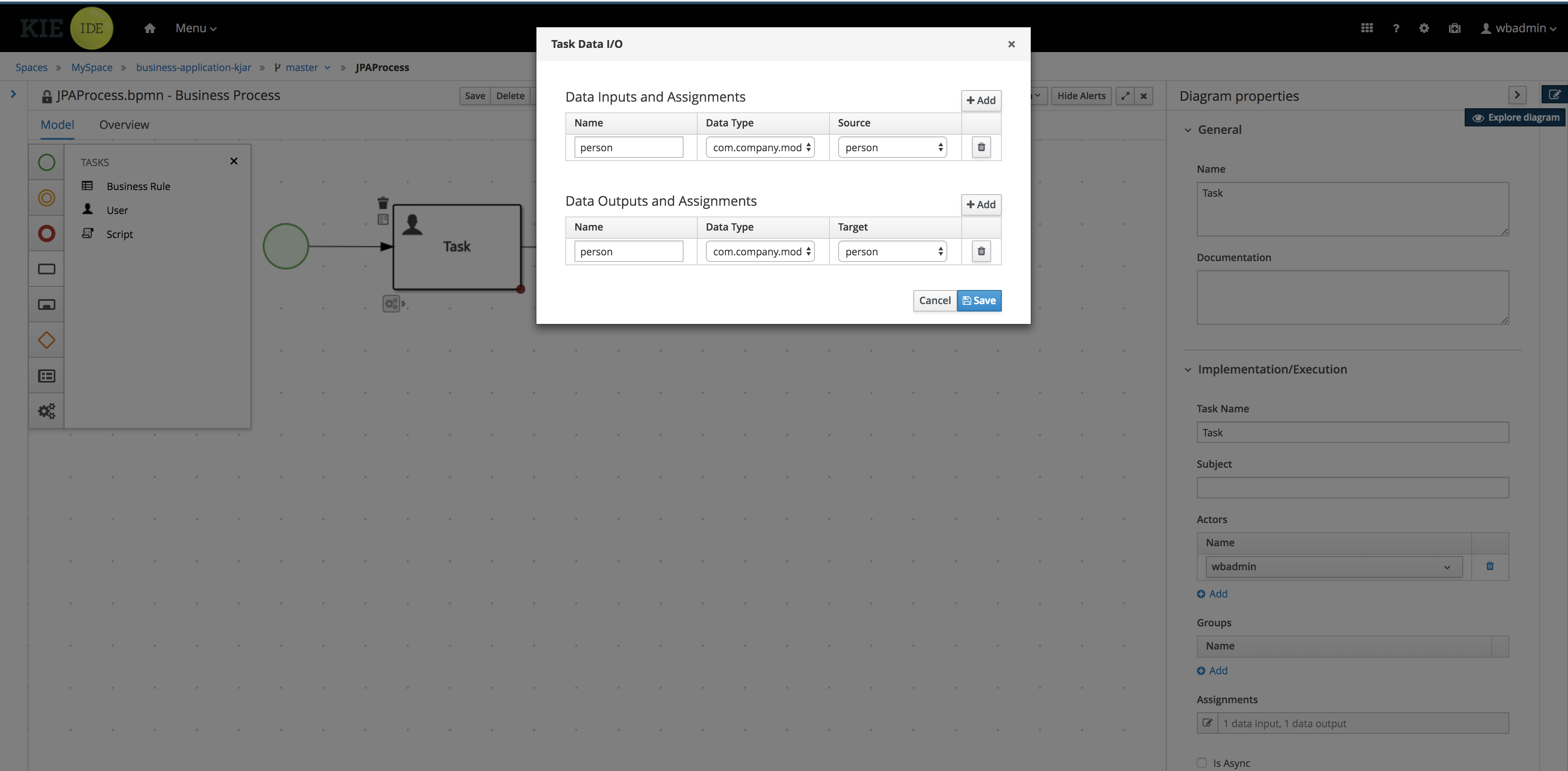

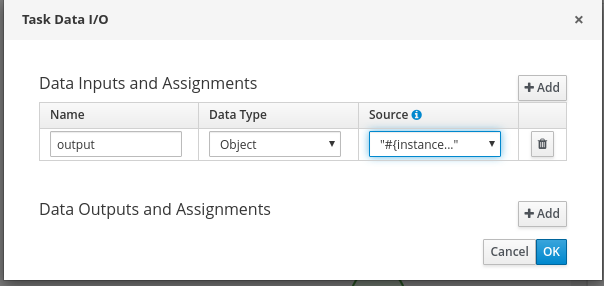

In the Validation Data I/O window, click Add and create the following assignments:

-

Data Inputs and Assignements

-

Name: application

-

Data Type: Application [com.myspace.mortgage_app]

-

Source: application

-

-

Data Outputs and Assignements

-

Name: application

-

Data Type: Application [com.myspace.mortgage_app]

-

Target: application

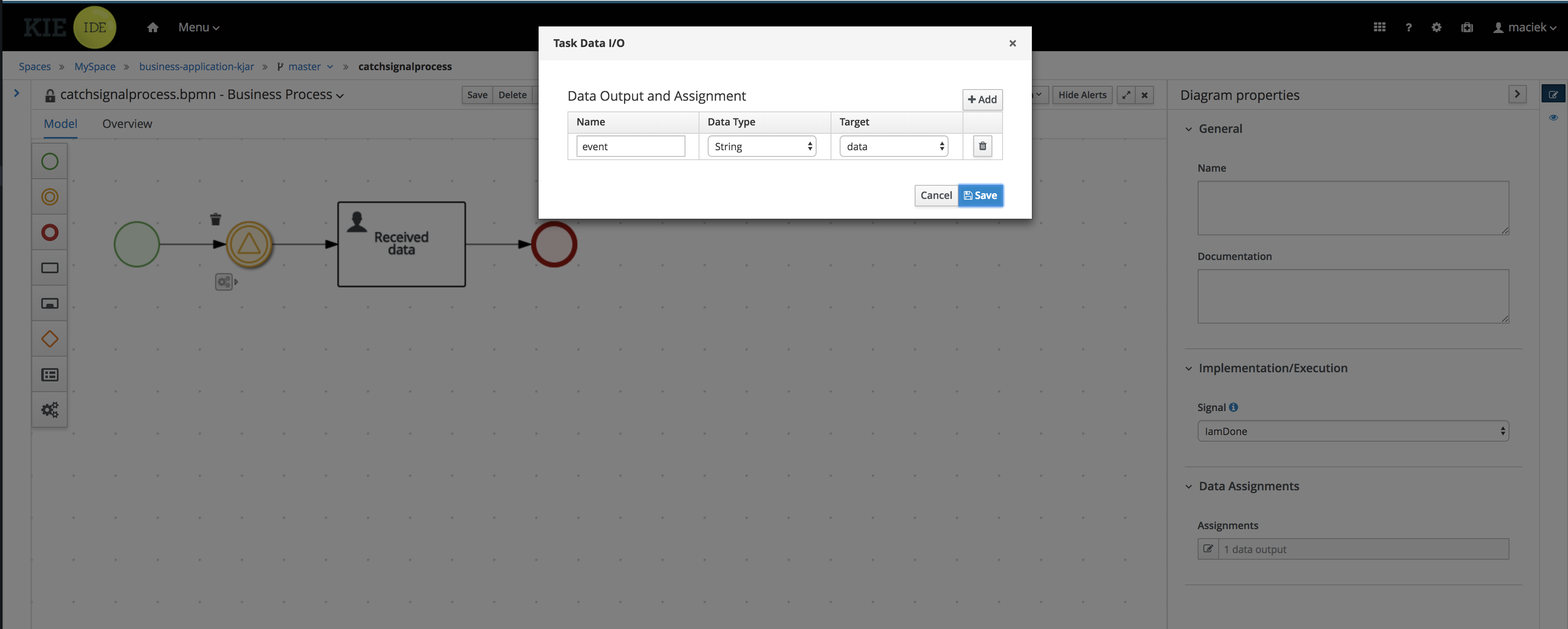

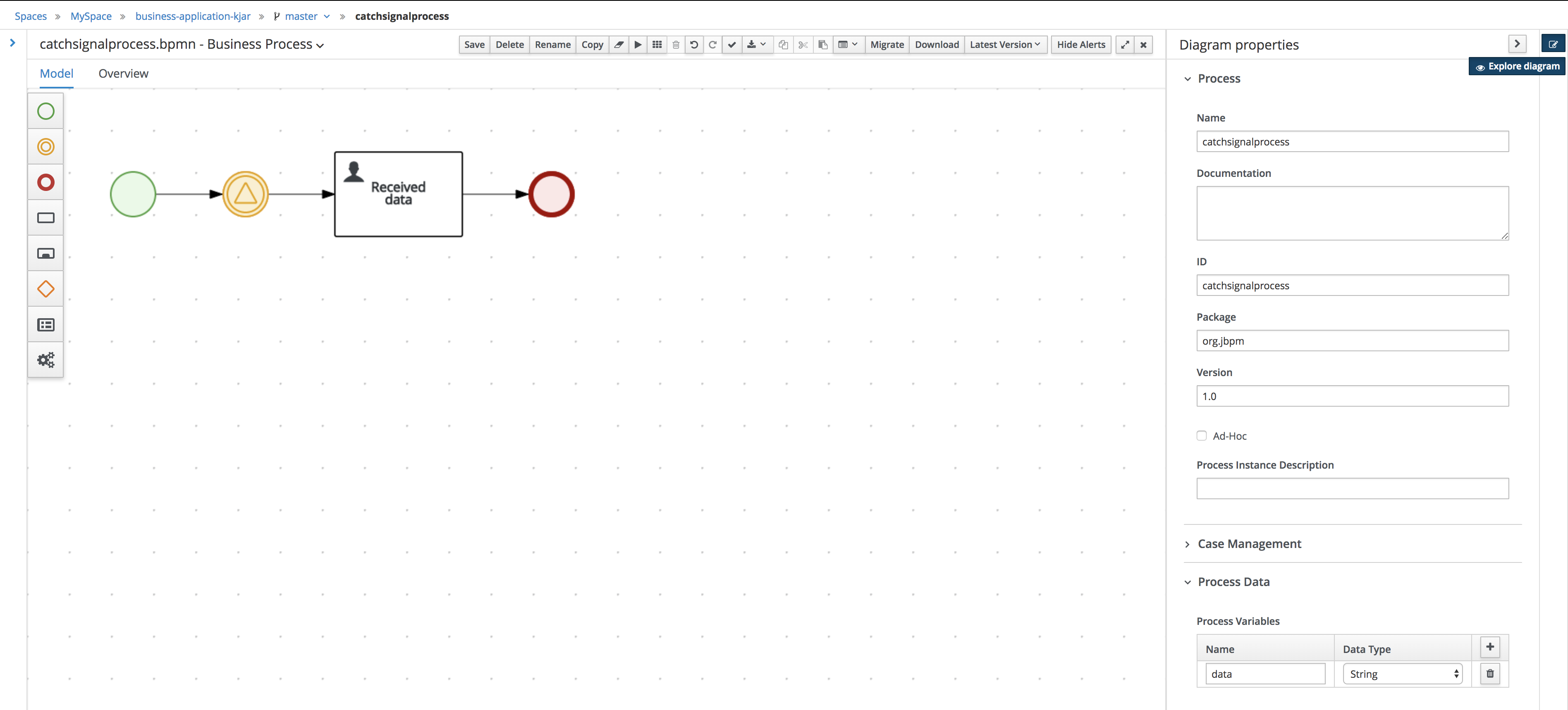

Figure 12. Validation Data I/O assignments

Figure 12. Validation Data I/O assignments

-

-

-

-

Click OK in the Validation Data I/O window.

-

Above the canvas, click Save to confirm your changes.

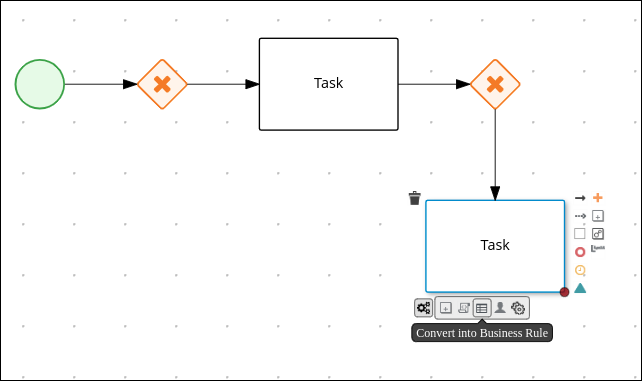

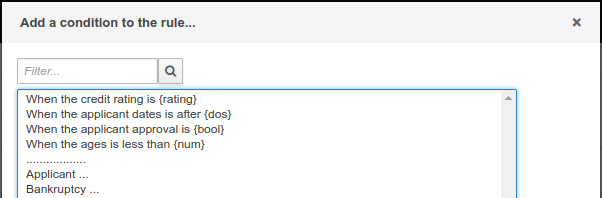

3.5.1.2. Defining the validation data

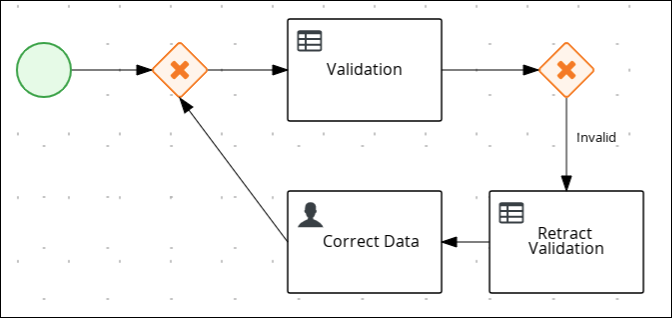

This section shows you how to define the validation data that determines whether the application data is correct, contains an error, or has missing information.

-

Create an outgoing connection from the Validation task to an exclusive gateway:

-

Click the Validation task and click the Create Parallel icon.

-

Hover over the

icon of the parallel and click the Convert into Exclusive icon.

icon of the parallel and click the Convert into Exclusive icon.

-

-

Create an outgoing connection from the exclusive gateway to a new business rule task:

-

Click the exclusive gateway and click the Create Task icon.

-

Drag the new task below the exclusive gateway as shown in the following image.

-

Hover over the

icon of the task and click the Convert into Business Rule icon.

icon of the task and click the Convert into Business Rule icon.

-

If the Properties panel is not open, click the Properties

icon in the upper-right corner.

icon in the upper-right corner. -

In the Properties panel, enter

Retract Validationin the Name field. -

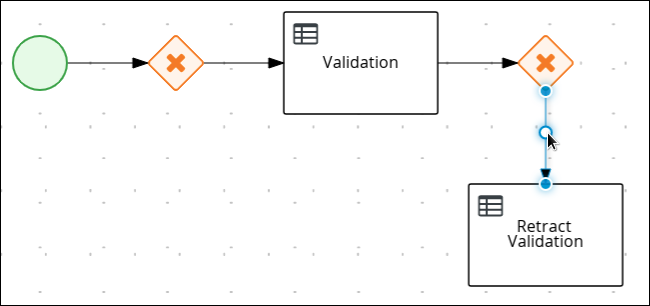

Expand Implementation/Execution, select New from the Rule Flow Group menu, and input

error.

-

-

Configure the connection between the exclusive gateway and the business rule task:

-

Click the connection.

-

If the Properties panel is not open, click the Properties

icon in the upper-right corner.

icon in the upper-right corner. -

In the Properties panel, enter

Invalidin the Name field. -

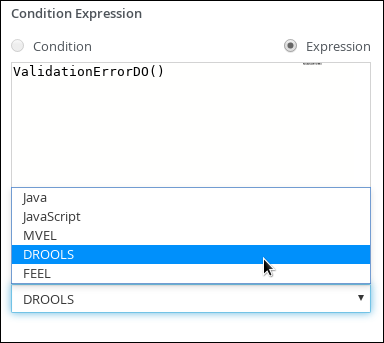

Expand Implementation/Execution and select Expression in the Condition Expression section.

-

From the list, select drools and enter

ValidationErrorDO()in the Condition Expression field.

-

-

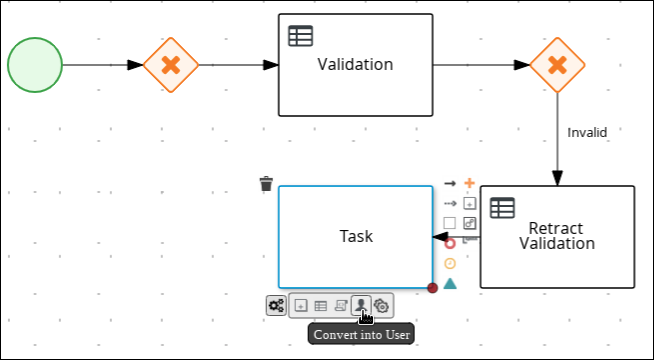

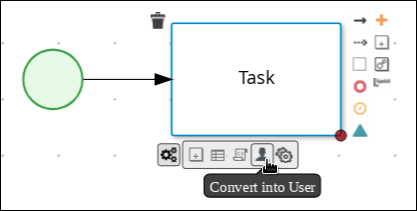

Create an outgoing connection from the Retract Validation task to a new user task:

-

Click the Retract Validation task and click the Create Task icon.

-

Drag the new task below the Validation task as shown below.

-

Hover over the

icon of the task and click the Convert into User icon.

icon of the task and click the Convert into User icon.

-

Click the new user task and in the Properties panel, enter

Correct Datain the Name field. -

Expand Implementation/Execution and enter

CorrectDatain the Task Name field. -

Select New from the Groups menu, and input

broker. -

Click

next to Assignments.

next to Assignments. -

In the Correct Data Data I/O window, click Add and create the following assignments:

-

Name: application

-

Data Type: Application [com.myspace.mortgage_app]

-

Source: application

-

Data Outputs and Assignements

-

-

Name: application

-

Data Type: Application [com.myspace.mortgage_app]

-

Target: application

Figure 13. Correct Data Data I/O assignments

Figure 13. Correct Data Data I/O assignments

-

-

Click OK in the Correct Data Data I/O window.

-

Above the canvas, click Save.

-

-

Click the Correct Data user task then click the Create sequence Flow icon and drag it back to the first exclusive gateway. Your workflow should look similar to the following diagram:

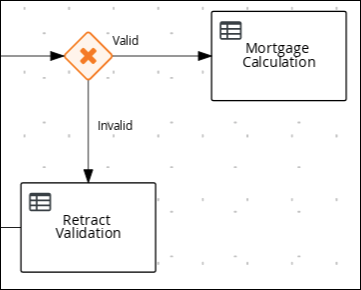

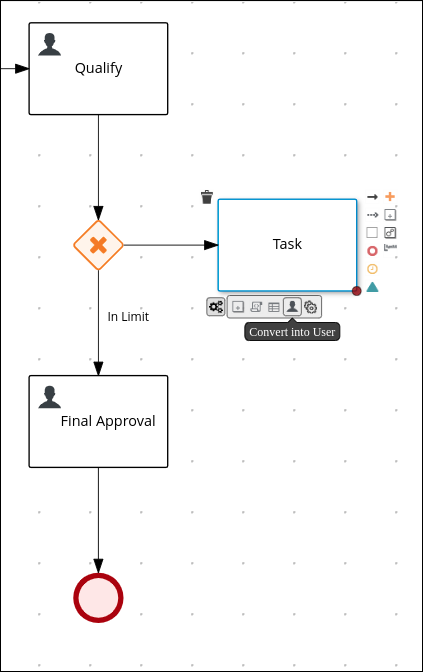

3.5.1.3. Calculating the mortgage

The mortgage calculation business process determines the applicant’s mortgage borrowing limit.

-

Return to the second exclusive gateway and create an outgoing connection to a new business rule task.

-

Click the created connection and in the Properties panel, input

Validin the Name field.-

Expand Implementation/Execution and select Expression in the Condition Expression section.

-

From the list, select drools and enter

not ValidationErrorDO()in the Condition Expression field.

-

-

Click the new business rule task and in the Properties panel, input

Mortgage Calculationin the Name field.

-

Expand Implementation/Execution, select New from the Rule Flow Group menu, and input

mortgagecalculation.

-

-

Expand Data Assignments and click

next to Assignments.

next to Assignments. -

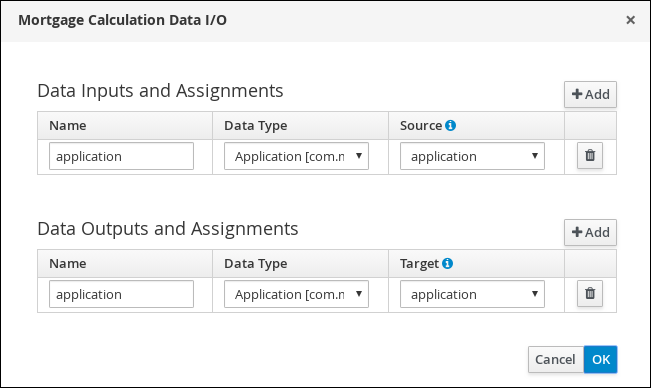

In the Mortgage Calculation Data I/O window, click Add to create the following assignments and click Save.

Figure 14. Mortgage Calculation Data I/O assignments

Figure 14. Mortgage Calculation Data I/O assignments -

Click OK in the Mortgage Calculation Data I/O window.

-

Click an empty space on the canvas, scroll down, expand Process Data, and click

next to Process Variables. Enter the following values:

next to Process Variables. Enter the following values:-

Name:

inlimit -

Data Type:

Boolean

-

-

Create an outgoing connection from the Mortgage Calculation task to a new user task.

-

Click the user task, enter

Qualifyin the Name field. -

Expand Implementation/Execution and enter

Qualifyin the Task Name field. -

Select New from the Groups menu, and input

approver. -

Click

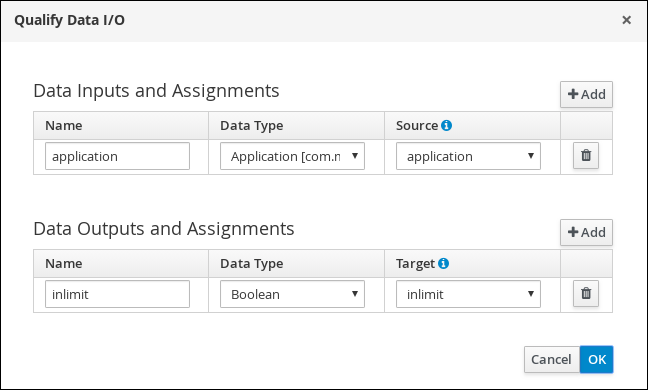

next to Assignments. In the Qualify Data I/O window, click Add to create the following assignments:

next to Assignments. In the Qualify Data I/O window, click Add to create the following assignments: Figure 15. Qualify Data I/O assignments

Figure 15. Qualify Data I/O assignments -

Click OK in the Qualify Data I/O window.

-

Above the canvas, click Save to confirm your changes.

-

Click the Qualify user task, click the Create parallel menu icon, and convert it to an exclusive gateway.

-

Drag the new exclusive gateway below the Qualify user task.

-

Create an outgoing connection from the exclusive gateway and connect it to a new user task.

-

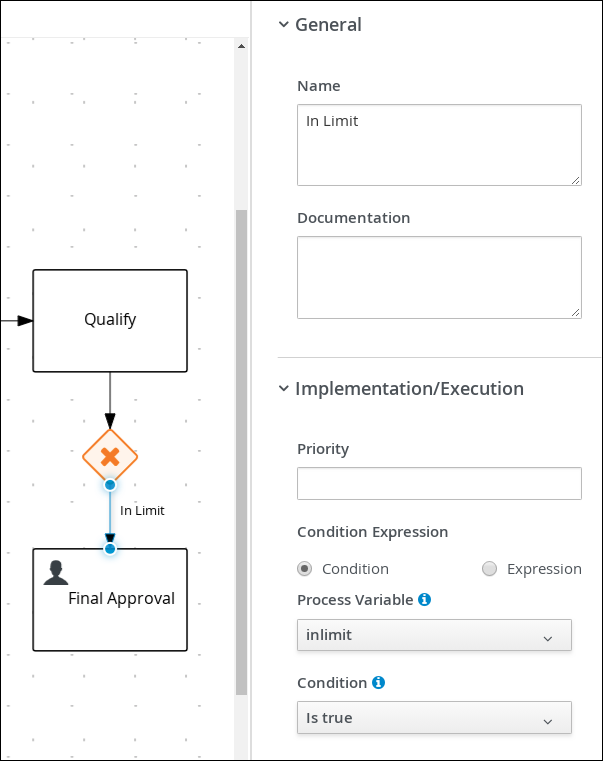

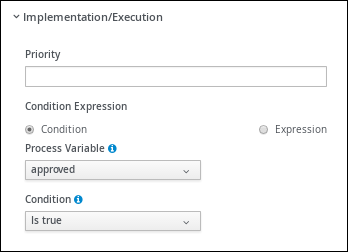

Click the connection and input

In Limitin the Name field of the Properties panel. -

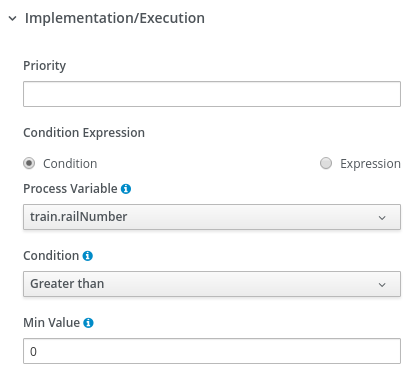

Expand Implementation/Execution and select Condition in the Condition Expression section.

-

Select inlimit from the Process Variable drop-down menu and select Is true from the Condition drop-down menu.

-

Click the user task, enter

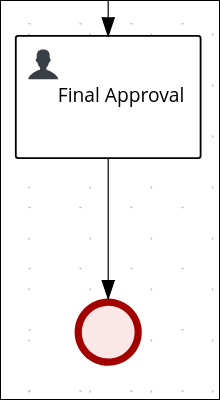

Final Approvalin the Name field. -

Expand Implementation/Execution and enter

FinalApprovalin the Task Name field. -

Select New from the Groups menu, and input

manager. -

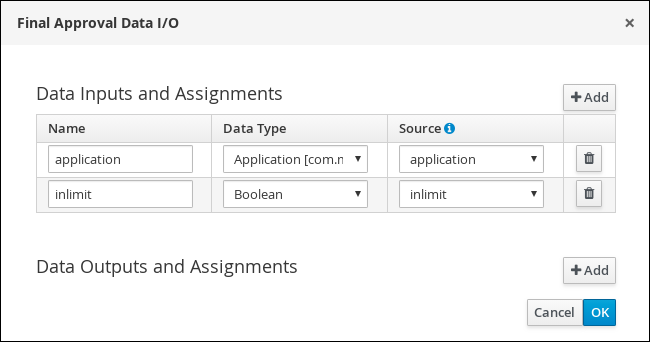

Click

next to Assignments. In the Final Approval Data I/O window, click Add to create the following assignments:

next to Assignments. In the Final Approval Data I/O window, click Add to create the following assignments: Figure 16. Final Approval Data I/O assignments

Figure 16. Final Approval Data I/O assignments -

Click OK in the Final Approval Data I/O window.

-

Above the canvas, click Save to confirm your changes.

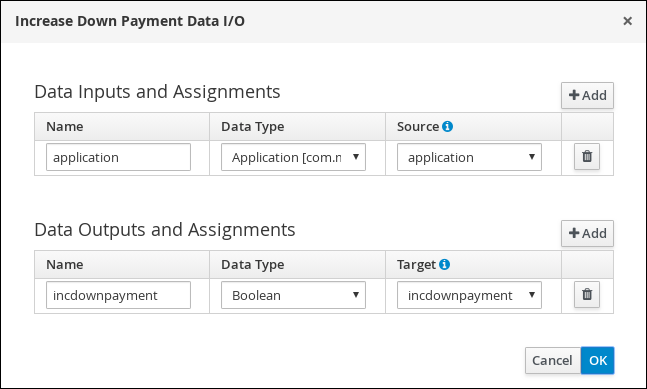

3.5.1.4. Increasing the down payment

The Increasing the Down Payment business process checks to see if the applicant qualifies for the loan by increasing their down payment. The final result is either the final loan approval, or loan denial based on the applicant’s inability to increase the down payment.

-

Click the Final Approval user task and select Create End from the user task quick menu.

-

Move the end event below the Final Approval user task.

-

Return to the exclusive gateway that connects with the Final Approval user task. Create a second outgoing connection and connect it to a new user task.

-

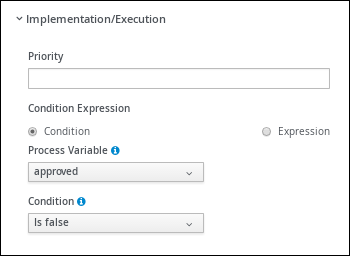

Click the connection and input

Not in Limitin the Name field of the Properties panel. -

Expand Implementation/Execution and select Condition in the Condition Expression section.

-

Select inlimit from the Process Variable drop-down menu and select Is false from the Condition drop-down menu.

-

Click an empty space on the canvas, scroll down, expand Process Data, and click

next to Process Variables. Enter the following values:

next to Process Variables. Enter the following values:-

Name:

incdownpayment -

Data Type:

Boolean

-

-

Click the new user task and in the Properties panel, input

Increase Down Paymentin the Name field. -

Expand Implementation/Execution and enter

IncreaseDownPaymentin the Task Name field. -

Select New from the Groups menu, and input

broker. -

Click

next to Assignments. In the Increase Down Payment Data I/O window, click Add to create the following assignments:

next to Assignments. In the Increase Down Payment Data I/O window, click Add to create the following assignments: Figure 17. Increase Down Payment Data I/O assignments

Figure 17. Increase Down Payment Data I/O assignments -

Click OK in the Increase Down Payment Data I/O window.

-

Above the canvas, click Save to confirm your changes.

-

Click the Increase Down Payment user task, click the Create parallel menu icon, and convert it to an exclusive gateway.

-

Drag the new exclusive gateway below the Increase Down Payment user task.

-

Create an outgoing connection from the exclusive gateway to an end event.

-

Click the connection and input

Down payment not increasedin the Name field of the Properties panel. -

Expand Implementation/Execution and select Expresssion in the Condition Expression section.

-

Input return !incdownpayment; and select java from the drop-down menu.

-

Create an outgoing connection from the exclusive gateway and connect it to the first exclusive gateway.

-

Click the connection and input

Down payment increasedin the Name field of the Properties panel. -

Expand Implementation/Execution and select Expresssion in the Condition Expression section.

-

Input return incdownpayment; and select java from the drop-down menu.

-

Above the canvas, click Save to confirm your changes and save the entire business process.

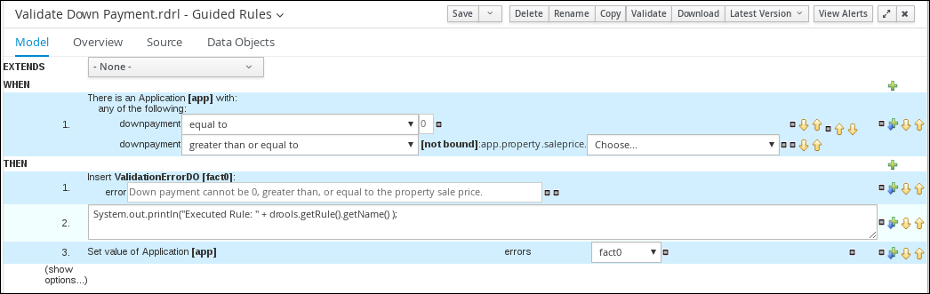

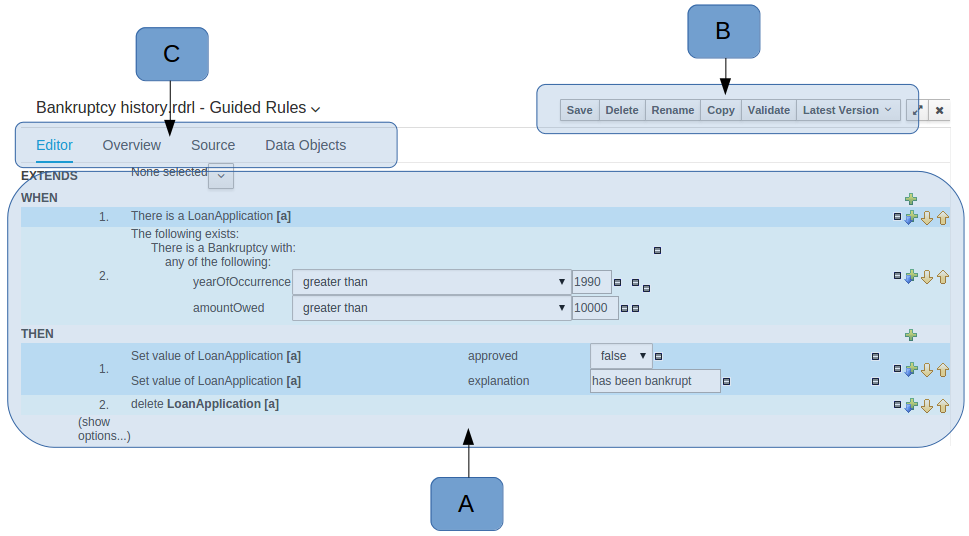

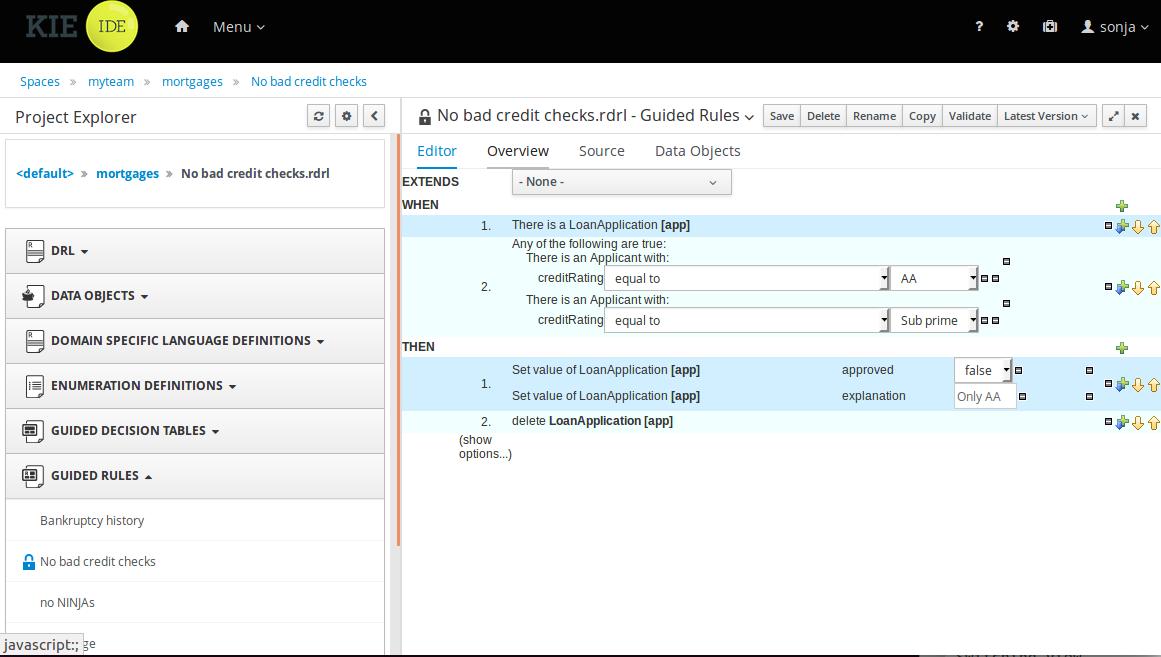

3.5.2. Viewing the Mortgage_Process business rules

The goal of this chapter is to introduce you to the predefined business rules for the Mortgage_Process project. For this tutorial, you do not create and define the business rules. Instead, review the WHEN and THEN rules that are already defined in the Mortgage_Process sample project’s preconfigured business rules. For information about creating guided business rules, see Designing a decision service using guided rules.

3.5.2.1. Viewing the Validate Down Payment guided rule

Review the WHEN and THEN rules so that you understand how the conditions are set and used later when you run the process.

-

Click Menu → Design → Projects, and click Mortgage_Process.

-

From the asset list, click the right arrow to view the second page of the asset list and click the Validate Down Payment guided rule.

-

Review the Validate Down Payment guided rule’s WHEN and THEN conditions and values.

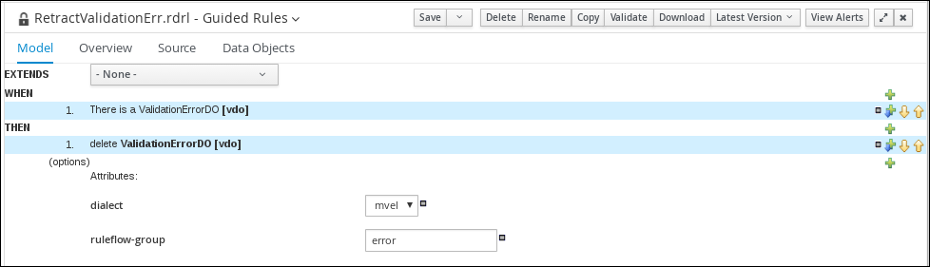

3.5.2.2. Viewing the RetractValidationErr guided rule

Review the WHEN and THEN rules so that you understand how the conditions are set and used later when you run the process.

-

Click Menu → Design → Projects, and click Mortgage_Process.

-

From the asset list, click the right arrow to view the second page of the asset list and click the RetractValidationErr guided rule.

-

Review the RetractValidationErr guided rule’s WHEN and THEN conditions and values.

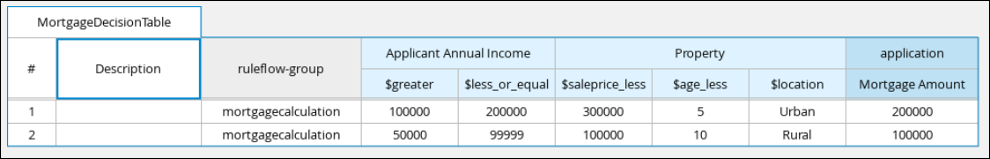

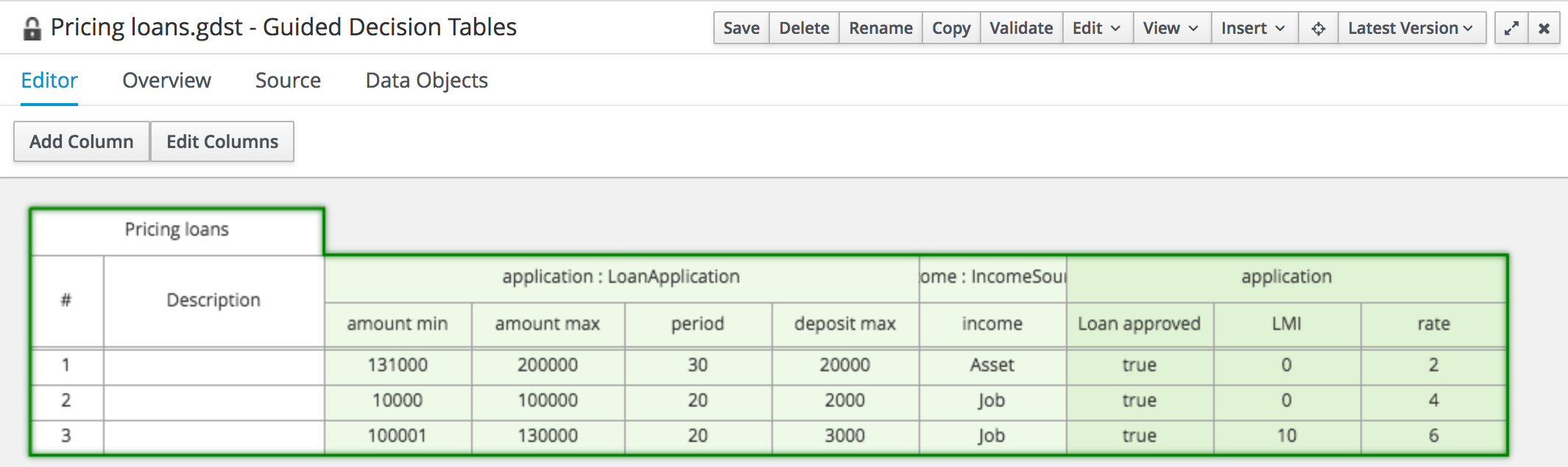

3.5.3. Viewing the mortgage decision table

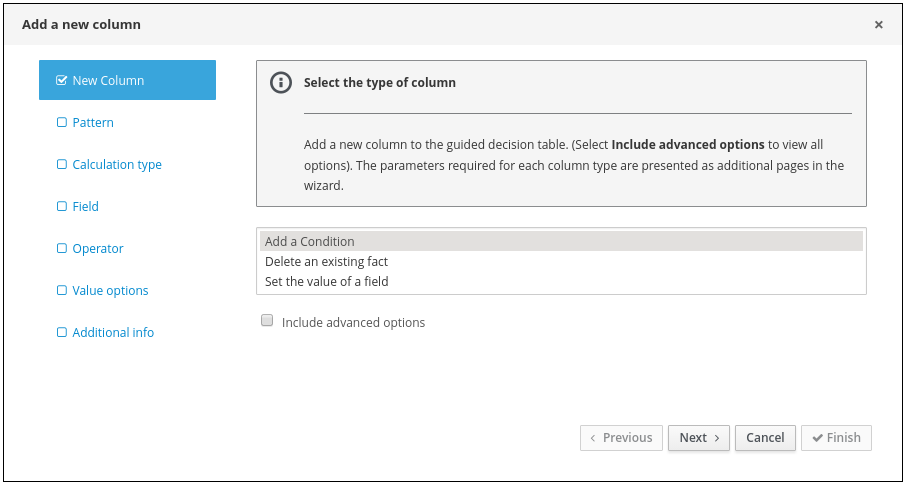

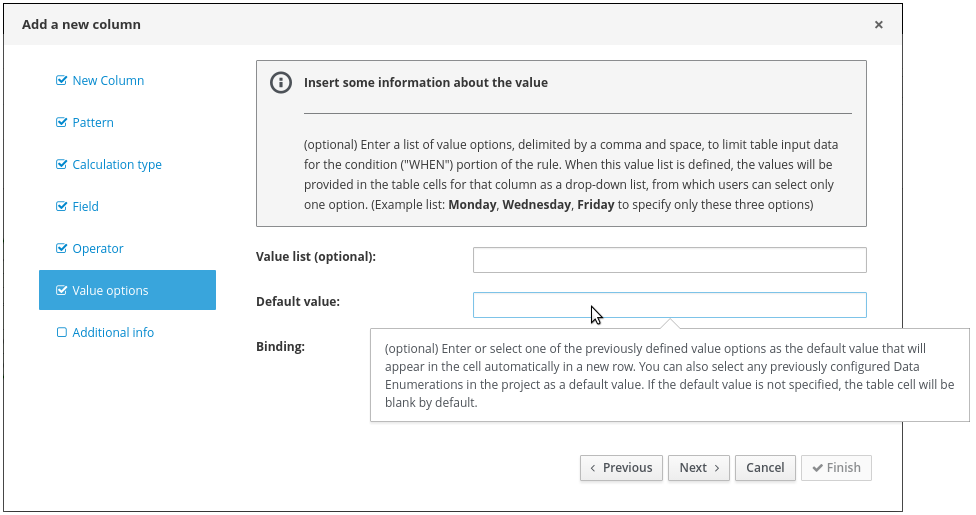

The goal of this chapter is to introduce you to the MortgageDecisionTable decision table. For this tutorial, you do not create and set the decision table conditions. Instead, review the values and the conditions that are already defined in the Mortgage_Process sample project’s MortgageDecisionTable Guided Decision Tables asset. For information about creating decision tables, see Designing a decision service using guided decision tables.

-

The business rules have been defined. For more information, see Viewing the Mortgage_Process business rules.

-

In Business Central, go to Menu → Design → Projects → Mortgage_Process.

-

Scroll down and click the MortgageDecisionTable Guided Decision Tables asset.

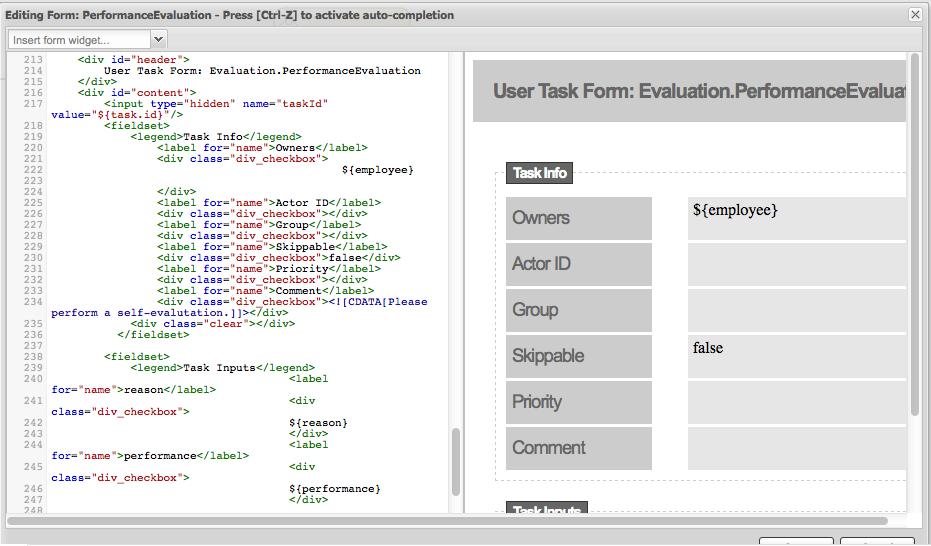

3.6. Forms in Business Central

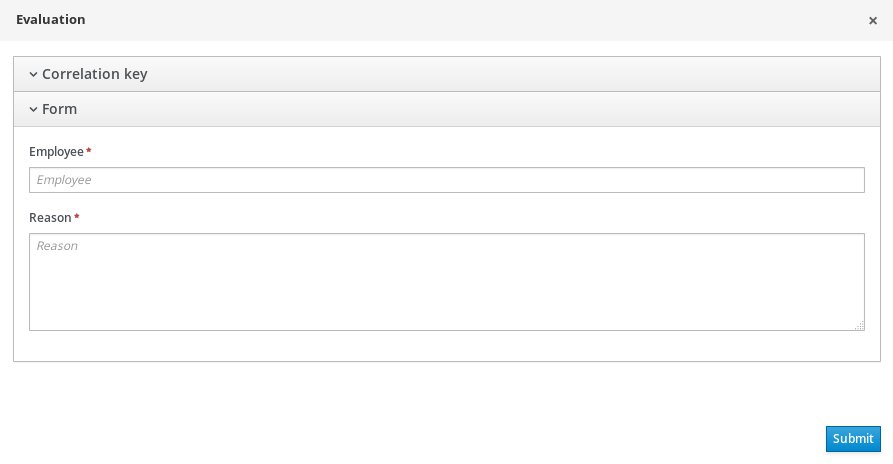

A form is a layout definition for a page, defined as HTML, that is displayed as a dialog window to the user during process and task instantiation. Task forms acquire data from a user for both the process and task instance execution, whereas process forms take input and output from process variables.

The input is then mapped to the task using the data input assignment, which you can use inside of a task. When the task is completed, the data is mapped as a data output assignment to provide the data to the parent process instance.

3.6.1. Viewing the Mortgage_Process forms

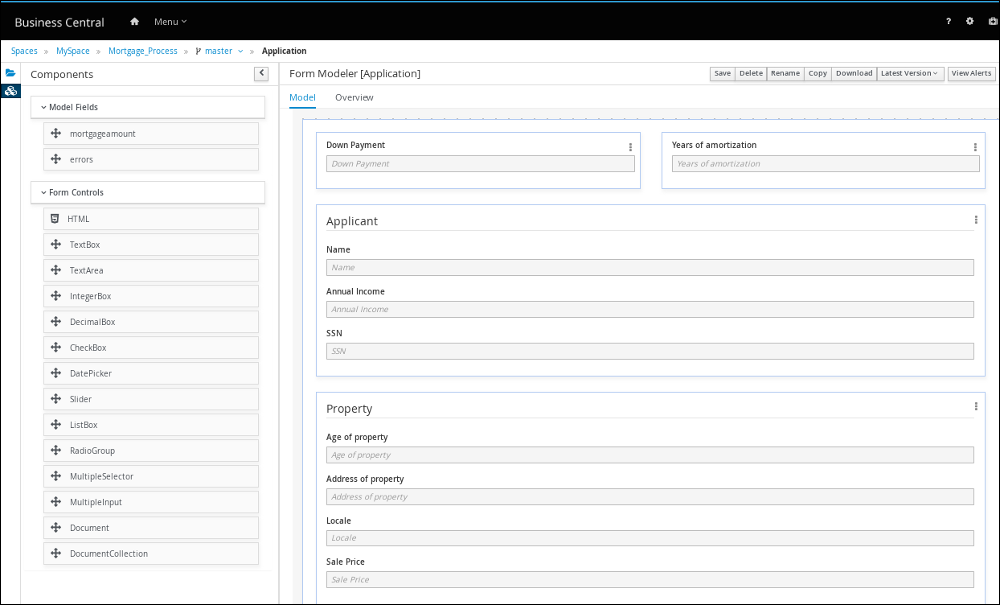

The goal of this chapter is to introduce you to the Mortgage_Process sample project’s predefined forms which are used for collecting user data for the mortgage application business process. For this tutorial, you do not create and modify the Mortgage_Process forms. Instead, review the predefined sample forms. For information about creating forms, see Designing business processes using BPMN models.

-

In Business Central, go to Menu → Design → Projects → Mortgage_Process.

-

From the asset list, click the right arrow to view the second page of the asset list and select the Applicant form.

Figure 19. Applicant sample form

Figure 19. Applicant sample form -

Click Menu → Design → Projects → Mortgage_Process.

-

From the asset list, select the Property form. The Property form is shown in the following screenshot:

Figure 20. Property sample form

Figure 20. Property sample form -

Click Menu → Design → Projects → Mortgage_Process.

-

From the asset list, select the Application form. The Application form is shown in the following screenshot:

Figure 21. Application sample form

Figure 21. Application sample form -

Click the X icon in the upper-right corner to close the editor.

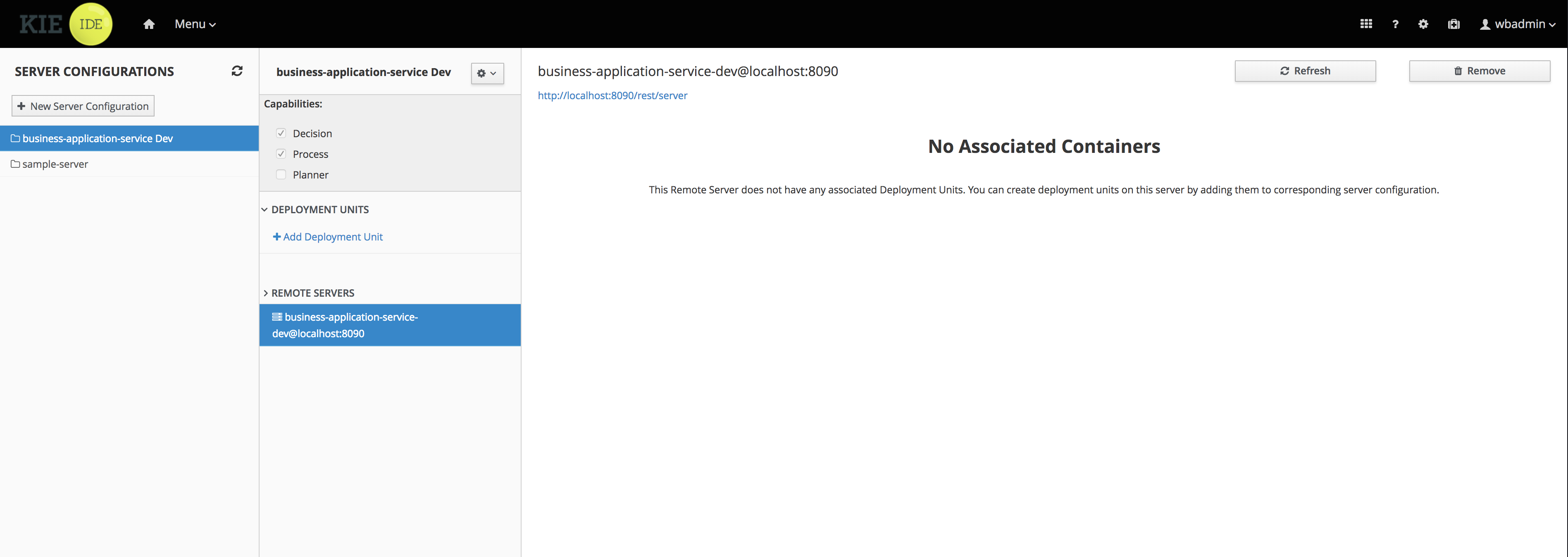

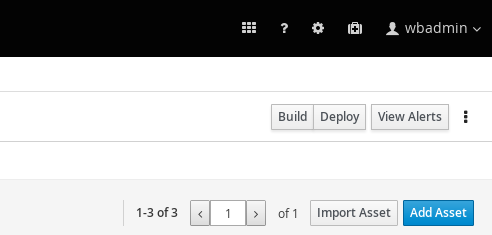

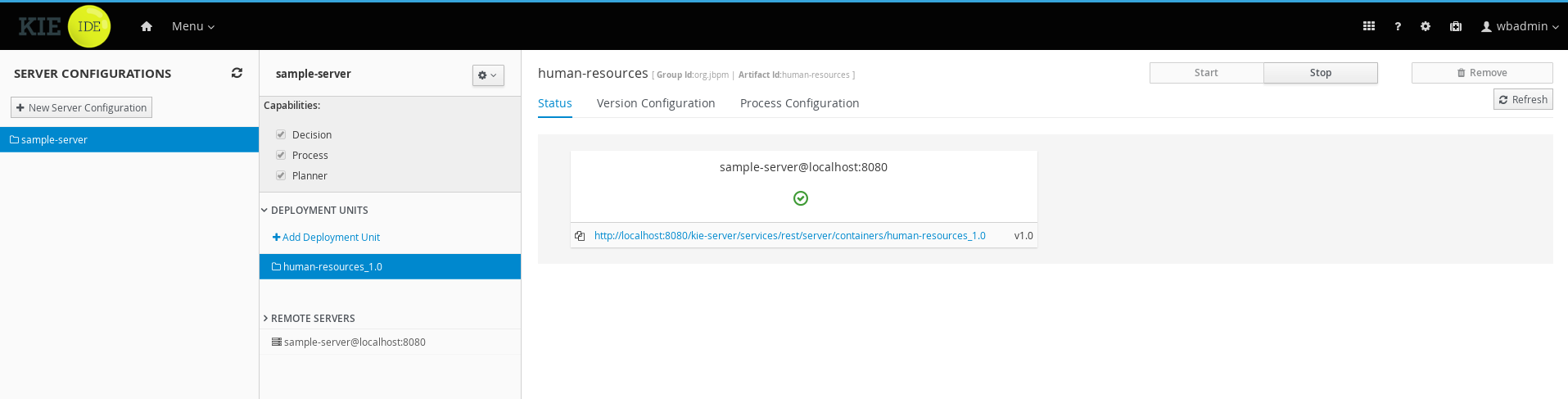

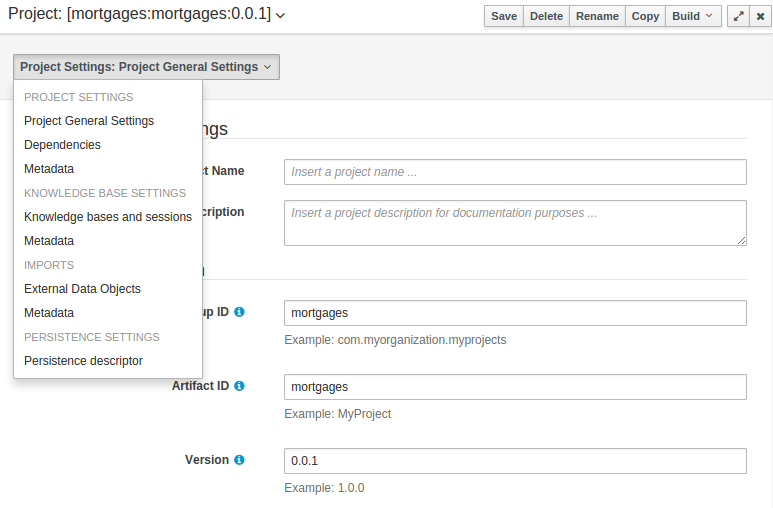

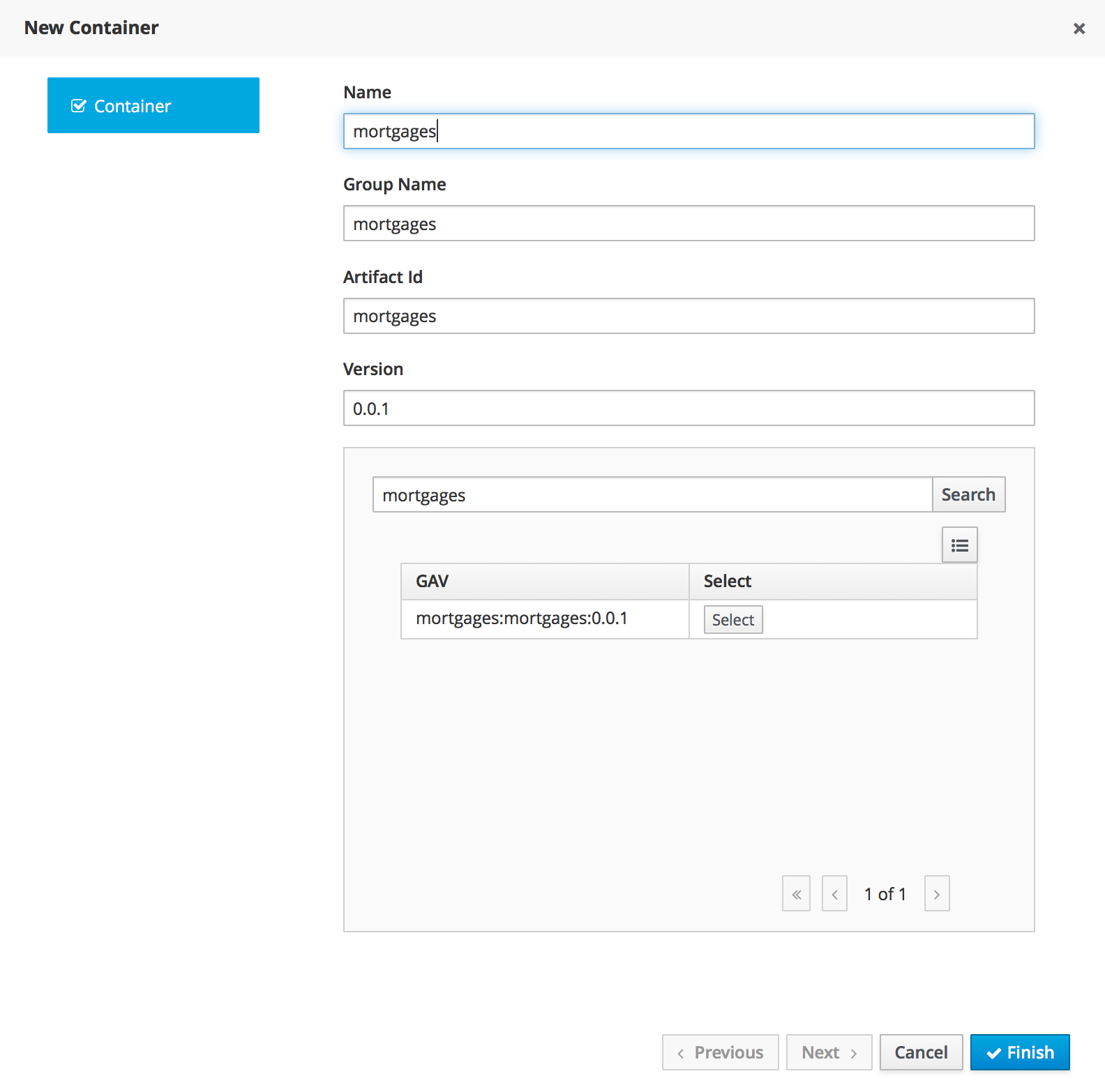

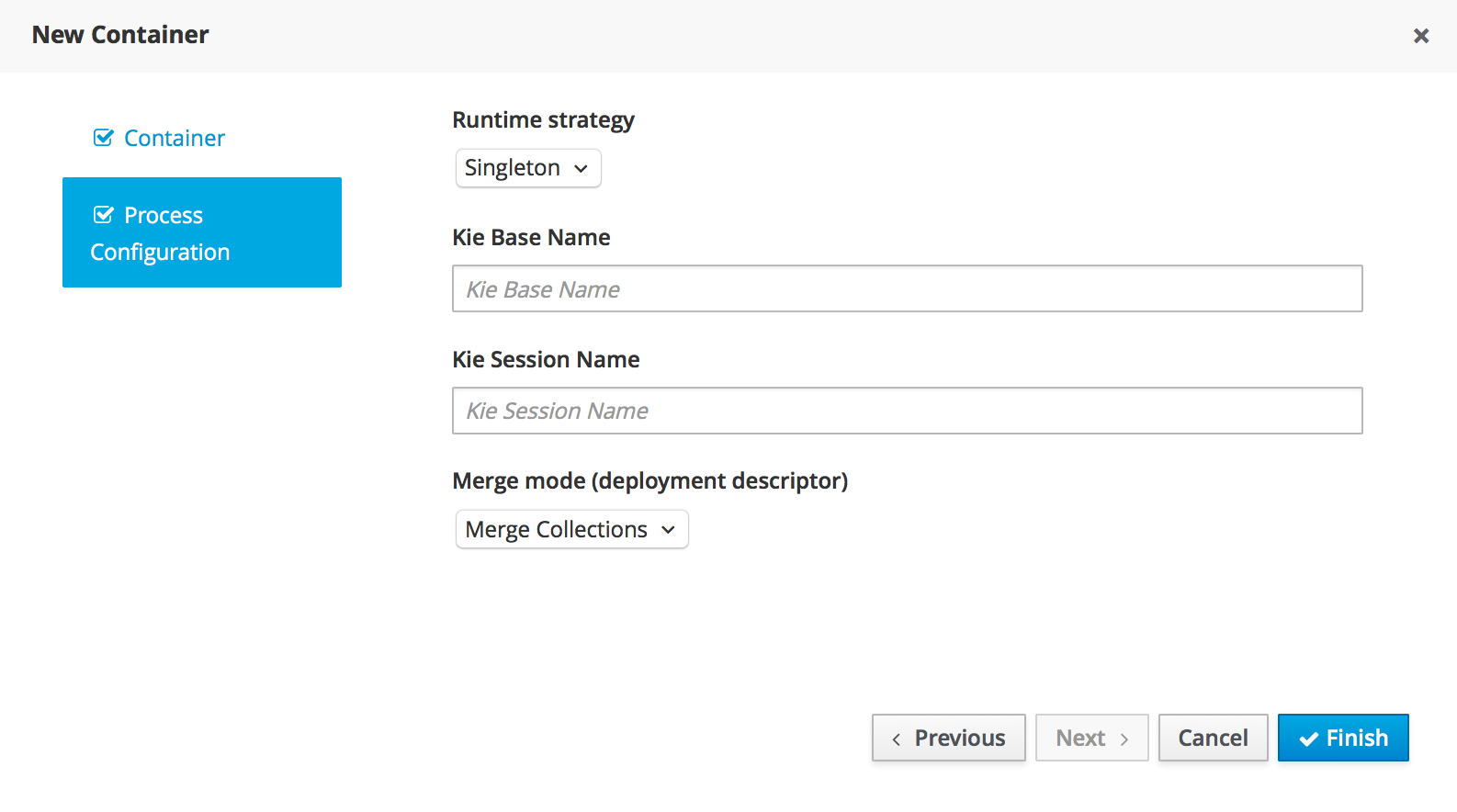

3.7. Deploying the MortgageApprovalProcess process application

The following chapter instructs you how to build and deploy a new instance of the Mortgage_Process application in jBPM.

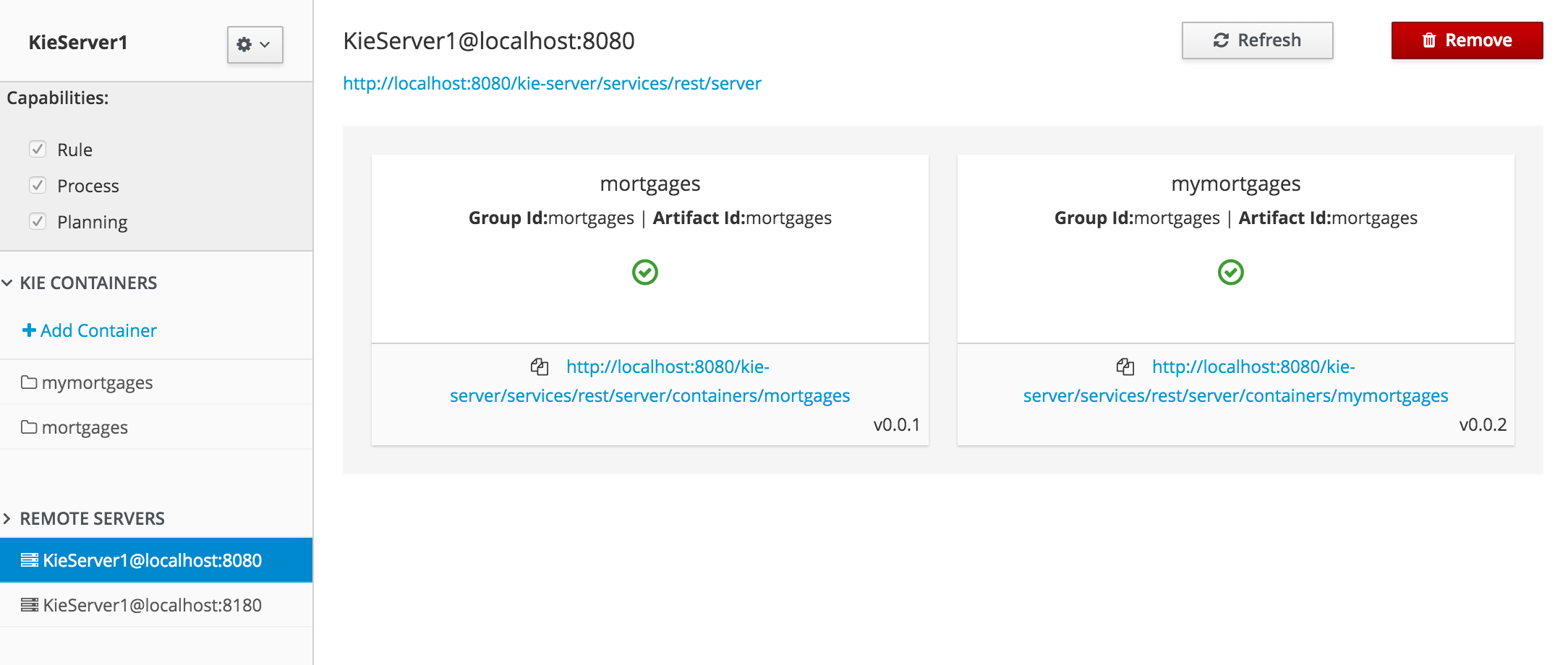

-

KIE Server is deployed and connected to Business Central.

-

In Business Central, go to Menu → Design → Projects → Mortgage_Process.

-

Click Deploy.

-

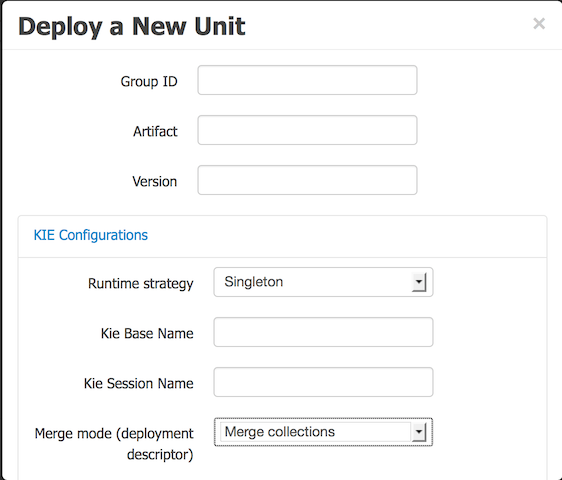

If no KIE container (deployment unit) is included with the project name, a container with default values is automatically created.

-

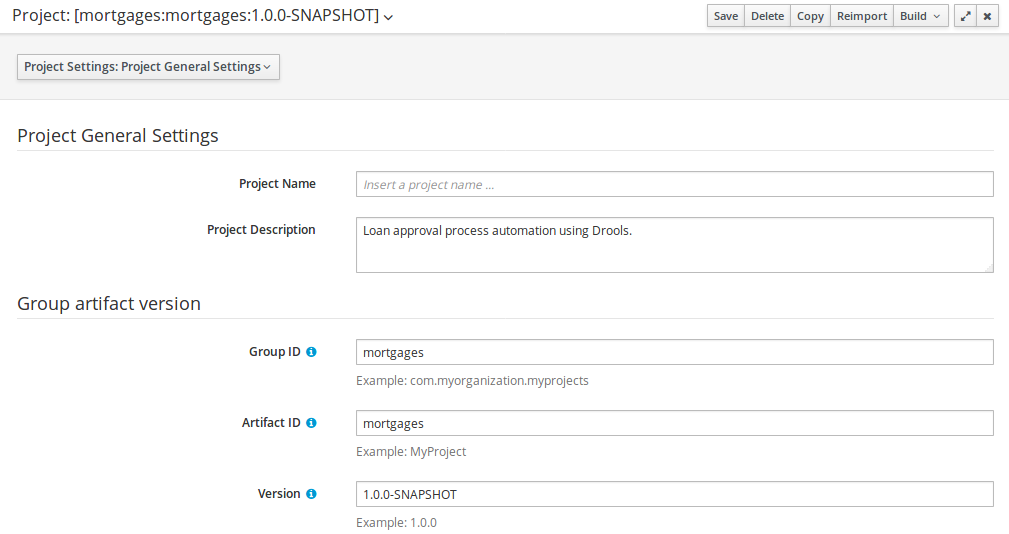

If an older version of the project is already deployed, go to the project settings and change the project version. When finished, save the change and click Deploy. This deploys a new version of the same project with the latest changes in place, alongside the older version(s).

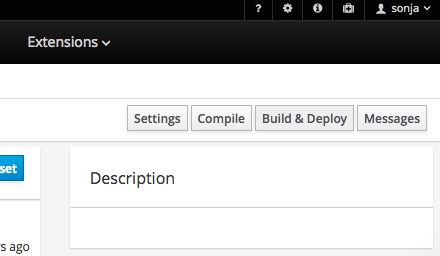

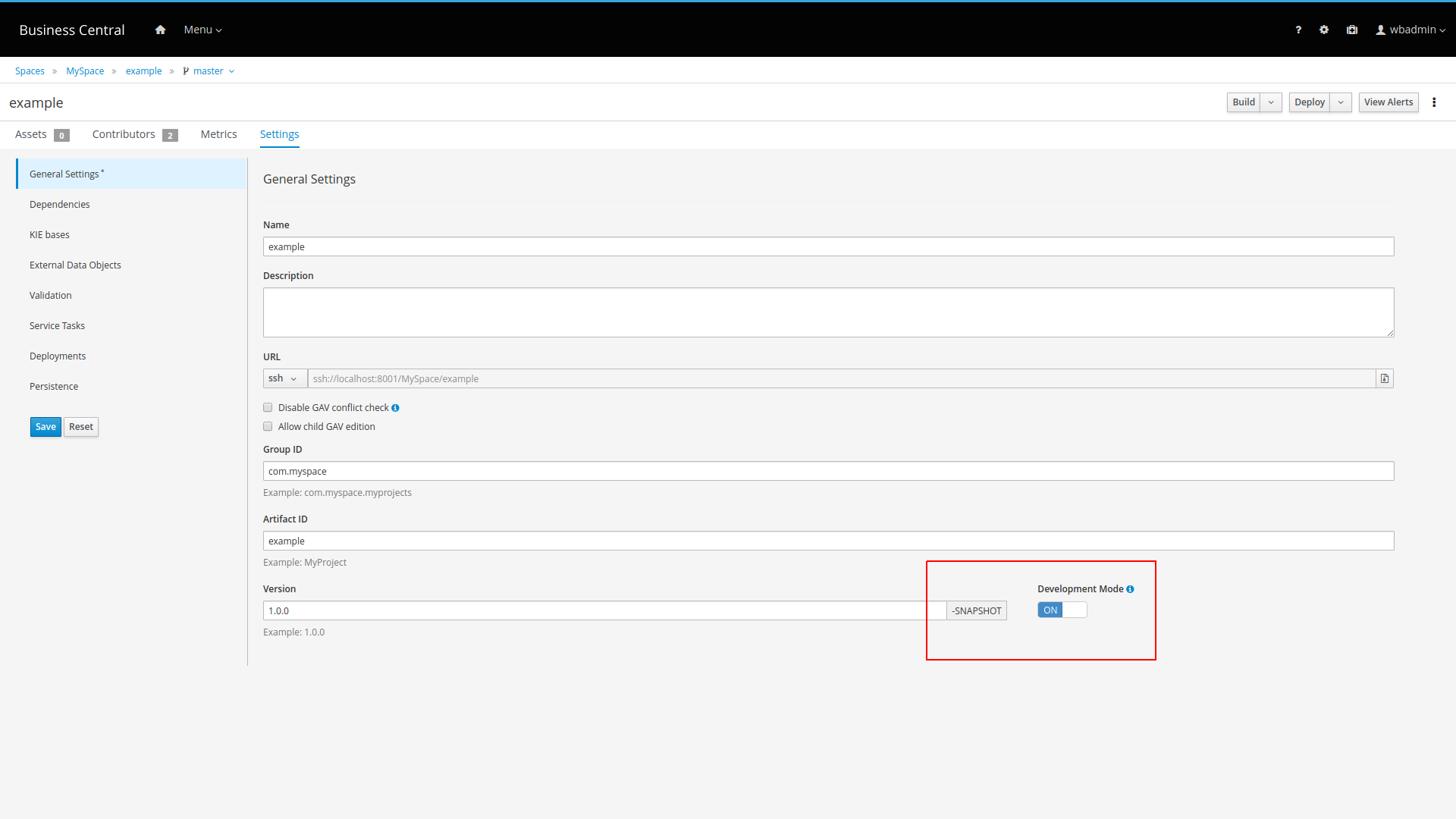

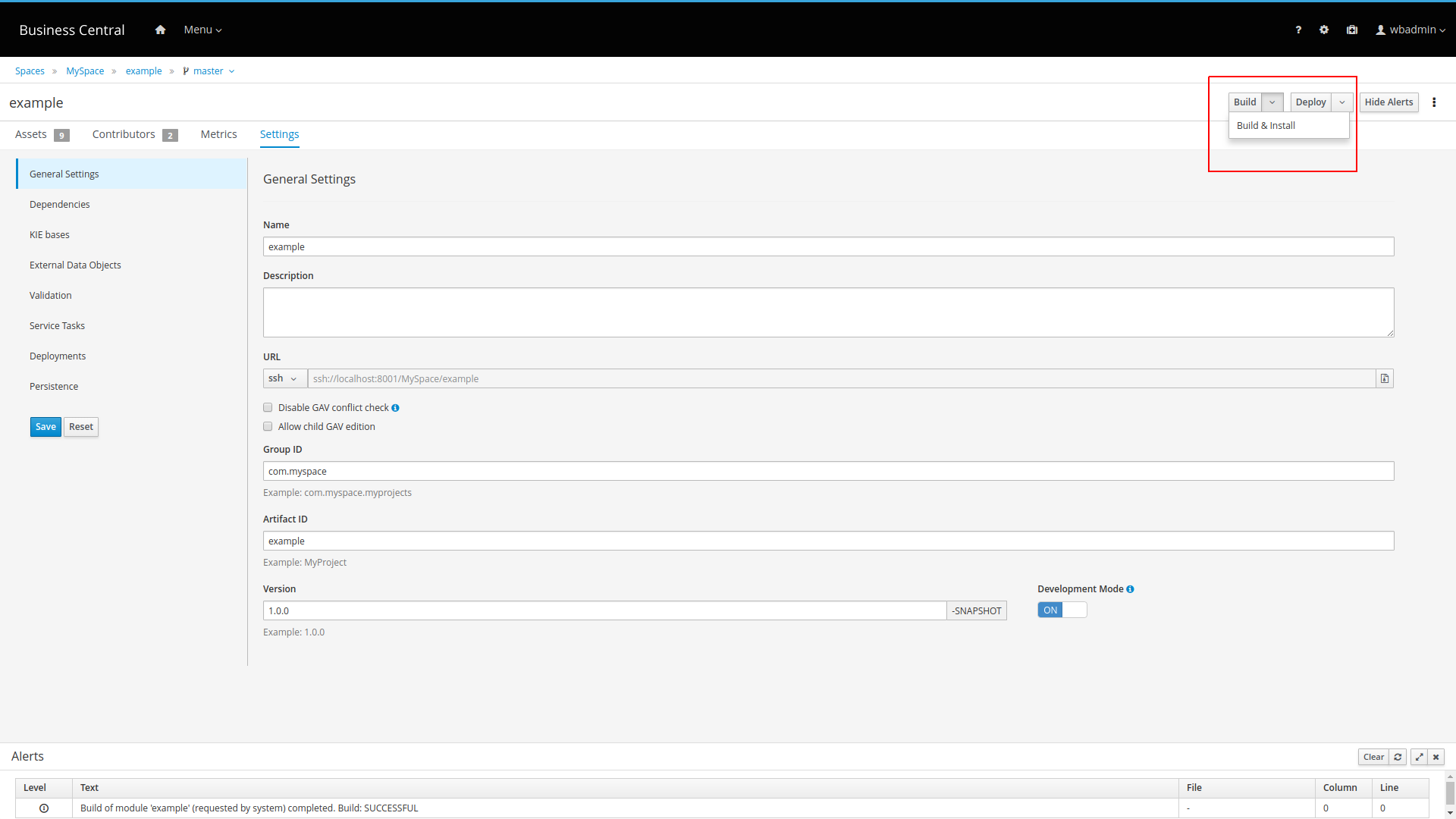

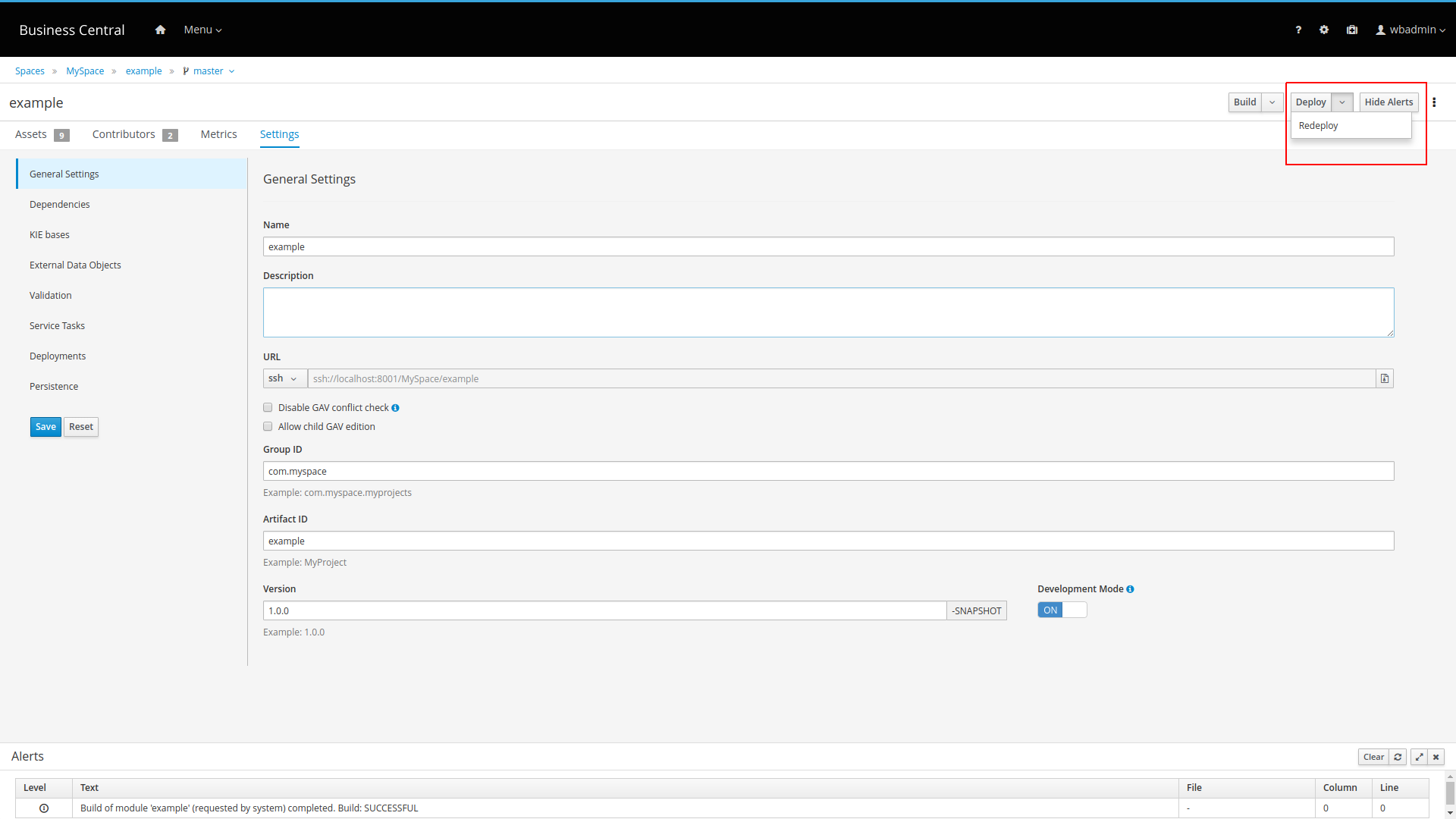

You can also select the Build & Install option to build the project and publish the KJAR file to the configured Maven repository without deploying to a KIE Server. In a development environment, you can click Deploy to deploy the built KJAR file to a KIE Server without stopping any running instances (if applicable), or click Redeploy to deploy the built KJAR file and replace all instances. The next time you deploy or redeploy the built KJAR, the previous deployment unit (KIE container) is automatically updated in the same target KIE Server. In a production environment, the Redeploy option is disabled and you can click Deploy only to deploy the built KJAR file to a new deployment unit (KIE container) on a KIE Server.

To configure the KIE Server environment mode, set the

org.kie.server.modesystem property toorg.kie.server.mode=developmentororg.kie.server.mode=production. To configure the deployment behavior for a corresponding project in Business Central, go to project Settings → General Settings → Version and toggle the Development Mode option. By default, KIE Server and all new projects in Business Central are in development mode. You cannot deploy a project with Development Mode turned on or with a manually addedSNAPSHOTversion suffix to a KIE Server that is in production mode.

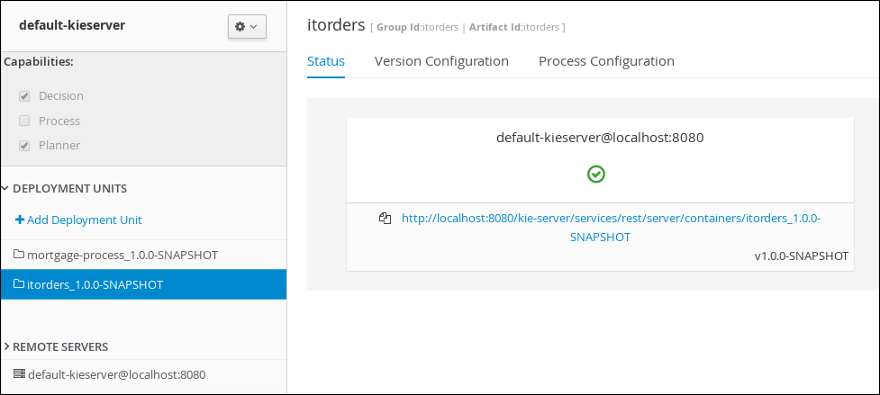

-

-

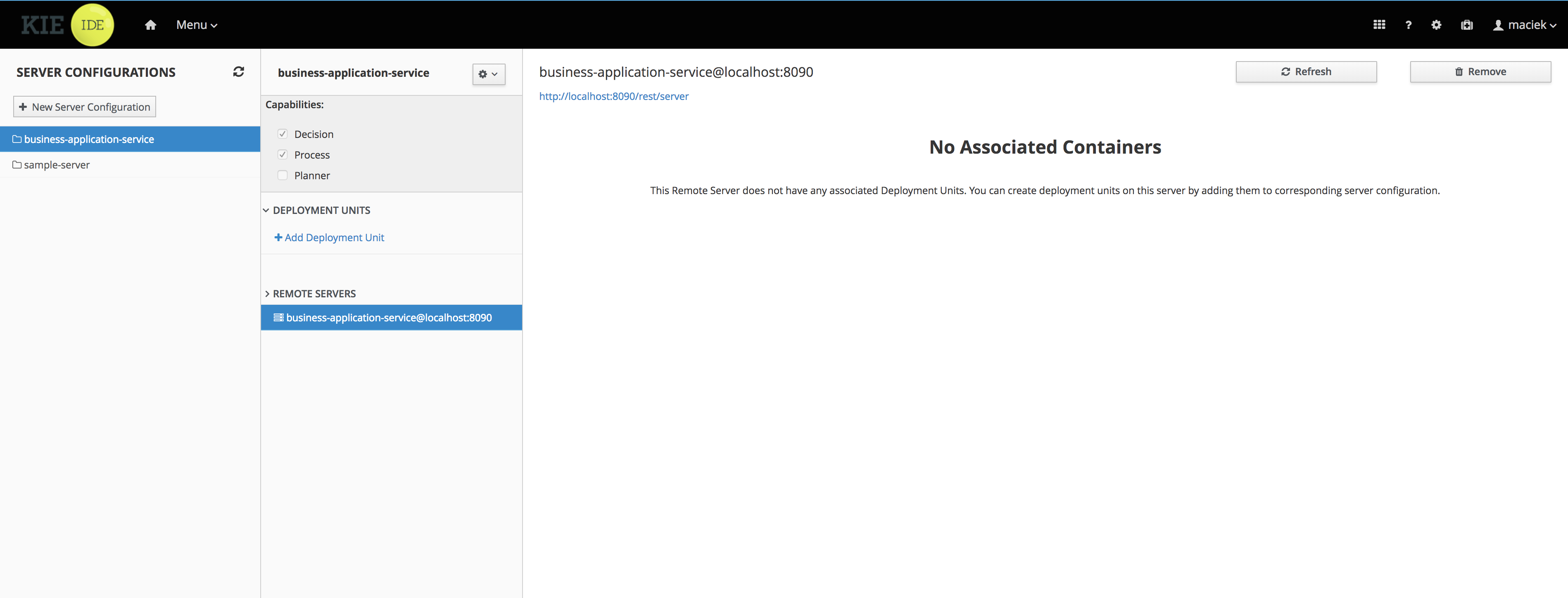

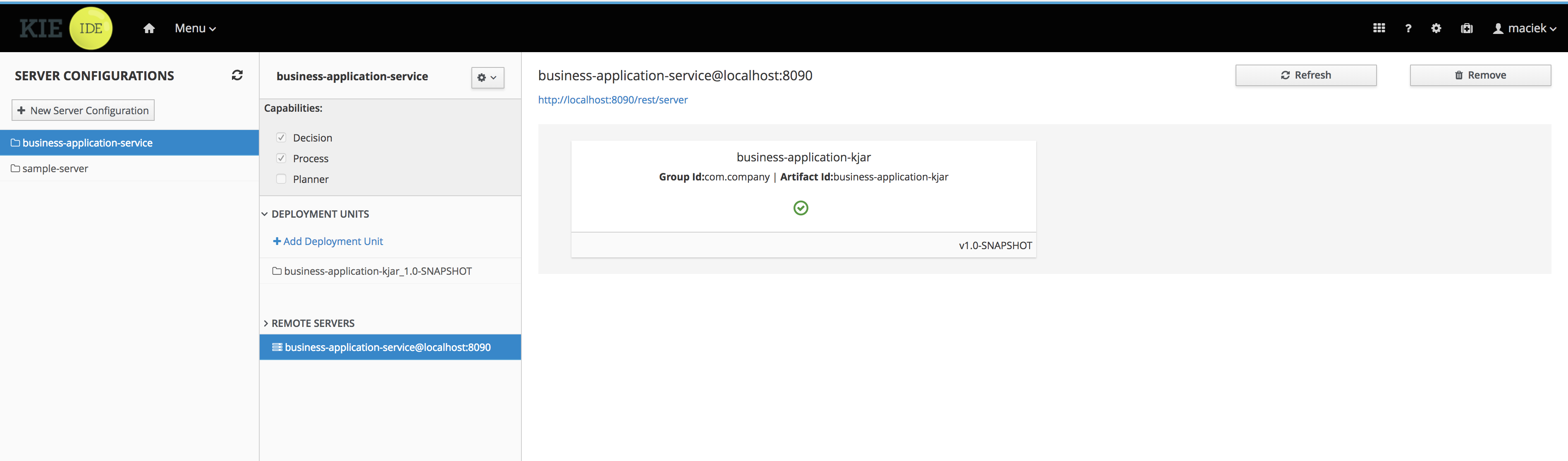

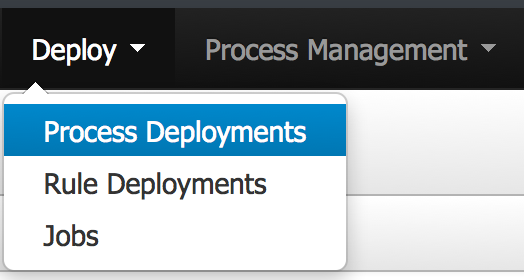

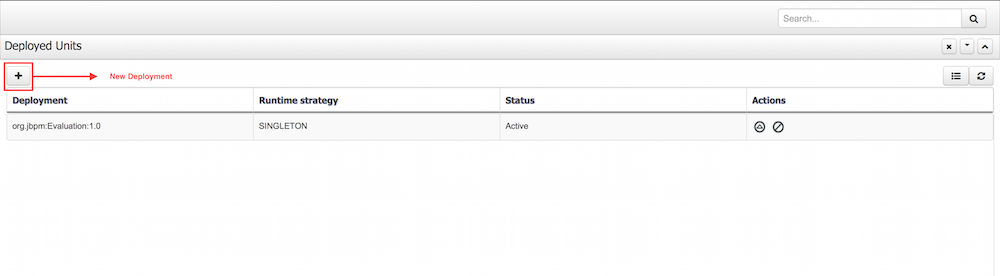

To review project deployment details, click View deployment details in the deployment banner at the top of the screen or in the Deploy drop-down menu. This option directs you to the Menu → Deploy → Execution Servers page.

3.8. Executing the MortgageApprovalProcess process application

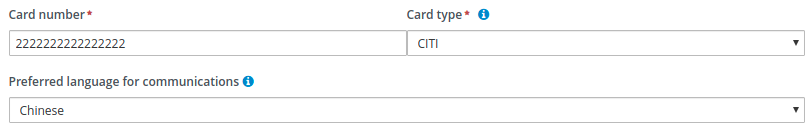

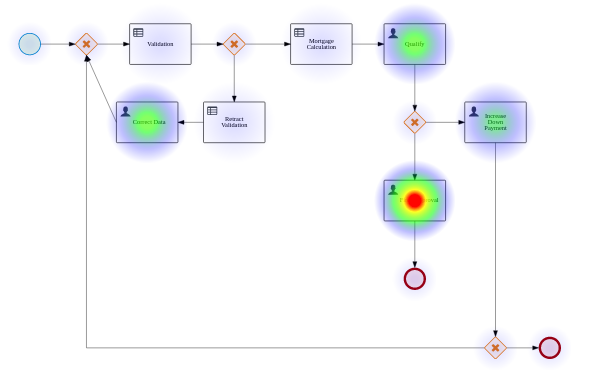

Now that you have deployed the project, you can execute the project’s defined functionality. For this tutorial you input data into a mortgage application form acting as the mortgage broker. The MortgageApprovalProcess business process runs and determines whether or not the applicant has offered an acceptable down payment based on the decision rules that you defined earlier. The business process either ends the rule testing or requests that the applicant increase the down payment to proceed. If the application passes the business rule testing, the bank’s approver reviews the application and either approve or deny the loan.

-

KIE Server is deployed and connected to Business Central.

-

The Mortgage_Process application has been deployed.

-

The users working on the tasks are members of the following groups and roles:

-

approver group: For the Qualify task

-

broker group: For the Correct Data and Increase Down Payment tasks

-

manager role: For the Final Approval task

-

-

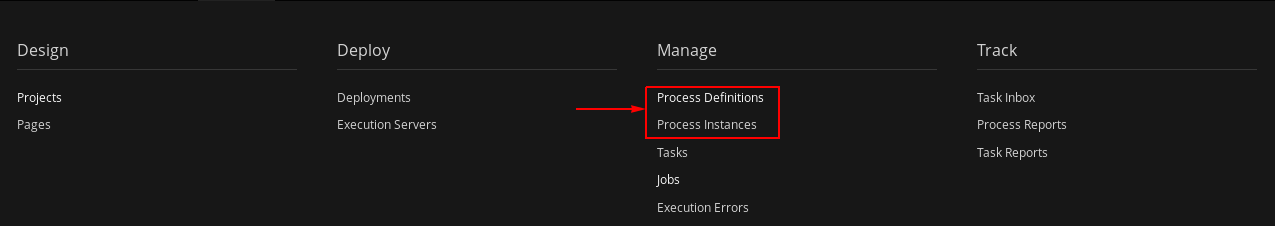

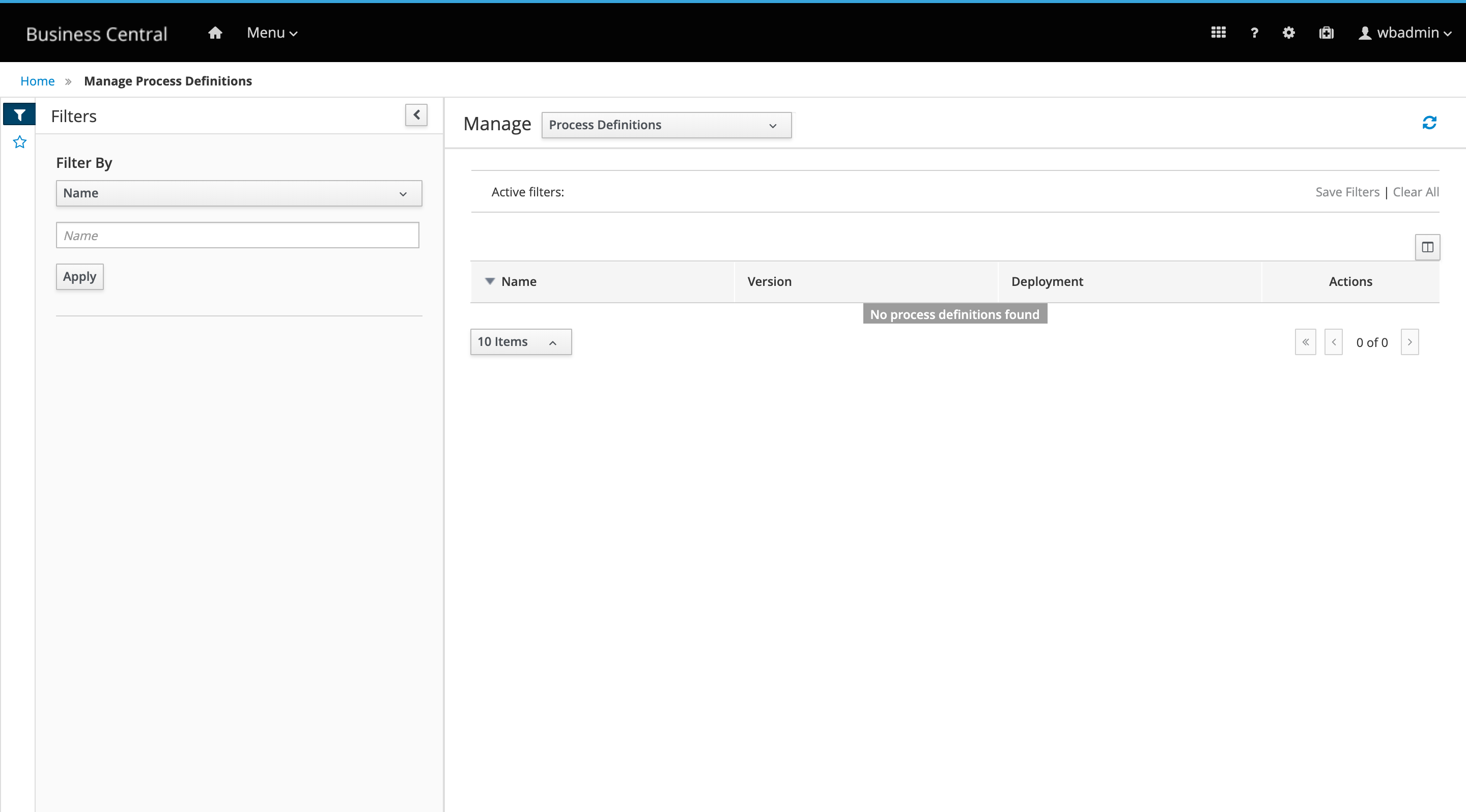

Log in to jBPM as

Bill(the broker) and click Menu → Manage → Process Definitions. -

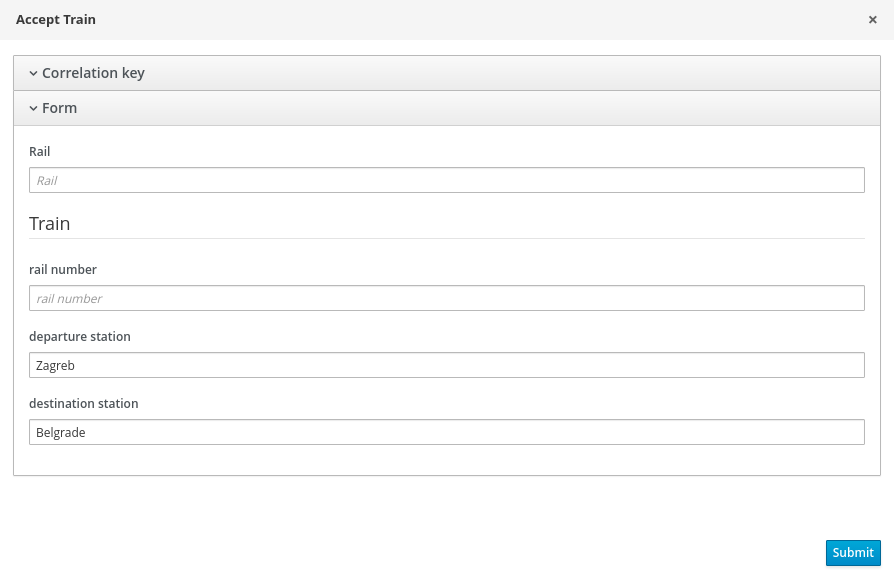

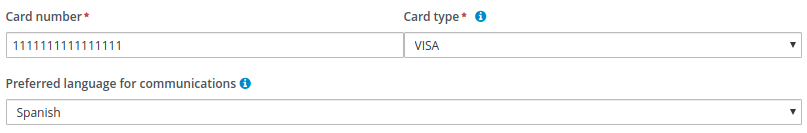

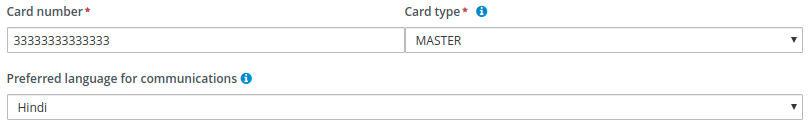

Click the three vertical dots in the Actions column and select Start to start to open the Application form and input the following values in to the form fields:

-

Down Payment:

30000 -

Years of amortization:

10 -

Name:

Ivo -

Annual Income:

60000 -

SSN:

123456789 -

Age of property:

8 -

Address of property:

Brno -

Locale:

Rural -

Property Sale Price:

50000

-

-

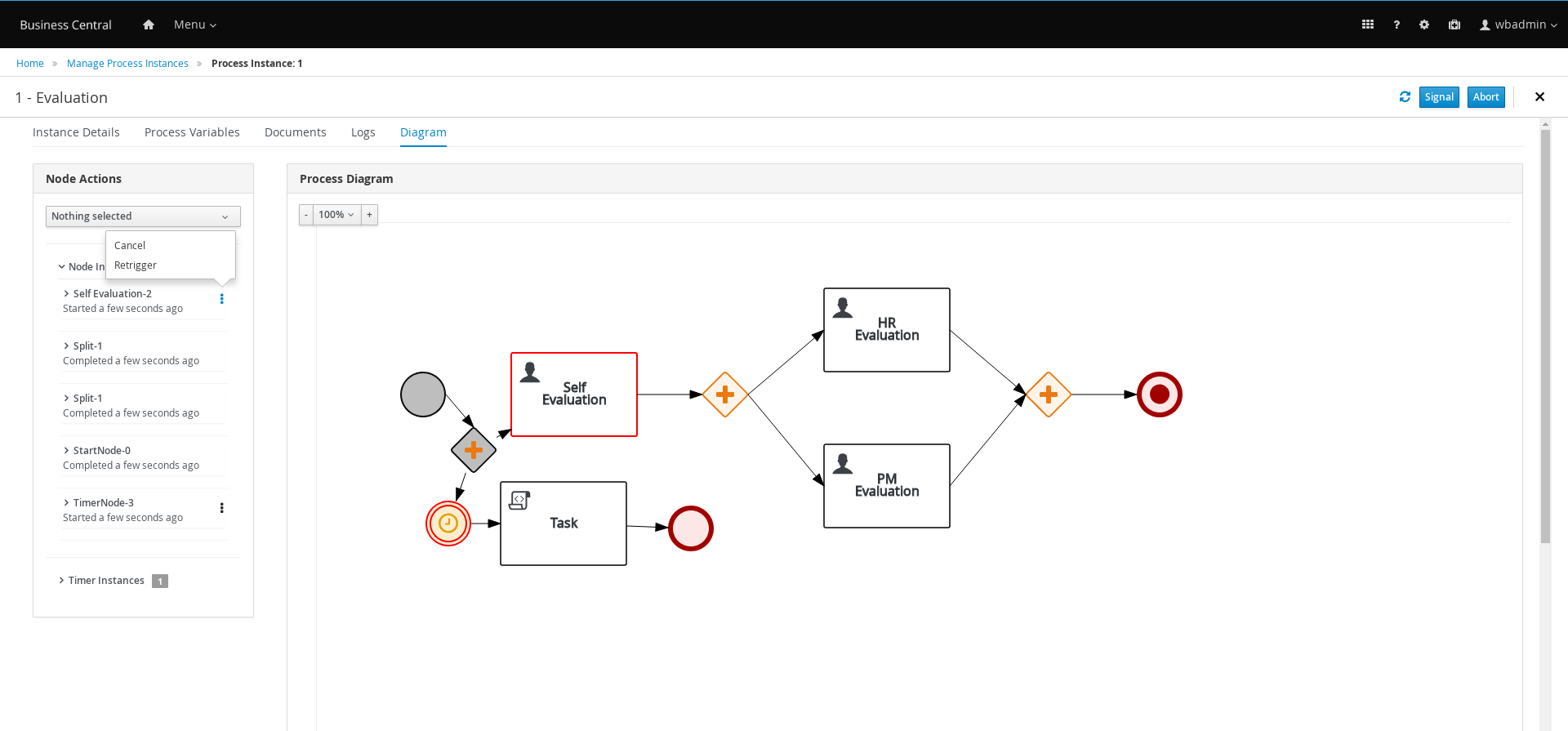

Click Submit to start a new process instance. After starting the process instance, the Instance Details view opens.

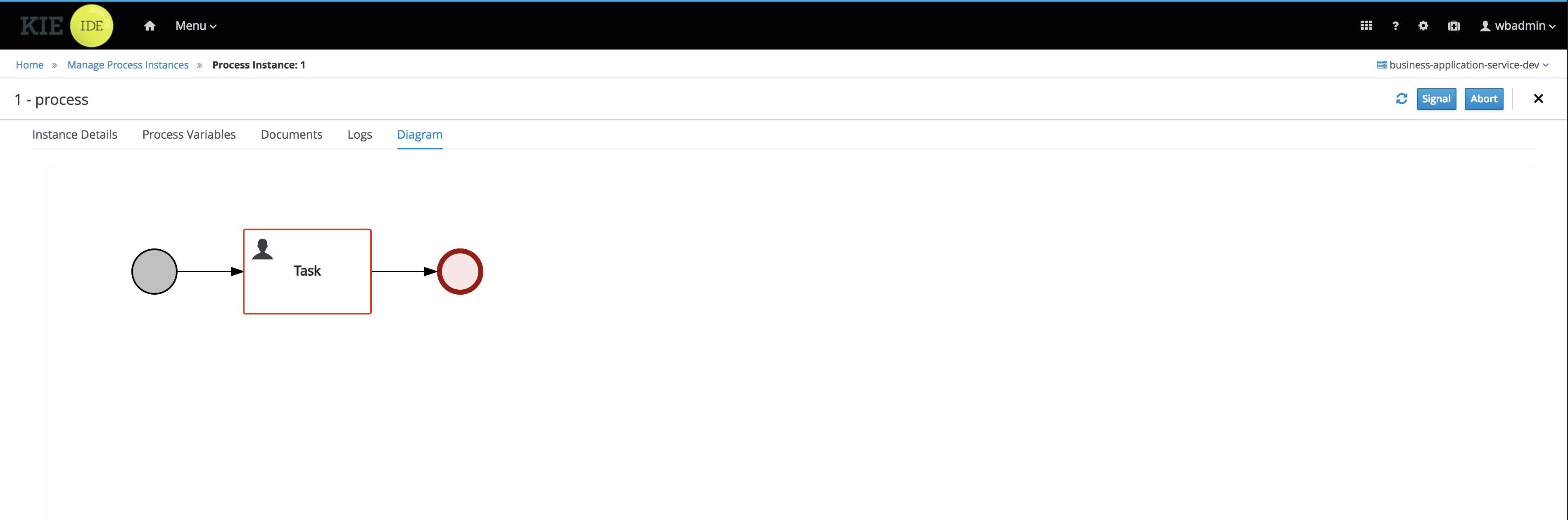

-

Click the Diagram tab to view the process flow within the process diagram. The state of the process is highlighted as it moves through each task.

-

Log out of Business Central and log back in as

Katy. -

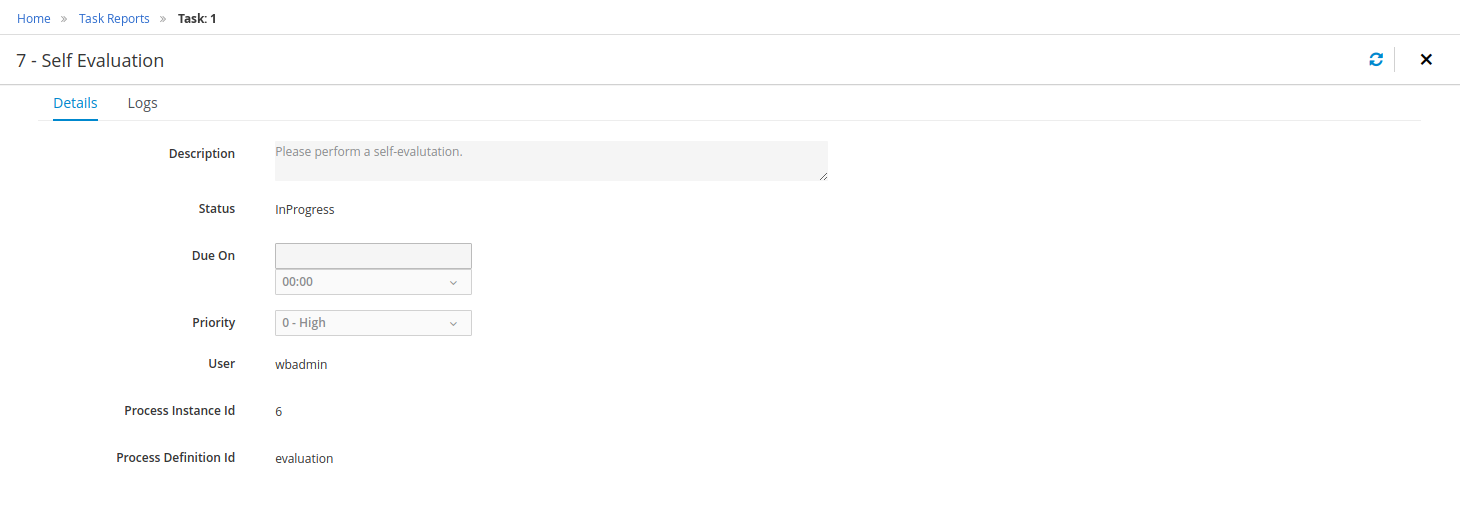

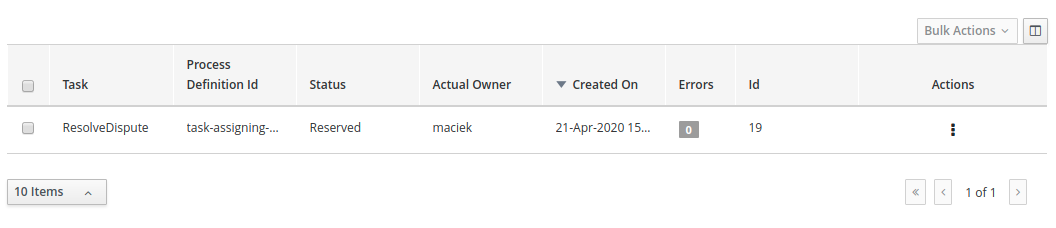

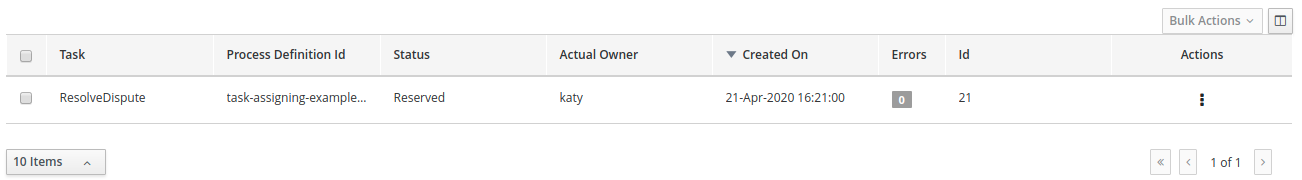

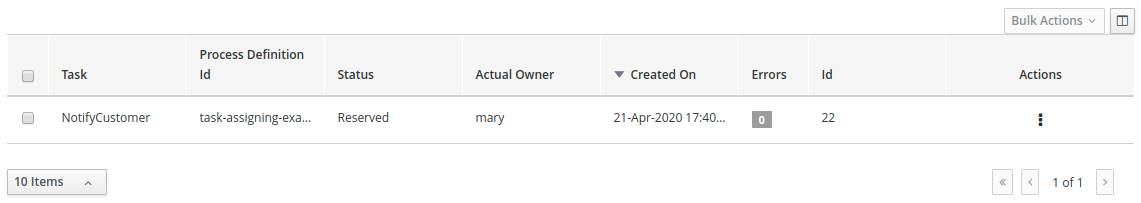

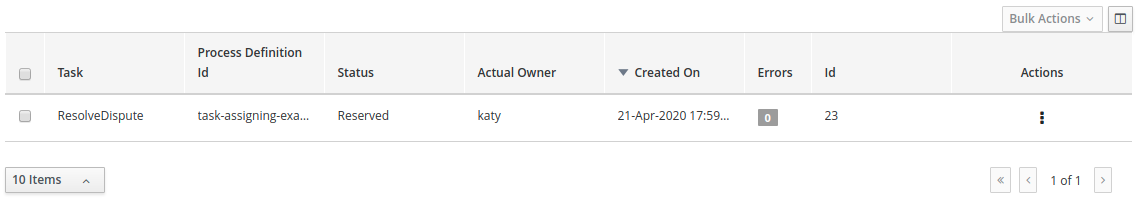

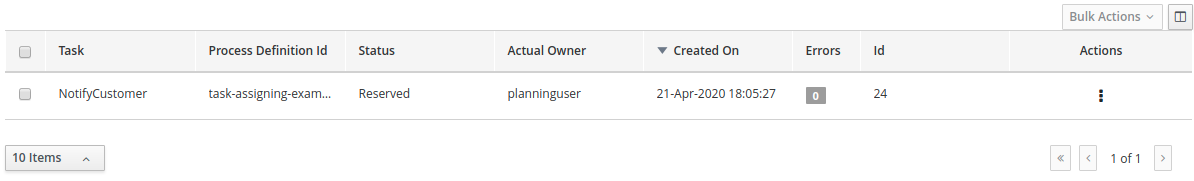

Click Menu → Track → Task Inbox. This takes you to the Qualify form.

-

Click the three vertical dots in the Actions column and select and click Claim. The Qualify task Status is now

Reserved. -

Click the Qualify task row to open and review the task information. Click Claim and then Start at the bottom of the form.

The application form is now active for approval or denial.

-

To approve the application, select Is mortgage application in limit? and click Complete.

-

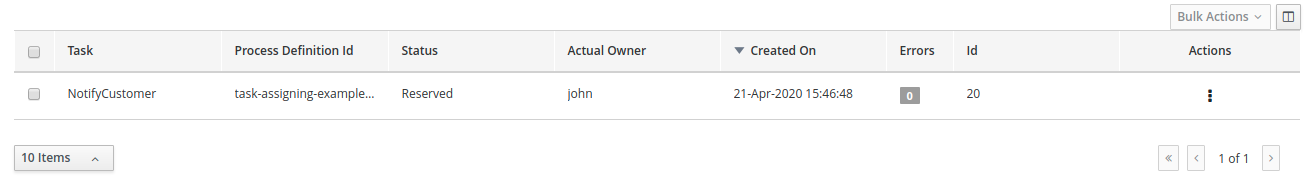

In the Task Inbox, click anywhere in the Final Approval row to open the Final Approval task.

-

In the Final Approval row, click the three vertical dots in the Actions column and click Claim.

-

Click anywhere in the Final Approval row to open the Final Approval task. Click Start at the bottom of the form.

-

Note that the Inlimit check box is selected to reflect that that application is ready for final approval. Click Complete.

| The Save and Release buttons are only used to either pause the approval process and save the instance if you are waiting on a field value, or to release the task for another user to modify. |

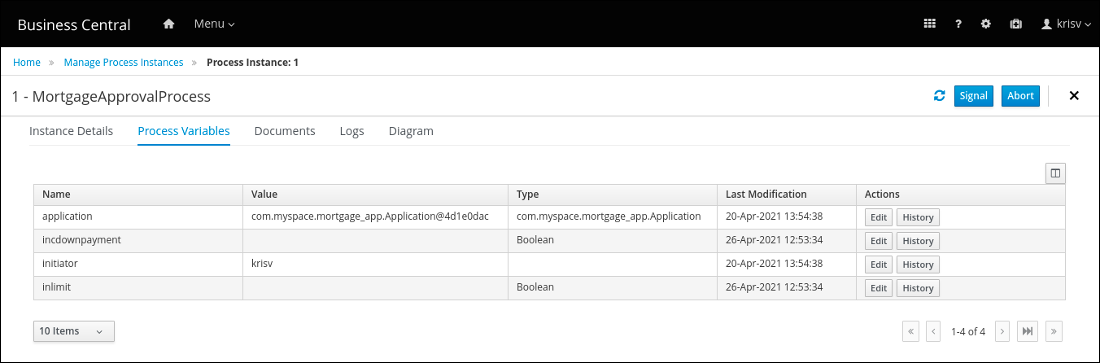

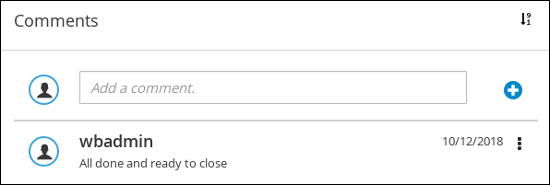

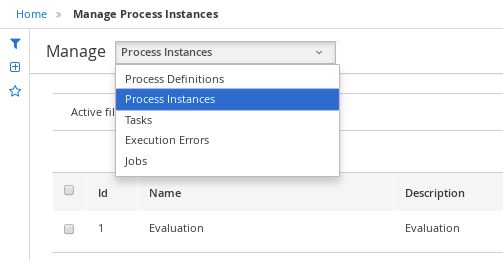

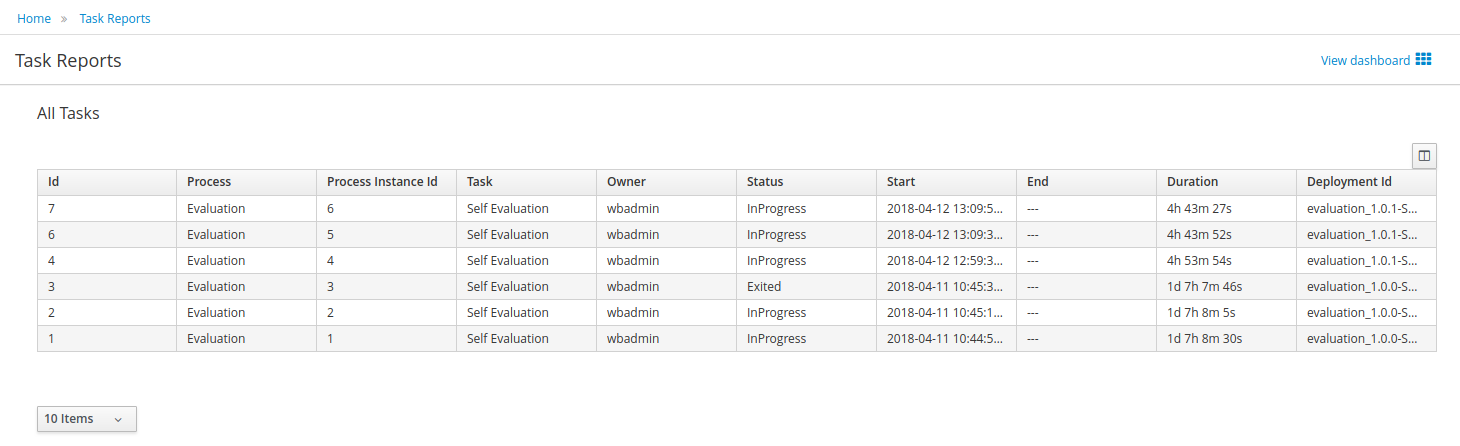

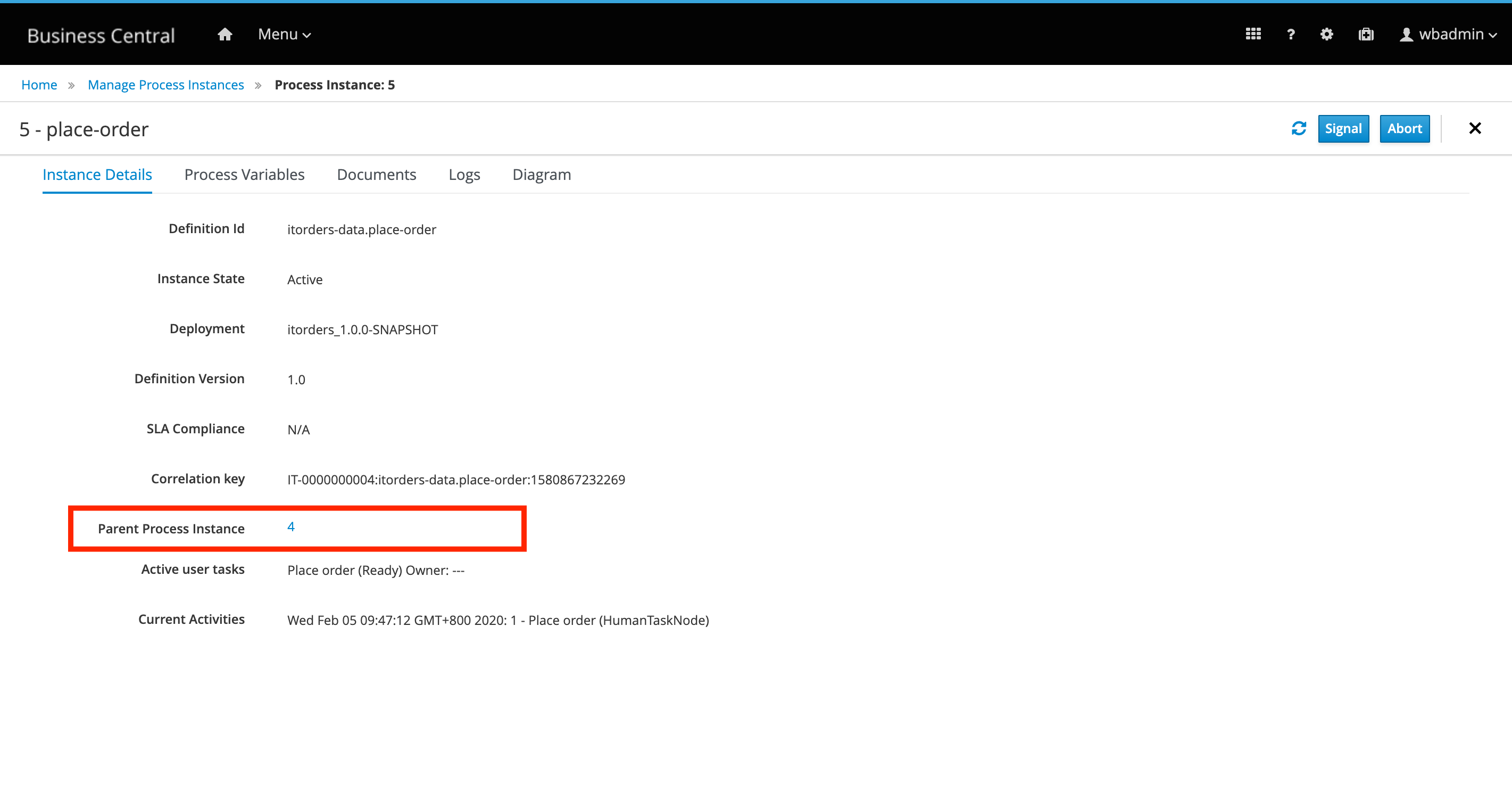

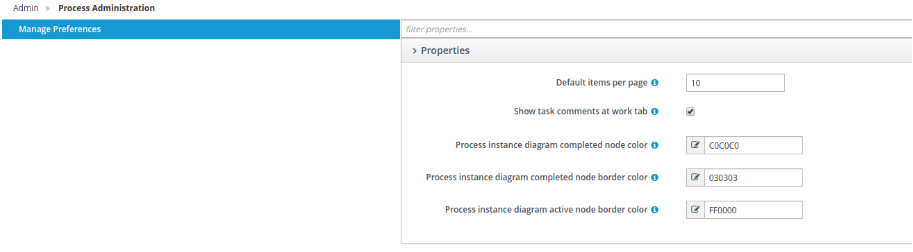

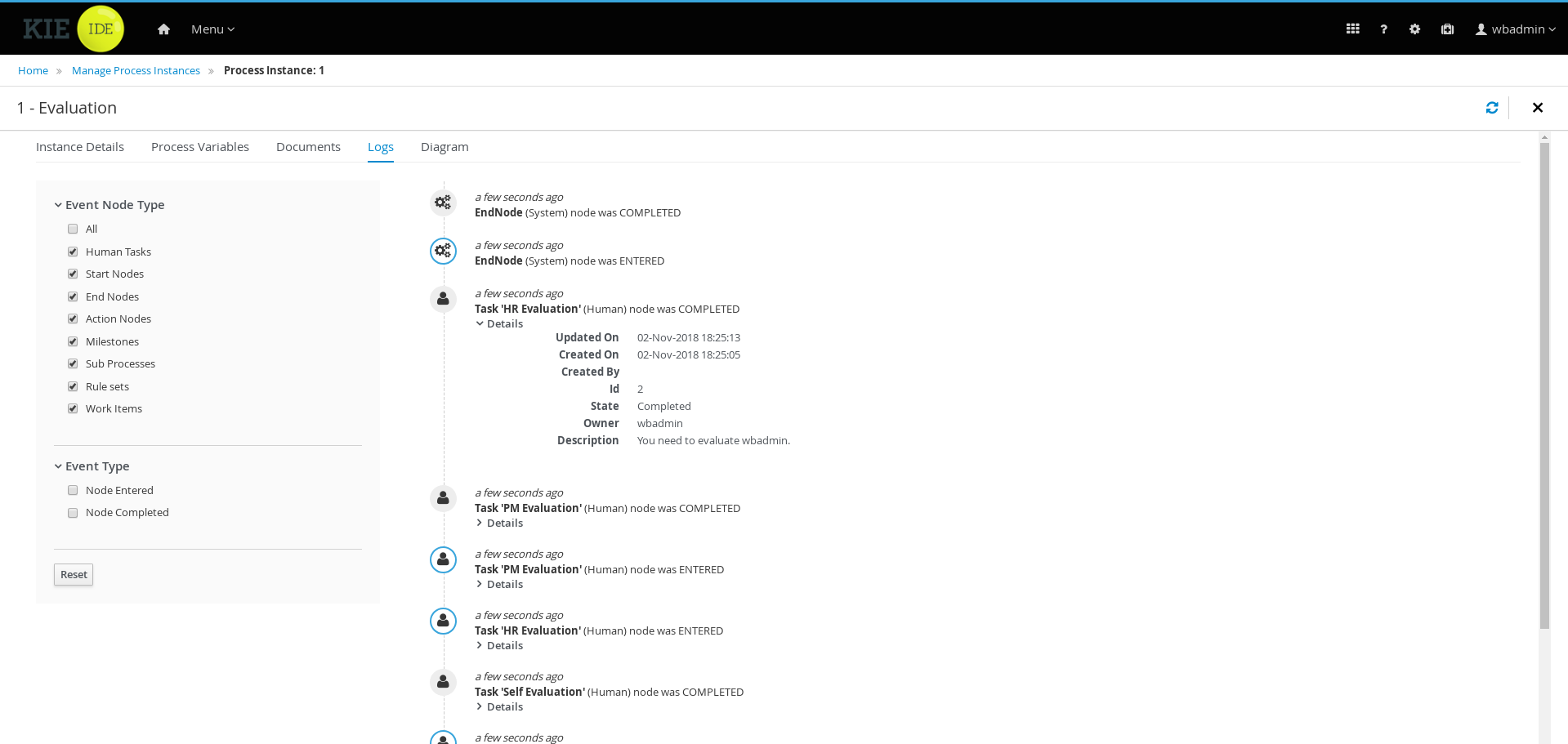

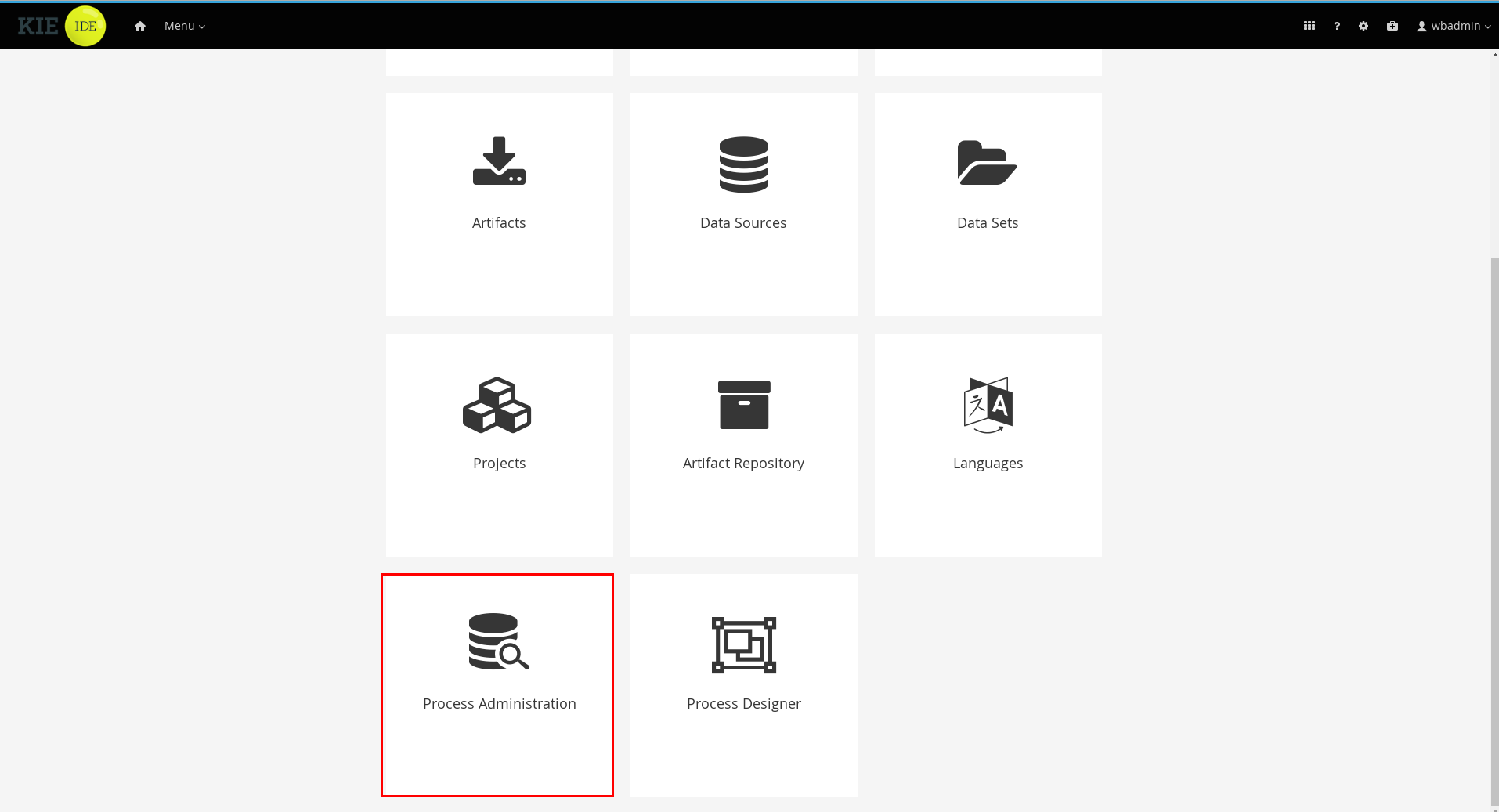

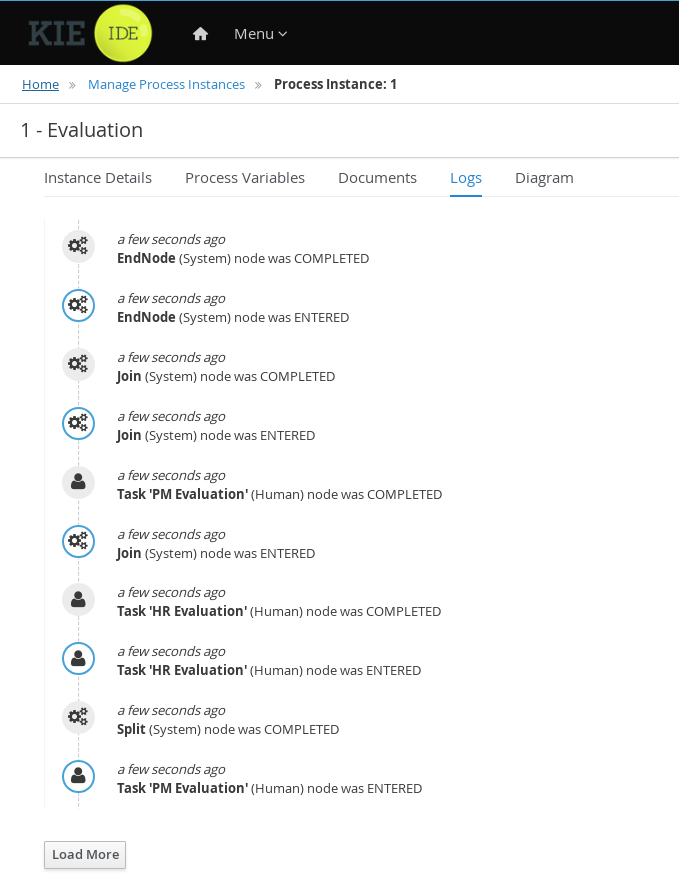

3.9. Monitoring the MortgageApprovalProcess process application

The following chapter shows how different bank employees, such as a system administrator or a knowledge worker, might use some of the monitoring capabilities to track an instance of the mortgage approval process.

-

KIE Server is deployed and connected to Business Central.

-

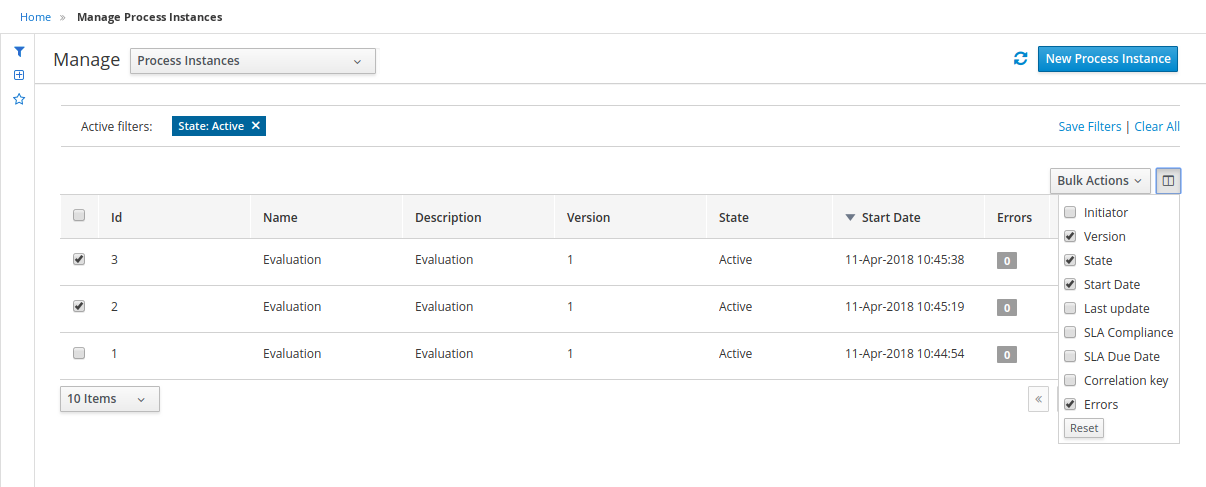

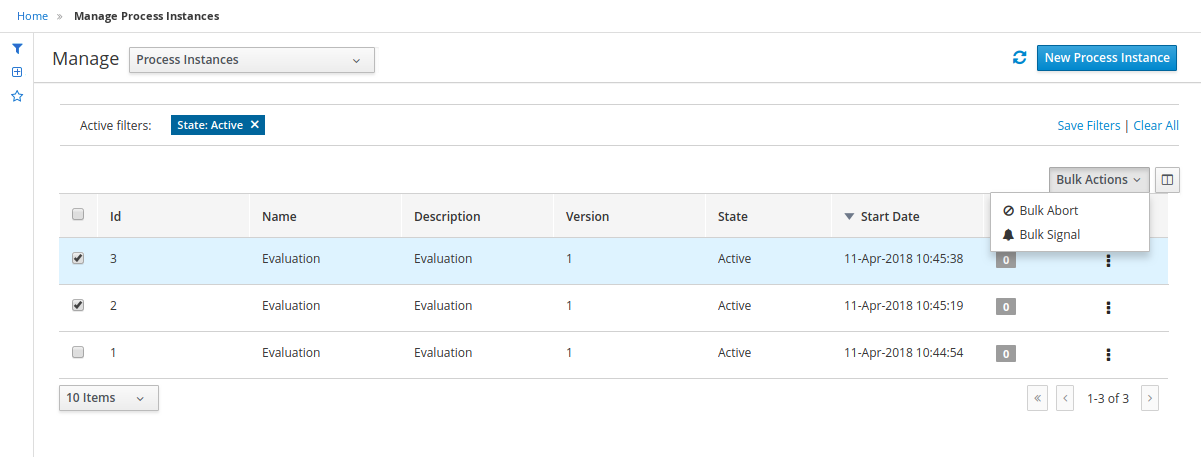

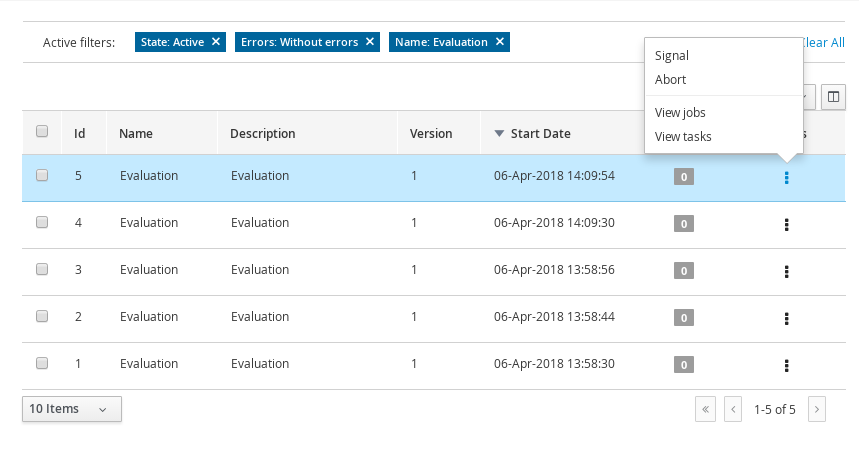

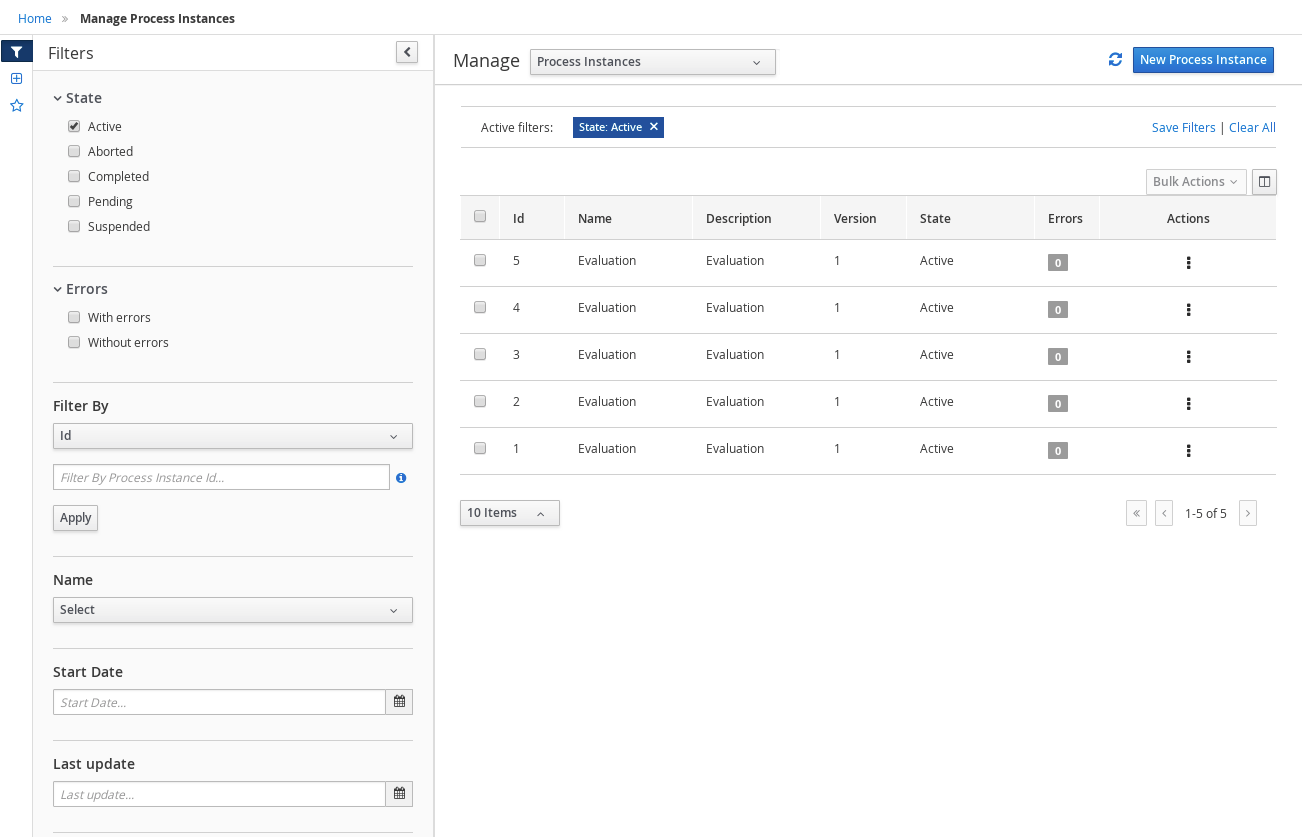

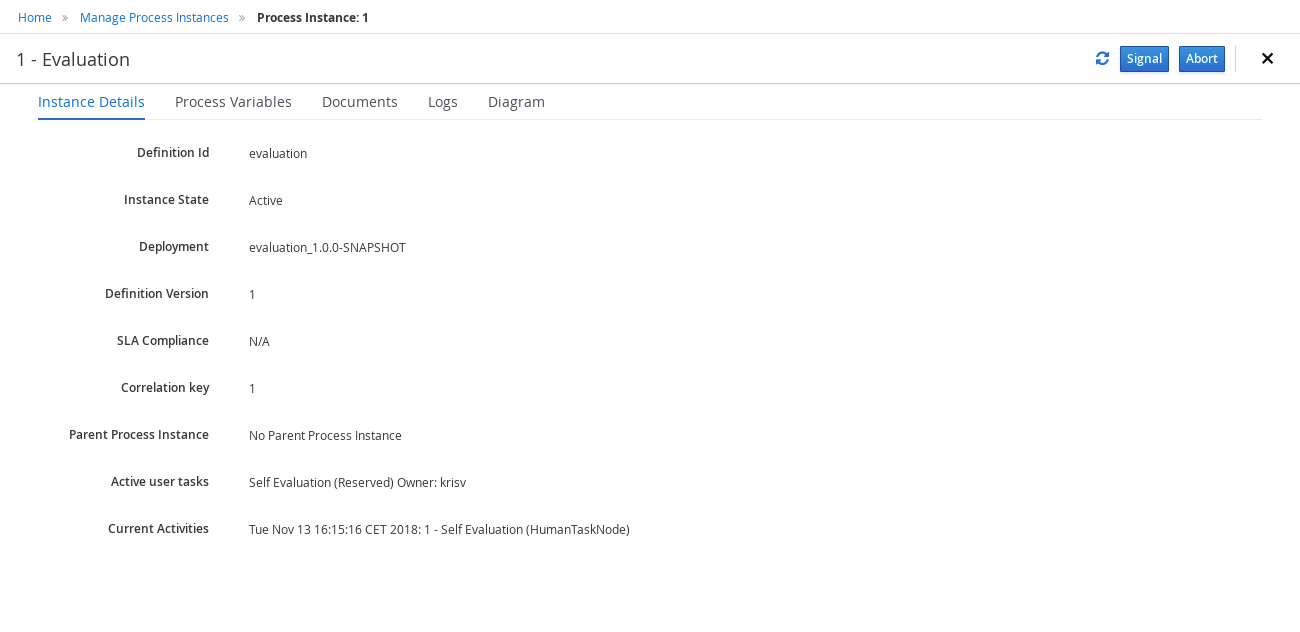

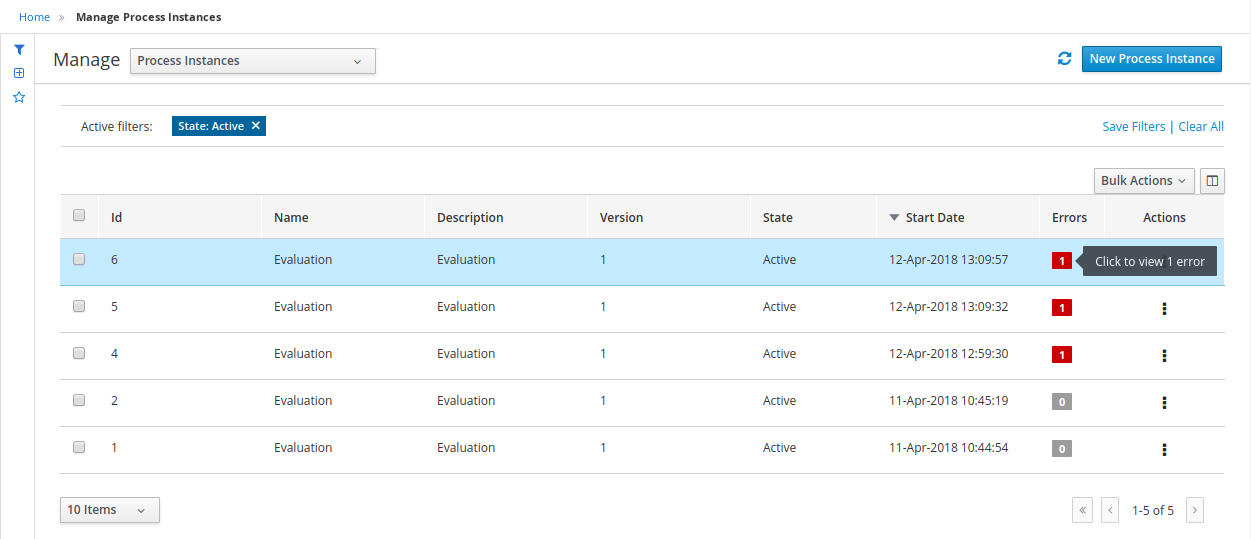

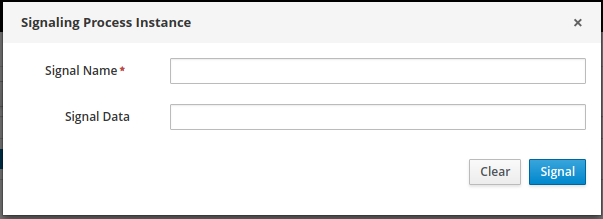

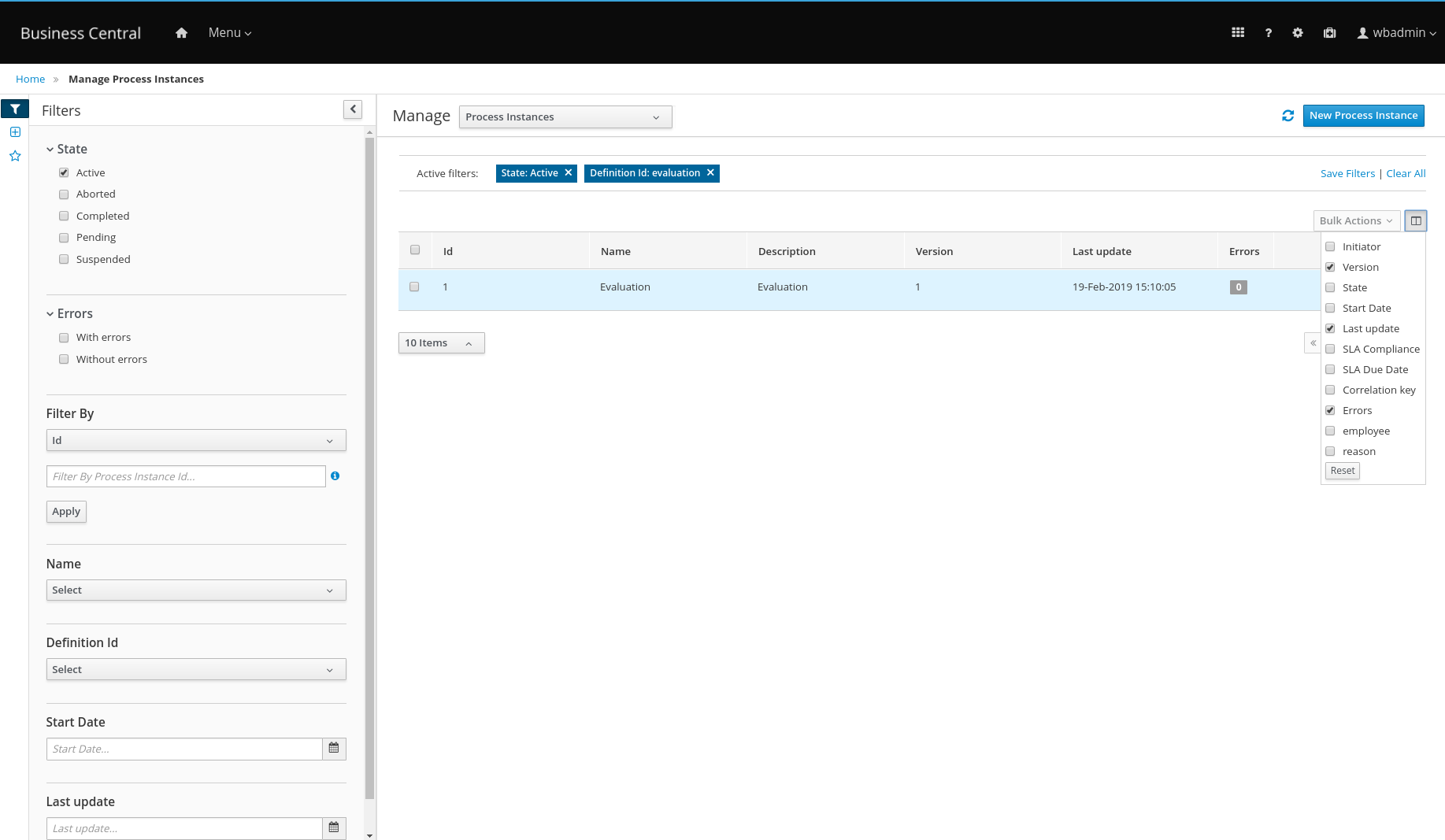

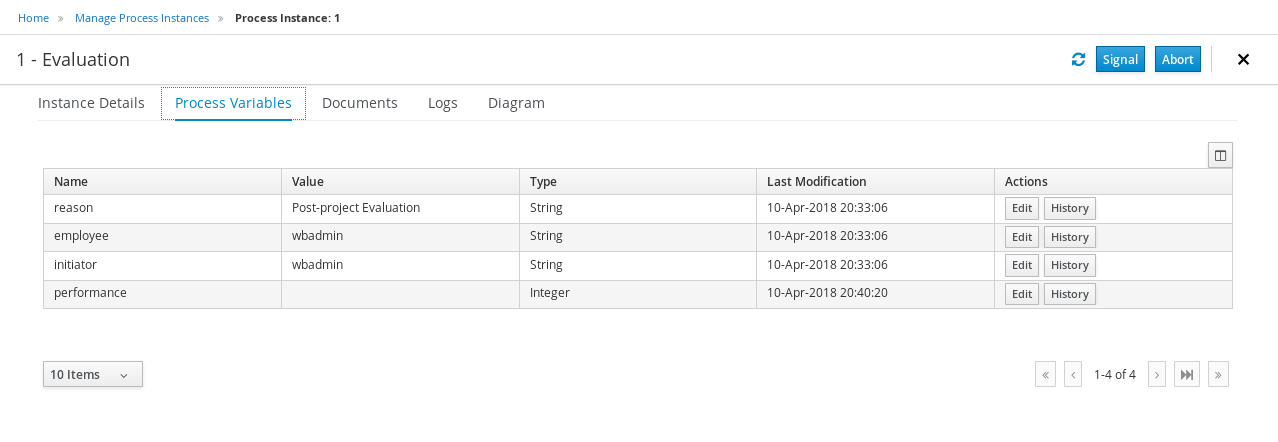

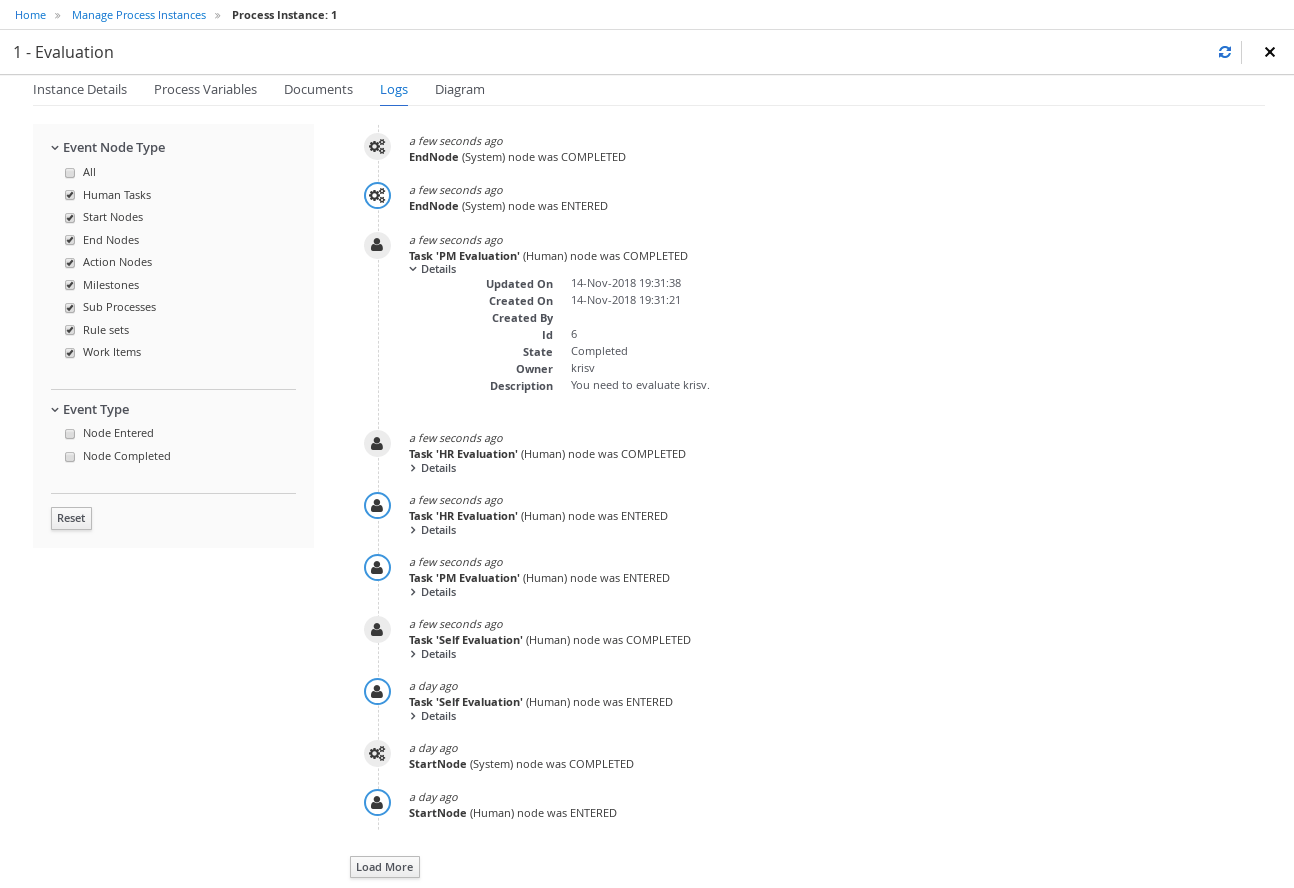

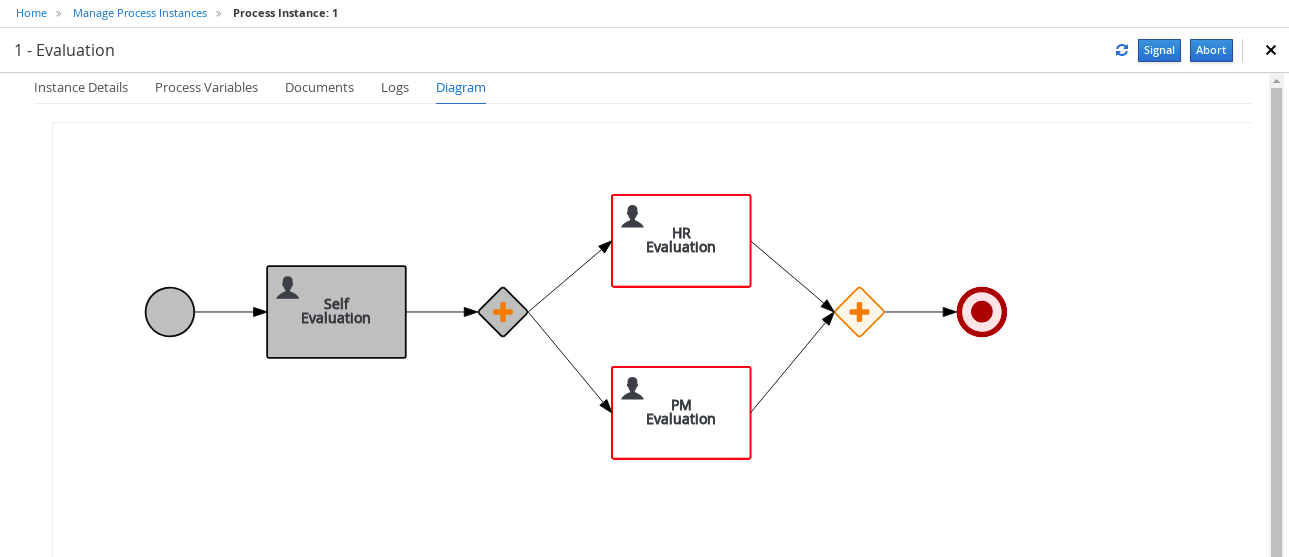

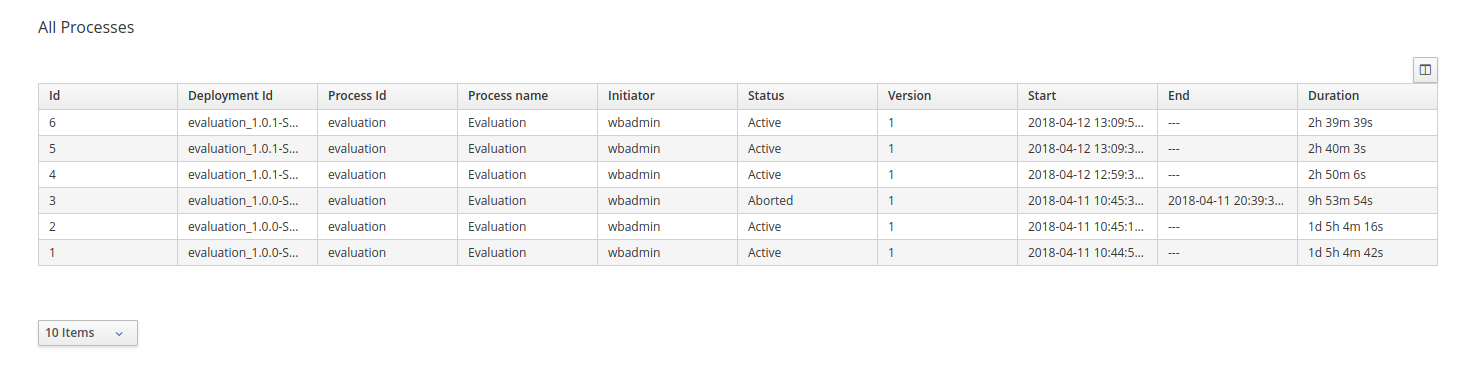

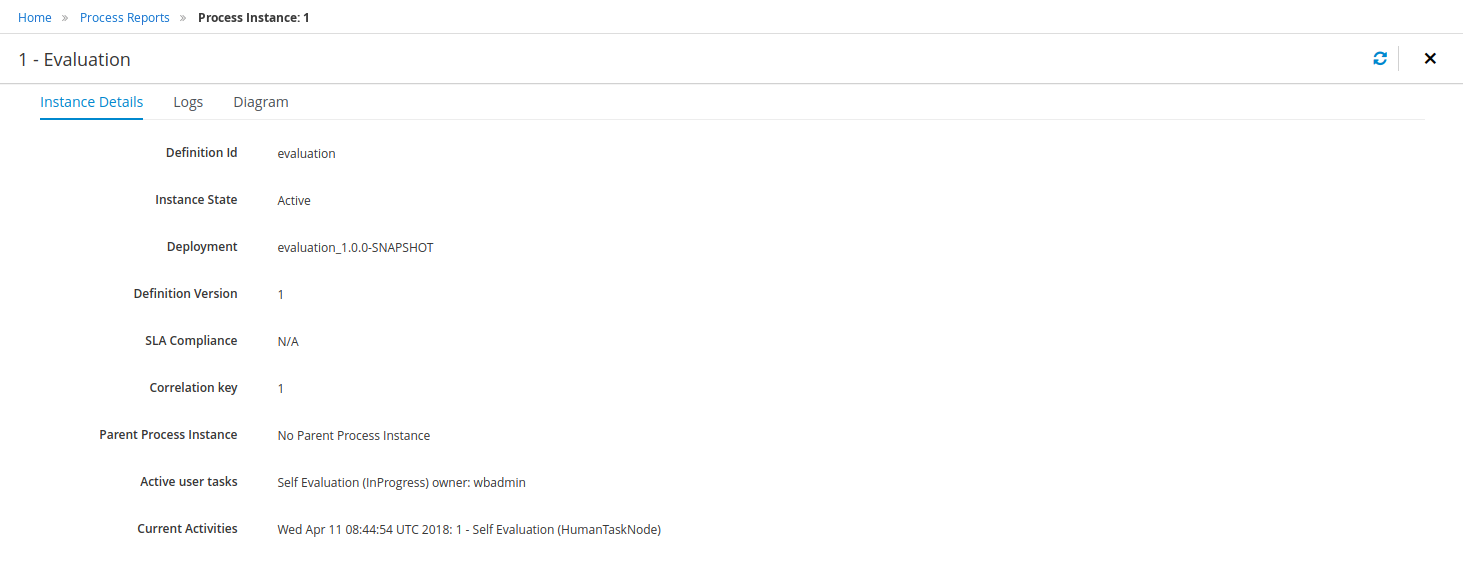

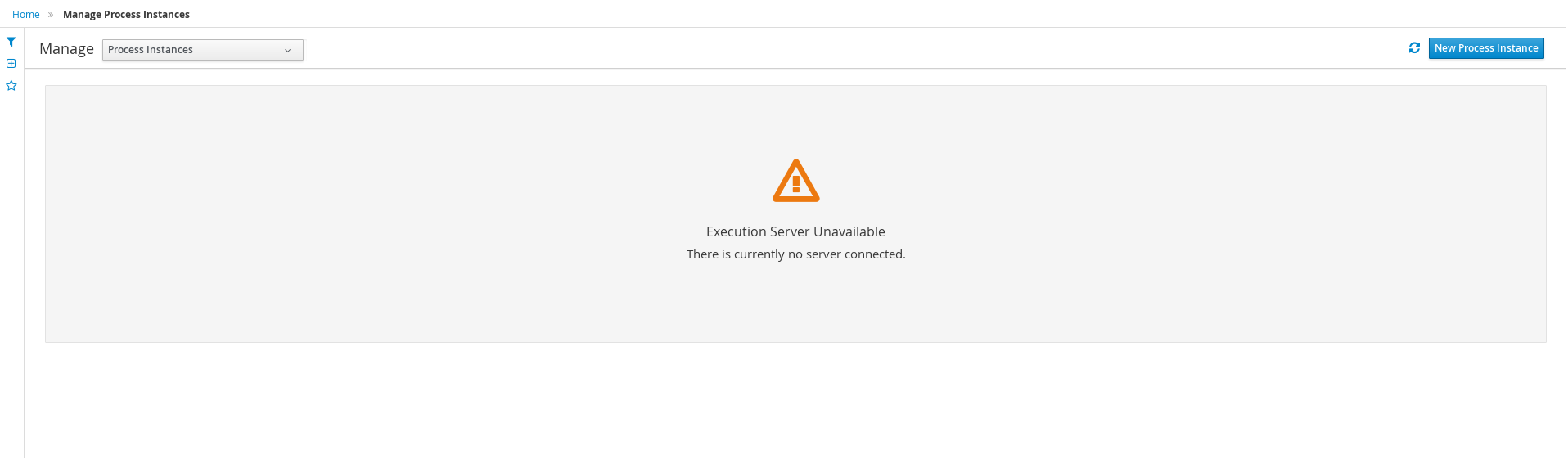

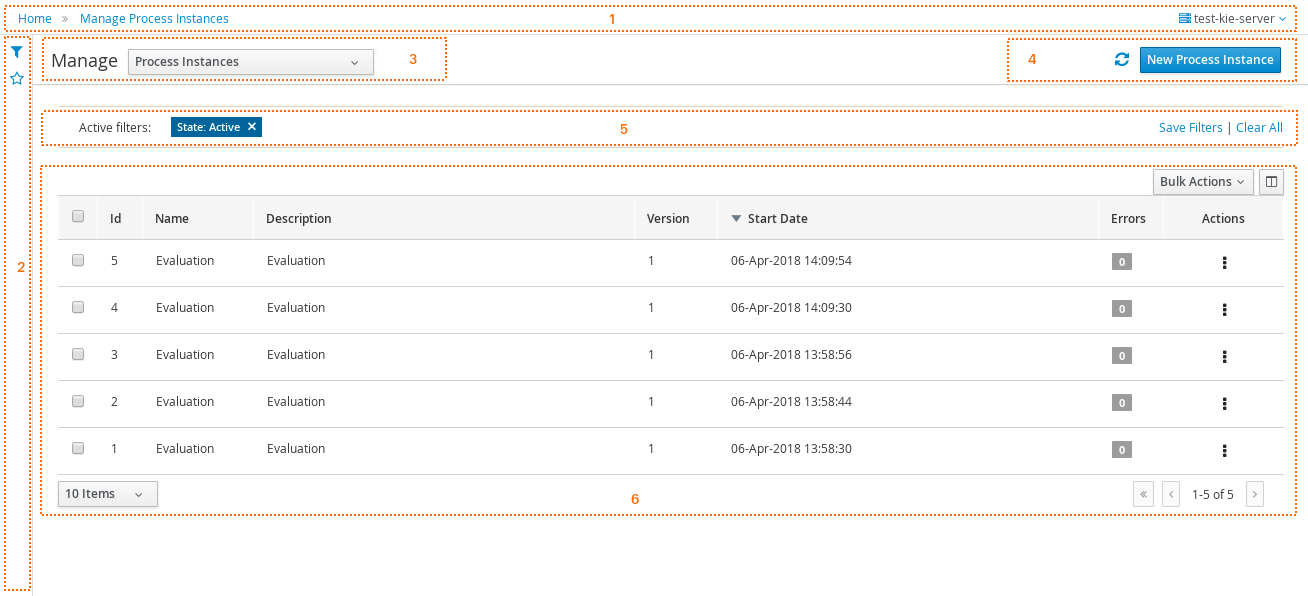

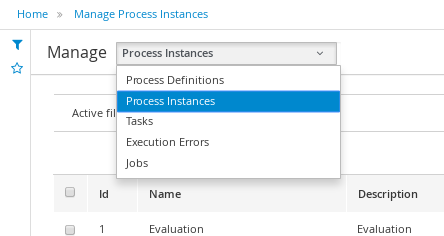

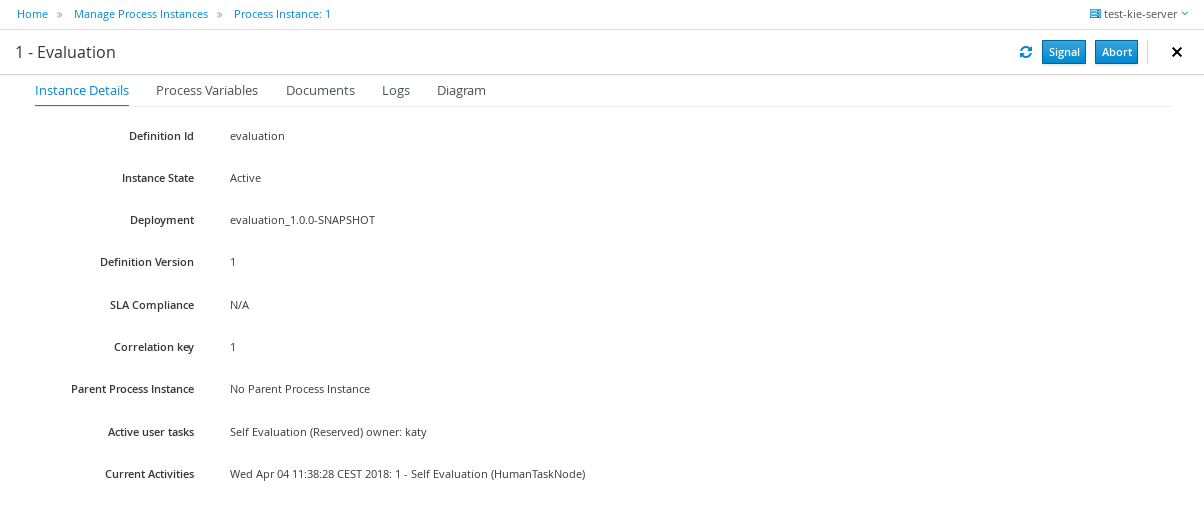

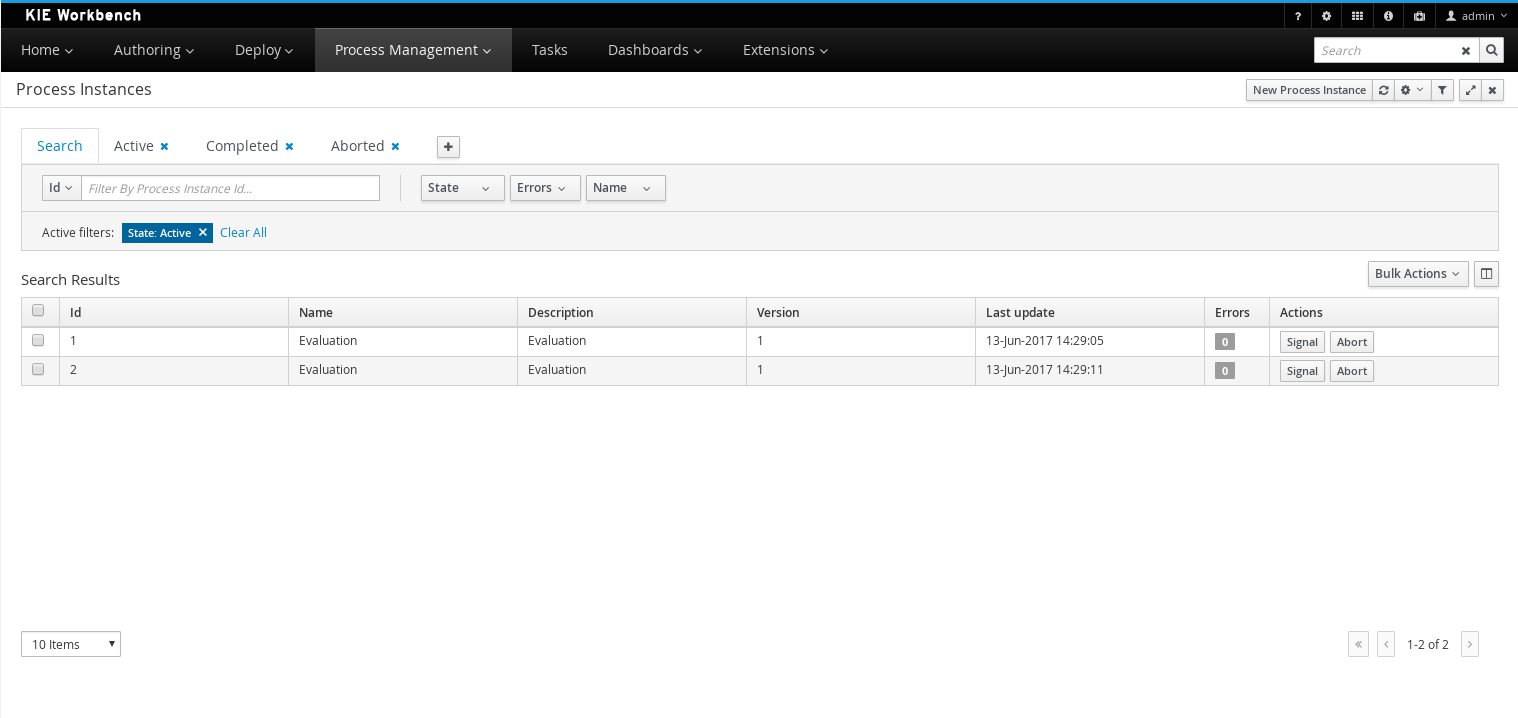

Log in to jBPM and click Menu → Manage → Process Instances.

-

In the Manage Process Instances window, you can set filters, such as State, Errors, Id, and so on.

-

Select Completed in the State filter to view all completed MortgageApprovalProcess instances.

-

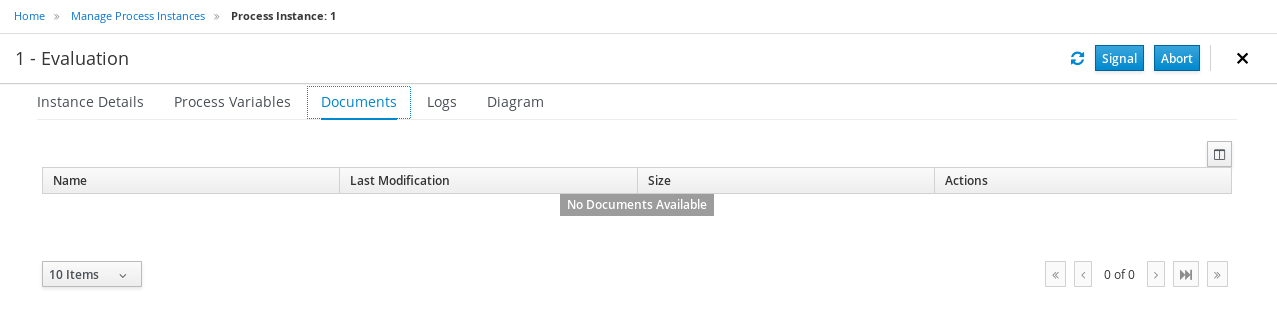

Click on the completed process instance.

-

Click each of the following tabs to get a feel for what type of information is available to monitor a specific process instance:

-

Instance Details

-

Process Variables

-

Documents

-

Logs

-

Diagram

-

-

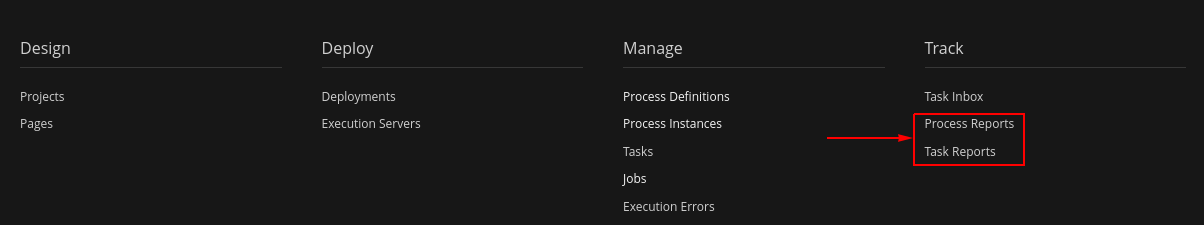

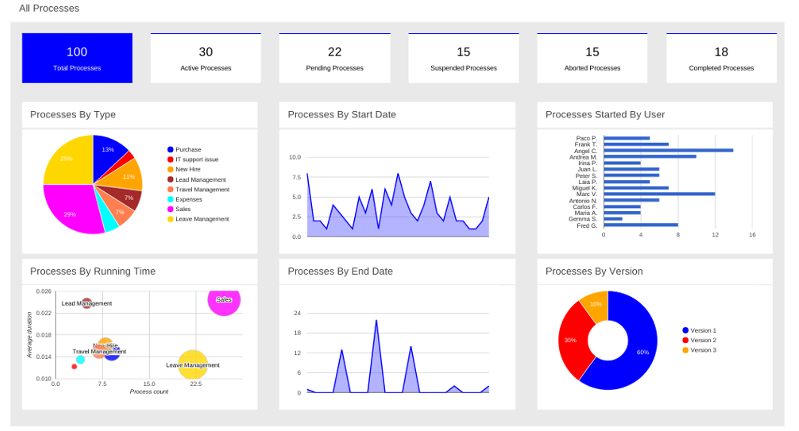

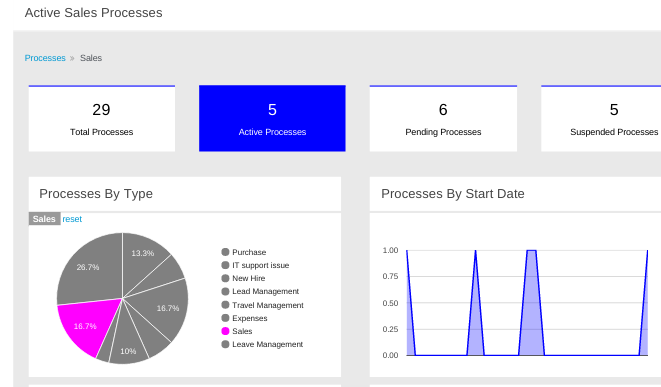

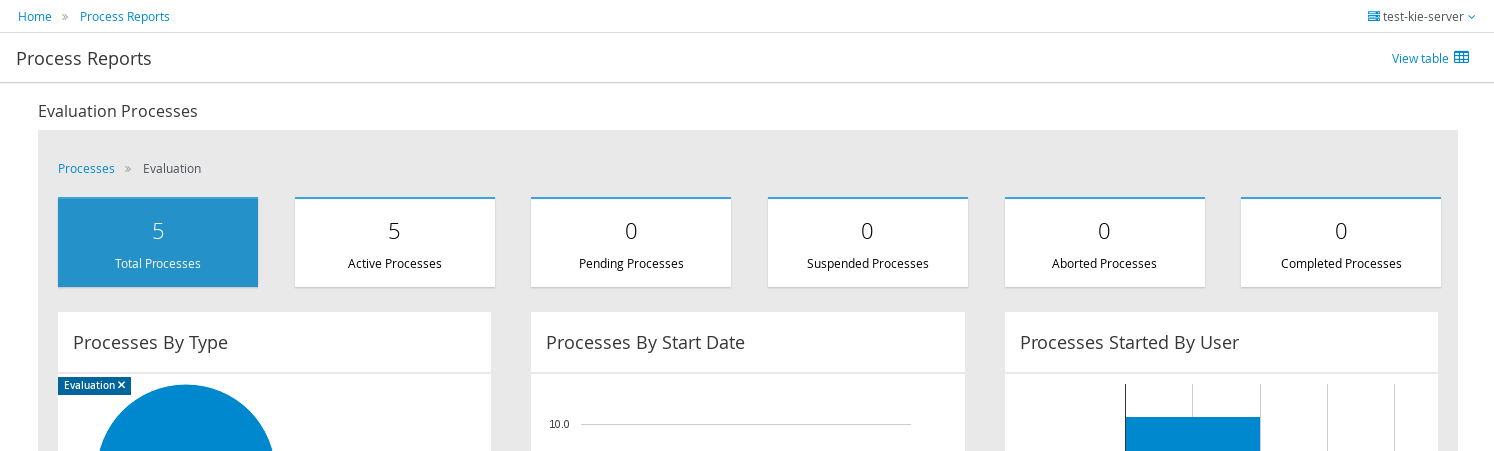

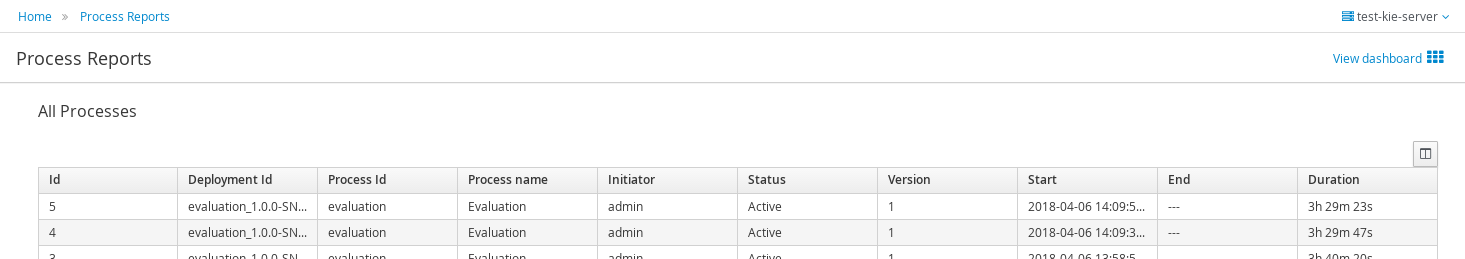

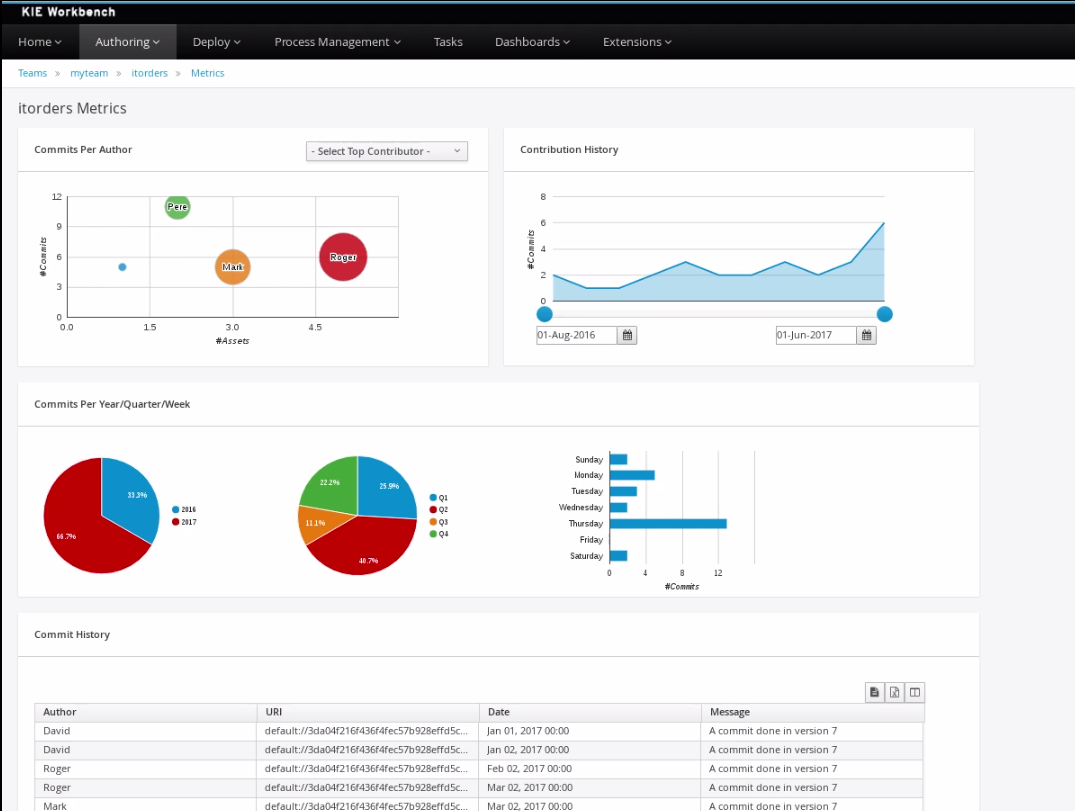

Click Menu → Track → Process Reports. This view contains a variety of charts that can help a senior process manager to gain an overview of all processes based on Type, Start Date, Running Time, and so on to assist with task reporting.

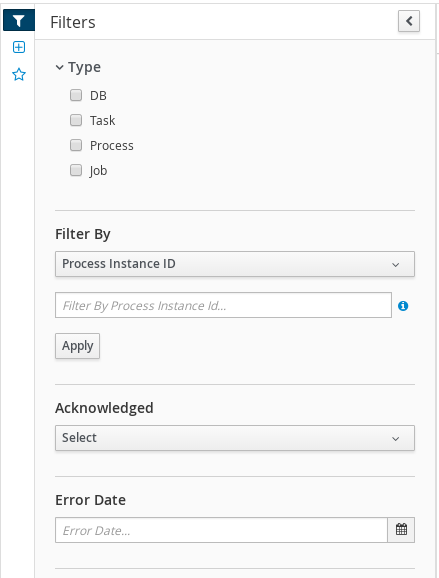

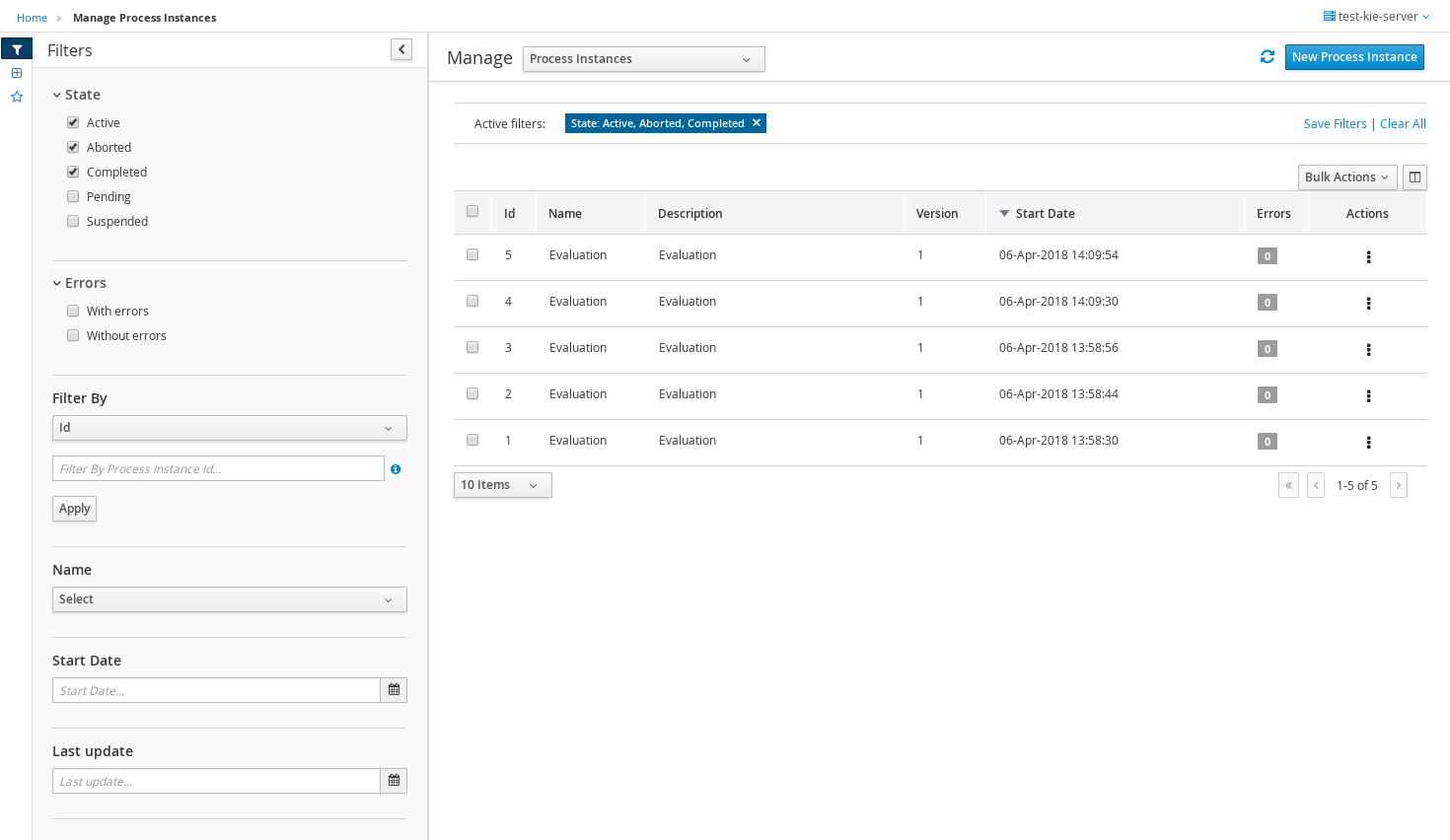

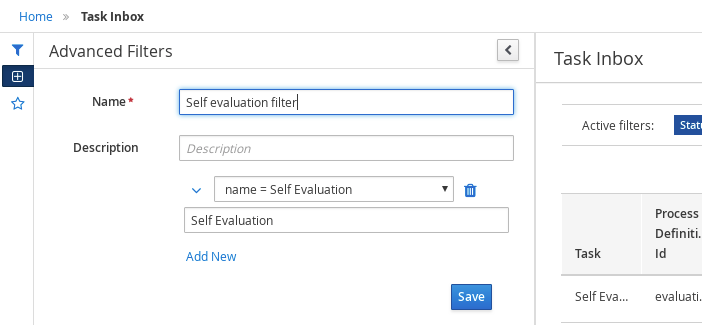

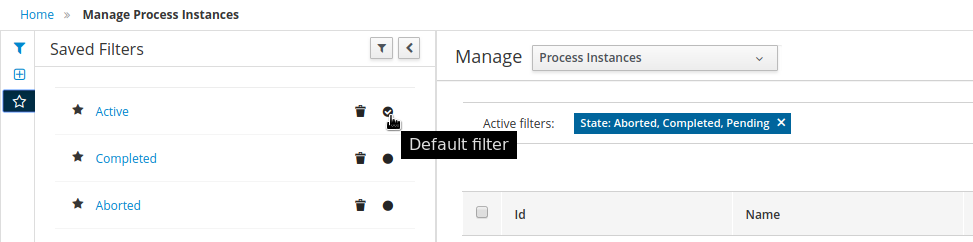

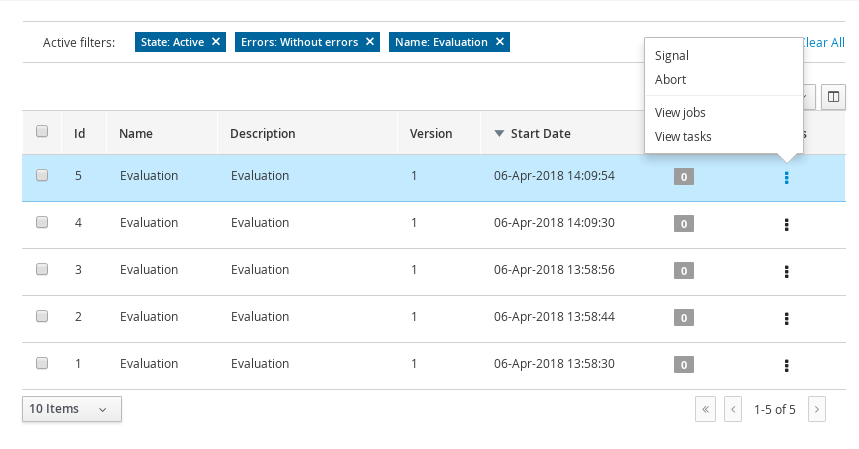

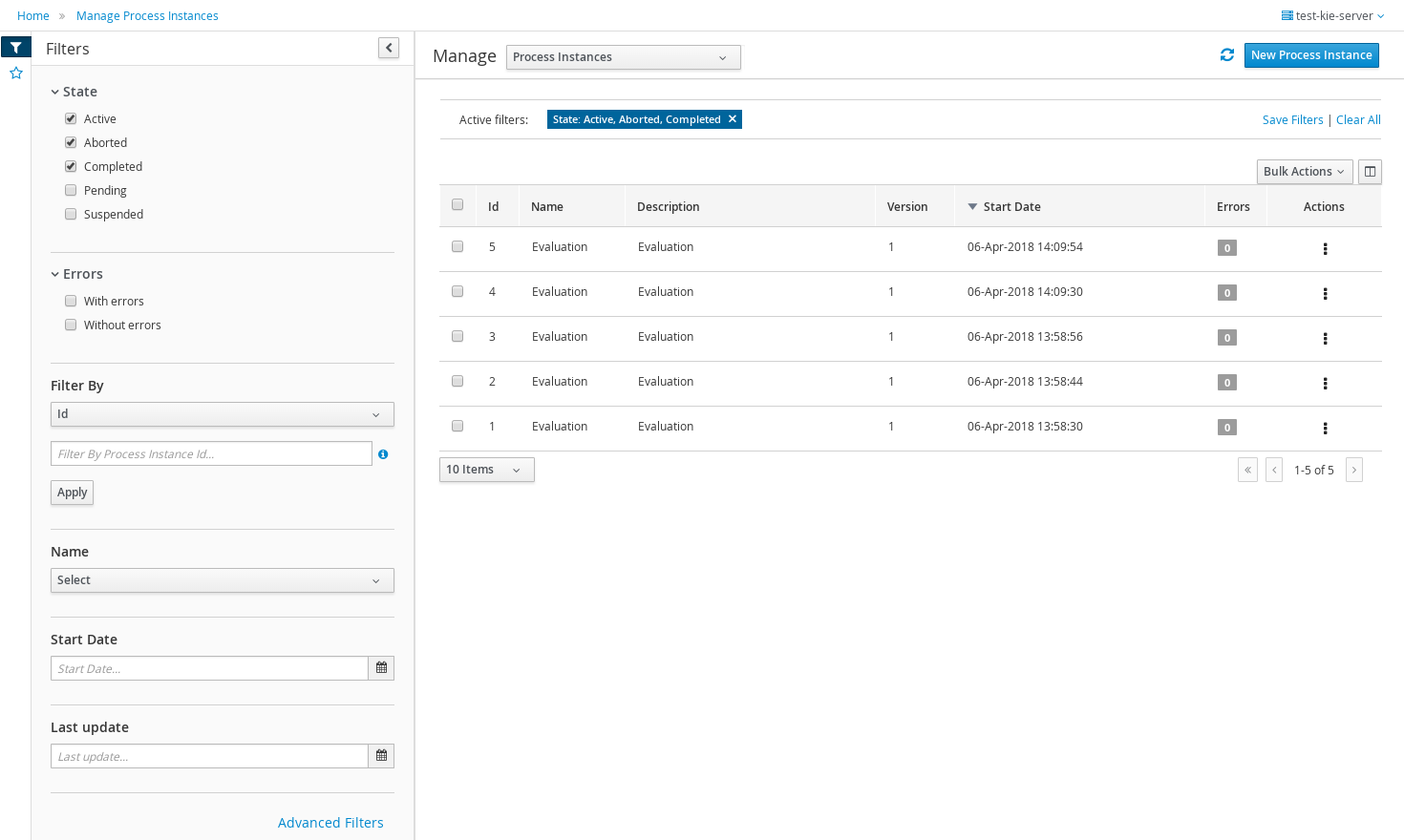

3.9.1. Filtering process instances using default or advanced filters

Business Central now provides you with default and advanced filters to help you filter and search through running process instances. You can also create custom filters using the Advanced Filters option.

3.9.1.1. Filtering process instances using default filters

Filter process instances by attributes such as State, Errors, Filter By, Name, Start Date, and Last update.

-

In Business Central, go to Menu → Manage → Process Instances.

-

On the Manage Process Instances page, click the filter icon on the left of the page to expand the Filters pane.

This pane lists the following process attributes which you can use to filter process instances:

-

State: Filter process instances based on their state (Active, Aborted, Completed, Pending, and Suspended).

-

Errors: Filter process instances by errors.

-

Filter By: Filter process instances based on Id, Initiator, Correlation Key, or Description attribute.

-

Select the required attribute.

-

Enter the search query in the text field below.

-

Click Apply.

-

-

Name: Filter process instances by definition names.

-

Definition Id: Filter process instances by process definition IDs.

-

Deployment Id: Filter process instances by process deployment IDs.

-

Parent Process Instance Id: Filter process instances by parent process instance IDs.

-

SLA Compliance: Filter process instances by SLA compliance states.

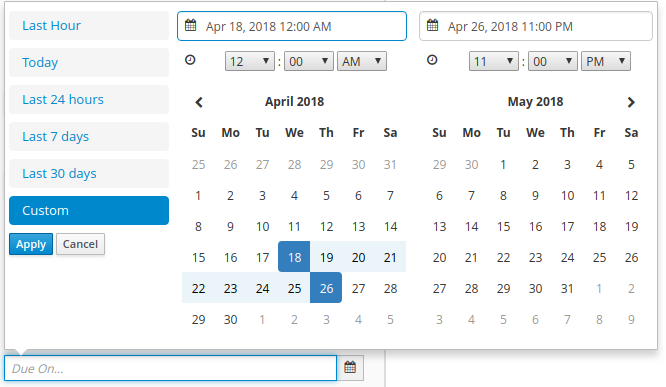

-

Start Date: Filter process instances by creation dates.

-

Last update: Filter process instances by last modified dates.

-

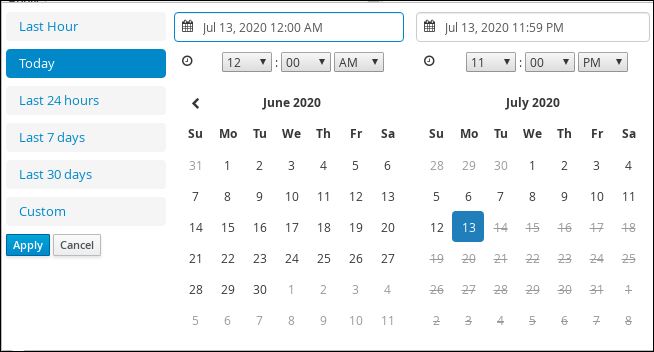

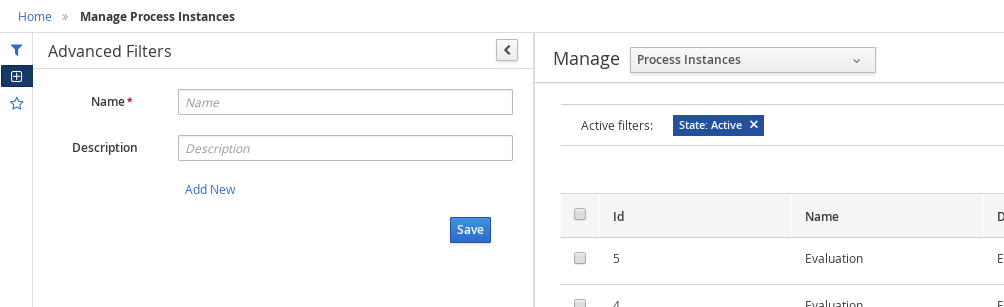

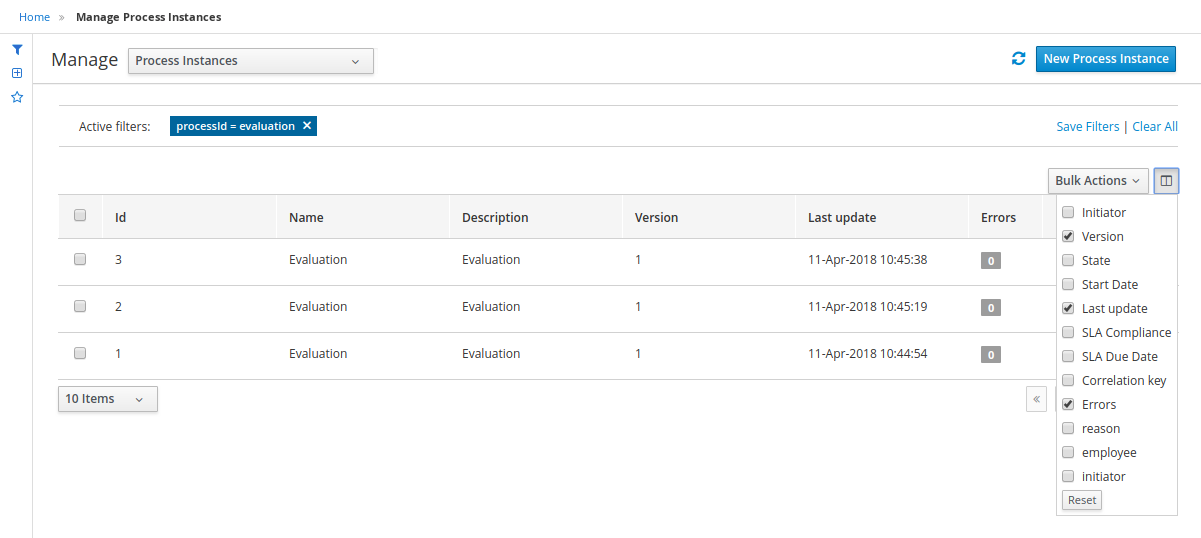

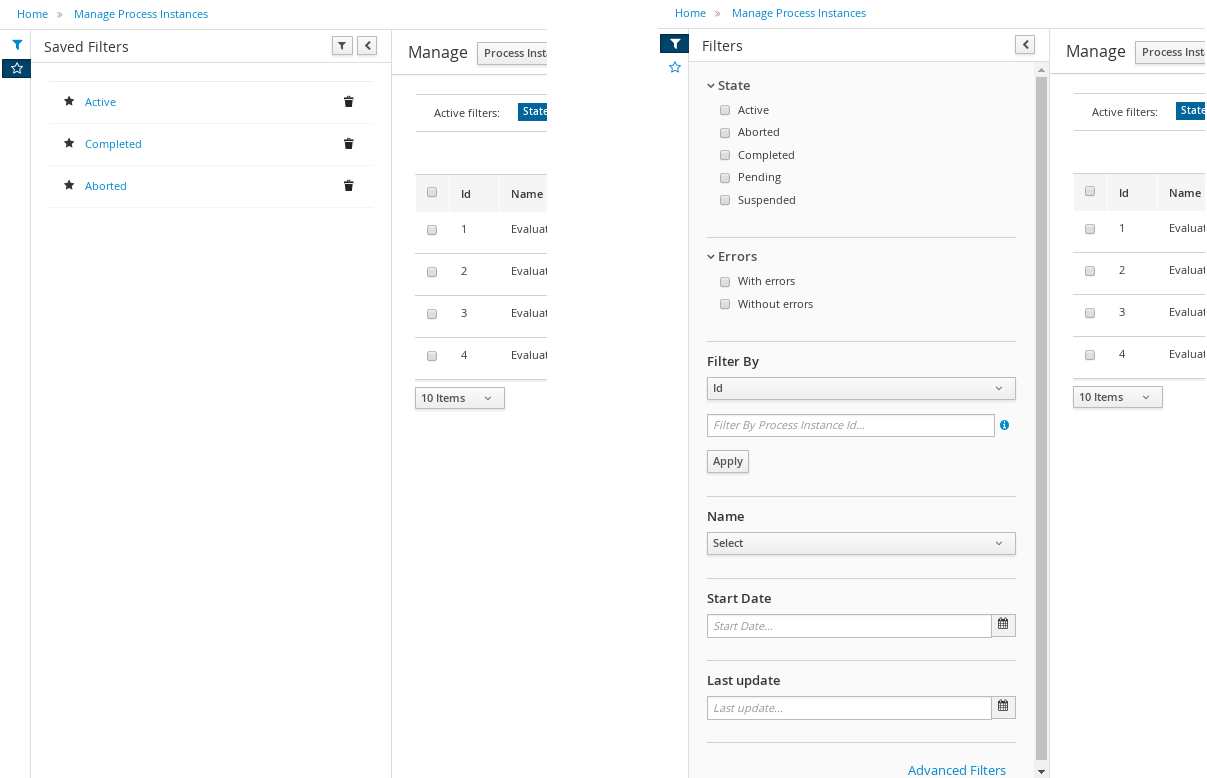

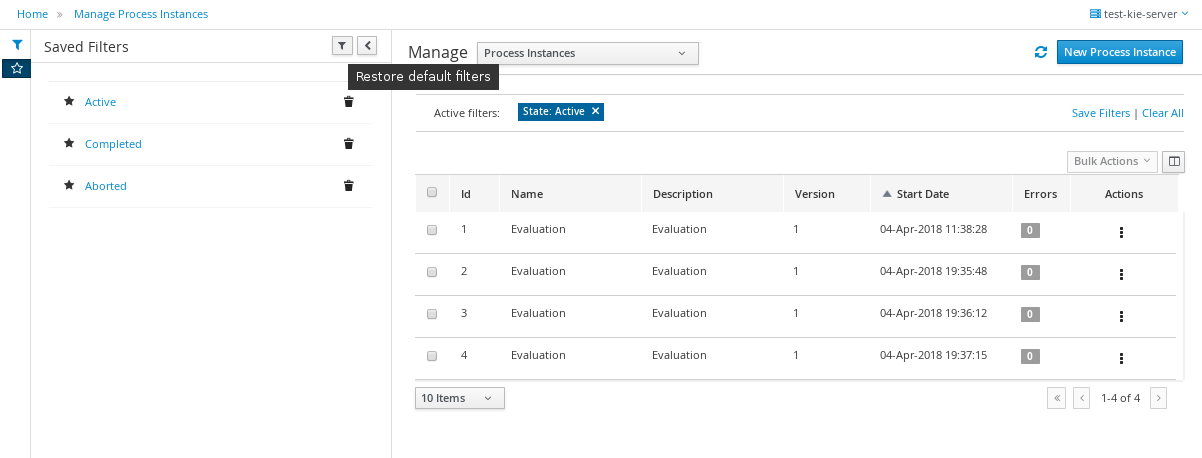

3.9.1.2. Filtering process instances using advanced filters

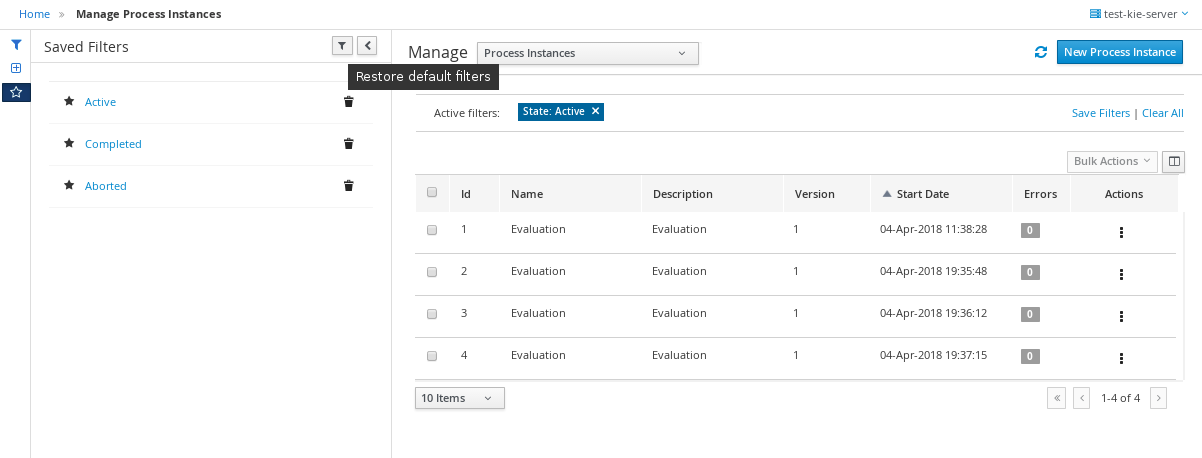

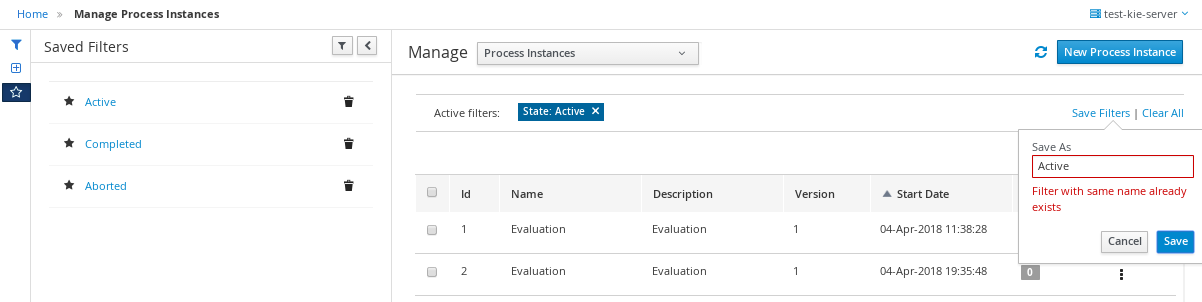

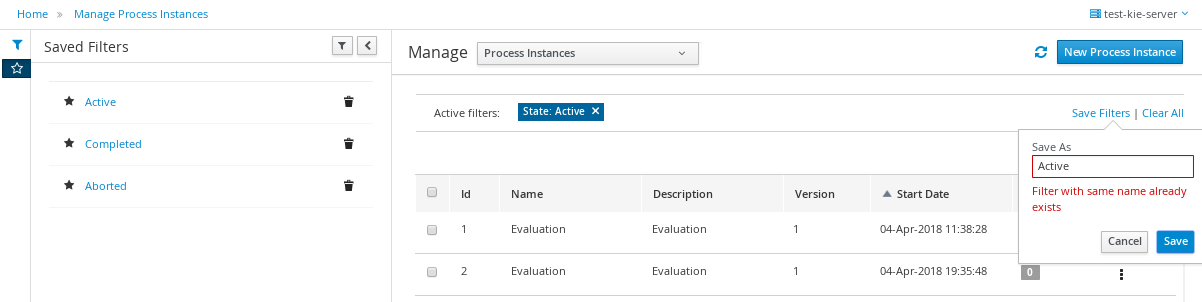

Use the Advanced Filters option to create custom process instance filters. The newly created custom filter is added to the Saved Filters pane, which is accessible by clicking on the star icon on the left of the Manage Process Instances page.

-

In Business Central, go to Menu → Manage → Process Instances.

-

On the Manage Process Instances page, on the left of the page click the Advanced Filters icon.

-

In the Advanced Filters pane, enter the name and description of the filter, and click Add New.

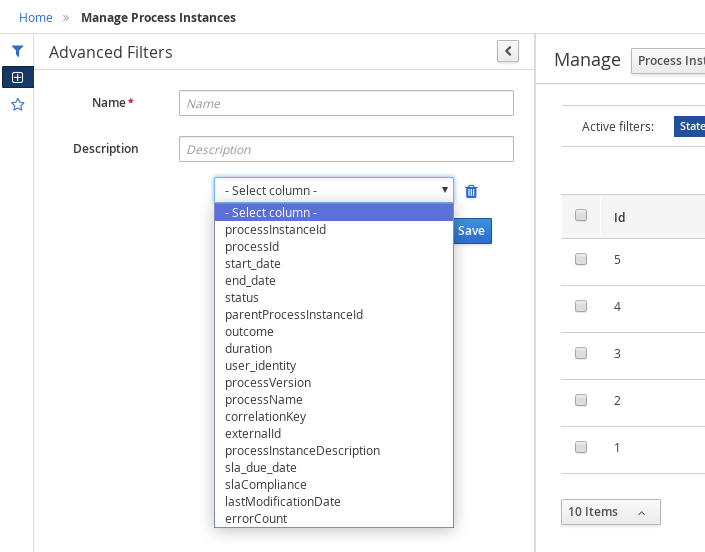

-

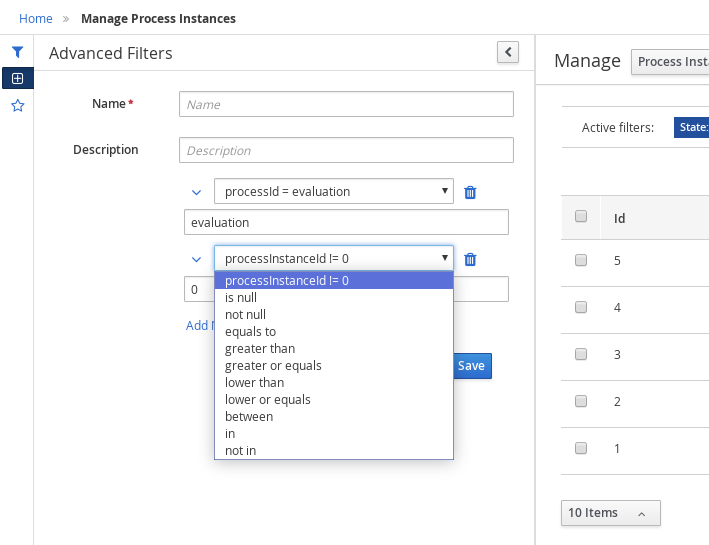

Select an attribute from the Select column drop-down list, for example, processName. The content of the drop-down changes to processName != value1.

-

Click the drop-down again and choose the required logical query. For the processName attribute, choose equals to.

-

Change the value of the text field to the name of the process you want to filter.

The name must match the value defined in the business process of the project.

-

Click Save and the processes are filtered according to the filter definition.

-

Click the star icon to open the Saved Filters pane.

In the Saved Filters pane, you can view all the saved advanced filters.

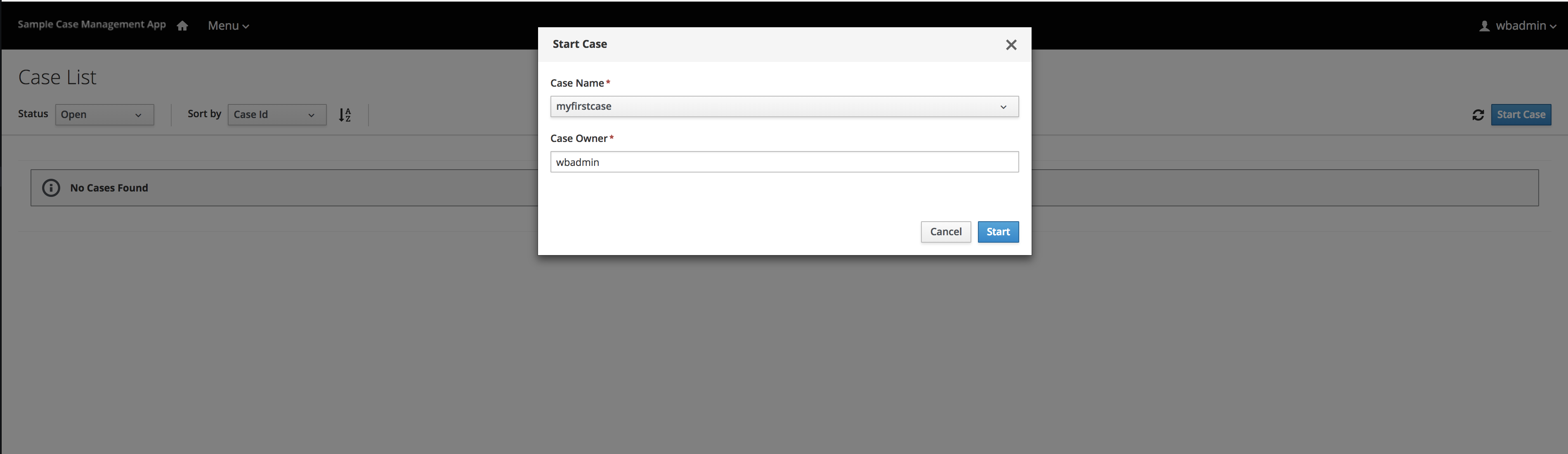

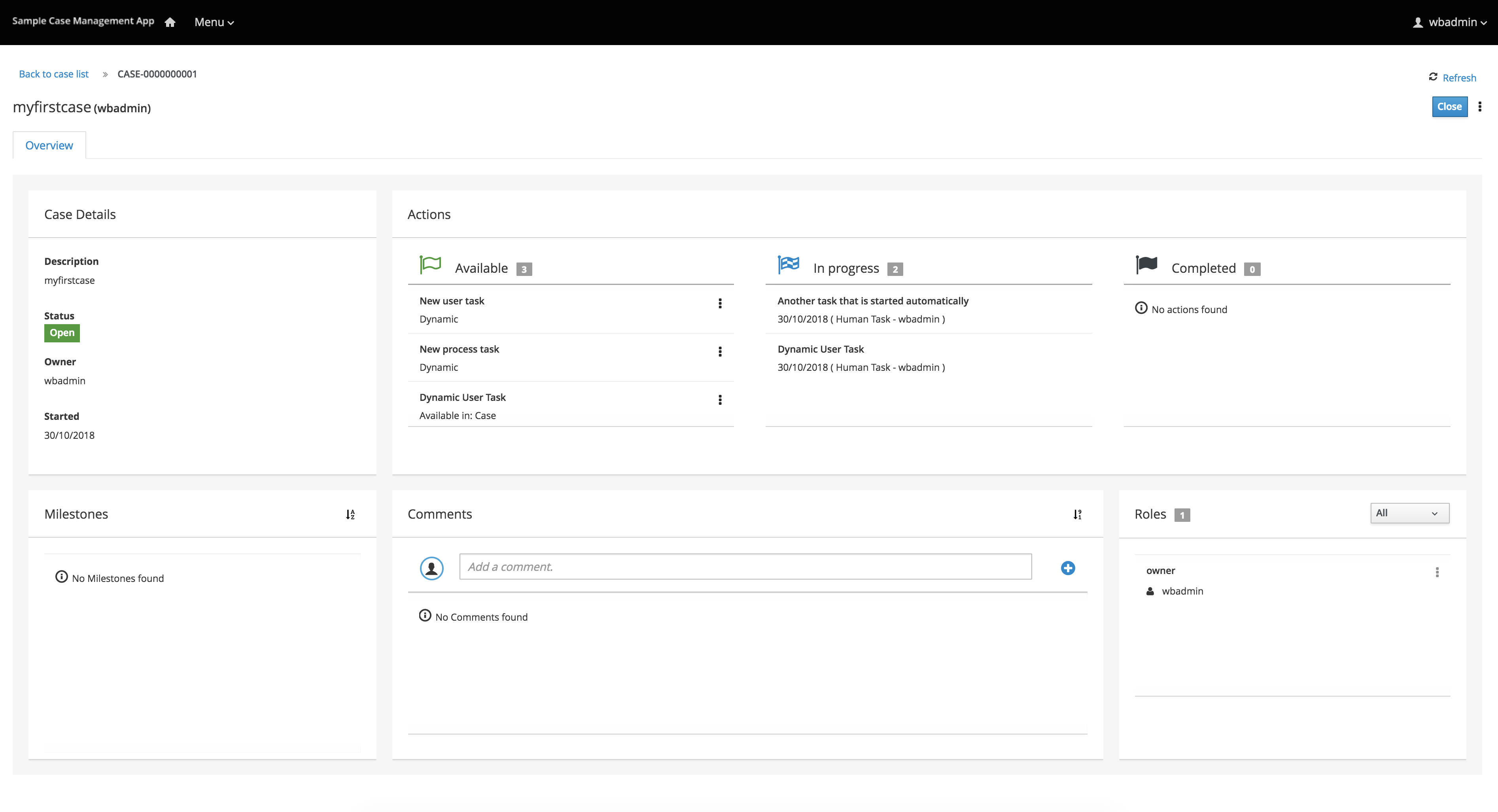

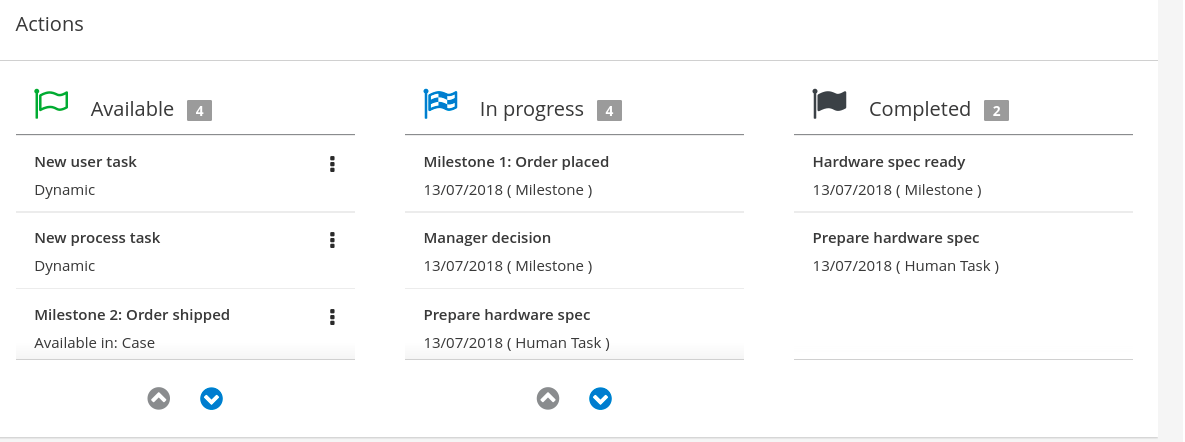

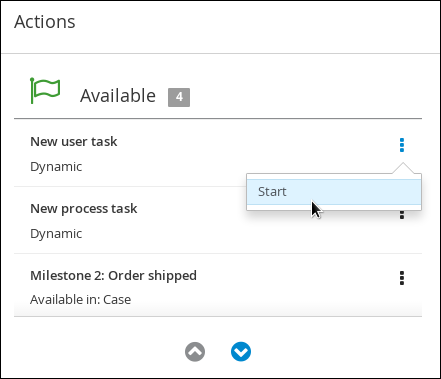

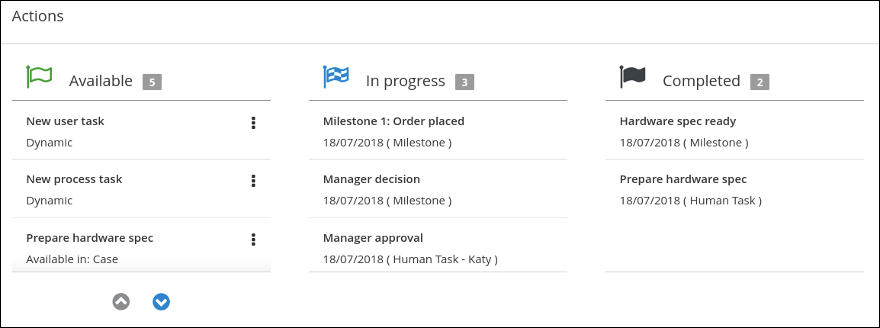

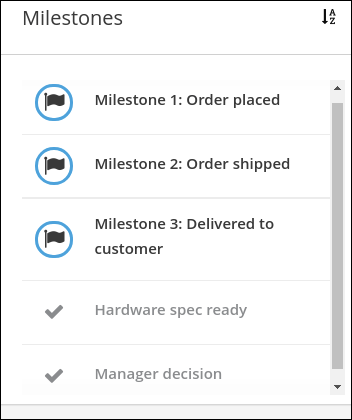

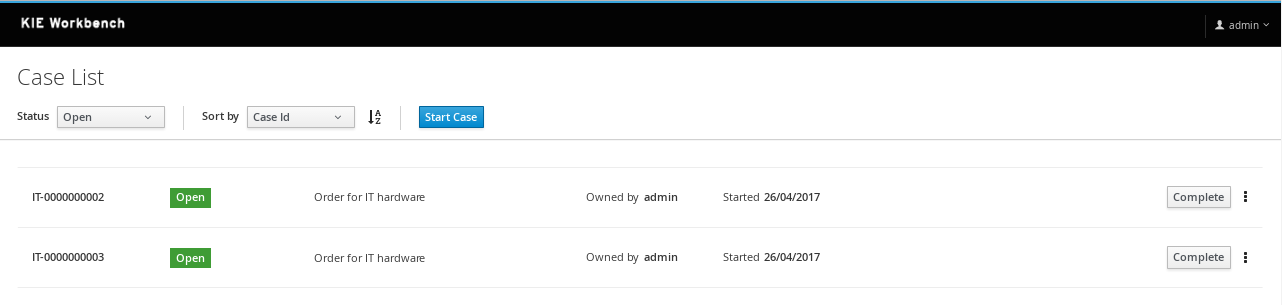

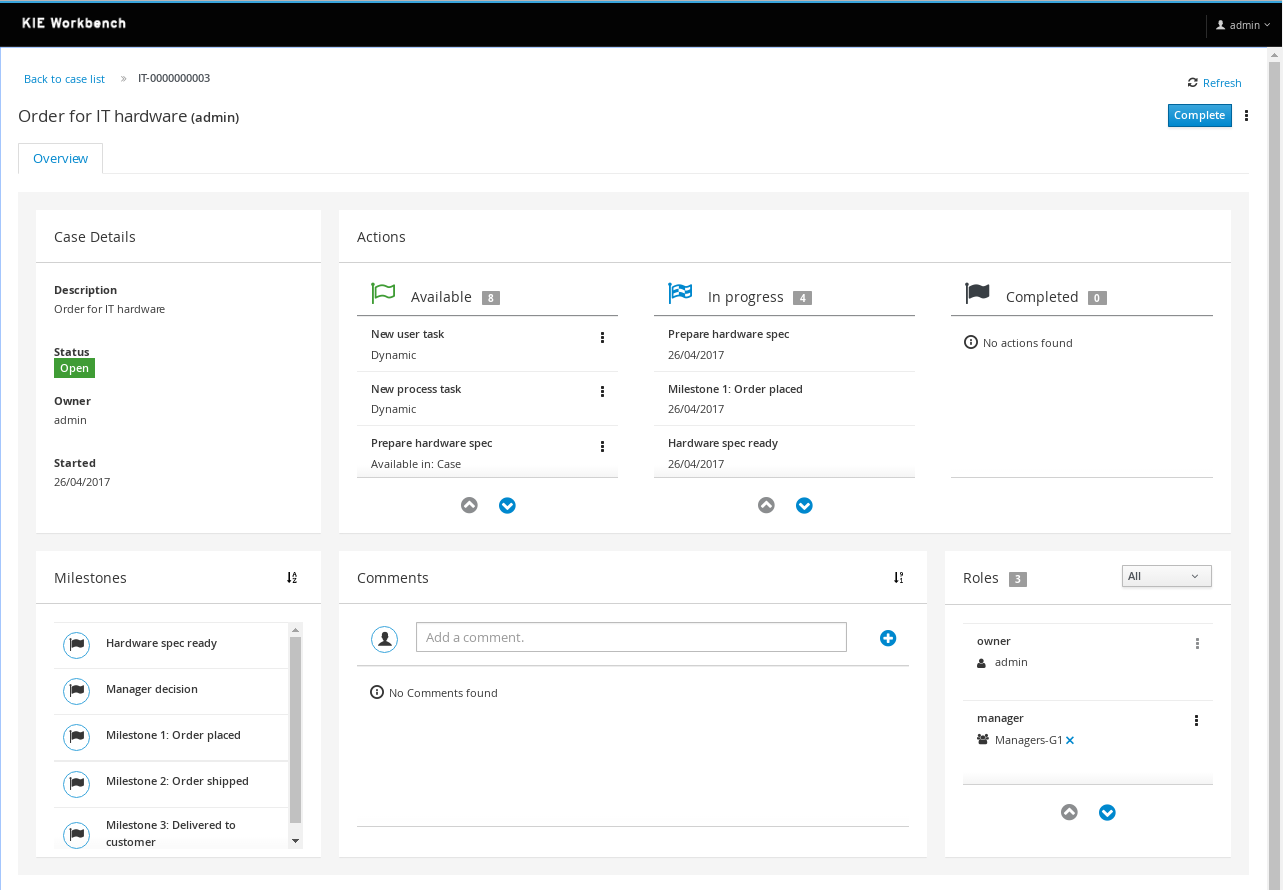

4. Getting started with case management in jBPM

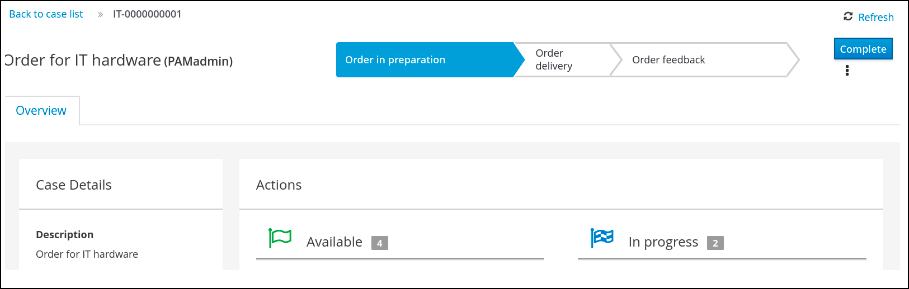

As a business rules and processes developer, you can use case management assets in Business Central to create unpredictable and ad hoc case processes. Case workers or process administrators can also use Business Central for case management and execution. jBPM provides example projects with example business assets in Business Central as a reference. This document describes how to create and test an example IT orders project based on the IT_Orders sample project included in Business Central.

-

Red Hat JBoss Enterprise Application Platform 7.4 is installed. For installation information, see Red Hat JBoss Enterprise Application Platform 7.4 Installation Guide.

-

jBPM is installed and configured with KIE Server. For more information see Installing and configuring jBPM on Red Hat JBoss EAP 7.4.

-

jBPM is running and you can log in to Business Central with the

kie-server,user, andadminroles. -

You have reviewed the information in Designing and building cases for case management.

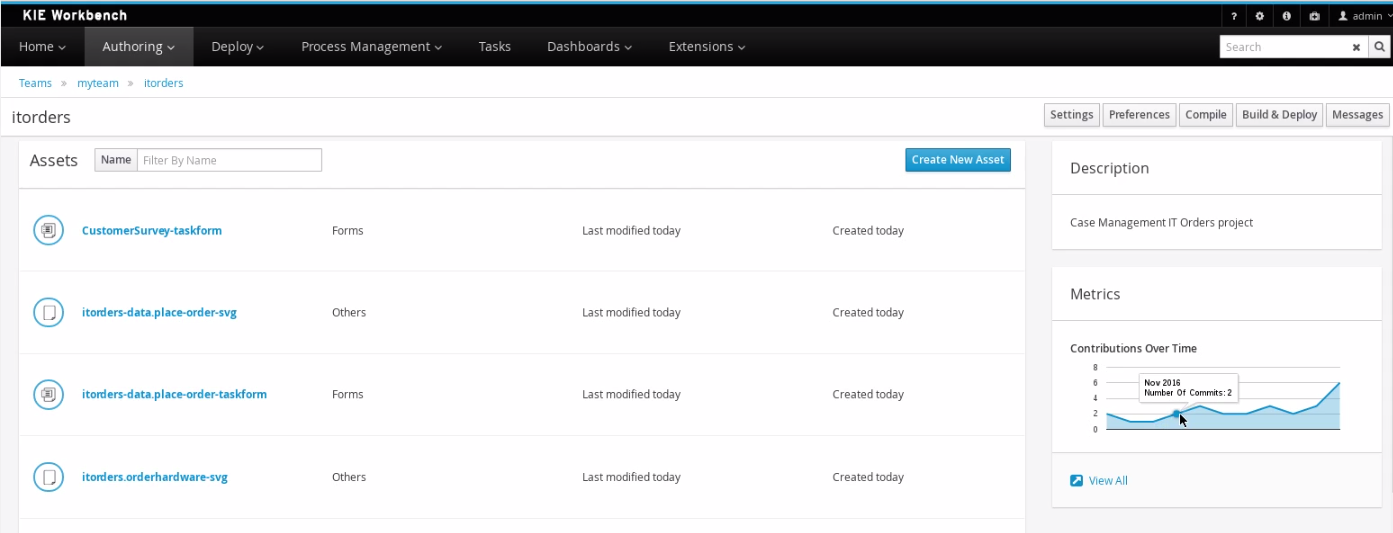

4.1. Reviewing the IT_Orders sample project

Before you create your own case management project, review the existing IT_Orders sample case management project in Business Central. This sample project contains predefined case management assets as a reference for your own case projects.

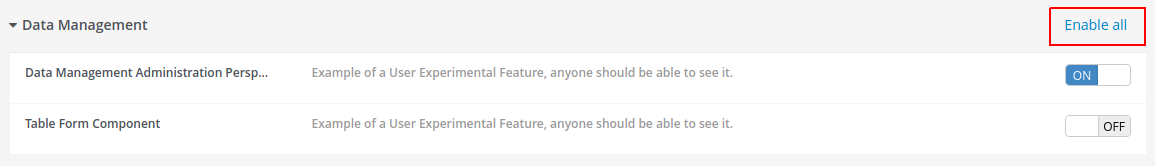

|

The business process application example includes features that are Technology Preview only. Technology Preview features are not supported with Red Hat production service level agreements (SLAs), might not be functionally complete, and are not recommended for production. These features provide early access to upcoming product features, enabling customers to test functionality and provide feedback during the development process. For more information about Red Hat Technology Preview support, see Technology Preview Features Support Scope. |

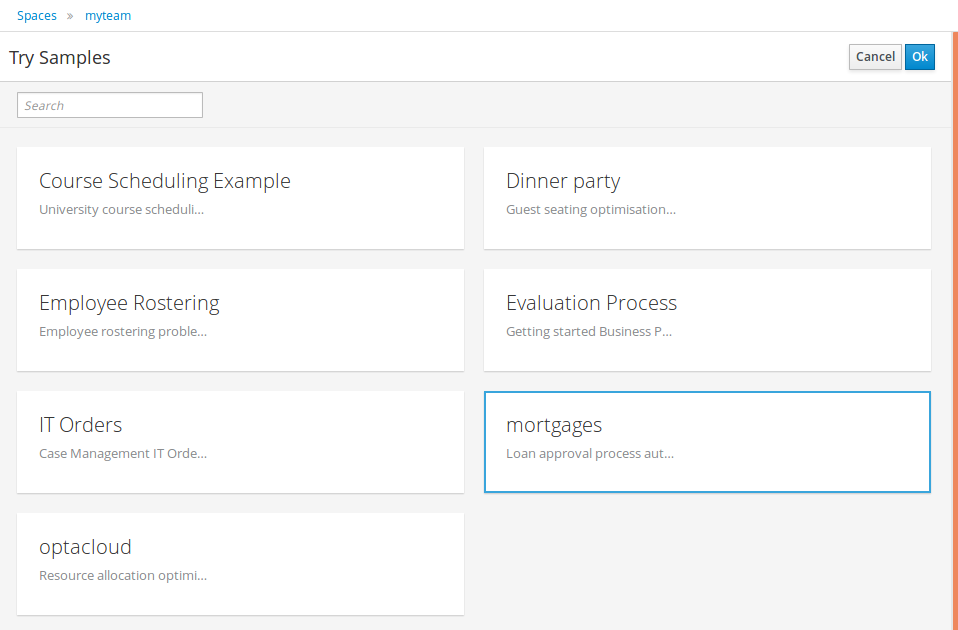

-

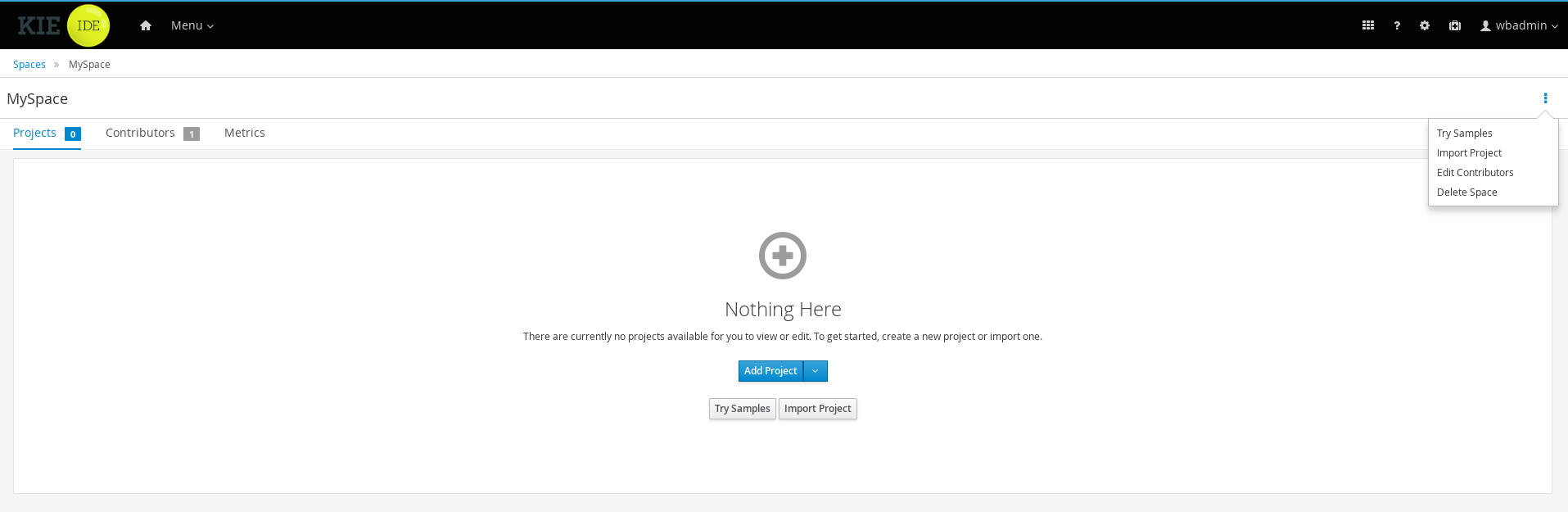

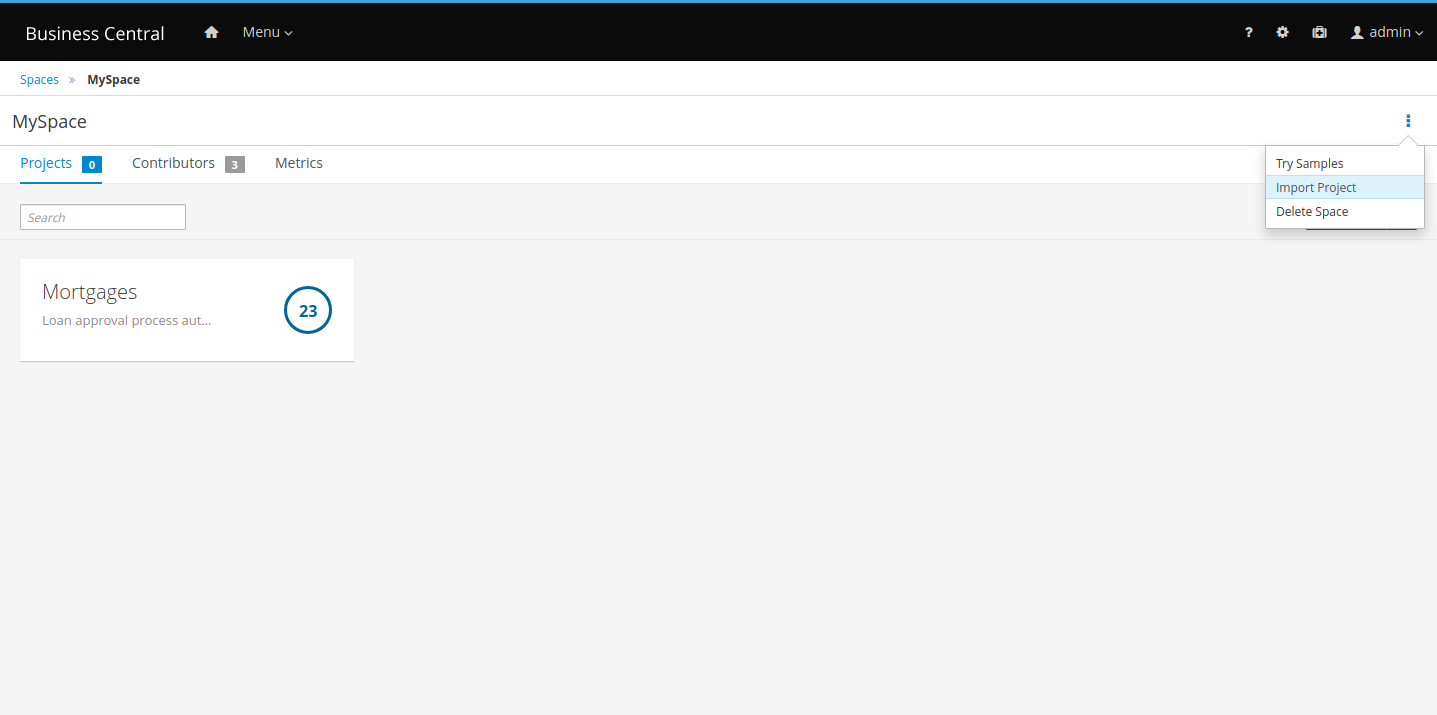

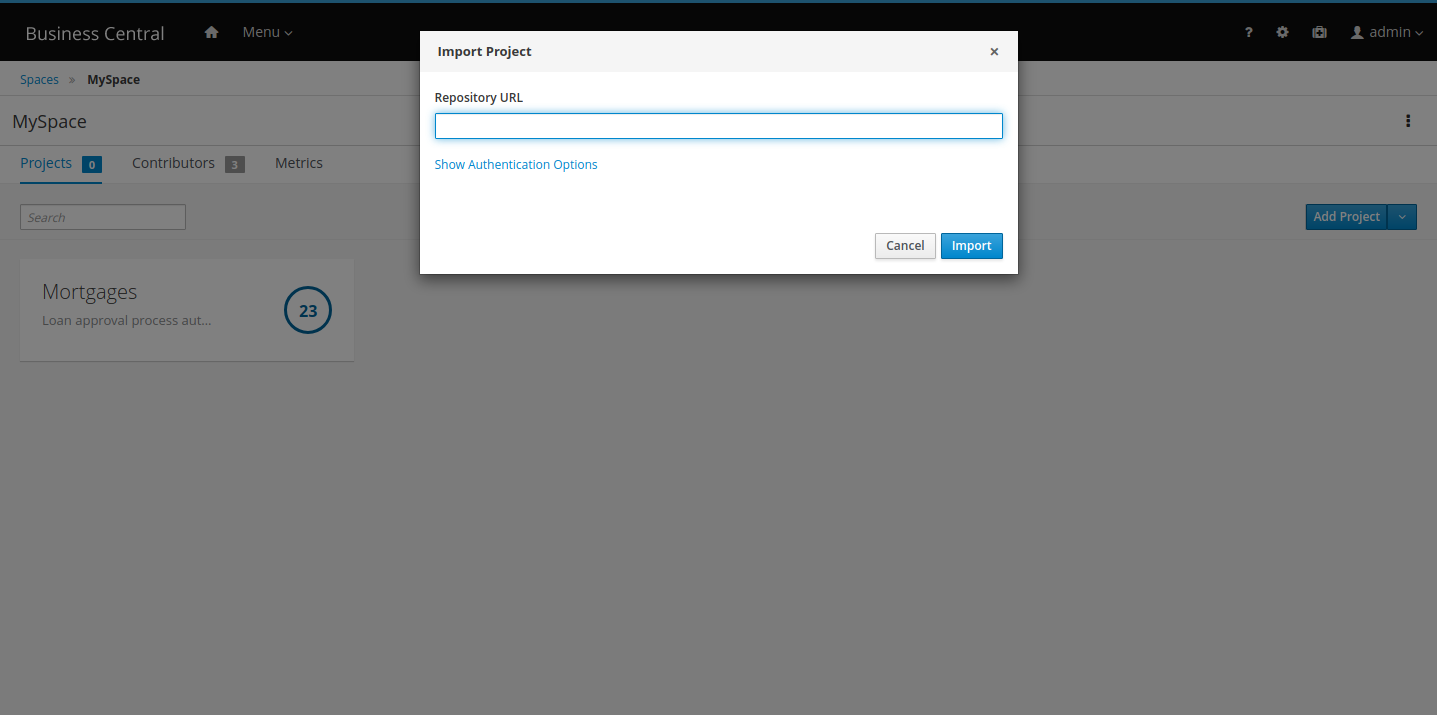

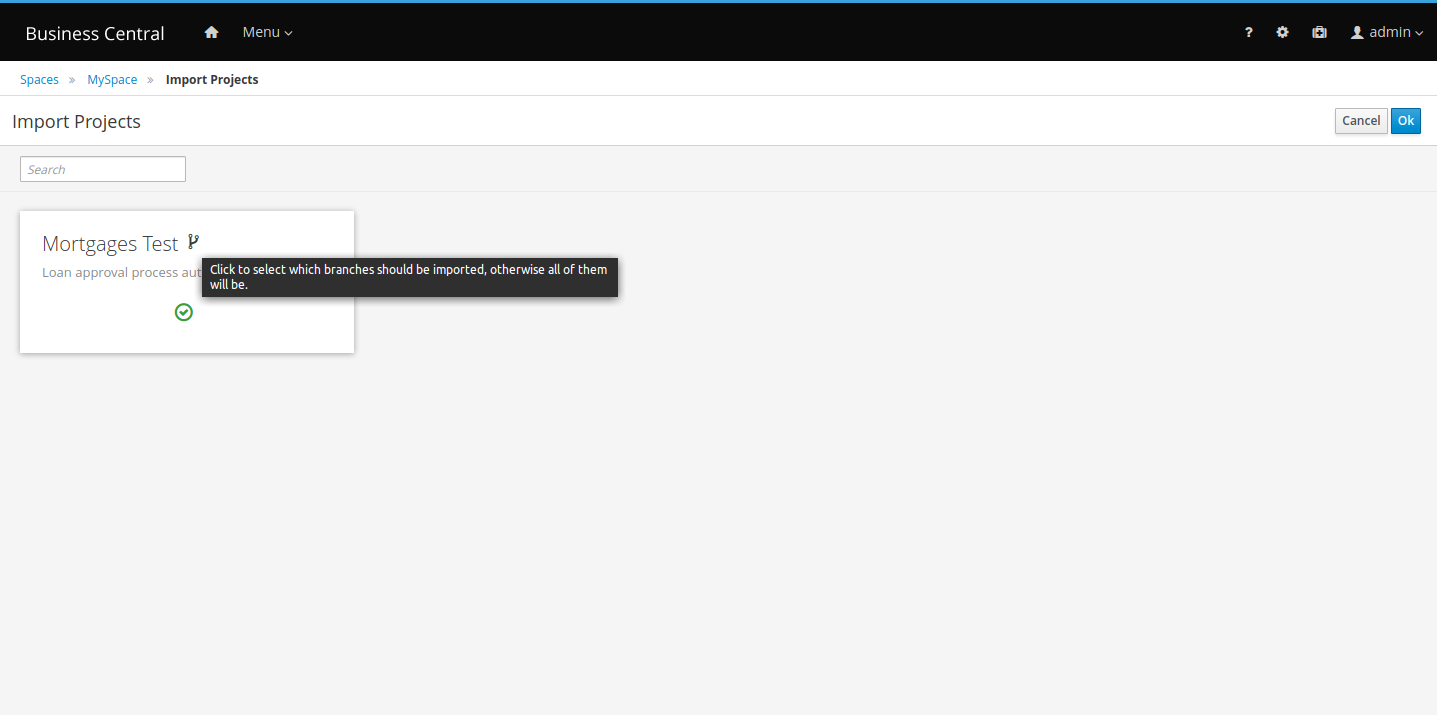

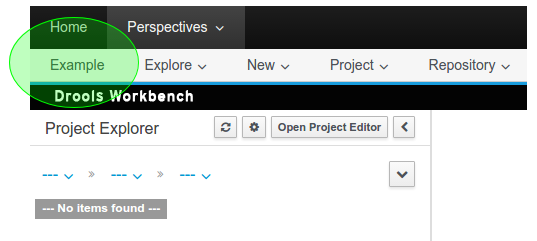

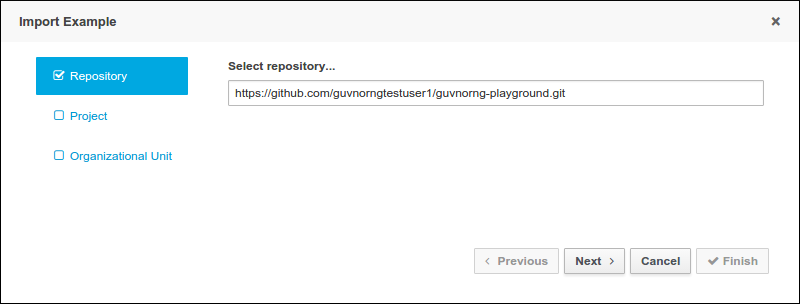

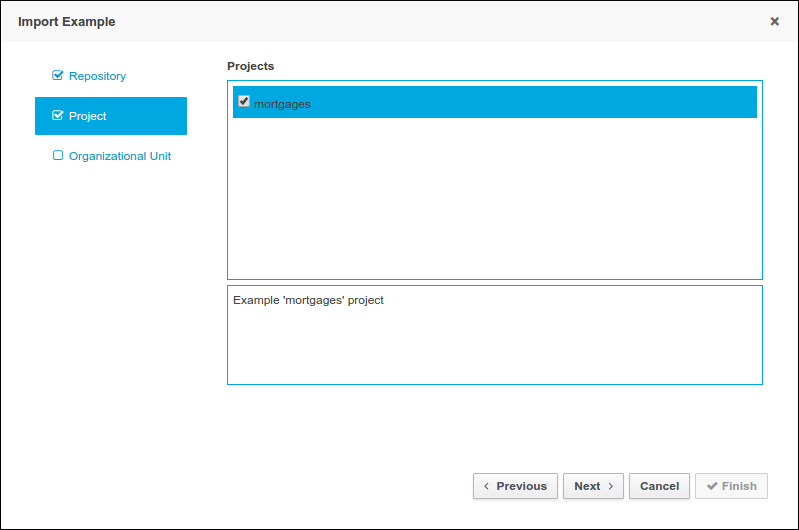

In Business Central, go to Menu → Design → Projects. If there are existing projects, you can access the samples by clicking the MySpace default space and selecting Try Samples from the Add Project drop-down menu. If there are no existing projects, click Try samples.

-

Select IT_Orders and click Ok.

The Assets view of the project opens. Select each example asset to explore how the project is designed to achieve the specified goal or workflow.

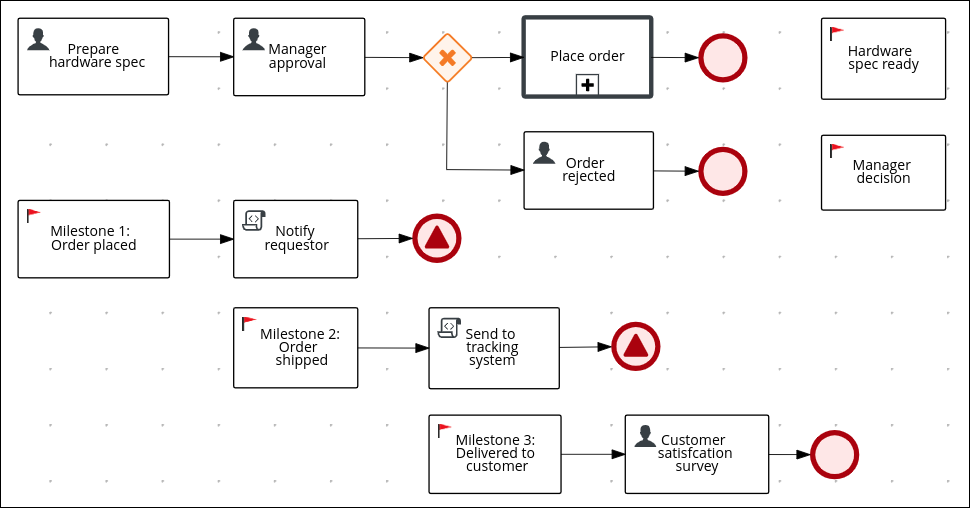

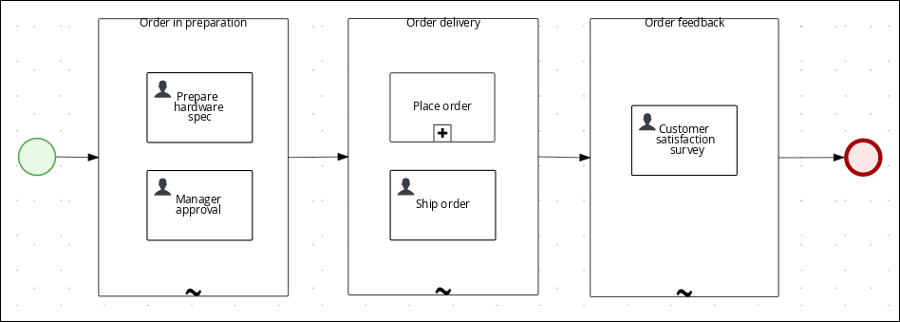

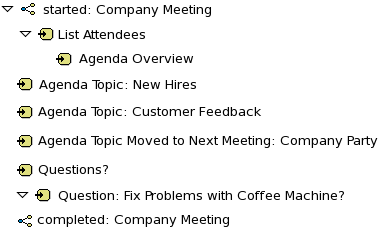

Review the orderhardware business process to help you understand the business process flow.

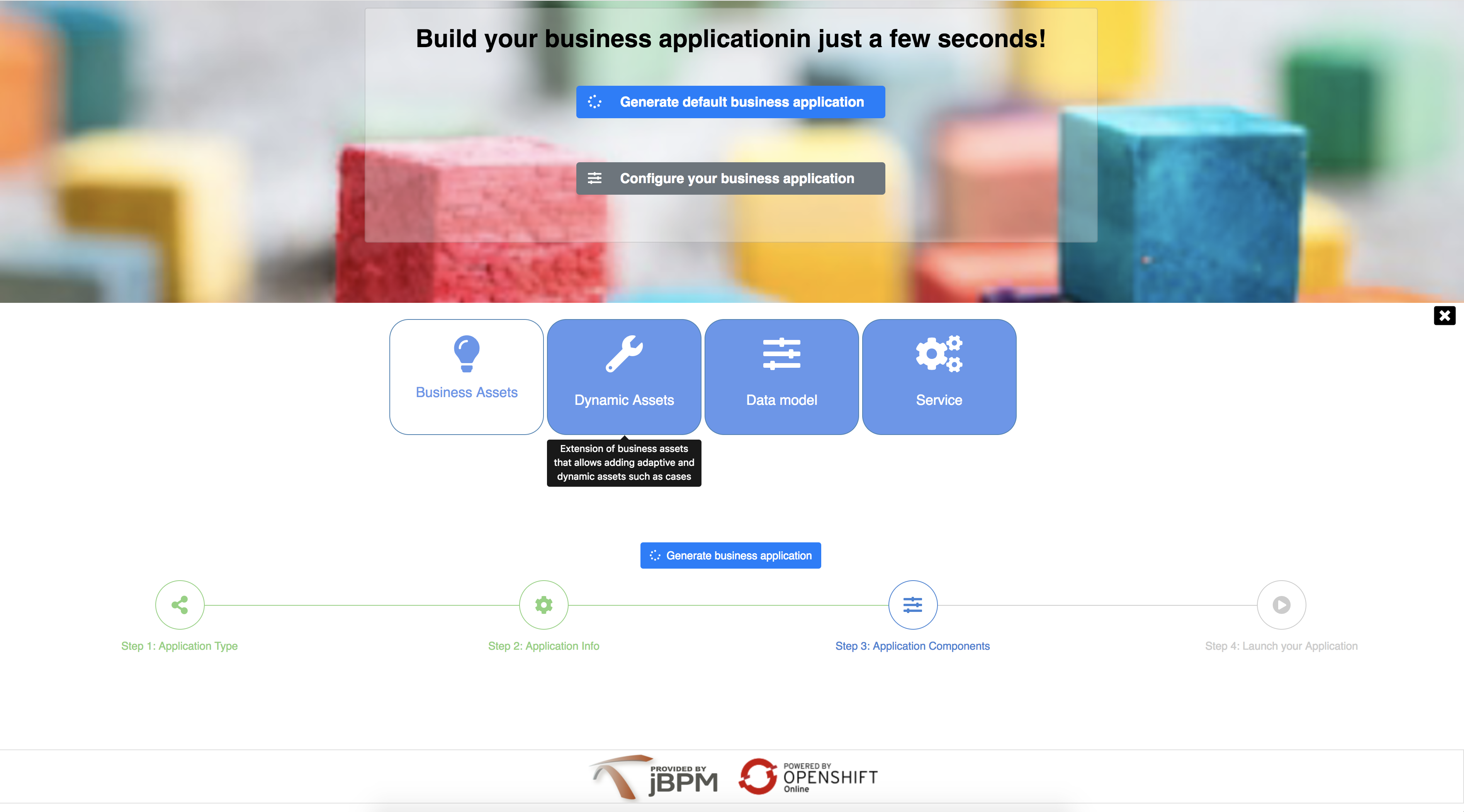

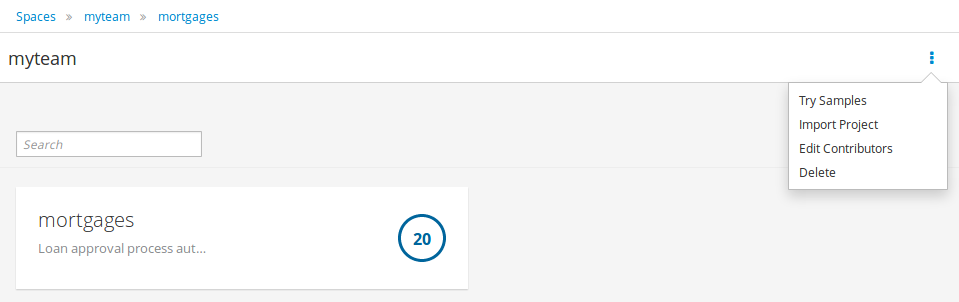

4.2. Creating a new IT_Orders case project

Create a new IT_Orders project in Business Central to gain an understanding of all of the required assets and how they are used in the project.

-

Log in to Business Central and go to Menu → Design → Projects.

Business Central provides a default space called MySpace, as shown in the following image. You can use the default space to create and test example projects.

Figure 22. Default spaces

Figure 22. Default spaces -

Click the Add Project drop-down arrow and select the Case project option:

Figure 23. Case project

Figure 23. Case project -

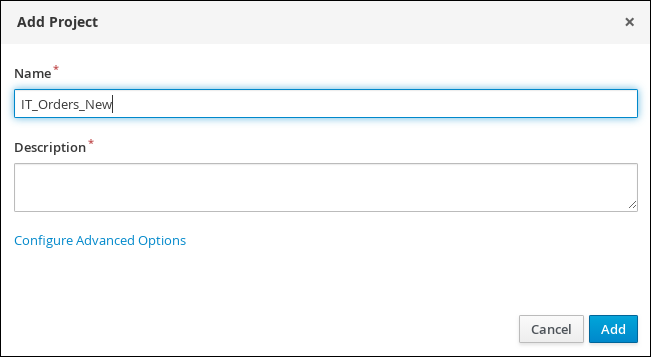

In the Add Project window, enter

IT_Orders_Newin the Name field and enter a project Description. Figure 24. Add Project window

Figure 24. Add Project window -

Click Add to add the project.

The Assets view of the project opens.

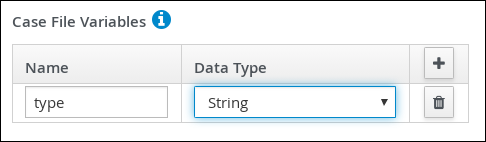

4.3. Data objects

Data objects are the building blocks for the rule assets that you create. Data objects are custom data types implemented as Java objects in specified packages of your project. For example, you might create a Person object with data fields Name, Address, and DateOfBirth to specify personal details for loan application rules. These custom data types determine what data your assets and your decision services are based on.

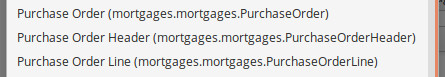

4.3.1. Creating the ITOrderService data object

The ITOrderService data object specifies the data type that will be used to define the IT Orders variables.

-

The IT_Orders_New project is created.

-

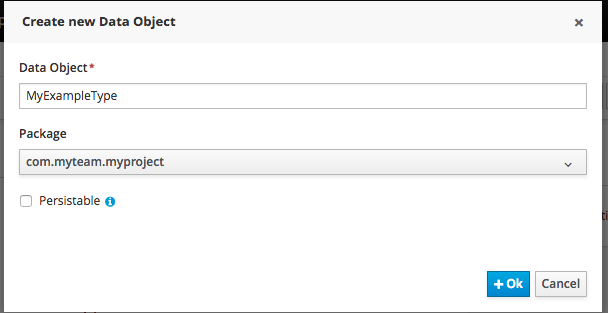

Click Add Asset → Data Object.

-

In the Create new Data Object wizard, enter the following values:

-

Data Object:

ITOrderService -

Package:

com.myspace.it_orders_new

-

-

Click Ok.

-

Click

next to the Package drop-down menu to specify a new package for the data object.

next to the Package drop-down menu to specify a new package for the data object. -

Input

org.jbpm.demo.it_orders.servicesand click Add. -

Click Save, and then click Yes, Move to confirm your changes.

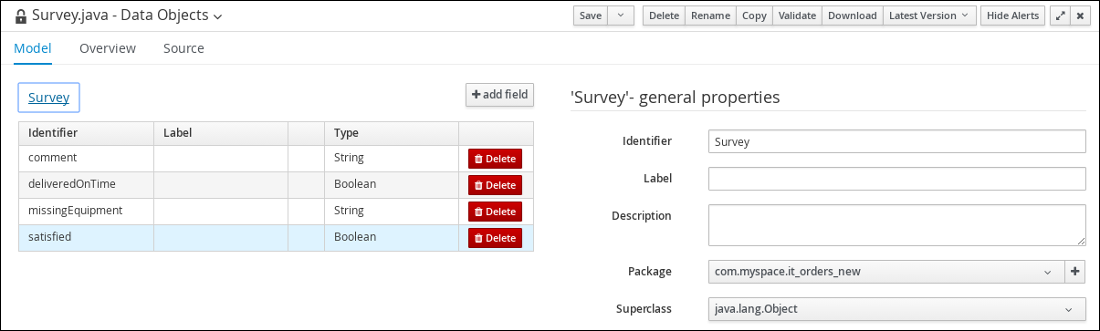

4.3.2. Creating the Survey data object

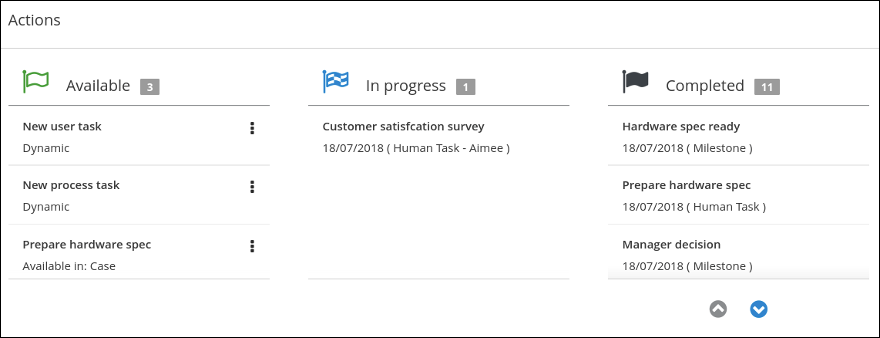

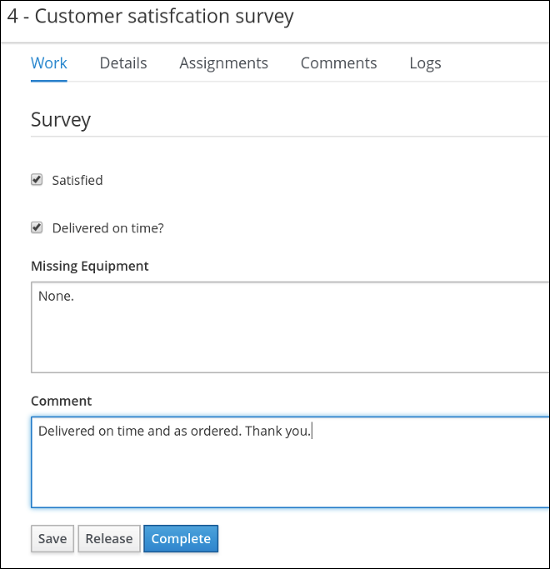

The Survey data object contains data fields, such as deliveredOnTime and missingEquipment. You will use the data and values when you design your case.

-

The IT_Orders_New project is created.

-

In Business Central, go to Menu → Design → Projects and click IT_Orders_New.

-

Click Add Asset → Data Object.

-

In the Create new Data Object wizard, enter the following values:

-

Data Object:

Survey -

Package:

com.myspace.it_orders_new

-

-

Click Ok.

-

Add the Survey data object constraints.

-

Click add field.

-

Enter the following values:

-

Id:

comment -

Label: Leave empty

-

Type:

String

-

-

Click Create and continue, and then enter the following values:

-

Id:

deliveredOnTime -

Label: Leave empty

-

Type:

Boolean

-

-

Click Create and continue, and then enter the following values:

-

Id:

missingEquipment -

Label: Leave empty

-

Type:

String

-

-

Click Create and continue, and then enter the following values:

-

Id:

satisfied -

Label: Leave empty

-

Type:

Boolean

-

-

Click Create.

-

-

Click Save to confirm your changes.

Figure 25. Survey data object details

Figure 25. Survey data object details

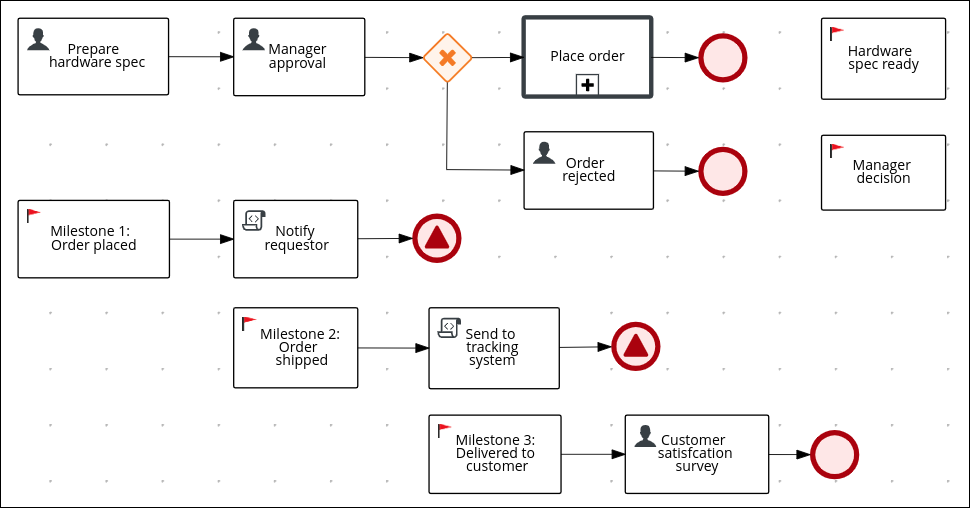

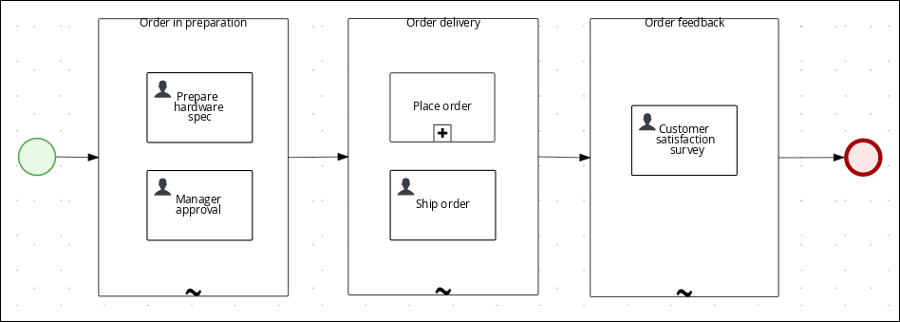

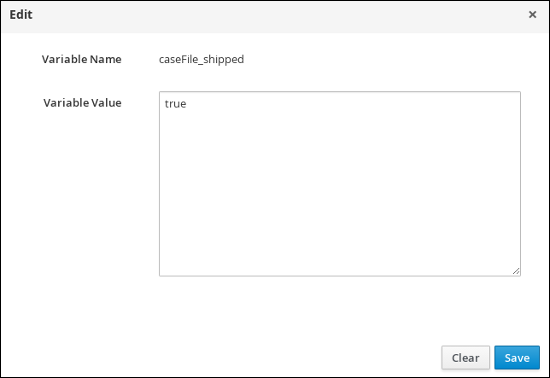

4.4. Designing the case definition

You design cases using the process designer in Business Central. Case design is the basis of case management and sets the specific goals and tasks for each case. The case flow can be modified dynamically during run time by adding dynamic tasks or processes. In this procedure, you will create this same case definition to familiarize yourself with the case definition design process.

The IT_Orders sample project in Business Central includes the following orderhardware business process case definition.

-

You have created a new case in Business Central. For more information, see Creating a new IT_Orders case project.

-

You have created the data objects. For more information, see Data objects.

-

In Business Central, go to Menu → Design → Projects and click IT_Orders_New.

-

Click Add Asset → Case Definition.

-

In the Create new Case definition window, add the following required information:

-

Case Definition: Input

orderhardware. This is usually the subject of the case or project that is being case managed. -

Package: Select com.myspace.it_orders_new to specify the location that the case file is created in.

-

-

Click Ok to open the process designer.

-

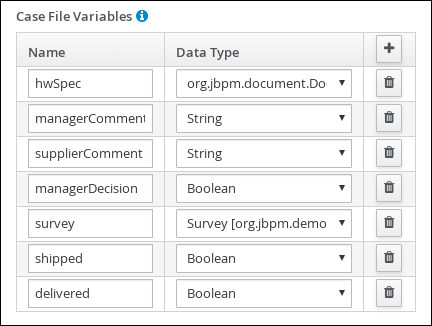

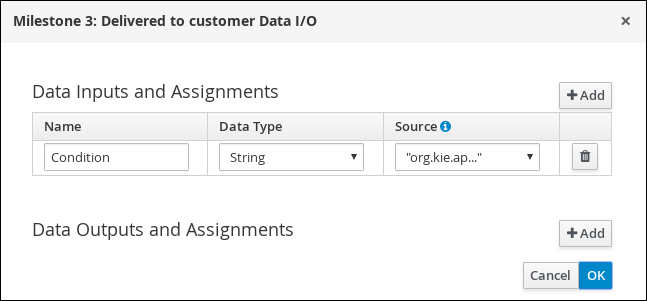

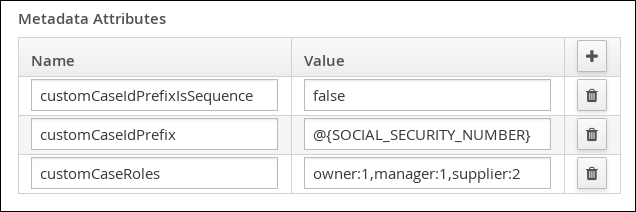

Define values for the case file variables that are accessible to the sub-processes, subcases, and business rules used in the case.

-

In the upper-right corner, click the Properties

icon.

icon. -

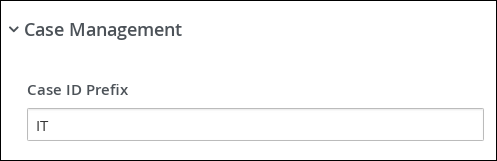

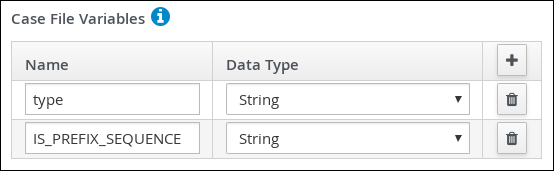

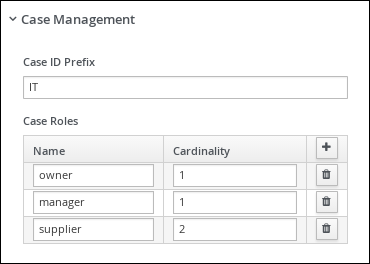

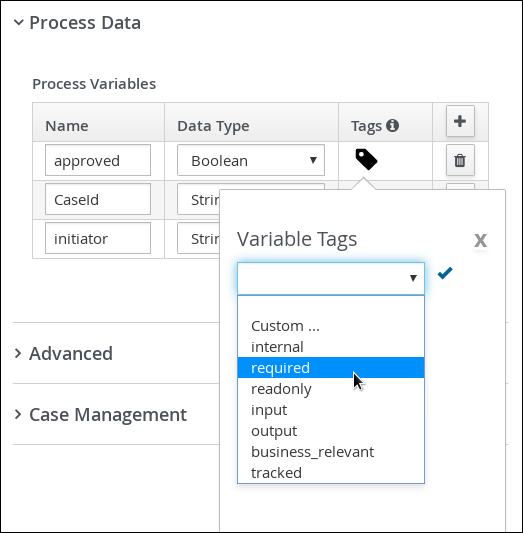

Scroll down and expand Case Management, click

in the Case File Variables section, and enter the following:

in the Case File Variables section, and enter the following: Figure 27. orderhardware case file variables

Figure 27. orderhardware case file variables

The following case file variables are custom data types:

-

hwSpec: org.jbpm.document.Document (type in this value)

-

survey: Survey [com.myspace.it_orders_new] (select this value)

-

-

Click Save.

-

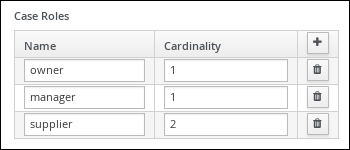

Define the roles involved in the case.

-

In the upper-right corner, click the Properties

icon.

icon. -

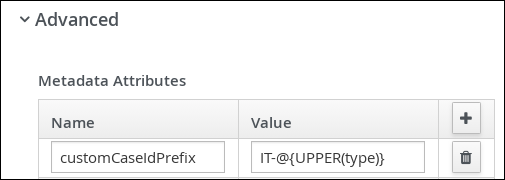

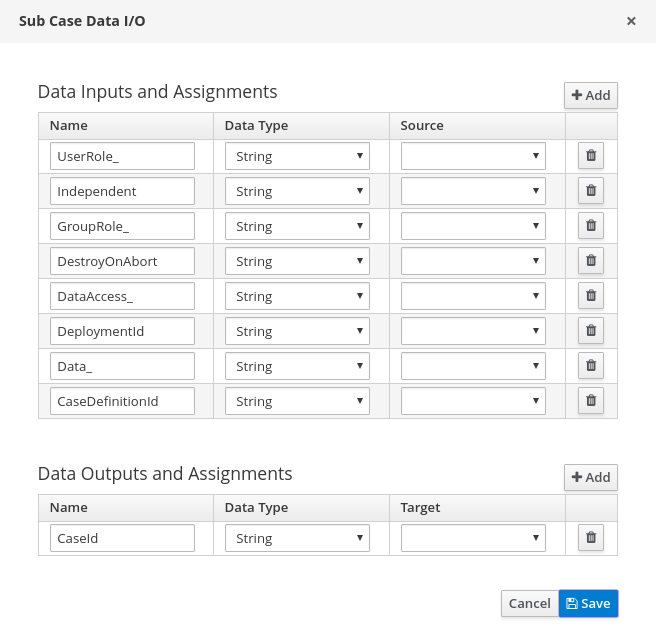

Scroll down and expand Case Management, click

in the Case Roles section, and enter the following:

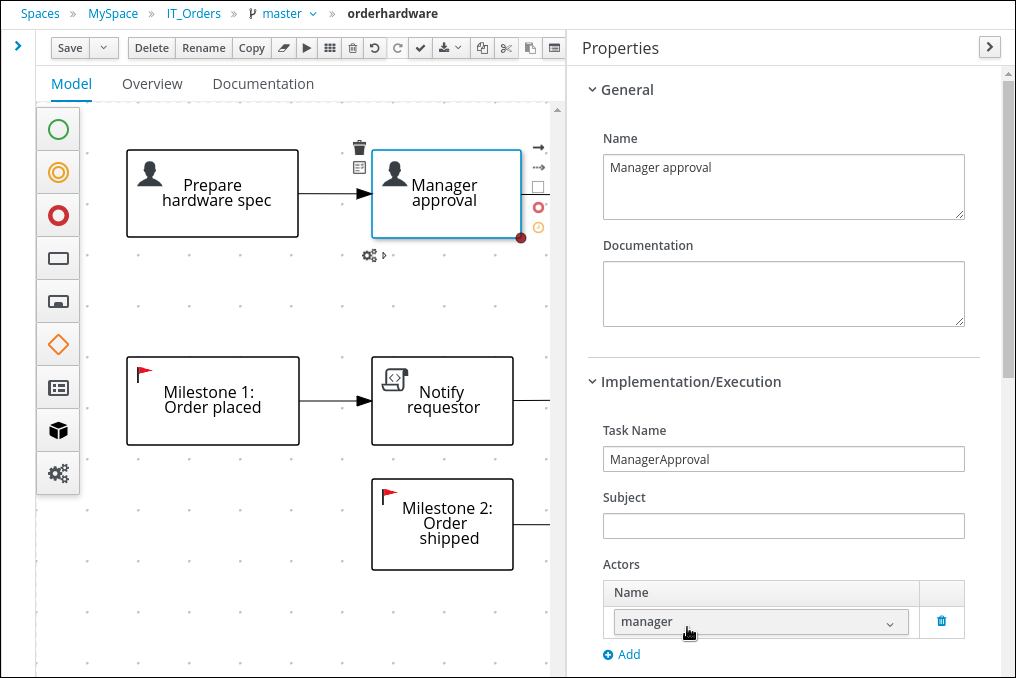

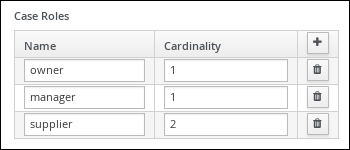

in the Case Roles section, and enter the following: Figure 28. orderhardware case roles

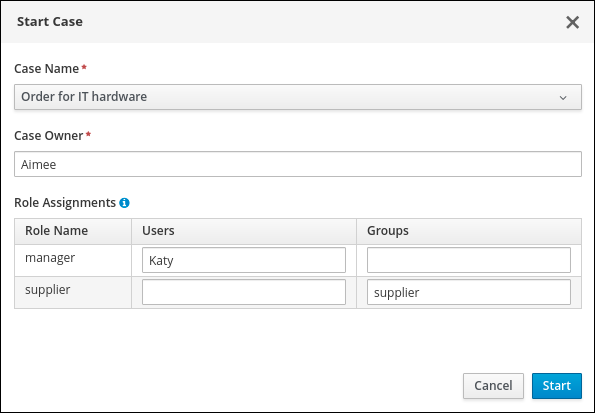

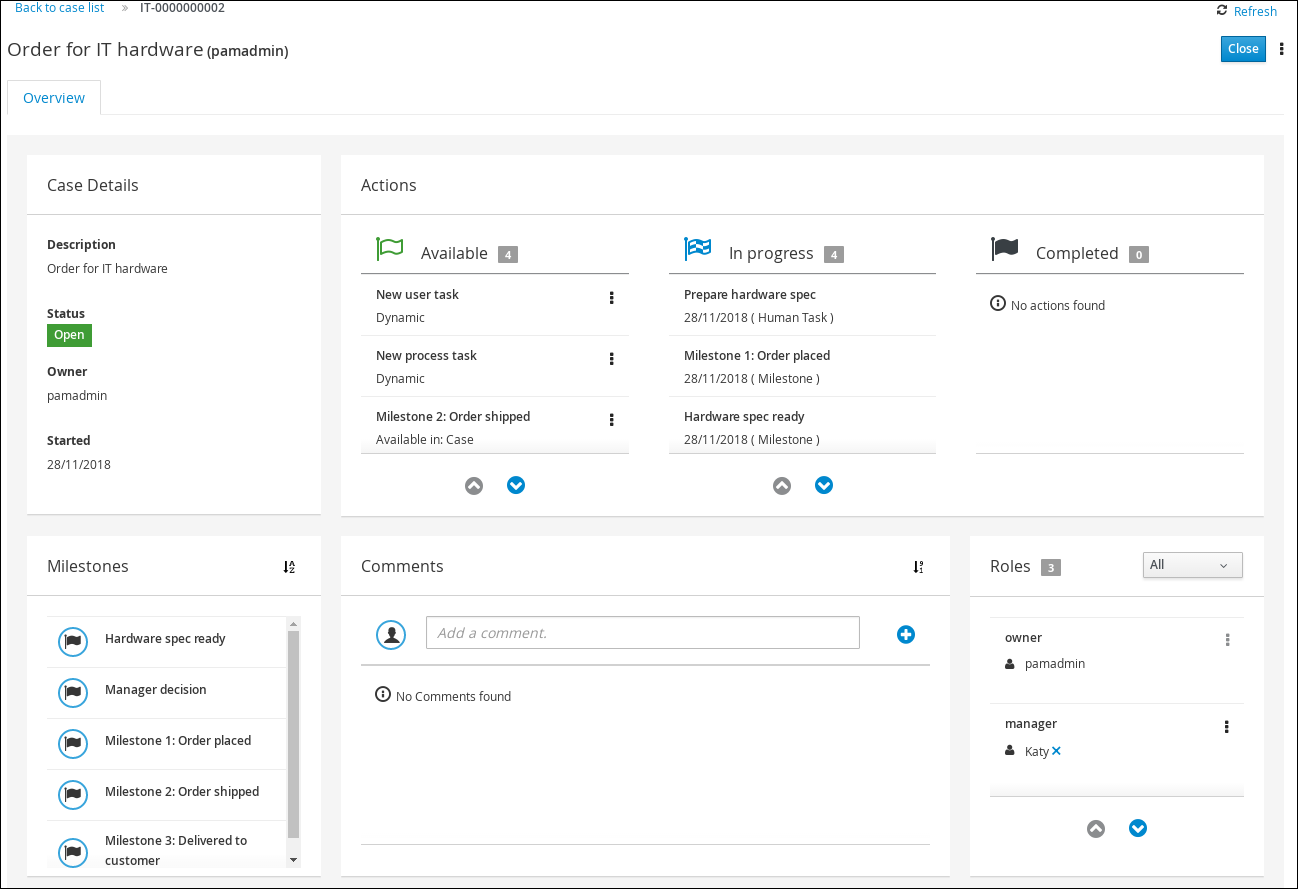

Figure 28. orderhardware case roles-

owner: The employee who is making the hardware order request. The role cardinality is set to1, which means that only one person or group can be assigned to this role. -

manager: The employee’s manager; the person who will approve or deny the requested hardware. The role cardinality is set to1, which means that only one person or group can be assigned to this role. -

supplier: The available suppliers of IT hardware in the system. The role cardinality is set to2, which means that more than one supplier can be assigned to this role.

-

-

-

Click Save.

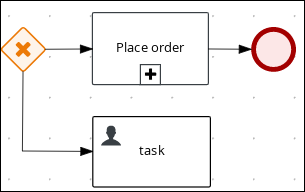

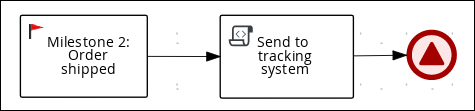

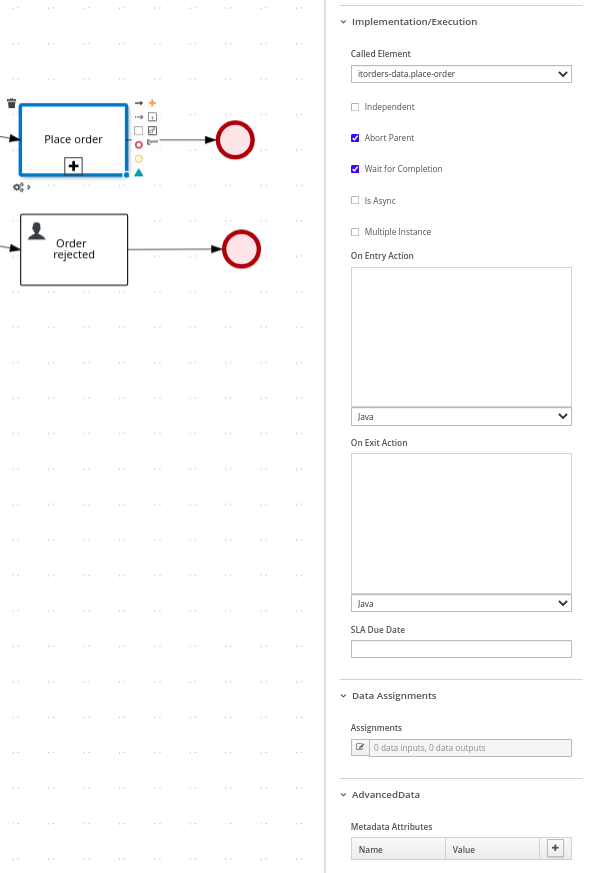

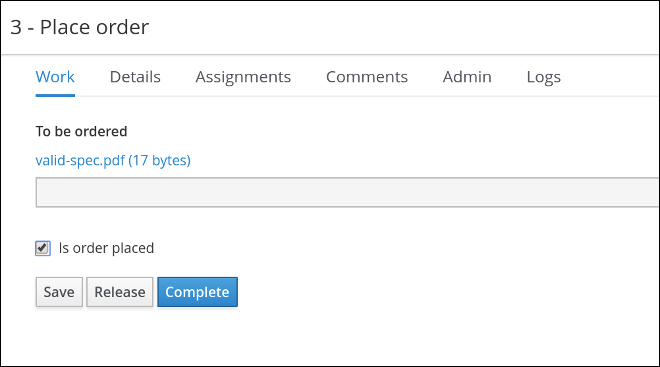

4.4.1. Creating the Place order sub-process

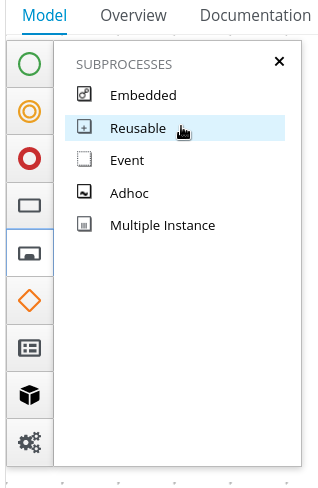

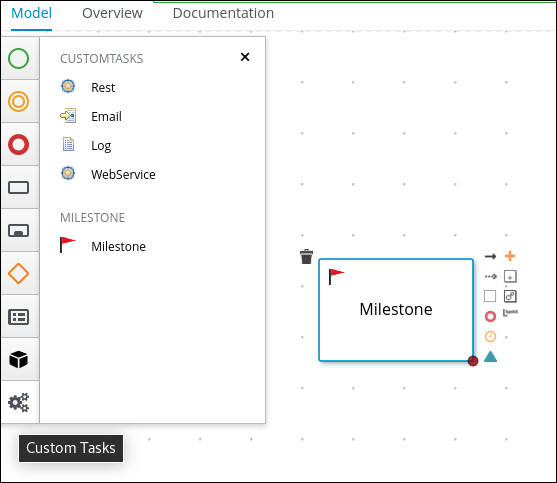

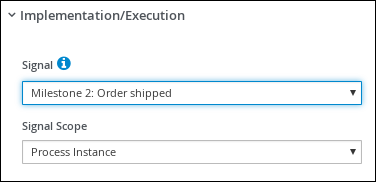

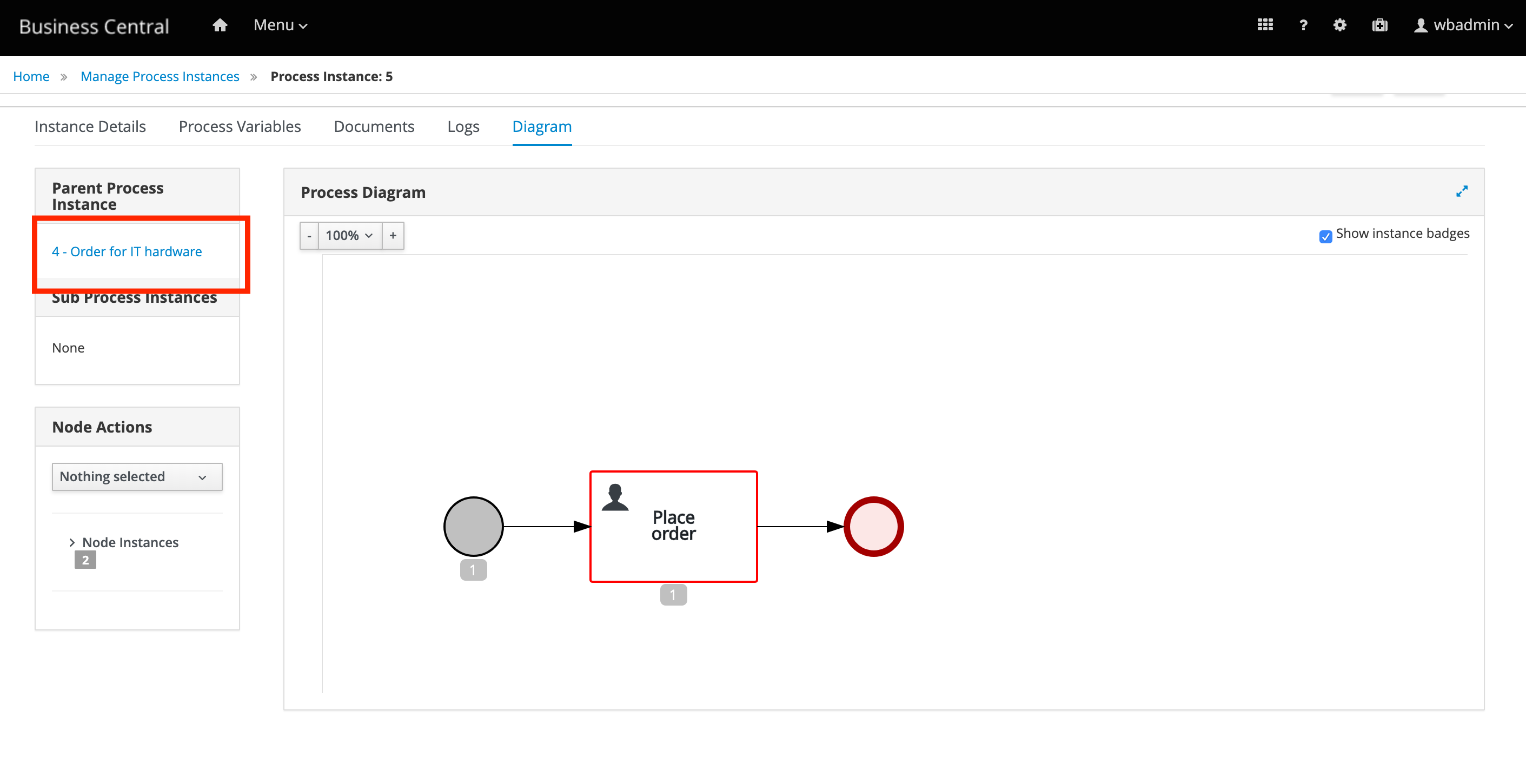

Create the Place order sub-process, which is a separate business process that is carried out by the supplier. This is a reusable process that occurs during the course of case execution as described in Designing the case definition.

-

You have created a new case in Business Central. For more information, see Creating a new IT_Orders case project.

-

You have created the data objects. For more information, see Data objects.

-

In Business Central, go to Menu → Design → Projects → IT_Orders_New.

-

From the project menu, click Add Asset → Business Process.

-

In the Create new Business Process wizard, enter the following values:

-

Business Process:

place-order -

Package: Select

com.myspace.it_orders_new

-

-

Click Ok. The diagram editor opens.

-

Click an empty space in the canvas, and in the upper-right corner, click the Properties

icon.

icon. -

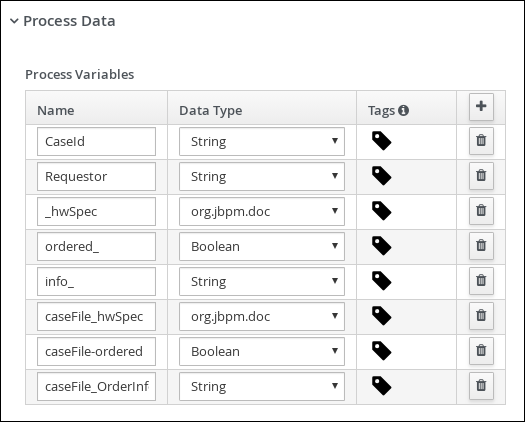

Scroll down and expand Process Data and click

in the Process Variables section and enter the following:

in the Process Variables section and enter the following:

-

Click Save.

-

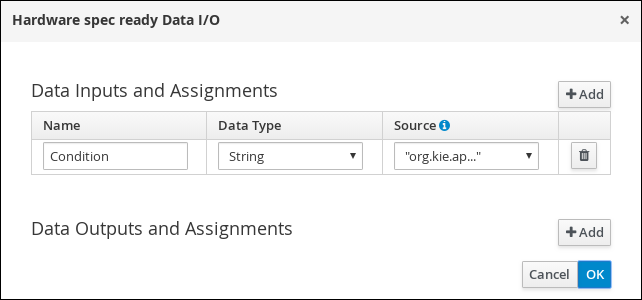

Drag a start event onto the canvas and create an outgoing connection from the start event to a task and convert the new task to a user task.

-

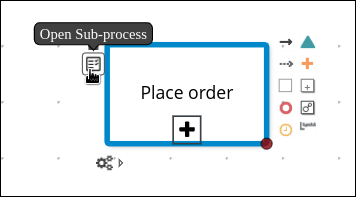

Click the user task and in the Properties panel, input

Place orderin the Name field. -

Expand Implementation/Execution, click Add below the Groups menu, click Select → New, and input

supplier. -

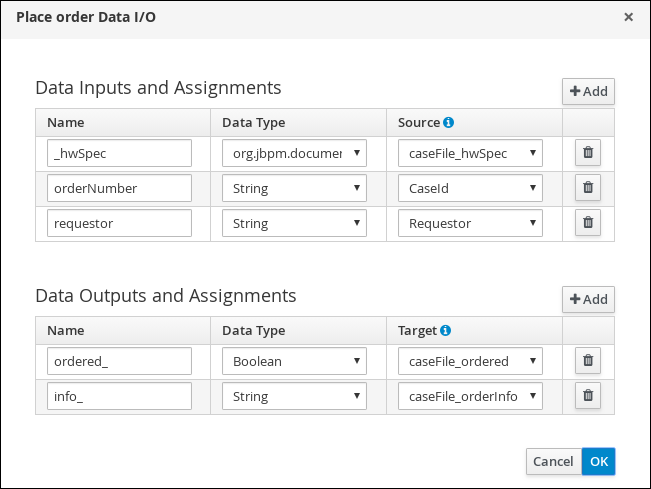

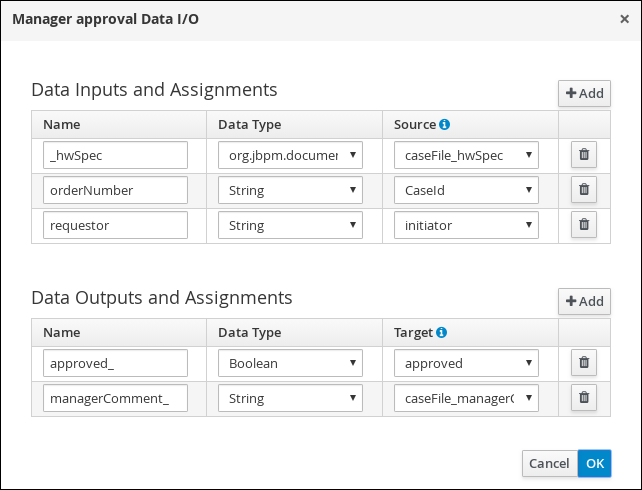

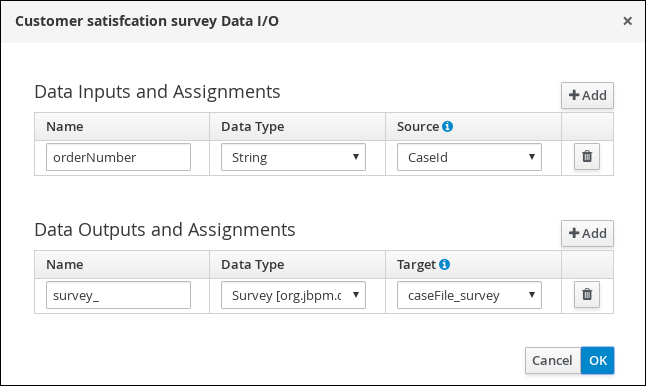

Click

in the Assignments field and add the following:

in the Assignments field and add the following:

For the first input assignment, select Custom for the Data Type and input

org.jbpm.document.Document. -

Click OK.

-

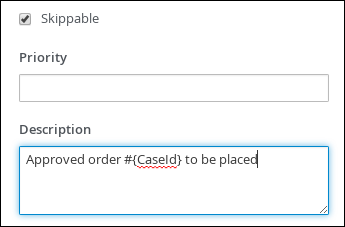

Select the Skippable check box and enter the following text in the Description field:

Approved order #{CaseId} to be placed

-

Create an outgoing connection from the Place order user task and connect it to an end event.

-

Click Save to confirm your changes.

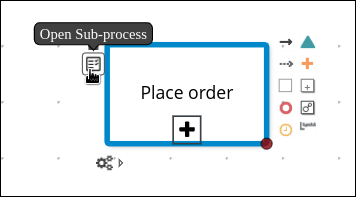

You can open the sub-process in a new editor in Business Central by clicking the Place order task in the main process and then clicking the Open Sub-process task icon.

4.4.2. Creating the Manager approval business process

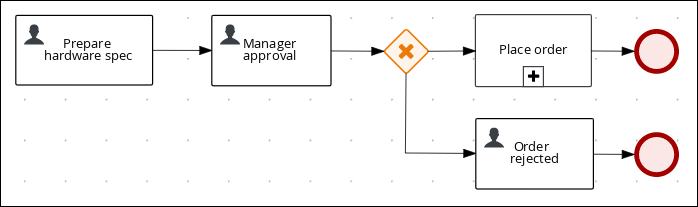

The manager approval process determines whether or not the order will be placed or rejected.

-

In Business Central, go to Menu → Design → Projects → IT_Orders_New → orderhardware Business Processes.

-

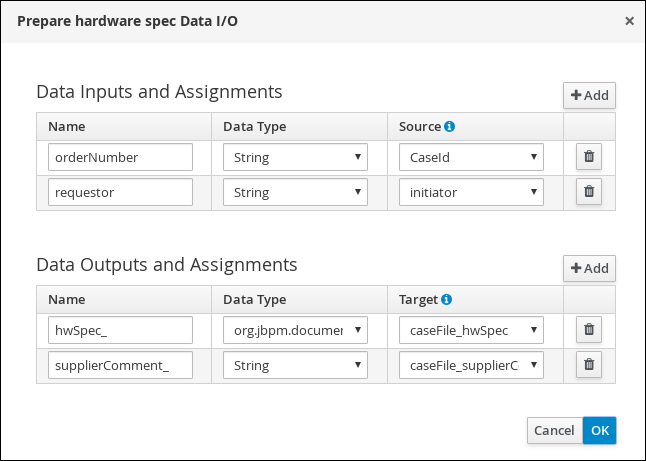

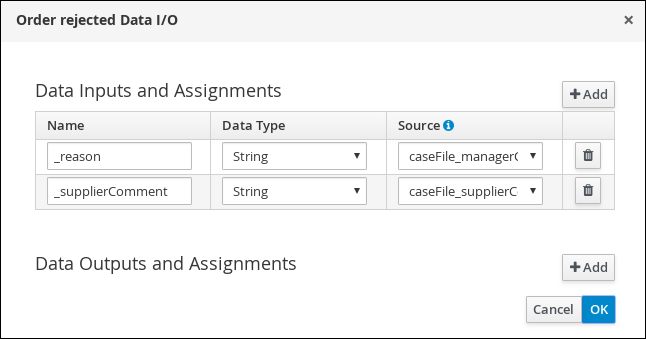

Create and configure the

Prepare hardware specuser task:-

Expand Tasks in the Object Library and drag a user task onto the canvas and convert the new task to a user task.

-

Click the new user task and click the Properties

icon in the upper-right corner.

icon in the upper-right corner. -

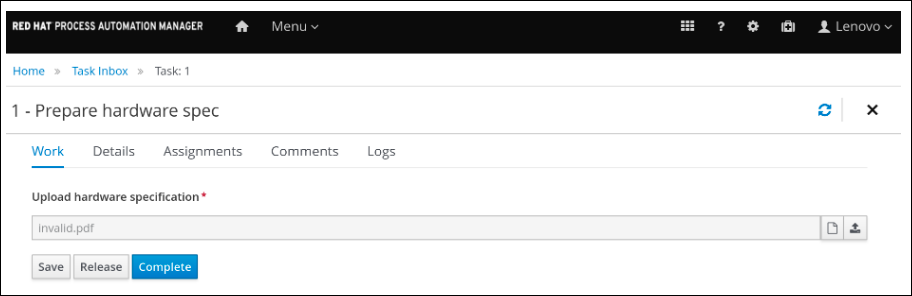

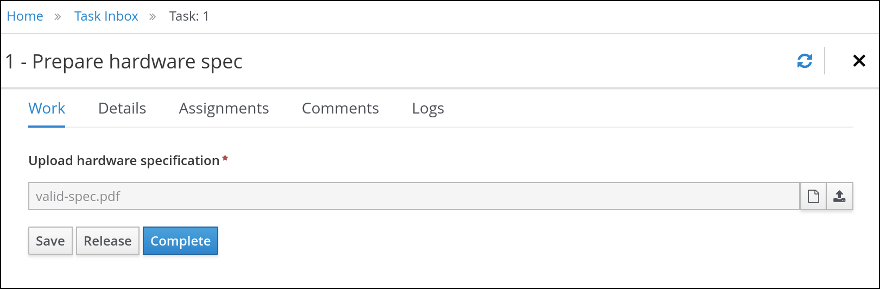

Input

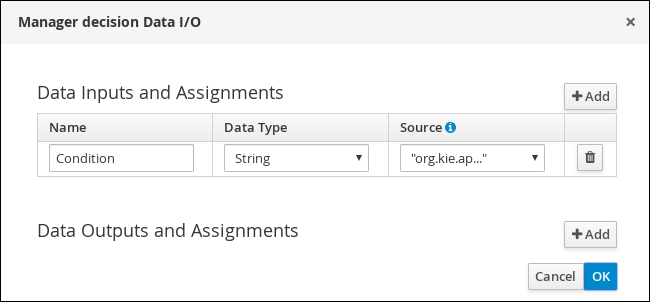

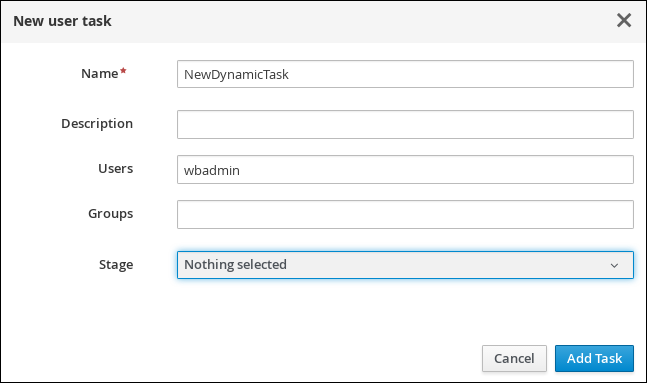

Prepare hardware specin the Name field. -